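Image stitching has many practical applications, such as drone aerial photography and remote sensing. It is also a basic step before any further image understanding, and the quality of the stitch directly affects the work that follows, so a good stitching algorithm matters a lot.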

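Here is an everyday example. You photograph a scene with your phone, but you cannot fit everything you want into a single shot, so you take several pictures from left to right to cover the whole scene. Can we stitch these pictures into one large image? With OpenCV we can!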

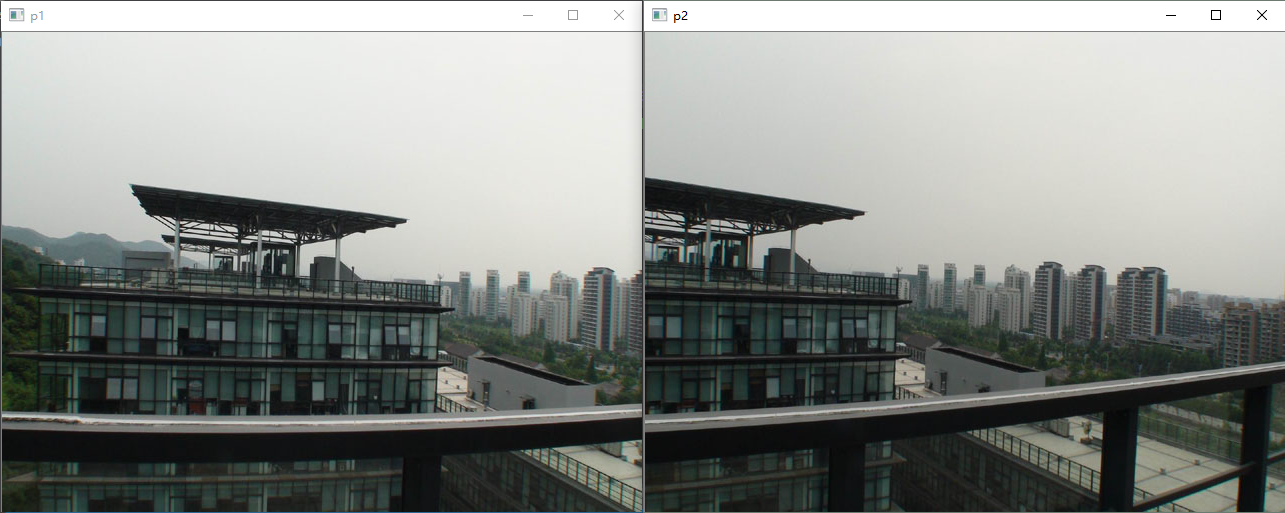

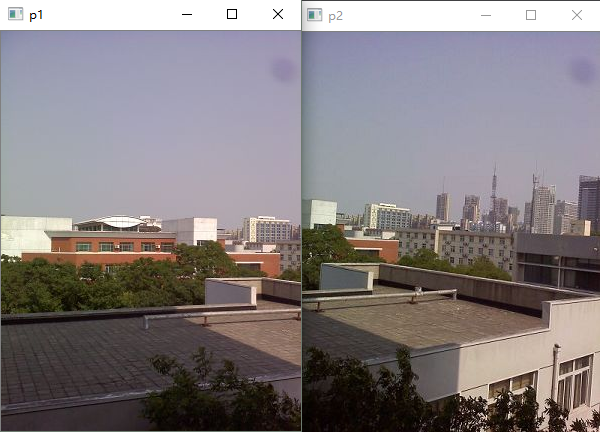

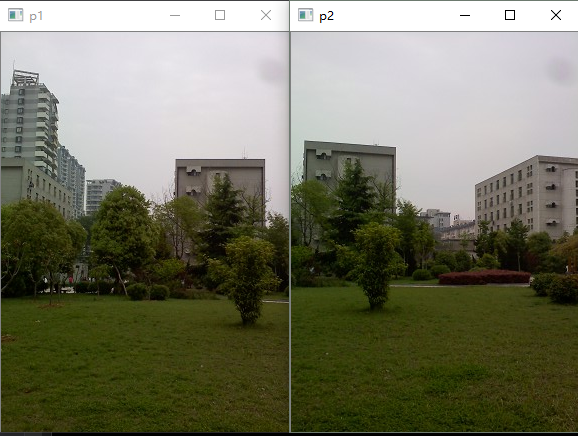

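Suppose we want to stitch these two images.

As the two images above show, they overlap substantially, which is the basic requirement for stitching.

So what steps does image stitching take? In short:

- Extract feature points from each image

- Match the feature points

- Register the images

- Copy one image to the proper position in the other

- Give the overlap boundary special treatment

Right, let's start implementing image stitching.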

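The first step is feature extraction. Computer vision has many kinds of features: SIFT, SURF, Harris corners and ORB are all well known and can all be used for stitching, each with its own strengths. This article uses ORB and SURF; stitching with the others works in much the same way.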

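SURF-based image stitching

Stitching with SIFT is a common approach, but SIFT is computationally expensive, so it is a poor fit where speed matters. SURF, its faster successor (roughly three times as fast as SIFT), is therefore still very useful for stitching. SURF is less accurate and less stable than SIFT, but its overall trade-off is better. The main steps of the stitching process are described in detail below.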

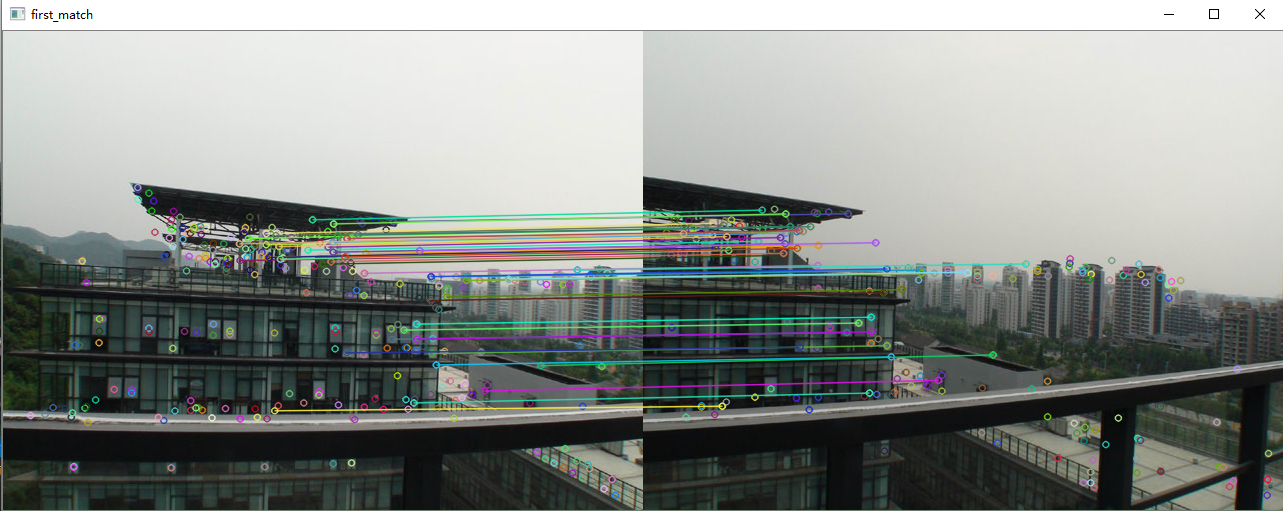

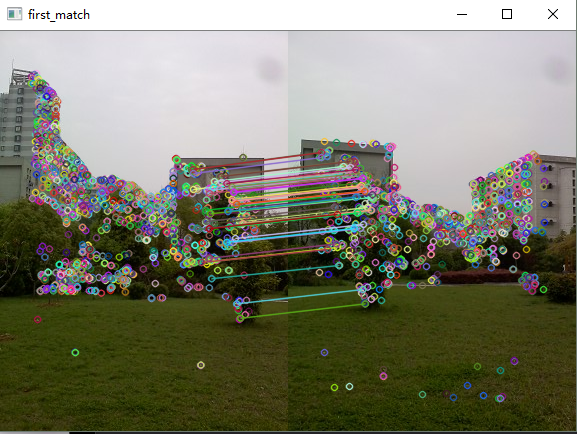

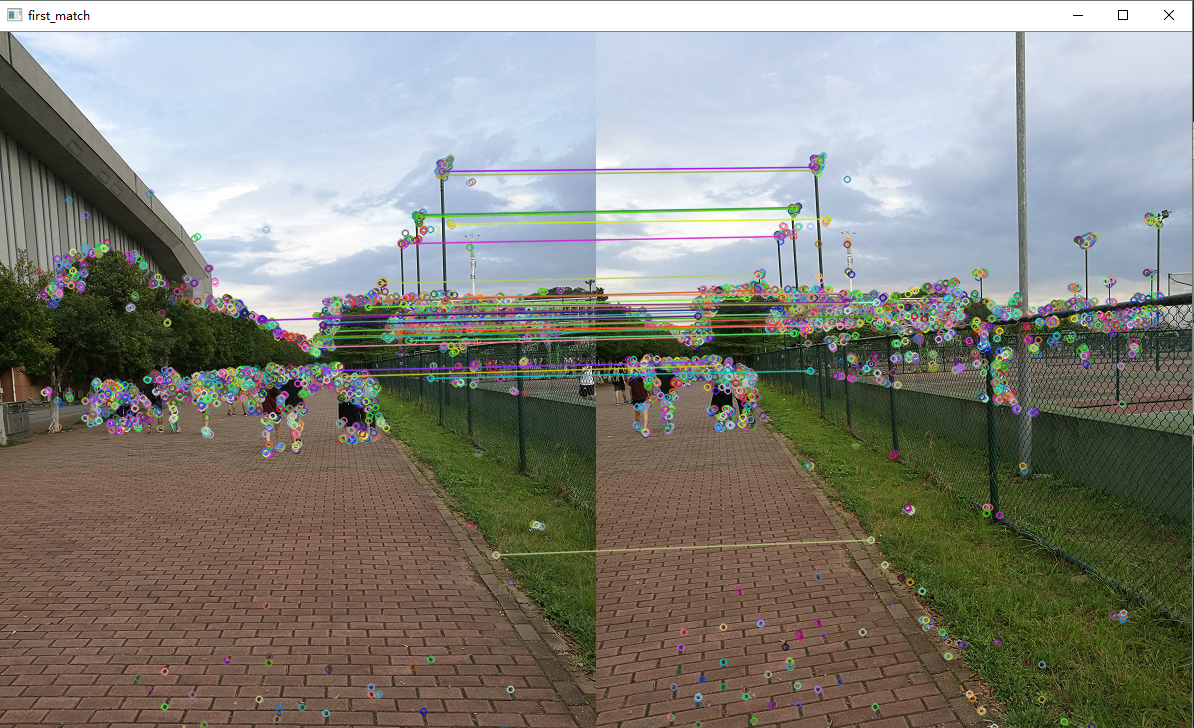

1. Feature extraction and matching

```cpp
// Detect keypoints (2000 is the Hessian threshold passed to SURF)
SurfFeatureDetector Detector(2000);
vector<KeyPoint> keyPoint1, keyPoint2;
Detector.detect(image1, keyPoint1);
Detector.detect(image2, keyPoint2);

// Compute descriptors for the matching step below
SurfDescriptorExtractor Descriptor;
Mat imageDesc1, imageDesc2;
Descriptor.compute(image1, keyPoint1, imageDesc1);
Descriptor.compute(image2, keyPoint2, imageDesc2);

FlannBasedMatcher matcher;
vector<vector<DMatch> > matchePoints;
vector<DMatch> GoodMatchePoints;

// image1's descriptors form the train set; image2's descriptors are the queries
vector<Mat> train_desc(1, imageDesc1);
matcher.add(train_desc);
matcher.train();

matcher.knnMatch(imageDesc2, matchePoints, 2);
cout << "total match points: " << matchePoints.size() << endl;

// Lowe's ratio test: keep only the clearly unambiguous matches
for (size_t i = 0; i < matchePoints.size(); i++)
{
    if (matchePoints[i].size() < 2) continue; // need two neighbours to compare
    if (matchePoints[i][0].distance < 0.4 * matchePoints[i][1].distance)
    {
        GoodMatchePoints.push_back(matchePoints[i][0]);
    }
}

Mat first_match;
drawMatches(image02, keyPoint2, image01, keyPoint1, GoodMatchePoints, first_match);
imshow("first_match ", first_match);
```
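The ratio test in the loop above can be looked at in isolation. The following is a minimal sketch in plain C++ that does not depend on OpenCV: `Match` is a hypothetical stand-in for `cv::DMatch`, and `ratioTest` is an illustrative helper name, not an OpenCV function. With a ratio of 0.4, as used in this article, a match survives only when its best distance is less than 40% of the second-best distance.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cv::DMatch: only the fields the test needs.
struct Match {
    int queryIdx;
    int trainIdx;
    float distance;
};

// For every query, knn holds its nearest neighbours sorted by distance.
// Keep the best match only when it is clearly better than the second best.
std::vector<Match> ratioTest(const std::vector<std::vector<Match> >& knn, float ratio) {
    assert(ratio > 0.0f && ratio <= 1.0f);
    std::vector<Match> good;
    for (std::size_t i = 0; i < knn.size(); ++i) {
        if (knn[i].size() < 2) continue;  // need two neighbours to compare
        if (knn[i][0].distance < ratio * knn[i][1].distance)
            good.push_back(knn[i][0]);
    }
    return good;
}
```

A smaller ratio keeps fewer but more trustworthy matches; a larger one keeps more matches and leaves more outliers for the later RANSAC step to reject.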

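2. Image registration

We now have the set of matched points between the two images to be stitched. Next comes registration, that is, bringing both images into the same coordinate frame, for which we use findHomography to obtain the transformation matrix. Note that findHomography takes point sets of type Point2f, so GoodMatchePoints needs one more processing step to convert it into Point2f point sets.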

```cpp
vector<Point2f> imagePoints1, imagePoints2;

for (size_t i = 0; i < GoodMatchePoints.size(); i++)
{
    // queryIdx indexes image2's keypoints, trainIdx indexes image1's
    imagePoints2.push_back(keyPoint2[GoodMatchePoints[i].queryIdx].pt);
    imagePoints1.push_back(keyPoint1[GoodMatchePoints[i].trainIdx].pt);
}
```

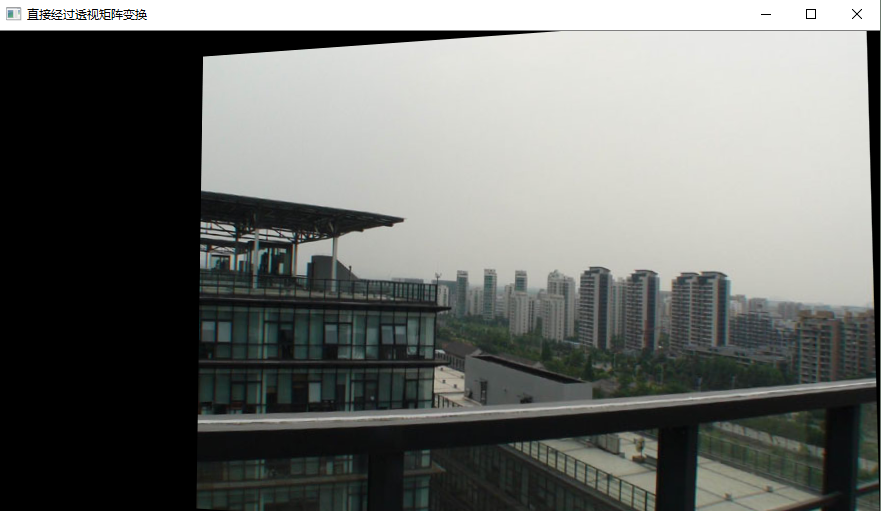

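Now we can use imagePoints1 and imagePoints2 to compute the transformation matrix and register the images. Note that we pass CV_RANSAC to findHomography, which selects the RANSAC algorithm to filter out unreliable matches once more, so the estimated matrix is more accurate.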

```cpp
// Homography (3x3) that maps image 1 into image 2's coordinate frame
Mat homo = findHomography(imagePoints1, imagePoints2, CV_RANSAC);
// getPerspectiveTransform also yields a perspective matrix, but it takes exactly 4 point pairs and works slightly worse
//Mat homo = getPerspectiveTransform(imagePoints1, imagePoints2);
cout << "Homography:\n" << homo << endl << endl; // print the matrix

// Image registration
// `corners` holds the projected corners of image01; CalcCorners fills it in the full listing
Mat imageTransform1, imageTransform2;
warpPerspective(image01, imageTransform1, homo, Size(MAX(corners.right_top.x, corners.right_bottom.x), image02.rows));
//warpPerspective(image01, imageTransform2, adjustMat*homo, Size(image02.cols*1.3, image02.rows*1.8));
imshow("warped by the homography", imageTransform1);
imwrite("trans1.jpg", imageTransform1);
```
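The `corners` values that size the warped image come from pushing the four corners of the right image through the homography and dividing by the third homogeneous coordinate. A rough sketch of that calculation in plain C++ follows, assuming a row-major 3x3 matrix; `Pt`, `Homography`, `project` and `warpedWidth` are illustrative names, not OpenCV API.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cmath>

struct Pt { double x, y; };

// Row-major 3x3 homography (an assumption of this sketch).
typedef std::array<double, 9> Homography;

// Map (x, y) through H in homogeneous coordinates, then divide by w.
Pt project(const Homography& H, double x, double y) {
    double u = H[0] * x + H[1] * y + H[2];
    double v = H[3] * x + H[4] * y + H[5];
    double w = H[6] * x + H[7] * y + H[8];
    assert(std::fabs(w) > 1e-12);  // w == 0 would send the point to infinity
    return Pt{u / w, v / w};
}

// Width needed to hold the warped image: the larger x of the two projected
// right-hand corners, truncated to an int.
int warpedWidth(const Homography& H, int cols, int rows) {
    Pt rightTop = project(H, cols, 0);
    Pt rightBottom = project(H, cols, rows);
    return static_cast<int>(std::max(rightTop.x, rightBottom.x));
}
```

For a pure translation of 100 pixels to the right, a 640-pixel-wide image needs a 740-pixel-wide canvas, which matches the intuition that the right image is shifted past the left one.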

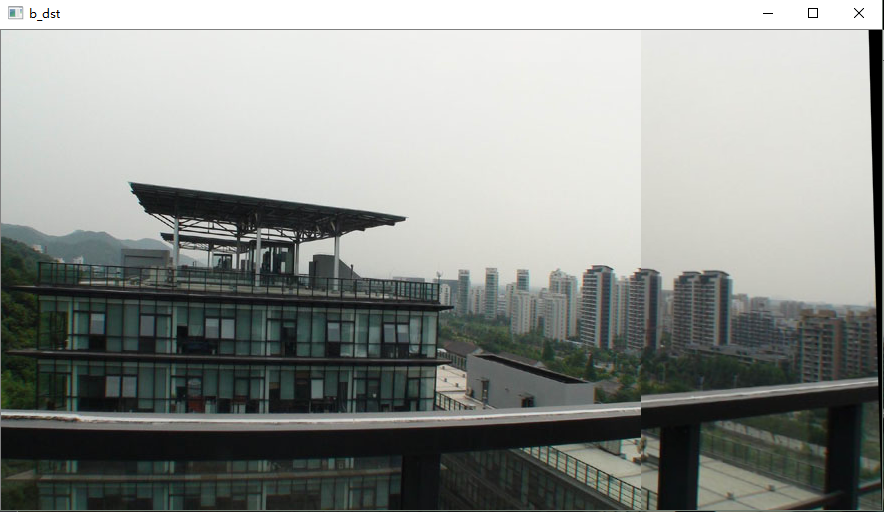

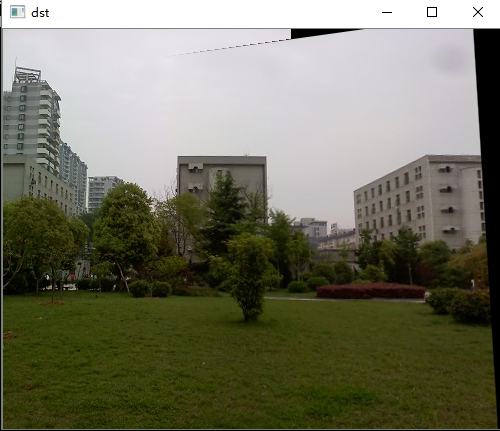

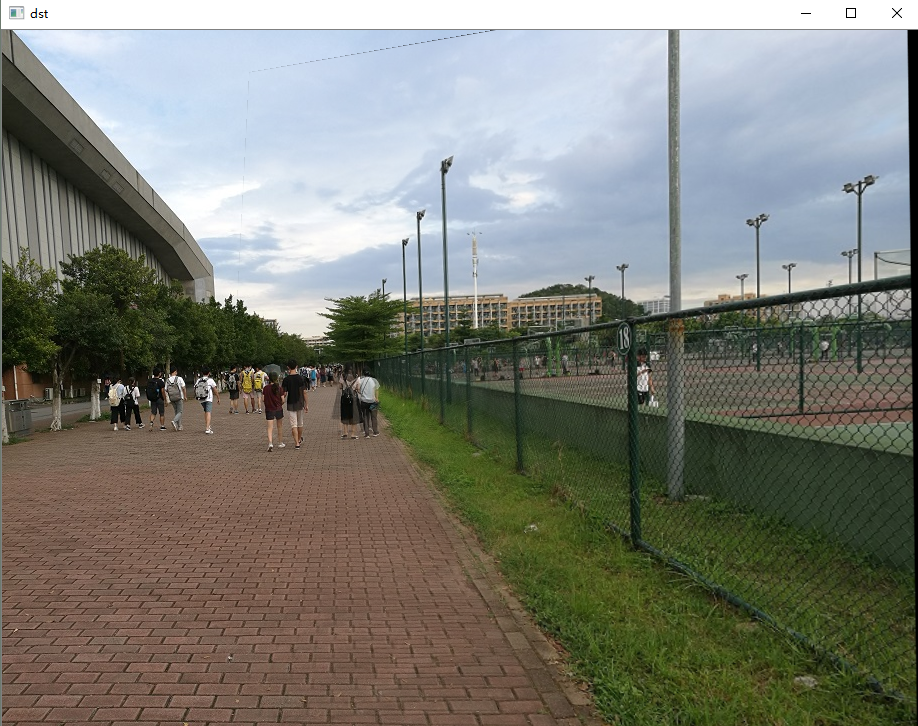

3. Image copy

Copying is simple: copy the left image directly onto the registered image.

```cpp
// Create the output image; its size has to be computed in advance
int dst_width = imageTransform1.cols;  // the right-most warped x is the panorama width
int dst_height = image02.rows;

Mat dst(dst_height, dst_width, CV_8UC3);
dst.setTo(0);

imageTransform1.copyTo(dst(Rect(0, 0, imageTransform1.cols, imageTransform1.rows)));
image02.copyTo(dst(Rect(0, 0, image02.cols, image02.rows)));

imshow("b_dst", dst);
```

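4. Image blending (removing the seam)

As the image above shows, the stitch does not look natural. The cause lies at the junction of the two images: differences in lighting and color make the transition poor, so it needs special treatment. The approach here is weighted blending. Across the overlap, the result fades gradually from the first image to the second, i.e. the pixel values of the two images in the overlap are added with weights to form the new image.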

```cpp
// Smooth the junction of the two images so that the stitch looks natural
void OptimizeSeam(Mat& img1, Mat& trans, Mat& dst)
{
    // Left edge of the overlap; clamped at 0 so the column index stays in range
    int start = MAX(0, (int)MIN(corners.left_top.x, corners.left_bottom.x));

    double processWidth = img1.cols - start; // width of the overlap
    int rows = dst.rows;
    int cols = img1.cols; // number of pixel columns (the channel offset is applied via j * 3)
    double alpha = 1; // weight of the img1 pixel
    for (int i = 0; i < rows; i++)
    {
        uchar* p = img1.ptr<uchar>(i); // pointer to the start of row i
        uchar* t = trans.ptr<uchar>(i);
        uchar* d = dst.ptr<uchar>(i);
        for (int j = start; j < cols; j++)
        {
            // A black pixel in trans means no warped data there: copy img1 as is
            if (t[j * 3] == 0 && t[j * 3 + 1] == 0 && t[j * 3 + 2] == 0)
            {
                alpha = 1;
            }
            else
            {
                // The weight of img1 falls linearly with the distance from the
                // left edge of the overlap; this works well in practice
                alpha = (processWidth - (j - start)) / processWidth;
            }

            d[j * 3] = p[j * 3] * alpha + t[j * 3] * (1 - alpha);
            d[j * 3 + 1] = p[j * 3 + 1] * alpha + t[j * 3 + 1] * (1 - alpha);
            d[j * 3 + 2] = p[j * 3 + 2] * alpha + t[j * 3 + 2] * (1 - alpha);
        }
    }
}
```
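The weighting inside the loop can be reduced to two small functions. This is only a sketch of the same linear fade in plain C++; `leftWeight` and `blend` are hypothetical helper names, and the black-pixel test is passed in as a flag instead of being read from the image.

```cpp
#include <cassert>

// Weight of the left image at column j of an overlap that starts at `start`
// and is `width` columns wide. It falls linearly from 1 at the left edge.
double leftWeight(int j, int start, double width) {
    assert(width > 0.0);
    return (width - (j - start)) / width;
}

// Blend one channel value. warpedIsEmpty marks a black (unfilled) warped
// pixel, in which case the left pixel is kept unchanged.
unsigned char blend(unsigned char left, unsigned char warped,
                    double alpha, bool warpedIsEmpty) {
    if (warpedIsEmpty) alpha = 1.0;
    return static_cast<unsigned char>(left * alpha + warped * (1.0 - alpha));
}
```

With an overlap that starts at column 100 and is 200 columns wide, the left image has weight 1 at column 100, 0.5 at column 200 and 0.25 at column 250, so the warped image takes over gradually towards the right.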

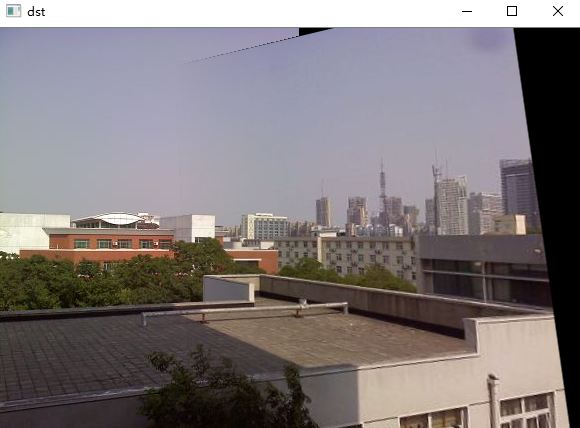

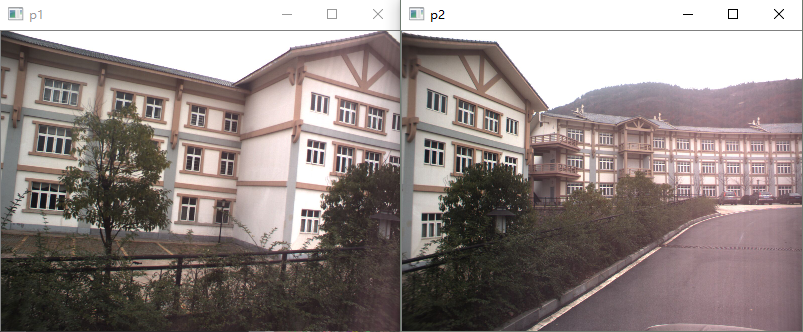

Try a few more image pairs to check the stitching quality.

Test 1

Test 2

Test 3

Finally, here is the complete SURF-based stitching code.

```cpp
#include "opencv2/highgui/highgui.hpp"
#include "opencv2/nonfree/nonfree.hpp"
#include "opencv2/legacy/legacy.hpp"
#include <iostream>

using namespace cv;
using namespace std;

void OptimizeSeam(Mat& img1, Mat& trans, Mat& dst);

typedef struct
{
    Point2f left_top;
    Point2f left_bottom;
    Point2f right_top;
    Point2f right_bottom;
}four_corners_t;

four_corners_t corners;

// Project the four corners of src through H and store them in `corners`
void CalcCorners(const Mat& H, const Mat& src)
{
    double v2[] = { 0, 0, 1 }; // top-left corner
    double v1[3]; // transformed coordinates
    Mat V2 = Mat(3, 1, CV_64FC1, v2); // column vector
    Mat V1 = Mat(3, 1, CV_64FC1, v1); // column vector

    V1 = H * V2;
    // top-left (0, 0, 1)
    cout << "V2: " << V2 << endl;
    cout << "V1: " << V1 << endl;
    corners.left_top.x = v1[0] / v1[2];
    corners.left_top.y = v1[1] / v1[2];

    // bottom-left (0, src.rows, 1)
    v2[0] = 0;
    v2[1] = src.rows;
    v2[2] = 1;
    V2 = Mat(3, 1, CV_64FC1, v2); // column vector
    V1 = Mat(3, 1, CV_64FC1, v1); // column vector
    V1 = H * V2;
    corners.left_bottom.x = v1[0] / v1[2];
    corners.left_bottom.y = v1[1] / v1[2];

    // top-right (src.cols, 0, 1)
    v2[0] = src.cols;
    v2[1] = 0;
    v2[2] = 1;
    V2 = Mat(3, 1, CV_64FC1, v2); // column vector
    V1 = Mat(3, 1, CV_64FC1, v1); // column vector
    V1 = H * V2;
    corners.right_top.x = v1[0] / v1[2];
    corners.right_top.y = v1[1] / v1[2];

    // bottom-right (src.cols, src.rows, 1)
    v2[0] = src.cols;
    v2[1] = src.rows;
    v2[2] = 1;
    V2 = Mat(3, 1, CV_64FC1, v2); // column vector
    V1 = Mat(3, 1, CV_64FC1, v1); // column vector
    V1 = H * V2;
    corners.right_bottom.x = v1[0] / v1[2];
    corners.right_bottom.y = v1[1] / v1[2];
}

int main(int argc, char *argv[])
{
    Mat image01 = imread("g5.jpg", 1); // right image
    Mat image02 = imread("g4.jpg", 1); // left image
    if (image01.empty() || image02.empty())
    {
        cout << "Can't read image" << endl;
        return -1;
    }
    imshow("p2", image01);
    imshow("p1", image02);

    // Convert to grayscale
    Mat image1, image2;
    cvtColor(image01, image1, CV_RGB2GRAY);
    cvtColor(image02, image2, CV_RGB2GRAY);

    // Detect keypoints (2000 is the Hessian threshold passed to SURF)
    SurfFeatureDetector Detector(2000);
    vector<KeyPoint> keyPoint1, keyPoint2;
    Detector.detect(image1, keyPoint1);
    Detector.detect(image2, keyPoint2);

    // Compute descriptors for the matching step below
    SurfDescriptorExtractor Descriptor;
    Mat imageDesc1, imageDesc2;
    Descriptor.compute(image1, keyPoint1, imageDesc1);
    Descriptor.compute(image2, keyPoint2, imageDesc2);

    FlannBasedMatcher matcher;
    vector<vector<DMatch> > matchePoints;
    vector<DMatch> GoodMatchePoints;

    // image1's descriptors form the train set; image2's descriptors are the queries
    vector<Mat> train_desc(1, imageDesc1);
    matcher.add(train_desc);
    matcher.train();

    matcher.knnMatch(imageDesc2, matchePoints, 2);
    cout << "total match points: " << matchePoints.size() << endl;

    // Lowe's ratio test: keep only the clearly unambiguous matches
    for (size_t i = 0; i < matchePoints.size(); i++)
    {
        if (matchePoints[i].size() < 2) continue; // need two neighbours to compare
        if (matchePoints[i][0].distance < 0.4 * matchePoints[i][1].distance)
        {
            GoodMatchePoints.push_back(matchePoints[i][0]);
        }
    }

    Mat first_match;
    drawMatches(image02, keyPoint2, image01, keyPoint1, GoodMatchePoints, first_match);
    imshow("first_match ", first_match);

    vector<Point2f> imagePoints1, imagePoints2;

    for (size_t i = 0; i < GoodMatchePoints.size(); i++)
    {
        imagePoints2.push_back(keyPoint2[GoodMatchePoints[i].queryIdx].pt);
        imagePoints1.push_back(keyPoint1[GoodMatchePoints[i].trainIdx].pt);
    }

    // Homography (3x3) that maps image 1 into image 2's coordinate frame
    Mat homo = findHomography(imagePoints1, imagePoints2, CV_RANSAC);
    // getPerspectiveTransform also yields a perspective matrix, but it takes exactly 4 point pairs and works slightly worse
    //Mat homo = getPerspectiveTransform(imagePoints1, imagePoints2);
    cout << "Homography:\n" << homo << endl << endl; // print the matrix

    // Compute the four corners of the registered image
    CalcCorners(homo, image01);
    cout << "left_top:" << corners.left_top << endl;
    cout << "left_bottom:" << corners.left_bottom << endl;
    cout << "right_top:" << corners.right_top << endl;
    cout << "right_bottom:" << corners.right_bottom << endl;

    // Image registration
    Mat imageTransform1, imageTransform2;
    warpPerspective(image01, imageTransform1, homo, Size(MAX(corners.right_top.x, corners.right_bottom.x), image02.rows));
    //warpPerspective(image01, imageTransform2, adjustMat*homo, Size(image02.cols*1.3, image02.rows*1.8));
    imshow("warped by the homography", imageTransform1);
    imwrite("trans1.jpg", imageTransform1);

    // Create the output image; its size has to be computed in advance
    int dst_width = imageTransform1.cols;  // the right-most warped x is the panorama width
    int dst_height = image02.rows;

    Mat dst(dst_height, dst_width, CV_8UC3);
    dst.setTo(0);

    imageTransform1.copyTo(dst(Rect(0, 0, imageTransform1.cols, imageTransform1.rows)));
    image02.copyTo(dst(Rect(0, 0, image02.cols, image02.rows)));

    imshow("b_dst", dst);

    OptimizeSeam(image02, imageTransform1, dst);

    imshow("dst", dst);
    imwrite("dst.jpg", dst);

    waitKey();

    return 0;
}

// Smooth the junction of the two images so that the stitch looks natural
void OptimizeSeam(Mat& img1, Mat& trans, Mat& dst)
{
    // Left edge of the overlap; clamped at 0 so the column index stays in range
    int start = MAX(0, (int)MIN(corners.left_top.x, corners.left_bottom.x));

    double processWidth = img1.cols - start; // width of the overlap
    int rows = dst.rows;
    int cols = img1.cols; // number of pixel columns (the channel offset is applied via j * 3)
    double alpha = 1; // weight of the img1 pixel
    for (int i = 0; i < rows; i++)
    {
        uchar* p = img1.ptr<uchar>(i); // pointer to the start of row i
        uchar* t = trans.ptr<uchar>(i);
        uchar* d = dst.ptr<uchar>(i);
        for (int j = start; j < cols; j++)
        {
            // A black pixel in trans means no warped data there: copy img1 as is
            if (t[j * 3] == 0 && t[j * 3 + 1] == 0 && t[j * 3 + 2] == 0)
            {
                alpha = 1;
            }
            else
            {
                // The weight of img1 falls linearly with the distance from the
                // left edge of the overlap; this works well in practice
                alpha = (processWidth - (j - start)) / processWidth;
            }

            d[j * 3] = p[j * 3] * alpha + t[j * 3] * (1 - alpha);
            d[j * 3 + 1] = p[j * 3 + 1] * alpha + t[j * 3 + 1] * (1 - alpha);
            d[j * 3 + 2] = p[j * 3 + 2] * alpha + t[j * 3 + 2] * (1 - alpha);
        }
    }
}
```

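ORB-based image stitching

Stitching with ORB follows essentially the same approach as above; only feature extraction and matching differ slightly. I won't repeat the explanation, so here is the code and the result.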

```cpp
#include "opencv2/highgui/highgui.hpp"
#include "opencv2/nonfree/nonfree.hpp"
#include "opencv2/legacy/legacy.hpp"
#include <iostream>

using namespace cv;
using namespace std;

void OptimizeSeam(Mat& img1, Mat& trans, Mat& dst);

typedef struct
{
    Point2f left_top;
    Point2f left_bottom;
    Point2f right_top;
    Point2f right_bottom;
}four_corners_t;

four_corners_t corners;

// Project the four corners of src through H and store them in `corners`
void CalcCorners(const Mat& H, const Mat& src)
{
    double v2[] = { 0, 0, 1 }; // top-left corner
    double v1[3]; // transformed coordinates
    Mat V2 = Mat(3, 1, CV_64FC1, v2); // column vector
    Mat V1 = Mat(3, 1, CV_64FC1, v1); // column vector

    V1 = H * V2;
    // top-left (0, 0, 1)
    cout << "V2: " << V2 << endl;
    cout << "V1: " << V1 << endl;
    corners.left_top.x = v1[0] / v1[2];
    corners.left_top.y = v1[1] / v1[2];

    // bottom-left (0, src.rows, 1)
    v2[0] = 0;
    v2[1] = src.rows;
    v2[2] = 1;
    V2 = Mat(3, 1, CV_64FC1, v2); // column vector
    V1 = Mat(3, 1, CV_64FC1, v1); // column vector
    V1 = H * V2;
    corners.left_bottom.x = v1[0] / v1[2];
    corners.left_bottom.y = v1[1] / v1[2];

    // top-right (src.cols, 0, 1)
    v2[0] = src.cols;
    v2[1] = 0;
    v2[2] = 1;
    V2 = Mat(3, 1, CV_64FC1, v2); // column vector
    V1 = Mat(3, 1, CV_64FC1, v1); // column vector
    V1 = H * V2;
    corners.right_top.x = v1[0] / v1[2];
    corners.right_top.y = v1[1] / v1[2];

    // bottom-right (src.cols, src.rows, 1)
    v2[0] = src.cols;
    v2[1] = src.rows;
    v2[2] = 1;
    V2 = Mat(3, 1, CV_64FC1, v2); // column vector
    V1 = Mat(3, 1, CV_64FC1, v1); // column vector
    V1 = H * V2;
    corners.right_bottom.x = v1[0] / v1[2];
    corners.right_bottom.y = v1[1] / v1[2];
}

int main(int argc, char *argv[])
{
    Mat image01 = imread("t1.jpg", 1); // right image
    Mat image02 = imread("t2.jpg", 1); // left image
    if (image01.empty() || image02.empty())
    {
        cout << "Can't read image" << endl;
        return -1;
    }
    imshow("p2", image01);
    imshow("p1", image02);

    // Convert to grayscale
    Mat image1, image2;
    cvtColor(image01, image1, CV_RGB2GRAY);
    cvtColor(image02, image2, CV_RGB2GRAY);

    // Detect keypoints (keep at most 3000 ORB features)
    OrbFeatureDetector orbDetector(3000);
    vector<KeyPoint> keyPoint1, keyPoint2;
    orbDetector.detect(image1, keyPoint1);
    orbDetector.detect(image2, keyPoint2);

    // Compute descriptors for the matching step below
    OrbDescriptorExtractor orbDescriptor;
    Mat imageDesc1, imageDesc2;
    orbDescriptor.compute(image1, keyPoint1, imageDesc1);
    orbDescriptor.compute(image2, keyPoint2, imageDesc2);

    // ORB descriptors are binary, so index them with LSH and Hamming distance
    flann::Index flannIndex(imageDesc1, flann::LshIndexParams(12, 20, 2), cvflann::FLANN_DIST_HAMMING);

    vector<DMatch> GoodMatchePoints;

    Mat matchIndex(imageDesc2.rows, 2, CV_32SC1), matchDistance(imageDesc2.rows, 2, CV_32FC1);
    flannIndex.knnSearch(imageDesc2, matchIndex, matchDistance, 2, flann::SearchParams());

    // Lowe's ratio test: keep only the clearly unambiguous matches
    for (int i = 0; i < matchDistance.rows; i++)
    {
        if (matchDistance.at<float>(i, 0) < 0.4 * matchDistance.at<float>(i, 1))
        {
            DMatch dmatches(i, matchIndex.at<int>(i, 0), matchDistance.at<float>(i, 0));
            GoodMatchePoints.push_back(dmatches);
        }
    }

    Mat first_match;
    drawMatches(image02, keyPoint2, image01, keyPoint1, GoodMatchePoints, first_match);
    imshow("first_match ", first_match);

    vector<Point2f> imagePoints1, imagePoints2;

    for (size_t i = 0; i < GoodMatchePoints.size(); i++)
    {
        imagePoints2.push_back(keyPoint2[GoodMatchePoints[i].queryIdx].pt);
        imagePoints1.push_back(keyPoint1[GoodMatchePoints[i].trainIdx].pt);
    }

    // Homography (3x3) that maps image 1 into image 2's coordinate frame
    Mat homo = findHomography(imagePoints1, imagePoints2, CV_RANSAC);
    // getPerspectiveTransform also yields a perspective matrix, but it takes exactly 4 point pairs and works slightly worse
    //Mat homo = getPerspectiveTransform(imagePoints1, imagePoints2);
    cout << "Homography:\n" << homo << endl << endl; // print the matrix

    // Compute the four corners of the registered image
    CalcCorners(homo, image01);
    cout << "left_top:" << corners.left_top << endl;
    cout << "left_bottom:" << corners.left_bottom << endl;
    cout << "right_top:" << corners.right_top << endl;
    cout << "right_bottom:" << corners.right_bottom << endl;

    // Image registration
    Mat imageTransform1, imageTransform2;
    warpPerspective(image01, imageTransform1, homo, Size(MAX(corners.right_top.x, corners.right_bottom.x), image02.rows));
    //warpPerspective(image01, imageTransform2, adjustMat*homo, Size(image02.cols*1.3, image02.rows*1.8));
    imshow("warped by the homography", imageTransform1);
    imwrite("trans1.jpg", imageTransform1);

    // Create the output image; its size has to be computed in advance
    int dst_width = imageTransform1.cols;  // the right-most warped x is the panorama width
    int dst_height = image02.rows;

    Mat dst(dst_height, dst_width, CV_8UC3);
    dst.setTo(0);

    imageTransform1.copyTo(dst(Rect(0, 0, imageTransform1.cols, imageTransform1.rows)));
    image02.copyTo(dst(Rect(0, 0, image02.cols, image02.rows)));

    imshow("b_dst", dst);

    OptimizeSeam(image02, imageTransform1, dst);

    imshow("dst", dst);
    imwrite("dst.jpg", dst);

    waitKey();

    return 0;
}

// Smooth the junction of the two images so that the stitch looks natural
void OptimizeSeam(Mat& img1, Mat& trans, Mat& dst)
{
    // Left edge of the overlap; clamped at 0 so the column index stays in range
    int start = MAX(0, (int)MIN(corners.left_top.x, corners.left_bottom.x));

    double processWidth = img1.cols - start; // width of the overlap
    int rows = dst.rows;
    int cols = img1.cols; // number of pixel columns (the channel offset is applied via j * 3)
    double alpha = 1; // weight of the img1 pixel
    for (int i = 0; i < rows; i++)
    {
        uchar* p = img1.ptr<uchar>(i); // pointer to the start of row i
        uchar* t = trans.ptr<uchar>(i);
        uchar* d = dst.ptr<uchar>(i);
        for (int j = start; j < cols; j++)
        {
            // A black pixel in trans means no warped data there: copy img1 as is
            if (t[j * 3] == 0 && t[j * 3 + 1] == 0 && t[j * 3 + 2] == 0)
            {
                alpha = 1;
            }
            else
            {
                // The weight of img1 falls linearly with the distance from the
                // left edge of the overlap; this works well in practice
                alpha = (processWidth - (j - start)) / processWidth;
            }

            d[j * 3] = p[j * 3] * alpha + t[j * 3] * (1 - alpha);
            d[j * 3 + 1] = p[j * 3 + 1] * alpha + t[j * 3 + 1] * (1 - alpha);
            d[j * 3 + 2] = p[j * 3 + 2] * alpha + t[j * 3 + 2] * (1 - alpha);
        }
    }
}
```
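The ORB listing indexes its descriptors with `FLANN_DIST_HAMMING` because ORB descriptors are binary strings, and binary strings are compared by counting the bits that differ. Below is a minimal sketch of that distance in plain C++, with descriptors modelled as byte vectors (an assumption made for illustration; OpenCV stores them as rows of a `Mat`). `hammingDistance` is an illustrative name, not an OpenCV function.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <vector>

// Number of differing bits between two equal-length binary descriptors.
int hammingDistance(const std::vector<unsigned char>& a,
                    const std::vector<unsigned char>& b) {
    assert(a.size() == b.size());
    int bits = 0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        // XOR leaves a 1 wherever the two bytes disagree; count those bits.
        std::bitset<8> diff(static_cast<unsigned long>(a[i] ^ b[i]));
        bits += static_cast<int>(diff.count());
    }
    return bits;
}
```

Because the distance is an integer bit count, it is cheap to compute compared with the floating-point distances used for SURF and SIFT descriptors.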

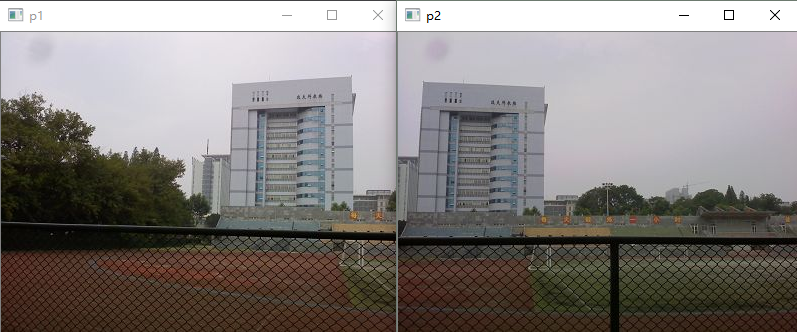

Here is the stitching result, which I think is quite good.

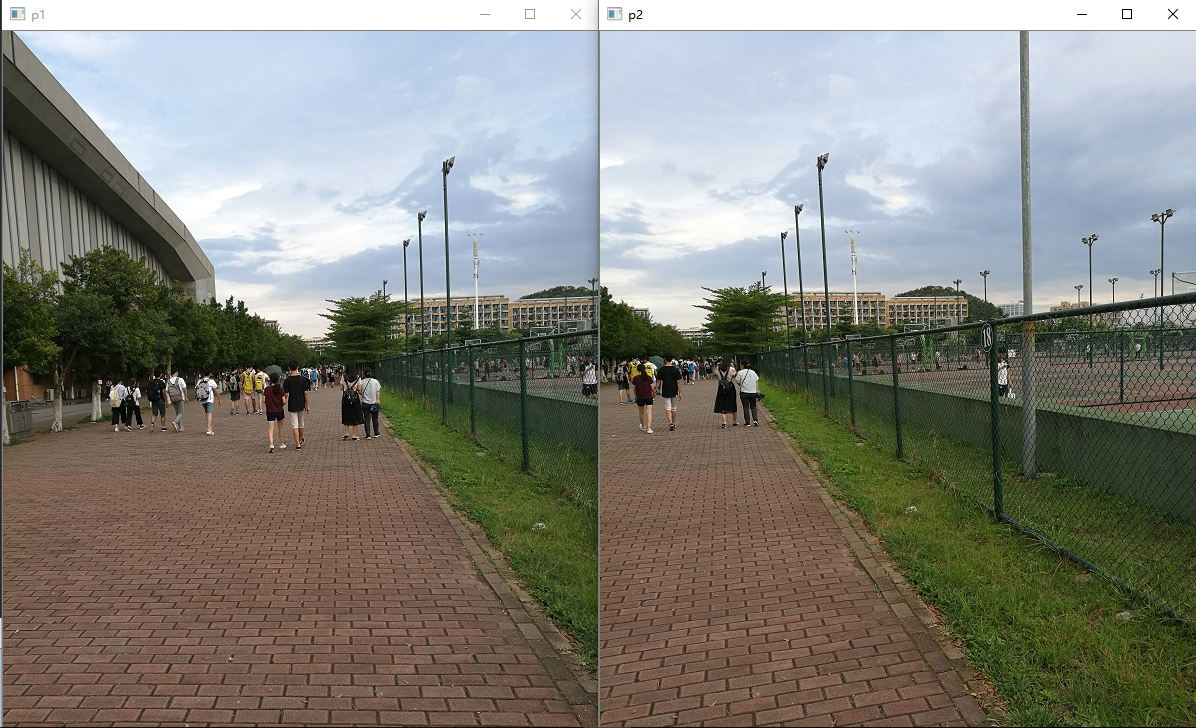

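Now look at this pair of images. The result shows ghosting. Why? Because the people in the two images moved between shots! So when stitching, try to use images of a static scene and avoid moving objects that disturb the stitch.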

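OpenCV's built-in stitching algorithm: stitch

OpenCV actually ships its own image stitching implementation, and its results are very good. However, the implementation is complex and the code base is large, so for small applications it can feel like using a sledgehammer to crack a nut. While reading the stitch source recently, I found a few interesting points.

1. The feature detector chosen by OpenCV stitch

I had long wondered which algorithm OpenCV's stitcher uses for feature detection: ORB, SIFT or SURF? Reading the source finally gave me the answer.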

```cpp
#ifdef HAVE_OPENCV_NONFREE
    stitcher.setFeaturesFinder(new detail::SurfFeaturesFinder());
#else
    stitcher.setFeaturesFinder(new detail::OrbFeaturesFinder());
#endif
```

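In the createDefault function (the default settings), SURF is the first choice and ORB is only the fallback, used when the NONFREE module is unavailable. The OpenCV developers presumably made this choice after weighing the trade-offs, which suggests that SURF tends to give better results for image stitching.

2. How OpenCV stitch obtains its matches

The code below is the feature matching part of the OpenCV stitch source. The author matches in two passes: first the features of image 1 are matched against image 2 and filtered (1->2), then the features of image 2 are matched against image 1 (2->1), and only matches not already found are added. This differs from the usual single pass. The second pass picks up matches that the first one missed, and with more good matches findHomography generally has more to work with when estimating the transform. The idea is worth borrowing.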

```cpp
matches_info.matches.clear();

Ptr<flann::IndexParams> indexParams = new flann::KDTreeIndexParams();
Ptr<flann::SearchParams> searchParams = new flann::SearchParams();

if (features2.descriptors.depth() == CV_8U)
{
    indexParams->setAlgorithm(cvflann::FLANN_INDEX_LSH);
    searchParams->setAlgorithm(cvflann::FLANN_INDEX_LSH);
}

FlannBasedMatcher matcher(indexParams, searchParams);
vector< vector<DMatch> > pair_matches;
MatchesSet matches;

// Find 1->2 matches
matcher.knnMatch(features1.descriptors, features2.descriptors, pair_matches, 2);
for (size_t i = 0; i < pair_matches.size(); ++i)
{
    if (pair_matches[i].size() < 2)
        continue;
    const DMatch& m0 = pair_matches[i][0];
    const DMatch& m1 = pair_matches[i][1];
    if (m0.distance < (1.f - match_conf_) * m1.distance)
    {
        matches_info.matches.push_back(m0);
        matches.insert(make_pair(m0.queryIdx, m0.trainIdx));
    }
}
LOG("\n1->2 matches: " << matches_info.matches.size() << endl);

// Find 2->1 matches
pair_matches.clear();
matcher.knnMatch(features2.descriptors, features1.descriptors, pair_matches, 2);
for (size_t i = 0; i < pair_matches.size(); ++i)
{
    if (pair_matches[i].size() < 2)
        continue;
    const DMatch& m0 = pair_matches[i][0];
    const DMatch& m1 = pair_matches[i][1];
    if (m0.distance < (1.f - match_conf_) * m1.distance)
        if (matches.find(make_pair(m0.trainIdx, m0.queryIdx)) == matches.end())
            matches_info.matches.push_back(DMatch(m0.trainIdx, m0.queryIdx, m0.distance));
}
LOG("1->2 & 2->1 matches: " << matches_info.matches.size() << endl);
```
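Stripped of the OpenCV types, the merging logic of the two passes comes down to a set of index pairs. The sketch below assumes that the ratio test has already been applied in each direction; `Match` is a hypothetical stand-in for `cv::DMatch` and `mergeTwoWay` is an illustrative name.

```cpp
#include <cassert>
#include <cstddef>
#include <set>
#include <utility>
#include <vector>

// Hypothetical stand-in for cv::DMatch.
struct Match {
    int queryIdx;
    int trainIdx;
    float distance;
};

// forward:  accepted matches with image 1 as query and image 2 as train.
// backward: accepted matches with image 2 as query and image 1 as train.
// Returns every match as (image 1 index, image 2 index) without duplicates.
std::vector<Match> mergeTwoWay(const std::vector<Match>& forward,
                               const std::vector<Match>& backward) {
    std::vector<Match> merged(forward);
    std::set<std::pair<int, int> > seen;
    for (std::size_t i = 0; i < forward.size(); ++i)
        seen.insert(std::make_pair(forward[i].queryIdx, forward[i].trainIdx));

    for (std::size_t i = 0; i < backward.size(); ++i) {
        // Swap the indices so the pair reads (image 1, image 2) again.
        std::pair<int, int> key(backward[i].trainIdx, backward[i].queryIdx);
        if (seen.insert(key).second)  // true only if the pair was not seen yet
            merged.push_back(Match{key.first, key.second, backward[i].distance});
    }
    assert(merged.size() <= forward.size() + backward.size());
    return merged;
}
```

Swapping the indices of the second pass matters: every stored match then has the same orientation, so the later conversion to point pairs can treat them uniformly.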

Following the two-pass idea from the OpenCV source, I rewrote my original matching code. In my experiments it does yield more matches than a single pass.

```cpp
// MatchesSet is not defined in the original snippet; a set of index pairs is assumed here (requires <set>)
typedef set<pair<int, int> > MatchesSet;

// Detect keypoints. For SIFT the first constructor argument is the number of
// features to keep (here the best 1000), not a Hessian threshold
SiftFeatureDetector Detector(1000);
vector<KeyPoint> keyPoint1, keyPoint2;
Detector.detect(image1, keyPoint1);
Detector.detect(image2, keyPoint2);

// Compute descriptors for the matching step below
SiftDescriptorExtractor Descriptor;
Mat imageDesc1, imageDesc2;
Descriptor.compute(image1, keyPoint1, imageDesc1);
Descriptor.compute(image2, keyPoint2, imageDesc2);

FlannBasedMatcher matcher;
vector<vector<DMatch> > matchePoints;
vector<DMatch> GoodMatchePoints;

MatchesSet matches;

vector<Mat> train_desc(1, imageDesc1);
matcher.add(train_desc);
matcher.train();

matcher.knnMatch(imageDesc2, matchePoints, 2);

// First pass, Lowe's ratio test: keep only the clearly unambiguous matches
for (size_t i = 0; i < matchePoints.size(); i++)
{
    if (matchePoints[i].size() < 2) continue; // need two neighbours to compare
    if (matchePoints[i][0].distance < 0.4 * matchePoints[i][1].distance)
    {
        GoodMatchePoints.push_back(matchePoints[i][0]);
        matches.insert(make_pair(matchePoints[i][0].queryIdx, matchePoints[i][0].trainIdx));
    }
}
cout << "\n1->2 matches: " << GoodMatchePoints.size() << endl;

#if 1

// Second pass: swap the roles of the two descriptor sets
FlannBasedMatcher matcher2;
matchePoints.clear();
vector<Mat> train_desc2(1, imageDesc2);
matcher2.add(train_desc2);
matcher2.train();

matcher2.knnMatch(imageDesc1, matchePoints, 2);
// Lowe's ratio test again; add only the pairs that the first pass did not find
for (size_t i = 0; i < matchePoints.size(); i++)
{
    if (matchePoints[i].size() < 2) continue;
    if (matchePoints[i][0].distance < 0.4 * matchePoints[i][1].distance)
    {
        if (matches.find(make_pair(matchePoints[i][0].trainIdx, matchePoints[i][0].queryIdx)) == matches.end())
        {
            GoodMatchePoints.push_back(DMatch(matchePoints[i][0].trainIdx, matchePoints[i][0].queryIdx, matchePoints[i][0].distance));
        }
    }
}
cout << "1->2 & 2->1 matches: " << GoodMatchePoints.size() << endl;
#endif
```

Finally, let's look at the result of OpenCV stitch. It is fairly slow, but the result is very good.

```cpp
#include <iostream>
#include <opencv2/core/core.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/imgproc/imgproc.hpp>
#include <opencv2/stitching/stitcher.hpp>
using namespace std;
using namespace cv;

bool try_use_gpu = false;
vector<Mat> imgs;
string result_name = "dst1.jpg";

int main(int argc, char * argv[])
{
    Mat img1 = imread("34.jpg");
    Mat img2 = imread("35.jpg");

    // Check the inputs before showing them
    if (img1.empty() || img2.empty())
    {
        cout << "Can't read image" << endl;
        return -1;
    }

    imshow("p1", img1);
    imshow("p2", img2);

    imgs.push_back(img1);
    imgs.push_back(img2);

    Stitcher stitcher = Stitcher::createDefault(try_use_gpu);
    // Stitch the images with the stitch function
    Mat pano;
    Stitcher::Status status = stitcher.stitch(imgs, pano);
    if (status != Stitcher::OK)
    {
        cout << "Can't stitch images, error code = " << int(status) << endl;
        return -1;
    }
    imwrite(result_name, pano);
    // Show the resulting panorama
    imshow("panorama", pano);
    waitKey();
    return 0;
}
```
