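There are several ways to monitor containers. On a single Docker host, docker stats or the cAdvisor web UI is enough. For a container orchestrator such as Kubernetes, single-host monitoring no longer meets the need, so the Kubernetes ecosystem has produced a range of monitoring solutions: the commonly used Dashboard, the cAdvisor + Heapster + InfluxDB + Grafana stack, Prometheus with Grafana, and others. This article focuses on the cAdvisor + Heapster stack.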

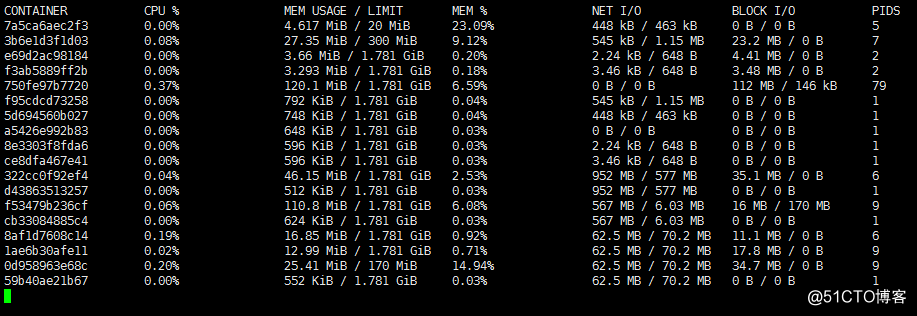

    # docker stats

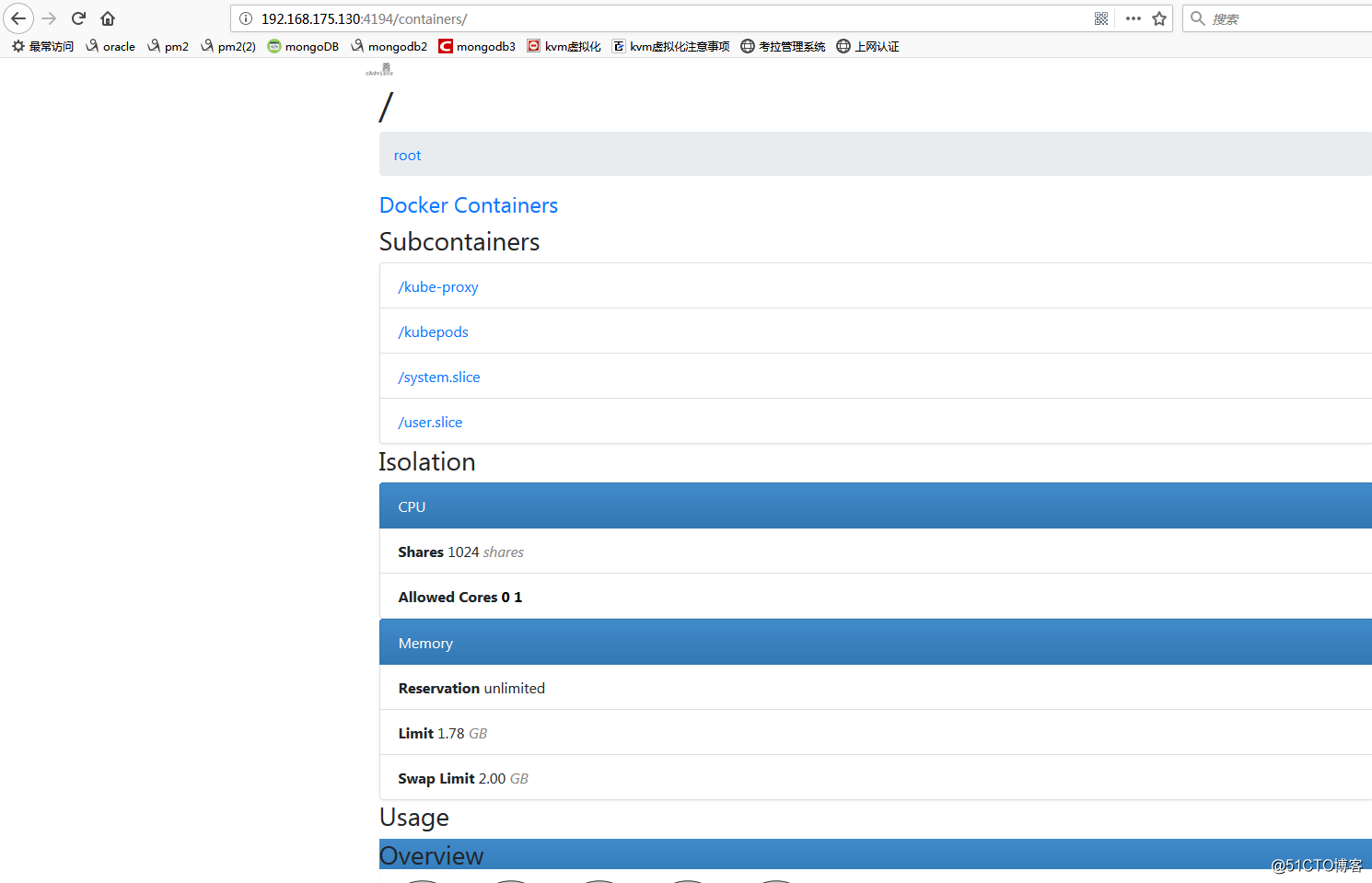
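For scripted checks, the same figures can be collected non-interactively. The following is a minimal sketch, assuming the Docker CLI is on the PATH and a daemon is running. `--no-stream` and `--format` are standard `docker stats` options; the function names `parse_stats` and `collect_stats` are hypothetical helpers introduced only for illustration.

```python
import subprocess

# Tab-separated Go template understood by `docker stats --format`.
STATS_FORMAT = "{{.Name}}\t{{.CPUPerc}}\t{{.MemUsage}}"


def parse_stats(output):
    """Turn tab-separated `docker stats` lines into a list of dicts."""
    rows = []
    for line in output.splitlines():
        if not line.strip():
            continue  # skip blank lines
        name, cpu, mem = line.split("\t")
        rows.append({
            "name": name,
            "cpu_percent": float(cpu.rstrip("%")),  # "0.07%" -> 0.07
            "mem_usage": mem,                        # kept as printed by Docker
        })
    return rows


def collect_stats():
    """Take one snapshot of all running containers (needs a Docker daemon)."""
    result = subprocess.run(
        ["docker", "stats", "--no-stream", "--format", STATS_FORMAT],
        check=True, capture_output=True, text=True,
    )
    return parse_stats(result.stdout)
```

Calling `collect_stats()` on the Docker host returns one dictionary per running container, which is easier to feed into a script than the interactive table.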

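Google's cAdvisor is another well-known open-source container monitoring tool. On a Kubernetes node it does not have to be deployed separately: it is built into the kubelet and starts with it. Its web UI shows detailed performance data for the node and its containers (CPU, memory, network, disk, filesystem, and so on). In Kubernetes releases of this era the UI is reachable directly on port 4194 of the node.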

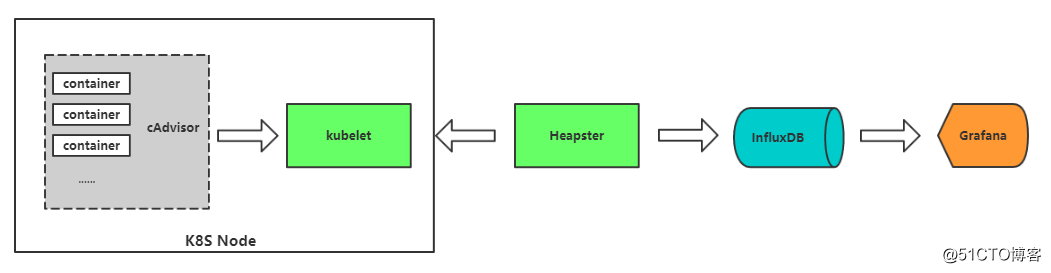

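I. How cluster monitoring works

cAdvisor: collects container data.

Heapster: collects cluster monitoring data and aggregates the data from all nodes.

InfluxDB: time-series database that stores the monitoring data.

Grafana: visualization.

As the diagram shows, cAdvisor gathers container data on each Kubernetes node: memory, CPU, and disk usage, network traffic, and so on. cAdvisor only holds recent data in memory and does not persist it, so Heapster aggregates the data from all nodes and hands it to InfluxDB for persistent storage. Grafana then serves as the web front end that displays it.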
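The data flow described above can be mimicked in a few lines. This is a toy model, not Heapster's actual code: the `Sample` type and all values are made up, and the measurement name `cpu/usage_rate` with a single `value` field is an assumption about how the data ends up in InfluxDB. It only illustrates the two steps in the middle of the pipeline: summing per-node samples into a cluster-level figure, and rendering a point in InfluxDB line protocol.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One reading, as a cAdvisor-like collector on a node might report it."""
    node: str
    metric: str        # e.g. "cpu/usage_rate" (assumed name)
    value: int
    timestamp_ns: int


def aggregate(samples):
    """Sum per-node samples into cluster-wide totals per (metric, timestamp)."""
    totals = {}
    for s in samples:
        key = (s.metric, s.timestamp_ns)
        totals[key] = totals.get(key, 0) + s.value
    return totals


def to_line_protocol(metric, value, timestamp_ns, tags):
    """Render one integer point as InfluxDB line protocol.

    Tag keys and values are assumed to contain no spaces or commas,
    so no escaping is performed here.
    """
    tag_str = ",".join(f"{k}={v}" for k, v in sorted(tags.items()))
    head = f"{metric},{tag_str}" if tag_str else metric
    return f"{head} value={value}i {timestamp_ns}"
```

In this sketch the collector's role is played by whatever produces the `Sample` objects, `aggregate` stands in for Heapster, and the strings from `to_line_protocol` are what a time-series database such as InfluxDB would ingest.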

II. Deploying cAdvisor + Heapster + InfluxDB + Grafana

① Download the release archive from the project site

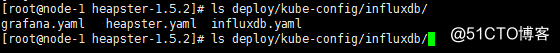

The YAML files for installing Heapster, InfluxDB, and Grafana ship with the Heapster source. Download v1.5.2 from the Heapster release page and unpack it:

    # wget https://github.com/kubernetes/heapster/archive/v1.5.2.zip
    # unzip v1.5.2.zip

② Edit the YAML files, for example the image paths (the default images are hosted abroad and cannot be pulled without a proxy)
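Editing image paths by hand is easy to get wrong when several manifests are involved. The following is a minimal sketch of doing it programmatically, assuming each image is written on a single `image:` line. The helper name `use_mirror` is hypothetical; the mirror prefix in the usage note is the one used in the manifests in this article.

```python
import re

# Matches "image: <registry path>/<name>:<tag>" (optionally preceded by "- ")
# and captures the leading part of the line plus the final "<name>:<tag>".
IMAGE_LINE = re.compile(
    r"^([ \t]*(?:-[ \t]+)?image:[ \t]*)\S*/([^/\s]+)[ \t]*$",
    re.MULTILINE,
)


def use_mirror(manifest, mirror):
    """Point every registry-qualified image at `mirror`, keeping name and tag.

    Images without a registry path (for example "nginx:1.0") are left alone.
    """
    prefix = mirror.rstrip("/")
    return IMAGE_LINE.sub(
        lambda m: f"{m.group(1)}{prefix}/{m.group(2)}",
        manifest,
    )
```

For example, `use_mirror(text, "registry.cn-hangzhou.aliyuncs.com/google-containers")` rewrites every registry-qualified image in `text` to that mirror while leaving indentation and tags untouched.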

Edit influxdb.yaml and apply it

Note: start the database first so that the data collected later can be stored.

    [root@node-1 monitor]# cat influxdb.yaml
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: monitoring-influxdb
      namespace: kube-system
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            task: monitoring
            k8s-app: influxdb
        spec:
          containers:
          - name: influxdb
            image: registry.cn-hangzhou.aliyuncs.com/google-containers/heapster-influxdb-amd64:v1.1.1
            volumeMounts:
            - mountPath: /data
              name: influxdb-storage
          volumes:
          - name: influxdb-storage
            emptyDir: {}
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        task: monitoring
        # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
        # If you are NOT using this as an addon, you should comment out this line.
        kubernetes.io/cluster-service: 'true'
        kubernetes.io/name: monitoring-influxdb
      name: monitoring-influxdb
      namespace: kube-system
    spec:
      type: NodePort
      ports:
      - nodePort: 31001
        port: 8086
        targetPort: 8086
      selector:
        k8s-app: influxdb

    # kubectl create -f influxdb.yaml
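Before moving on, it can be worth checking that InfluxDB answers on the NodePort (31001) defined in the Service. The sketch below uses the InfluxDB 1.x HTTP API endpoints `/ping` and `/query`. The node IP is a placeholder, and the database name `k8s` is an assumption about Heapster's default, so adjust both to the actual cluster.

```python
import json
import urllib.parse
import urllib.request

INFLUXDB_NODE_PORT = 31001  # nodePort from influxdb.yaml


def influx_url(node_ip, path, **params):
    """Build a URL for the InfluxDB HTTP API exposed through the NodePort."""
    url = f"http://{node_ip}:{INFLUXDB_NODE_PORT}{path}"
    if params:
        url += "?" + urllib.parse.urlencode(params)
    return url


def ping(node_ip, timeout=5):
    """Return True if InfluxDB answers /ping with 204 No Content."""
    with urllib.request.urlopen(influx_url(node_ip, "/ping"), timeout=timeout) as resp:
        return resp.status == 204


def show_measurements(node_ip, db="k8s", timeout=5):
    """List measurement names; db="k8s" is an assumed database name."""
    url = influx_url(node_ip, "/query", db=db, q="SHOW MEASUREMENTS")
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        body = json.load(resp)
    series = body["results"][0].get("series", [])
    return [row[0] for s in series for row in s["values"]]
```

`ping("<node-ip>")` should succeed as soon as the pod is running. `show_measurements` is expected to return an empty list until Heapster has been deployed and has written its first batch of data.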

Edit heapster.yaml

    [root@node-1 monitor]# cat heapster.yaml
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: heapster
      namespace: kube-system
    ---
    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1beta1
    metadata:
      name: heapster
    subjects:
      - kind: ServiceAccount
        name: heapster
        namespace: kube-system
    roleRef:
      kind: ClusterRole
      name: cluster-admin
      apiGroup: rbac.authorization.k8s.io
    ---
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: heapster
      namespace: kube-system
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            task: monitoring
            k8s-app: heapster
        spec:
          serviceAccountName: heapster
          containers:
          - name: heapster
            image: registry.cn-hangzhou.aliyuncs.com/google-containers/heapster-amd64:v1.4.2
            imagePullPolicy: IfNotPresent
            command:
            - /heapster
            - --source=kubernetes:https://kubernetes.default
            - --sink=influxdb:http://monitoring-influxdb:8086
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        task: monitoring
        # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
        # If you are NOT using this as an addon, you should comment out this line.
        kubernetes.io/cluster-service: 'true'
        kubernetes.io/name: Heapster
      name: heapster
      namespace: kube-system
    spec:
      ports:
      - port: 80
        targetPort: 8082
      selector:
        k8s-app: heapster

    # kubectl create -f heapster.yaml
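The Heapster Service is only reachable inside the cluster (it has no NodePort), so one way to check it from a workstation is through the API server proxy. The sketch below assumes `kubectl proxy` is running locally on its default port 8001 and that Heapster serves its model API under `/api/v1/model/`, as described in the Heapster documentation. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumes `kubectl proxy` is running locally on its default port 8001.
PROXY = "http://127.0.0.1:8001"
# Proxy path to the "heapster" Service in the kube-system namespace.
HEAPSTER_SVC = "/api/v1/namespaces/kube-system/services/heapster/proxy"


def model_url(*parts):
    """Build a Heapster model API URL routed through the API server proxy."""
    path = "/".join(p.strip("/") for p in parts)
    suffix = f"{path}/" if path else ""
    return f"{PROXY}{HEAPSTER_SVC}/api/v1/model/{suffix}"


def fetch(url, timeout=5):
    """GET a model API URL and decode the JSON body."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)
```

With the proxy running, `fetch(model_url("metrics"))` should list the cluster-level metric names once Heapster has completed at least one scrape; an error or an empty result usually points at the `--source` setting or at RBAC.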

Edit grafana.yaml

    [root@node-1 monitor]# cat grafana.yaml
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: monitoring-grafana
      namespace: kube-system
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            task: monitoring
            k8s-app: grafana
        spec:
          containers:
          - name: grafana
            image: registry.cn-hangzhou.aliyuncs.com/google-containers/heapster-grafana-amd64:v4.4.1
            ports:
            - containerPort: 3000
              protocol: TCP
            volumeMounts:
            - mountPath: /etc/ssl/certs
              name: ca-certificates
              readOnly: true
            - mountPath: /var
              name: grafana-storage
            env:
            - name: INFLUXDB_HOST
              value: monitoring-influxdb
            - name: GF_SERVER_HTTP_PORT
              value: "3000"
              # The following env variables are required to make Grafana accessible via
              # the kubernetes api-server proxy. On production clusters, we recommend
              # removing these env variables, setup auth for grafana, and expose the grafana
              # service using a LoadBalancer or a public IP.
            - name: GF_AUTH_BASIC_ENABLED
              value: "false"
            - name: GF_AUTH_ANONYMOUS_ENABLED
              value: "true"
            - name: GF_AUTH_ANONYMOUS_ORG_ROLE
              value: Admin
            - name: GF_SERVER_ROOT_URL
              # If you're only using the API Server proxy, set this value instead:
              # value: /api/v1/namespaces/kube-system/services/monitoring-grafana/proxy
              value: /
          volumes:
          - name: ca-certificates
            hostPath:
              path: /etc/ssl/certs
          - name: grafana-storage
            emptyDir: {}
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
        # If you are NOT using this as an addon, you should comment out this line.
        kubernetes.io/cluster-service: 'true'
        kubernetes.io/name: monitoring-grafana
      name: monitoring-grafana
      namespace: kube-system
    spec:
      # In a production setup, we recommend accessing Grafana through an external Loadbalancer
      # or through a public IP.
      # type: LoadBalancer
      # You could also use NodePort to expose the service at a randomly-generated port
      # type: NodePort
      type: NodePort
      ports:
      - nodePort: 30108
        port: 80
        targetPort: 3000
      selector:
        k8s-app: grafana

    # kubectl create -f grafana.yaml

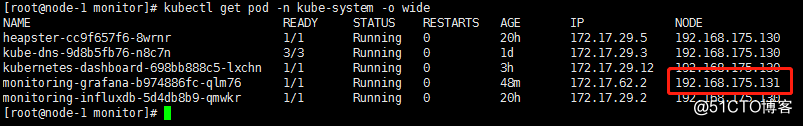

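Access from a browser:

Grafana is exposed on the NodePort set in grafana.yaml (30108). Check which node the Grafana pod is running on and use that node's IP: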

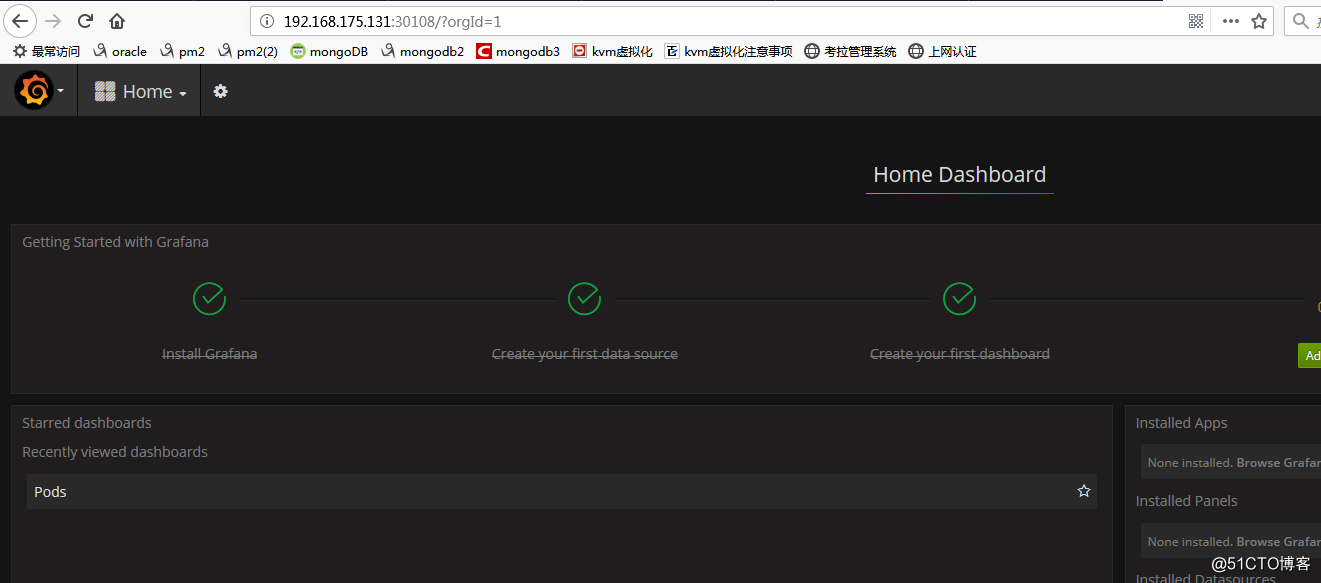
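The lookup can also be scripted. The sketch below is one possible approach, assuming `kubectl` is configured for the cluster: it reads the pod list as JSON, takes each Grafana pod's `status.hostIP`, and combines it with the NodePort from grafana.yaml. The function names are hypothetical.

```python
import json
import subprocess

GRAFANA_NODE_PORT = 30108  # nodePort from grafana.yaml


def grafana_urls(pod_list):
    """Map each scheduled Grafana pod to the URL of the node it runs on."""
    urls = {}
    for pod in pod_list.get("items", []):
        host_ip = pod.get("status", {}).get("hostIP")
        if host_ip:  # pods that are not scheduled yet have no hostIP
            urls[pod["metadata"]["name"]] = f"http://{host_ip}:{GRAFANA_NODE_PORT}/"
    return urls


def find_grafana():
    """Ask the cluster for pods labelled k8s-app=grafana (needs kubectl)."""
    out = subprocess.run(
        ["kubectl", "get", "pods", "-n", "kube-system",
         "-l", "k8s-app=grafana", "-o", "json"],
        check=True, capture_output=True, text=True,
    ).stdout
    return grafana_urls(json.loads(out))
```

`find_grafana()` returns a dictionary of pod name to URL; opening that URL in a browser should bring up the Grafana page.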

The Grafana page:

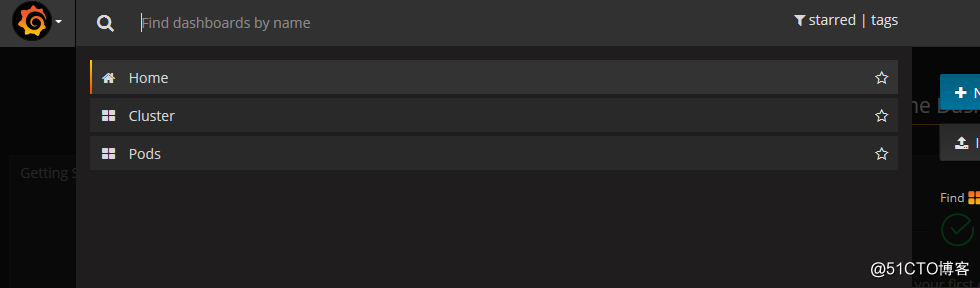

Viewing the monitoring data:

Under Home, the Cluster dashboard shows the monitoring status of the nodes.

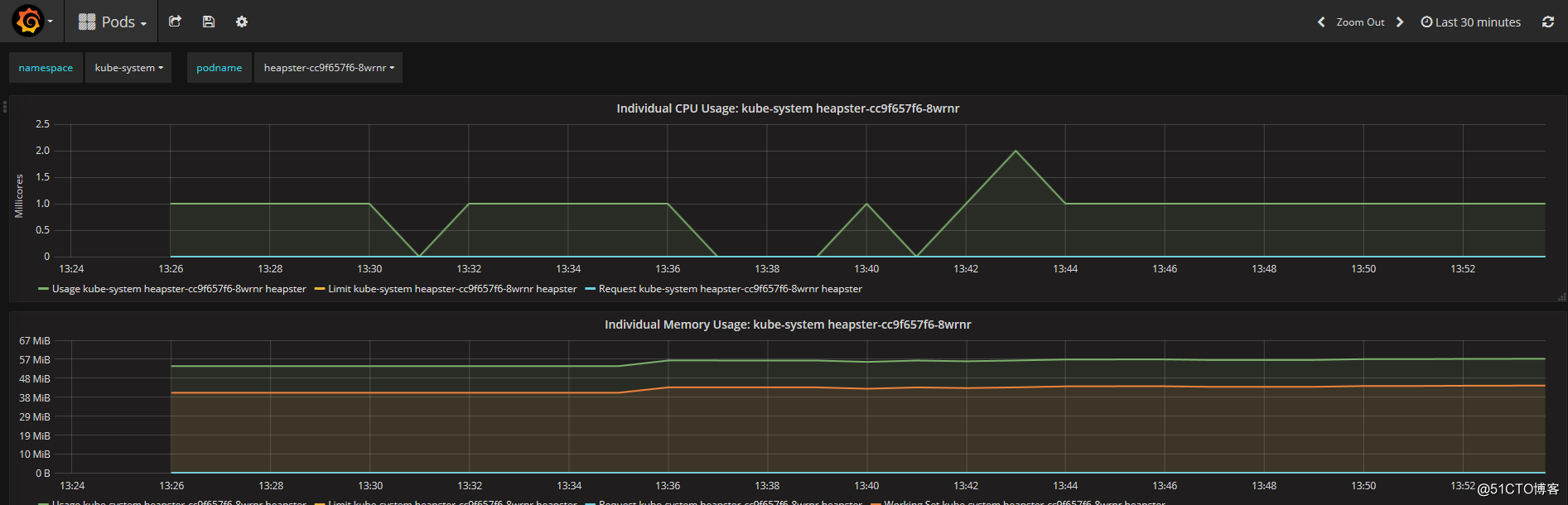

Under Home, the Pods dashboard shows the metrics collected from the nodes for the pods in a given namespace.

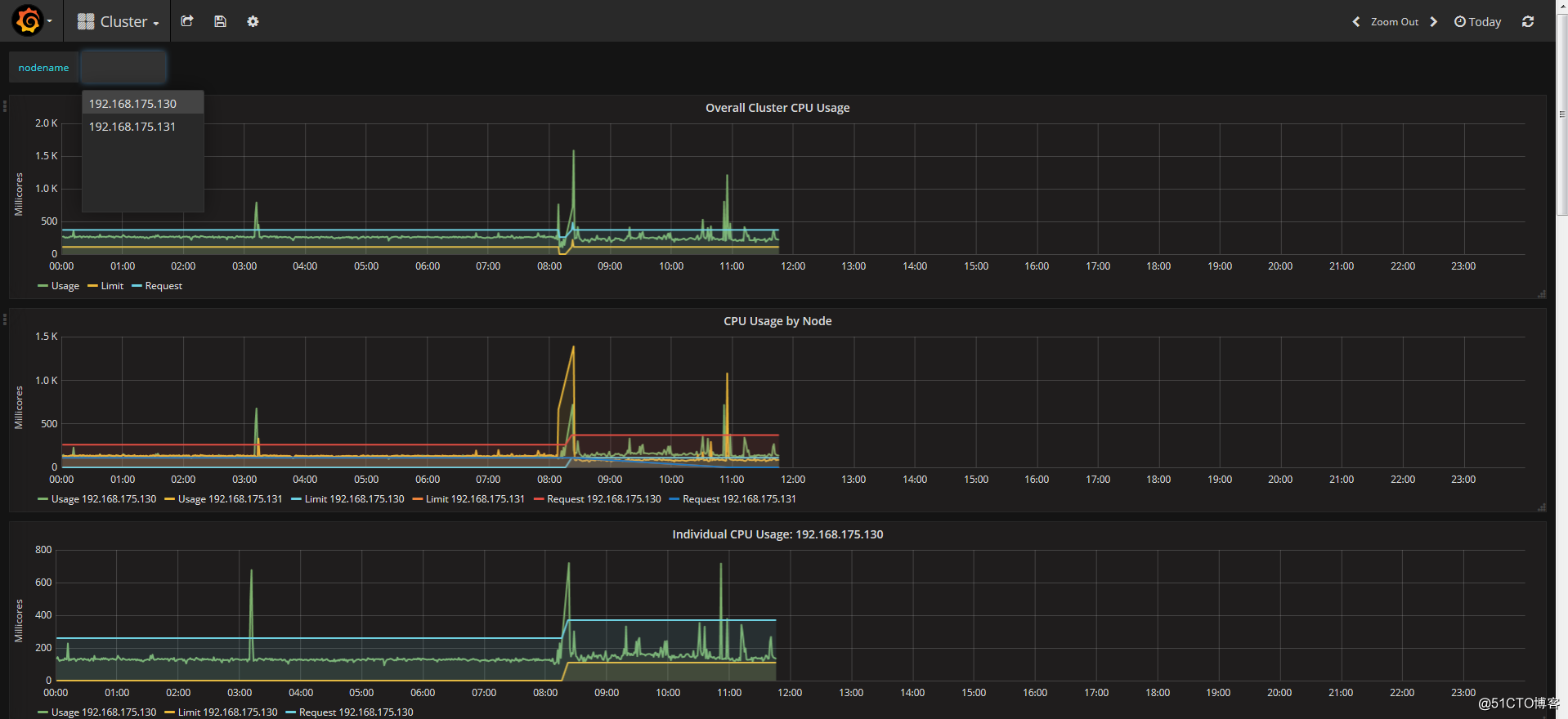

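Select a node to monitor its CPU, memory, and other status metrics; a time range can be specified for the view.

Select a specific pod within a namespace to monitor that pod's status metrics.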

