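Experiment Content

An e-commerce site has a dataset of users' product favorites that records the ID of each favorited product and the date it was favorited. The dataset is named buyer_favorite1.

buyer_favorite1 contains three fields: buyer ID, product ID, and favorite date. Fields are separated by "\t". Sample data and format are as follows: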

| Buyer ID | Product ID | Favorite date |
| --- | --- | --- |
| 10181 | 1000481 | 2010-04-04 16:54:31 |
| 20001 | 1001597 | 2010-04-07 15:07:52 |
| 20001 | 1001560 | 2010-04-07 15:08:27 |
| 20042 | 1001368 | 2010-04-08 08:20:30 |
| 20067 | 1002061 | 2010-04-08 16:45:33 |
| 20056 | 1003289 | 2010-04-12 10:50:55 |
| 20056 | 1003290 | 2010-04-12 11:57:35 |
| 20056 | 1003292 | 2010-04-12 12:05:29 |
| 20054 | 1002420 | 2010-04-14 15:24:12 |
| 20055 | 1001679 | 2010-04-14 19:46:04 |
| 20054 | 1010675 | 2010-04-14 15:23:53 |
| 20054 | 1002429 | 2010-04-14 17:52:45 |
| 20076 | 1002427 | 2010-04-14 19:35:39 |
| 20054 | 1003326 | 2010-04-20 12:54:44 |
| 20056 | 1002420 | 2010-04-15 11:24:49 |
| 20064 | 1002422 | 2010-04-15 11:35:54 |
| 20056 | 1003066 | 2010-04-15 11:43:01 |
| 20056 | 1003055 | 2010-04-15 11:43:06 |
| 20056 | 1010183 | 2010-04-15 11:45:24 |
| 20056 | 1002422 | 2010-04-15 11:45:49 |
| 20056 | 1003100 | 2010-04-15 11:45:54 |
| 20056 | 1003094 | 2010-04-15 11:45:57 |
| 20056 | 1003064 | 2010-04-15 11:46:04 |
| 20056 | 1010178 | 2010-04-15 16:15:20 |
| 20076 | 1003101 | 2010-04-15 16:37:27 |
| 20076 | 1003103 | 2010-04-15 16:37:05 |
| 20076 | 1003100 | 2010-04-15 16:37:18 |
| 20076 | 1003066 | 2010-04-15 16:37:31 |
| 20054 | 1003103 | 2010-04-15 16:40:14 |
| 20054 | 1003100 | 2010-04-15 16:40:16 |
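To make the record layout concrete, here is a minimal sketch of parsing one line of the sample data in plain Java. The class name `FavoriteRecord` and its field names are hypothetical and are not part of the original program; the sketch only assumes the three tab-separated fields and the `yyyy-MM-dd HH:mm:ss` timestamp layout visible in the sample.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

// Hypothetical value class for one line of buyer_favorite1.
// Class and field names are illustrative assumptions.
final class FavoriteRecord {
    // Timestamp layout as it appears in the sample data.
    private static final DateTimeFormatter FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    final String buyerId;
    final String productId;
    final LocalDateTime favoritedAt;

    private FavoriteRecord(String buyerId, String productId, LocalDateTime favoritedAt) {
        this.buyerId = buyerId;
        this.productId = productId;
        this.favoritedAt = favoritedAt;
    }

    // Splits one tab-separated line into its three fields.
    static FavoriteRecord parse(String line) {
        String[] fields = line.split("\t");
        if (fields.length != 3) {
            throw new IllegalArgumentException("expected 3 tab-separated fields: " + line);
        }
        return new FavoriteRecord(fields[0], fields[1],
                LocalDateTime.parse(fields[2], FORMAT));
    }
}
```

For the counting task only the first field matters, so the MapReduce job itself does not need to parse the product ID or the date.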

The task is to write a MapReduce program that counts how many products each buyer has favorited.

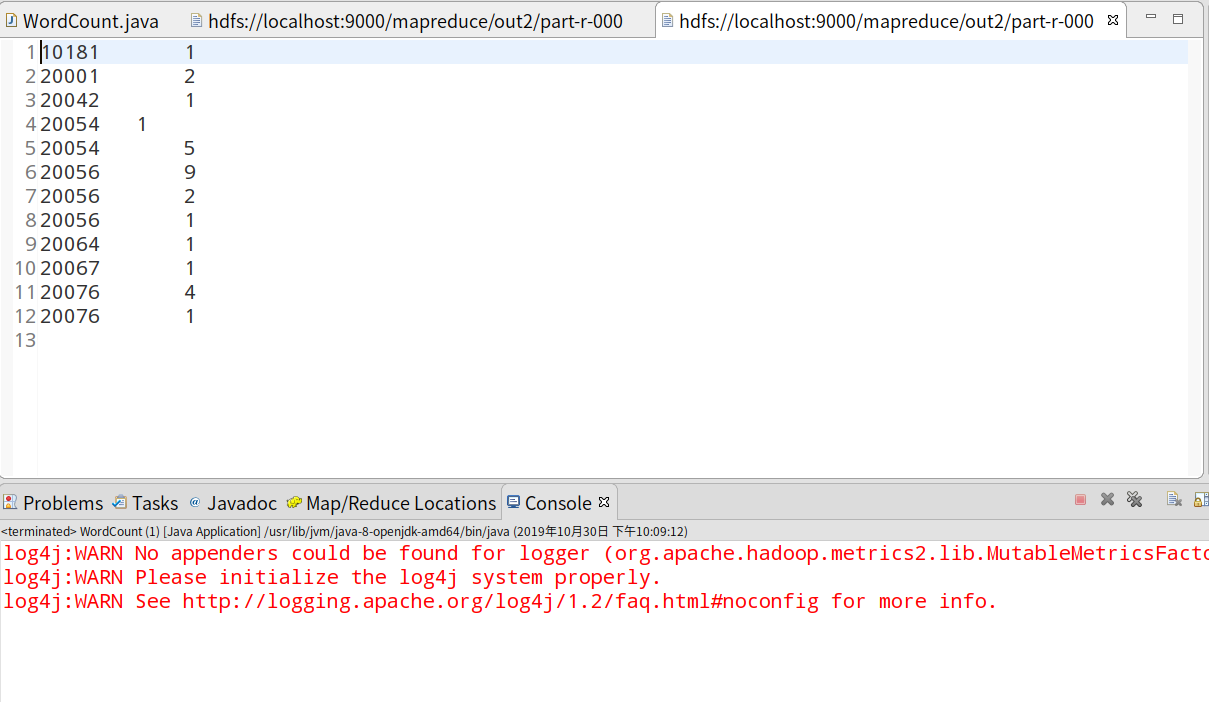

The resulting counts are as follows:

| Buyer ID | Number of products |
| --- | --- |
| 10181 | 1 |
| 20001 | 2 |
| 20042 | 1 |
| 20054 | 6 |
| 20055 | 1 |
| 20056 | 12 |
| 20064 | 1 |
| 20067 | 1 |
| 20076 | 5 |
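The expected counts can be cross-checked locally before running anything on a cluster. The following is a minimal sketch in plain Java that imitates the three phases (emit a 1 per buyer ID, group by key, sum per key) with no Hadoop classes involved. The class name `LocalFavoriteCount` and the method `countByBuyer` are hypothetical helpers introduced only for this illustration.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Local cross-check only: imitates map, shuffle and reduce in plain Java.
// This is an illustrative assumption, not the MapReduce job itself.
final class LocalFavoriteCount {
    static Map<String, Integer> countByBuyer(List<String> lines) {
        // TreeMap keeps buyer IDs in ascending order, like the result table.
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            if (line.trim().isEmpty()) {
                continue; // ignore blank lines
            }
            String buyerId = line.split("\t")[0]; // "map": key is the first field
            counts.merge(buyerId, 1, Integer::sum); // "reduce": add 1 per record
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> sample = Arrays.asList(
                "20001\t1001597\t2010-04-07 15:07:52",
                "20001\t1001560\t2010-04-07 15:08:27",
                "10181\t1000481\t2010-04-04 16:54:31");
        countByBuyer(sample).forEach(
                (buyer, n) -> System.out.println(buyer + "\t" + n));
    }
}
```

Feeding all 30 sample lines into `countByBuyer` should give the same nine rows as the table above.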

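Experiment Steps

1. Set up the environment and create the MapReduce project

Reference: http://dblab.xmu.edu.cn/blog/hadoop-build-project-using-eclipse/

2. Write the Java code and describe its design.

The figure below shows how this MapReduce job executes.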

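The general idea is to take the text file on HDFS as input. MapReduce uses the InputFormat to divide the text into input splits and turns each line into an input key-value pair: the key is the byte offset of the line's first character relative to the start of the file, and the value is the content of the line. The map function processes each pair and emits an intermediate result of the form <word, 1>, where word is the buyer ID (the first tab-separated field of the line). The reduce function then sums the 1s for each key, which gives the number of products each buyer has favorited. The program consists of two main parts: the Mapper and the Reducer.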

    package mapreduce;

    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class WordCount {
        public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
            Job job = Job.getInstance();
            job.setJobName("WordCount");
            job.setJarByClass(WordCount.class);
            job.setMapperClass(doMapper.class);
            job.setReducerClass(doReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            // Input file and output directory on HDFS. Adjust both to your own
            // setup; the output directory must not exist before the job runs.
            Path in = new Path("hdfs://localhost:9000/mapreduce/in/buyer_favorite2.txt");
            Path out = new Path("hdfs://localhost:9000/mapreduce/out2");
            FileInputFormat.addInputPath(job, in);
            FileOutputFormat.setOutputPath(job, out);
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }

        // Mapper: emits <buyer ID, 1> for every input line.
        public static class doMapper extends Mapper<Object, Text, Text, IntWritable> {
            public static final IntWritable one = new IntWritable(1);
            // Reused across map() calls to avoid allocating a Text per record.
            private final Text word = new Text();

            @Override
            protected void map(Object key, Text value, Context context) throws IOException, InterruptedException {
                StringTokenizer tokenizer = new StringTokenizer(value.toString(), "\t");
                if (!tokenizer.hasMoreTokens()) {
                    return; // skip blank lines instead of failing in nextToken()
                }
                word.set(tokenizer.nextToken()); // first field is the buyer ID
                context.write(word, one);
            }
        }

        // Reducer: sums the 1s emitted for each buyer ID.
        public static class doReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
            private final IntWritable result = new IntWritable();

            @Override
            protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable value : values) {
                    sum += value.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }
    }
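The design description says that each input key is the byte offset of a line and each value is the line's text. The sketch below imitates that pairing in plain Java so the offsets can be inspected without a cluster. It assumes UTF-8 text with "\n" line endings, and the names `OffsetKeySketch`, `toInputPairs`, and `mapOutputs` are hypothetical. It is an approximation of what a line-oriented input format delivers, not Hadoop's actual implementation.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Illustration only: builds <byte offset, line> pairs similar to the ones
// a line-oriented input format passes to map(). Names are assumptions.
final class OffsetKeySketch {
    // Maps the byte offset of each line's first byte to the line text.
    static Map<Long, String> toInputPairs(String fileContent) {
        Map<Long, String> pairs = new LinkedHashMap<>();
        long offset = 0;
        for (String line : fileContent.split("\n")) {
            pairs.put(offset, line);
            // Advance past the line's bytes plus the "\n" terminator.
            offset += line.getBytes(StandardCharsets.UTF_8).length + 1;
        }
        return pairs;
    }

    // The map step ignores the offset key and emits <buyerId, 1>.
    static List<String> mapOutputs(Map<Long, String> pairs) {
        List<String> out = new ArrayList<>();
        for (String line : pairs.values()) {
            if (!line.isEmpty()) {
                out.add("<" + line.split("\t")[0] + ", 1>");
            }
        }
        return out;
    }

    public static void main(String[] args) {
        String content = "10181\t1000481\t2010-04-04 16:54:31\n"
                + "20001\t1001597\t2010-04-07 15:07:52\n";
        Map<Long, String> pairs = toInputPairs(content);
        System.out.println(pairs.keySet());     // offsets of the two lines
        System.out.println(mapOutputs(pairs));  // intermediate <buyerId, 1> pairs
    }
}
```

Because the mapper never uses the offset, declaring the input key type as `Object`, as the program above does, is sufficient.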

Screenshot of the run results