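I. Deploying the k8s master

Components that run on the master node:

etcd, kube-apiserver, kube-controller-manager, kubectl, kubeadm, kubelet, kube-proxy, flannel, docker

kubeadm: the official one-command deployment tool for k8s

Flannel: a network plugin that ensures nodes can communicate with each other

Environment initialization:

1) hosts resolution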

cat >>/etc/hosts <<EOF

0.0.0.1 k8s-master

0.0.0.2 k8s-node-1

0.0.0.3 k8s-node-2

EOF
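
Because `cat >>` appends every time it runs, repeating the step above leaves duplicate entries in /etc/hosts. A minimal sketch of an idempotent alternative follows; `add_host_entry` is a hypothetical helper name, not part of any tool, and the addresses are the placeholders used above.

```shell
# Append "IP NAME" to a hosts file only if NAME is not already present.
# add_host_entry is a hypothetical helper used for illustration.
add_host_entry() {
    hosts_file=$1
    ip=$2
    name=$3
    # Look for NAME as a whole field on any non-comment line.
    if awk -v n="$name" '
        !/^[ \t]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }
    ' "$hosts_file"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}

# Usage (as root):
#   add_host_entry /etc/hosts 0.0.0.1 k8s-master
#   add_host_entry /etc/hosts 0.0.0.2 k8s-node-1
#   add_host_entry /etc/hosts 0.0.0.3 k8s-node-2
```

Running the helper twice with the same arguments leaves a single line for that host name.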

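2) Open ports in the security group

If there are no security-group restrictions between the nodes, skip this step; otherwise open at least the following ports

k8s-master node: TCP 6443, 2379, 60080, 60081; all UDP ports open

k8s-node nodes (workers): all UDP ports open

Configure iptables and SELinux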

systemctl stop firewalld

systemctl disable firewalld

sed -ri 's#^(SELINUX=).*#\1disabled#' /etc/selinux/config

setenforce 0

iptables -F

iptables -X

iptables -Z

iptables -P FORWARD ACCEPT

Disable swap

swapoff -a # disable temporarily

sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab # comment out the swap line to disable it permanently
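
The sed command above prepends another `#` each time it runs, and it only matches entries separated by single spaces. The sketch below is one possible variant that tolerates tabs and skips lines that are already commented; `disable_swap_in_fstab` is a hypothetical helper name.

```shell
# disable_swap_in_fstab is a hypothetical helper used for illustration.
# It comments out every uncommented line that has "swap" as a
# whitespace-delimited field and leaves already-commented lines alone.
disable_swap_in_fstab() {
    fstab=$1
    tmp_file=$(mktemp) || return 1
    if sed -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' \
        "$fstab" > "$tmp_file"; then
        cat "$tmp_file" > "$fstab"   # overwrite in place, keeping owner and mode
        status=0
    else
        status=1
    fi
    rm -f "$tmp_file"
    return "$status"
}

# Usage (as root):
#   swapoff -a
#   disable_swap_in_fstab /etc/fstab
```

It is worth trying the helper on a copy of /etc/fstab first and comparing the result with `diff` before touching the real file.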

Make sure the NTP time is correct

hwclock -w

Adjust kernel parameters

cat > /etc/sysctl.d/k8s.conf <<EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

vm.max_map_count=262144

EOF

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf
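
To confirm that the settings took effect, the values in the file can be compared with what the kernel reports. The following is a small sketch under that assumption; `sysctl_conf_value` is a hypothetical helper that reads a key from a sysctl.d-style file.

```shell
# Print the value assigned to KEY in a sysctl.d-style file.
# If the key appears more than once, the last assignment wins.
# sysctl_conf_value is a hypothetical helper used for illustration.
sysctl_conf_value() {
    conf_file=$1
    key=$2
    awk -F= -v k="$key" '
        /^[ \t]*[#;]/ { next }    # skip comment lines
        {
            name = $1
            value = $2
            gsub(/[ \t]/, "", name)
            gsub(/^[ \t]+|[ \t]+$/, "", value)
            if (name == k) result = value
        }
        END { if (result != "") print result }
    ' "$conf_file"
}

# Usage: compare the configured value with the live one.
#   want=$(sysctl_conf_value /etc/sysctl.d/k8s.conf net.ipv4.ip_forward)
#   have=$(sysctl -n net.ipv4.ip_forward)
#   [ "$want" = "$have" ] || echo "net.ipv4.ip_forward: want $want, have $have"
```

The `net.bridge.*` keys only exist after `br_netfilter` is loaded, so `sysctl -n` on them fails if the `modprobe` step was skipped.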

Install the Docker base environment

curl -o /etc/yum.repos.d/docker-ce.repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum makecache fast

yum install -y docker-ce-19.03.15 docker-ce-cli-19.03.15

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors":["https://ms9glx6x.mirror.aliyuncs.com"],

"exec-opts":["native.cgroupdriver=systemd"]

}

EOF
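
A typo in the `exec-opts` entry (for example `-` instead of `=`) is easy to miss and can stop Docker from starting or leave it on a different cgroup driver than intended. A minimal sketch of a check is shown below; `has_systemd_cgroup_driver` is a hypothetical helper name.

```shell
# Succeed only if the given daemon.json sets the systemd cgroup driver
# through an exec-opts entry spelled exactly "native.cgroupdriver=systemd".
# has_systemd_cgroup_driver is a hypothetical helper used for illustration.
has_systemd_cgroup_driver() {
    grep -Eq '"native\.cgroupdriver=systemd"' "$1"
}

# Usage:
#   has_systemd_cgroup_driver /etc/docker/daemon.json || echo "check exec-opts"
```

Once Docker is running, `docker info --format '{{.CgroupDriver}}'` should print `systemd` if the setting was applied.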

# start Docker

systemctl start docker && systemctl enable docker

Install kubeadm, kubelet, and kubectl

1. Configure the repository

cat > /etc/yum.repos.d/kubernetes.repo <<EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum clean all && yum makecache

yum install -y kubelet-1.19.3 kubeadm-1.19.3 kubectl-1.19.3

Enable kubelet at boot

systemctl enable kubelet

systemctl enable docker

# start kubelet

systemctl start kubelet

# check the kubeadm version

kubeadm version

Initialize the k8s-master node (only needed on the master)

kubeadm init --apiserver-advertise-address=10.0.0.10 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.19.3 --service-cidr=10.1.0.0/16 --pod-network-cidr=10.2.0.0/16 --service-dns-domain=cluster.local --ignore-preflight-errors=Swap --ignore-preflight-errors=NumCPU
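
The service CIDR and the pod CIDR passed to `kubeadm init` should not overlap each other (or the node network). The sketch below shows one way to check two IPv4 CIDR blocks before running the command; `ip_to_int` and `cidrs_overlap` are hypothetical helper names.

```shell
# Convert a dotted-quad IPv4 address to an integer.
# ip_to_int and cidrs_overlap are hypothetical helpers used for illustration.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1          # split the address into its four octets
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Return 0 if two CIDR blocks (a.b.c.d/len) overlap, 1 otherwise.
cidrs_overlap() {
    a_ip=$(ip_to_int "${1%/*}")
    a_len=${1#*/}
    b_ip=$(ip_to_int "${2%/*}")
    b_len=${2#*/}
    # Two blocks overlap when they agree under the shorter prefix.
    len=$a_len
    if [ "$b_len" -lt "$len" ]; then
        len=$b_len
    fi
    mask=$(( (4294967295 << (32 - len)) & 4294967295 ))
    [ $(( a_ip & mask )) -eq $(( b_ip & mask )) ]
}

# Usage:
#   cidrs_overlap 10.1.0.0/16 10.2.0.0/16 && echo "CIDRs overlap, pick another range"
```

With the values used above (10.1.0.0/16 for services, 10.2.0.0/16 for pods) the check reports no overlap.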

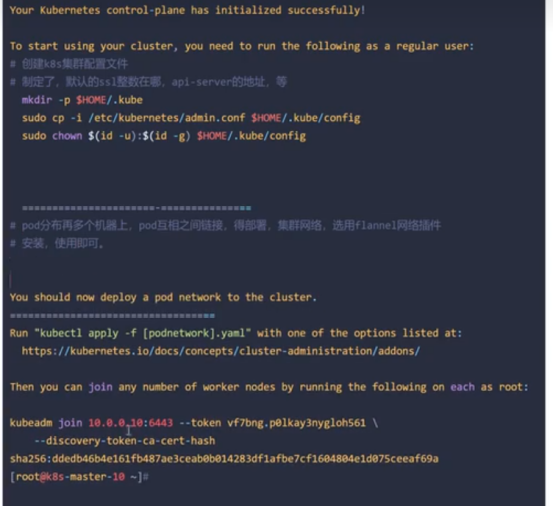

After the master has been installed successfully:

Create the k8s cluster config file

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Check the cluster status:

Run on the master node:

kubectl get nodes

Install the flannel network plugin

Download https://github.com/coreos/flannel.git

Edit the Network value in kube-flannel.yml so that it matches the pod CIDR passed to kubeadm init

sed -i 's/10.244.0.0/10.2.0.0/' Documentation/kube-flannel.yml
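
Before applying the manifest, it can help to confirm that the substitution really changed the network. This sketch assumes the manifest keeps the CIDR on a `"Network": "..."` line inside `net-conf.json`, as the upstream kube-flannel.yml does; `flannel_network` is a hypothetical helper name.

```shell
# Print the first "Network" CIDR found in a flannel manifest.
# flannel_network is a hypothetical helper used for illustration.
flannel_network() {
    sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Usage: compare with the --pod-network-cidr given to kubeadm init.
#   [ "$(flannel_network Documentation/kube-flannel.yml)" = "10.2.0.0/16" ] \
#       || echo "flannel Network does not match the pod CIDR"
```

If the two values differ, pods are given addresses that flannel does not route, so the mismatch is worth catching before `kubectl create`.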

Change the default network interface name

vi Documentation/kube-flannel.yml

After editing, install the plugin by running

kubectl create -f Documentation/kube-flannel.yml

kubectl get nodes -owide
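
Nodes usually stay `NotReady` until the network plugin is running. As a small sketch, the output of `kubectl get nodes --no-headers` can be filtered to list the nodes that still need attention; `not_ready_nodes` is a hypothetical helper name.

```shell
# Read `kubectl get nodes --no-headers` output on stdin and print the
# names of nodes whose STATUS column is not exactly "Ready".
# not_ready_nodes is a hypothetical helper used for illustration.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Usage:
#   kubectl get nodes --no-headers | not_ready_nodes
```

To block until every node reports Ready, `kubectl wait --for=condition=Ready nodes --all --timeout=300s` is an alternative.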

# run a pod

kubectl run --help

Set up k8s command completion

yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

II. Deploying the k8s nodes

Components that run on a node:

kubectl, kubelet, kube-proxy, flannel, docker

Environment initialization:

1) hosts resolution

cat >>/etc/hosts <<EOF

0.0.0.1 k8s-master

0.0.0.2 k8s-node-1

0.0.0.3 k8s-node-2

EOF

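2) Open ports in the security group

If there are no security-group restrictions between the nodes, skip this step; otherwise open at least the following ports

k8s-master node: TCP 6443, 2379, 60080, 60081; all UDP ports open

k8s-node nodes (workers): all UDP ports open

Configure iptables and SELinux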

systemctl stop firewalld

systemctl disable firewalld

sed -ri 's#^(SELINUX=).*#\1disabled#' /etc/selinux/config

setenforce 0

iptables -F

iptables -X

iptables -Z

iptables -P FORWARD ACCEPT

Disable swap

swapoff -a # disable temporarily

sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab # comment out the swap line to disable it permanently

Make sure the NTP time is correct

hwclock -w

Adjust kernel parameters

cat > /etc/sysctl.d/k8s.conf <<EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

vm.max_map_count=262144

EOF

modprobe br_netfilter

sysctl -p /etc/sysctl.d/k8s.conf

Install the Docker base environment

curl -o /etc/yum.repos.d/docker-ce.repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum makecache fast

yum install -y docker-ce-19.03.15 docker-ce-cli-19.03.15

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors":["https://ms9glx6x.mirror.aliyuncs.com"],

"exec-opts":["native.cgroupdriver=systemd"]

}

EOF

# start Docker

systemctl start docker && systemctl enable docker

Install kubeadm, kubelet, and kubectl

1. Configure the repository

cat > /etc/yum.repos.d/kubernetes.repo <<EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum clean all && yum makecache

yum install -y kubelet-1.19.3 kubeadm-1.19.3 kubectl-1.19.3

Enable kubelet at boot

systemctl enable kubelet

systemctl enable docker

# start kubelet

systemctl start kubelet

# check the kubeadm version

kubeadm version

Join the nodes to the cluster

kubeadm join 192.168.0.185:6443 --token xwc4yz.g1zic1bv52wrwf0r --discovery-token-ca-cert-hash sha256:34c556c6a086d80b587c101f81b1602ba102feccc8dccd74a10fd25ab7cfa0ba # use the token created earlier; run this on every node
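
A join command copied by hand is often truncated. The sketch below checks the shape of the two values before they are passed to `kubeadm join`: a bootstrap token is six characters, a dot, then sixteen characters, and the CA hash is `sha256:` followed by 64 hex digits. `valid_join_token` and `valid_ca_cert_hash` are hypothetical helper names.

```shell
# Succeed if the argument has the kubeadm bootstrap-token shape
# [a-z0-9]{6}.[a-z0-9]{16}.
# Both functions are hypothetical helpers used for illustration.
valid_join_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Succeed if the argument looks like "sha256:" plus 64 lowercase hex digits.
valid_ca_cert_hash() {
    printf '%s\n' "$1" | grep -Eq '^sha256:[a-f0-9]{64}$'
}

# Usage:
#   valid_join_token "$token" && valid_ca_cert_hash "$hash" \
#       || echo "join parameters look truncated"
```

Bootstrap tokens expire (after 24 hours by default); running `kubeadm token create --print-join-command` on the master prints a fresh, complete join command.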

kube-vip high availability

1. Cluster plan

10.172.2.1 k8smaster1

10.172.2.2 k8smaster2

10.172.2.3 k8smaster3

2. Procedure

Start on master1.

export VIP=10.172.2.119 # VIP address

export INTERFACE=eth0 # network interface name

export master1=10.172.2.1

export master2=10.172.2.2

export master3=10.172.2.3

mkdir -p /etc/kubernetes/manifests # only needed on master1, the first master node

docker pull docker.io/plndr/kube-vip:0.5.5 # pull the kube-vip image

docker run --network host --rm plndr/kube-vip:0.5.5 manifest pod --interface $INTERFACE --vip $VIP --controlplane --services --bgp --localAS 60000 --bgpRouterID $master1 --bgppeers $master2:60000::false,$master3:60000::false |tee /etc/kubernetes/manifests/kube-vip.yaml
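
The only parts of this command that change from master to master are `--bgpRouterID` (the node's own address) and `--bgppeers` (all the other masters). As a sketch, the peer list can be derived instead of typed by hand; `bgp_peers` is a hypothetical helper, and the `:60000::false` suffix is copied from the command above.

```shell
# Print the --bgppeers value for SELF, given SELF followed by the
# addresses of all masters. SELF is left out of the peer list.
# bgp_peers is a hypothetical helper used for illustration.
bgp_peers() {
    self=$1
    shift
    peers=""
    for ip in "$@"; do
        if [ "$ip" != "$self" ]; then
            peers="${peers:+$peers,}$ip:60000::false"
        fi
    done
    printf '%s\n' "$peers"
}

# Usage on master1:
#   bgp_peers "$master1" "$master1" "$master2" "$master3"
```

On master2 or master3, pass that node's own address as the first argument and keep the rest of the list unchanged.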

# initialize the cluster

kubeadm init --kubernetes-version=1.23.1 --apiserver-advertise-address=${VIP} --apiserver-bind-port 6443 --control-plane-endpoint=${VIP}:6443 --service-cidr=10.1.0.0/16 --pod-network-cidr=192.168.0.0/16 --upload-certs

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

export KUBECONFIG=/etc/kubernetes/admin.conf

kubectl apply -f flannel.yaml

Master2 node

export VIP=10.172.2.119 # VIP address

export INTERFACE=eth0 # network interface name

export master1=10.172.2.1

export master2=10.172.2.2

export master3=10.172.2.3

# join the cluster as a control-plane node

kubeadm join $VIP:6443 --token **** --discovery-token-ca-cert-hash **** --control-plane --certificate-key ****

# pull the image and generate the manifest; note that the parameters differ from master1

docker pull docker.io/plndr/kube-vip:0.5.5 # pull the kube-vip image

docker run --network host --rm plndr/kube-vip:0.5.5 manifest pod --interface $INTERFACE --vip $VIP --controlplane --services --bgp --localAS 60000 --bgpRouterID $master2 --bgppeers $master1:60000::false,$master3:60000::false |tee /etc/kubernetes/manifests/kube-vip.yaml

Master3 node

export VIP=10.172.2.119 # VIP address

export INTERFACE=eth0 # network interface name

export master1=10.172.2.1

export master2=10.172.2.2

export master3=10.172.2.3

# join the cluster as a control-plane node

kubeadm join $VIP:6443 --token **** --discovery-token-ca-cert-hash **** --control-plane --certificate-key ****

docker pull docker.io/plndr/kube-vip:0.5.5 # pull the kube-vip image

docker run --network host --rm plndr/kube-vip:0.5.5 manifest pod --interface $INTERFACE --vip $VIP --controlplane --services --bgp --localAS 60000 --bgpRouterID $master3 --bgppeers $master1:60000::false,$master2:60000::false |tee /etc/kubernetes/manifests/kube-vip.yaml
