This guide uses relatively recent versions: kubeadm 1.17.4, Docker 19.03.5, and flannel 0.12, with IPVS enabled for kube-proxy.

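Prerequisites:

- One or more machines

- Operating system: CentOS 7.x (x86_64)

- Memory: 2 GB or more

- CPU: 2 cores or more

- Disk: 30 GB or more

- All machines in the cluster can reach each other over the network and can reach the internet (needed to pull images)

- No two nodes share a hostname, MAC address, or product_uuid

- Swap is disabled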
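The list above can be checked on each node before going further. The following is a minimal sketch, not part of the original procedure: the function names are hypothetical, and the 1700000 kB memory floor is an assumption chosen because a machine sold as "2 GB" reports slightly less than that to the kernel.

```shell
# Print the value (in kB) of a /proc/meminfo field such as MemTotal.
meminfo_kb() {
  local field="$1" file="${2:-/proc/meminfo}"
  awk -v f="${field}:" '$1 == f {print $2}' "$file"
}

# Succeed when ACTUAL >= REQUIRED (both integers).
meets_minimum() {
  [ "$1" -ge "$2" ]
}

# Report CPU count, memory, and swap against the prerequisite list.
preflight_report() {
  local file="${1:-/proc/meminfo}" cpus mem swap
  cpus="$(nproc)"
  mem="$(meminfo_kb MemTotal "$file")"
  swap="$(meminfo_kb SwapTotal "$file")"
  if meets_minimum "$cpus" 2; then echo "cpu: ok ($cpus)"; else echo "cpu: need >= 2, found $cpus"; fi
  # 1700000 kB is an assumed floor, see the note above the block.
  if meets_minimum "$mem" 1700000; then echo "memory: ok (${mem} kB)"; else echo "memory: too small (${mem} kB)"; fi
  if [ "$swap" -eq 0 ]; then echo "swap: off"; else echo "swap: still enabled (${swap} kB)"; fi
}
```

Running `preflight_report` with no arguments reads the live `/proc/meminfo`; passing a file path makes it easy to try against a saved copy from another machine.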

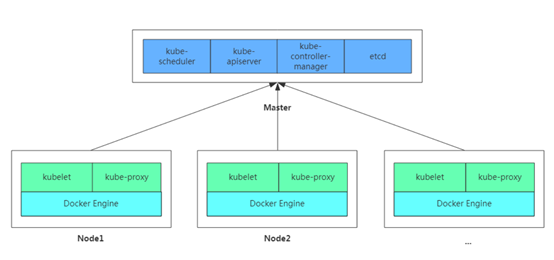

Kubernetes architecture diagram:

1) Set the hostname and name resolution

hostnamectl set-hostname k8s-128

cat >> /etc/hosts <<EOF
192.168.17.128 k8s-128
192.168.17.129 k8s-129
192.168.17.130 k8s-130
192.168.17.200 myregistry.com
EOF
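Appending with `cat >>` adds the same lines again every time the step is rerun. As a sketch of one way around that, the hypothetical helper below appends an entry only when the hostname is not already present in the file; the name `add_host_entry` and its argument order are assumptions for illustration.

```shell
# Append "IP NAME" to a hosts-style file unless NAME already appears as a
# hostname on a non-comment line.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  if awk -v n="$name" '
       $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
       END { exit !found }' "$file" 2>/dev/null; then
    return 0    # already present, nothing to do
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Example (as root):
#   add_host_entry 192.168.17.128 k8s-128
#   add_host_entry 192.168.17.129 k8s-129
```

The check compares whole hostname fields, so `k8s-12` is not mistaken for `k8s-128`.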

2) Install dependencies and common tools

yum -y install vim curl wget unzip ntpdate net-tools ipvsadm ipset sysstat conntrack libseccomp

ntpdate ntp1.aliyun.com # synchronize the system clock

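3) Disable swap, SELinux, and firewalld

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config
systemctl stop firewalld && systemctl disable firewalld

Note:

- Kubernetes requires swap to be off so that containers do not end up running in virtual memory, which would degrade performance badly.

- In production, configure firewall rules to match your actual needs rather than turning the firewall off; see the article "Kubernetes集群开启Firewall" (enabling the firewall on a Kubernetes cluster).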
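The `sed` expression in this step prepends another `#` each time it is rerun. The sketch below is one possible variant, under the assumption that skipping already-commented lines is the desired behavior; both function names are hypothetical. It also shows a way to confirm that no swap device is active.

```shell
# Comment out swap entries in an fstab-style file. Lines that already start
# with '#' are skipped, so running this twice changes nothing the second time.
# A backup copy is written next to the file with a .bak suffix.
disable_swap_entries() {
  local file="${1:-/etc/fstab}"
  sed -i.bak '/^[[:space:]]*#/!s/^.*[[:space:]]swap[[:space:]].*$/#&/' "$file"
}

# Succeed when a /proc/swaps-style file lists no active swap devices
# (only the header line is present).
swap_is_off() {
  local file="${1:-/proc/swaps}"
  [ "$(tail -n +2 "$file" | wc -l)" -eq 0 ]
}
```

After `swapoff -a`, `swap_is_off && echo "swap disabled"` should print the message on a correctly prepared node.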

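4) Tune kernel parameters

cat > /etc/sysctl.d/kubernetes.conf <<EOF
# Pass bridged traffic to iptables and disable IPv6 (required)
net.bridge.bridge-nf-call-iptables=1
net.bridge.bridge-nf-call-ip6tables=1
net.ipv6.conf.all.disable_ipv6=1
# Enable IP forwarding; disable fast recycling of TIME_WAIT sockets
net.ipv4.ip_forward=1
net.ipv4.tcp_tw_recycle=0
# Avoid swap; use it only when the system is about to run out of memory
vm.swappiness=0
# File handle and open-file limits (optional)
fs.file-max=2000000
fs.nr_open=2000000
fs.inotify.max_user_instances=512
fs.inotify.max_user_watches=1280000
# Maximum number of tracked connections (optional)
net.netfilter.nf_conntrack_max=524288
EOF
sysctl -p /etc/sysctl.d/kubernetes.conf

Note: the settings marked "required" matter most (they were highlighted in red in the original post); the rest are optional tuning. The net.bridge.* keys exist only while the br_netfilter module is loaded, so if sysctl reports them as missing, run `modprobe br_netfilter` (see step 5) and apply the file again.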
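To confirm that the kernel actually picked up the values, the file can be compared against the live settings. This is a rough sketch with hypothetical function names; it assumes single-valued settings like the ones in this file and does not handle multi-value keys.

```shell
# Print "key value" for each setting in a sysctl.d-style file,
# ignoring comments and blank lines.
conf_pairs() {
  sed -e 's/#.*//' -e '/^[[:space:]]*$/d' "$1" |
    awk -F= '{ gsub(/[ \t]/, "", $1); gsub(/[ \t]/, "", $2); print $1, $2 }'
}

# Print every setting whose live value differs from the file.
# Succeeds only when nothing differs.
verify_sysctl() {
  local file="${1:-/etc/sysctl.d/kubernetes.conf}" mismatches
  mismatches="$(conf_pairs "$file" | while read -r key want; do
    have="$(sysctl -n "$key" 2>/dev/null || echo '<missing>')"
    [ "$have" = "$want" ] || echo "$key: want $want, have $have"
  done)"
  [ -z "$mismatches" ] || { echo "$mismatches"; return 1; }
}
```

A key reported as `<missing>` usually means the module that provides it is not loaded yet.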

5) Load the IPVS kernel modules

modprobe br_netfilter
cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF
chmod 755 /etc/sysconfig/modules/ipvs.modules
sh /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_
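Reading the `lsmod | grep` output by eye makes it easy to miss a module. As a small sketch, the hypothetical helper below reads `lsmod`-style text on standard input and prints only the modules that are absent.

```shell
# Read `lsmod` output on stdin and print each wanted module that is not loaded.
missing_modules() {
  local loaded m
  loaded="$(awk 'NR > 1 {print $1}')"    # skip the header, keep module names
  for m in "$@"; do
    printf '%s\n' "$loaded" | grep -qx -- "$m" || printf '%s\n' "$m"
  done
}

# Example:
#   lsmod | missing_modules br_netfilter ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4
```

Empty output means every listed module is loaded.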

6) Other optional system tuning

1. Switch the yum mirror

# Back up the existing repo file
mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.bak
# Download the Aliyun repo files
wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo
wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo
# Rebuild the cache
yum clean all && yum makecache

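2. Install the NFS service

# Install NFS and rpcbind (nfs-common is the Debian package name; on CentOS the package is nfs-utils)
yum install -y nfs-utils rpcbind
# Create the export directory and set its permissions
mkdir /nfsdata
chmod 666 /nfsdata
chown nfsnobody /nfsdata
# Add the export entry to /etc/exports, then confirm it
echo "/nfsdata *(rw,no_root_squash,no_all_squash,sync)" >> /etc/exports
cat /etc/exports
# Start the services and enable them at boot
systemctl start nfs && systemctl enable nfs
systemctl start rpcbind && systemctl enable rpcbind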

3. Adjust journald logging

mkdir /var/log/journal # directory for persistent logs
mkdir /etc/systemd/journald.conf.d
cat > /etc/systemd/journald.conf.d/99-prophet.conf <<EOF
[Journal]
# Persist logs to disk
Storage=persistent
# Compress archived logs
Compress=yes
SyncIntervalSec=5m
RateLimitInterval=30s
RateLimitBurst=1000
# Cap total disk usage at 10G
SystemMaxUse=10G
# Cap each log file at 200M
SystemMaxFileSize=200M
# Keep logs for 2 weeks
MaxRetentionSec=2week
# Do not forward logs to syslog
ForwardToSyslog=no
EOF
systemctl restart systemd-journald

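4. Upgrade the kernel

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-3.el7.elrepo.noarch.rpm
# After installing, check that the new kernel's menuentry in /boot/grub2/grub.cfg contains an initrd16 line; if it does not, install once more!
yum --enablerepo=elrepo-kernel install -y kernel-lt
# Boot from the new kernel by default (use the menu entry title of the kernel that was actually installed)
grub2-set-default "CentOS Linux (4.4.182-1.el7.elrepo.x86_64) 7 (Core)"
# After rebooting, install the kernel development files
yum --enablerepo=elrepo-kernel install kernel-lt-devel-$(uname -r) kernel-lt-headers-$(uname -r)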

5. Disable NUMA

cp /etc/default/grub{,.bak}
vim /etc/default/grub
# Append `numa=off` to the GRUB_CMDLINE_LINUX line, as this diff shows:
diff /etc/default/grub.bak /etc/default/grub
6c6
< GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=centos/root rhgb quiet"
---
> GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=centos/root rhgb quiet numa=off"
cp /boot/grub2/grub.cfg{,.bak}
grub2-mkconfig -o /boot/grub2/grub.cfg

7) Install and deploy Docker

# Install the packages Docker depends on
yum install -y yum-utils device-mapper-persistent-data lvm2
# Add the Docker repo
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
# Pin version 19.03.5
yum install -y docker-ce-19.03.5 docker-ce-cli-19.03.5
# Configure the registry mirror, insecure registries, and logging
mkdir -p /etc/docker
cat > /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://jc3y13r3.mirror.aliyuncs.com"],
  "insecure-registries":[""],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": { "max-size": "100m" }
}
EOF
# Restart Docker and enable it at boot
mkdir -p /etc/systemd/system/docker.service.d
systemctl daemon-reload && systemctl restart docker && systemctl enable docker
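The `native.cgroupdriver=systemd` option only takes effect once Docker has restarted with the new file. A quick way to confirm that, sketched here with hypothetical function names, is to compare the configured driver with the one the running daemon reports through `docker info`.

```shell
# Print the cgroup driver requested in a daemon.json-style file (empty if unset).
configured_cgroup_driver() {
  local file="${1:-/etc/docker/daemon.json}"
  grep -o 'native\.cgroupdriver=[a-z]*' "$file" | head -n 1 | cut -d= -f2
}

# Compare the configured driver with what the running daemon reports.
check_cgroup_driver() {
  local want have
  want="$(configured_cgroup_driver "$1")"
  have="$(docker info --format '{{.CgroupDriver}}')"
  if [ "$want" = "$have" ]; then
    echo "cgroup driver: $have"
  else
    echo "cgroup driver mismatch: configured '$want', running '$have'"
    return 1
  fi
}
```

Calling `check_cgroup_driver` with no argument reads `/etc/docker/daemon.json` and needs a running Docker daemon.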

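For reference, these are the options daemon.json accepts. The values are illustrative, and the comments are explanatory only: real JSON does not allow them.

{
  "authorization-plugins": [],  // authorization plugins
  "data-root": "",  // root directory for Docker's persistent data
  "dns": [],  // DNS servers
  "dns-opts": [],  // DNS options, such as ports
  "dns-search": [],  // DNS search domains
  "exec-opts": [],  // execution options
  "exec-root": "",  // root directory for execution state files
  "experimental": false,  // enable experimental features
  "storage-driver": "",  // storage driver
  "storage-opts": [],  // storage driver options
  "labels": [],  // key=value labels attached to the daemon as metadata
  "live-restore": true,  // keep containers alive when dockerd stops (so a daemon failure does not kill containers)
  "log-driver": "",  // container log driver
  "log-opts": {},  // container log options
  "mtu": 0,  // MTU (maximum transmission unit) of the container network
  "pidfile": "",  // location of the daemon PID file
  "cluster-store": "",  // URL of the cluster store
  "cluster-store-opts": {},  // cluster store options
  "cluster-advertise": "",  // address advertised to the cluster
  "max-concurrent-downloads": 3,  // maximum concurrent downloads per pull
  "max-concurrent-uploads": 5,  // maximum concurrent uploads per push
  "default-shm-size": "64M",  // default shared memory size
  "shutdown-timeout": 15,  // shutdown timeout, in seconds
  "debug": true,  // enable debug mode
  "hosts": [],  // addresses the daemon listens on
  "log-level": "",  // log level
  "tls": true,  // enable TLS
  "tlsverify": true,  // enable TLS and verify the remote end
  "tlscacert": "",  // path to the CA certificate
  "tlscert": "",  // path to the TLS certificate
  "tlskey": "",  // path to the TLS key
  "swarm-default-advertise-addr": "",  // default advertise address for swarm
  "api-cors-header": "",  // CORS (cross-origin resource sharing) header
  "selinux-enabled": false,  // enable SELinux (mandatory access control for users, processes, applications, and files)
  "userns-remap": "",  // user/group for user namespace remapping
  "group": "",  // group that owns the Docker socket
  "cgroup-parent": "",  // parent cgroup for all containers
  "default-ulimits": {},  // default ulimits for all containers
  "init": false,  // run an init process in containers to forward signals and reap processes
  "init-path": "/usr/libexec/docker-init",  // path to the docker-init binary
  "ipv6": false,  // enable IPv6 networking
  "iptables": false,  // let Docker manage iptables rules
  "ip-forward": false,  // enable net.ipv4.ip_forward
  "ip-masq": false,  // enable IP masquerading (rewriting the source or destination IP of packets as they pass a router or firewall)
  "userland-proxy": false,  // use the userland proxy
  "userland-proxy-path": "/usr/libexec/docker-proxy",  // path to the userland proxy binary
  "ip": "0.0.0.0",  // default IP (used when binding container ports)
  "bridge": "",  // bridge network to attach containers to
  "bip": "",  // IP of the bridge
  "fixed-cidr": "",  // IPv4 subnet that restricts the range of addresses handed to containers, which controls which segment containers live in
  "fixed-cidr-v6": "",  // IPv6 subnet
  "default-gateway": "",  // default gateway
  "default-gateway-v6": "",  // default IPv6 gateway
  "icc": false,  // inter-container communication
  "raw-logs": false,  // raw logs (no colors, full timestamps)
  "allow-nondistributable-artifacts": [],  // registries to which nondistributable artifacts may be pushed
  "registry-mirrors": [],  // registry mirrors
  "seccomp-profile": "",  // seccomp profile
  "insecure-registries": [],  // registries served without HTTPS
  "no-new-privileges": false,  // prevent container processes from gaining new privileges
  "default-runtime": "runc",  // default OCI (Open Container Initiative) runtime
  "oom-score-adjust": -500,  // OOM-kill priority of the daemon (-1000 to 1000)
  "node-generic-resources": ["NVIDIA-GPU=UUID1", "NVIDIA-GPU=UUID2"],  // resources advertised by the node
  "runtimes": {  // additional runtimes
    "cc-runtime": {
      "path": "/usr/bin/cc-runtime"
    },
    "custom": {
      "path": "/usr/local/bin/my-runc-replacement",
      "runtimeArgs": [
        "--debug"
      ]
    }
  }
}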

8) Install and deploy Kubernetes

# Add the Kubernetes repo
cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
# Install the Kubernetes components, pinned to v1.17.4
yum -y install kubeadm-1.17.4 kubectl-1.17.4 kubelet-1.17.4
# Enable kubelet at boot
systemctl enable kubelet.service
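Because the three packages are pinned separately, it is worth confirming that they ended up on the same version. The sketch below uses hypothetical helper names and simply compares the first x.y.z number found in each tool's output; the `--short` flag on `kubectl version` is assumed to be available, as it is in the 1.17 series.

```shell
# Pull the first x.y.z version number out of arbitrary tool output.
extract_version() {
  printf '%s\n' "$1" | grep -oE '[0-9]+\.[0-9]+\.[0-9]+' | head -n 1
}

# Succeed when every argument contains the same x.y.z version.
same_version() {
  local first cur arg
  first="$(extract_version "$1")"
  [ -n "$first" ] || return 1
  for arg in "$@"; do
    cur="$(extract_version "$arg")"
    [ "$cur" = "$first" ] || return 1
  done
}

# Example:
#   same_version "$(kubeadm version -o short)" \
#                "$(kubelet --version)" \
#                "$(kubectl version --client --short)" && echo "versions agree"
```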

9) Initialize the master node

# Generate the default init configuration, then edit the relevant parameters
kubeadm config print init-defaults > kubeadm-config.yaml
vim kubeadm-config.yaml

---
apiVersion: kubeadm.k8s.io/v1beta2
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.17.137   # master node address
  bindPort: 6443                     # default apiserver port
nodeRegistration:
  criSocket: /var/run/dockershim.sock
  name: k8s-master                   # master node name
  taints:                            # default taint on the master node
  - effect: NoSchedule
    key: node-role.kubernetes.io/master
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta2
certificatesDir: /etc/kubernetes/pki   # where certificates are stored
clusterName: kubernetes
controllerManager: {}
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/google_containers   # changed: image repository
kind: ClusterConfiguration
kubernetesVersion: v1.17.4   # changed: Kubernetes version
networking:
  dnsDomain: cluster.local
  podSubnet: "10.244.0.0/16"   # pod network range; must match the flannel configuration
  serviceSubnet: 10.96.0.0/12
scheduler: {}
---
# Enable ipvs in kube-proxy
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
featureGates:
  SupportIPVSProxyMode: true
mode: ipvs
---

kubeadm init --config=kubeadm-config.yaml --upload-certs | tee kubeadm-init.log

# As the init log instructs, copy the generated admin.conf to .kube/config.
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

Sample kubeadm-init.log (this capture comes from a v1.15.1 run, so the version strings and node names differ from the configuration above):

[init] Using Kubernetes version: v1.15.1
[preflight] Running pre-flight checks
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Activating the kubelet service
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [k8s-master01 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.17.137]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] Generating "etcd/ca" certificate and key
[certs] Generating "etcd/server" certificate and key
[certs] etcd/server serving cert is signed for DNS names [k8s-master01 localhost] and IPs [192.168.17.137 127.0.0.1 ::1]
[certs] Generating "etcd/peer" certificate and key
[certs] etcd/peer serving cert is signed for DNS names [k8s-master01 localhost] and IPs [192.168.17.137 127.0.0.1 ::1]
[certs] Generating "apiserver-etcd-client" certificate and key
[certs] Generating "etcd/healthcheck-client" certificate and key
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
[control-plane] Creating static Pod manifest for "kube-scheduler"
[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 21.005629 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config-1.15" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Storing the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace
[upload-certs] Using certificate key: 48ed9ff6d019a6f4ce9d854b42146d0085432d28bd2671cccd6eb69382d427d2
[mark-control-plane] Marking the node k8s-master01 as control-plane by adding the label "node-role.kubernetes.io/master=''"
[mark-control-plane] Marking the node k8s-master01 as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
[bootstrap-token] Using token: abcdef.0123456789abcdef
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.17.137:6443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:4ed1fe2c50eff803e7348c7f9229081839b598092d03effe1417e2fda9f340d2

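What each phase in the log does:

[init]: initializes the cluster with the specified version.
[preflight]: runs the pre-initialization checks and pulls the required Docker images.
[kubelet-start]: generates the kubelet configuration file "/var/lib/kubelet/config.yaml". kubelet cannot start without this file, so before initialization kubelet actually fails to start.
[certs]: generates the certificates Kubernetes uses and stores them in /etc/kubernetes/pki.
[kubeconfig]: generates the kubeconfig files in /etc/kubernetes; the components use them to talk to each other.
[control-plane]: installs the master components from the YAML files in /etc/kubernetes/manifests.
[etcd]: installs etcd from /etc/kubernetes/manifests/etcd.yaml.
[wait-control-plane]: waits for the master components deployed by control-plane to start.
[apiclient]: checks the status of the master components.
[upload-config]: stores the configuration in a ConfigMap.
[kubelet]: configures kubelet through a ConfigMap.
[patchnode]: records the CRI socket information on the Node object as an annotation.
[mark-control-plane]: labels the current node with the master role and adds the NoSchedule taint, so Pods are not scheduled onto the master by default.
[bootstrap-token]: generates the token. Write it down; it is needed later when adding nodes with kubeadm join.
[addons]: installs the CoreDNS and kube-proxy add-ons.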

10) Join worker nodes

# Run the kubeadm join command printed in the init log to join the cluster

kubeadm join 192.168.17.137:6443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:260796226d38de54c3c851ad48abf40ff97228cda68ce892cb813d9104c9a914

Note: the default token is valid for 24 hours. Once it has expired, generate a new one on the master node with the following command:

kubeadm token create --print-join-command
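When a join fails, the two values worth checking are the token and the CA hash. The sketch below uses hypothetical function names. The token check assumes the usual bootstrap token shape of six characters, a dot, and sixteen characters. The hash function wraps the `openssl` pipeline commonly used to recompute the value that follows `sha256:`, and assumes the cluster CA uses an RSA key.

```shell
# Succeed when the argument looks like a bootstrap token:
# 6 characters, a dot, 16 characters, all lowercase letters or digits.
valid_bootstrap_token() {
  printf '%s\n' "$1" | grep -qE '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Print the sha256 hash of a CA certificate's public key, which is the value
# that follows "sha256:" in --discovery-token-ca-cert-hash.
ca_cert_hash() {
  local cert="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -in "$cert" |
    openssl rsa -pubin -outform der 2>/dev/null |
    openssl dgst -sha256 -hex | sed 's/^.* //'
}

# Example (on the master node):
#   valid_bootstrap_token abcdef.0123456789abcdef && echo "token format ok"
#   echo "sha256:$(ca_cert_hash)"
```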

11) Deploy the network add-on

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml

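Note:

- If your network connection is poor, download the file first, change the image addresses (usually around lines 106 and 120), and then deploy it.

- On hosts with multiple network interfaces, DNS resolution may fail; in that case pass the --iface=<iface-name> argument to flanneld.

- Alternatively, use my modified file directly (the images have already been switched to aliyuncs).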

# You can get more information at:
# https://www.cnblogs.com/leozhanggg/p/12571957.html
---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-amd64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-amd64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-arm64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-arm64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-arm
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-arm
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-ppc64le
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - ppc64le
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-ppc64le
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-ppc64le
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-s390x
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - s390x
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-s390x
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: registry.cn-shanghai.aliyuncs.com/leozhanggg/flannel:v0.12.0-s390x
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
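The pod network range in the kubeadm configuration has to agree with the "Network" value in flannel's net-conf.json, and a mismatch is easy to overlook. The following sketch, with hypothetical function names, extracts both values with `sed` and compares them. It assumes the files are laid out like kubeadm-config.yaml from step 9 and a locally saved copy of the flannel manifest (called kube-flannel.yml here).

```shell
# Print the podSubnet value from a kubeadm configuration file.
pod_subnet() {
  sed -n 's/^[[:space:]]*podSubnet:[[:space:]]*"\{0,1\}\([^"[:space:]]*\).*/\1/p' "$1" | head -n 1
}

# Print the "Network" value from flannel's net-conf.json inside a manifest.
flannel_network() {
  sed -n 's/.*"Network":[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Succeed when both files name the same pod network range.
subnets_match() {
  local a b
  a="$(pod_subnet "$1")"
  b="$(flannel_network "$2")"
  [ -n "$a" ] && [ "$a" = "$b" ]
}

# Example:
#   subnets_match kubeadm-config.yaml kube-flannel.yml || echo "pod subnet mismatch"
```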

12) Check cluster health

[root@k8s-master ~]# kubectl get cs
NAME                 STATUS    MESSAGE             ERROR
controller-manager   Healthy   ok
scheduler            Healthy   ok
etcd-0               Healthy   {"health":"true"}
[root@k8s-master ~]# kubectl get nodes
NAME         STATUS   ROLES    AGE     VERSION
k8s-master   Ready    master   37m     v1.15.1
k8s-node01   Ready    <none>   5m22s   v1.15.1
k8s-node02   Ready    <none>   5m18s   v1.15.1
[root@k8s-master ~]# kubectl get pod -n kube-system
NAME                                 READY   STATUS    RESTARTS   AGE
coredns-bccdc95cf-h2ngj              1/1     Running   0          14m
coredns-bccdc95cf-m78lt              1/1     Running   0          14m
etcd-k8s-master                      1/1     Running   0          13m
kube-apiserver-k8s-master            1/1     Running   0          13m
kube-controller-manager-k8s-master   1/1     Running   0          13m
kube-flannel-ds-amd64-j774f          1/1     Running   0          9m48s
kube-flannel-ds-amd64-t8785          1/1     Running   0          9m48s
kube-flannel-ds-amd64-wgbtz          1/1     Running   0          9m48s
kube-proxy-ddzdx                     1/1     Running   0          14m
kube-proxy-nwhzt                     1/1     Running   0          14m
kube-proxy-p64rw                     1/1     Running   0          13m
kube-scheduler-k8s-master            1/1     Running   0          13m
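On a larger cluster it can help to print only what is unhealthy. As a sketch, the hypothetical filters below read `kubectl` output produced with the `--no-headers` flag and list nodes that are not Ready and pods that are not Running.

```shell
# Read `kubectl get nodes --no-headers` on stdin and print nodes whose
# status is anything other than Ready (a cordoned node that is still
# Ready, shown as "Ready,SchedulingDisabled", is not reported).
not_ready_nodes() {
  awk '{ split($2, s, ","); if (s[1] != "Ready") print $1 }'
}

# Read `kubectl get pod -n kube-system --no-headers` on stdin and print
# pods whose status is not Running.
not_running_pods() {
  awk '$3 != "Running" { print $1 }'
}

# Example:
#   kubectl get nodes --no-headers | not_ready_nodes
#   kubectl get pod -n kube-system --no-headers | not_running_pods
```

Empty output from both commands corresponds to the healthy state shown above.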

13) Other operations

# Enable kubectl command completion
yum install -y bash-completion
source /usr/share/bash-completion/bash_completion
source <(kubectl completion bash)
echo "source <(kubectl completion bash)" >> ~/.bashrc

# Remove the default taint from the master node
kubectl taint nodes --all node-role.kubernetes.io/master-

# Mark a node unschedulable; existing Pods are not affected
kubectl cordon node-name
# Evict the Pods running on that node
kubectl drain node-name
# Maintenance finished; put the node back into service
kubectl uncordon node-name

# The default NodePort range for Kubernetes services is 30000-32767. To change it:
vim /etc/kubernetes/manifests/kube-apiserver.yaml
---
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    component: kube-apiserver
    tier: control-plane
  name: kube-apiserver
  namespace: kube-system
spec:
  containers:
  - command:
    - kube-apiserver
    - --service-node-port-range=2-65535   # add this line
    - --advertise-address=192.168.17.128
    ......

# Then restart kube-apiserver
docker restart $(docker ps | grep k8s_kube-apiserver | awk '{print $1}')

14) Common errors

1. [ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1
# Fix:
echo "1" > /proc/sys/net/bridge/bridge-nf-call-iptables

2. [ERROR Swap]: running with swap on is not supported. Please disable swap
# Fix: turn swap off, then comment out the swap line in /etc/fstab
swapoff -a
vim /etc/fstab
#/dev/mapper/rhel-swap swap swap defaults 0 0

3. [ERROR DirAvailable--var-lib-etcd]: /var/lib/etcd is not empty
# Fix: delete the /var/lib/etcd directory
rm -rf /var/lib/etcd

4. The connection to the server localhost:8080 was refused - did you specify the right host or port?
# Fix: so that kubectl can reach the apiserver, append the following environment variable to ~/.bash_profile, reload it, and run kubectl again
export KUBECONFIG=/etc/kubernetes/admin.conf
source ~/.bash_profile

5. Error execution phase preflight: [preflight] Some fatal errors occurred:
[ERROR Port-6443]: Port 6443 is in use
[ERROR Port-10251]: Port 10251 is in use
[ERROR Port-10252]: Port 10252 is in use
[ERROR FileAvailable--etc-kubernetes-manifests-kube-apiserver.yaml]: /etc/kubernetes/manifests/kube-apiserver.yaml already exists
[ERROR FileAvailable--etc-kubernetes-manifests-kube-controller-manager.yaml]: /etc/kubernetes/manifests/kube-controller-manager.yaml already exists
[ERROR FileAvailable--etc-kubernetes-manifests-kube-scheduler.yaml]: /etc/kubernetes/manifests/kube-scheduler.yaml already exists
[ERROR FileAvailable--etc-kubernetes-manifests-etcd.yaml]: /etc/kubernetes/manifests/etcd.yaml already exists
[ERROR Port-10250]: Port 10250 is in use
[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`
# Fix: as the message suggests, add --ignore-preflight-errors=all to skip the checks. If these files and ports are leftovers from an earlier kubeadm init, running kubeadm reset first clears them.
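For the port errors in item 5, it helps to see which of the control-plane ports already have a listener before deciding how to proceed. This is a small sketch with a hypothetical function name; it reads `ss -lnt` output on standard input and assumes the usual column layout, where the fourth column is the local address and port.

```shell
# Read `ss -lnt` output on stdin and print which of the given ports
# already have a listening socket.
ports_in_use() {
  local listening p
  # The fourth column is "address:port"; keep whatever follows the last colon.
  listening="$(awk 'NR > 1 { n = split($4, a, ":"); print a[n] }')"
  for p in "$@"; do
    printf '%s\n' "$listening" | grep -qx -- "$p" && printf '%s\n' "$p"
  done
  return 0
}

# Example:
#   ss -lnt | ports_in_use 6443 10250 10251 10252
```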

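More troubleshooting notes: "折腾kubernetes各种问题汇总" (a roundup of assorted Kubernetes problems).

kubectl command references: "Kubernetes kubectl 命令表" (kubectl command table) and "kubernetes常用命令整理" (common Kubernetes commands).

Author: Leozhanggg

Source: https://www.cnblogs.com/leozhanggg/p/12571957.html

Copyright of this article is shared by the author and cnblogs (博客园). Reposting is welcome, but unless the author agrees otherwise, this notice must be kept and a link to the original must be placed prominently on the page; otherwise the author reserves the right to pursue legal liability.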