DolphinScheduler 2.0.2 installation and deployment: pitfalls

1. Create a dolphinscheduler user with sudo privileges.

2. The machines DolphinScheduler is deployed on need passwordless SSH login to one another.
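A quick way to confirm step 2 before running install.sh is to attempt a non-interactive login to every host. The sketch below assumes the OpenSSH client is installed and uses the hostnames from the `ips` setting in install_config.conf (ds1, ds2, ds3). `BatchMode=yes` makes ssh fail instead of prompting for a password, so any non-zero exit status means key-based login is not working yet.

```python
import subprocess


def ssh_check_command(host, port=22):
    """Build an ssh command that runs `true` remotely without ever prompting."""
    # BatchMode=yes: fail instead of asking for a password.
    # ConnectTimeout: do not hang on unreachable hosts.
    return ["ssh", "-o", "BatchMode=yes", "-o", "ConnectTimeout=5",
            "-p", str(port), host, "true"]


def hosts_without_passwordless_ssh(hosts, port=22, run=subprocess.run):
    """Return the hosts that cannot be reached with key-based ssh login."""
    failed = []
    for host in hosts:
        try:
            result = run(ssh_check_command(host, port),
                         stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
            ok = result.returncode == 0
        except OSError:  # e.g. the ssh client is not installed
            ok = False
        if not ok:
            failed.append(host)
    return failed
```

Run `hosts_without_passwordless_ssh(["ds1", "ds2", "ds3"])` as the deploy user on each machine; an empty list means every host accepts key-based login. If a host still fails after the keys are in place, the SELinux note at the end of this post may be the cause.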

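3. Extract the package and enter the conf directory: cd dolphinscheduler-2.0.1/conf

Because I use MySQL as DolphinScheduler's metadata database, application-mysql.yaml and common.properties in the conf directory need to be modified.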

application-mysql.yaml

spring:
  datasource:
    driver-class-name: com.mysql.jdbc.Driver
    # Change this to your own MySQL connection address
    url: jdbc:mysql://ds1:3306/dolphinscheduler?useUnicode=true&characterEncoding=UTF-8
    username: dolphinscheduler
    password: dolphinscheduler
    hikari:
      connection-test-query: select 1
      minimum-idle: 5
      auto-commit: true
      validation-timeout: 3000
      pool-name: DolphinScheduler
      maximum-pool-size: 50
      connection-timeout: 30000
      idle-timeout: 600000
      leak-detection-threshold: 0
      initialization-fail-timeout: 1
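The same connection string appears again as SPRING_DATASOURCE_URL in install_config.conf, and the two must point at the same database. A minimal sketch that pulls host, port, database and parameters out of a jdbc:mysql:// URL with Python's standard urllib.parse so the two values can be compared; it assumes a single-host URL (no failover host lists).

```python
from urllib.parse import urlsplit, parse_qs


def parse_mysql_jdbc_url(url):
    """Split a single-host jdbc:mysql:// URL into its parts."""
    if not url.startswith("jdbc:mysql://"):
        raise ValueError("not a MySQL JDBC URL: " + url)
    parts = urlsplit(url[len("jdbc:"):])  # parse the "mysql://..." remainder
    return {
        "host": parts.hostname,            # lower-cased by urlsplit
        "port": parts.port or 3306,        # MySQL's default port if omitted
        "database": parts.path.lstrip("/"),
        # keep the last value if a parameter is repeated
        "params": {k: v[-1] for k, v in parse_qs(parts.query).items()},
    }


def same_database(url_a, url_b):
    """True if both URLs name the same host, port and database."""
    a, b = parse_mysql_jdbc_url(url_a), parse_mysql_jdbc_url(url_b)
    return all(a[k] == b[k] for k in ("host", "port", "database"))
```

Comparing only host, port and database is deliberate: the query parameters may legitimately differ between the two files.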


Database initialization

Because MySQL is the metadata database, the mysql-connector-java driver jar has to be added to DolphinScheduler's lib directory.

# Log in to MySQL
mysql -uroot -p

# Create the database and the account DolphinScheduler will use
mysql> CREATE DATABASE dolphinscheduler DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;
mysql> GRANT ALL PRIVILEGES ON dolphinscheduler.* TO '{user}'@'%' IDENTIFIED BY '{password}';
mysql> GRANT ALL PRIVILEGES ON dolphinscheduler.* TO '{user}'@'localhost' IDENTIFIED BY '{password}';
mysql> flush privileges;

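Replace {user} and {password} with the user name and password you want to use. (GRANT ... IDENTIFIED BY works on MySQL 5.7; MySQL 8.0 rejects it, so there create the user with CREATE USER first and then GRANT without IDENTIFIED BY.)

4. Run the script in the script directory that creates the tables and imports the base data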

sh script/create-dolphinscheduler.sh

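If the last line of the log reads create DolphinScheduler success, the script ran successfully.

Then check that the corresponding tables were created in the metadata database.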
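The success check above can be scripted. A minimal sketch, assuming the script writes its log to the console and is run from the extracted package directory (`ds_home` is a placeholder for that path). stderr is merged into stdout so the "last line" is really the last one printed. It only inspects that final line, so checking the tables by hand is still worthwhile.

```python
import subprocess

SUCCESS_MARKER = "create DolphinScheduler success"


def init_succeeded(log_text):
    """True if the last non-empty line of the log contains the success marker."""
    lines = [line.strip() for line in log_text.splitlines() if line.strip()]
    return bool(lines) and SUCCESS_MARKER in lines[-1]


def run_init_script(ds_home):
    """Run script/create-dolphinscheduler.sh and check its output."""
    result = subprocess.run(["sh", "script/create-dolphinscheduler.sh"],
                            cwd=ds_home, stdout=subprocess.PIPE,
                            stderr=subprocess.STDOUT, text=True)
    # Assumption: the log goes to the console, so both streams are captured in order.
    return init_succeeded(result.stdout)
```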

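Note: common.properties may not need any changes on the first deployment. After running install.sh you can edit it on the deployed machines and then restart all workers for the change to take effect.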

common.properties

#
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements. See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License. You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
#

# Local directory for user data; make sure it exists and is readable and writable
data.basedir.path=/tmp/dolphinscheduler

# Resource storage type: HDFS, S3, NONE
resource.storage.type=HDFS

# Storage path on HDFS
resource.upload.path=/dolphinscheduler

# Whether Kerberos is enabled
hadoop.security.authentication.startup.state=false

# java.security.krb5.conf path
#java.security.krb5.conf.path=/opt/krb5.conf

# login user from keytab username
login.user.keytab.username=hdfs-mycluster@ESZ.COM

# login user from keytab path
login.user.keytab.path=/u01/isi/application/bigsoft/ds-2.0.1/conf/hdfs.headless.keytab

# kerberos expire time, the unit is hour
kerberos.expire.time=2

# resource view suffixs
#resource.view.suffixs=txt,log,sh,bat,conf,cfg,py,java,sql,xml,hql,properties,json,yml,yaml,ini,js

# HDFS user
# if resource.storage.type=HDFS, the user must have the permission to create directories under the HDFS root path
hdfs.root.user=hdfs

# NameNode address
# if resource.storage.type=S3, the value like: s3a://dolphinscheduler; if resource.storage.type=HDFS and namenode HA is enabled, you need to copy core-site.xml and hdfs-site.xml to conf dir
fs.defaultFS=hdfs://ds1:8020

# if resource.storage.type=S3, s3 endpoint
fs.s3a.endpoint=http://192.168.xx.xx:9010

# if resource.storage.type=S3, s3 access key
fs.s3a.access.key=xxxxxxxxxx

# if resource.storage.type=S3, s3 secret key
fs.s3a.secret.key=xxxxxxxxxx

# resourcemanager port, the default value is 8088 if not specified
resource.manager.httpaddress.port=8088

# if resourcemanager HA is enabled, please set the HA IPs; if resourcemanager is single, keep this value empty
yarn.resourcemanager.ha.rm.ids=

# if resourcemanager HA is enabled or not use resourcemanager, please keep the default value; If resourcemanager is single, you only need to replace ds1 to actual resourcemanager hostname
yarn.application.status.address=http://ds1:%s/ws/v1/cluster/apps/%s

# Job history server address
# job history status url when application number threshold is reached (default 10000, maybe it was set to 1000)
yarn.job.history.status.address=http://ds1:19888/ws/v1/history/mapreduce/jobs/%s

# Datasource encryption: keep the defaults
# datasource encryption enable
datasource.encryption.enable=false

# datasource encryption salt
datasource.encryption.salt=!@#$%^&*

# use sudo or not, if set true, executing user is tenant user and deploy user needs sudo permissions; if set false, executing user is the deploy user and doesn't need sudo permissions
sudo.enable=true

# network interface preferred like eth0, default: empty
#dolphin.scheduler.network.interface.preferred=

# network IP gets priority, default: inner outer
#dolphin.scheduler.network.priority.strategy=default

# system env path
#dolphinscheduler.env.path=env/dolphinscheduler_env.sh

# Keep the default
# development state
development.state=false

# Plugin path; the default is fine
#datasource.plugin.dir config
datasource.plugin.dir=lib/plugin/datasource
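Several of these settings depend on each other, so a quick consistency check before restarting the workers can catch mistakes early. A minimal sketch under my own assumptions: the parser only understands plain key=value lines (not the full java.util.Properties syntax with ':' separators or line continuations), and the rules are my reading of the comments in the file above plus the Kerberos pitfall described near the end of this post.

```python
def load_properties(text):
    """Parse plain key=value lines; lines starting with '#' or '!' are comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line[0] in "#!":
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def check_common_properties(props):
    """Return human-readable problems found in a parsed common.properties."""
    problems = []
    storage = props.get("resource.storage.type", "NONE").upper()
    default_fs = props.get("fs.defaultFS", "")
    if storage == "HDFS" and not default_fs.startswith("hdfs://"):
        problems.append("resource.storage.type=HDFS but fs.defaultFS is not an hdfs:// URL")
    if storage == "S3" and not default_fs.startswith("s3a://"):
        problems.append("resource.storage.type=S3 but fs.defaultFS is not an s3a:// URL")
    kerberos = props.get("hadoop.security.authentication.startup.state", "false")
    if kerberos.lower() != "true" and "java.security.krb5.conf.path" in props:
        problems.append("Kerberos is off but java.security.krb5.conf.path is set")
    return problems
```

An empty list from `check_common_properties(load_properties(text))` only means these particular rules pass; it does not validate hostnames or ports.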

5. Edit dolphinscheduler_env.sh and change the environment variables to match your own environment

cd dolphinscheduler-2.0.1/conf/env

dolphinscheduler_env.sh

# On Ambari/HDP, first add the machine's own environment variables manually, then configure DolphinScheduler's env; components you have not deployed yet can simply be commented out
# DolphinScheduler only assembles commands and then invokes each component through the environment set here
export HADOOP_HOME=/usr/hdp/3.1.4.0-315/hadoop/
export HADOOP_CONF_DIR=/usr/hdp/3.1.4.0-315/hadoop/etc/hadoop
#export SPARK_HOME1=/opt/soft/spark1
export SPARK_HOME2=/usr/hdp/3.1.4.0-315/spark2
#export PYTHON_HOME=/opt/soft/python
export JAVA_HOME=/usr/local/jdk1.8.0_112
export HIVE_HOME=/usr/hdp/3.1.4.0-315/hive
#export FLINK_HOME=
export DATAX_HOME=/u01/isi/application/bigsoft/datax
export PATH=$HADOOP_HOME/bin:$SPARK_HOME1/bin:$SPARK_HOME2/bin:$PYTHON_HOME/bin:$JAVA_HOME/bin:$HIVE_HOME/bin:$FLINK_HOME/bin:$DATAX_HOME/bin:$PATH

6. Edit the installation configuration file

vim /dolphinscheduler-2.0.0/conf/config/install_config.conf

install_config.conf

# The main part: this is the file the one-click deployment script reads

# ---------------------------------------------------------
# INSTALL MACHINE
# ---------------------------------------------------------

# A comma separated list of machine hostname or IP would be installed DolphinScheduler,
# including master, worker, api, alert. If you want to deploy in pseudo-distributed
# mode, just write a pseudo-distributed hostname
# Example for hostnames: ips="ds1,ds2,ds3,ds4,ds5", Example for IPs: ips="192.168.8.1,192.168.8.2,192.168.8.3,192.168.8.4,192.168.8.5"
# Hostnames or IP addresses of the machines the distributed cluster is deployed on
ips="ds1,ds2,ds3"

# Port of SSH protocol, default value is 22. For now we only support same port in all `ips` machine
# modify it if you use different ssh port
sshPort="22"

# A comma separated list of machine hostname or IP would be installed Master server, it
# must be a subset of configuration `ips`.
# Example for hostnames: masters="ds1,ds2", Example for IPs: masters="192.168.8.1,192.168.8.2"
masters="ds2"

# A comma separated list of machine <hostname>:<workerGroup> or <IP>:<workerGroup>.All hostname or IP must be a
# subset of configuration `ips`, And workerGroup have default value as `default`, but we recommend you declare behind the hosts
# Example for hostnames: workers="ds1:default,ds2:default,ds3:default", Example for IPs: workers="192.168.8.1:default,192.168.8.2:default,192.168.8.3:default"
# The worker group of each worker; it can be changed later in the conf directory on the worker machine
workers="ds1:default,ds2:default,ds3:default"

# A comma separated list of machine hostname or IP would be installed Alert server, it
# must be a subset of configuration `ips`.
# Example for hostname: alertServer="ds3", Example for IP: alertServer="192.168.8.3"
alertServer="ds3"

# A comma separated list of machine hostname or IP would be installed API server, it
# must be a subset of configuration `ips`.
# Example for hostname: apiServers="ds1", Example for IP: apiServers="192.168.8.1"
apiServers="ds1"

# The directory to install DolphinScheduler for all machine we config above. It will automatically be created by `install.sh` script if not exists.
# Do not set this configuration same as the current path (pwd)
installPath="/u01/isi/application/bigsoft/ds-2.0.1"

# The user to deploy DolphinScheduler for all machine we config above. For now user must create by yourself before running `install.sh`
# script. The user needs to have sudo privileges and permissions to operate hdfs. If hdfs is enabled than the root directory needs
# to be created by this user
# Deploy user: needs sudo and HDFS permissions; create a new one as in the official docs, or reuse an existing user
deployUser="isi"

# The directory to store local data for all machine we config above. Make sure user `deployUser` have permissions to read and write this directory.
dataBasedirPath="/tmp/dolphinscheduler"

# ---------------------------------------------------------
# DolphinScheduler ENV
# ---------------------------------------------------------

# JAVA_HOME, we recommend use same JAVA_HOME in all machine you going to install DolphinScheduler
# and this configuration only support one parameter so far.
javaHome="/usr/local/jdk1.8.0_112"

# DolphinScheduler API service port, also this is your DolphinScheduler UI component's URL port, default value is 12345
# It can be changed later on the machine that runs the API server
apiServerPort="12345"

# ---------------------------------------------------------
# Database
# NOTICE: If database value has special characters, such as `.*[]^${}\+?|()@#&`, Please add prefix `\` for escaping.
# ---------------------------------------------------------

# The type for the metadata database
# Supported values: `postgresql`, `mysql`, `h2`.
DATABASE_TYPE=${DATABASE_TYPE:-"mysql"}

# Spring datasource url, following <HOST>:<PORT>/<database>?<parameter> format, If you using mysql, you could use jdbc
# string jdbc:mysql://127.0.0.1:3306/dolphinscheduler?useUnicode=true&characterEncoding=UTF-8 as example
SPRING_DATASOURCE_URL=${SPRING_DATASOURCE_URL:-"jdbc:mysql://ds1:3306/dolphinscheduler?useUnicode=true&characterEncoding=UTF-8"}

# Spring datasource username
SPRING_DATASOURCE_USERNAME=${SPRING_DATASOURCE_USERNAME:-"dolphinscheduler"}

# Spring datasource password
SPRING_DATASOURCE_PASSWORD=${SPRING_DATASOURCE_PASSWORD:-"dolphinscheduler"}

# ---------------------------------------------------------
# Registry Server
# ---------------------------------------------------------

# Registry Server plugin name, should be a substring of `registryPluginDir`, DolphinScheduler use this for verifying configuration consistency
registryPluginName="zookeeper"

# Registry Server address (the ZooKeeper addresses)
registryServers="ds1:2181,ds2:2181,ds3:2181"

# The root of zookeeper, for now DolphinScheduler default registry server is zookeeper.
# The node DolphinScheduler creates in ZooKeeper
zkRoot="/dolphinscheduler"

# ---------------------------------------------------------
# Worker Task Server
# ---------------------------------------------------------

# Worker Task Server plugin dir. DolphinScheduler will find and load the worker task plugin jar package from this dir.
taskPluginDir="lib/plugin/task"

# resource storage type: HDFS, S3, NONE
resourceStorageType="HDFS"

# resource store on HDFS/S3 path, resource file will store to this hdfs path, self configuration, please make sure the directory exists on hdfs and has read write permissions. "/dolphinscheduler" is recommended
resourceUploadPath="/dolphinscheduler"

# if resourceStorageType is HDFS,defaultFS write namenode address,HA, you need to put core-site.xml and hdfs-site.xml in the conf directory.
# if S3,write S3 address,HA,for example :s3a://dolphinscheduler,
# Note,S3 be sure to create the root directory /dolphinscheduler
# NameNode address
defaultFS="hdfs://ds1:8020"

# if resourceStorageType is S3, the following three configuration is required, otherwise please ignore
s3Endpoint="http://192.168.xx.xx:9010"
s3AccessKey="xxxxxxxxxx"
s3SecretKey="xxxxxxxxxx"

# resourcemanager port, the default value is 8088 if not specified
resourceManagerHttpAddressPort="8088"

# if resourcemanager HA is enabled, please set the HA IPs; if resourcemanager is single node, keep this value empty
yarnHaIps=""

# if resourcemanager HA is enabled or not use resourcemanager, please keep the default value; If resourcemanager is single node, you only need to replace 'yarnIp1' to actual resourcemanager hostname
singleYarnIp="ds1"

# who has permission to create directory under HDFS/S3 root path
# Note: if kerberos is enabled, please config hdfsRootUser=
hdfsRootUser="hdfs"

# kerberos config
# whether kerberos starts, if kerberos starts, following four items need to config, otherwise please ignore
kerberosStartUp="false"

# kdc krb5 config file path
krb5ConfPath="$installPath/conf/krb5.conf"

# keytab username, note that the @ sign must be escaped as \\@
keytabUserName="hdfs-mycluster\\@ESZ.COM"

# username keytab path
keytabPath="$installPath/conf/hdfs.headless.keytab"

# kerberos expire time, the unit is hour
kerberosExpireTime="2"

# use sudo or not
sudoEnable="true"

# worker tenant auto create
# Tenants must be created manually, and they need to correspond to the deploy user and the HDFS user
workerTenantAutoCreate="false"
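The comments in install_config.conf require masters, workers, alertServer and apiServers to be subsets of `ips`. A small sketch that checks this before running install.sh. It only reads plain name="value" lines, so entries written as ${VAR:-"default"} (the database settings) are skipped, and a worker without an explicit group is treated as `default`, as the comment says.

```python
import re

# name="value" on one line; ${VAR:-"..."} style entries do not match.
ASSIGNMENT = re.compile(r'^([A-Za-z_][A-Za-z0-9_]*)="(.*)"\s*$')


def load_install_config(text):
    """Collect the simple name="value" assignments from install_config.conf."""
    cfg = {}
    for line in text.splitlines():
        match = ASSIGNMENT.match(line.strip())
        if match:
            cfg[match.group(1)] = match.group(2)
    return cfg


def split_list(value):
    return [item.strip() for item in value.split(",") if item.strip()]


def parse_workers(value):
    """'ds1:default,ds2:g1' -> {'ds1': 'default', 'ds2': 'g1'}"""
    workers = {}
    for entry in split_list(value):
        host, _, group = entry.partition(":")
        workers[host] = group or "default"  # the group defaults to `default`
    return workers


def check_install_config(cfg):
    """List every configured host that is not part of `ips`."""
    ips = set(split_list(cfg.get("ips", "")))
    errors = []
    for key in ("masters", "alertServer", "apiServers"):
        for host in split_list(cfg.get(key, "")):
            if host not in ips:
                errors.append(f"{key}: {host} is not in ips")
    for host in parse_workers(cfg.get("workers", "")):
        if host not in ips:
            errors.append(f"workers: {host} is not in ips")
    return errors
```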

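7. Switch to the deploy user dolphinscheduler and run the one-click deployment script. If you do not switch users, operations may fail because of permission problems.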

su dolphinscheduler

sh install.sh

Once the deployment script has finished, the following services are running on each machine:

1: ApiApplicationServer WorkerServer LoggerServer

2: WorkerServer

3: LoggerServer WorkerServer AlertServer MasterServer
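To confirm each machine matches the list above, the JDK's jps tool prints one line per running JVM as "<pid> <main class>". A minimal sketch that compares that output with the expected server names; it assumes jps is on the deploy user's PATH and that the names listed above are the main-class names jps reports.

```python
import subprocess


def running_services(jps_output):
    """Parse `jps` output ('<pid> <MainClass>' per line) into a set of class names."""
    names = set()
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) >= 2:
            names.add(parts[1])
    return names


def missing_services(expected, jps_output):
    """Expected services that do not appear in the jps output, sorted."""
    return sorted(set(expected) - running_services(jps_output))


def local_jps_output():
    """Run jps on this machine (assumes it is on PATH)."""
    return subprocess.run(["jps"], capture_output=True, text=True, check=True).stdout
```

On machine 1, for example, `missing_services({"ApiApplicationServer", "WorkerServer", "LoggerServer"}, local_jps_output())` should return an empty list.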

8. Other start/stop commands

# Stop all services in the cluster
sh ./bin/stop-all.sh

# Start all services in the cluster
sh ./bin/start-all.sh

# Stop/start the Master
sh ./bin/dolphinscheduler-daemon.sh stop master-server
sh ./bin/dolphinscheduler-daemon.sh start master-server

# Start/stop the Worker
sh ./bin/dolphinscheduler-daemon.sh start worker-server
sh ./bin/dolphinscheduler-daemon.sh stop worker-server

# Start/stop the API server
sh ./bin/dolphinscheduler-daemon.sh start api-server
sh ./bin/dolphinscheduler-daemon.sh stop api-server

# Start/stop the Logger
sh ./bin/dolphinscheduler-daemon.sh start logger-server
sh ./bin/dolphinscheduler-daemon.sh stop logger-server

# Start/stop the Alert server
sh ./bin/dolphinscheduler-daemon.sh start alert-server
sh ./bin/dolphinscheduler-daemon.sh stop alert-server
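Several fixes in this post (editing common.properties or worker.properties) end with restarting a service. A small sketch that builds the stop/start commands above for one service. `ds_home` is a placeholder for your installPath, and `dry_run` defaults to True so nothing runs until you ask. The exit status of dolphinscheduler-daemon.sh is not checked, since I have not verified what the script returns.

```python
import subprocess

SERVICES = ("master-server", "worker-server", "api-server",
            "logger-server", "alert-server")


def daemon_command(action, service):
    """Build one dolphinscheduler-daemon.sh invocation, as in the list above."""
    if action not in ("start", "stop"):
        raise ValueError("action must be 'start' or 'stop'")
    if service not in SERVICES:
        raise ValueError("unknown service: " + service)
    return ["sh", "./bin/dolphinscheduler-daemon.sh", action, service]


def restart(service, ds_home, dry_run=True):
    """Stop, then start one service; with dry_run=True only return the commands."""
    commands = [daemon_command("stop", service), daemon_command("start", service)]
    if not dry_run:
        for command in commands:
            subprocess.run(command, cwd=ds_home)  # run from the install directory
    return commands
```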

WebUI

Open http://Ava01:12345/dolphinscheduler and the login page should appear. The hostname is the machine running ApiApplicationServer.

Default user name/password: admin/dolphinscheduler123
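The API server can take a while to come up after start-all.sh. A minimal sketch, using only the standard library, that builds the login-page URL from the host running ApiApplicationServer and the apiServerPort (12345 by default) and checks whether it answers with HTTP 200.

```python
import urllib.request


def ui_url(api_host, port=12345):
    """Login page URL: http://<api host>:<apiServerPort>/dolphinscheduler"""
    return f"http://{api_host}:{port}/dolphinscheduler"


def ui_reachable(api_host, port=12345, timeout=5.0):
    """True if the login page answers with HTTP 200 (redirects are followed)."""
    try:
        with urllib.request.urlopen(ui_url(api_host, port), timeout=timeout) as resp:
            return resp.status == 200
    except OSError:  # URLError, HTTPError and socket timeouts are OSError subclasses
        return False
```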

Pitfalls

About worker.properties

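This configuration file, installed on every machine, specifies which worker groups that machine belongs to. While configuring version 1.3.8, I found that worker groups configured under the admin account sometimes did not take effect, and the master log showed an error like this:

[ERROR] 2021-12-08 15:37:00.405 org.apache.dolphinscheduler.server.master.consumer.TaskPriorityQueueConsumer:[154] - dispatch error: fail to execute : Command [type=TASK_EXECUTE_REQUEST, opaque=2988, bodyLen=1506] due to no suitable worker, current task needs worker group Ava03 to execute

When this happens, stop the DS services manually, edit worker.properties on every machine, and then start the DS services again.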
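The error means that no registered worker advertises the worker group the task asks for (Ava03 here). The sketch below is a simplified model of that check, built from each machine's worker.groups line. It is not DolphinScheduler's dispatch code, which works from the workers registered in ZooKeeper, and the host-to-groups mapping in the test is hypothetical.

```python
def parse_worker_groups(properties_line):
    """'worker.groups=default,Ava01' -> ['default', 'Ava01']"""
    key, _, value = properties_line.partition("=")
    if key.strip() != "worker.groups":
        raise ValueError("expected a worker.groups line")
    return [group.strip() for group in value.split(",") if group.strip()]


def workers_for_group(group, host_groups):
    """Hosts whose worker.groups contain `group`; empty means 'no suitable worker'."""
    return sorted(host for host, groups in host_groups.items() if group in groups)
```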

# worker.properties: this machine belongs to two worker groups, default and Ava01
worker.groups=default,Ava01

Other notes:

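1. Files in the Resource Center can only be created by uploading them through the web UI; jar files copied directly into the Resource Center's directory are not recognized.

2. The mapped worker group takes precedence over the worker group selected when starting the workflow, so make sure the environment a task needs is configured on that worker.

3. Parameters: inside a workflow node, refer to a parameter as ${xx}, but when passing the parameter below, drop the ${} and use just xx as its name (see the figure below).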

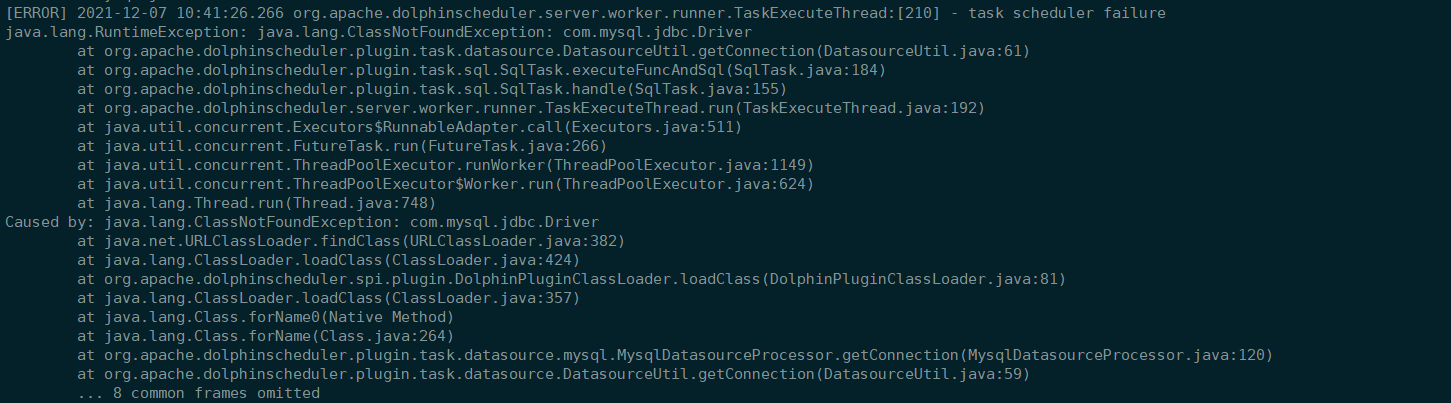

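4. On 2.0 deployments, even after the MySQL driver jar has been added manually, a scheduled SQL task node can fail because the driver class cannot be found. The fix:

copy the driver jar manually into the procedure task's plugin directory, or put it in the lib/plugin/task/sql directory; either works.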
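Item 3 in code form: the node body refers to ${xx}, while the parameter itself is declared under the bare name xx. A minimal sketch of that naming convention using a regular expression. It is an illustration only, not DolphinScheduler's parameter engine, and the SQL and the `dt` parameter in the test are hypothetical.

```python
import re

# ${name} placeholders; names may contain letters, digits, '_' and '.'
PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_.]*)\}")


def render(script, params):
    """Replace ${name} with params["name"]; keys are bare names, without ${}."""
    def substitute(match):
        name = match.group(1)
        if name not in params:
            raise KeyError(f"parameter {name!r} is not defined")
        return str(params[name])
    return PLACEHOLDER.sub(substitute, script)
```

A parameter declared with the key "${dt}" instead of "dt" would not be found here and raises KeyError, which mirrors the mistake item 3 warns about.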

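Fixing the missing driver in 2.0.0

I had manually added the MySQL driver jar to lib in the extracted dolphinscheduler package, and after installation the jar was also present in lib of the installed DS 2 directory. A MySQL datasource connected successfully in the web UI, but as soon as a task used a SQL node it failed with a missing JDBC driver error.

Solution: download the MySQL driver from the "MySQL :: Download MySQL Connector/J (Archived Versions)" page and manually put it into the lib directory of the extracted installation package.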

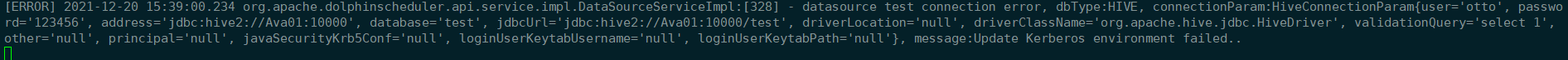
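The copying in item 4 and in the fix above can be scripted so no machine gets forgotten. A minimal sketch, assuming you have already downloaded the Connector/J jar (the file name in the test is hypothetical) and that `ds_home` points at either the extracted package or the installPath. It copies into lib and lib/plugin/task/sql, the two directories named in this post, and refuses to create missing directories so that a typo in the path surfaces as an error.

```python
import shutil
from pathlib import Path

# Directories named in this post; add the procedure task plugin dir if you use it.
DEFAULT_SUBDIRS = ("lib", "lib/plugin/task/sql")


def driver_targets(jar, ds_home, subdirs=DEFAULT_SUBDIRS):
    """Where the jar should end up, one path per target directory."""
    name = Path(jar).name
    return [Path(ds_home) / sub / name for sub in subdirs]


def install_driver(jar, ds_home, subdirs=DEFAULT_SUBDIRS):
    """Copy the jar into every target directory; return the new paths."""
    targets = driver_targets(jar, ds_home, subdirs)
    missing = [str(t.parent) for t in targets if not t.parent.is_dir()]
    if missing:  # check everything first so nothing is copied halfway
        raise FileNotFoundError(f"missing directories: {missing}")
    for target in targets:
        shutil.copy2(jar, target)  # copy2 also keeps the file timestamps
    return targets
```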

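2.0.1: creating a Hive datasource fails with "Update Kerberos environment failed"

Solution: if this is not a Kerberos environment, comment out the java.security.krb5.conf.path entry in conf/common.properties and restart the workers. Creating the Hive datasource then succeeds.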
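The change has to be made in common.properties on every worker, so here is a small sketch that comments out an active java.security.krb5.conf.path line and leaves everything else untouched. The `path` argument is the conf/common.properties file on that machine; the workers still need the restart described above.

```python
import re
from pathlib import Path

# An active (not yet commented) java.security.krb5.conf.path entry.
KRB5_ENTRY = re.compile(r"^([ \t]*)(java\.security\.krb5\.conf\.path\s*=)", re.MULTILINE)


def comment_out_krb5(text):
    """Prefix an active java.security.krb5.conf.path line with '#'."""
    return KRB5_ENTRY.sub(r"\1#\2", text)


def fix_common_properties(path):
    """Rewrite the file in place; return True if anything changed."""
    file = Path(path)
    original = file.read_text(encoding="utf-8")
    updated = comment_out_krb5(original)
    if updated != original:
        file.write_text(updated, encoding="utf-8")
    return updated != original
```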

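2021.12.24: passwordless login kept failing no matter what I configured. After going through all kinds of material, it turned out to be SELinux, which has to be turned off, either permanently or temporarily.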

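# Check the current mode
getenforce

# Temporarily disable SELinux (permissive mode until the next reboot)
setenforce 0

# Temporarily re-enable SELinux (enforcing mode)
setenforce 1

# Details
https://www.cnblogs.com/liuzgg/p/11656532.html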
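setenforce only changes the mode until the next reboot. On RHEL/CentOS-style systems the mode used at boot comes from the SELINUX= line in /etc/selinux/config (enforcing, permissive or disabled), which is where a permanent change goes; the linked article covers the details. A minimal sketch of that edit: it must run as root, the new mode takes effect after a reboot, and whether to choose permissive or disabled is up to your security requirements.

```python
import re
from pathlib import Path

# Matches "SELINUX=..." but not "SELINUXTYPE=...".
SELINUX_LINE = re.compile(r"^SELINUX=\S*", re.MULTILINE)
MODES = ("enforcing", "permissive", "disabled")


def set_selinux_mode(config_text, mode="permissive"):
    """Return the config text with the SELINUX= line set to `mode`."""
    if mode not in MODES:
        raise ValueError("invalid SELinux mode: " + mode)
    return SELINUX_LINE.sub("SELINUX=" + mode, config_text)


def apply_selinux_mode(mode="permissive", path="/etc/selinux/config"):
    """Rewrite the config file in place (needs root; applies after a reboot)."""
    file = Path(path)
    file.write_text(set_selinux_mode(file.read_text(), mode))
```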

Zhejiang Public Network Security Filing No. 33010602011771
