Consuming Kafka's __consumer_offsets

__consumer_offsets

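By default, a consumer stores its committed offsets in a built-in Kafka topic named __consumer_offsets, with each record keyed by consumer group, topic, and partition.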

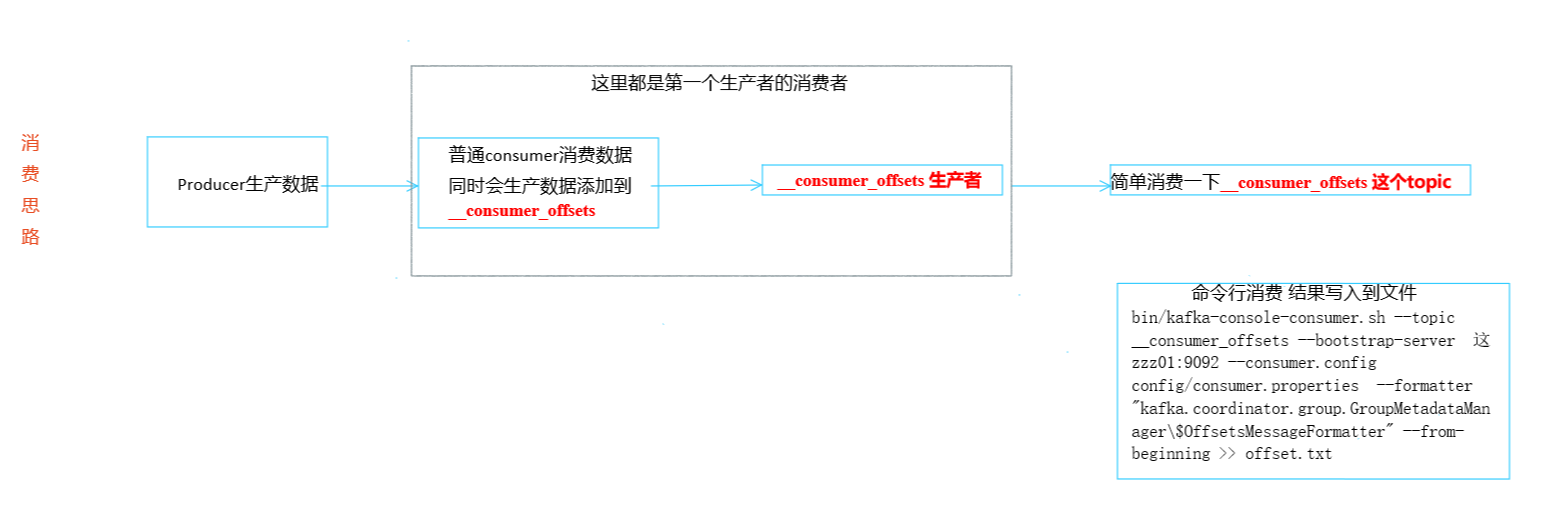
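Each group's offsets live in a single partition of __consumer_offsets (50 partitions by default, controlled by the broker setting offsets.topic.num.partitions). As far as I know, Kafka chooses that partition as the absolute value of the group id's hashCode() modulo the partition count. The sketch below reproduces that arithmetic in plain Java, assuming the default of 50 partitions; the class and method names are hypothetical.

```java
public class OffsetsPartitionLookup {
    // Assumed default of the broker setting offsets.topic.num.partitions.
    static final int DEFAULT_OFFSETS_PARTITIONS = 50;

    // abs(hashCode) % numPartitions. Integer.MIN_VALUE is mapped to 0 because
    // Math.abs(Integer.MIN_VALUE) is still negative.
    static int partitionFor(String groupId, int numPartitions) {
        int h = groupId.hashCode();
        int positive = (h == Integer.MIN_VALUE) ? 0 : Math.abs(h);
        return positive % numPartitions;
    }

    public static void main(String[] args) {
        // Defaults to the group id "Ava" used by offset_Consumer in this post.
        String group = args.length > 0 ? args[0] : "Ava";
        System.out.println(group + " -> __consumer_offsets partition "
                + partitionFor(group, DEFAULT_OFFSETS_PARTITIONS));
    }
}
```

For the group Ava this prints `Ava -> __consumer_offsets partition 20`, so adding `--partition 20` to the console consumer command should narrow the output to that partition (which may also hold other groups that hash to the same number).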

__consumer_offsets is an ordinary Kafka topic, so it can be read with a consumer like any other topic. The general approach:

1. First, start a producer:

offset_Producer

```java
package Look_offset;

import org.apache.kafka.clients.producer.*;

import java.util.Properties;
import java.util.concurrent.ExecutionException;

/* A simple producer that supplies data for offset_Consumer to consume */
public class offset_Producer {

    public static void main(String[] args) throws ExecutionException, InterruptedException {
        Properties properties = new Properties();
        properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "zzz01:9092");
        properties.put(ProducerConfig.BATCH_SIZE_CONFIG, "16384");
        properties.put(ProducerConfig.LINGER_MS_CONFIG, 1);
        properties.put(ProducerConfig.BUFFER_MEMORY_CONFIG, 33554432);
        // Reliability: wait for all in-sync replicas, retry failed sends up to 3 times
        properties.put(ProducerConfig.ACKS_CONFIG, "all");
        properties.put(ProducerConfig.RETRIES_CONFIG, 3);
        properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringSerializer");
        properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringSerializer");

        KafkaProducer<String, String> kafkaProducer = new KafkaProducer<>(properties);

        for (int i = 0; i < 1000; i++) {
            ProducerRecord<String, String> producerRecord = new ProducerRecord<>("otto", "害羞小向晚" + i + "次");

            // Callback: with acks=all, the broker acknowledges only after every in-sync replica has the record
            kafkaProducer.send(producerRecord, new Callback() {
                @Override
                public void onCompletion(RecordMetadata metadata, Exception exception) {
                    if (exception != null) {
                        exception.printStackTrace();
                    } else {
                        System.out.println(metadata.topic() + " data: " + producerRecord.value() + " "
                                + "partition: " + metadata.partition() + " "
                                + "offset: " + metadata.offset());
                    }
                }
            });

            // The send above is asynchronous. A synchronous send blocks the current thread until the ack arrives;
            // since send() returns a Future, calling get() on it (kafkaProducer.send(producerRecord).get()) gives that behavior.
            Thread.sleep(5);
        }

        kafkaProducer.close();
    }
}
```
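The comment in the loop describes synchronous sending but the loop itself sends asynchronously. Below is a minimal sketch of the synchronous variant, assuming kafka-clients 2.x/3.x on the classpath: it blocks on the Future returned by send() for every record. So that it runs without a broker, it uses Kafka's MockProducer with autoComplete=true, which completes each send immediately; in real code you would pass a KafkaProducer built from the properties above. The class and method names are hypothetical.

```java
import java.util.concurrent.ExecutionException;

import org.apache.kafka.clients.producer.MockProducer;
import org.apache.kafka.clients.producer.Producer;
import org.apache.kafka.clients.producer.ProducerRecord;
import org.apache.kafka.clients.producer.RecordMetadata;
import org.apache.kafka.common.serialization.StringSerializer;

public class SyncSendSketch {
    // Sends each record and blocks on Future.get() until that send completes or fails.
    static int sendSync(Producer<String, String> producer, String topic, int count)
            throws ExecutionException, InterruptedException {
        int acked = 0;
        for (int i = 0; i < count; i++) {
            RecordMetadata metadata = producer.send(new ProducerRecord<>(topic, "message " + i)).get();
            System.out.println("partition " + metadata.partition() + ", offset " + metadata.offset());
            acked++;
        }
        return acked;
    }

    public static void main(String[] args) throws Exception {
        // autoComplete=true: every send() completes immediately, no broker needed.
        MockProducer<String, String> producer =
                new MockProducer<>(true, new StringSerializer(), new StringSerializer());
        int acked = sendSync(producer, "otto", 3);
        System.out.println(acked + " records sent, history size " + producer.history().size());
        producer.close();
    }
}
```

Blocking on every send trades throughput for simplicity: each record waits for its acknowledgement before the next one is sent, so batching settings such as linger.ms and batch.size have little effect.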

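2. Run the console consumer script on a Kafka node

Script for consuming __consumer_offsets. The --formatter option turns the binary offset-commit records into readable text (the backslash in \$ stops the shell from expanding $OffsetsMessageFormatter inside the double quotes), and --from-beginning starts from the earliest available offset: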

```shell
# Append the output to a file so it is easier to inspect
bin/kafka-console-consumer.sh --topic __consumer_offsets --bootstrap-server Ava01:9092 --consumer.config config/consumer.properties --formatter "kafka.coordinator.group.GroupMetadataManager\$OffsetsMessageFormatter" --from-beginning >>kafka_offset.txt
```

3. Start the consumer

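This consumer plays two roles: it consumes the otto topic, and the offsets it commits are written to __consumer_offsets, where the console consumer started by the script in step 2 reads them.

offset_Consumer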

```java
package Look_offset;

import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;

import java.time.Duration;
import java.util.ArrayList;
import java.util.Properties;

/*
 * Both a consumer and (indirectly) a producer: the offsets it commits are written to
 * __consumer_offsets, where they are consumed by offset_Consumer2. */
public class offset_Consumer {

    public static void main(String[] args) {
        // 1. Create the configuration object
        Properties properties = new Properties();

        // 2. Add settings to the configuration object
        // Broker connection
        properties.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "zzz01:9092");
        // Deserializers (required)
        properties.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");
        properties.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");
        // Consumer group. enable.auto.commit defaults to true, so this group's position is committed
        // every auto.commit.interval.ms (5 s by default); those commits are what land in __consumer_offsets.
        properties.put(ConsumerConfig.GROUP_ID_CONFIG, "Ava");
        // Change the partition assignment strategy
        // properties.put(ConsumerConfig.PARTITION_ASSIGNMENT_STRATEGY_CONFIG, "org.apache.kafka.clients.consumer.RoundRobinAssignor");
        // exclude.internal.topics=false only matters for pattern subscriptions; an internal topic can always be
        // subscribed to by name. This consumer subscribes to "otto", so the setting is optional here.
        properties.put(ConsumerConfig.EXCLUDE_INTERNAL_TOPICS_CONFIG, "false");

        // 3. Create the Kafka consumer
        KafkaConsumer<String, String> consumer = new KafkaConsumer<>(properties);

        // 4. Subscribe to topics (subscribe() takes a collection)
        ArrayList<String> arrayList = new ArrayList<>();
        // Change the topic here
        arrayList.add("otto");
        consumer.subscribe(arrayList);

        // 5. Consume data
        while (true) {
            // Fetch records
            ConsumerRecords<String, String> consumerRecords = consumer.poll(Duration.ofSeconds(1));
            // Print records
            for (ConsumerRecord<String, String> consumerRecord : consumerRecords) {
                System.out.println(consumerRecord.value() + " " + "offset: " + consumerRecord.offset());
            }
        }
    }
}
```
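Once offset_Consumer has been running for a while, kafka_offset.txt accumulates one line per offset commit, so the same partition appears many times. The sketch below keeps only the latest committed offset per group, topic, and partition. It assumes lines shaped like `[Ava,otto,0]::OffsetAndMetadata(offset=..., ...)`, which is what the GroupMetadataManager formatter prints in the Kafka versions I am familiar with; other versions may format lines differently, so adjust the regex if nothing matches. The class name is hypothetical.

```java
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class OffsetFileSummary {
    // Assumed line prefix: [group,topic,partition]::OffsetAndMetadata(offset=N
    private static final Pattern LINE = Pattern.compile(
            "\\[([^,\\]]+),([^,\\]]+),(\\d+)\\]::OffsetAndMetadata\\(offset=(\\d+)");

    /** Maps "group,topic,partition" to the last committed offset found in the lines. */
    static Map<String, Long> latestOffsets(List<String> lines) {
        Map<String, Long> latest = new LinkedHashMap<>();
        for (String line : lines) {
            Matcher m = LINE.matcher(line);
            if (!m.lookingAt()) {
                continue; // skip tombstones (NULL values) and lines in other formats
            }
            String key = m.group(1) + "," + m.group(2) + "," + m.group(3);
            latest.put(key, Long.parseLong(m.group(4))); // later commits overwrite earlier ones
        }
        return latest;
    }

    public static void main(String[] args) throws Exception {
        // Usage: java OffsetFileSummary kafka_offset.txt
        List<String> lines = Files.readAllLines(Paths.get(args[0]));
        latestOffsets(lines).forEach((key, offset) -> System.out.println(key + " -> " + offset));
    }
}
```

Keep in mind that a committed offset is the position of the next record the group will read, i.e. the offset of the last consumed record plus one.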

Never slack off.

浙公网安备 33010602011771号
