Envoy Load Balancing

We reuse the code downloaded earlier.

cd servicemesh_in_practise/Cluster-Manager/ring-hash

cat docker-compose.yaml

# Author: MageEdu <mage@magedu.com>
# Version: v1.0.1
# Site: www.magedu.com
#
version: '3.3'

services:
  envoy:
    image: envoyproxy/envoy-alpine:v1.21-latest
    environment:
      - ENVOY_UID=0
      - ENVOY_GID=0
    volumes:
      - ./front-envoy.yaml:/etc/envoy/envoy.yaml
    networks:
      envoymesh:
        ipv4_address: 172.31.25.2
        aliases:
          - front-proxy
    depends_on:
      - webserver01-sidecar
      - webserver02-sidecar
      - webserver03-sidecar

  webserver01-sidecar:
    image: envoyproxy/envoy-alpine:v1.21-latest
    environment:
      - ENVOY_UID=0
      - ENVOY_GID=0
    volumes:
      - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
    hostname: red
    networks:
      envoymesh:
        ipv4_address: 172.31.25.11
        aliases:
          - myservice
          - red

  # The application containers need a single environment block only;
  # ENVOY_UID/ENVOY_GID are settings for the Envoy image, so they are omitted here.
  webserver01:
    image: ikubernetes/demoapp:v1.0
    environment:
      - PORT=8080
      - HOST=127.0.0.1
    network_mode: "service:webserver01-sidecar"
    depends_on:
      - webserver01-sidecar

  webserver02-sidecar:
    image: envoyproxy/envoy-alpine:v1.21-latest
    environment:
      - ENVOY_UID=0
      - ENVOY_GID=0
    volumes:
      - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
    hostname: blue
    networks:
      envoymesh:
        ipv4_address: 172.31.25.12
        aliases:
          - myservice
          - blue

  webserver02:
    image: ikubernetes/demoapp:v1.0
    environment:
      - PORT=8080
      - HOST=127.0.0.1
    network_mode: "service:webserver02-sidecar"
    depends_on:
      - webserver02-sidecar

  webserver03-sidecar:
    image: envoyproxy/envoy-alpine:v1.21-latest
    environment:
      - ENVOY_UID=0
      - ENVOY_GID=0
    volumes:
      - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
    hostname: green
    networks:
      envoymesh:
        ipv4_address: 172.31.25.13
        aliases:
          - myservice
          - green

  webserver03:
    image: ikubernetes/demoapp:v1.0
    environment:
      - PORT=8080
      - HOST=127.0.0.1
    network_mode: "service:webserver03-sidecar"
    depends_on:
      - webserver03-sidecar

networks:
  envoymesh:
    driver: bridge
    ipam:
      config:
        - subnet: 172.31.25.0/24

cat envoy-sidecar-proxy.yaml

# Author: MageEdu <mage@magedu.com>
# Version: v1.0.1
# Site: www.magedu.com
#
admin:
  profile_path: /tmp/envoy.prof
  access_log_path: /tmp/admin_access.log
  address:
    socket_address:
      address: 0.0.0.0
      port_value: 9901

static_resources:
  listeners:
  - name: listener_0
    address:
      socket_address: { address: 0.0.0.0, port_value: 80 }
    filter_chains:
    - filters:
      - name: envoy.filters.network.http_connection_manager
        typed_config:
          "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
          stat_prefix: ingress_http
          codec_type: AUTO
          route_config:
            name: local_route
            virtual_hosts:
            - name: local_service
              domains: ["*"]
              routes:
              - match: { prefix: "/" }
                route: { cluster: local_cluster }
          http_filters:
          - name: envoy.filters.http.router

  clusters:
  - name: local_cluster
    connect_timeout: 0.25s
    type: STATIC
    lb_policy: ROUND_ROBIN
    load_assignment:
      cluster_name: local_cluster
      endpoints:
      - lb_endpoints:
        - endpoint:
            address:
              socket_address: { address: 127.0.0.1, port_value: 8080 }

cat front-envoy.yaml

admin:

profile_path: /tmp/envoy.prof

access_log_path: /tmp/admin_access.log

address:

socket_address: { address: 0.0.0.0, port_value: 9901 }

static_resources:

listeners:

- name: listener_0

address:

socket_address: { address: 0.0.0.0, port_value: 80 }

filter_chains:

- filters:

- name: envoy.filters.network.http_connection_manager

typed_config:

"@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager

stat_prefix: ingress_http

codec_type: AUTO

route_config:

name: local_route

virtual_hosts:

- name: webservice

domains: ["*"]

routes:

- match: { prefix: "/" }

route:

cluster: web_cluster_01

hash_policy:

# - connection_properties:

# source_ip: true

- header:

header_name: User-Agent

http_filters:

- name: envoy.filters.http.router

clusters:

- name: web_cluster_01

connect_timeout: 0.5s

type: STRICT_DNS

lb_policy: RING_HASH

ring_hash_lb_config:

maximum_ring_size: 1048576

minimum_ring_size: 512

load_assignment:

cluster_name: web_cluster_01

endpoints:

- lb_endpoints:

- endpoint:

address:

socket_address:

address: myservice

port_value: 80

health_checks:

- timeout: 5s

interval: 10s

unhealthy_threshold: 2

healthy_threshold: 2

http_health_check:

path: /livez

expected_statuses:

start: 200

end: 399启动

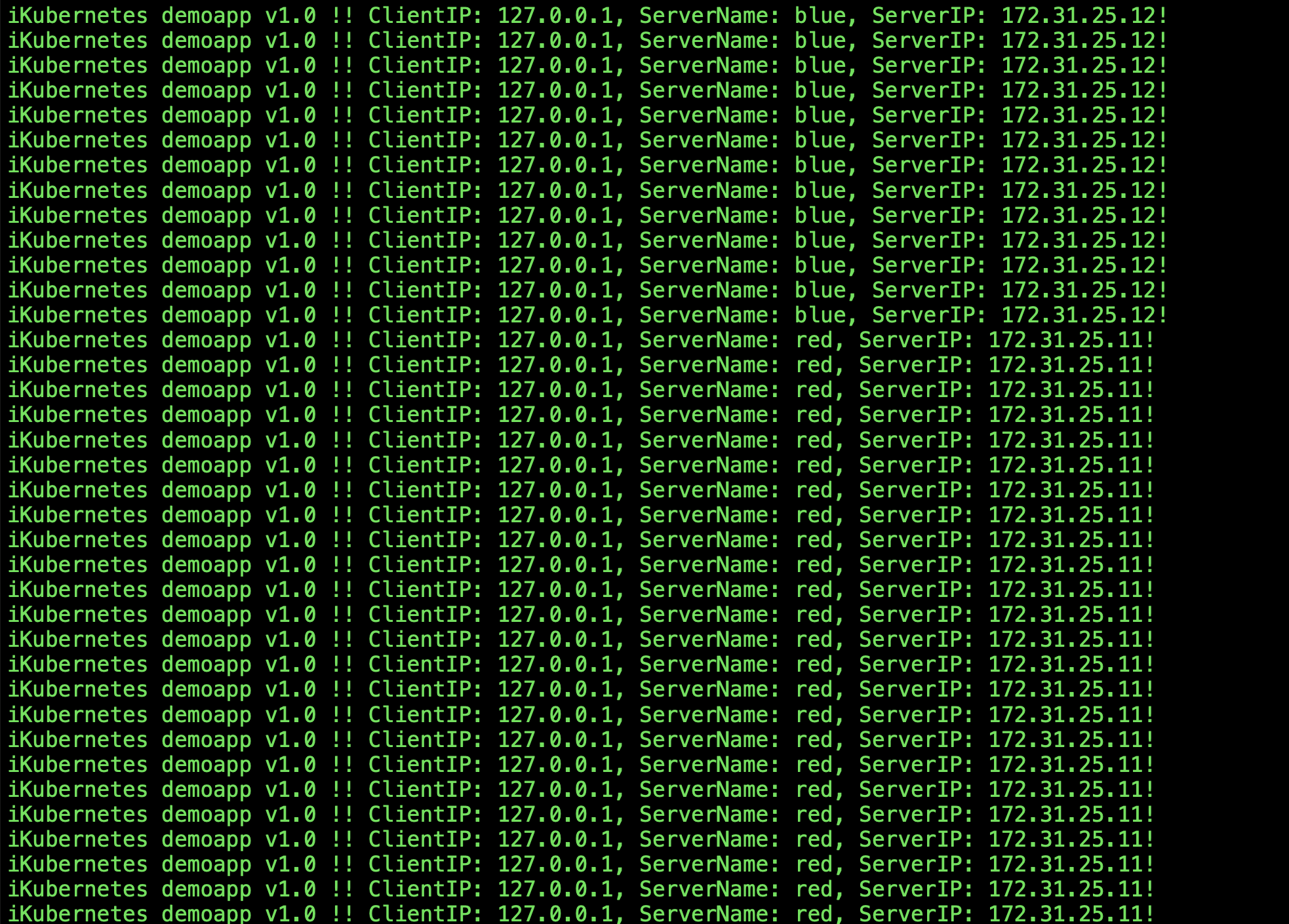

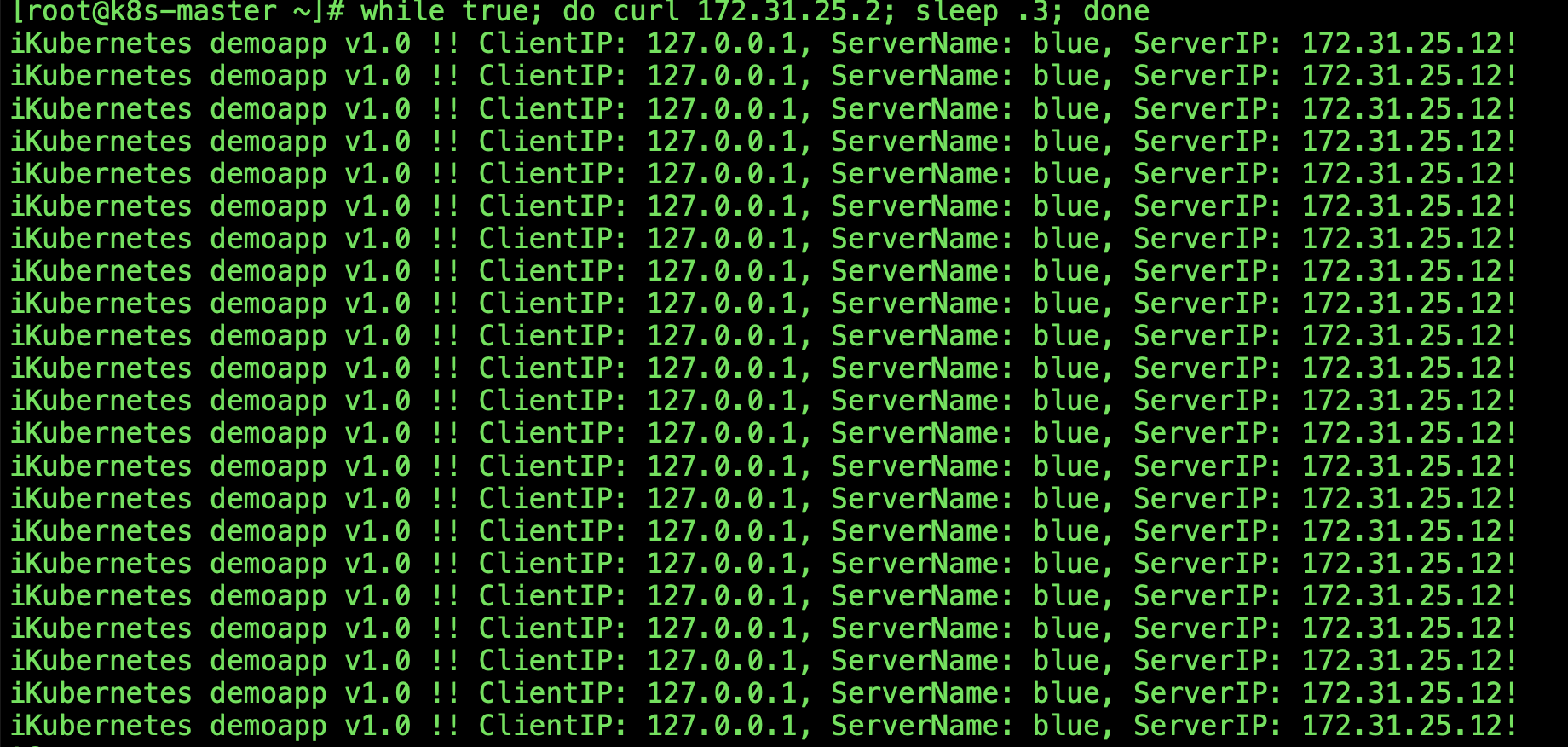

docker-compose up我们在路由hash策略中,hash计算的是用户的浏览器类型,因而,使用如下命令持续发起请求可以看出,用户请求将始终被定向到同一个后端端点;因为其浏览器类型一直未变。

while true; do curl 172.31.25.2; sleep .3; done

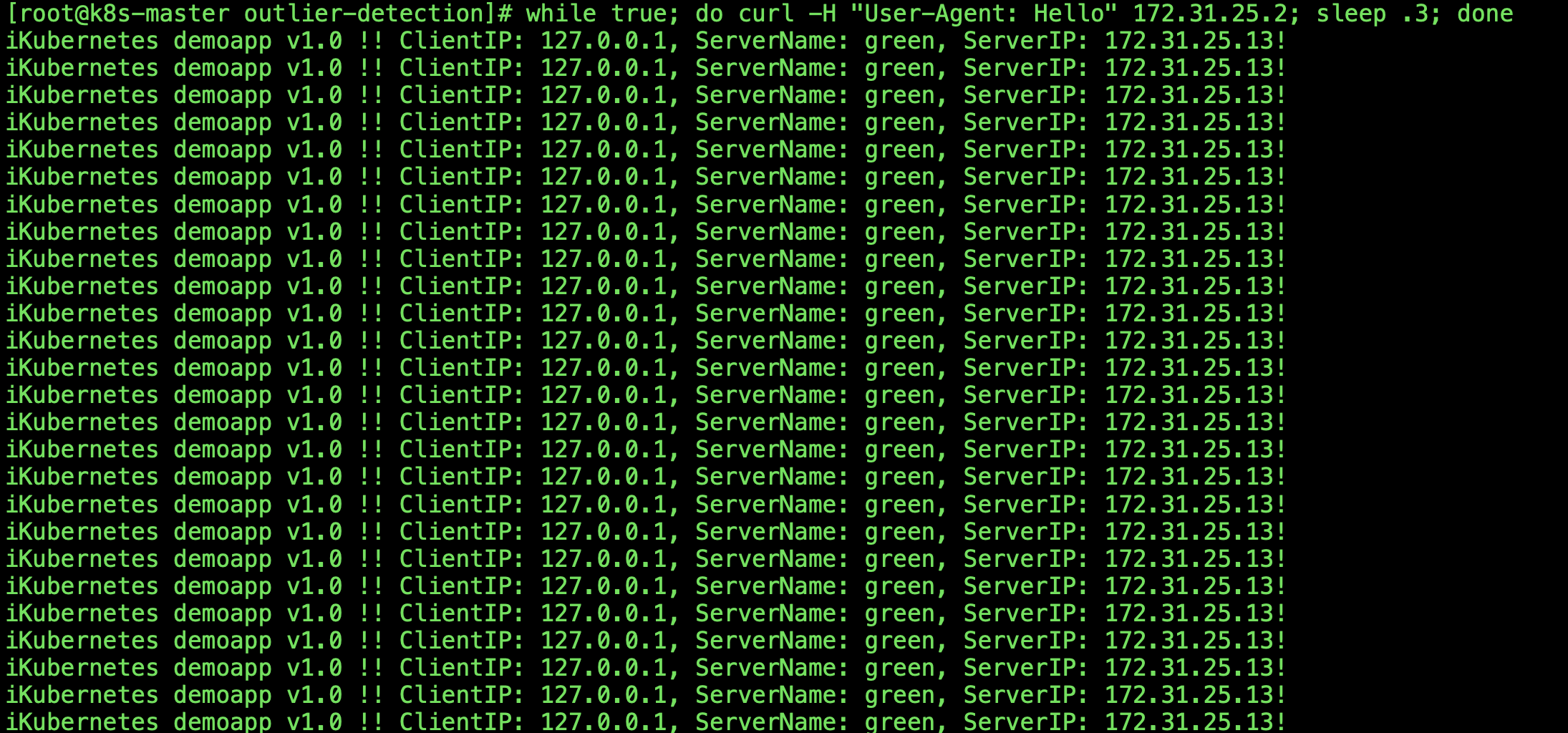

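We can simulate a different browser by sending requests with another User-Agent value. These requests may be scheduled to a different endpoint, or they may still land on the same endpoint as before; that depends on the result of the hash computation.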

while true; do curl -H "User-Agent: Hello" 172.31.25.2; sleep .3; done
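The behaviour described above can be illustrated with a minimal consistent-hash ring in Python. This is only a sketch under stated assumptions: the `md5`-based hash, the 128 virtual nodes per host, and the `curl/7.68.0` User-Agent value are illustrative choices, and Envoy's own implementation differs in its hash function and ring construction.

```python
import bisect
import hashlib


def _hash(value: str) -> int:
    # Stable 64-bit hash; md5 is a stand-in, not what Envoy uses.
    return int.from_bytes(hashlib.md5(value.encode("utf-8")).digest()[:8], "big")


def build_ring(hosts, replicas=128):
    """Place `replicas` virtual nodes per host on the ring, sorted by hash."""
    return sorted((_hash(f"{host}_{i}"), host) for host in hosts for i in range(replicas))


def pick_host(ring, key: str) -> str:
    """Return the host owning the first virtual node at or after hash(key)."""
    points = [point for point, _ in ring]
    index = bisect.bisect_left(points, _hash(key)) % len(ring)  # wrap around the ring
    return ring[index][1]


hosts = ["172.31.25.11", "172.31.25.12", "172.31.25.13"]
ring = build_ring(hosts)

# The same header value always maps to the same endpoint.
first = pick_host(ring, "curl/7.68.0")  # hypothetical default curl User-Agent
assert all(pick_host(ring, "curl/7.68.0") == first for _ in range(100))

# A different header value may or may not land on another endpoint.
print(first, pick_host(ring, "Hello"))
```

Whether the two printed addresses differ depends only on where the two hash values fall on the ring, which mirrors what the two `curl` loops show.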

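We can also use the following command to make one backend endpoint fail its health check, which changes the set of available endpoints dynamically. We then check the scheduling result again to verify whether requests previously sent to that endpoint are redistributed to other endpoints.

curl -X POST -d 'livez=FAIL' http://172.31.25.12/livez

After livez is set to FAIL, the ServerName in the responses changes from blue to red.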
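The redistribution seen here is the expected property of consistent hashing: when one endpoint leaves the ring, only the keys that were mapped to it move. The sketch below checks that property on a simplified ring. The `sha256`-based hash, the 128 virtual nodes, and the `agent-N` keys are assumptions made for illustration, not Envoy's actual algorithm or real traffic.

```python
import bisect
import hashlib


def h(value: str) -> int:
    # Stable 64-bit hash used for both virtual nodes and request keys (illustrative).
    return int.from_bytes(hashlib.sha256(value.encode("utf-8")).digest()[:8], "big")


def assign(hosts, keys, replicas=128):
    """Map every key to a host via a ring with `replicas` virtual nodes per host."""
    ring = sorted((h(f"{host}#{i}"), host) for host in hosts for i in range(replicas))
    points = [point for point, _ in ring]
    return {k: ring[bisect.bisect_left(points, h(k)) % len(ring)][1] for k in keys}


keys = [f"agent-{n}" for n in range(1000)]  # hypothetical User-Agent values
before = assign(["red", "blue", "green"], keys)
after = assign(["red", "green"], keys)  # blue has failed its health check

moved = [k for k in keys if before[k] != after[k]]

# Only keys that used to map to blue are remapped; red and green keep theirs.
assert all(before[k] == "blue" for k in moved)
assert all(after[k] != "blue" for k in keys)
print(f"{len(moved)} of {len(keys)} keys were remapped")
```

In the lab, the single `curl` User-Agent plays the role of one such key: it was served by blue, so after blue is marked unhealthy it moves to another endpoint, red in the run shown above.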