019 Integrating Ceph with OpenStack

I. Integrating Glance with Ceph

1.1 Check the OpenStack admin credentials on serverb

[root@serverb ~]# vi ./keystonerc_admin

unset OS_SERVICE_TOKEN
export OS_USERNAME=admin
export OS_PASSWORD=9f0b699989a04a05
export OS_AUTH_URL=http://172.25.250.11:5000/v2.0
export PS1='[\u@\h \W(keystone_admin)]\$ '
export OS_TENANT_NAME=admin
export OS_REGION_NAME=RegionOne
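The `openstack` commands that follow only work once this file has been sourced into the shell. As a minimal sketch, the check below reports which of the exported variables are still missing; the helper name `check_os_env` is hypothetical and the variable list is simply the one from the file above.

```shell
# check_os_env: report any variable exported by keystonerc_admin that is
# missing from the current shell (hypothetical helper, not part of OpenStack).
check_os_env() {
    missing=0
    for name in OS_USERNAME OS_PASSWORD OS_AUTH_URL OS_TENANT_NAME OS_REGION_NAME; do
        # Indirect variable lookup that works in plain POSIX sh.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing: $name" >&2
            missing=1
        fi
    done
    return "$missing"
}
```

A possible use is `. ./keystonerc_admin && check_os_env && openstack service list`.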

[root@serverb ~(keystone_admin)]# openstack service list

1.2 Install the Ceph packages

[root@serverb ~(keystone_admin)]# yum -y install ceph-common

[root@serverb ~(keystone_admin)]# chown ceph:ceph /etc/ceph/

1.3 Create the RBD pool for images

[root@serverc ~]# ceph osd pool create images 128 128

pool 'images' created

[root@serverc ~]# ceph osd pool application enable images rbd

enabled application 'rbd' on pool 'images'

[root@serverc ~]# ceph osd pool ls

images

1.4 Create the Ceph user for the pool

[root@serverc ~]# ceph auth get-or-create client.images mon 'profile rbd' osd 'profile rbd pool=images' -o /etc/ceph/ceph.client.images.keyring

[root@serverc ~]# ll /etc/ceph/ceph.client.images.keyring

-rw-r--r-- 1 root root 64 Mar 30 14:18 /etc/ceph/ceph.client.images.keyring

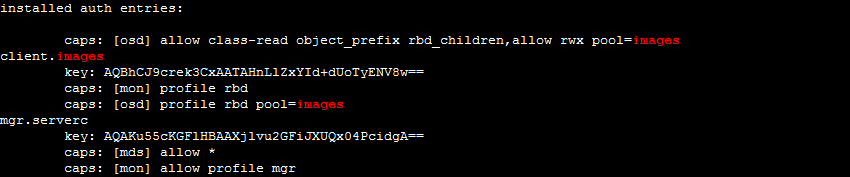

[root@serverc ~]# ceph auth list|grep -A 4 images

1.5 Copy the files to serverb and verify

[root@serverc ~]# scp /etc/ceph/ceph.conf ceph@serverb:/etc/ceph/ceph.conf

ceph.conf 100% 470 725.4KB/s 00:00

[root@serverc ~]# scp /etc/ceph/ceph.client.images.keyring ceph@serverb:/etc/ceph/ceph.client.images.keyring

ceph.client.images.keyring 100% 64 145.2KB/s 00:00

[root@serverb ~(keystone_admin)]# ceph --id images -s

  cluster:
    id:     2d58e9ec-9bc0-4d43-831c-24b345fc2a94
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum serverc,serverd,servere
    mgr: serverc(active), standbys: serverd, servere
    osd: 9 osds: 9 up, 9 in

  data:
    pools:   1 pools, 128 pgs
    objects: 14 objects, 25394 kB
    usage:   1044 MB used, 133 GB / 134 GB avail
    pgs:     128 active+clean
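For scripted checks it can be handy to reduce this status output to a yes/no answer. A minimal sketch, assuming the health line looks like the one above; the helper name `is_health_ok` is hypothetical.

```shell
# is_health_ok: read `ceph -s` style text on stdin and succeed only if the
# health line reports HEALTH_OK (hypothetical helper).
is_health_ok() {
    grep -Eq '^[[:space:]]*health:[[:space:]]*HEALTH_OK'
}

# Sample lines taken from the status output above.
printf '  cluster:\n    id: 2d58e9ec-9bc0-4d43-831c-24b345fc2a94\n    health: HEALTH_OK\n' \
    | is_health_ok && echo "cluster healthy"
```

On serverb this could be used as `ceph --id images -s | is_health_ok`, which also confirms that the copied keyring is accepted.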

1.6 Set the keyring permissions

[root@serverb ~(keystone_admin)]# chgrp glance /etc/ceph/ceph.client.images.keyring

[root@serverb ~(keystone_admin)]# chmod 0640 /etc/ceph/ceph.client.images.keyring
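The two commands above presumably exist so that the glance service account can read the keyring while other users cannot. A small sketch for confirming the result; it assumes GNU `stat` (the `-c` option), and the helper name `keyring_perms` is hypothetical.

```shell
# keyring_perms FILE: print the octal mode and owning group of FILE.
# Assumes GNU stat (`stat -c`), as shipped with RHEL/CentOS coreutils.
keyring_perms() {
    stat -c '%a %G' "$1"
}

# Demonstration on a throwaway file rather than the real keyring.
tmp=$(mktemp)
chmod 0640 "$tmp"
keyring_perms "$tmp"
rm -f "$tmp"
```

After step 1.6, `keyring_perms /etc/ceph/ceph.client.images.keyring` should report `640 glance`.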

1.7 Change the Glance storage backend

[root@serverb ~(keystone_admin)]# vim /etc/glance/glance-api.conf

[glance_store]
stores = rbd
default_store = rbd
filesystem_store_datadir = /var/lib/glance/images/
rbd_store_chunk_size = 8
rbd_store_pool = images
rbd_store_user = images
rbd_store_ceph_conf = /etc/ceph/ceph.conf
rados_connect_timeout = 0
os_region_name=RegionOne

[root@serverb ~(keystone_admin)]# grep -Ev "^$|^[#;]" /etc/glance/glance-api.conf

[DEFAULT]
bind_host = 0.0.0.0
bind_port = 9292
workers = 2
image_cache_dir = /var/lib/glance/image-cache
registry_host = 0.0.0.0
debug = False
log_file = /var/log/glance/api.log
log_dir = /var/log/glance
[cors]
[cors.subdomain]
[database]
connection = mysql+pymysql://glance:27c082e7c4a9413c@172.25.250.11/glance
[glance_store]
stores = rbd
default_store = rbd
default_store = file
filesystem_store_datadir = /var/lib/glance/images/
rbd_store_chunk_size = 8
rbd_store_pool = images
rbd_store_user = images
rbd_store_ceph_conf = /etc/ceph/ceph.conf
rados_connect_timeout = 0
os_region_name=RegionOne
[image_format]
[keystone_authtoken]
auth_uri = http://172.25.250.11:5000/v2.0
auth_type = password
project_name=services
username=glance
password=99b29d9142514f0f
auth_url=http://172.25.250.11:35357
[matchmaker_redis]
[oslo_concurrency]
[oslo_messaging_amqp]
[oslo_messaging_notifications]
[oslo_messaging_rabbit]
[oslo_messaging_zmq]
[oslo_middleware]
[oslo_policy]
policy_file = /etc/glance/policy.json
[paste_deploy]
flavor = keystone
[profiler]
[store_type_location_strategy]
[task]
[taskflow_executor]
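The filtered listing above shows `default_store` twice under `[glance_store]` (first `rbd`, then `file`), which is easy to overlook in a long file. As a hedged sketch, duplicates like this can be spotted with awk; the helper name `ini_duplicates` is hypothetical, and which of the two values the service actually honors should be checked against the Glance documentation rather than assumed.

```shell
# ini_duplicates FILE: print "[section] key" for every key that appears more
# than once inside the same section; comments and blank lines are skipped.
ini_duplicates() {
    awk '
        /^[ \t]*([#;]|$)/ { next }                 # comment or blank line
        /^\[.*\]/         { section = $0; next }   # remember current section
        {
            key = $0
            sub(/[ \t]*=.*/, "", key)              # keep the text before "="
            if (seen[section, key]++ == 1) print section, key
        }
    ' "$1"
}

# Demonstration on a small sample shaped like the listing above.
tmp=$(mktemp)
printf '[glance_store]\nstores = rbd\ndefault_store = rbd\ndefault_store = file\n' > "$tmp"
ini_duplicates "$tmp"
rm -f "$tmp"
```

Running `ini_duplicates /etc/glance/glance-api.conf` before restarting the service would flag the repeated key.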

[root@serverb ~(keystone_admin)]# systemctl restart openstack-glance-api

1.8 Create a test image

[root@serverb ~(keystone_admin)]# wget http://materials/small.img

[root@serverb ~(keystone_admin)]# openstack image create --container-format bare --disk-format raw --file ./small.img "Small Image"

+------------------+------------------------------------------------------+
| Field            | Value                                                |
+------------------+------------------------------------------------------+
| checksum         | ee1eca47dc88f4879d8a229cc70a07c6                     |
| container_format | bare                                                 |
| created_at       | 2019-03-30T06:35:40Z                                 |
| disk_format      | raw                                                  |
| file             | /v2/images/f60f2b0f-8c7d-42c2-b158-53d9a436efc9/file |
| id               | f60f2b0f-8c7d-42c2-b158-53d9a436efc9                 |
| min_disk         | 0                                                    |
| min_ram          | 0                                                    |
| name             | Small Image                                          |
| owner            | 79cf145d371e48ef96f608cbf85d1788                     |
| protected        | False                                                |
| schema           | /v2/schemas/image                                    |
| size             | 13287936                                             |
| status           | active                                               |
| tags             |                                                      |
| updated_at       | 2019-03-30T06:35:41Z                                 |
| virtual_size     | None                                                 |
| visibility       | private                                              |
+------------------+------------------------------------------------------+

[root@serverb ~(keystone_admin)]# rbd --id images -p images ls

14135e67-39bb-4b1c-aa11-fd5b26599ee7
42030515-33b2-4875-8b63-119a0dbc12d4
f60f2b0f-8c7d-42c2-b158-53d9a436efc9

[root@serverb ~(keystone_admin)]# glance image-list

+--------------------------------------+-------------+
| ID                                   | Name        |
+--------------------------------------+-------------+
| f60f2b0f-8c7d-42c2-b158-53d9a436efc9 | Small Image |
+--------------------------------------+-------------+
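`rbd ls` lists three images in the pool while `glance image-list` shows only one, so the other two are either owned by a project this user cannot see or left over from earlier runs. A minimal sketch for listing such IDs, assuming each listing has been saved to a file with one ID per line; the helper name `rbd_only` and the file names are hypothetical.

```shell
# rbd_only RBD_FILE GLANCE_FILE: print IDs that appear in the RBD listing
# but not in the Glance listing (one ID per line in each file).
rbd_only() {
    grep -vxF -f "$2" "$1"
}

# Demonstration with the IDs shown above.
dir=$(mktemp -d)
printf '%s\n' 14135e67-39bb-4b1c-aa11-fd5b26599ee7 \
              42030515-33b2-4875-8b63-119a0dbc12d4 \
              f60f2b0f-8c7d-42c2-b158-53d9a436efc9 > "$dir/rbd.txt"
printf '%s\n' f60f2b0f-8c7d-42c2-b158-53d9a436efc9 > "$dir/glance.txt"
rbd_only "$dir/rbd.txt" "$dir/glance.txt"
rm -rf "$dir"
```

The input files could be produced with `rbd --id images -p images ls > rbd.txt` and `openstack image list -f value -c ID > glance.txt`.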

[root@serverb ~(keystone_admin)]# rbd --id images info images/f60f2b0f-8c7d-42c2-b158-53d9a436efc9

rbd image 'f60f2b0f-8c7d-42c2-b158-53d9a436efc9':
	size 12976 kB in 2 objects
	order 23 (8192 kB objects)
	block_name_prefix: rbd_data.109981f1d12
	format: 2
	features: layering, exclusive-lock, object-map, fast-diff, deep-flatten
	flags:
	create_timestamp: Sat Mar 30 14:35:41 2019

1.9 Delete an image

[root@serverb ~(keystone_admin)]# openstack image delete "Small Image"

[root@serverb ~(keystone_admin)]# openstack image list

[root@serverb ~(keystone_admin)]# rbd --id images -p images ls

14135e67-39bb-4b1c-aa11-fd5b26599ee7
42030515-33b2-4875-8b63-119a0dbc12d4

[root@serverb ~(keystone_admin)]# ceph osd pool ls --id images

images

II. Integrating Ceph with Cinder

2.1 Create the RBD pool for volumes

[root@serverc ~]# ceph osd pool create volumes 128

pool 'volumes' created

[root@serverc ~]# ceph osd pool application enable volumes rbd

enabled application 'rbd' on pool 'volumes'

2.2 Create the Ceph user

[root@serverc ~]# ceph auth get-or-create client.volumes mon 'profile rbd' osd 'profile rbd pool=volumes,profile rbd pool=images' -o /etc/ceph/ceph.client.volumes.keyring

[root@serverc ~]# ceph auth list|grep -A 4 volumes

installed auth entries:
client.volumes
	key: AQBOEZ9ckRr3BxAAaWB8lpYRrUQ+z/Bgk3Rfbg==
	caps: [mon] profile rbd
	caps: [osd] profile rbd pool=volumes,profile rbd pool=images
mgr.serverc
	key: AQAKu55cKGFlHBAAXjlvu2GFiJXUQx04PcidgA==
	caps: [mds] allow *
	caps: [mon] allow profile mgr
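Unlike `client.images`, the `client.volumes` user is granted the `rbd` profile on two pools, presumably so that Cinder can also read Glance images when a volume is created from one. The OSD capability string is just one `profile rbd pool=NAME` entry per pool joined by commas, which the following sketch builds; the helper name `rbd_osd_caps` is hypothetical.

```shell
# rbd_osd_caps POOL...: build the comma-separated OSD capability string,
# one "profile rbd pool=NAME" entry per pool given on the command line.
rbd_osd_caps() {
    caps=""
    for pool in "$@"; do
        # Prepend "existing," only when something has been collected already.
        caps="${caps:+$caps,}profile rbd pool=$pool"
    done
    printf '%s\n' "$caps"
}

rbd_osd_caps volumes images
# prints: profile rbd pool=volumes,profile rbd pool=images
```

The result could then be passed on as `ceph auth get-or-create client.volumes mon 'profile rbd' osd "$(rbd_osd_caps volumes images)"`.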

2.3 Copy the files to serverb

[root@serverc ~]# scp /etc/ceph/ceph.client.volumes.keyring ceph@serverb:/etc/ceph/ceph.client.volumes.keyring

ceph@serverb's password:
ceph.client.volumes.keyring 100% 65 108.3KB/s 00:00

[root@serverc ~]# ceph auth get-key client.volumes|ssh ceph@serverb tee ./client.volumes.key

ceph@serverb's password:
AQBOEZ9ckRr3BxAAaWB8lpYRrUQ+z/Bgk3Rfbg==[root@serverc ~]#

2.3 Verify on serverb

[root@serverb ~(keystone_admin)]# ceph --id volumes -s

  cluster:
    id:     2d58e9ec-9bc0-4d43-831c-24b345fc2a94
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum serverc,serverd,servere
    mgr: serverc(active), standbys: serverd, servere
    osd: 9 osds: 9 up, 9 in

  data:
    pools:   2 pools, 256 pgs
    objects: 14 objects, 25394 kB
    usage:   1048 MB used, 133 GB / 134 GB avail
    pgs:     256 active+clean

2.4 Set the keyring permissions

[root@serverb ~(keystone_admin)]# chgrp cinder /etc/ceph/ceph.client.volumes.keyring

[root@serverb ~(keystone_admin)]# chmod 0640 /etc/ceph/ceph.client.volumes.keyring

2.5 Generate a UUID

[root@serverb ~(keystone_admin)]# uuidgen |tee ~/myuuid.txt

f3fbcf03-e208-4fba-9c47-9ff465847468

2.6 Change the Cinder storage backend

[root@serverb ~(keystone_admin)]# vi /etc/cinder/cinder.conf

enabled_backends = ceph
glance_api_version = 2
#default_volume_type = iscsi
[ceph]
volume_driver = cinder.volume.drivers.rbd.RBDDriver
rbd_pool = volumes
rbd_user = volumes
rbd_ceph_conf = /etc/ceph/ceph.conf
rbd_flatten_volume_from_snapshot = false
rbd_secret_uuid = f3fbcf03-e208-4fba-9c47-9ff465847468
rbd_max_clone_depth = 5
rbd_store_chunk_size = 4
rados_connect_timeout = -1
# Sets volume_backend_name; can be omitted
volume_backend_name = ceph

2.7 Restart the services and check the log

[root@serverb ~(keystone_admin)]# systemctl restart openstack-cinder-api

[root@serverb ~(keystone_admin)]# systemctl restart openstack-cinder-volume

[root@serverb ~(keystone_admin)]# systemctl restart openstack-cinder-scheduler

[student@serverb ~]$ sudo tail -20 /var/log/cinder/volume.log

2019-03-30 15:11:05.800 23646 INFO cinder.volume.manager [req-76edfdb3-dd84-4377-9a4e-de5f79391609 - - - - -] Driver initialization completed successfully.
2019-03-30 15:11:05.819 23646 INFO cinder.volume.manager [req-76edfdb3-dd84-4377-9a4e-de5f79391609 - - - - -] Initializing RPC dependent components of volume driver RBDDriver (1.2.0)
2019-03-30 15:11:05.871 23646 INFO cinder.volume.manager [req-76edfdb3-dd84-4377-9a4e-de5f79391609 - - - - -] Driver post RPC initialization completed successfully.
2019-03-30 15:12:30.398 23878 INFO cinder.volume.manager [req-ba2a8ef1-e3f0-4a36-a0eb-5a300367c60c - - - - -] Driver initialization completed successfully.
2019-03-30 15:12:30.420 23878 INFO cinder.volume.manager [req-ba2a8ef1-e3f0-4a36-a0eb-5a300367c60c - - - - -] Initializing RPC dependent components of volume driver RBDDriver (1.2.0)
2019-03-30 15:12:30.474 23878 INFO cinder.volume.manager [req-ba2a8ef1-e3f0-4a36-a0eb-5a300367c60c - - - - -] Driver post RPC initialization completed successfully.
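What matters in this log is that the RBD driver reports a successful initialization and that no ERROR lines show up next to it. A minimal sketch of that check, assuming the log format shown above (level name surrounded by spaces); the helper name `driver_ready` is hypothetical.

```shell
# driver_ready: read cinder volume log lines on stdin; succeed only if the
# driver initialization message is present and no ERROR-level line is seen.
driver_ready() {
    awk '
        / ERROR /                                      { errors++ }
        /Driver initialization completed successfully/ { ready = 1 }
        END { if (ready && !errors) exit 0; exit 1 }
    '
}

# Demonstration with one of the log lines above.
printf '%s\n' '2019-03-30 15:12:30.398 23878 INFO cinder.volume.manager [req-ba2a8ef1-e3f0-4a36-a0eb-5a300367c60c - - - - -] Driver initialization completed successfully.' \
    | driver_ready && echo "driver ready"
```

On serverb the input would come from `sudo tail -20 /var/log/cinder/volume.log | driver_ready`.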

2.8 Create the XML template for the libvirt secret

[root@serverb ~(keystone_admin)]# vim ~/ceph.xml

<secret ephemeral="no" private="no">
  <uuid>f3fbcf03-e208-4fba-9c47-9ff465847468</uuid>
  <usage type="ceph">
    <name>client.volumes secret</name>
  </usage>
</secret>
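The UUID in this template has to be the same one written to `rbd_secret_uuid` in cinder.conf, so copying it by hand is a likely place for a typo. As a sketch, the template can be generated from the saved UUID instead; the helper name `make_secret_xml` is hypothetical, and the pattern assumes the lowercase form that `uuidgen` printed above.

```shell
# make_secret_xml UUID: print a libvirt secret definition for the Ceph key.
# Refuses input that does not look like a lowercase UUID.
make_secret_xml() {
    if ! printf '%s\n' "$1" | grep -Eq '^[0-9a-f]{8}(-[0-9a-f]{4}){3}-[0-9a-f]{12}$'; then
        echo "not a UUID: $1" >&2
        return 1
    fi
    cat <<EOF
<secret ephemeral="no" private="no">
  <uuid>$1</uuid>
  <usage type="ceph">
    <name>client.volumes secret</name>
  </usage>
</secret>
EOF
}

make_secret_xml f3fbcf03-e208-4fba-9c47-9ff465847468
```

With the file from step 2.5 this becomes `make_secret_xml "$(cat ~/myuuid.txt)" > ~/ceph.xml`.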

[root@serverb ~(keystone_admin)]# virsh secret-define --file ~/ceph.xml

Secret f3fbcf03-e208-4fba-9c47-9ff465847468 created

[root@serverb ~(keystone_admin)]# virsh secret-set-value --secret f3fbcf03-e208-4fba-9c47-9ff465847468 --base64 $(cat /home/ceph/client.volumes.key)

Secret value set

2.9 Create a test volume

[root@serverb ~(keystone_admin)]# openstack volume create --description "Test Volume" --size 1 testvolume

+---------------------+--------------------------------------+
| Field               | Value                                |
+---------------------+--------------------------------------+
| attachments         | []                                   |
| availability_zone   | nova                                 |
| bootable            | false                                |
| consistencygroup_id | None                                 |
| created_at          | 2019-03-30T07:22:26.436978           |
| description         | Test Volume                          |
| encrypted           | False                                |
| id                  | b9cf60d5-3cff-4cde-ab1d-4747adff7943 |
| migration_status    | None                                 |
| multiattach         | False                                |
| name                | testvolume                           |
| properties          |                                      |
| replication_status  | disabled                             |
| size                | 1                                    |
| snapshot_id         | None                                 |
| source_volid        | None                                 |
| status              | creating                             |
| type                | None                                 |
| updated_at          | None                                 |
| user_id             | 8e0be34493e04722ba03ab30fbbf3bf8     |
+---------------------+--------------------------------------+

2.9 Verify

[root@serverb ~(keystone_admin)]# openstack volume list -c ID -c 'Display Name' -c Status -c Size

+--------------------------------------+--------------+-----------+------+
| ID                                   | Display Name | Status    | Size |
+--------------------------------------+--------------+-----------+------+
| b9cf60d5-3cff-4cde-ab1d-4747adff7943 | testvolume   | available | 1    |
+--------------------------------------+--------------+-----------+------+
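The volume was reported as `creating` right after the create command and as `available` in this listing, so anything scripted on top of these steps has to wait for that transition. A minimal polling sketch; the helper name `wait_for_status` is hypothetical and the one-second interval is an arbitrary choice.

```shell
# wait_for_status EXPECTED TRIES CMD...: run CMD until its output equals
# EXPECTED, sleeping one second between attempts; fail after TRIES attempts.
wait_for_status() {
    expected=$1
    tries=$2
    shift 2
    while [ "$tries" -gt 0 ]; do
        [ "$("$@")" = "$expected" ] && return 0
        tries=$((tries - 1))
        sleep 1
    done
    return 1
}

# Demonstration with a stand-in command instead of the openstack client.
wait_for_status available 3 echo available && echo "volume ready"
```

Against the real service the call could look like `wait_for_status available 30 openstack volume show -f value -c status testvolume`.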

[root@serverb ~(keystone_admin)]# rbd --id volumes -p volumes ls

volume-b9cf60d5-3cff-4cde-ab1d-4747adff7943

[root@serverb ~(keystone_admin)]# rbd --id volumes -p volumes info volumes/volume-b9cf60d5-3cff-4cde-ab1d-4747adff7943

rbd image 'volume-b9cf60d5-3cff-4cde-ab1d-4747adff7943':
	size 1024 MB in 256 objects
	order 22 (4096 kB objects)
	block_name_prefix: rbd_data.10e3589b2aec
	format: 2
	features: layering, exclusive-lock, object-map, fast-diff, deep-flatten
	flags:
	create_timestamp: Sat Mar 30 15:22:27 2019
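The object counts in the two `rbd info` outputs follow from the chunk sizes configured earlier: `rbd_store_chunk_size = 8` in Glance gives 8 MB objects (order 23, since 2^23 bytes = 8192 kB) and `rbd_store_chunk_size = 4` in Cinder gives 4 MB objects (order 22). A short sketch of the arithmetic; the helper name `rbd_object_count` is hypothetical.

```shell
# rbd_object_count SIZE_BYTES ORDER: number of objects of 2^ORDER bytes
# needed to hold SIZE_BYTES, rounded up.
rbd_object_count() {
    object_size=$((1 << $2))
    echo $(( ($1 + object_size - 1) / object_size ))
}

rbd_object_count 13287936 23      # Glance image of 13287936 bytes: prints 2
rbd_object_count 1073741824 22    # 1 GB Cinder volume: prints 256
```

Both results match the "in 2 objects" and "in 256 objects" figures reported by `rbd info`.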

2.10 Delete the volume and clean up

[root@serverb ~(keystone_admin)]# openstack volume delete testvolume

[root@serverb ~(keystone_admin)]# openstack volume list

[root@serverb ~(keystone_admin)]# rbd --id volumes -p volumes ls

[root@serverb ~(keystone_admin)]# rm ~/ceph.xml

[root@serverb ~(keystone_admin)]# rm ~/myuuid.txt

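Author's note: the content of this post comes mainly from Red Hat's official guide, and I verified each step in my own lab. Readers who want more detail can consult the Red Hat site: https://access.redhat.com/documentation/en-us/red_hat_ceph_storage/2/html/ceph_block_device_to_openstack_guide/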
