Flume (6): Multi-Source Aggregation

Multi-source aggregation example

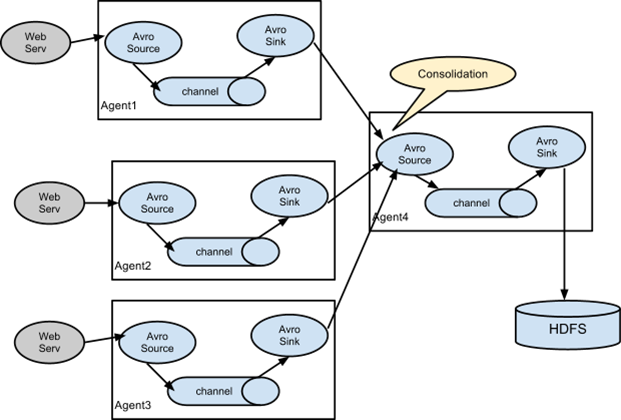

1. Case requirements:

Flume-1 on hadoop103 monitors the file /opt/module/group.log.

Flume-2 on hadoop102 monitors the data stream on a port.

Flume-1 and Flume-2 send their data to Flume-3 on hadoop104, and Flume-3 prints the final data to the console.

2. Requirements analysis:

3. Implementation steps:

0) Preparation

Distribute Flume

[ck@hadoop102 module]$ xsync flume
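`xsync` is not a standard Linux command; it appears to be a custom helper on this cluster that copies a directory to the same path on the other nodes. If it is not available, a rough stand-in can be built on `rsync`. This sketch assumes passwordless SSH from hadoop102 and `rsync` installed on every host; `sync_dir`, the host list and the `DRY_RUN` switch are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical stand-in for xsync: copy a directory to the same path on other nodes.
# Assumes passwordless SSH and rsync on every host; the host list is an assumption.
HOSTS=(hadoop103 hadoop104)

sync_dir() {
  local src parent name host
  src=$(cd "$1" && pwd) || return 1   # absolute path of the directory to copy
  parent=$(dirname "$src")
  name=$(basename "$src")
  for host in "${HOSTS[@]}"; do
    if [ "${DRY_RUN:-0}" = 1 ]; then
      # Dry run: only print the command that would be executed.
      echo "rsync -av $parent/$name $host:$parent"
    else
      # No trailing slash on the source, so the directory itself lands in $parent.
      rsync -av "$parent/$name" "$host:$parent" || return 1
    fi
  done
}
```

Run it from /opt/module as `sync_dir flume`, or as `DRY_RUN=1 sync_dir flume` to only print the rsync commands.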

On hadoop102, hadoop103 and hadoop104, create a group3 folder under /opt/module/flume/job.

    [ck@hadoop102 job]$ mkdir group3
    [ck@hadoop103 job]$ mkdir group3
    [ck@hadoop104 job]$ mkdir group3
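The three `mkdir` commands can also be issued from a single machine. A sketch, assuming passwordless SSH from the current host to all three nodes; `make_job_dirs` and the `DRY_RUN` switch are hypothetical names:

```shell
#!/usr/bin/env bash
# Hypothetical helper: create the same job directory on every node over SSH.
# Assumes passwordless SSH from the current host to all three nodes.
HOSTS=(hadoop102 hadoop103 hadoop104)
JOB_DIR=/opt/module/flume/job/group3

make_job_dirs() {
  local host
  for host in "${HOSTS[@]}"; do
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo "ssh $host mkdir -p $JOB_DIR"   # dry run: print only
    else
      # -p: no error if the directory already exists
      ssh "$host" mkdir -p "$JOB_DIR" || return 1
    fi
  done
}
```

Using `mkdir -p` makes the helper safe to re-run if some nodes already have the folder.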

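1) Create flume1-logger-flume.conf

Configure the Source to monitor the group.log file and the Sink to send the data to the next-tier Flume.

Create the configuration file on hadoop103 and open it:

    [ck@hadoop103 group3]$ touch flume1-logger-flume.conf
    [ck@hadoop103 group3]$ vim flume1-logger-flume.conf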

Add the following content:

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = exec
    a1.sources.r1.command = tail -F /opt/module/group.log
    a1.sources.r1.shell = /bin/bash -c

    # Describe the sink
    a1.sinks.k1.type = avro
    a1.sinks.k1.hostname = hadoop104
    a1.sinks.k1.port = 4141

    # Describe the channel
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1
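The exec source runs `command` through the configured `shell`, so Flume-1 effectively reads the output of `/bin/bash -c "tail -F /opt/module/group.log"`, one event per line. Running that command by hand for a moment is a quick way to check the path before starting the agent. A sketch; the function name `preview_exec_source` and the 2-second default are illustrative assumptions:

```shell
#!/usr/bin/env bash
# Run the same shell + command pair the exec source would use, for a few
# seconds, and print whatever it outputs (each line would become one event).
preview_exec_source() {
  local logfile=$1 seconds=${2:-2}
  # Mirrors: shell = /bin/bash -c, command = tail -F <file>
  # timeout stops tail after $seconds; "|| true" hides timeout's exit code 124.
  timeout "$seconds" /bin/bash -c "tail -F $(printf '%q' "$logfile")" || true
}
```

For this example that would be `preview_exec_source /opt/module/group.log` on hadoop103.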

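2) Create flume2-netcat-flume.conf

Configure the Source to monitor the data stream on port 44444 and the Sink to send the data to the next-tier Flume:

Create the configuration file on hadoop102 and open it:

    [ck@hadoop102 group3]$ touch flume2-netcat-flume.conf
    [ck@hadoop102 group3]$ vim flume2-netcat-flume.conf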

Add the following content:

    # Name the components on this agent
    a2.sources = r1
    a2.sinks = k1
    a2.channels = c1

    # Describe/configure the source
    a2.sources.r1.type = netcat
    a2.sources.r1.bind = hadoop102
    a2.sources.r1.port = 44444

    # Describe the sink
    a2.sinks.k1.type = avro
    a2.sinks.k1.hostname = hadoop104
    a2.sinks.k1.port = 4141

    # Use a channel which buffers events in memory
    a2.channels.c1.type = memory
    a2.channels.c1.capacity = 1000
    a2.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a2.sources.r1.channels = c1
    a2.sinks.k1.channel = c1

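3) Create flume3-flume-logger.conf

Configure the Source to receive the data streams sent by Flume-1 and Flume-2, and the Sink to print the merged data to the console.

Create the configuration file on hadoop104 and open it:

    [ck@hadoop104 group3]$ touch flume3-flume-logger.conf
    [ck@hadoop104 group3]$ vim flume3-flume-logger.conf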

Add the following content:

    # Name the components on this agent
    a3.sources = r1
    a3.sinks = k1
    a3.channels = c1

    # Describe/configure the source
    a3.sources.r1.type = avro
    a3.sources.r1.bind = hadoop104
    a3.sources.r1.port = 4141

    # Describe the sink
    a3.sinks.k1.type = logger

    # Describe the channel
    a3.channels.c1.type = memory
    a3.channels.c1.capacity = 1000
    a3.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a3.sources.r1.channels = c1
    a3.sinks.k1.channel = c1
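The three files only fit together if both Avro sinks (Flume-1 and Flume-2) point at the host and port where Flume-3's Avro source listens, here hadoop104:4141. A small consistency check, sketched under these assumptions: one `key = value` property per line, component names `r1` and `k1` as in this example, and hypothetical helper names `get_prop` and `check_link`:

```shell
#!/usr/bin/env bash
# Print the value of KEY from a Flume properties file ("key = value" per line).
get_prop() {
  local key=$1 file=$2
  awk -F'=' -v k="$key" '
    { name = $1; gsub(/^[ \t]+|[ \t]+$/, "", name) }   # trimmed key
    name == k {
      v = substr($0, index($0, "=") + 1)               # everything after the first "="
      gsub(/^[ \t]+|[ \t]+$/, "", v)
      print v
      exit
    }' "$file"
}

# Check that UP_AGENT's Avro sink k1 targets DOWN_AGENT's Avro source r1.
# Component names r1/k1 are hard-coded to match this example.
check_link() {
  local up_file=$1 up_agent=$2 down_file=$3 down_agent=$4
  local sink_target src_target
  sink_target="$(get_prop "$up_agent.sinks.k1.hostname" "$up_file"):$(get_prop "$up_agent.sinks.k1.port" "$up_file")"
  src_target="$(get_prop "$down_agent.sources.r1.bind" "$down_file"):$(get_prop "$down_agent.sources.r1.port" "$down_file")"
  if [ "$sink_target" = "$src_target" ]; then
    echo "OK $up_agent -> $down_agent ($sink_target)"
  else
    echo "MISMATCH $up_agent sends to $sink_target, $down_agent listens on $src_target"
    return 1
  fi
}
```

With copies of the three files in one directory, `check_link flume1-logger-flume.conf a1 flume3-flume-logger.conf a3` and `check_link flume2-netcat-flume.conf a2 flume3-flume-logger.conf a3` should both print `OK ... (hadoop104:4141)`.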

4) Start the agents

Start the agents in this order: flume3-flume-logger.conf, flume2-netcat-flume.conf, flume1-logger-flume.conf. Flume-3 goes first so that its Avro source is already listening on hadoop104:4141 when the Avro sinks of Flume-1 and Flume-2 connect to it.

    [ck@hadoop104 flume]$ bin/flume-ng agent --conf conf/ --name a3 --conf-file job/group3/flume3-flume-logger.conf -Dflume.root.logger=INFO,console
    [ck@hadoop102 flume]$ bin/flume-ng agent --conf conf/ --name a2 --conf-file job/group3/flume2-netcat-flume.conf
    [ck@hadoop103 flume]$ bin/flume-ng agent --conf conf/ --name a1 --conf-file job/group3/flume1-logger-flume.conf
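Since the Avro sinks of Flume-1 and Flume-2 connect to hadoop104:4141, a start script on hadoop102 or hadoop103 can wait until that port accepts connections before launching its agent, which avoids connection errors in the logs. A minimal sketch using bash's `/dev/tcp` redirection and coreutils `timeout`; the function name `wait_for_port` and the 30-try default are assumptions, not part of Flume:

```shell
#!/usr/bin/env bash
# Wait until host:port accepts TCP connections, trying once per second.
# Returns 0 as soon as a connection succeeds, 1 after TRIES failed attempts.
wait_for_port() {
  local host=$1 port=$2 tries=${3:-30}
  local i
  for ((i = 1; i <= tries; i++)); do
    # Opening /dev/tcp/host/port in bash succeeds only if something is listening.
    if timeout 1 bash -c ">/dev/tcp/$host/$port" 2>/dev/null; then
      return 0
    fi
    sleep 1
  done
  return 1
}
```

For example, on hadoop102: `wait_for_port hadoop104 4141 && bin/flume-ng agent --conf conf/ --name a2 --conf-file job/group3/flume2-netcat-flume.conf`.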

5) On hadoop103, append content to group.log in /opt/module

[ck@hadoop103 module]$ echo 'hello' >> group.log

6) On hadoop102, send data to port 44444 (once telnet connects, type a line and press Enter)

[ck@hadoop102 flume]$ telnet hadoop102 44444
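telnet is interactive; for a scripted test, the same line can be sent with bash's `/dev/tcp` redirection. A sketch, assuming bash on hadoop102; `send_line` is a hypothetical helper name. By default the netcat source answers each received line with `OK` (its `ack-every-event` option), and the function prints that reply:

```shell
#!/usr/bin/env bash
# Send one line to HOST:PORT and print the first reply line (e.g. "OK").
send_line() {
  local host=$1 port=$2 msg=$3
  # Subshell: the socket on fd 3 is closed automatically when it exits.
  (
    exec 3<>"/dev/tcp/$host/$port" || exit 1   # fails if nothing is listening
    printf '%s\n' "$msg" >&3                    # one line = one Flume event
    # Wait up to 5 seconds for the reply line.
    IFS= read -r -t 5 reply <&3 || exit 1
    printf '%s\n' "$reply"
  )
}
```

With Flume-2 running, `send_line hadoop102 44444 hello` should print the source's acknowledgement.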

7) Check the data printed by Flume-3 on the hadoop104 console
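The logger sink typically prints each event as `Event: { headers:{} body: ... }`, with the body shown as hex bytes followed by the readable text. To know which bytes to look for, a test string can be hex-dumped locally; `to_hex` is a hypothetical helper, and the uppercase conversion only mirrors how the dump usually looks:

```shell
#!/usr/bin/env bash
# Print the bytes of a string as space-separated uppercase hex pairs.
to_hex() {
  # od -An: no offset column; -tx1: one byte per hex pair.
  # xargs joins od's output lines and trims the surrounding spaces.
  printf '%s' "$1" | od -An -tx1 | tr 'a-f' 'A-F' | xargs
}
```

`to_hex hello` prints `68 65 6C 6C 6F`. Lines typed in telnet usually end in `\r\n`, so events coming from Flume-2 may show an extra `0D` byte.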


Zhejiang Public Network Security Record No. 33010602011771
