StatefulSet and StorageClass

https://www.cnblogs.com/00986014w/p/9406962.html

StorageClass

Set up NFS first. In this walkthrough the NFS server is 10.10.101.175.

This setup uses RBAC authorization.

1. Create serviceaccount.yaml

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: nfs-serviceaccount  # any name; it is referenced below

    [root@10 vitess]# kubectl get serviceaccount | grep nfs-serviceaccount
    nfs-serviceaccount   1   17s

2. Deploy nfs-client.yaml

    kind: Deployment
    apiVersion: apps/v1
    metadata:
      name: default-nfs-client
    spec:
      selector:
        matchLabels:
          app: default-nfs-client
      replicas: 1
      strategy:
        type: Recreate
      template:
        metadata:
          labels:
            app: default-nfs-client
        spec:
          serviceAccountName: nfs-serviceaccount  # matches the ServiceAccount created above
          containers:
            - name: nfs-client
              image: registry.cn-hangzhou.aliyuncs.com/open-ali/nfs-client-provisioner
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: default/nfs  # arbitrary, but must match the provisioner field of the StorageClass
                - name: NFS_SERVER
                  value: 10.10.101.175  # NFS server address
                - name: NFS_PATH
                  value: /nfs  # exported NFS directory
          volumes:
            - name: nfs-client-root
              nfs:
                server: 10.10.101.175  # NFS server address
                path: /nfs  # exported NFS directory

    [root@10 vitess]# kubectl get deploy | grep default-nfs-client
    default-nfs-client   1/1   1   1   19m

3. Create the cluster role, clusterrole.yaml

    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: nfs-clusterrole
    rules:
      - apiGroups: [""]
        resources: ["persistentvolumes"]
        verbs: ["get", "list", "watch", "create", "delete"]
      - apiGroups: [""]
        resources: ["persistentvolumeclaims"]
        verbs: ["get", "list", "watch", "update"]
      - apiGroups: ["storage.k8s.io"]
        resources: ["storageclasses"]
        verbs: ["get", "list", "watch"]
      - apiGroups: [""]
        resources: ["events"]
        verbs: ["list", "watch", "create", "update", "patch"]

    [root@10 vitess]# kubectl get ClusterRole | grep nfs-clusterrole
    nfs-clusterrole   16s

4. Create the cluster role binding, clusterrolebinding.yaml

    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: nfs-clusterrolebinding
    subjects:
      - kind: ServiceAccount
        name: nfs-serviceaccount  # the ServiceAccount from step 1
        namespace: default
    roleRef:
      kind: ClusterRole
      name: nfs-clusterrole  # the ClusterRole from step 3
      apiGroup: rbac.authorization.k8s.io

    [root@10 vitess]# kubectl get ClusterRoleBinding | grep nfs-clusterrolebinding
    nfs-clusterrolebinding   65s
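
Before moving on, it can help to confirm that the binding really grants the ServiceAccount the rights listed in step 3. The sketch below uses `kubectl auth can-i` with impersonation. The helper names `sa_subject` and `check_provisioner_rbac` and the `KUBECTL` override are illustrative assumptions, not part of the original setup.

```shell
# Allow the kubectl binary to be overridden (assumption: useful for dry runs).
KUBECTL="${KUBECTL:-kubectl}"

# Build the user name Kubernetes assigns to a ServiceAccount.
# Usage: sa_subject <namespace> <serviceaccount>
sa_subject() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# Ask the API server whether the ServiceAccount may perform a sample of
# the verbs granted in clusterrole.yaml. Prints one line per check.
# Usage: check_provisioner_rbac <namespace> <serviceaccount>
check_provisioner_rbac() {
  subject="$(sa_subject "$1" "$2")"
  for pair in "create persistentvolumes" \
              "delete persistentvolumes" \
              "update persistentvolumeclaims" \
              "list storageclasses.storage.k8s.io" \
              "create events"; do
    printf '%s: ' "$pair"
    # $pair is left unquoted on purpose so it splits into verb and resource.
    "$KUBECTL" auth can-i $pair --as="$subject"
  done
}
```

Running `check_provisioner_rbac default nfs-serviceaccount` against the cluster should answer `yes` on every line if steps 1, 3 and 4 were applied as written. The caller needs permission to impersonate ServiceAccounts for the `--as` flag to work.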

5. Create the StorageClass

    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: nfs-storageclass
    provisioner: default/nfs  # matches PROVISIONER_NAME under env in nfs-client.yaml

    [root@10 pvc]# kubectl get storageclass | grep default/nfs
    nfs-storageclass   default/nfs   Delete   Immediate   false   38s

With that, the RBAC-based StorageClass for dynamic provisioning is in place.
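
The five manifests can also be applied in one pass. The helper below is a minimal sketch that stops at the first failure. `apply_in_order` and the `KUBECTL` override are hypothetical names, and `storageclass.yaml` is an assumed file name, since the notes do not name the file for step 5.

```shell
# Allow the kubectl binary to be overridden (assumption: useful for dry runs).
KUBECTL="${KUBECTL:-kubectl}"

# Apply each manifest in the order given and stop at the first failure.
# Usage: apply_in_order <file>...
apply_in_order() {
  for manifest in "$@"; do
    "$KUBECTL" apply -f "$manifest" || {
      echo "failed to apply $manifest" >&2
      return 1
    }
  done
}

# Example call (storageclass.yaml is an assumed file name for step 5):
# apply_in_order serviceaccount.yaml clusterrole.yaml clusterrolebinding.yaml \
#   nfs-client.yaml storageclass.yaml
```

Applying the RBAC objects before the Deployment means the provisioner pod starts with its permissions already present, although the order used in the steps above also works.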

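Note:

Every node in the cluster must have the NFS client installed. Otherwise the mount fails when a pod scheduled on that node uses the PV.

    yum install -y nfs-utils  # install the NFS client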
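
A quick way to check a node is to look for the `mount.nfs` helper that nfs-utils installs. This is a minimal sketch; `has_helper` and `check_nfs_client` are illustrative names, and the check assumes the helper directory (usually /sbin or /usr/sbin) is on the PATH of the user running it.

```shell
# Return success if the named binary can be found on PATH.
# Usage: has_helper <command>
has_helper() {
  command -v "$1" >/dev/null 2>&1
}

# Report whether this node looks able to mount NFS volumes.
check_nfs_client() {
  if has_helper mount.nfs; then
    echo "nfs client present"
  else
    echo "nfs client missing: install nfs-utils" >&2
    return 1
  fi
}
```

Run `check_nfs_client` on each node, for example over SSH, before scheduling pods that use the NFS-backed PVs.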

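6. Next, create a test PVC to check that the StorageClass works.

When creating the PVC, make sure storageClassName matches the name of an existing StorageClass. Step 5 created nfs-storageclass, while this manifest and the captured output use vitess-storageclass, so use whichever name exists in the cluster.

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: vitess-pvc
    spec:
      accessModes:
        - ReadWriteOnce
      resources:  # a PV is matched only if it satisfies this request
        requests:
          storage: 10Mi
      storageClassName: vitess-storageclass  # must name an existing StorageClass (see step 5)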

The PVC is now bound:

    [root@10 pvc]# kubectl get pvc
    NAME         STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS          AGE
    vitess-pvc   Bound    pvc-fc9e6191-9126-4500-838b-b214b1dfed76   10Mi       RWO            vitess-storageclass   107m
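
Binding is not always instant, so a script may want to poll rather than check once. The sketch below reads `.status.phase` through `kubectl get -o jsonpath`. The function name `wait_for_pvc_bound`, its defaults, and the `KUBECTL` override are assumptions made for illustration.

```shell
# Allow the kubectl binary to be overridden (assumption: useful for dry runs).
KUBECTL="${KUBECTL:-kubectl}"

# Poll a PVC until its phase is Bound or the attempts run out.
# Usage: wait_for_pvc_bound <pvc> [namespace] [attempts] [sleep-seconds]
wait_for_pvc_bound() {
  pvc="$1"
  ns="${2:-default}"
  attempts="${3:-30}"
  pause="${4:-2}"
  phase=""
  i=0
  while [ "$i" -lt "$attempts" ]; do
    phase="$("$KUBECTL" get pvc "$pvc" -n "$ns" -o jsonpath='{.status.phase}' 2>/dev/null)"
    if [ "$phase" = "Bound" ]; then
      echo "$pvc is Bound"
      return 0
    fi
    i=$((i + 1))
    sleep "$pause"
  done
  echo "$pvc not Bound after $attempts attempts (last phase: ${phase:-unknown})" >&2
  return 1
}
```

For the claim above the call would be `wait_for_pvc_bound vitess-pvc default`. A claim that stays Pending usually points to a storageClassName that matches no StorageClass or to a provisioner pod that is not running.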

7. Create a pod that uses this PVC

    kind: Pod
    apiVersion: v1
    metadata:
      name: test-pod
    spec:
      containers:
        - name: test-pod
          image: busybox
          command:
            - "/bin/sh"
          args:
            - "-c"
            - "touch /mnt/SUCCESS && exit 0 || exit 1"
          volumeMounts:
            - name: nfs-pvc
              mountPath: "/mnt"
      restartPolicy: "Never"
      volumes:
        - name: nfs-pvc
          persistentVolumeClaim:
            claimName: vitess-pvc  # matches the PVC created above

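Check whether the Pod status changes to Completed. If it does, a SUCCESS file should appear under the shared path on the NFS server.

Log in to the NFS server and switch to the /nfs directory. It contains a directory named default-vitess-pvc-pvc-e33bc091-088a-433e-8516-834c5422e0b2 with a SUCCESS file inside, which shows that the test succeeded.

The directory name follows the provisioner's naming rule ${namespace}-${pvcName}-${pvName}.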

    [root@10 nfs]# ll
    total 12
    drwxrwxrwx  2 root root    6 Apr  9 16:23 archived-default-hadoop-ha-pvc-hadoop-0-pvc-dd0db528-1f41-4cd9-83d8-43016bbae5ed
    drwxr-xr-x  3 root root   16 Apr  9 16:57 backup
    drwxrwxrwx  2 root root   21 Apr  9 16:33 default-vitess-pvc-pvc-e33bc091-088a-433e-8516-834c5422e0b2
    drwxr-xr-x. 8 root root 8192 Apr  9 16:59 dev
    drwxrwxrwx  3 root root   73 Apr  9 17:10 hadoop
    [root@10 nfs]# ll default-vitess-pvc-pvc-e33bc091-088a-433e-8516-834c5422e0b2/
    total 0
    -rw-r--r-- 1 root root 0 Apr  9 16:57 SUCCESS
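
The ${namespace}-${pvcName}-${pvName} rule is easy to reproduce when looking for a claim's data on the NFS server. A minimal sketch follows; `nfs_dir_name` is a hypothetical helper name.

```shell
# Compose the directory name used on the NFS export for a claim.
# Usage: nfs_dir_name <namespace> <pvc-name> <pv-name>
nfs_dir_name() {
  printf '%s-%s-%s\n' "$1" "$2" "$3"
}

nfs_dir_name default vitess-pvc pvc-e33bc091-088a-433e-8516-834c5422e0b2
# prints default-vitess-pvc-pvc-e33bc091-088a-433e-8516-834c5422e0b2
```

The listing also shows a directory with an `archived-` prefix. That appears to be how this provisioner keeps the data of a claim that has since been deleted.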

With that, dynamic PV provisioning through the StorageClass is working.

8. StatefulSet example

    apiVersion: v1
    kind: Service
    metadata:
      name: hadoop
      labels:
        app: hadoop
    spec:
      ports:
        - name: ssh
          port: 22
        - name: zk-client
          port: 2181
        - name: zk-listen
          port: 2881
        - name: zk-leader
          port: 3881
        - name: nn-journalnode1
          port: 8280
        - name: nn-journalnode2
          port: 8485
        - name: webhdfs
          port: 9870
      clusterIP: None
      selector:
        app: hadoop
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: hadoop
    spec:
      serviceName: "hadoop"
      replicas: 3
      selector:
        matchLabels:
          app: hadoop
      template:
        metadata:
          labels:
            app: hadoop
        spec:
          containers:
            - name: hadoop
              image: 10.10.101.175/onlineshop/hadoop-ha:v2
              ports:
                - name: ssh
                  containerPort: 22
                - name: zk-client
                  containerPort: 2181  # matches the Service port of the same name
                - name: zk-listen
                  containerPort: 2881
                - name: zk-leader
                  containerPort: 3881
                - name: nn-journalnode1
                  containerPort: 8280
                - name: nn-journalnode2
                  containerPort: 8485
                - name: webhdfs
                  containerPort: 9870
              command:
                - "/bin/bash"
                - "/opt/start.sh"
                - "-d"
              volumeMounts:
                - name: hadoop-volume  # must match the name in volumeClaimTemplates
                  mountPath: /tmp
      volumeClaimTemplates:
        - metadata:
            name: hadoop-volume  # any name
          spec:
            accessModes: [ "ReadWriteOnce" ]
            resources:
              requests:
                storage: 1Mi
            storageClassName: vitess-storageclass  # must name an existing StorageClass (see step 5)
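
Each replica gets its own PVC generated from volumeClaimTemplates, named after the template, the StatefulSet and the pod ordinal. The sketch below lists the expected claim names so they can be compared with `kubectl get pvc`. `sts_pvc_names` is a hypothetical helper name.

```shell
# Print the PVC name a StatefulSet creates for each replica:
# <claim-template>-<statefulset>-<ordinal>.
# Usage: sts_pvc_names <claim-template> <statefulset> <replicas>
sts_pvc_names() {
  template="$1"
  sts="$2"
  replicas="$3"
  i=0
  while [ "$i" -lt "$replicas" ]; do
    printf '%s-%s-%s\n' "$template" "$sts" "$i"
    i=$((i + 1))
  done
}

sts_pvc_names hadoop-volume hadoop 3
# prints:
# hadoop-volume-hadoop-0
# hadoop-volume-hadoop-1
# hadoop-volume-hadoop-2
```

These claims are not removed when the StatefulSet is scaled down or deleted, so a pod that comes back with the same ordinal reattaches to the same volume.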

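A StatefulSet gives each Pod replica a DNS name of the form $(podname).$(headless service name).$(namespace).svc.cluster.local. Services can therefore talk to each other by Pod DNS name rather than by Pod IP: when the Node hosting a Pod fails, the Pod is rescheduled onto another Node and its IP changes, but its DNS name does not.

In this example the name is hadoop-0.hadoop.default.svc.cluster.local, which can be shortened to hadoop-0.hadoop from within the same namespace.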

    sh-4.2$ telnet hadoop-0.hadoop 22
    Trying 10.168.114.193...
    Connected to hadoop-0.hadoop.
    Escape character is '^]'.
    SSH-2.0-OpenSSH_7.4
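
The DNS name pattern can be expanded for every replica, for example to generate a peer list for a ZooKeeper or Hadoop configuration. This is a minimal sketch; `sts_pod_fqdns` is a hypothetical helper, and the default cluster domain `cluster.local` is assumed.

```shell
# Print the stable DNS name of every pod in a StatefulSet.
# Usage: sts_pod_fqdns <statefulset> <headless-service> <namespace> <replicas> [cluster-domain]
sts_pod_fqdns() {
  name="$1"
  svc="$2"
  ns="$3"
  replicas="$4"
  domain="${5:-cluster.local}"
  i=0
  while [ "$i" -lt "$replicas" ]; do
    printf '%s-%s.%s.%s.svc.%s\n' "$name" "$i" "$svc" "$ns" "$domain"
    i=$((i + 1))
  done
}

sts_pod_fqdns hadoop hadoop default 3
# prints:
# hadoop-0.hadoop.default.svc.cluster.local
# hadoop-1.hadoop.default.svc.cluster.local
# hadoop-2.hadoop.default.svc.cluster.local
```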

Other StorageClasses

hostpath

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      annotations:
        storageLimit: "50"
        storageUsed: "0"
        type: HostPath
      name: host-path-mysql
    provisioner: kubernetes.io/no-provisioner
    reclaimPolicy: Delete
    volumeBindingMode: Immediate

lvm

    allowVolumeExpansion: true
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      annotations:
        storageLimit: "100"
        type: CSI-LVM
      name: middleware-lvm
    parameters:
      fsType: ext4
      lvmType: striping
      vgName: vg_middleware
      volumeType: LVM
    provisioner: localplugin.csi.alibabacloud.com
    reclaimPolicy: Delete
    volumeBindingMode: WaitForFirstConsumer

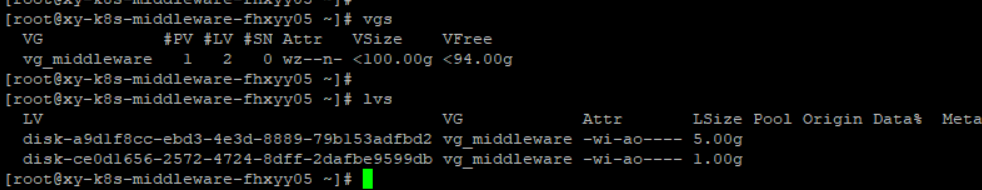

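This class requires a volume group named vg_middleware on the node.

Steps

    mkfs.ext4 /dev/vdb                 # not needed for LVM; pvcreate will ask to wipe the ext4 signature
    pvcreate /dev/vdb                  # initialize the disk as an LVM physical volume
    vgcreate vg_middleware /dev/vdb    # create the volume group on it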
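
Since volumes of this class can only be provisioned on nodes that have the volume group, a quick per-node check can save debugging time. The sketch below relies on `vgs` exiting non-zero when the named group does not exist. `vg_exists` and the `VGS` override are illustrative names.

```shell
# Allow the vgs binary to be overridden (assumption: useful for dry runs).
VGS="${VGS:-vgs}"

# Return success if the named LVM volume group exists on this node.
# Usage: vg_exists <vg-name>
vg_exists() {
  "$VGS" --noheadings -o vg_name "$1" >/dev/null 2>&1
}

# Example call:
# vg_exists vg_middleware && echo "vg_middleware is ready"
```

The check normally has to run as root, because `vgs` needs access to the block devices.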