2.1 PyTorch Quickstart

This article walks through the APIs for common machine learning tasks.

Working with data

PyTorch has two primitives for working with data: torch.utils.data.DataLoader and torch.utils.data.Dataset.

Dataset stores the samples and their corresponding labels, and DataLoader wraps an iterable around the Dataset.
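To make this division of labor concrete, here is a minimal sketch of a custom Dataset wrapped in a DataLoader. The class name `SquaresDataset` and its toy data are made up for illustration; only `Dataset`, `DataLoader`, `__len__`, and `__getitem__` come from PyTorch.

```python
import torch
from torch.utils.data import Dataset, DataLoader


class SquaresDataset(Dataset):
    """Hypothetical toy dataset: sample i is the number i, its label is i squared."""

    def __init__(self, n):
        self.n = n

    def __len__(self):
        # DataLoader uses this to know how many samples exist.
        return self.n

    def __getitem__(self, idx):
        # Return one (sample, label) pair for the given index.
        x = torch.tensor([float(idx)])
        y = torch.tensor(idx * idx)
        return x, y


dataset = SquaresDataset(10)
loader = DataLoader(dataset, batch_size=4)  # iterates over the dataset in batches

batches = list(loader)
first_x, first_y = batches[0]
print(first_x.shape, first_y)
```

The Dataset only knows how to return one sample at a time; stacking samples into batches is left entirely to the DataLoader.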

import torch
from torch import nn
from torch.utils.data import DataLoader
from torchvision import datasets
from torchvision.transforms import ToTensor

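PyTorch offers domain-specific libraries such as TorchText, TorchVision, and TorchAudio, all of which include datasets. In this tutorial we use a TorchVision dataset.

The torchvision.datasets module contains Dataset objects for many real-world vision datasets, such as CIFAR and COCO. In this tutorial we use the FashionMNIST dataset. Every TorchVision Dataset accepts two arguments, transform and target_transform, which modify the samples and the labels respectively.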
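The following sketch shows how the two hooks are typically applied when a sample is fetched. `TinyImageDataset`, `scale_pixels`, and `one_hot` are hypothetical names introduced here, and the fake all-white images stand in for real data; this is not TorchVision's own implementation.

```python
import torch
from torch.utils.data import Dataset


class TinyImageDataset(Dataset):
    """Hypothetical dataset that applies both hooks when a sample is fetched."""

    def __init__(self, images, labels, transform=None, target_transform=None):
        self.images = images
        self.labels = labels
        self.transform = transform
        self.target_transform = target_transform

    def __len__(self):
        return len(self.labels)

    def __getitem__(self, idx):
        image, label = self.images[idx], self.labels[idx]
        if self.transform is not None:
            image = self.transform(image)          # modifies the sample
        if self.target_transform is not None:
            label = self.target_transform(label)   # modifies the label
        return image, label


def scale_pixels(img):
    # Made-up stand-in for the rescaling part of ToTensor: uint8 [0, 255] -> float [0.0, 1.0].
    return img.to(torch.float32) / 255.0


def one_hot(label):
    # Turn an integer class index into a 10-element one-hot vector.
    return torch.nn.functional.one_hot(torch.tensor(label), num_classes=10)


images = torch.full((3, 1, 28, 28), 255, dtype=torch.uint8)  # three all-white fake images
labels = [0, 9, 4]
ds = TinyImageDataset(images, labels, transform=scale_pixels, target_transform=one_hot)

image, target = ds[1]
print(image.dtype, image.max().item(), target.tolist())
```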

# Download training data from open datasets.
training_data = datasets.FashionMNIST(
    root="data",
    train=True,
    download=True,
    transform=ToTensor(),
)

# Download test data from open datasets.
test_data = datasets.FashionMNIST(
    root="data",
    train=False,
    download=True,
    transform=ToTensor(),
)

The output looks like this:

D:\testproj\pytorchOne\venvtorch\Scripts\python.exe D:\testproj\pytorchOne\quickstart.py

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-images-idx3-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-images-idx3-ubyte.gz to data\FashionMNIST\raw\train-images-idx3-ubyte.gz

100.0%

Extracting data\FashionMNIST\raw\train-images-idx3-ubyte.gz to data\FashionMNIST\raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-labels-idx1-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/train-labels-idx1-ubyte.gz to data\FashionMNIST\raw\train-labels-idx1-ubyte.gz

100.0%

Extracting data\FashionMNIST\raw\train-labels-idx1-ubyte.gz to data\FashionMNIST\raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-images-idx3-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-images-idx3-ubyte.gz to data\FashionMNIST\raw\t10k-images-idx3-ubyte.gz

100.0%

Extracting data\FashionMNIST\raw\t10k-images-idx3-ubyte.gz to data\FashionMNIST\raw

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-labels-idx1-ubyte.gz

Downloading http://fashion-mnist.s3-website.eu-central-1.amazonaws.com/t10k-labels-idx1-ubyte.gz to data\FashionMNIST\raw\t10k-labels-idx1-ubyte.gz

100.0%

Extracting data\FashionMNIST\raw\t10k-labels-idx1-ubyte.gz to data\FashionMNIST\raw

Process finished with exit code 0

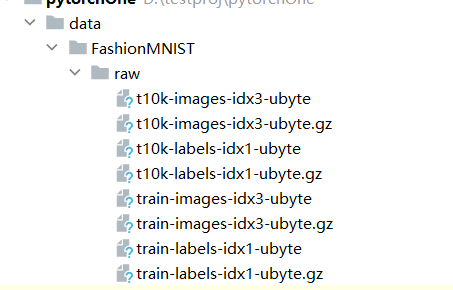

The download adds the dataset files to the data\FashionMNIST\raw directory under the project root.

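We pass the Dataset as an argument to DataLoader. This wraps an iterable over our dataset and supports automatic batching, sampling, shuffling, and multiprocess data loading. Here we define a batch size of 64, so each element of the dataloader iterable returns a batch of 64 features and labels.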

batch_size = 64

# Create data loaders.
train_dataloader = DataLoader(training_data, batch_size=batch_size)
test_dataloader = DataLoader(test_data, batch_size=batch_size)

# Inspect the shape of the first batch only.
for X, y in test_dataloader:
    print(f"Shape of X [N, C, H, W]: {X.shape}")
    print(f"Shape of y: {y.shape} {y.dtype}")
    break

Let's look at the output:

Shape of X [N, C, H, W]: torch.Size([64, 1, 28, 28])

Shape of y: torch.Size([64]) torch.int64
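Only the first batch is printed above, and not every batch is full. The sketch below uses a small synthetic dataset (150 fake samples, an assumption made to keep it fast) to show how the batch count and the size of the last batch follow from the dataset size. By the same arithmetic, the 10,000 FashionMNIST test images at a batch size of 64 give 157 batches, the last one holding 16 images.

```python
import math
import torch
from torch.utils.data import DataLoader, TensorDataset

# 150 fake grayscale images with integer labels (synthetic, for illustration only).
features = torch.zeros(150, 1, 28, 28)
labels = torch.arange(150) % 10
dataset = TensorDataset(features, labels)

loader = DataLoader(dataset, batch_size=64)
batch_sizes = [X.shape[0] for X, y in loader]
print(batch_sizes)                       # the last batch holds the leftover samples
print(len(loader) == math.ceil(150 / 64))

# drop_last=True discards the incomplete final batch.
full_only = DataLoader(dataset, batch_size=64, drop_last=True)
print(len(full_only))

# shuffle=True draws the samples in a new random order on every pass.
shuffled = DataLoader(dataset, batch_size=64, shuffle=True)
seen = torch.cat([y for X, y in shuffled])
print(seen.shape)
```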

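Creating the model

To define a neural network in PyTorch, we create a class that inherits from nn.Module. We define the layers of the network in the __init__ function and specify how data passes through the network in the forward function. To accelerate operations in the neural network, we move it to the GPU or MPS if available.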

# Get cpu, gpu or mps device for training.
device = (
    "cuda"
    if torch.cuda.is_available()
    else "mps"
    if torch.backends.mps.is_available()
    else "cpu"
)
print(f"Using {device} device")

# Define model
class NeuralNetwork(nn.Module):
    def __init__(self):
        super().__init__()
        # Flatten each 1x28x28 image into a vector of 784 values.
        self.flatten = nn.Flatten()
        self.linear_relu_stack = nn.Sequential(
            nn.Linear(28*28, 512),
            nn.ReLU(),
            nn.Linear(512, 512),
            nn.ReLU(),
            nn.Linear(512, 10)
        )

    def forward(self, x):
        x = self.flatten(x)
        logits = self.linear_relu_stack(x)
        return logits

model = NeuralNetwork().to(device)
print(model)

The output is as follows:

Using cpu device

NeuralNetwork(
  (flatten): Flatten(start_dim=1, end_dim=-1)
  (linear_relu_stack): Sequential(
    (0): Linear(in_features=784, out_features=512, bias=True)
    (1): ReLU()
    (2): Linear(in_features=512, out_features=512, bias=True)
    (3): ReLU()
    (4): Linear(in_features=512, out_features=10, bias=True)
  )
)
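As a sanity check on the printed structure, the sketch below rebuilds the same layer stack as a standalone nn.Sequential (a simplification for illustration, not the tutorial's class), counts its trainable parameters, and pushes a random batch through it to confirm the output shape.

```python
import torch
from torch import nn

# Same layer stack as the NeuralNetwork class above, rebuilt standalone.
net = nn.Sequential(
    nn.Flatten(),
    nn.Linear(28 * 28, 512),
    nn.ReLU(),
    nn.Linear(512, 512),
    nn.ReLU(),
    nn.Linear(512, 10),
)

# Each Linear layer holds in_features * out_features weights plus out_features biases.
expected = (784 * 512 + 512) + (512 * 512 + 512) + (512 * 10 + 10)
total = sum(p.numel() for p in net.parameters())
print(total, expected)

# A random batch shaped like the DataLoader output: [N, C, H, W].
X = torch.rand(64, 1, 28, 28)
logits = net(X)
print(logits.shape)  # one row of 10 class scores per image
```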

Optimizing the model parameters

To train the model, we need a loss function and an optimizer.

loss_fn = nn.CrossEntropyLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=1e-3)
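nn.CrossEntropyLoss expects raw logits and integer class indices, which is why the model has no softmax layer. The sketch below, using made-up logits and targets, checks that the loss matches a manual log-softmax followed by a negative log-likelihood average.

```python
import torch
from torch import nn
import torch.nn.functional as F

logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0]])   # raw scores for 2 samples, 3 classes
targets = torch.tensor([0, 2])              # integer class indices

loss = nn.CrossEntropyLoss()(logits, targets)

# Equivalent by hand: log-softmax, pick the log-probability of the true class,
# negate, and average over the batch.
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(2), targets].mean()

print(loss.item(), manual.item())
```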

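In a single training loop, the model makes predictions on the training dataset (fed to it in batches) and backpropagates the prediction error to adjust the model's parameters.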

def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)
    model.train()
    for batch, (X, y) in enumerate(dataloader):
        X, y = X.to(device), y.to(device)

        # Compute prediction error
        pred = model(X)
        loss = loss_fn(pred, y)

        # Backpropagation
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        # Report progress every 100 batches.
        if batch % 100 == 0:
            loss, current = loss.item(), (batch + 1) * len(X)
            print(f"loss: {loss:>7f} [{current:>5d}/{size:>5d}]")
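The three backpropagation calls in the loop can be checked on a tiny example. This sketch uses a made-up nn.Linear layer, random data, and a mean-squared-error loss (chosen only to keep it small) and confirms that, for plain SGD without momentum or weight decay, one step moves each weight by the learning rate times its gradient.

```python
import torch
from torch import nn

torch.manual_seed(0)
layer = nn.Linear(3, 1)
optimizer = torch.optim.SGD(layer.parameters(), lr=1e-3)

X = torch.rand(8, 3)
y = torch.rand(8, 1)

before = layer.weight.detach().clone()

optimizer.zero_grad()                  # clear gradients left over from earlier steps
loss = nn.functional.mse_loss(layer(X), y)
loss.backward()                        # fill .grad for every parameter
grad = layer.weight.grad.clone()
optimizer.step()                       # plain SGD: w <- w - lr * grad

expected = before - 1e-3 * grad
print(torch.allclose(layer.weight.detach(), expected))
```

Calling zero_grad() matters because backward() adds to the existing .grad values rather than overwriting them.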

We also check the model's performance against the test dataset to make sure it is learning.

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)
    num_batches = len(dataloader)
    model.eval()
    test_loss, correct = 0, 0
    # No gradients are needed for evaluation.
    with torch.no_grad():
        for X, y in dataloader:
            X, y = X.to(device), y.to(device)
            pred = model(X)
            test_loss += loss_fn(pred, y).item()
            correct += (pred.argmax(1) == y).type(torch.float).sum().item()
    # Average the loss over batches and the correct count over samples.
    test_loss /= num_batches
    correct /= size
    print(f"Test Error: \n Accuracy: {(100*correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")
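The accuracy line in the test function is dense, so here it is unpacked on a made-up batch of four predictions. The logits and labels are invented for illustration.

```python
import torch

pred = torch.tensor([[0.1, 2.0, 0.3],
                     [1.5, 0.2, 0.1],
                     [0.0, 0.1, 0.9],
                     [0.7, 0.6, 0.2]])   # made-up logits: 4 samples, 3 classes
y = torch.tensor([1, 0, 2, 1])           # made-up true labels

predicted_classes = pred.argmax(1)        # index of the largest score in each row
correct = (predicted_classes == y).type(torch.float).sum().item()
accuracy = correct / len(y)
print(predicted_classes.tolist(), correct, accuracy)

# Inside torch.no_grad(), results do not track gradients, which saves memory.
w = torch.ones(3, requires_grad=True)
with torch.no_grad():
    out = (pred * w).sum()
print(out.requires_grad)
```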

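Training runs over several iterations (epochs). During each epoch, the model learns parameters to make better predictions. We print the model's accuracy and loss at each epoch; we would like to see the accuracy increase and the loss decrease with every epoch.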

epochs = 5
for t in range(epochs):
    print(f"Epoch {t+1}\n-------------------------------")
    train(train_dataloader, model, loss_fn, optimizer)
    test(test_dataloader, model, loss_fn)
print("Done!")

The output of the training process:

Epoch 1

-------------------------------

loss: 2.298773 [ 64/60000]

loss: 2.288743 [ 6464/60000]

loss: 2.266891 [12864/60000]

loss: 2.269755 [19264/60000]

loss: 2.260278 [25664/60000]

loss: 2.214578 [32064/60000]

loss: 2.232736 [38464/60000]

loss: 2.191824 [44864/60000]

loss: 2.183783 [51264/60000]

loss: 2.164333 [57664/60000]

Test Error:

Accuracy: 38.6%, Avg loss: 2.161599

Epoch 2

-------------------------------

loss: 2.159602 [ 64/60000]

loss: 2.153630 [ 6464/60000]

loss: 2.097003 [12864/60000]

loss: 2.125920 [19264/60000]

loss: 2.080615 [25664/60000]

loss: 1.998236 [32064/60000]

loss: 2.039693 [38464/60000]

loss: 1.950894 [44864/60000]

loss: 1.957268 [51264/60000]

loss: 1.893443 [57664/60000]

Test Error:

Accuracy: 54.2%, Avg loss: 1.897607

Epoch 3

-------------------------------

loss: 1.916339 [ 64/60000]

loss: 1.889156 [ 6464/60000]

loss: 1.775602 [12864/60000]

loss: 1.833217 [19264/60000]

loss: 1.727981 [25664/60000]

loss: 1.651068 [32064/60000]

loss: 1.687254 [38464/60000]

loss: 1.577951 [44864/60000]

loss: 1.611350 [51264/60000]

loss: 1.507689 [57664/60000]

Test Error:

Accuracy: 63.2%, Avg loss: 1.528882

Epoch 4

-------------------------------

loss: 1.583922 [ 64/60000]

loss: 1.547395 [ 6464/60000]

loss: 1.399195 [12864/60000]

loss: 1.486909 [19264/60000]

loss: 1.374229 [25664/60000]

loss: 1.344481 [32064/60000]

loss: 1.368184 [38464/60000]

loss: 1.283136 [44864/60000]

loss: 1.326591 [51264/60000]

loss: 1.228549 [57664/60000]

Test Error:

Accuracy: 64.5%, Avg loss: 1.252939

Epoch 5

-------------------------------

loss: 1.319819 [ 64/60000]

loss: 1.299578 [ 6464/60000]

loss: 1.135219 [12864/60000]

loss: 1.254325 [19264/60000]

loss: 1.136180 [25664/60000]

loss: 1.141785 [32064/60000]

loss: 1.167674 [38464/60000]

loss: 1.097063 [44864/60000]

loss: 1.143751 [51264/60000]

loss: 1.062997 [57664/60000]

Test Error:

Accuracy: 65.1%, Avg loss: 1.080314

Done!
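The sample counters in the log (64, 6464, ..., 57664) follow directly from the dataset size, the batch size, and the every-100-batches logging rule in the training loop. A short arithmetic sketch in plain Python reproduces them:

```python
import math

size = 60000        # training samples, as reported in the log
batch_size = 64

num_batches = math.ceil(size / batch_size)

# The training loop logs when batch % 100 == 0 and reports (batch + 1) * len(X).
logged = [(batch + 1) * batch_size for batch in range(num_batches) if batch % 100 == 0]

print(num_batches)
print(logged)

# The final batch is smaller because 60000 is not a multiple of 64.
last_batch = size - (num_batches - 1) * batch_size
print(last_batch)
```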

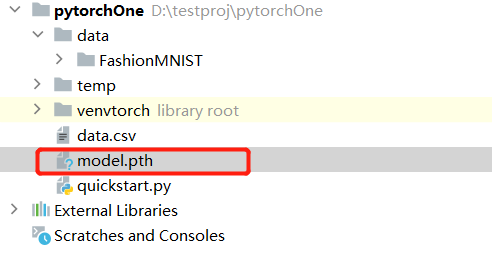

Saving the model

A common way to save a model is to serialize its internal state dictionary (which contains the model parameters).

torch.save(model.state_dict(), "model.pth")

print("Saved PyTorch Model State to model.pth")

The output of saving the model:

Saved PyTorch Model State to model.pth

Loading the model

Loading a model involves recreating the model structure and loading the state dictionary into it.

model = NeuralNetwork()

model.load_state_dict(torch.load("model.pth"))
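To see what the state dictionary holds and that a save/load round trip preserves the parameters, here is a small sketch. It uses a made-up nn.Linear layer and an in-memory buffer instead of model.pth, both chosen only so the example leaves no file behind.

```python
import io
import torch
from torch import nn

torch.manual_seed(0)
source = nn.Linear(4, 2)

# The state dict maps parameter names to tensors.
print({name: tuple(t.shape) for name, t in source.state_dict().items()})

# Save to an in-memory buffer instead of a file on disk (an illustration-only choice).
buffer = io.BytesIO()
torch.save(source.state_dict(), buffer)
buffer.seek(0)

# Recreate the same structure, then load the saved values into it.
restored = nn.Linear(4, 2)
restored.load_state_dict(torch.load(buffer, map_location="cpu"))

x = torch.rand(3, 4)
print(torch.equal(source(x), restored(x)))
```

The map_location argument is useful when a checkpoint saved on a GPU is loaded on a machine that only has a CPU.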

The reloaded model can now be used to make predictions.

classes = [
    "T-shirt/top",
    "Trouser",
    "Pullover",
    "Dress",
    "Coat",
    "Sandal",
    "Shirt",
    "Sneaker",
    "Bag",
    "Ankle boot",
]

model.eval()
# Take the first test image and its label.
x, y = test_data[0][0], test_data[0][1]
with torch.no_grad():
    pred = model(x)
    predicted, actual = classes[pred[0].argmax(0)], classes[y]
    print(f'Predicted: "{predicted}", Actual: "{actual}"')

The prediction output is:

Predicted: "Ankle boot", Actual: "Ankle boot"
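The model returns raw logits, so argmax gives the predicted class but not a confidence. A sketch of turning logits into probabilities with softmax follows; the logits are made up and do not come from the trained model.

```python
import torch

classes = ["T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
           "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot"]

# Made-up logits for a single image; index 9 has the largest score.
logits = torch.tensor([[-1.0, -2.0, 0.0, -0.5, 0.3, 1.2, -0.1, 2.0, 0.4, 3.5]])

probs = torch.softmax(logits, dim=1)   # convert scores to probabilities summing to 1
top_prob, top_idx = probs[0].max(0)

print(classes[top_idx], round(top_prob.item(), 3))
```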
