Integrating Avro and Kafka with Spring Boot


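[Requirement]: The producer serializes data with Avro before sending it to Kafka; the consumer deserializes it with Avro and stores the data in a database through MyBatis-Plus.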

I. Environment

[1] Apache Avro 1.8; [2] Spring Kafka 1.2; [3] Spring Boot 1.5; [4] Maven 3.5. The project's pom.xml:

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
      xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
      <modelVersion>4.0.0</modelVersion>

      <groupId>com.codenotfound</groupId>
      <artifactId>spring-kafka-avro</artifactId>
      <version>0.0.1-SNAPSHOT</version>

      <name>spring-kafka-avro</name>
      <description>Spring Kafka - Apache Avro Serializer Deserializer Example</description>
      <url>https://www.codenotfound.com/spring-kafka-apache-avro-serializer-deserializer-example.html</url>

      <parent>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-parent</artifactId>
        <version>1.5.4.RELEASE</version>
      </parent>

      <properties>
        <java.version>1.8</java.version>

        <spring-kafka.version>1.2.2.RELEASE</spring-kafka.version>
        <avro.version>1.8.2</avro.version>
      </properties>

      <dependencies>
        <!-- spring-boot -->
        <dependency>
          <groupId>org.springframework.boot</groupId>
          <artifactId>spring-boot-starter</artifactId>
        </dependency>
        <dependency>
          <groupId>org.springframework.boot</groupId>
          <artifactId>spring-boot-starter-test</artifactId>
          <scope>test</scope>
        </dependency>
        <!-- spring-kafka -->
        <dependency>
          <groupId>org.springframework.kafka</groupId>
          <artifactId>spring-kafka</artifactId>
          <version>${spring-kafka.version}</version>
        </dependency>
        <dependency>
          <groupId>org.springframework.kafka</groupId>
          <artifactId>spring-kafka-test</artifactId>
          <version>${spring-kafka.version}</version>
          <scope>test</scope>
        </dependency>
        <!-- avro -->
        <dependency>
          <groupId>org.apache.avro</groupId>
          <artifactId>avro</artifactId>
          <version>${avro.version}</version>
        </dependency>
      </dependencies>

      <build>
        <plugins>
          <!-- spring-boot-maven-plugin -->
          <plugin>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-maven-plugin</artifactId>
          </plugin>
          <!-- avro-maven-plugin -->
          <plugin>
            <groupId>org.apache.avro</groupId>
            <artifactId>avro-maven-plugin</artifactId>
            <version>${avro.version}</version>
            <executions>
              <execution>
                <phase>generate-sources</phase>
                <goals>
                  <goal>schema</goal>
                </goals>
                <configuration>
                  <sourceDirectory>${project.basedir}/src/main/resources/avro/</sourceDirectory>
                  <outputDirectory>${project.build.directory}/generated/avro</outputDirectory>
                </configuration>
              </execution>
            </executions>
          </plugin>
        </plugins>
      </build>
    </project>

II. The Avro Schema File

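[1] Avro relies on schemas that are defined in JSON and built from primitive types. The Apache Avro getting-started guide uses a "User" schema stored in user.avsc under src/main/resources/avro; in this example I use electronicsPackage.avsc instead. namespace specifies the package of the generated Java class, and name specifies the name of the generated class.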

    {"namespace": "com.yd.cyber.protocol.avro",
     "type": "record",
     "name": "ElectronicsPackage",
     "fields": [
         {"name": "package_number", "type": ["null", "string"], "default": null},
         {"name": "frs_site_code", "type": ["null", "string"], "default": null},
         {"name": "frs_site_code_type", "type": ["null", "string"], "default": null},
         {"name": "end_allocate_code", "type": ["null", "string"], "default": null},
         {"name": "code_1", "type": ["null", "string"], "default": null},
         {"name": "aggregat_package_code", "type": ["null", "string"], "default": null}
     ]
    }

Every field is an optional string. The null branch is listed first because the Avro specification requires the default value of a union field to match the first branch of the union.

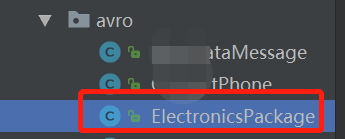

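[2] Avro ships with code generation that creates Java classes automatically from the schema defined above. Once the classes are generated, the program no longer needs to work with the schema directly. The classes can be generated with avro-tools.jar or, in a Maven project, by running compile from the Maven Projects panel, which produces ElectronicsPackage.java. This article uses the Maven approach.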

[3] This generates an ElectronicsPackage.java class that contains the schema and a number of Builder methods for constructing ElectronicsPackage objects.

III. Producing Avro Messages to a Kafka Topic

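Kafka stores and transports byte arrays in its topics. Because we work with Avro objects, we need to convert between those objects and byte arrays. Before version 0.9.0.0 the Kafka Java API handled this conversion with implementations of the Encoder/Decoder interfaces; in the new API these have been replaced by implementations of the Serializer/Deserializer interfaces. Kafka ships with a number of built-in (de)serializers, but none for Avro. To fill that gap we create an AvroSerializer class that implements the Serializer interface specifically for Avro objects. Its serialize() method takes the topic name and the data object as input; in this example the object is an Avro object that extends SpecificRecordBase. The method serializes the Avro object into a byte array and returns the result. The class is generic: configure it once and reuse it for any generated record type.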

    package com.yd.cyber.web.avro;

    import java.io.ByteArrayOutputStream;
    import java.io.IOException;
    import java.util.Map;

    import org.apache.avro.io.BinaryEncoder;
    import org.apache.avro.io.DatumWriter;
    import org.apache.avro.io.EncoderFactory;
    import org.apache.avro.specific.SpecificDatumWriter;
    import org.apache.avro.specific.SpecificRecordBase;
    import org.apache.kafka.common.errors.SerializationException;
    import org.apache.kafka.common.serialization.Serializer;

    /**
     * Avro serializer for any generated record type.
     * @author zzx
     * Created 2020-03-11-19:17
     */
    public class AvroSerializer<T extends SpecificRecordBase> implements Serializer<T> {
        @Override
        public void close() {}

        @Override
        public void configure(Map<String, ?> arg0, boolean arg1) {}

        @Override
        public byte[] serialize(String topic, T data) {
            if (data == null) {
                return null;
            }
            // The writer takes its schema from the record itself
            DatumWriter<T> writer = new SpecificDatumWriter<>(data.getSchema());
            ByteArrayOutputStream byteArrayOutputStream = new ByteArrayOutputStream();
            BinaryEncoder binaryEncoder = EncoderFactory.get().directBinaryEncoder(byteArrayOutputStream, null);
            try {
                writer.write(data, binaryEncoder);
                binaryEncoder.flush();
                byteArrayOutputStream.close();
            } catch (IOException e) {
                // Keep the original exception as the cause
                throw new SerializationException("Can't serialize data for topic '" + topic + "'", e);
            }
            return byteArrayOutputStream.toByteArray();
        }
    }
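To make the content of the byte array returned by serialize() more concrete, here is a minimal JDK-only sketch of how Avro's binary encoding lays out a single nullable string field. It does not use the Avro library; it hand-rolls three rules from the Avro specification (zig-zag variable-length longs, length-prefixed UTF-8 strings, and a union written as its branch index followed by the value). The class and method names are hypothetical and exist only for illustration.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;

// Illustration only: a hand-written subset of the Avro binary encoding rules.
public class AvroBinarySketch {

    // int/long values are zig-zag encoded, then written 7 bits at a time.
    public static void writeLong(ByteArrayOutputStream out, long n) {
        long z = (n << 1) ^ (n >> 63);             // zig-zag: small magnitudes become small codes
        while ((z & ~0x7FL) != 0) {
            out.write((int) ((z & 0x7F) | 0x80));  // high bit set means "more bytes follow"
            z >>>= 7;
        }
        out.write((int) z);
    }

    // A string is its UTF-8 length (as a long) followed by the UTF-8 bytes.
    public static void writeString(ByteArrayOutputStream out, String s) {
        byte[] utf8 = s.getBytes(StandardCharsets.UTF_8);
        writeLong(out, utf8.length);
        out.write(utf8, 0, utf8.length);
    }

    // A union value is the zero-based index of its branch (as declared in the
    // schema) followed by the value; a null value contributes no further bytes.
    public static byte[] encodeNullableString(String value, int stringBranch, int nullBranch) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        if (value == null) {
            writeLong(out, nullBranch);
        } else {
            writeLong(out, stringBranch);
            writeString(out, value);
        }
        return out.toByteArray();
    }

    // Hex dump helper for inspecting the result.
    public static String hex(byte[] bytes) {
        StringBuilder sb = new StringBuilder();
        for (byte b : bytes) {
            sb.append(String.format("%02x", b));
        }
        return sb.toString();
    }
}
```

With the string branch at index 0 and the null branch at index 1, hex(encodeNullableString("9", 0, 1)) gives 000239: the branch index, the length 1, then the UTF-8 byte of "9". No field names or type tags appear in the payload, which is why the reading side must be given the schema.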

IV. The AvroConfig Configuration Class

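The Avro settings live in the AvroConfig configuration class. We now change AvroConfig to use our custom Serializer implementation by setting the VALUE_SERIALIZER_CLASS_CONFIG property to the AvroSerializer class. We also change the generic types of ProducerFactory and KafkaTemplate so that they specify ElectronicsPackage instead of String. When several record types need to be serialized, add a ProducerFactory and KafkaTemplate pair to this configuration class for each of them.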

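    package com.yd.cyber.web.avro;

    import java.util.HashMap;
    import java.util.Map;

    import com.yd.cyber.protocol.avro.ElectronicsPackage;
    import org.apache.kafka.clients.producer.ProducerConfig;
    import org.apache.kafka.common.serialization.StringSerializer;
    import org.springframework.beans.factory.annotation.Value;
    import org.springframework.context.annotation.Bean;
    import org.springframework.context.annotation.Configuration;
    import org.springframework.kafka.annotation.EnableKafka;
    import org.springframework.kafka.core.DefaultKafkaProducerFactory;
    import org.springframework.kafka.core.KafkaTemplate;
    import org.springframework.kafka.core.ProducerFactory;

    /**
     * @author zzx
     * Created 2020-03-11-20:23
     */
    @Configuration
    @EnableKafka
    public class AvroConfig {

        @Value("${spring.kafka.bootstrap-servers}")
        private String bootstrapServers;

        @Value("${spring.kafka.producer.max-request-size}")
        private String maxRequestSize;

        @Bean
        public Map<String, Object> avroProducerConfigs() {
            Map<String, Object> props = new HashMap<>();
            props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, bootstrapServers);
            props.put(ProducerConfig.MAX_REQUEST_SIZE_CONFIG, maxRequestSize);
            // Keys are plain strings; values are Avro records
            props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
            props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, AvroSerializer.class);
            return props;
        }

        @Bean
        public ProducerFactory<String, ElectronicsPackage> elProducerFactory() {
            return new DefaultKafkaProducerFactory<>(avroProducerConfigs());
        }

        @Bean
        public KafkaTemplate<String, ElectronicsPackage> elKafkaTemplate() {
            return new KafkaTemplate<>(elProducerFactory());
        }
    }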
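The two @Value placeholders in AvroConfig have to resolve from the application's configuration. A minimal application.properties sketch might look as follows; the broker address and the size are placeholder values assumed for a local setup, and spring.kafka.producer.max-request-size is simply the custom key that this class reads through @Value.

```properties
# Assumed local broker; replace with the address of your cluster
spring.kafka.bootstrap-servers=localhost:9092
# Maximum producer request size in bytes (1 MiB, the same as Kafka's default for max.request.size)
spring.kafka.producer.max-request-size=1048576
```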

V. Sending Messages with KafkaTemplate

Finally, a Controller class calls the send method of kafkaTemplate, which takes an Avro ElectronicsPackage object as input. Note that the generic type of kafkaTemplate has been updated as well.

    package com.yd.cyber.web.controller.aggregation;

    import com.yd.cyber.protocol.avro.ElectronicsPackage;
    import com.yd.cyber.web.vo.ElectronicsPackageVO;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.beans.BeanUtils;
    import org.springframework.kafka.core.KafkaTemplate;
    import org.springframework.web.bind.annotation.GetMapping;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RestController;
    import javax.annotation.Resource;

    /**
     * <p>
     * Front controller
     * </p>
     *
     * @author zzx
     * @since 2020-04-19
     */
    @RestController
    @RequestMapping("/electronicsPackageTbl")
    public class ElectronicsPackageController {

        // Logger
        private static final Logger log = LoggerFactory.getLogger(ElectronicsPackageController.class);

        @Resource
        private KafkaTemplate<String, ElectronicsPackage> kafkaTemplate;

        @GetMapping("/push")
        public void push() {
            // Build a test VO and copy its properties into the Avro record
            ElectronicsPackageVO electronicsPackageVO = new ElectronicsPackageVO();
            electronicsPackageVO.setElectId(9);
            electronicsPackageVO.setAggregatPackageCode("9");
            electronicsPackageVO.setCode1("9");
            electronicsPackageVO.setEndAllocateCode("9");
            electronicsPackageVO.setFrsSiteCodeType("9");
            electronicsPackageVO.setFrsSiteCode("9");
            electronicsPackageVO.setPackageNumber("9");
            ElectronicsPackage electronicsPackage = new ElectronicsPackage();
            BeanUtils.copyProperties(electronicsPackageVO, electronicsPackage);
            // Send the message
            kafkaTemplate.send("Electronics_Package", electronicsPackage);
            log.info("Message sent to topic Electronics_Package");
        }
    }

VI. Consuming and Deserializing Avro Messages from a Kafka Topic

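Received messages must be deserialized back into Avro objects. To do this we create an AvroDeserializer class that implements the Deserializer interface. Its deserialize() method takes the topic name and a byte array as input and decodes the bytes back into an Avro object. The schema used for decoding is obtained from the targetType class, which must be passed to the AvroDeserializer constructor.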

    package com.yd.cyber.web.avro;

    import java.io.ByteArrayInputStream;
    import java.util.Arrays;
    import java.util.Map;

    import org.apache.avro.generic.GenericRecord;
    import org.apache.avro.io.BinaryDecoder;
    import org.apache.avro.io.DatumReader;
    import org.apache.avro.io.DecoderFactory;
    import org.apache.avro.specific.SpecificDatumReader;
    import org.apache.avro.specific.SpecificRecordBase;
    import org.apache.kafka.common.errors.SerializationException;
    import org.apache.kafka.common.serialization.Deserializer;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;

    import javax.xml.bind.DatatypeConverter;

    /**
     * Avro deserializer
     * @author fuyx
     * Created 2020-03-12-15:19
     */
    public class AvroDeserializer<T extends SpecificRecordBase> implements Deserializer<T> {
        // Logger
        private static final Logger LOGGER = LoggerFactory.getLogger(AvroDeserializer.class);

        protected final Class<T> targetType;

        public AvroDeserializer(Class<T> targetType) {
            this.targetType = targetType;
        }

        @Override
        public void close() {}

        @Override
        public void configure(Map<String, ?> arg0, boolean arg1) {}

        @Override
        @SuppressWarnings("unchecked")
        public T deserialize(String topic, byte[] data) {
            if (data == null) {
                return null;
            }
            try {
                LOGGER.debug("data='{}'", DatatypeConverter.printHexBinary(data));
                ByteArrayInputStream in = new ByteArrayInputStream(data);
                // The reader schema comes from an instance of the target class
                DatumReader<GenericRecord> userDatumReader =
                        new SpecificDatumReader<>(targetType.newInstance().getSchema());
                BinaryDecoder decoder = DecoderFactory.get().directBinaryDecoder(in, null);
                T result = (T) userDatumReader.read(null, decoder);
                LOGGER.debug("deserialized data='{}'", result);
                return result;
            } catch (Exception ex) {
                throw new SerializationException(
                        "Can't deserialize data '" + Arrays.toString(data) + "' from topic '" + topic + "'", ex);
            }
        }
    }
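The reason the deserializer has to be told the schema can be seen from the reading direction of the binary format. The following JDK-only sketch decodes one nullable string field by hand, following the Avro specification's rules for zig-zag longs, length-prefixed strings, and union branch indexes. It is an illustration under those assumptions, not the Avro library's implementation, and the class and method names are hypothetical.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;

// Illustration only: hand-written decoding of one Avro-encoded nullable string.
public class AvroBinaryDecodeSketch {

    // Reads a variable-length integer and undoes the zig-zag encoding.
    public static long readLong(ByteArrayInputStream in) {
        long z = 0;
        int shift = 0;
        int b;
        do {
            b = in.read();
            if (b < 0) {
                throw new IllegalStateException("unexpected end of data");
            }
            z |= (long) (b & 0x7F) << shift;   // low 7 bits carry the payload
            shift += 7;
        } while ((b & 0x80) != 0);             // high bit set means "more bytes follow"
        return (z >>> 1) ^ -(z & 1);
    }

    // Reads a length prefix, then that many UTF-8 bytes.
    public static String readString(ByteArrayInputStream in) {
        int length = (int) readLong(in);
        byte[] utf8 = new byte[length];
        if (length > 0 && in.read(utf8, 0, length) != length) {
            throw new IllegalStateException("unexpected end of data");
        }
        return new String(utf8, StandardCharsets.UTF_8);
    }

    // The payload only says "branch N"; which branch is null and which is string
    // is knowledge the caller must supply, just as the schema does for Avro.
    public static String decodeNullableString(byte[] data, int nullBranch) {
        ByteArrayInputStream in = new ByteArrayInputStream(data);
        long branch = readLong(in);
        return branch == nullBranch ? null : readString(in);
    }
}
```

The same bytes decode differently depending on the branch order passed in, which mirrors why AvroDeserializer needs targetType to look up the schema before it can interpret the payload.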

VII. Configuration for Deserialization

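I keep the deserialization settings in the same AvroConfig class as the serialization settings. AvroConfig is updated so that AvroDeserializer is used as the value of the VALUE_DESERIALIZER_CLASS_CONFIG property. The generic types of ConsumerFactory and ConcurrentKafkaListenerContainerFactory are also changed to specify ElectronicsPackage instead of String. The DefaultKafkaConsumerFactory is created with a new AvroDeserializer, whose constructor takes ElectronicsPackage.class as its argument. AvroDeserializer needs this Class<?> targetType to deserialize the consumed byte[] into the right target object (here the ElectronicsPackage class).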

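    import java.util.HashMap;
    import java.util.Map;

    import com.yd.cyber.protocol.avro.ElectronicsPackage;
    import org.apache.kafka.clients.consumer.ConsumerConfig;
    import org.apache.kafka.common.serialization.StringDeserializer;
    import org.springframework.beans.factory.annotation.Value;
    import org.springframework.context.annotation.Bean;
    import org.springframework.context.annotation.Configuration;
    import org.springframework.kafka.annotation.EnableKafka;
    import org.springframework.kafka.config.ConcurrentKafkaListenerContainerFactory;
    import org.springframework.kafka.core.ConsumerFactory;
    import org.springframework.kafka.core.DefaultKafkaConsumerFactory;

    @Configuration
    @EnableKafka
    public class AvroConfig {

        @Value("${spring.kafka.bootstrap-servers}")
        private String bootstrapServers;

        @Value("${spring.kafka.producer.max-request-size}")
        private String maxRequestSize;

        // Producer beans from section IV are omitted here

        @Bean
        public Map<String, Object> consumerConfigs() {
            Map<String, Object> props = new HashMap<>();
            props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, bootstrapServers);
            props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
            props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, AvroDeserializer.class);
            props.put(ConsumerConfig.GROUP_ID_CONFIG, "avro");

            return props;
        }

        @Bean
        public ConsumerFactory<String, ElectronicsPackage> consumerFactory() {
            // The deserializer instances are passed in directly so that
            // AvroDeserializer receives its target type
            return new DefaultKafkaConsumerFactory<>(consumerConfigs(), new StringDeserializer(),
                    new AvroDeserializer<>(ElectronicsPackage.class));
        }

        @Bean
        public ConcurrentKafkaListenerContainerFactory<String, ElectronicsPackage> kafkaListenerContainerFactory() {
            ConcurrentKafkaListenerContainerFactory<String, ElectronicsPackage> factory =
                    new ConcurrentKafkaListenerContainerFactory<>();
            factory.setConsumerFactory(consumerFactory());

            return factory;
        }

    }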

VIII. Consuming the Messages

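The consumer listens to the topic with @KafkaListener. One thing to note: most examples online declare the listener parameter as the concrete object type, which here would be the ElectronicsPackage class. When I wrote it that way, the error log kept reporting a serialization problem on return, so I receive the message as a GenericRecord, the type used in my deserializer, and that works without problems. The received message is then stored in the database through MyBatis-Plus.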

    package com.zzx.cyber.web.controller.dataSource.intercompany;

    import com.zzx.cyber.web.service.ElectronicsPackageService;
    import com.zzx.cyber.web.vo.ElectronicsPackageVO;
    import org.apache.avro.generic.GenericRecord;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.beans.BeanUtils;
    import org.springframework.kafka.annotation.KafkaListener;
    import org.springframework.stereotype.Controller;

    import javax.annotation.Resource;

    /**
     * @desc:
     * @author: zzx
     * Created 2020/4/19 12:21
     */
    @Controller
    public class ElectronicsPackageConsumerController {

        // Logger
        private static final Logger log = LoggerFactory.getLogger(ElectronicsPackageConsumerController.class);

        // Service layer
        @Resource
        private ElectronicsPackageService electronicsPackageService;

        /**
         * Receives scanned-package test data
         * @param genericRecordne the deserialized Avro record
         */
        @KafkaListener(topics = {"Electronics_Package"})
        public void receive(GenericRecord genericRecordne) throws Exception {
            log.info("Received electronicsPackage: {}", genericRecordne);
            // Business object; the class is generated by MyBatis-Plus
            ElectronicsPackageVO electronicsPackageVO = new ElectronicsPackageVO();
            // Copy the received data into the VO
            BeanUtils.copyProperties(genericRecordne, electronicsPackageVO);
            try {
                // Persist to the database
                log.info("Saving data to the database");
                electronicsPackageService.save(electronicsPackageVO);
            } catch (Exception e) {
                // Keep the original exception as the cause
                throw new Exception("Insert failed", e);
            }
        }
    }
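The schema's field names are snake_case (for example package_number), and Avro typically hands decoded strings back as CharSequence values rather than String, so it is worth checking that a reflective property copy really fills the VO. If it does not, an explicit mapping is one option. The sketch below uses only the JDK and a plain Map as a stand-in for the record's field names and values; RecordFieldMapper, snakeToCamel and toProperties are hypothetical names and are not part of Avro, Spring, or the original project.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Illustration only: normalizes decoded field names and values before they
// are applied to a value object.
public class RecordFieldMapper {

    // Converts a schema field name such as "package_number" to "packageNumber".
    public static String snakeToCamel(String name) {
        StringBuilder sb = new StringBuilder();
        boolean upperNext = false;
        for (char c : name.toCharArray()) {
            if (c == '_') {
                upperNext = true;              // drop the underscore, capitalize what follows
            } else if (upperNext) {
                sb.append(Character.toUpperCase(c));
                upperNext = false;
            } else {
                sb.append(c);
            }
        }
        return sb.toString();
    }

    // Turns every non-null value into a String via toString() and keeps nulls,
    // keyed by the camelCase property name.
    public static Map<String, String> toProperties(Map<String, ?> fields) {
        Map<String, String> properties = new LinkedHashMap<>();
        for (Map.Entry<String, ?> entry : fields.entrySet()) {
            Object value = entry.getValue();
            properties.put(snakeToCamel(entry.getKey()), value == null ? null : value.toString());
        }
        return properties;
    }
}
```

In the listener, the resulting map could be applied to the VO through its setters, for example setPackageNumber(properties.get("packageNumber")).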