Flink Client Commands and Visualization Tools

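Flink provides a rich set of client operations for submitting jobs and interacting with them. This article covers five of them: the Flink command line, the Scala Shell, the SQL Client, the RESTful API, and the Web UI.

In the bin directory of the Flink installation you can find flink, start-scala-shell.sh, sql-client.sh, and other files. These are the entry points for the client operations.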

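Common flink operations (run bin/flink --help, or -h, to see the help):

run: run a job

-d: run the job in detached mode;

-c: the entry class; required only if the jar does not declare one in its manifest;

-m: address of the JobManager (master node) to connect to. Use it to target a JobManager other than the one in the configuration file; when submitting to YARN, the value yarn-cluster is used here instead of an address;

-p: the parallelism of the program; overrides the default value from the configuration file;

-s: path of the savepoint from which to restore the job (for example hdfs:///flink/savepoint-1537).

Once a job has been submitted, you can see it running; by default it uses a parallelism of 1.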
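To make the options above concrete, here is a minimal sketch of a helper that assembles a flink run command line. The function name build_flink_run and its argument order are hypothetical and exist only for illustration; the helper prints the command instead of executing it, so it can be tried without a cluster.

```shell
# Hypothetical helper: prints (does not execute) a `flink run` command line
# built from the options described above. Empty arguments are skipped.
build_flink_run() {
  local jar="$1" parallelism="$2" entry_class="$3" savepoint="$4"
  local cmd="bin/flink run -d"                           # -d: detached mode
  [ -n "$parallelism" ] && cmd="$cmd -p $parallelism"     # -p: parallelism
  [ -n "$entry_class" ] && cmd="$cmd -c $entry_class"     # -c: entry class
  [ -n "$savepoint" ]   && cmd="$cmd -s $savepoint"       # -s: restore from savepoint
  echo "$cmd $jar"
}

# Example: parallelism 2, no explicit entry class, restore from a savepoint.
build_flink_run examples/streaming/TopSpeedWindowing.jar 2 "" hdfs:///flink/savepoint-1537
```

Printing the command first is a cheap way to review it before running it against a real cluster.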

list: view the job list

-m,--jobmanager <arg>: address of the JobManager (master) to connect to.

    [root@hadoop1 flink-1.10.1]# bin/flink list -m 127.0.0.1:8081
    Waiting for response...
    ------------------ Running/Restarting Jobs -------------------
    09.07.2020 16:44:09 : dce7b69ad15e8756766967c46122736f : CarTopSpeedWindowingExample (RUNNING)
    --------------------------------------------------------------
    No scheduled jobs.
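Later commands (stop, cancel, savepoint) all need the JobID printed by list. As a rough sketch, the ID can be pulled out of that output with awk. The function name running_job_ids is hypothetical, and the parsing assumes the line layout shown in the session above ("date : JobID : name (STATE)").

```shell
# Sample `flink list` output, copied from the session above.
list_output='Waiting for response...
------------------ Running/Restarting Jobs -------------------
09.07.2020 16:44:09 : dce7b69ad15e8756766967c46122736f : CarTopSpeedWindowingExample (RUNNING)
--------------------------------------------------------------
No scheduled jobs.'

# Print the JobID (second " : "-separated field) of every RUNNING job.
running_job_ids() {
  awk -F' : ' '/\(RUNNING\)$/ { print $2 }'
}

printf '%s\n' "$list_output" | running_job_ids
```

Against a live cluster the same filter would be used as `bin/flink list -m 127.0.0.1:8081 | running_job_ids`.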

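stop: stop a job

You need to specify the JobManager's ip:port and the JobID. According to the error below, a job can only be stopped if all of its sources are stoppable, that is, if they implement the StoppableFunction interface. Note that this session runs Flink 1.10.1, where stop suspends the job with a savepoint (as the output itself says), so a failure like this one may also mean that no savepoint directory is available; the StoppableFunction requirement comes from older Flink releases.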

    [root@hadoop1 flink-1.10.1]# bin/flink stop -m 127.0.0.1:8081 dce7b69ad15e8756766967c46122736f
    Suspending job "dce7b69ad15e8756766967c46122736f" with a savepoint.

    ------------------------------------------------------------
     The program finished with the following exception:

    org.apache.flink.util.FlinkException: Could not stop with a savepoint job "dce7b69ad15e8756766967c46122736f".
        at org.apache.flink.client.cli.CliFrontend.lambda$stop$5(CliFrontend.java:458)

The StoppableFunction interface is shown below; it is what lets a job be stopped gracefully.

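    import org.apache.flink.annotation.PublicEvolving;

    /**
     * @Description Functions that need to be stoppable must implement this interface,
     * for example the sources of streaming jobs.
     * stop() is called when the task receives the stop signal.
     * On receiving this signal, the source must stop emitting new data and shut down gracefully.
     * @Date 2020/7/9 17:26
     */
    @PublicEvolving
    public interface StoppableFunction {
        /**
         * Stops the source. Unlike cancel(), this is a request for the source to stop gracefully.
         * Pending data can still be emitted; the source does not have to stop immediately.
         */
        void stop();
    }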

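cancel: cancel a job

If state.savepoints.dir is set in conf/flink-conf.yaml, cancelling with -s writes a savepoint to that directory; without this setting no savepoint is saved unless a directory is given on the command line. (The cluster must be restarted for the setting to take effect.)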

state.savepoints.dir: file:///tmp/savepoint

Run the cancel command to cancel the job:

    [root@hadoop1 flink-1.10.1]# bin/flink cancel -m 127.0.0.1:8081 -s e8ce0d111262c52bf8228d5722742d47
    DEPRECATION WARNING: Cancelling a job with savepoint is deprecated. Use "stop" instead.
    Cancelling job e8ce0d111262c52bf8228d5722742d47 with savepoint to default savepoint directory.
    Cancelled job e8ce0d111262c52bf8228d5722742d47. Savepoint stored in file:/tmp/savepoint/savepoint-e8ce0d-f7fa96a085d8.

You can also specify the savepoint directory explicitly when cancelling:

    [root@hadoop1 flink-1.10.1]# bin/flink cancel -m 127.0.0.1:8081 -s /tmp/savepoint f58bb4c49ee5580ab5f27fdb24083353
    DEPRECATION WARNING: Cancelling a job with savepoint is deprecated. Use "stop" instead.
    Cancelling job f58bb4c49ee5580ab5f27fdb24083353 with savepoint to /tmp/savepoint.
    Cancelled job f58bb4c49ee5580ab5f27fdb24083353. Savepoint stored in file:/tmp/savepoint/savepoint-f58bb4-127b7e84910e.

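The differences between cancelling and stopping a streaming job are as follows:

● A cancel() call immediately invokes the cancel() method of the job's operators in order to cancel them as quickly as possible. If the operators do not stop after the cancel() call, Flink starts interrupting the operator threads periodically until all operators have stopped.

● A stop() call is a more graceful way to stop a running streaming job. stop() only works for jobs whose sources all implement the StoppableFunction interface. When the user requests a stop, every source of the job receives a stop() call, and the job finishes only once all sources have shut down properly. This lets the job finish processing all in-flight data normally.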

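savepoint: trigger a savepoint

A savepoint has to be triggered manually whenever you want one. As shown below, you specify the JobID of the running job and the directory in which to store the files. The command returns information such as the path of the savepoint that was written.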

    [root@hadoop1 flink-1.10.1]# bin/flink run -d examples/streaming/TopSpeedWindowing.jar
    Executing TopSpeedWindowing example with default input data set.
    Use --input to specify file input.
    Printing result to stdout. Use --output to specify output path.
    Job has been submitted with JobID 216c427d63e3754eb757d2cc268a448d
    [root@hadoop1 flink-1.10.1]# bin/flink savepoint -m 127.0.0.1:8081 216c427d63e3754eb757d2cc268a448d /tmp/savepoint/
    Triggering savepoint for job 216c427d63e3754eb757d2cc268a448d.
    Waiting for response...
    Savepoint completed. Path: file:/tmp/savepoint/savepoint-216c42-154a34cf6bfd
    You can resume your program from this savepoint with the run command.
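When the savepoint step is scripted, the returned path usually has to be captured for later use. The following is a small sketch that extracts it from the command output with sed. The function name savepoint_path is hypothetical, and the pattern assumes the "Savepoint completed. Path: ..." line format shown in the session above.

```shell
# Sample `flink savepoint` output, copied from the session above.
savepoint_output='Triggering savepoint for job 216c427d63e3754eb757d2cc268a448d.
Waiting for response...
Savepoint completed. Path: file:/tmp/savepoint/savepoint-216c42-154a34cf6bfd
You can resume your program from this savepoint with the run command.'

# Print only the savepoint path; prints nothing if the savepoint did not complete.
savepoint_path() {
  sed -n 's/^Savepoint completed\. Path: //p'
}

printf '%s\n' "$savepoint_output" | savepoint_path
```

The captured value is what the -s option of bin/flink run expects when a job is restored from this savepoint.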

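Differences between savepoints and checkpoints:

● Checkpoints can be taken incrementally (depending on the state backend), so each one is typically quick and small. Once enabled in the program they are triggered automatically, and the user does not need to be aware of them. Savepoints are full snapshots, so each one takes longer and is larger, and the user has to trigger them explicitly.

● Checkpoints are used automatically when a job fails over; the user does not specify them. Savepoints are typically used for program upgrades, bug fixes, A/B tests, and similar scenarios, and must be specified by the user.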

Starting a job from a given savepoint

    [root@hadoop1 flink-1.10.1]# bin/flink run -d -s /tmp/savepoint/savepoint-f58bb4-127b7e84910e/ examples/streaming/TopSpeedWindowing.jar
    Executing TopSpeedWindowing example with default input data set.
    Use --input to specify file input.
    Printing result to stdout. Use --output to specify output path.
    Job has been submitted with JobID 1a5c5ce279e0e4bd8609f541b37652e2

The JobManager log shows a "Reset the checkpoint ID" message, with the ID taken from the savepoint we specified.

modify: change a job's parallelism

Here we edit conf/flink-conf.yaml on the master and set the number of task slots to 4, then distribute the file to the two slave nodes with xsync.

taskmanager.numberOfTaskSlots: 4

The cluster has to be restarted for the change to take effect, so stop and start it:

    [root@hadoop1 flink-1.10.1]# bin/stop-cluster.sh && bin/start-cluster.sh
    Stopping taskexecutor daemon (pid: 8236) on host hadoop2.
    Stopping taskexecutor daemon (pid: 8141) on host hadoop3.
    Stopping standalonesession daemon (pid: 22633) on host hadoop1.
    Starting cluster.
    Starting standalonesession daemon on host hadoop1.
    Starting taskexecutor daemon on host hadoop2.
    Starting taskexecutor daemon on host hadoop3.

Start the job:

    [root@hadoop1 flink-1.10.1]# bin/flink run -d examples/streaming/TopSpeedWindowing.jar
    Executing TopSpeedWindowing example with default input data set.
    Use --input to specify file input.
    Printing result to stdout. Use --output to specify output path.
    Job has been submitted with JobID 2e833a438da7d8052f14d5433910515a

The Web UI now shows 8 task slots in total, of which 1 is in use and 7 are available.
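The slot numbers follow from simple arithmetic, sketched below. The variable names are made up for illustration, and the sketch assumes that the example job, running with the default parallelism of 1, occupies exactly one slot.

```shell
task_managers=2      # hadoop2 and hadoop3 in the session above
slots_per_tm=4       # taskmanager.numberOfTaskSlots
job_parallelism=1    # assumption: the job occupies as many slots as its parallelism

total_slots=$((task_managers * slots_per_tm))
free_slots=$((total_slots - job_parallelism))
echo "total=$total_slots used=$job_parallelism free=$free_slots"
```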

Running modify on this Flink 1.10.1 installation fails, because the CLI does not accept that action:

    [root@hadoop1 flink-1.10.1]# bin/flink modify -p 4 cc22cc3d09f5d65651d637be6fb0a1c3
    "modify" is not a valid action.

info: display the execution plan of a program

    [root@hadoop1 flink-1.10.1]# bin/flink info examples/streaming/TopSpeedWindowing.jar
    ----------------------- Execution Plan -----------------------
    {"nodes":[{"id":1,"type":"Source: Custom Source","pact":"Data Source","contents":"Source: Custom Source","parallelism":1},{"id":2,"type":"Timestamps/Watermarks","pact":"Operator","contents":"Timestamps/Watermarks","parallelism":1,"predecessors":[{"id":1,"ship_strategy":"FORWARD","side":"second"}]},{"id":4,"type":"Window(GlobalWindows(), DeltaTrigger, TimeEvictor, ComparableAggregator, PassThroughWindowFunction)","pact":"Operator","contents":"Window(GlobalWindows(), DeltaTrigger, TimeEvictor, ComparableAggregator, PassThroughWindowFunction)","parallelism":1,"predecessors":[{"id":2,"ship_strategy":"HASH","side":"second"}]},{"id":5,"type":"Sink: Print to Std. Out","pact":"Data Sink","contents":"Sink: Print to Std. Out","parallelism":1,"predecessors":[{"id":4,"ship_strategy":"FORWARD","side":"second"}]}]}
    --------------------------------------------------------------

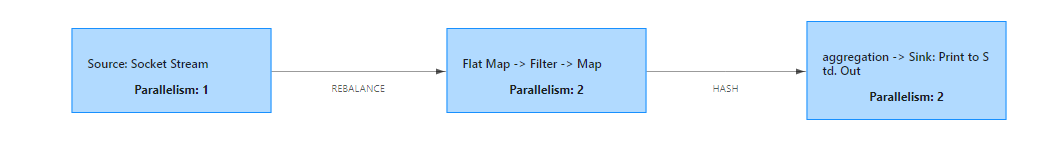

拷贝输出的 json 内容,粘贴到这个网站:http://flink.apache.org/visualizer/可以生成类似如下的执行图。
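Since only the JSON line of the info output is of interest for the visualizer, it can be filtered out of the surrounding banner. The sketch below does this with grep, and also lists the "pact" (node kind) of each plan node as a quick sanity check. The function names plan_json and plan_pacts are hypothetical, the sample is trimmed to two nodes for brevity, and the filter assumes the JSON sits on a single line starting with {"nodes", as in the output above.

```shell
# `bin/flink info` output, trimmed here to two nodes and fewer fields.
info_output='----------------------- Execution Plan -----------------------
{"nodes":[{"id":1,"type":"Source: Custom Source","pact":"Data Source","parallelism":1},{"id":5,"type":"Sink: Print to Std. Out","pact":"Data Sink","parallelism":1,"predecessors":[{"id":4,"ship_strategy":"FORWARD","side":"second"}]}]}
--------------------------------------------------------------'

# Keep only the JSON line, ready to paste into the visualizer.
plan_json() {
  grep '^{"nodes"'
}

# List the "pact" of every node; "id" is not used because predecessors carry ids too.
plan_pacts() {
  plan_json | grep -o '"pact":"[^"]*"' | cut -d'"' -f4
}

printf '%s\n' "$info_output" | plan_pacts
```

For anything beyond a quick check, a real JSON parser is a safer choice than text matching.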

Flink On Yarn

[Link]

SQL Client Beta

Start the Flink SQL Client:

[root@hadoop1 flink-1.10.1]# bin/sql-client.sh embedded

Run a SELECT query; press Q to leave the result view shown below:

    Flink SQL> select 'hello word';
    SQL Query Result (Table)
    Table program finished.    Page: Last of 1    Updated: 16:37:04.649

    EXPR$0
    hello word

    Q Quit      + Inc Refresh   G Goto Page   N Next Page   O Open Row
    R Refresh   - Dec Refresh   L Last Page   P Prev Page

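Opening http://hadoop1:8081 shows that the job produced by this select statement has already finished. The query reads a fixed data set through a Custom Source, writes its output through a Stream Collect Sink, and emits a single result row.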

explain: view the execution plan of a SQL statement.

    Flink SQL> explain SELECT name, COUNT(*) AS cnt FROM (VALUES ('Bob'), ('Alice'), ('Greg'), ('Bob')) AS NameTable(name) GROUP BY name;
    == Abstract Syntax Tree ==
    LogicalAggregate(group=[{0}], cnt=[COUNT()])
    +- LogicalValues(type=[RecordType(VARCHAR(5) name)], tuples=[[{ _UTF-16LE'Bob' }, { _UTF-16LE'Alice' }, { _UTF-16LE'Greg' }, { _UTF-16LE'Bob' }]])

    == Optimized Logical Plan ==
    GroupAggregate(groupBy=[name], select=[name, COUNT(*) AS cnt])
    +- Exchange(distribution=[hash[name]])
       +- Values(type=[RecordType(VARCHAR(5) name)], tuples=[[{ _UTF-16LE'Bob' }, { _UTF-16LE'Alice' }, { _UTF-16LE'Greg' }, { _UTF-16LE'Bob' }]])

    == Physical Execution Plan ==
    Stage 13 : Data Source
        content : Source: Values(tuples=[[{ _UTF-16LE'Bob' }, { _UTF-16LE'Alice' }, { _UTF-16LE'Greg' }, { _UTF-16LE'Bob' }]])

    Stage 15 : Operator
        content : GroupAggregate(groupBy=[name], select=[name, COUNT(*) AS cnt])
        ship_strategy : HASH

Displaying results

The SQL Client supports two modes for maintaining and displaying query results:

table mode

Materializes the query result in memory and displays it as a paginated table. Table mode is enabled with the SET command, as in the following example:

    Flink SQL> SET execution.result-mode=table;
    [INFO] Session property has been set.

    Flink SQL> SELECT name, COUNT(*) AS cnt FROM (VALUES ('Bob'), ('Alice'), ('Greg'), ('Bob')) AS NameTable(name) GROUP BY name;
    SQL Query Result (Table)
    Table program finished.    Page: Last of 1    Updated: 16:55:08.589

    name     cnt
    Alice    1
    Greg     1
    Bob      2

    Q Quit      + Inc Refresh   G Goto Page   N Next Page   O Open Row
    R Refresh   - Dec Refresh   L Last Page   P Prev Page

changelog mode

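Does not materialize the query result; instead it directly displays the additions and retractions produced by the continuous query. In the example below, - marks a retraction message.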

    Flink SQL> SET execution.result-mode=changelog;
    [INFO] Session property has been set.

    Flink SQL> SELECT name, COUNT(*) AS cnt FROM (VALUES ('Bob'), ('Alice'), ('Greg'), ('Bob')) AS NameTable(name) GROUP BY name;
    SQL Query Result (Changelog)
    Table program finished.    Updated: 16:58:05.777

    +/-    name     cnt
    +      Bob      1
    +      Alice    1
    +      Greg     1
    -      Bob      1
    +      Bob      2

    Q Quit      + Inc Refresh   O Open Row
    R Refresh   - Dec Refresh
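The two modes show the same result in different forms: replaying the changelog rows in order yields the materialized table. The awk sketch below illustrates that relationship on the rows from the example. It is only an illustration of the idea, not how the SQL Client is implemented, and the function name materialize is hypothetical.

```shell
# Changelog rows from the example above: sign, name, count.
changelog='+ Bob 1
+ Alice 1
+ Greg 1
- Bob 1
+ Bob 2'

# Replay the changelog: "+" stores a row, "-" retracts the matching row.
materialize() {
  awk '
    $1 == "+" { cnt[$2] = $3 }
    $1 == "-" { if (cnt[$2] == $3) delete cnt[$2] }
    END { for (name in cnt) print name, cnt[name] }
  ' | sort
}

printf '%s\n' "$changelog" | materialize
```

The output lists Alice 1, Bob 2, and Greg 1 (sorted by name here), which matches the rows shown in table mode.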

Environment Files

CREATE TABLE: the DDL statement for creating a table:

    Flink SQL> CREATE TABLE pvuv_sink (
    >   dt VARCHAR,
    >   pv BIGINT,
    >   uv BIGINT
    > );
    [INFO] Table has been created.

SHOW TABLES: list all table names

    Flink SQL> show tables;
    pvuv_sink

DESCRIBE <table name>: view the details of a table

    Flink SQL> describe pvuv_sink;
    root
     |-- dt: STRING
     |-- pv: BIGINT
     |-- uv: BIGINT

INSERT and other operations use the same statements as in a relational database, so they are omitted here.

Restful API

Next we demonstrate how to submit a jar and run a job through the REST API.
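As a rough sketch of what such a submission can look like, the helpers below print (rather than execute) the two curl calls involved: uploading the jar and then running it. To my understanding, the Flink REST API exposes POST /jars/upload (with the jar in a form field named jarfile) and POST /jars/<jar-id>/run for this. The address in FLINK_REST, the function names, and the <jar-id> placeholder are assumptions for illustration; the real jar id is taken from the upload response.

```shell
FLINK_REST="http://127.0.0.1:8081"   # assumption: same address as used with -m above

# Print the curl command that uploads a jar (POST /jars/upload, form field "jarfile").
upload_cmd() {
  echo "curl -X POST -H 'Expect:' -F 'jarfile=@$1' $FLINK_REST/jars/upload"
}

# Print the curl command that runs an uploaded jar with the given parallelism.
run_cmd() {
  echo "curl -X POST '$FLINK_REST/jars/$1/run?parallelism=$2'"
}

upload_cmd examples/streaming/TopSpeedWindowing.jar
run_cmd '<jar-id>' 1   # <jar-id> is a placeholder for the id returned by the upload
```

Printing the commands keeps the sketch runnable without a cluster; on a real setup you would execute them and read the jar id from the upload response.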
