04-VGG16 图像分类

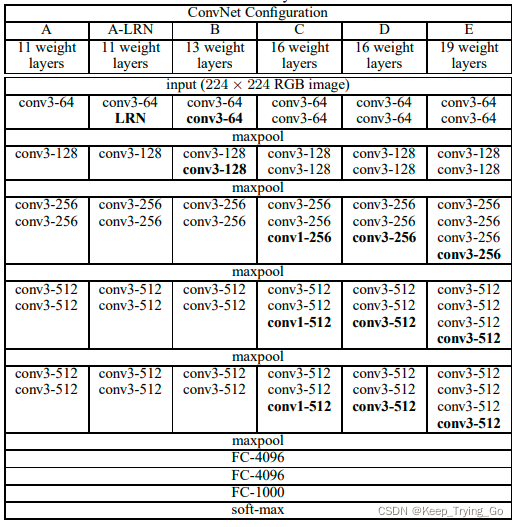

图1 VGG的网络结构

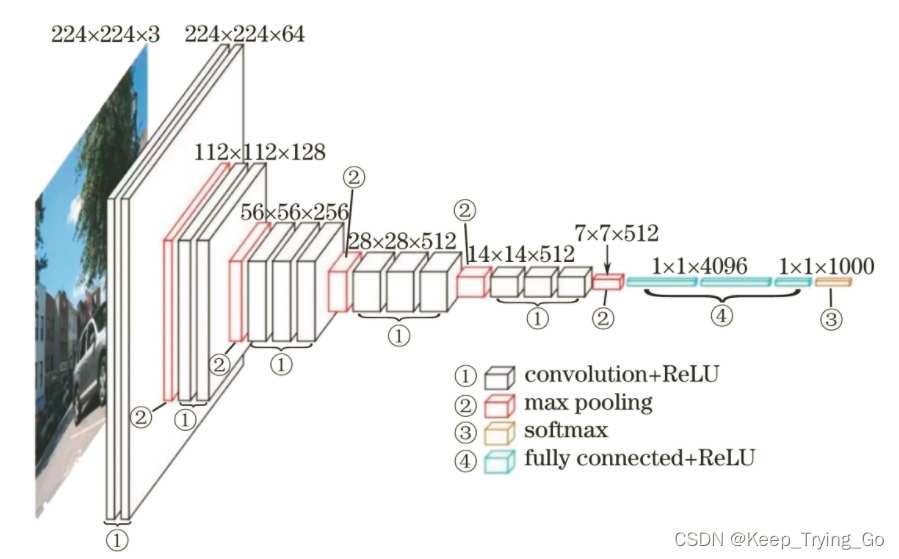

图2 VGG16的网络

VGG网络结构的理解,参考:https://blog.csdn.net/Keep_Trying_Go/article/details/123943751

Cifar10的vgg16网络pytorch实现,参考:https://www.jianshu.com/p/49013b2cbcb3

VGG16的pytorch实现:

1 import torch 2 from torch import nn 3 4 # 构建 VGGNet16 网络模型 5 class VGGNet16(nn.Module): 6 def __init__(self): 7 super(VGGNet16, self).__init__() 8 9 self.Conv1 = nn.Sequential( 10 # CIFAR10 数据集是彩色图 - RGB三通道, 所以输入通道为 3, 图片大小为 32*32 11 nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1, padding=1), 12 nn.BatchNorm2d(64), 13 # inplace-选择是否对上层传下来的tensor进行覆盖运算, 可以有效地节省内存/显存 14 nn.ReLU(inplace=True), 15 16 nn.Conv2d(in_channels=64, out_channels=64, kernel_size=3, stride=1, padding=1), 17 nn.BatchNorm2d(64), 18 nn.ReLU(inplace=True), 19 # 池化层 20 nn.MaxPool2d(kernel_size=2, stride=2) 21 ) 22 23 self.Conv2 = nn.Sequential( 24 nn.Conv2d(in_channels=64, out_channels=128, kernel_size=3, stride=1, padding=1), 25 nn.BatchNorm2d(128), 26 nn.ReLU(inplace=True), 27 28 nn.Conv2d(in_channels=128, out_channels=128, kernel_size=3, stride=1, padding=1), 29 nn.BatchNorm2d(128), 30 nn.ReLU(inplace=True), 31 32 nn.MaxPool2d(kernel_size=2, stride=2) 33 ) 34 35 self.Conv3 = nn.Sequential( 36 nn.Conv2d(in_channels=128, out_channels=256, kernel_size=3, stride=1, padding=1), 37 nn.BatchNorm2d(256), 38 nn.ReLU(inplace=True), 39 40 nn.Conv2d(in_channels=256, out_channels=256, kernel_size=3, stride=1, padding=1), 41 nn.BatchNorm2d(256), 42 nn.ReLU(inplace=True), 43 44 nn.Conv2d(in_channels=256, out_channels=256, kernel_size=3, stride=1, padding=1), 45 nn.BatchNorm2d(256), 46 nn.ReLU(inplace=True), 47 48 nn.MaxPool2d(kernel_size=2, stride=2) 49 ) 50 51 self.Conv4 = nn.Sequential( 52 nn.Conv2d(in_channels=256, out_channels=512, kernel_size=3, stride=1, padding=1), 53 nn.BatchNorm2d(512), 54 nn.ReLU(inplace=True), 55 56 nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1), 57 nn.BatchNorm2d(512), 58 nn.ReLU(inplace=True), 59 60 nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1), 61 nn.BatchNorm2d(512), 62 nn.ReLU(inplace=True), 63 64 nn.MaxPool2d(kernel_size=2, stride=2) 65 ) 66 67 self.Conv5 = nn.Sequential( 68 nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1), 69 nn.BatchNorm2d(512), 70 nn.ReLU(inplace=True), 71 72 nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1), 73 nn.BatchNorm2d(512), 74 nn.ReLU(inplace=True), 75 76 nn.Conv2d(in_channels=512, out_channels=512, kernel_size=3, stride=1, padding=1), 77 nn.BatchNorm2d(512), 78 nn.ReLU(inplace=True), 79 80 nn.MaxPool2d(kernel_size=2, stride=2) 81 ) 82 83 # 全连接层 84 self.fc = nn.Sequential( 85 86 nn.Linear(512, 256), 87 nn.ReLU(inplace=True), 88 # 使一半的神经元不起作用,防止参数量过大导致过拟合 89 nn.Dropout(0.5), 90 91 nn.Linear(256, 128), 92 nn.ReLU(inplace=True), 93 nn.Dropout(0.5), 94 95 nn.Linear(128, 10) 96 ) 97 98 def forward(self, x): 99 # 五个卷积层 100 x = self.Conv1(x) 101 x = self.Conv2(x) 102 x = self.Conv3(x) 103 x = self.Conv4(x) 104 x = self.Conv5(x) 105 106 # 数据平坦化处理,为接下来的全连接层做准备 107 x = x.view(-1, 512) 108 x = self.fc(x) 109 return x

classfyNet_train.py:

1 import torch 2 from torch.utils.data import DataLoader 3 from torch import nn, optim 4 from torchvision import datasets, transforms 5 from torchvision.transforms.functional import InterpolationMode 6 7 from matplotlib import pyplot as plt 8 9 10 import time 11 12 from Lenet5 import Lenet5_new 13 from Resnet18 import ResNet18,ResNet18_new 14 from AlexNet import AlexNet 15 from Vgg16 import VGGNet16 16 from Densenet import DenseNet121, DenseNet169, DenseNet201, DenseNet264 17 18 def main(): 19 20 print("Load datasets...") 21 22 # transforms.RandomHorizontalFlip(p=0.5)---以0.5的概率对图片做水平横向翻转 23 # transforms.ToTensor()---shape从(H,W,C)->(C,H,W), 每个像素点从(0-255)映射到(0-1):直接除以255 24 # transforms.Normalize---先将输入归一化到(0,1),像素点通过"(x-mean)/std",将每个元素分布到(-1,1) 25 transform_train = transforms.Compose([ 26 # transforms.Resize((224, 224), interpolation=InterpolationMode.BICUBIC), 27 transforms.RandomCrop(32, padding=4), # 先四周填充0,在吧图像随机裁剪成32*32 28 transforms.RandomHorizontalFlip(p=0.5), 29 transforms.ToTensor(), 30 transforms.Normalize((0.485, 0.456, 0.406), (0.229, 0.224, 0.225)) 31 ]) 32 33 transform_test = transforms.Compose([ 34 # transforms.Resize((224, 224), interpolation=InterpolationMode.BICUBIC), 35 transforms.RandomCrop(32, padding=4), # 先四周填充0,在吧图像随机裁剪成32*32 36 transforms.ToTensor(), 37 transforms.Normalize((0.485, 0.456, 0.406), (0.229, 0.224, 0.225)) 38 ]) 39 40 # 内置函数下载数据集 41 train_dataset = datasets.CIFAR10(root="./data/Cifar10/", train=True, 42 transform = transform_train, 43 download=True) 44 test_dataset = datasets.CIFAR10(root = "./data/Cifar10/", 45 train = False, 46 transform = transform_test, 47 download=True) 48 49 print(len(train_dataset), len(test_dataset)) 50 51 Batch_size = 64 52 train_loader = DataLoader(train_dataset, batch_size=Batch_size, shuffle = True, num_workers=4) 53 test_loader = DataLoader(test_dataset, batch_size = Batch_size, shuffle = False, num_workers=4) 54 55 # 设置CUDA 56 device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu") 57 58 # 初始化模型 59 # 直接更换模型就行,其他无需操作 60 # model = Lenet5_new().to(device) 61 # model = ResNet18().to(device) 62 # model = ResNet18_new().to(device) 63 model = VGGNet16().to(device) 64 65 # model = DenseNet121().to(device) 66 67 # model = AlexNet(num_classes=10, init_weights=True).to(device) 68 print("Resnet_new train...") 69 70 # 构造损失函数和优化器 71 criterion = nn.CrossEntropyLoss() # 多分类softmax构造损失 72 # opt = optim.SGD(model.parameters(), lr=0.01, momentum=0.8, weight_decay=0.001) 73 opt = optim.SGD(model.parameters(), lr=0.01, momentum=0.9, weight_decay=0.0005) 74 75 # 动态更新学习率 ------每隔step_size : lr = lr * gamma 76 schedule = optim.lr_scheduler.StepLR(opt, step_size=10, gamma=0.6, last_epoch=-1) 77 78 # 开始训练 79 print("Start Train...") 80 81 epochs = 100 82 83 loss_list = [] 84 train_acc_list =[] 85 test_acc_list = [] 86 epochs_list = [] 87 88 for epoch in range(0, epochs): 89 90 start = time.time() 91 92 model.train() 93 94 running_loss = 0.0 95 batch_num = 0 96 97 for i, (inputs, labels) in enumerate(train_loader): 98 99 inputs, labels = inputs.to(device), labels.to(device) 100 101 # 将数据送入模型训练 102 outputs = model(inputs) 103 # 计算损失 104 loss = criterion(outputs, labels).to(device) 105 106 # 重置梯度 107 opt.zero_grad() 108 # 计算梯度,反向传播 109 loss.backward() 110 # 根据反向传播的梯度值优化更新参数 111 opt.step() 112 113 # 100个batch的 loss 之和 114 running_loss += loss.item() 115 # loss_list.append(loss.item()) 116 batch_num+=1 117 118 119 epochs_list.append(epoch) 120 121 # 每一轮结束输出一下当前的学习率 lr 122 lr_1 = opt.param_groups[0]['lr'] 123 print("learn_rate:%.15f" % lr_1) 124 schedule.step() 125 126 end = time.time() 127 print('epoch = %d/100, batch_num = %d, loss = %.6f, time = %.3f' % (epoch+1, batch_num, running_loss/batch_num, end-start)) 128 running_loss=0.0 129 130 # 每个epoch训练结束,都进行一次测试验证 131 model.eval() 132 train_correct = 0.0 133 train_total = 0 134 135 test_correct = 0.0 136 test_total = 0 137 138 # 训练模式不需要反向传播更新梯度 139 with torch.no_grad(): 140 141 # print("=======================train=======================") 142 for inputs, labels in train_loader: 143 inputs, labels = inputs.to(device), labels.to(device) 144 outputs = model(inputs) 145 146 pred = outputs.argmax(dim=1) # 返回每一行中最大值元素索引 147 train_total += inputs.size(0) 148 train_correct += torch.eq(pred, labels).sum().item() 149 150 151 # print("=======================test=======================") 152 for inputs, labels in test_loader: 153 inputs, labels = inputs.to(device), labels.to(device) 154 outputs = model(inputs) 155 156 pred = outputs.argmax(dim=1) # 返回每一行中最大值元素索引 157 test_total += inputs.size(0) 158 test_correct += torch.eq(pred, labels).sum().item() 159 160 print("train_total = %d, Accuracy = %.5f %%, test_total= %d, Accuracy = %.5f %%" %(train_total, 100 * train_correct / train_total, test_total, 100 * test_correct / test_total)) 161 162 train_acc_list.append(100 * train_correct / train_total) 163 test_acc_list.append(100 * test_correct / test_total) 164 165 # print("Accuracy of the network on the 10000 test images:%.5f %%" % (100 * test_correct / test_total)) 166 # print("===============================================") 167 168 fig = plt.figure(figsize=(4, 4)) 169 170 plt.plot(epochs_list, train_acc_list, label='train_acc_list') 171 plt.plot(epochs_list, test_acc_list, label='test_acc_list') 172 plt.legend() 173 plt.title("train_test_acc") 174 plt.savefig('Vgg16_acc_epoch_{:04d}.png'.format(epochs)) 175 plt.close() 176 177 if __name__ == "__main__": 178 179 main()

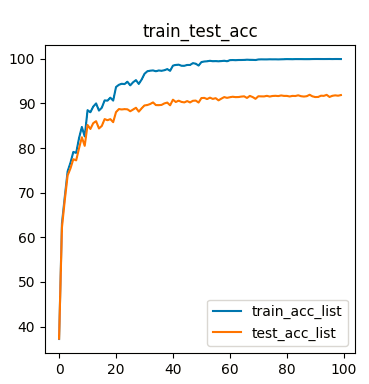

Loss和acc

1 torch.Size([64, 10]) 2 Load datasets... 3 Files already downloaded and verified 4 Files already downloaded and verified 5 50000 10000 6 Resnet_new train... 7 Start Train... 8 learn_rate:0.010000000000000 9 epoch = 1/100, batch_num = 782, loss = 1.732917, time = 19.383 10 train_total = 50000, Accuracy = 37.20000 %, test_total= 10000, Accuracy = 37.28000 % 11 learn_rate:0.010000000000000 12 epoch = 2/100, batch_num = 782, loss = 1.305845, time = 19.334 13 train_total = 50000, Accuracy = 63.11600 %, test_total= 10000, Accuracy = 62.17000 % 14 learn_rate:0.010000000000000 15 epoch = 3/100, batch_num = 782, loss = 1.062025, time = 18.995 16 train_total = 50000, Accuracy = 69.08400 %, test_total= 10000, Accuracy = 68.32000 % 17 learn_rate:0.010000000000000 18 epoch = 4/100, batch_num = 782, loss = 0.902800, time = 19.255 19 train_total = 50000, Accuracy = 74.78600 %, test_total= 10000, Accuracy = 73.85000 % 20 learn_rate:0.010000000000000 21 epoch = 5/100, batch_num = 782, loss = 0.798435, time = 19.302 22 train_total = 50000, Accuracy = 76.71200 %, test_total= 10000, Accuracy = 75.44000 % 23 learn_rate:0.010000000000000 24 epoch = 6/100, batch_num = 782, loss = 0.725354, time = 18.885 25 train_total = 50000, Accuracy = 79.13000 %, test_total= 10000, Accuracy = 77.50000 % 26 learn_rate:0.010000000000000 27 epoch = 7/100, batch_num = 782, loss = 0.670722, time = 19.167 28 train_total = 50000, Accuracy = 78.91000 %, test_total= 10000, Accuracy = 77.24000 % 29 learn_rate:0.010000000000000 30 epoch = 8/100, batch_num = 782, loss = 0.622110, time = 19.405 31 train_total = 50000, Accuracy = 82.21200 %, test_total= 10000, Accuracy = 79.93000 % 32 learn_rate:0.010000000000000 33 epoch = 9/100, batch_num = 782, loss = 0.582806, time = 19.697 34 train_total = 50000, Accuracy = 84.72800 %, test_total= 10000, Accuracy = 82.41000 % 35 learn_rate:0.010000000000000 36 epoch = 10/100, batch_num = 782, loss = 0.545543, time = 19.311 37 train_total = 50000, Accuracy = 82.59600 %, test_total= 10000, Accuracy = 80.49000 % 38 learn_rate:0.006000000000000 39 epoch = 11/100, batch_num = 782, loss = 0.445286, time = 19.681 40 train_total = 50000, Accuracy = 88.49000 %, test_total= 10000, Accuracy = 85.17000 % 41 learn_rate:0.006000000000000 42 epoch = 12/100, batch_num = 782, loss = 0.415665, time = 19.810 43 train_total = 50000, Accuracy = 88.02200 %, test_total= 10000, Accuracy = 84.28000 % 44 learn_rate:0.006000000000000 45 epoch = 13/100, batch_num = 782, loss = 0.393188, time = 19.475 46 train_total = 50000, Accuracy = 89.29200 %, test_total= 10000, Accuracy = 85.58000 % 47 learn_rate:0.006000000000000 48 epoch = 14/100, batch_num = 782, loss = 0.384272, time = 19.397 49 train_total = 50000, Accuracy = 90.00400 %, test_total= 10000, Accuracy = 86.02000 % 50 learn_rate:0.006000000000000 51 epoch = 15/100, batch_num = 782, loss = 0.367519, time = 19.404 52 train_total = 50000, Accuracy = 88.39600 %, test_total= 10000, Accuracy = 84.39000 % 53 learn_rate:0.006000000000000 54 epoch = 16/100, batch_num = 782, loss = 0.353370, time = 19.367 55 train_total = 50000, Accuracy = 89.02800 %, test_total= 10000, Accuracy = 84.95000 % 56 learn_rate:0.006000000000000 57 epoch = 17/100, batch_num = 782, loss = 0.337196, time = 19.267 58 train_total = 50000, Accuracy = 90.68200 %, test_total= 10000, Accuracy = 86.49000 % 59 learn_rate:0.006000000000000 60 epoch = 18/100, batch_num = 782, loss = 0.328400, time = 19.162 61 train_total = 50000, Accuracy = 90.61800 %, test_total= 10000, Accuracy = 86.24000 % 62 learn_rate:0.006000000000000 63 epoch = 19/100, batch_num = 782, loss = 0.321378, time = 19.516 64 train_total = 50000, Accuracy = 91.28600 %, test_total= 10000, Accuracy = 86.48000 % 65 learn_rate:0.006000000000000 66 epoch = 20/100, batch_num = 782, loss = 0.308869, time = 19.430 67 train_total = 50000, Accuracy = 90.63200 %, test_total= 10000, Accuracy = 85.80000 % 68 learn_rate:0.003600000000000 69 epoch = 21/100, batch_num = 782, loss = 0.247416, time = 19.520 70 train_total = 50000, Accuracy = 93.67400 %, test_total= 10000, Accuracy = 88.06000 % 71 learn_rate:0.003600000000000 72 epoch = 22/100, batch_num = 782, loss = 0.229310, time = 19.652 73 train_total = 50000, Accuracy = 94.17400 %, test_total= 10000, Accuracy = 88.73000 % 74 learn_rate:0.003600000000000 75 epoch = 23/100, batch_num = 782, loss = 0.213272, time = 19.489 76 train_total = 50000, Accuracy = 94.40600 %, test_total= 10000, Accuracy = 88.65000 % 77 learn_rate:0.003600000000000 78 epoch = 24/100, batch_num = 782, loss = 0.211405, time = 19.379 79 train_total = 50000, Accuracy = 94.33600 %, test_total= 10000, Accuracy = 88.72000 % 80 learn_rate:0.003600000000000 81 epoch = 25/100, batch_num = 782, loss = 0.205438, time = 19.634 82 train_total = 50000, Accuracy = 94.86200 %, test_total= 10000, Accuracy = 88.68000 % 83 learn_rate:0.003600000000000 84 epoch = 26/100, batch_num = 782, loss = 0.204082, time = 19.582 85 train_total = 50000, Accuracy = 94.04800 %, test_total= 10000, Accuracy = 88.25000 % 86 learn_rate:0.003600000000000 87 epoch = 27/100, batch_num = 782, loss = 0.201109, time = 19.710 88 train_total = 50000, Accuracy = 94.74800 %, test_total= 10000, Accuracy = 88.63000 % 89 learn_rate:0.003600000000000 90 epoch = 28/100, batch_num = 782, loss = 0.193234, time = 20.506 91 train_total = 50000, Accuracy = 95.22600 %, test_total= 10000, Accuracy = 89.03000 % 92 learn_rate:0.003600000000000 93 epoch = 29/100, batch_num = 782, loss = 0.186218, time = 20.077 94 train_total = 50000, Accuracy = 94.37400 %, test_total= 10000, Accuracy = 88.16000 % 95 learn_rate:0.003600000000000 96 epoch = 30/100, batch_num = 782, loss = 0.184991, time = 19.689 97 train_total = 50000, Accuracy = 95.35400 %, test_total= 10000, Accuracy = 88.90000 % 98 learn_rate:0.002160000000000 99 epoch = 31/100, batch_num = 782, loss = 0.136958, time = 19.751 100 train_total = 50000, Accuracy = 96.63600 %, test_total= 10000, Accuracy = 89.55000 % 101 learn_rate:0.002160000000000 102 epoch = 32/100, batch_num = 782, loss = 0.125427, time = 19.617 103 train_total = 50000, Accuracy = 97.20600 %, test_total= 10000, Accuracy = 89.64000 % 104 learn_rate:0.002160000000000 105 epoch = 33/100, batch_num = 782, loss = 0.120248, time = 19.401 106 train_total = 50000, Accuracy = 97.33800 %, test_total= 10000, Accuracy = 89.87000 % 107 learn_rate:0.002160000000000 108 epoch = 34/100, batch_num = 782, loss = 0.115083, time = 19.397 109 train_total = 50000, Accuracy = 97.39800 %, test_total= 10000, Accuracy = 90.23000 % 110 learn_rate:0.002160000000000 111 epoch = 35/100, batch_num = 782, loss = 0.114687, time = 19.366 112 train_total = 50000, Accuracy = 97.21200 %, test_total= 10000, Accuracy = 89.63000 % 113 learn_rate:0.002160000000000 114 epoch = 36/100, batch_num = 782, loss = 0.109333, time = 19.510 115 train_total = 50000, Accuracy = 97.38600 %, test_total= 10000, Accuracy = 89.61000 % 116 learn_rate:0.002160000000000 117 epoch = 37/100, batch_num = 782, loss = 0.108010, time = 19.427 118 train_total = 50000, Accuracy = 97.31600 %, test_total= 10000, Accuracy = 89.67000 % 119 learn_rate:0.002160000000000 120 epoch = 38/100, batch_num = 782, loss = 0.107088, time = 19.471 121 train_total = 50000, Accuracy = 97.44400 %, test_total= 10000, Accuracy = 90.01000 % 122 learn_rate:0.002160000000000 123 epoch = 39/100, batch_num = 782, loss = 0.104569, time = 19.626 124 train_total = 50000, Accuracy = 97.69600 %, test_total= 10000, Accuracy = 90.19000 % 125 learn_rate:0.002160000000000 126 epoch = 40/100, batch_num = 782, loss = 0.101726, time = 19.698 127 train_total = 50000, Accuracy = 97.30400 %, test_total= 10000, Accuracy = 89.58000 % 128 learn_rate:0.001296000000000 129 epoch = 41/100, batch_num = 782, loss = 0.075249, time = 19.575 130 train_total = 50000, Accuracy = 98.47800 %, test_total= 10000, Accuracy = 90.84000 % 131 learn_rate:0.001296000000000 132 epoch = 42/100, batch_num = 782, loss = 0.068694, time = 19.723 133 train_total = 50000, Accuracy = 98.62800 %, test_total= 10000, Accuracy = 90.32000 % 134 learn_rate:0.001296000000000 135 epoch = 43/100, batch_num = 782, loss = 0.067876, time = 19.391 136 train_total = 50000, Accuracy = 98.68800 %, test_total= 10000, Accuracy = 90.62000 % 137 learn_rate:0.001296000000000 138 epoch = 44/100, batch_num = 782, loss = 0.063653, time = 19.400 139 train_total = 50000, Accuracy = 98.44400 %, test_total= 10000, Accuracy = 90.34000 % 140 learn_rate:0.001296000000000 141 epoch = 45/100, batch_num = 782, loss = 0.060083, time = 19.632 142 train_total = 50000, Accuracy = 98.44000 %, test_total= 10000, Accuracy = 90.23000 % 143 learn_rate:0.001296000000000 144 epoch = 46/100, batch_num = 782, loss = 0.059634, time = 19.318 145 train_total = 50000, Accuracy = 98.62000 %, test_total= 10000, Accuracy = 90.53000 % 146 learn_rate:0.001296000000000 147 epoch = 47/100, batch_num = 782, loss = 0.058439, time = 19.398 148 train_total = 50000, Accuracy = 98.62200 %, test_total= 10000, Accuracy = 90.22000 % 149 learn_rate:0.001296000000000 150 epoch = 48/100, batch_num = 782, loss = 0.059515, time = 19.400 151 train_total = 50000, Accuracy = 99.02800 %, test_total= 10000, Accuracy = 90.59000 % 152 learn_rate:0.001296000000000 153 epoch = 49/100, batch_num = 782, loss = 0.052101, time = 19.299 154 train_total = 50000, Accuracy = 98.90400 %, test_total= 10000, Accuracy = 90.64000 % 155 learn_rate:0.001296000000000 156 epoch = 50/100, batch_num = 782, loss = 0.053953, time = 19.545 157 train_total = 50000, Accuracy = 98.48400 %, test_total= 10000, Accuracy = 90.20000 % 158 learn_rate:0.000777600000000 159 epoch = 51/100, batch_num = 782, loss = 0.038023, time = 19.497 160 train_total = 50000, Accuracy = 99.27200 %, test_total= 10000, Accuracy = 91.20000 % 161 learn_rate:0.000777600000000 162 epoch = 52/100, batch_num = 782, loss = 0.034283, time = 19.596 163 train_total = 50000, Accuracy = 99.39800 %, test_total= 10000, Accuracy = 91.24000 % 164 learn_rate:0.000777600000000 165 epoch = 53/100, batch_num = 782, loss = 0.033378, time = 19.633 166 train_total = 50000, Accuracy = 99.44400 %, test_total= 10000, Accuracy = 90.98000 % 167 learn_rate:0.000777600000000 168 epoch = 54/100, batch_num = 782, loss = 0.031124, time = 19.618 169 train_total = 50000, Accuracy = 99.55800 %, test_total= 10000, Accuracy = 91.30000 % 170 learn_rate:0.000777600000000 171 epoch = 55/100, batch_num = 782, loss = 0.029543, time = 19.532 172 train_total = 50000, Accuracy = 99.45600 %, test_total= 10000, Accuracy = 91.01000 % 173 learn_rate:0.000777600000000 174 epoch = 56/100, batch_num = 782, loss = 0.033662, time = 19.586 175 train_total = 50000, Accuracy = 99.48400 %, test_total= 10000, Accuracy = 91.18000 % 176 learn_rate:0.000777600000000 177 epoch = 57/100, batch_num = 782, loss = 0.031087, time = 19.479 178 train_total = 50000, Accuracy = 99.44000 %, test_total= 10000, Accuracy = 90.69000 % 179 learn_rate:0.000777600000000 180 epoch = 58/100, batch_num = 782, loss = 0.028195, time = 19.431 181 train_total = 50000, Accuracy = 99.49200 %, test_total= 10000, Accuracy = 91.07000 % 182 learn_rate:0.000777600000000 183 epoch = 59/100, batch_num = 782, loss = 0.027279, time = 19.645 184 train_total = 50000, Accuracy = 99.56800 %, test_total= 10000, Accuracy = 91.42000 % 185 learn_rate:0.000777600000000 186 epoch = 60/100, batch_num = 782, loss = 0.029286, time = 19.497 187 train_total = 50000, Accuracy = 99.45200 %, test_total= 10000, Accuracy = 91.25000 % 188 learn_rate:0.000466560000000 189 epoch = 61/100, batch_num = 782, loss = 0.022495, time = 19.473 190 train_total = 50000, Accuracy = 99.69200 %, test_total= 10000, Accuracy = 91.39000 % 191 learn_rate:0.000466560000000 192 epoch = 62/100, batch_num = 782, loss = 0.018659, time = 19.638 193 train_total = 50000, Accuracy = 99.72600 %, test_total= 10000, Accuracy = 91.50000 % 194 learn_rate:0.000466560000000 195 epoch = 63/100, batch_num = 782, loss = 0.018382, time = 19.400 196 train_total = 50000, Accuracy = 99.68600 %, test_total= 10000, Accuracy = 91.41000 % 197 learn_rate:0.000466560000000 198 epoch = 64/100, batch_num = 782, loss = 0.017849, time = 19.423 199 train_total = 50000, Accuracy = 99.73200 %, test_total= 10000, Accuracy = 91.43000 % 200 learn_rate:0.000466560000000 201 epoch = 65/100, batch_num = 782, loss = 0.017944, time = 19.490 202 train_total = 50000, Accuracy = 99.73400 %, test_total= 10000, Accuracy = 91.53000 % 203 learn_rate:0.000466560000000 204 epoch = 66/100, batch_num = 782, loss = 0.015910, time = 19.577 205 train_total = 50000, Accuracy = 99.75400 %, test_total= 10000, Accuracy = 91.59000 % 206 learn_rate:0.000466560000000 207 epoch = 67/100, batch_num = 782, loss = 0.016576, time = 19.553 208 train_total = 50000, Accuracy = 99.80400 %, test_total= 10000, Accuracy = 91.23000 % 209 learn_rate:0.000466560000000 210 epoch = 68/100, batch_num = 782, loss = 0.017278, time = 19.376 211 train_total = 50000, Accuracy = 99.77600 %, test_total= 10000, Accuracy = 91.68000 % 212 learn_rate:0.000466560000000 213 epoch = 69/100, batch_num = 782, loss = 0.014584, time = 19.391 214 train_total = 50000, Accuracy = 99.76400 %, test_total= 10000, Accuracy = 91.43000 % 215 learn_rate:0.000466560000000 216 epoch = 70/100, batch_num = 782, loss = 0.014346, time = 19.376 217 train_total = 50000, Accuracy = 99.73000 %, test_total= 10000, Accuracy = 91.03000 % 218 learn_rate:0.000279936000000 219 epoch = 71/100, batch_num = 782, loss = 0.013272, time = 19.388 220 train_total = 50000, Accuracy = 99.85400 %, test_total= 10000, Accuracy = 91.61000 % 221 learn_rate:0.000279936000000 222 epoch = 72/100, batch_num = 782, loss = 0.013748, time = 19.495 223 train_total = 50000, Accuracy = 99.87600 %, test_total= 10000, Accuracy = 91.58000 % 224 learn_rate:0.000279936000000 225 epoch = 73/100, batch_num = 782, loss = 0.012674, time = 19.588 226 train_total = 50000, Accuracy = 99.86800 %, test_total= 10000, Accuracy = 91.57000 % 227 learn_rate:0.000279936000000 228 epoch = 74/100, batch_num = 782, loss = 0.010842, time = 19.790 229 train_total = 50000, Accuracy = 99.86800 %, test_total= 10000, Accuracy = 91.70000 % 230 learn_rate:0.000279936000000 231 epoch = 75/100, batch_num = 782, loss = 0.010044, time = 19.389 232 train_total = 50000, Accuracy = 99.89000 %, test_total= 10000, Accuracy = 91.54000 % 233 learn_rate:0.000279936000000 234 epoch = 76/100, batch_num = 782, loss = 0.011285, time = 19.551 235 train_total = 50000, Accuracy = 99.87800 %, test_total= 10000, Accuracy = 91.67000 % 236 learn_rate:0.000279936000000 237 epoch = 77/100, batch_num = 782, loss = 0.010124, time = 19.554 238 train_total = 50000, Accuracy = 99.88400 %, test_total= 10000, Accuracy = 91.71000 % 239 learn_rate:0.000279936000000 240 epoch = 78/100, batch_num = 782, loss = 0.011759, time = 19.421 241 train_total = 50000, Accuracy = 99.86800 %, test_total= 10000, Accuracy = 91.65000 % 242 learn_rate:0.000279936000000 243 epoch = 79/100, batch_num = 782, loss = 0.009691, time = 19.387 244 train_total = 50000, Accuracy = 99.88800 %, test_total= 10000, Accuracy = 91.79000 % 245 learn_rate:0.000279936000000 246 epoch = 80/100, batch_num = 782, loss = 0.009218, time = 19.331 247 train_total = 50000, Accuracy = 99.90200 %, test_total= 10000, Accuracy = 91.69000 % 248 learn_rate:0.000167961600000 249 epoch = 81/100, batch_num = 782, loss = 0.008903, time = 19.342 250 train_total = 50000, Accuracy = 99.93000 %, test_total= 10000, Accuracy = 91.69000 % 251 learn_rate:0.000167961600000 252 epoch = 82/100, batch_num = 782, loss = 0.007217, time = 19.370 253 train_total = 50000, Accuracy = 99.91800 %, test_total= 10000, Accuracy = 91.56000 % 254 learn_rate:0.000167961600000 255 epoch = 83/100, batch_num = 782, loss = 0.009057, time = 19.660 256 train_total = 50000, Accuracy = 99.90400 %, test_total= 10000, Accuracy = 91.69000 % 257 learn_rate:0.000167961600000 258 epoch = 84/100, batch_num = 782, loss = 0.008270, time = 19.449 259 train_total = 50000, Accuracy = 99.93200 %, test_total= 10000, Accuracy = 91.66000 % 260 learn_rate:0.000167961600000 261 epoch = 85/100, batch_num = 782, loss = 0.008173, time = 19.083 262 train_total = 50000, Accuracy = 99.93000 %, test_total= 10000, Accuracy = 91.82000 % 263 learn_rate:0.000167961600000 264 epoch = 86/100, batch_num = 782, loss = 0.007313, time = 19.442 265 train_total = 50000, Accuracy = 99.93000 %, test_total= 10000, Accuracy = 91.62000 % 266 learn_rate:0.000167961600000 267 epoch = 87/100, batch_num = 782, loss = 0.008067, time = 19.381 268 train_total = 50000, Accuracy = 99.91800 %, test_total= 10000, Accuracy = 91.54000 % 269 learn_rate:0.000167961600000 270 epoch = 88/100, batch_num = 782, loss = 0.005623, time = 19.330 271 train_total = 50000, Accuracy = 99.91800 %, test_total= 10000, Accuracy = 91.62000 % 272 learn_rate:0.000167961600000 273 epoch = 89/100, batch_num = 782, loss = 0.006794, time = 18.877 274 train_total = 50000, Accuracy = 99.92200 %, test_total= 10000, Accuracy = 91.94000 % 275 learn_rate:0.000167961600000 276 epoch = 90/100, batch_num = 782, loss = 0.006518, time = 19.140 277 train_total = 50000, Accuracy = 99.94600 %, test_total= 10000, Accuracy = 91.59000 % 278 learn_rate:0.000100776960000 279 epoch = 91/100, batch_num = 782, loss = 0.006348, time = 19.888 280 train_total = 50000, Accuracy = 99.95600 %, test_total= 10000, Accuracy = 91.43000 % 281 learn_rate:0.000100776960000 282 epoch = 92/100, batch_num = 782, loss = 0.005723, time = 19.920 283 train_total = 50000, Accuracy = 99.95600 %, test_total= 10000, Accuracy = 91.45000 % 284 learn_rate:0.000100776960000 285 epoch = 93/100, batch_num = 782, loss = 0.006090, time = 19.181 286 train_total = 50000, Accuracy = 99.95000 %, test_total= 10000, Accuracy = 91.73000 % 287 learn_rate:0.000100776960000 288 epoch = 94/100, batch_num = 782, loss = 0.005721, time = 19.542 289 train_total = 50000, Accuracy = 99.94800 %, test_total= 10000, Accuracy = 91.70000 % 290 learn_rate:0.000100776960000 291 epoch = 95/100, batch_num = 782, loss = 0.005370, time = 19.360 292 train_total = 50000, Accuracy = 99.95200 %, test_total= 10000, Accuracy = 91.92000 % 293 learn_rate:0.000100776960000 294 epoch = 96/100, batch_num = 782, loss = 0.005382, time = 19.227 295 train_total = 50000, Accuracy = 99.95800 %, test_total= 10000, Accuracy = 91.45000 % 296 learn_rate:0.000100776960000 297 epoch = 97/100, batch_num = 782, loss = 0.005764, time = 19.219 298 train_total = 50000, Accuracy = 99.94200 %, test_total= 10000, Accuracy = 91.71000 % 299 learn_rate:0.000100776960000 300 epoch = 98/100, batch_num = 782, loss = 0.005558, time = 19.063 301 train_total = 50000, Accuracy = 99.95600 %, test_total= 10000, Accuracy = 91.81000 % 302 learn_rate:0.000100776960000 303 epoch = 99/100, batch_num = 782, loss = 0.005447, time = 19.151 304 train_total = 50000, Accuracy = 99.95400 %, test_total= 10000, Accuracy = 91.73000 % 305 learn_rate:0.000100776960000 306 epoch = 100/100, batch_num = 782, loss = 0.005246, time = 19.358 307 train_total = 50000, Accuracy = 99.94800 %, test_total= 10000, Accuracy = 91.87000 %

图3 vgg-16_epoch_100_acc