k8s-(maser节点api-server、scheduler、controller-manager.sh)

1、在maser执行、生成认证文件

[root@linux-node1 k8s-cert]# cat k8s-cert.sh cat > ca-config.json <<EOF { "signing": { "default": { "expiry": "87600h" }, "profiles": { "kubernetes": { "expiry": "87600h", "usages": [ "signing", "key encipherment", "server auth", "client auth" ] } } } } EOF cat > ca-csr.json <<EOF { "CN": "kubernetes", "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "Beijing", "ST": "Beijing", "O": "k8s", "OU": "System" } ] } EOF cfssl gencert -initca ca-csr.json | cfssljson -bare ca - #----------------------- cat > server-csr.json <<EOF { "CN": "kubernetes", "hosts": [ "10.0.0.1", "127.0.0.1", "192.168.56.11", "192.168.56.14", "192.168.56.17", "192.168.56.15", "192.168.56.16", "kubernetes", "kubernetes.default", "kubernetes.default.svc", "kubernetes.default.svc.cluster", "kubernetes.default.svc.cluster.local" ], "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "BeiJing", "ST": "BeiJing", "O": "k8s", "OU": "System" } ] } EOF cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server #----------------------- cat > admin-csr.json <<EOF { "CN": "admin", "hosts": [], "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "BeiJing", "ST": "BeiJing", "O": "system:masters", "OU": "System" } ] } EOF cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin #----------------------- cat > kube-proxy-csr.json <<EOF { "CN": "system:kube-proxy", "hosts": [], "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "BeiJing", "ST": "BeiJing", "O": "k8s", "OU": "System" } ] } EOF cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

server-csr.json中host ip地址

"192.168.56.11", master01

"192.168.56.14", master02

"192.168.56.17", lb vip

"192.168.56.15", lb1

"192.168.56.16", lb2

[root@linux-node1 k8s]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p [root@linux-node1 k8s]# mkdir k8s-cert [root@linux-node1 k8s]# cd k8s-cert [root@linux-node1 k8s-cert]# pwd /root/k8s/k8s-cert #k8s-cert.sh中注意host地址,如果少加直接重新生成,重启就可以 [root@linux-node1 k8s-cert]# sh k8s-cert.sh [root@linux-node1 k8s-cert]# cp ca*pem server*pem /opt/kubernetes/ssl/

2、创建token.csv认证文件

# 创建 TLS Bootstrapping Token #BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ') BOOTSTRAP_TOKEN=0fb61c46f8991b718eb38d27b605b008 cat > token.csv <<EOF ${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap" EOF cat /opt/kubernetes/cfg/token.csv 0fb61c46f8991b718eb38d27b605b008,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

3、拷贝kubernetes执行命令

[root@linux-node1 k8s]# pwd /root/k8s [root@linux-node1 k8s]# tar xf kubernetes-server-linux-amd64.tar.gz [root@linux-node1 k8s]# cd kubernetes/server/bin/ [root@linux-node1 k8s]# scp kube-apiserver kube-controller-manager kube-scheduler kube-controller-manager kubectl /opt/kubernetes/bin/

4、启动api-server

[root@linux-node1 master]# cat apiserver.sh #!/bin/bash MASTER_ADDRESS=$1 ETCD_SERVERS=$2 cat <<EOF >/opt/kubernetes/cfg/kube-apiserver KUBE_APISERVER_OPTS="--logtostderr=true \\ --v=4 \\ --etcd-servers=${ETCD_SERVERS} \\ --bind-address=${MASTER_ADDRESS} \\ --secure-port=6443 \\ --advertise-address=${MASTER_ADDRESS} \\ --allow-privileged=true \\ --service-cluster-ip-range=10.0.0.0/24 \\ --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\ --authorization-mode=RBAC,Node \\ --kubelet-https=true \\ --enable-bootstrap-token-auth \\ --token-auth-file=/opt/kubernetes/cfg/token.csv \\ --service-node-port-range=30000-50000 \\ --tls-cert-file=/opt/kubernetes/ssl/server.pem \\ --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\ --client-ca-file=/opt/kubernetes/ssl/ca.pem \\ --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\ --etcd-cafile=/opt/etcd/ssl/ca.pem \\ --etcd-certfile=/opt/etcd/ssl/server.pem \\ --etcd-keyfile=/opt/etcd/ssl/server-key.pem" EOF cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service [Unit] Description=Kubernetes API Server Documentation=https://github.com/kubernetes/kubernetes [Service] EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS Restart=on-failure [Install] WantedBy=multi-user.target EOF systemctl daemon-reload systemctl enable kube-apiserver systemctl restart kube-apiserver

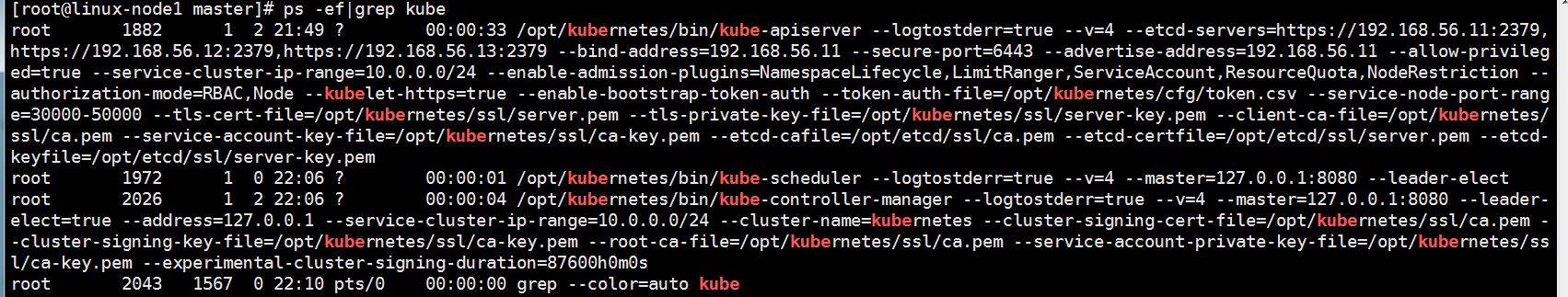

[root@linux-node1 master]# pwd /root/k8s/master [root@linux-node1 master]# sh apiserver.sh 192.168.56.11 https://192.168.56.11:2379,https://192.168.56.12:2379,https://192.168.56.13:2379 #检查是否正常 [root@linux-node1 master]# ps -ef|grep api root 1882 1 3 21:49 ? 00:00:23 /opt/kubernetes/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.56.11:2379,https://192.168.56.12:2379,https://192.168.56.13:2379 --bind-address=192.168.56.11 --secure-port=6443 --advertise-address=192.168.56.11 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --kubelet-https=true --enable-bootstrap-token-auth --token-auth-file=/opt/kubernetes/cfg/token.csv --service-node-port-range=30000-50000 --tls-cert-file=/opt/kubernetes/ssl/server.pem --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem --client-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem root 1920 1567 0 22:01 pts/0 00:00:00 grep --color=auto api

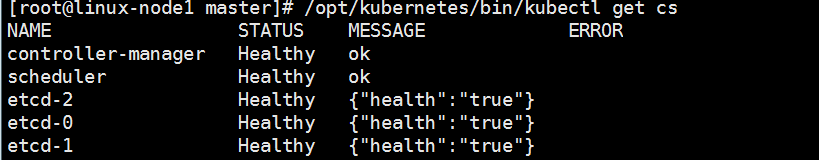

5、启动scheduler.sh、controller-manager.sh

[root@linux-node1 master]# cat scheduler.sh #!/bin/bash MASTER_ADDRESS=$1 cat <<EOF >/opt/kubernetes/cfg/kube-scheduler KUBE_SCHEDULER_OPTS="--logtostderr=true \\ --v=4 \\ --master=${MASTER_ADDRESS}:8080 \\ --leader-elect" EOF cat <<EOF >/usr/lib/systemd/system/kube-scheduler.service [Unit] Description=Kubernetes Scheduler Documentation=https://github.com/kubernetes/kubernetes [Service] EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS Restart=on-failure [Install] WantedBy=multi-user.target EOF systemctl daemon-reload systemctl enable kube-scheduler systemctl restart kube-scheduler

[root@linux-node1 master]# cat controller-manager.sh #!/bin/bash MASTER_ADDRESS=$1 cat <<EOF >/opt/kubernetes/cfg/kube-controller-manager KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\ --v=4 \\ --master=${MASTER_ADDRESS}:8080 \\ --leader-elect=true \\ --address=127.0.0.1 \\ --service-cluster-ip-range=10.0.0.0/24 \\ --cluster-name=kubernetes \\ --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\ --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\ --root-ca-file=/opt/kubernetes/ssl/ca.pem \\ --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\ --experimental-cluster-signing-duration=87600h0m0s" EOF cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service [Unit] Description=Kubernetes Controller Manager Documentation=https://github.com/kubernetes/kubernetes [Service] EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS Restart=on-failure [Install] WantedBy=multi-user.target EOF systemctl daemon-reload systemctl enable kube-controller-manager systemctl restart kube-controller-manager

sh scheduler.sh 127.0.0.1

sh controller-manager.sh 127.0.0.1