3、自创建数据集:基于点击率预测

1、如何制作自己的图数据

import warnings

warnings.filterwarnings("ignore")

import torch

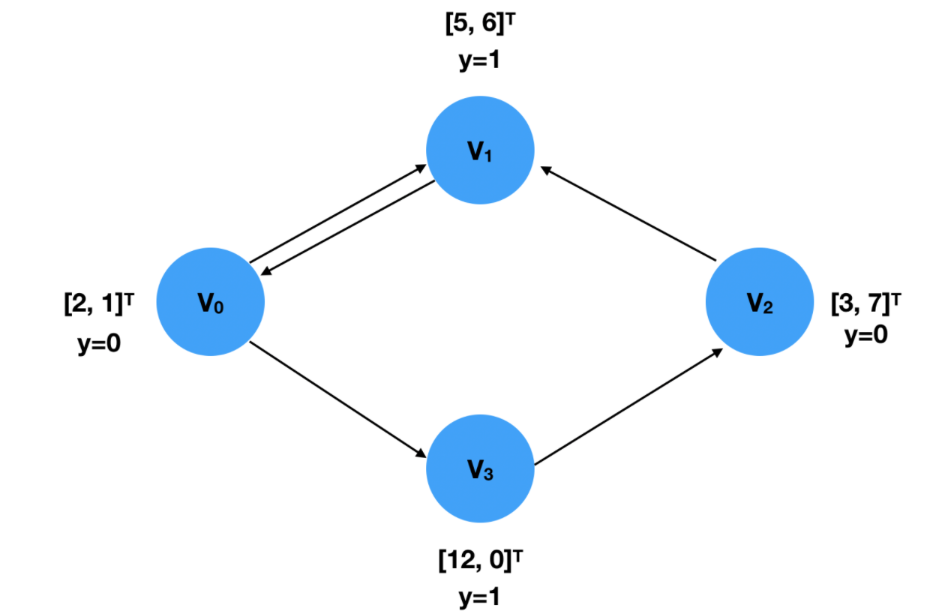

创建一个图,信息如下:

x是每个点的输入特征,y是每个点的标签

x = torch.tensor([[2,1], [5,6], [3,7], [12,0]], dtype=torch.float)

y = torch.tensor([0, 1, 0, 1], dtype=torch.float)

edge_index = torch.tensor([[0, 1, 2, 0, 3],#起始点

[1, 0, 1, 3, 2]], dtype=torch.long)#终止点

边的顺序定义无所谓的,上下两种是一样的

edge_index = torch.tensor([[0, 2, 1, 0, 3],

[3, 1, 0, 1, 2]], dtype=torch.long)

创建torch_geometric中的图

from torch_geometric.data import Data

x = torch.tensor([[2,1], [5,6], [3,7], [12,0]], dtype=torch.float)

y = torch.tensor([0, 1, 0, 1], dtype=torch.float)

edge_index = torch.tensor([[0, 2, 1, 0, 3],

[3, 1, 0, 1, 2]], dtype=torch.long)

data = Data(x=x, y=y, edge_index=edge_index)

data

Data(x=[4, 2], edge_index=[2, 5], y=[4])

2、故事是这样的

- 在很久很久以前,有一群哥们在淘宝一顿逛,最后可能买了一些商品

- yoochoose-clicks:表示用户的浏览行为,其中一个session_id就表示一次登录都浏览了啥东西

- item_id就是他所浏览的商品,其中yoochoose-buys描述了他最终是否购会买点啥呢,也就是咱们的标签

from sklearn.preprocessing import LabelEncoder

import pandas as pd

df = pd.read_csv('yoochoose-clicks.dat', header=None)

df.columns=['session_id','timestamp','item_id','category']

buy_df = pd.read_csv('yoochoose-buys.dat', header=None)

buy_df.columns=['session_id','timestamp','item_id','price','quantity']

item_encoder = LabelEncoder()

df['item_id'] = item_encoder.fit_transform(df.item_id)

df.head()

| session_id | timestamp | item_id | category | |

|---|---|---|---|---|

| 0 | 1 | 2014-04-07T10:51:09.277Z | 2053 | 0 |

| 1 | 1 | 2014-04-07T10:54:09.868Z | 2052 | 0 |

| 2 | 1 | 2014-04-07T10:54:46.998Z | 2054 | 0 |

| 3 | 1 | 2014-04-07T10:57:00.306Z | 9876 | 0 |

| 4 | 2 | 2014-04-07T13:56:37.614Z | 19448 | 0 |

import numpy as np

#数据有点多,咱们只选择其中一小部分来建模

sampled_session_id = np.random.choice(df.session_id.unique(), 100000, replace=False)

df = df.loc[df.session_id.isin(sampled_session_id)]

df.nunique()

session_id 100000

timestamp 357912

item_id 20243

category 117

dtype: int64

把标签也拿到手

df['label'] = df.session_id.isin(buy_df.session_id)

df.head()

| session_id | timestamp | item_id | category | label | |

|---|---|---|---|---|---|

| 316 | 89 | 2014-04-07T14:12:35.665Z | 6240 | 0 | False |

| 317 | 89 | 2014-04-07T14:12:51.832Z | 2230 | 0 | False |

| 1121 | 408 | 2014-04-02T11:39:52.556Z | 12239 | 0 | True |

| 1122 | 408 | 2014-04-02T11:39:59.933Z | 12239 | 0 | True |

| 1362 | 459 | 2014-04-03T17:32:50.791Z | 26433 | 0 | False |

3、接下来我们制作数据集

- 咱们把每一个session_id都当作一个图,每一个图具有多个点和一个标签

- 其中每个图中的点就是其item_id,特征咱们暂且用其id来表示,之后会做embedding

数据集制作流程

- 1.首先遍历数据中每一组session_id,目的是将其制作成(from torch_geometric.data import Data)格式

- 2.对每一组session_id中的所有item_id进行编码(例如15453,3651,15452)就按照数值大小编码成(2,0,1)

- 3.这样编码的目的是制作edge_index,因为在edge_index中我们需要从0,1,2,3.。。开始

- 4.点的特征就由其ID组成,edge_index是这样,因为咱们浏览的过程中是有顺序的比如(0,0,2,1)

- 5.所以边就是0->0,0->2,2->1这样的,对应的索引就为target_nodes: [0 2 1],source_nodes: [0 0 2]

- 6.最后转换格式data = Data(x=x, edge_index=edge_index, y=y)

- 7.最后将数据集保存下来(以后就不用重复处理了)

这部分代码就把中间过程打印出来,方便同学们理解

from torch_geometric.data import InMemoryDataset

from tqdm import tqdm

df_test = df[:100]

grouped = df_test.groupby('session_id')

i= 0

for session_id, group in tqdm(grouped):

i= i+ 1

print('session_id:',session_id)

sess_item_id = LabelEncoder().fit_transform(group.item_id) #6240和2230转换成1,0

print('sess_item_id:',sess_item_id)

group = group.reset_index(drop=True)

group['sess_item_id'] = sess_item_id

print('group:',group)

#node_features就是item_id

node_features = group.loc[group.session_id==session_id,['sess_item_id','item_id']].sort_values('sess_item_id').item_id.drop_duplicates().values

node_features = torch.LongTensor(node_features).unsqueeze(1)

print('node_features:',node_features)

target_nodes = group.sess_item_id.values[1:] #除了第1个

source_nodes = group.sess_item_id.values[:-1]#除了最后波1个

print('target_nodes:',target_nodes)

print('source_nodes:',source_nodes)

edge_index = torch.tensor([source_nodes, target_nodes], dtype=torch.long)

x = node_features

print("x",x)

y = torch.FloatTensor([group.label.values[0]])

print("y",y)

data = Data(x=x, edge_index=edge_index, y=y)

print('data:',data)

if i >3:

break

14%|███████████▊ | 3/21 [00:00<00:00, 66.33it/s]

session_id: 89

sess_item_id: [1 0]

group: session_id timestamp item_id category label sess_item_id

0 89 2014-04-07T14:12:35.665Z 6240 0 False 1

1 89 2014-04-07T14:12:51.832Z 2230 0 False 0

node_features: tensor([[2230],

[6240]])

target_nodes: [0]

source_nodes: [1]

x tensor([[2230],

[6240]])

y tensor([0.])

data: Data(x=[2, 1], edge_index=[2, 1], y=[1])

session_id: 408

sess_item_id: [0 0]

group: session_id timestamp item_id category label sess_item_id

0 408 2014-04-02T11:39:52.556Z 12239 0 True 0

1 408 2014-04-02T11:39:59.933Z 12239 0 True 0

node_features: tensor([[12239]])

target_nodes: [0]

source_nodes: [0]

x tensor([[12239]])

y tensor([1.])

data: Data(x=[1, 1], edge_index=[2, 1], y=[1])

session_id: 459

sess_item_id: [1 0 2 0 2 0 0]

group: session_id timestamp item_id category label sess_item_id

0 459 2014-04-03T17:32:50.791Z 26433 0 False 1

1 459 2014-04-03T17:39:07.398Z 17492 0 False 0

2 459 2014-04-03T17:40:16.246Z 43130 0 False 2

3 459 2014-04-03T17:40:26.514Z 17492 0 False 0

4 459 2014-04-03T17:40:35.374Z 43130 0 False 2

5 459 2014-04-03T17:40:46.581Z 17492 0 False 0

6 459 2014-04-03T17:40:59.556Z 17492 0 False 0

node_features: tensor([[17492],

[26433],

[43130]])

target_nodes: [0 2 0 2 0 0]

source_nodes: [1 0 2 0 2 0]

x tensor([[17492],

[26433],

[43130]])

y tensor([0.])

data: Data(x=[3, 1], edge_index=[2, 6], y=[1])

session_id: 482

sess_item_id: [0 0]

group: session_id timestamp item_id category label sess_item_id

0 482 2014-04-07T11:17:08.426Z 4855 0 False 0

1 482 2014-04-07T11:17:10.575Z 4855 0 False 0

node_features: tensor([[4855]])

target_nodes: [0]

source_nodes: [0]

x tensor([[4855]])

y tensor([0.])

data: Data(x=[1, 1], edge_index=[2, 1], y=[1])

from torch_geometric.data import InMemoryDataset

from tqdm import tqdm

"""

执行顺序:

(1)检查raw_file_names,是否却文件

(2)若缺少文件,下载download

(3)processed_file_names:检查self.processed_dir目录下是否存在self.processed_file_names属性方法返回的所有文件,没有就会走process

"""

class YooChooseBinaryDataset(InMemoryDataset):

def __init__(self, root, transform=None, pre_transform=None):

super(YooChooseBinaryDataset, self).__init__(root, transform, pre_transform) # transform就是数据增强,对每一个数据都执行

self.data, self.slices = torch.load(self.processed_paths[0])

@property

def raw_file_names(self): #检查self.raw_dir目录下是否存在raw_file_names()属性方法返回的每个文件

#如有文件不存在,则调用download()方法执行原始文件下载

return []

@property

def processed_file_names(self): #检查self.processed_dir目录下是否存在self.processed_file_names属性方法返回的所有文件,没有就会走process

return ['yoochoose_click_binary_1M_sess.dataset']

def download(self):

pass

def process(self):

data_list = []

# process by session_id

grouped = df.groupby('session_id')

for session_id, group in tqdm(grouped):

sess_item_id = LabelEncoder().fit_transform(group.item_id)

group = group.reset_index(drop=True)

group['sess_item_id'] = sess_item_id

node_features = group.loc[group.session_id==session_id,['sess_item_id','item_id']].sort_values('sess_item_id').item_id.drop_duplicates().values

node_features = torch.LongTensor(node_features).unsqueeze(1)

target_nodes = group.sess_item_id.values[1:]

source_nodes = group.sess_item_id.values[:-1]

edge_index = torch.tensor([source_nodes, target_nodes], dtype=torch.long)

x = node_features

y = torch.FloatTensor([group.label.values[0]])

data = Data(x=x, edge_index=edge_index, y=y)

data_list.append(data)

data, slices = self.collate(data_list)

torch.save((data, slices), self.processed_paths[0])

dataset = YooChooseBinaryDataset(root='data/')

Processing...

100%|█████████████████████████████████████████████████████████████████████████| 100000/100000 [02:50<00:00, 586.85it/s]

Done!

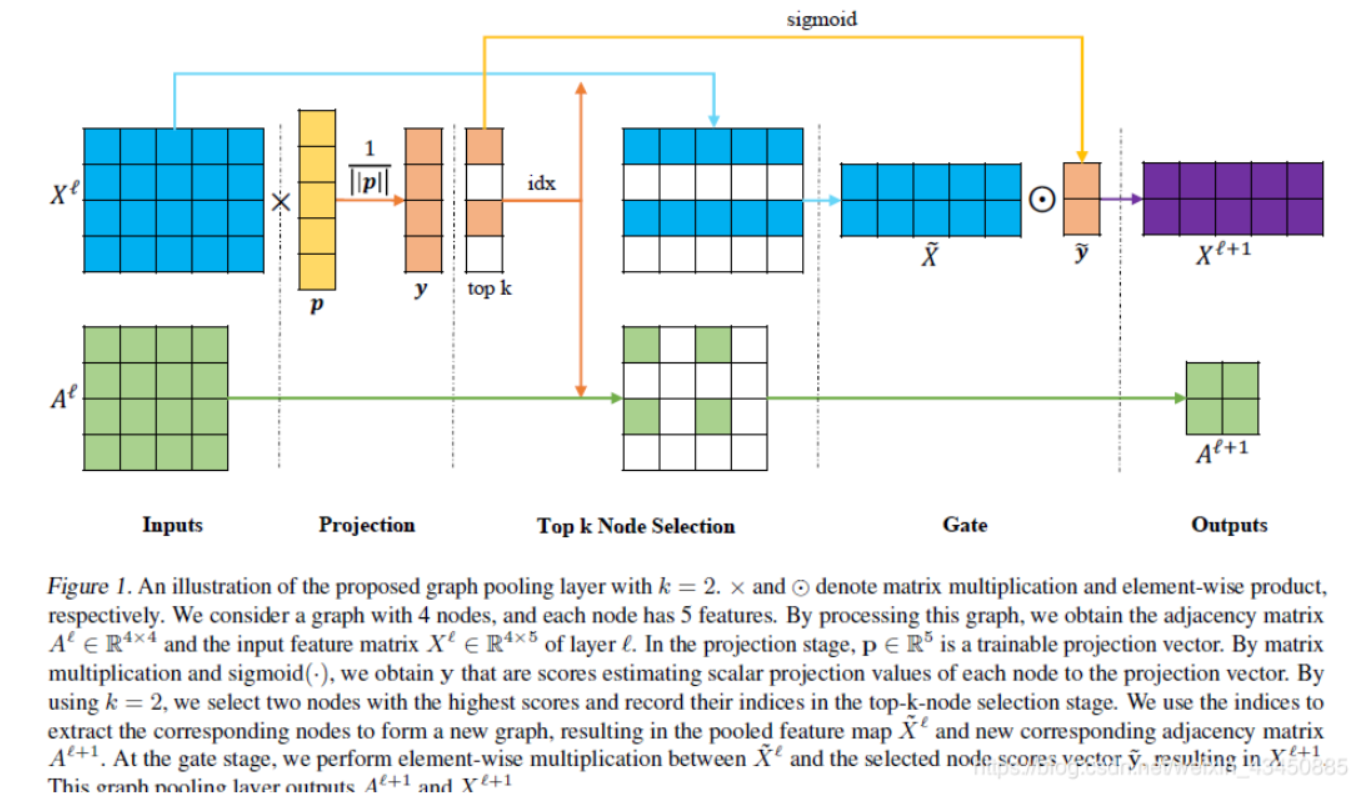

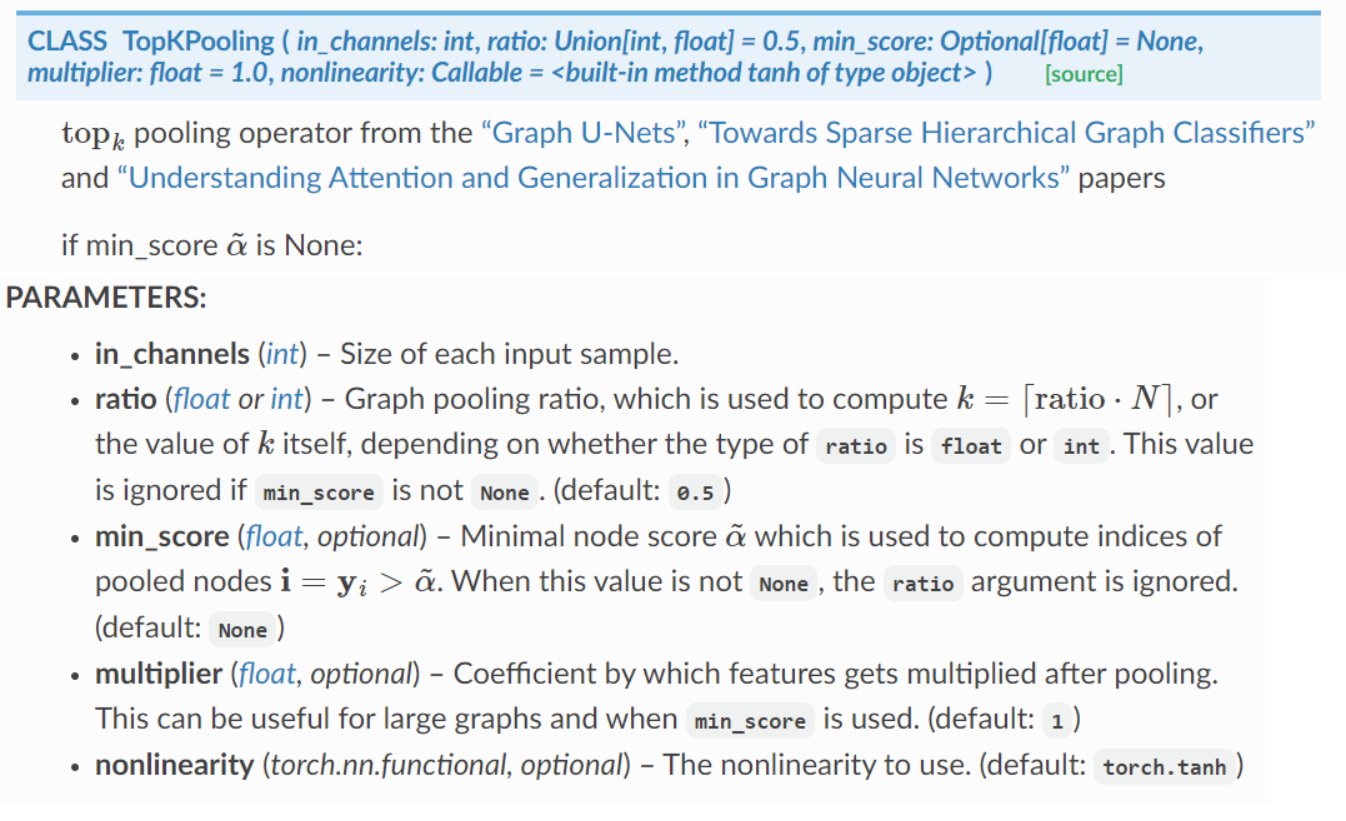

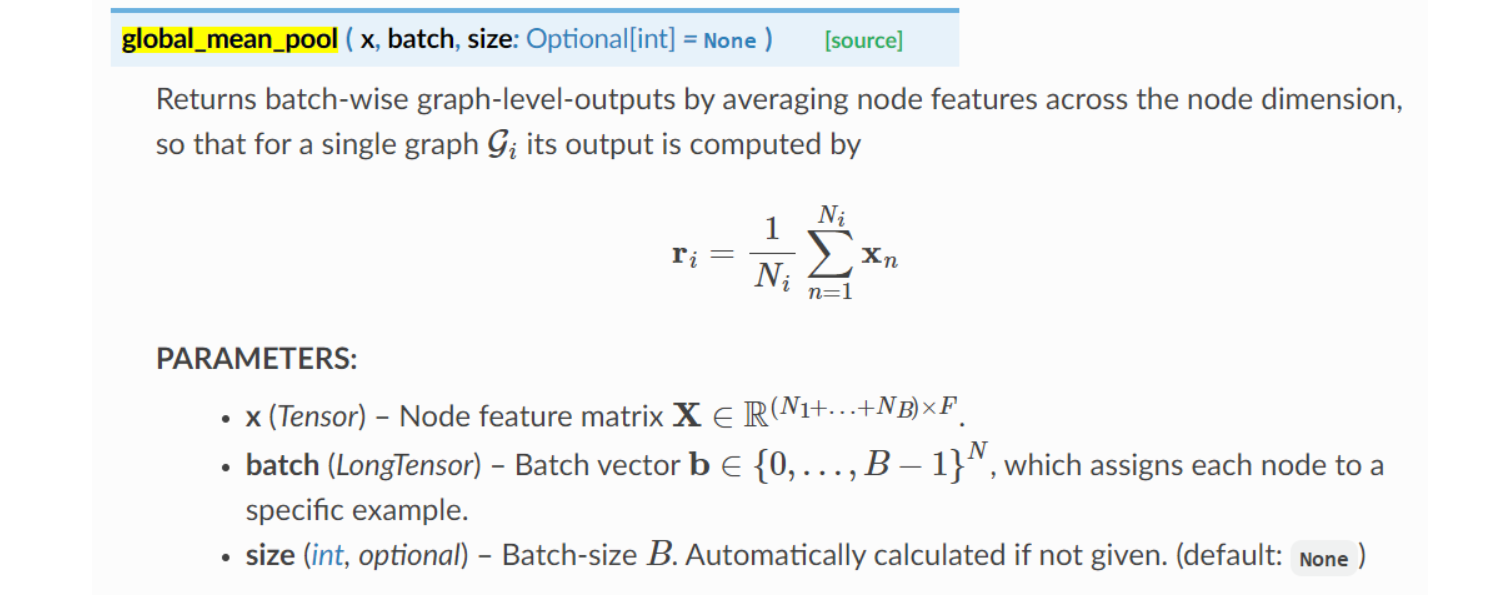

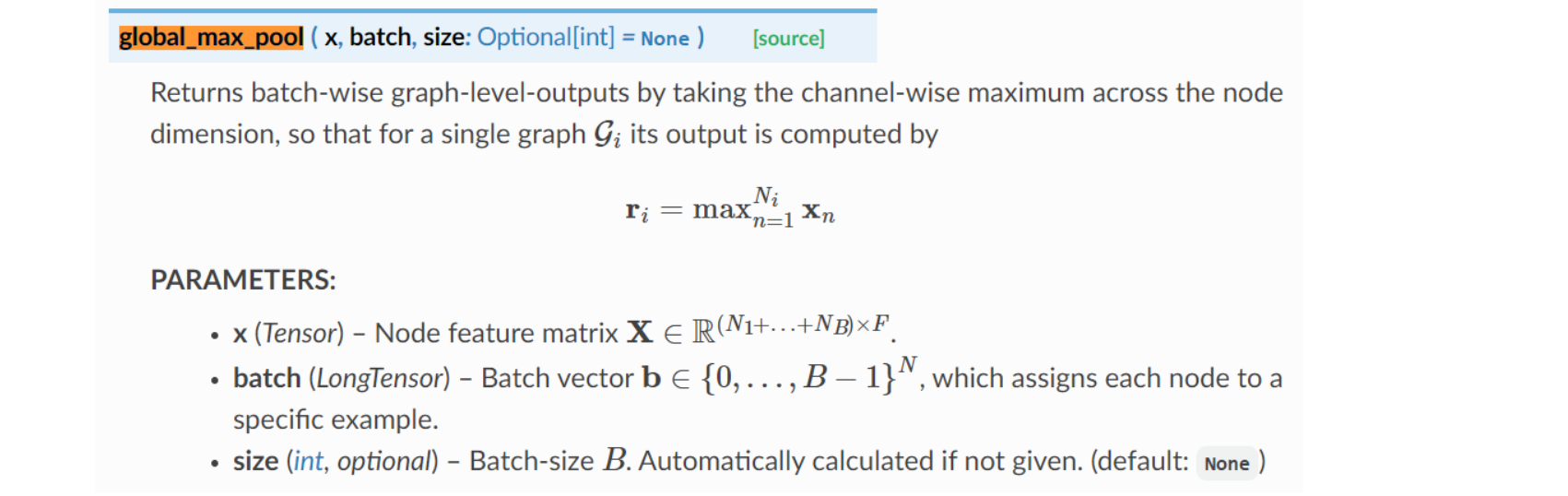

4、API文档解释如下:

TopKPooling流程

- 其实就是对图进行剪枝操作,选择分低的节点剔除掉,然后再重新组合成一个新的图

- 具体来讲:X为[4,5],p(类似于考试题)为可训练的参数[5,1],相乘得到y,为[4,1]。

- 那么,y就是分数,比如[0.9,0.6,0.8,0.5]。因此,第二和第四排名比较低,为白色。就淘汰了。同样的,x第2行和第四行也为白色。

- 同样的,下面邻接矩阵2、4行和2、4列都不要了。因为邻接矩阵中第二个人(节点)和第四个人都不要了。

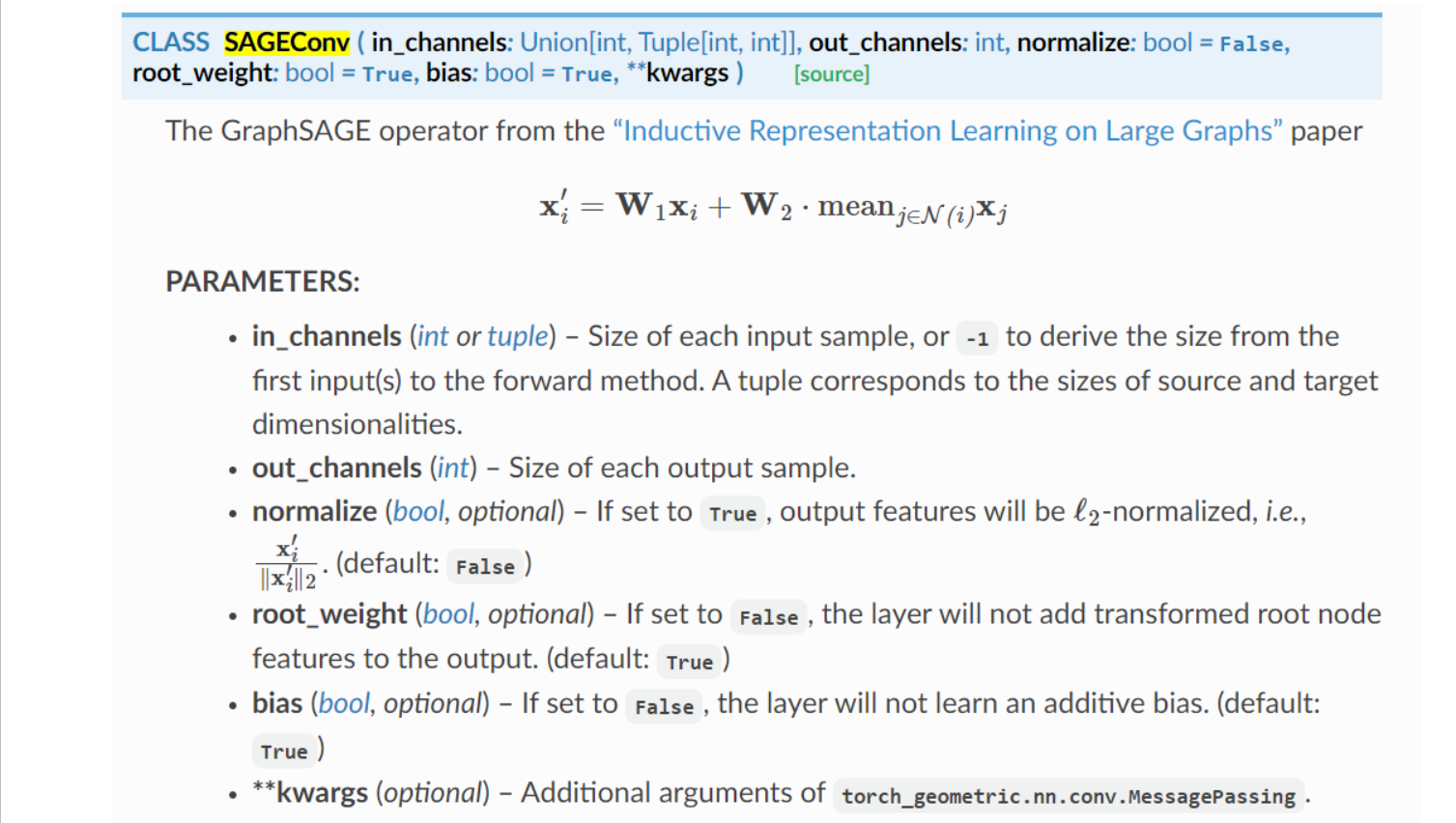

构建网络模型

- 模型可以任选,这里只是举例而已

- 跟咱们图像中的卷积和池化操作非常类似,最后再全连接输出

embed_dim = 128

from torch_geometric.nn import TopKPooling,SAGEConv

from torch_geometric.nn import global_mean_pool as gap, global_max_pool as gmp

import torch.nn.functional as F

class Net(torch.nn.Module): #针对图进行分类任务

def __init__(self):

super(Net, self).__init__()

self.conv1 = SAGEConv(embed_dim, 128) #自身向量*w+邻居向量平均*w2

self.pool1 = TopKPooling(128, ratio=0.8)

self.conv2 = SAGEConv(128, 128)

self.pool2 = TopKPooling(128, ratio=0.8)

self.conv3 = SAGEConv(128, 128)

self.pool3 = TopKPooling(128, ratio=0.8)

self.item_embedding = torch.nn.Embedding(num_embeddings=df.item_id.max() +10, embedding_dim=embed_dim)

self.lin1 = torch.nn.Linear(128, 128)

self.lin2 = torch.nn.Linear(128, 64)

self.lin3 = torch.nn.Linear(64, 1)

self.bn1 = torch.nn.BatchNorm1d(128)

self.bn2 = torch.nn.BatchNorm1d(64)

self.act1 = torch.nn.ReLU()

self.act2 = torch.nn.ReLU()

def forward(self, data):

#data DataBatch(x=[183, 1], edge_index=[2, 197], y=[64], batch=[183], ptr=[65])

#其中,batch是所有点的个数,每64个图的所有的点为一个batch

#y是所有图的个数。

x, edge_index, batch = data.x, data.edge_index, data.batch # x:n*1,其中每个图里点的个数是不同的

x = self.item_embedding(x)# n*1*128 特征编码后的结果

print('item_embedding',x.shape)

x = x.squeeze(1) # n*128

print('squeeze',x.shape)

#第一步,把183个点,每个点128维向量,和两行的邻接矩阵输入。

x = F.relu(self.conv1(x, edge_index))# n*128

print('conv1',x.shape)

#pool保留183个点中的0.8=172

x, edge_index, _, batch, _, _ = self.pool1(x, edge_index, None, batch)# pool之后得到 n*0.8个点

#torch.Size([172, 128])

print('self.pool1',x.shape)

#torch.Size([2, 175])

print('self.pool1',edge_index.shape)

#torch.Size([172])

print('self.pool1',batch.shape)

#x1 = torch.cat([gmp(x, batch), gap(x, batch)], dim=1)

x1 = gap(x, batch)

#gap torch.Size([64, 128])

print('gap',x1.shape)

# print('gmp',gmp(x, batch).shape) # batch*128

# print('gap',gap(x, batch).shape) # batch*256

x = F.relu(self.conv2(x, edge_index))

print('conv2',x.shape)

x, edge_index, _, batch, _, _ = self.pool2(x, edge_index, None, batch)

print('pool2',x.shape)

print('pool2',edge_index.shape)

print('pool2',batch.shape)

#x2 = torch.cat([gmp(x, batch), gap(x, batch)], dim=1)

x2 = gap(x, batch)

print('x2',x2.shape)

x = F.relu(self.conv3(x, edge_index))

print('conv3',x.shape)

x, edge_index, _, batch, _, _ = self.pool3(x, edge_index, None, batch)

print('pool3',x.shape)

#x3 = torch.cat([gmp(x, batch), gap(x, batch)], dim=1)

x3 = gap(x, batch)

print('x3',x3.shape)# batch * 256

x = x1 + x2 + x3 # 获取不同尺度的全局特征

x = self.lin1(x)

print('lin1',x.shape)

x = self.act1(x)

x = self.lin2(x)

print('lin2',x.shape)

x = self.act2(x)

x = F.dropout(x, p=0.5, training=self.training)

x = torch.sigmoid(self.lin3(x)).squeeze(1)#batch个结果

print('sigmoid',x.shape)

return x

from torch_geometric.loader import DataLoader

def train():

model.train()

loss_all = 0

for data in train_loader:

data = data

#print('data',data)

optimizer.zero_grad()

output = model(data)

label = data.y

loss = crit(output, label)

loss.backward()

loss_all += data.num_graphs * loss.item()

optimizer.step()

return loss_all / len(dataset)

model = Net()

optimizer = torch.optim.Adam(model.parameters(), lr=0.001)

crit = torch.nn.BCELoss()

train_loader = DataLoader(dataset, batch_size=64)

for epoch in range(10):

print('epoch:',epoch)

loss = train()

print(loss)

epoch: 0

0.21383523407101632

epoch: 1

0.1923125632107258

epoch: 2

0.17628825497269632

epoch: 3

0.15730181092619896

epoch: 4

0.1406132375997305

epoch: 5

0.12482743380367756

epoch: 6

0.11302556532740593

epoch: 7

0.1032185257422924

epoch: 8

0.09486922759741545

epoch: 9

0.09064080653965473

from sklearn.metrics import roc_auc_score

def evalute(loader,model):

model.eval()

prediction = []

labels = []

with torch.no_grad():

for data in loader:

data = data#.to(device)

pred = model(data)#.detach().cpu().numpy()

label = data.y#.detach().cpu().numpy()

prediction.append(pred)

labels.append(label)

prediction = np.hstack(prediction)

labels = np.hstack(labels)

return roc_auc_score(labels,prediction)

for epoch in range(1):

roc_auc_score = evalute(dataset,model)

print('roc_auc_score',roc_auc_score)

roc_auc_score 0.9325659815540558

【推荐】国内首个AI IDE,深度理解中文开发场景,立即下载体验Trae

【推荐】编程新体验,更懂你的AI,立即体验豆包MarsCode编程助手

【推荐】抖音旗下AI助手豆包,你的智能百科全书,全免费不限次数

【推荐】轻量又高性能的 SSH 工具 IShell:AI 加持,快人一步

· 阿里最新开源QwQ-32B,效果媲美deepseek-r1满血版,部署成本又又又降低了!

· 开源Multi-agent AI智能体框架aevatar.ai,欢迎大家贡献代码

· Manus重磅发布:全球首款通用AI代理技术深度解析与实战指南

· 被坑几百块钱后,我竟然真的恢复了删除的微信聊天记录!

· 没有Manus邀请码?试试免邀请码的MGX或者开源的OpenManus吧