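2. Node classification task

1. Cora dataset (dataset description: Yang et al. (2016))

- A paper citation dataset; each node has a 1433-dimensional feature vector

- The goal is to classify every node into one of 7 classes (only 20 nodes per class are labeled)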

from torch_geometric.datasets import Planetoid  # used to download the dataset
from torch_geometric.transforms import NormalizeFeatures

# transform = preprocessing applied to the data (here: feature normalization)
dataset = Planetoid(root='data/Planetoid', name='Cora', transform=NormalizeFeatures())

print()
print(f'Dataset: {dataset}:')
print('======================')
print(f'Number of graphs: {len(dataset)}')
print(f'Number of features: {dataset.num_features}')
print(f'Number of classes: {dataset.num_classes}')

data = dataset[0]  # Get the first graph object.

print()
print(data)
print('===========================================================================================================')

# Gather some statistics about the graph.
print(f'Number of nodes: {data.num_nodes}')
print(f'Number of edges: {data.num_edges}')
print(f'Average node degree: {data.num_edges / data.num_nodes:.2f}')
print(f'Number of training nodes: {data.train_mask.sum()}')
print(f'Training node label rate: {int(data.train_mask.sum()) / data.num_nodes:.2f}')
print(f'Has isolated nodes: {data.has_isolated_nodes()}')
print(f'Has self-loops: {data.has_self_loops()}')
print(f'Is undirected: {data.is_undirected()}')

Dataset: Cora():
======================
Number of graphs: 1
Number of features: 1433
Number of classes: 7

Data(x=[2708, 1433], edge_index=[2, 10556], y=[2708], train_mask=[2708], val_mask=[2708], test_mask=[2708])
===========================================================================================================
Number of nodes: 2708
Number of edges: 10556
Average node degree: 3.90
Number of training nodes: 140
Training node label rate: 0.05
Has isolated nodes: False
Has self-loops: False
Is undirected: True
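A note on the edge count: since the graph is undirected, edge_index holds every link twice, once per direction. The 10556 entries reported above therefore correspond to 10556 / 2 = 5278 undirected links, and 10556 / 2708 ≈ 3.90 is the average number of neighbors per node. Below is a minimal sketch on a hypothetical 3-node toy graph (not Cora), using NumPy instead of torch only to keep it dependency-light:

```python
import numpy as np

# Hypothetical undirected path graph 0 - 1 - 2, stored in the same layout
# as data.edge_index: each undirected edge appears once per direction.
edge_index = np.array([[0, 1, 1, 2],
                       [1, 0, 2, 1]])
num_nodes = 3

num_edges = edge_index.shape[1]        # counts directed entries
num_undirected = num_edges // 2        # actual number of links
avg_degree = num_edges / num_nodes     # same formula as in the statistics code
degree = np.bincount(edge_index[0], minlength=num_nodes)  # neighbors per node

print(num_edges, num_undirected, f"{avg_degree:.2f}", degree.tolist())
```

The mean of the per-node degrees equals num_edges / num_nodes, which is why the statistics code can compute the average degree without building a degree vector.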

- train_mask, val_mask and test_mask are boolean masks that mark which split (training, validation or test) each node is used in
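To make the role of the masks concrete, here is a small sketch with made-up masks for a hypothetical 6-node graph (the real Cora masks have length 2708). NumPy is used as a stand-in; boolean indexing on torch tensors works the same way.

```python
import numpy as np

# Hypothetical masks and labels for 6 nodes; values are invented for illustration.
train_mask = np.array([True,  True,  False, False, False, False])
val_mask   = np.array([False, False, True,  False, False, False])
test_mask  = np.array([False, False, False, True,  True,  False])
y = np.array([0, 1, 0, 1, 1, 0])

# Indexing with a mask selects the labels of exactly that split.
train_labels = y[train_mask]

# A node can belong to no split at all (node 5 here).
unassigned = ~(train_mask | val_mask | test_mask)

# The splits should never overlap.
overlap = (train_mask & val_mask) | (train_mask & test_mask) | (val_mask & test_mask)

print(train_labels.tolist(), int(unassigned.sum()), bool(overlap.any()))
```

On the real data, the same idea is what `out[data.train_mask]` and `data.y[data.train_mask]` do in the training code further down.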

# Visualization

%matplotlib inline
import matplotlib.pyplot as plt
from sklearn.manifold import TSNE

def visualize(h, color):
    # Project the node embeddings down to 2 dimensions with t-SNE.
    z = TSNE(n_components=2).fit_transform(h.detach().cpu().numpy())

    plt.figure(figsize=(10,10))
    plt.xticks([])
    plt.yticks([])

    # One point per node, colored by its class label.
    plt.scatter(z[:, 0], z[:, 1], s=70, c=color, cmap="Set2")
    plt.show()

2. Baseline: what happens with plain fully connected layers (Multi-Layer Perceptron)?

import torch
from torch.nn import Linear
import torch.nn.functional as F

class MLP(torch.nn.Module):
    def __init__(self, hidden_channels):
        super().__init__()
        torch.manual_seed(12345)
        self.lin1 = Linear(dataset.num_features, hidden_channels)
        self.lin2 = Linear(hidden_channels, dataset.num_classes)

    def forward(self, x):
        x = self.lin1(x)
        x = x.relu()
        x = F.dropout(x, p=0.5, training=self.training)
        x = self.lin2(x)
        return x

model = MLP(hidden_channels=16)
print(model)

MLP(
  (lin1): Linear(in_features=1433, out_features=16, bias=True)
  (lin2): Linear(in_features=16, out_features=7, bias=True)
)
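As a rough sanity check on model size, the number of trainable parameters can be worked out from the layer shapes printed above. The helper linear_params below is a hypothetical name introduced only for this sketch:

```python
def linear_params(in_features, out_features, bias=True):
    """Parameter count of a fully connected layer: weight matrix plus optional bias."""
    return in_features * out_features + (out_features if bias else 0)

lin1 = linear_params(1433, 16)   # 1433 * 16 weights + 16 biases
lin2 = linear_params(16, 7)      # 16 * 7 weights + 7 biases
total = lin1 + lin2

print(lin1, lin2, total)
```

With only 140 labeled training nodes against roughly 23 thousand parameters, overfitting is a plausible concern, which is one reason the model uses dropout and the optimizer below uses weight decay.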

model = MLP(hidden_channels=16)
criterion = torch.nn.CrossEntropyLoss()  # Define loss criterion.
optimizer = torch.optim.Adam(model.parameters(), lr=0.01, weight_decay=5e-4)  # Define optimizer.

def train():
    model.train()
    optimizer.zero_grad()  # Clear gradients.
    out = model(data.x)  # Perform a single forward pass.
    loss = criterion(out[data.train_mask], data.y[data.train_mask])  # Compute the loss solely based on the training nodes.
    loss.backward()  # Derive gradients.
    optimizer.step()  # Update parameters based on gradients.
    return loss

def test():
    model.eval()
    out = model(data.x)
    pred = out.argmax(dim=1)  # Use the class with highest probability.
    test_correct = pred[data.test_mask] == data.y[data.test_mask]  # Check against ground-truth labels.
    test_acc = int(test_correct.sum()) / int(data.test_mask.sum())  # Derive ratio of correct predictions.
    return test_acc

for epoch in range(1, 210):
    loss = train()
    print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}')

Epoch: 209, Loss: 0.3570

Computing the accuracy

test_acc = test()
print(f'Test Accuracy: {test_acc:.4f}')

Test Accuracy: 0.5890
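The accuracy computed in test() is an argmax over the class scores followed by a comparison restricted to the test nodes. Here is a small sketch of that logic with invented scores and labels for 4 nodes (all values hypothetical; NumPy stands in for torch):

```python
import numpy as np

# Hypothetical class scores (logits) for 4 nodes and 2 classes.
out = np.array([[2.0, 0.1],
                [0.3, 1.5],
                [0.9, 0.2],
                [0.1, 0.4]])
y = np.array([0, 1, 1, 1])                        # ground-truth labels
test_mask = np.array([False, True, True, True])   # node 0 is not a test node

pred = out.argmax(axis=1)                         # highest-scoring class per node
test_correct = pred[test_mask] == y[test_mask]    # compare on test nodes only
test_acc = int(test_correct.sum()) / int(test_mask.sum())

print(pred.tolist(), f"{test_acc:.4f}")
```

Node 0 is predicted correctly but does not count, because only nodes selected by test_mask enter the ratio.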

3. Graph Neural Network (GNN)

Replace the fully connected layers with GCN layers
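What distinguishes a GCN layer from a linear layer is that each node's features are mixed with those of its neighbors before (or after) the weight matrix is applied. The standard GCN propagation rule from Kipf and Welling is D^-1/2 (A + I) D^-1/2 X W, where A is the adjacency matrix and D the degree matrix of A + I. The sketch below implements that rule in NumPy on a hypothetical 3-node graph; it is an illustration of the formula under these assumptions, not the GCNConv source code, and it omits bias and activation.

```python
import numpy as np

def gcn_layer(A, X, W):
    """One GCN propagation step: D^-1/2 (A + I) D^-1/2 X W."""
    A_hat = A + np.eye(A.shape[0])        # add self-loops
    deg = A_hat.sum(axis=1)               # degrees including the self-loop
    D_inv_sqrt = np.diag(deg ** -0.5)     # D^-1/2
    return D_inv_sqrt @ A_hat @ D_inv_sqrt @ X @ W

# Hypothetical path graph 0 - 1 - 2 with 2 input features per node.
A = np.array([[0., 1., 0.],
              [1., 0., 1.],
              [0., 1., 0.]])
X = np.array([[1., 0.],
              [0., 1.],
              [1., 1.]])
W = np.eye(2)   # identity weights, so the output shows only the neighborhood mixing

H = gcn_layer(A, X, W)
print(H.round(3))
```

With identity weights, each output row is a degree-weighted combination of the node's own features and its neighbors' features. An MLP has no such step, so it treats every node independently of the graph.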

from torch_geometric.nn import GCNConv

class GCN(torch.nn.Module):
    def __init__(self, hidden_channels):
        super().__init__()
        torch.manual_seed(1234567)
        self.conv1 = GCNConv(dataset.num_features, hidden_channels)
        self.conv2 = GCNConv(hidden_channels, dataset.num_classes)

    def forward(self, x, edge_index):
        x = self.conv1(x, edge_index)
        x = x.relu()
        x = F.dropout(x, p=0.5, training=self.training)
        x = self.conv2(x, edge_index)
        return x

model = GCN(hidden_channels=16)
print(model)

GCN(
  (conv1): GCNConv(1433, 16)
  (conv2): GCNConv(16, 7)
)

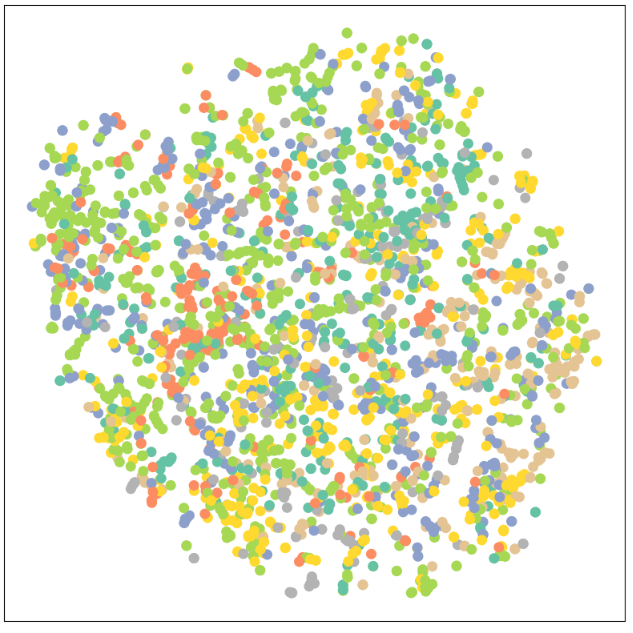

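The model outputs a 7-dimensional vector per node, so for visualization it is reduced to 2 dimensions. The model here is freshly initialized and not yet trained:

model = GCN(hidden_channels=16)
model.eval()

out = model(data.x, data.edge_index)
visualize(out, color=data.y)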

Training the GCN model

model = GCN(hidden_channels=16)
optimizer = torch.optim.Adam(model.parameters(), lr=0.01, weight_decay=5e-4)
criterion = torch.nn.CrossEntropyLoss()

def train():
    model.train()
    optimizer.zero_grad()  # Clear gradients.
    out = model(data.x, data.edge_index)  # Forward pass now also takes the graph structure.
    loss = criterion(out[data.train_mask], data.y[data.train_mask])  # Loss on the training nodes only.
    loss.backward()
    optimizer.step()
    return loss

def test():
    model.eval()
    out = model(data.x, data.edge_index)
    pred = out.argmax(dim=1)
    test_correct = pred[data.test_mask] == data.y[data.test_mask]
    test_acc = int(test_correct.sum()) / int(data.test_mask.sum())
    return test_acc

for epoch in range(1, 101):
    loss = train()
    print(f'Epoch: {epoch:03d}, Loss: {loss:.4f}')

Epoch: 100, Loss: 0.5799

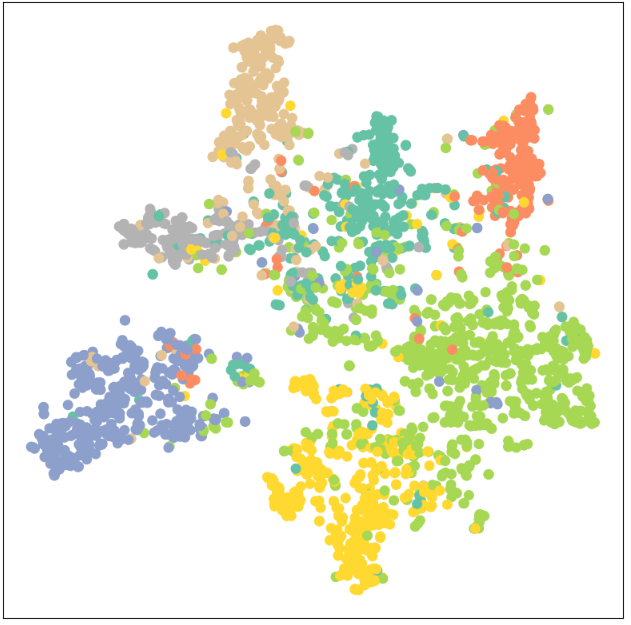

Computing the accuracy

test_acc = test()
print(f'Test Accuracy: {test_acc:.4f}')

Test Accuracy: 0.8150

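Test accuracy rises from 58.9% with the MLP to 81.5% with the GCN, a substantial improvement. The output of the trained model is visualized as follows:

model.eval()

out = model(data.x, data.edge_index)
visualize(out, color=data.y)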
