NLP(四):word2vec + siameseLSTM改进, pytorch如何使用GPU

一、查看GPU

>>> import torch >>> torch.cuda.device_count() 1 >>> torch.cuda.get_device_name(0) 'GeForce GTX 1060' >>> torch.cuda.is_available() True

二、CPU转移到GPU步骤

""" 注意,转移到GPU步骤: (1)设置种子:torch.cuda.manual_seed(123) (2)device = torch.device('cuda')定义设备 (3)损失函数转移:criterion = nn.BCEWithLogitsLoss().to(self.device) (4)网络模型转移:myLSTM = SiameseLSTM(128).to(self.device) (5)数据转移:a, b, label= a.cuda(), b.cuda(), label.cuda() """

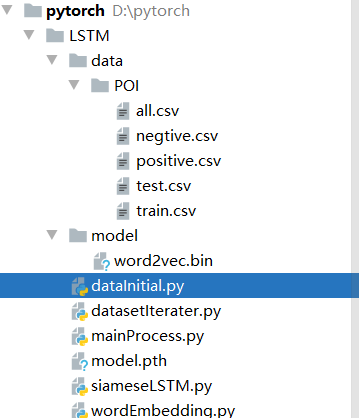

三、项目目录

四、代码步骤

1、数据初始化

import numpy as np import pandas as pd import os class DataInitial(object): def __init__(self): pass def all_data(self): df1 = pd.read_csv("data/POI/negtive.csv") df2 = pd.read_csv("data/POI/positive.csv") df = pd.concat([df1,df2],ignore_index=True) df.to_csv("data/POI/all.csv",index=False,sep=',') def split(self): df = pd.read_csv('data/POI/all.csv') df = df.sample(frac=1.0) cut_idx = int(round(0.2 * df.shape[0])) df_test, df_train = df.iloc[:cut_idx], df.iloc[cut_idx:] df_test.to_csv("data/POI/test.csv",index=False,sep=',') df_train.to_csv("data/POI/train.csv", index=False, sep=',') def train_data(self): train_texta = pd.read_csv("data/POI/train.csv")["address_1"] train_textb = pd.read_csv("data/POI/train.csv")["address_2"] train_label = pd.read_csv("data/POI/train.csv")["tag"] return train_texta,train_textb,train_label def test_data(self): test_texta = pd.read_csv("data/POI/test.csv")["address_1"] test_textb = pd.read_csv("data/POI/test.csv")["address_2"] test_label = pd.read_csv("data/POI/test.csv")["tag"] return test_texta,test_textb,test_label

2、DataSet

import torch.utils.data as data import torch class DatasetIterater(data.Dataset): def __init__(self, texta, textb, label): self.texta = texta self.textb = textb self.label = label def __getitem__(self, item): texta = self.texta[item] textb = self.textb[item] label = self.label[item] return texta, textb, label def __len__(self): return len(self.texta)

3、词嵌入

import jieba from gensim.models import Word2Vec import torch import gensim import numpy as np model = gensim.models.KeyedVectors.load_word2vec_format('model/word2vec.bin', binary=True) class WordEmbedding(object): def __init__(self): pass def sentenceTupleToEmbedding(self, data1, data2): aCutListMaxLen = max([len(list(jieba.cut(sentence_a))) for sentence_a in data1]) bCutListMaxLen = max([len(list(jieba.cut(sentence_a))) for sentence_a in data2]) maxLen = max(aCutListMaxLen,bCutListMaxLen) seq_len = maxLen a = self.sqence_vec(data1, seq_len) #batch_size, sqence, embedding b = self.sqence_vec(data2, seq_len) return torch.FloatTensor(a), torch.FloatTensor(b) def sqence_vec(self, data, seq_len): data_a_vec = [] for sequence_a in data: sequence_vec = [] # sequence * 128 for word_a in jieba.cut(sequence_a): if word_a in model: sequence_vec.append(model[word_a]) sequence_vec = np.array(sequence_vec) add = np.zeros((seq_len - sequence_vec.shape[0], 128)) sequenceVec = np.vstack((sequence_vec, add)) data_a_vec.append(sequenceVec) a_vec = np.array(data_a_vec) return a_vec

4、孪生LSTM

import torch from torch import nn class SiameseLSTM(nn.Module): def __init__(self, input_size): super(SiameseLSTM, self).__init__() self.lstm = nn.LSTM(input_size=input_size, hidden_size=10, num_layers=4, batch_first=True) self.fc = nn.Linear(10, 1) def forward(self, data1, data2): out1, (h1, c1) = self.lstm(data1) out2, (h2, c2) = self.lstm(data2) pre1 = out1[:, -1, :] pre2 = out2[:, -1, :] dis = torch.abs(pre1 - pre2) out = self.fc(dis) return out

5、主程序

import torch from torch import nn from torch.utils.data import DataLoader import pandas as pd from datasetIterater import DatasetIterater import jieba from wordEmbedding import WordEmbedding from siameseLSTM import SiameseLSTM import numpy as np from dataInitial import DataInitial word = WordEmbedding() """ 注意,转移到GPU步骤: (1)设置种子:torch.cuda.manual_seed(123) (2)device = torch.device('cuda')定义设备 (3)损失函数转移:criterion = nn.BCEWithLogitsLoss().to(self.device) (4)网络模型转移:myLSTM = SiameseLSTM(128).to(self.device) (5)数据转移:a, b, label= a.cuda(), b.cuda(), label.cuda() """ class MainProcess(object): def __init__(self): self.learning_rate = 0.001 torch.cuda.manual_seed(123) self.device = torch.device('cuda') self.train_texta, self.train_textb, self.train_label = DataInitial().train_data() self.test_texta, self.test_textb, self.test_label = DataInitial().test_data() self.train_data = DatasetIterater(self.train_texta,self.train_textb,self.train_label) self.test_data = DatasetIterater(self.test_texta, self.test_textb, self.test_label) self.train_iter = DataLoader(dataset=self.train_data, batch_size=32, shuffle=True) self.test_iter = DataLoader(dataset=self.test_data, batch_size=128, shuffle=True) self.myLSTM = SiameseLSTM(128).to(self.device) self.criterion = nn.BCEWithLogitsLoss().to(self.device) self.optimizer = torch.optim.Adam(self.myLSTM.parameters(), lr=self.learning_rate) def binary_acc(self, preds, y): preds = torch.round(torch.sigmoid(preds)) correct = torch.eq(preds, y).float() acc = correct.sum() / len(correct) return acc def train(self, mynet, train_iter, optimizer, criterion, epoch): avg_acc = [] avg_loss = [] mynet.train() for batch_id, (data1, data2, label) in enumerate(train_iter): try: a, b = word.sentenceTupleToEmbedding(data1, data2) except Exception as e: continue a, b, label= a.cuda(non_blocking=True), b.cuda(non_blocking=True), label.cuda(non_blocking=True) distence = mynet(a, b) loss = criterion(distence, label.float().unsqueeze(-1)) acc = self.binary_acc(distence, label.float().unsqueeze(-1)).item() avg_acc.append(acc) optimizer.zero_grad() loss.backward() optimizer.step() if batch_id % 100==0: print("轮数:", epoch, "batch: ",batch_id,"训练损失:", loss.item()) avg_loss.append(loss.item()) avg_acc = np.array(avg_acc).mean() avg_loss = np.array(avg_loss).mean() print('train acc:', avg_acc) print("train loss", avg_loss) def eval(self, mynet, test_iter, criteon, epoch): mynet.eval() avg_acc = [] avg_loss = [] with torch.no_grad(): for batch_id, (data1, data2, label) in enumerate(test_iter): try: a, b = word.sentenceTupleToEmbedding(data1, data2) except Exception as e: continue a, b, label= a.cuda(non_blocking=True), b.cuda(non_blocking=True), label.cuda(non_blocking=True) distence = mynet(a, b) loss = criteon(distence, label.float().unsqueeze(-1)) acc = self.binary_acc(distence, label.float().unsqueeze(-1)).item() avg_acc.append(acc) avg_loss.append(loss.item()) if batch_id>50: break avg_acc = np.array(avg_acc).mean() avg_loss = np.array(avg_loss).mean() print('>>test acc:', avg_acc) print(">>test loss:", avg_loss) return (avg_acc, avg_loss) def main(self): min_loss = 100000 for epoch in range(50): self.train(self.myLSTM, self.train_iter, self.optimizer, self.criterion, epoch) eval_acc, eval_loss = self.eval(self.myLSTM, self.test_iter, self.criterion, epoch) if eval_loss < min_loss: min_loss = eval_loss print("save model") torch.save(self.myLSTM.state_dict(), 'model.pth') if __name__ == '__main__': MainProcess().main()

五、实验结果

六、测试

def test(self): self.myLSTM.load_state_dict(torch.load('model.pth')) data1 = ("浙江杭州富阳区银湖街黄先生的外卖",) data2 = ("富阳区浙江富阳区银湖街道新常村",) try: a, b = word.sentenceTupleToEmbedding(data1, data2) print(a.shape, b.shape) except Exception as e: print(e) a, b = a.cuda(non_blocking=True), b.cuda(non_blocking=True) distence = self.myLSTM(a, b) preds = torch.round(torch.sigmoid(distence)) print(preds.item())

【推荐】国内首个AI IDE,深度理解中文开发场景,立即下载体验Trae

【推荐】编程新体验,更懂你的AI,立即体验豆包MarsCode编程助手

【推荐】抖音旗下AI助手豆包,你的智能百科全书,全免费不限次数

【推荐】轻量又高性能的 SSH 工具 IShell:AI 加持,快人一步

· 开发者必知的日志记录最佳实践

· SQL Server 2025 AI相关能力初探

· Linux系列:如何用 C#调用 C方法造成内存泄露

· AI与.NET技术实操系列(二):开始使用ML.NET

· 记一次.NET内存居高不下排查解决与启示

· 阿里最新开源QwQ-32B,效果媲美deepseek-r1满血版,部署成本又又又降低了!

· 开源Multi-agent AI智能体框架aevatar.ai,欢迎大家贡献代码

· Manus重磅发布:全球首款通用AI代理技术深度解析与实战指南

· 被坑几百块钱后,我竟然真的恢复了删除的微信聊天记录!

· 没有Manus邀请码?试试免邀请码的MGX或者开源的OpenManus吧