Hive Operations on Hadoop

Preparing the dept table:

-- create the dept table
CREATE TABLE dept(
  deptno int,
  dname string,
  loc string)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
STORED AS textfile;

Preparing the data file:

vi dept.txt
10,ACCOUNTING,NEW YORK
20,RESEARCH,DALLAS
30,SALES,CHICAGO
40,OPERATIONS,BOSTON

Preparing the emp table:

CREATE TABLE emp(
  empno int,
  ename string,
  job string,
  mgr int,
  hiredate string,
  sal int,
  comm int,
  deptno int)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
STORED AS textfile;

Preparing the emp data:

vi emp.txt
7369,SMITH,CLERK,7902,1980-12-17,800,null,20
7499,ALLEN,SALESMAN,7698,1981-02-20,1600,300,30
7521,WARD,SALESMAN,7698,1981-02-22,1250,500,30
7566,JONES,MANAGER,7839,1981-04-02,2975,null,20
7654,MARTIN,SALESMAN,7698,1981-09-28,1250,1400,30
7698,BLAKE,MANAGER,7839,1981-05-01,2850,null,30
7782,CLARK,MANAGER,7839,1981-06-09,2450,null,10
7788,SCOTT,ANALYST,7566,1987-04-19,3000,null,20
7839,KING,PRESIDENT,null,1981-11-17,5000,null,10
7844,TURNER,SALESMAN,7698,1981-09-08,1500,0,30
7876,ADAMS,CLERK,7788,1987-05-23,1100,null,20
7900,JAMES,CLERK,7698,1981-12-03,950,null,30
7902,FORD,ANALYST,7566,1981-12-02,3000,null,20
7934,MILLER,CLERK,7782,1982-01-23,1300,null,10
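The emp.txt file writes the literal text null in int columns such as comm and mgr. This still works because Hive's default text SerDe reads a field as NULL when the field cannot be parsed as the declared type. The canonical NULL marker in text files is \N. The sketch below models that behaviour in Python for the emp schema. `EMP_SCHEMA`, `parse_field` and `parse_row` are hypothetical helpers for illustration, not Hive APIs.

```python
# Hypothetical model of how a ROW FORMAT DELIMITED text row maps to typed columns.
# Assumption: like Hive's text SerDe, an unparseable int field is read as NULL (None).
EMP_SCHEMA = [
    ("empno", int), ("ename", str), ("job", str), ("mgr", int),
    ("hiredate", str), ("sal", int), ("comm", int), ("deptno", int),
]

def parse_field(text, col_type):
    """Convert one delimited field to the column type, or None if it cannot be parsed."""
    if text == "\\N":  # Hive's default NULL marker in text files
        return None
    if col_type is int:
        try:
            return int(text)
        except ValueError:
            return None  # e.g. the literal 'null' used in emp.txt
    return text

def parse_row(line, schema, sep=","):
    """Split a line on the field delimiter and type each field according to the schema."""
    fields = line.rstrip("\n").split(sep)
    # Missing trailing fields are read as NULL; extra fields are ignored by zip().
    fields += [None] * (len(schema) - len(fields))
    return {
        name: (None if raw is None else parse_field(raw, col_type))
        for (name, col_type), raw in zip(schema, fields)
    }

row = parse_row("7369,SMITH,CLERK,7902,1980-12-17,800,null,20", EMP_SCHEMA)
print(row["comm"], row["sal"])  # None 800
```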

Load the data files into the tables:

load data local inpath '/home/hadoop/tmp/dept.txt' overwrite into table dept;
load data local inpath '/home/hadoop/tmp/emp.txt' overwrite into table emp;

Query:

select d.dname,d.loc,e.empno,e.ename,e.hiredate from dept d join emp e on e.deptno = d.deptno;

* The job output shows that this query runs as a MapReduce program.
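A common way to run such a join as MapReduce is a reduce-side join. It works in three steps:

1. Mappers tag each row with its source table and emit it keyed by deptno.
2. The shuffle groups rows by that key.
3. Each reducer pairs the dept rows with the emp rows for its key.

The Python sketch below models this flow in memory on a few sample rows from emp.txt. It is a simplified illustration, not Hive's operator code. Hive may also pick a map-side join when one table is small.

```python
from collections import defaultdict

dept = [(10, "ACCOUNTING", "NEW YORK"), (20, "RESEARCH", "DALLAS"),
        (30, "SALES", "CHICAGO"), (40, "OPERATIONS", "BOSTON")]
# (empno, ename, hiredate, deptno): a hypothetical subset of emp.txt
emp = [(7369, "SMITH", "1980-12-17", 20), (7782, "CLARK", "1981-06-09", 10),
       (7839, "KING", "1981-11-17", 10), (7934, "MILLER", "1982-01-23", 10)]

def map_phase():
    """Emit (join key, (tag, row)); the tag records which table the row came from."""
    for row in dept:
        yield row[0], ("d", row)
    for row in emp:
        yield row[3], ("e", row)

def shuffle(pairs):
    """Group mapper output by key, as the MapReduce shuffle does."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_phase(groups):
    """For each deptno, pair every dept row with every emp row (inner join)."""
    for key in sorted(groups):
        depts = [r for tag, r in groups[key] if tag == "d"]
        emps = [r for tag, r in groups[key] if tag == "e"]
        for d in depts:
            for e in emps:
                # select d.dname, d.loc, e.empno, e.ename, e.hiredate
                yield d[1], d[2], e[0], e[1], e[2]

result = list(reduce_phase(shuffle(map_phase())))
for r in result:
    print(r)
```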

2. Hive Partitions

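Purpose of Hive partitions

* Hive divides data into partitions so that queries can avoid full table scans. Skipping data a query does not need improves efficiency.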

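Difference between Hive and MySQL partitions

* MySQL partitions on columns that belong to the table, whereas Hive partition columns are declared outside the table's regular column list.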

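Characteristics of Hive partitioning

* A Hive partition column is a pseudo-column: it is not stored in the data files, but it can be queried and filtered like a regular column.

* Partition column names are case-insensitive.

* A table can be partitioned, and partitions can themselves be partitioned (multi-level partitioning); a table can have many partitions.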

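What to partition by

* It depends on the business: any attribute that cleanly separates the data will do, for example year, month, day, region, or gender.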

Partition keyword

* partitioned by(column)

What a partition is physically

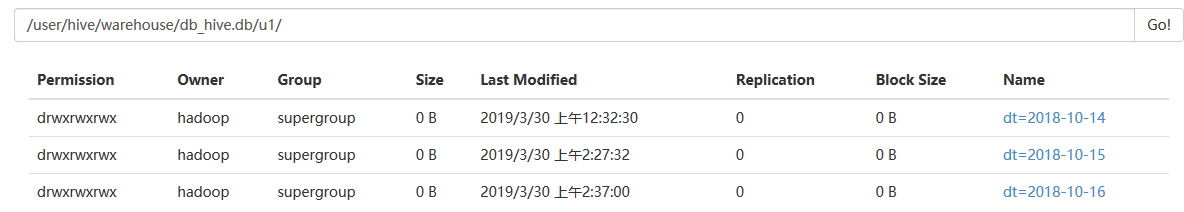

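* A partition is a subdirectory created under the table directory (or under a parent partition's directory), and it is named column=value.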
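Below is a minimal Python sketch of that naming rule: each partition column adds one column=value directory level. The table locations under /user/hive/warehouse assume the default warehouse directory and the default database. Special characters in values, which Hive escapes, are not handled. `partition_path` is a hypothetical helper.

```python
from posixpath import join

def partition_path(table_dir, spec):
    """Build a partition directory from an ordered list of (column, value) pairs."""
    # One directory level per partition column, named column=value.
    levels = [f"{col}={val}" for col, val in spec]
    return join(table_dir, *levels)

print(partition_path("/user/hive/warehouse/u1", [("dt", "2018-10-14")]))
# /user/hive/warehouse/u1/dt=2018-10-14
print(partition_path("/user/hive/warehouse/u2", [("month", 9), ("day", 14)]))
# /user/hive/warehouse/u2/month=9/day=14
```

The second call shows the two-level case: a partition of a partition is simply a nested directory.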

Create a partitioned table:

create table if not exists u1(
  id int,
  name string,
  age int)
partitioned by(dt string)
row format delimited fields terminated by ' '
stored as textfile;

Prepare the data:

[hadoop@master tmp]$ more u1.txt
1 xm1 16
2 xm2 18
3 xm3 22

Load the data:

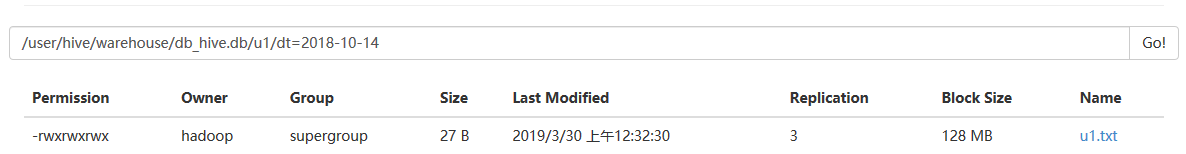

load data local inpath '/home/hadoop/tmp/u1.txt' into table u1 partition(dt="2018-10-14");

Query:

hive> select * from u1;
OK
1 xm1 16 2018-10-14
2 xm2 18 2018-10-14
3 xm3 22 2018-10-14
Time taken: 5.919 seconds, Fetched: 3 row(s)

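Query a single partition (this assumes u1.txt was also loaded into partition dt="2018-10-15", a step not shown above):

hive> select * from u1 where dt='2018-10-15';
OK
1 xm1 16 2018-10-15
2 xm2 18 2018-10-15
3 xm3 22 2018-10-15
Time taken: 0.413 seconds, Fetched: 3 row(s)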
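Each partition is a separate directory, so a filter on the partition column lets Hive skip whole directories instead of scanning the full table. This is called partition pruning. The Python sketch below models the idea with a hypothetical in-memory dict that maps each partition value to its rows. It is a simplified illustration, not how Hive plans queries.

```python
# Hypothetical in-memory stand-in for table u1: partition value -> rows in that directory.
u1 = {
    "2018-10-14": [(1, "xm1", 16), (2, "xm2", 18), (3, "xm3", 22)],
    "2018-10-15": [(1, "xm1", 16), (2, "xm2", 18), (3, "xm3", 22)],
}

def scan(table, dt=None):
    """Return (rows, partitions_read); a dt filter restricts which partitions are read."""
    selected = [p for p in sorted(table) if dt is None or p == dt]
    # The partition value is appended to each row, like the dt pseudo-column.
    rows = [row + (p,) for p in selected for row in table[p]]
    return rows, selected

rows, read = scan(u1, dt="2018-10-15")
print(len(rows), read)  # 3 ['2018-10-15']
```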

Two-level partitions in Hive

Create table u2:

create table if not exists u2(id int,name string,age int)
partitioned by(month int,day int)
row format delimited fields terminated by ' '
stored as textfile;

Import the data:

load data local inpath '/home/hadoop/tmp/u2.txt' into table u2 partition(month=9,day=14);

Query the data:

hive> select * from u2;
OK
1 xm1 16 9 14
2 xm2 18 9 14
Time taken: 0.303 seconds, Fetched: 2 row(s)

Modifying partitions:

List the partitions:

hive> show partitions u1;
OK
dt=2018-10-14
dt=2018-10-15

Add a partition:

hive> alter table u1 add partition(dt="2018-10-16");
OK

List the partitions again to see the new one:

hive> show partitions u1;
OK
dt=2018-10-14
dt=2018-10-15
dt=2018-10-16
Time taken: 0.171 seconds, Fetched: 3 row(s)

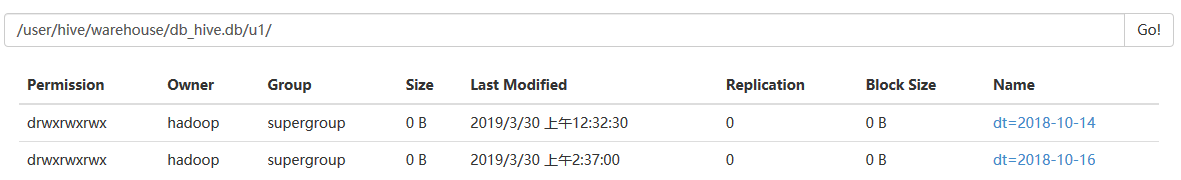

Drop a partition:

hive> alter table u1 drop partition(dt="2018-10-15");
Dropped the partition dt=2018-10-15
OK
Time taken: 0.576 seconds
hive> select * from u1;
OK
1 xm1 16 2018-10-14
2 xm2 18 2018-10-14
3 xm3 22 2018-10-14
Time taken: 0.321 seconds, Fetched: 3 row(s)

3. Hive Dynamic Partitions

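The Hive configuration file hive-site.xml contains the following dynamic-partition parameters. They are shown here in the key=value form accepted by set:

hive.exec.dynamic.partition=true;                   -- whether dynamic partitioning is allowed
hive.exec.dynamic.partition.mode=strict/nonstrict;  -- dynamic partition mode
  strict: strict mode; at least one partition column must be static (given a fixed value)
  nonstrict: non-strict mode; all partition columns may be dynamic
hive.exec.max.dynamic.partitions=1000;              -- maximum number of dynamic partitions in total
hive.exec.max.dynamic.partitions.pernode=100;       -- maximum number of dynamic partitions per node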
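The Python sketch below models two checks these settings imply for an insert. In strict mode, at least one partition column must have a static value. The number of partitions an insert creates must also stay within the limit. `check_dynamic_insert` is a hypothetical helper, and the per-node limit is not modelled. The strict-mode message reuses the Hive error text shown later in this section, while the limit message is made up for illustration.

```python
def check_dynamic_insert(spec, new_partitions, mode="strict", max_partitions=1000):
    """Validate a partition spec such as {"month": None, "day": None}.

    None marks a dynamic partition column; any other value is static.
    new_partitions is the set of partition values the insert would create.
    """
    if mode == "strict" and all(v is None for v in spec.values()):
        raise ValueError(
            "Dynamic partition strict mode requires at least one static partition column.")
    if len(new_partitions) > max_partitions:
        # Illustrative message, not Hive's exact wording.
        raise ValueError(f"too many dynamic partitions: {len(new_partitions)} > {max_partitions}")
    return True

try:
    check_dynamic_insert({"month": None, "day": None}, {(9, 14)})
except ValueError as err:
    print(err)  # Dynamic partition strict mode requires at least one static partition column.

print(check_dynamic_insert({"month": None, "day": None}, {(9, 14)}, mode="nonstrict"))  # True
```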

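Create a dynamic-partition table

A dynamic-partition table is created with the same statement as a static-partition table. The difference is in loading: a static partition can be loaded from a local file, whereas dynamic partitions must be filled with a from … insert into statement.

create table if not exists u3(id int,name string,age int)
partitioned by(month int,day int)
row format delimited fields terminated by ' '
stored as textfile;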

Import the data by loading the rows of u2 into u3:

from u2 insert into table u3 partition(month,day) select id,name,age,month,day;

FAILED: SemanticException [Error 10096]: Dynamic partition strict mode requires at least one static partition column. To turn this off set hive.exec.dynamic.partition.mode=nonstrict

Solution:

To insert with every partition column dynamic, set hive.exec.dynamic.partition.mode=nonstrict:

hive> set hive.exec.dynamic.partition.mode;

hive.exec.dynamic.partition.mode=strict

hive> set hive.exec.dynamic.partition.mode=nonstrict;

Then run the insert again, and it succeeds.

Query:

hive> select * from u3;
OK
1 xm1 16 9 14
2 xm2 18 9 14
Time taken: 0.451 seconds, Fetched: 2 row(s)
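Consider the statement partition(month,day) select id,name,age,month,day. Its last two select columns supply, in order, the values for the dynamic partition columns. Each row is written to the partition that those values name. The Python sketch below models that routing. `route_rows` is a hypothetical helper, not Hive code.

```python
from collections import defaultdict

def route_rows(rows, partition_cols):
    """Send each row to a partition keyed by its trailing partition-column values."""
    n = len(partition_cols)
    if n == 0:
        raise ValueError("at least one partition column is required")
    partitions = defaultdict(list)
    for row in rows:
        data, part_values = row[:-n], row[-n:]
        # Partition directory name, e.g. month=9/day=14
        key = "/".join(f"{c}={v}" for c, v in zip(partition_cols, part_values))
        partitions[key].append(data)
    return dict(partitions)

# Rows as produced by: select id, name, age, month, day from u2
u2_rows = [(1, "xm1", 16, 9, 14), (2, "xm2", 18, 9, 14)]
print(route_rows(u2_rows, ["month", "day"]))
# {'month=9/day=14': [(1, 'xm1', 16), (2, 'xm2', 18)]}
```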

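Hive Bucketing

Purpose of bucketing

* Divides data at a finer granularity, makes sampling queries efficient, and can improve query performance.

* Note: for workloads such as sampling or joins on the bucketing column, bucketing can give higher query efficiency than partitioning alone.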

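Bucketing principle and keyword

* The bucketing column is a regular column inside the table. By default, Hive hashes the bucketing column's value and takes the result modulo the total number of buckets; the remainder is the bucket number. Rows are spread across the buckets, but the buckets do not necessarily hold the same number of rows.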
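Below is a minimal Python sketch of that rule for the int column id. It assumes Hive's original hashing scheme (bucketing_version 1), where an int hashes to its own value and the bucket index is (hash & Integer.MAX_VALUE) % number_of_buckets. Newer Hive releases may use a Murmur3-based hash instead, so treat this as a model of the u4 example in this section rather than of every version. `bucket_for` and `assign_buckets` are hypothetical helpers.

```python
from collections import defaultdict

INT_MAX = 0x7FFFFFFF  # Java Integer.MAX_VALUE

def bucket_for(value, num_buckets):
    """0-based bucket index for an int key, assuming hash(int) == the int itself."""
    return (value & INT_MAX) % num_buckets

def assign_buckets(ids, num_buckets):
    """Group ids by bucket index, listing buckets in index order."""
    buckets = defaultdict(list)
    for i in ids:
        buckets[bucket_for(i, num_buckets)].append(i)
    return {k: buckets[k] for k in sorted(buckets)}

print(assign_buckets(range(1, 10), 4))
# {0: [4, 8], 1: [1, 5, 9], 2: [2, 6], 3: [3, 7]}
```

Under this assumption, ids 1 to 9 in four buckets give bucket sizes of 2, 3, 2 and 2. This matches the row grouping that select * from u4 prints for the bucket files.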

Keyword:

clustered by(id) into 4 buckets

What a bucket is physically

* Each bucket is a file created in the table directory or partition directory.

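Bucketing example

* Split into four buckets

create table if not exists u4(id int, name string, age int)
partitioned by(month int,day int)
clustered by(id) into 4 buckets
row format delimited fields terminated by ' '
stored as textfile;

Do not use load to put data into a bucketed table: load does not raise an error, but the data is not bucketed.

To insert data into a bucketed table, first run set hive.enforce.bucketing=true; (needed on Hive 1.x; from Hive 2.x onward the setting was removed and bucketing is always enforced).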

First, add the following data to table u2:

1 xm1 16
2 xm2 18
3 xm3 22
4 xh4 20
5 xh5 22
6 xh6 23
7 xh7 25
8 xh8 28
9 xh9 32

load data local inpath '/home/hadoop/tmp/u2.txt' into table u2 partition(month=9,day=14);

Load it into the bucketed table:

from u2 insert into table u4 partition(month=9,day=14) select id,name,age where month = 9 and day = 14;

2019-03-31 15:43:26,755 Stage-1 map = 0%, reduce = 0%
2019-03-31 15:43:34,241 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 0.85 sec
2019-03-31 15:43:41,681 Stage-1 map = 100%, reduce = 25%, Cumulative CPU 1.95 sec
2019-03-31 15:43:45,855 Stage-1 map = 100%, reduce = 50%, Cumulative CPU 3.21 sec
2019-03-31 15:43:47,927 Stage-1 map = 100%, reduce = 75%, Cumulative CPU 4.35 sec
2019-03-31 15:43:48,959 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 5.35 sec
MapReduce Total cumulative CPU time: 5 seconds 350 msec
Ended Job = job_1554061731326_0001
Loading data to table db_hive.u4 partition (month=9, day=14)
MapReduce Jobs Launched:
Stage-Stage-1: Map: 1 Reduce: 4 Cumulative CPU: 5.35 sec HDFS Read: 20301 HDFS Write: 405 SUCCESS
Total MapReduce CPU Time Spent: 5 seconds 350 msec

The log shows Map: 1 Reduce: 4, that is, one map task and one reduce task per bucket.

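Query buckets with tablesample(bucket x out of y on id):

* x: the bucket to start from (numbered from 1).

* y: the number of parts the data is split into for sampling; it should be a multiple or a factor of the table's total bucket count.

* x must not be greater than y.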
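The Python sketch below models which rows bucket x out of y on id returns. It assumes an int id hashes to its own value, so a row qualifies when id mod y equals x - 1. Three cases follow from this:

* When y equals the bucket count, the sample is exactly bucket x.
* When y is a factor of the bucket count, several buckets are read.
* When y is a multiple of the bucket count, only part of one bucket is returned.

`sample_bucket` is a hypothetical helper. The expected results match the u4 queries that follow.

```python
INT_MAX = 0x7FFFFFFF  # Java Integer.MAX_VALUE

def sample_bucket(ids, x, y):
    """Ids selected by TABLESAMPLE(BUCKET x OUT OF y ON id), with 1-based x."""
    if not 1 <= x <= y:
        raise ValueError("x must be between 1 and y")
    # Assumption: hash(int id) == id, as in Hive's original bucketing scheme.
    return sorted(i for i in ids if (i & INT_MAX) % y == x - 1)

ids = range(1, 10)  # ids 1..9 as loaded into u4
print(sample_bucket(ids, 1, 4))  # [4, 8]
print(sample_bucket(ids, 2, 4))  # [1, 5, 9]
print(sample_bucket(ids, 1, 2))  # [2, 4, 6, 8]
print(sample_bucket(ids, 1, 8))  # [8]
```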

hive> select * from u4;
OK
8 xh8 28 9 14
4 xh4 20 9 14
9 xh9 32 9 14
5 xh5 22 9 14
1 xm1 16 9 14
6 xh6 23 9 14
2 xm2 18 9 14
7 xh7 25 9 14
3 xm3 22 9 14
Time taken: 0.148 seconds, Fetched: 9 row(s)

hive> select * from u4 tablesample(bucket 1 out of 4 on id);
OK
8 xh8 28 9 14
4 xh4 20 9 14
Time taken: 0.149 seconds, Fetched: 2 row(s)
hive> select * from u4 tablesample(bucket 2 out of 4 on id);
OK
9 xh9 32 9 14
5 xh5 22 9 14
1 xm1 16 9 14
Time taken: 0.069 seconds, Fetched: 3 row(s)
hive> select * from u4 tablesample(bucket 1 out of 2 on id);
OK
8 xh8 28 9 14
4 xh4 20 9 14
6 xh6 23 9 14
2 xm2 18 9 14
Time taken: 0.089 seconds, Fetched: 4 row(s)
hive> select * from u4 tablesample(bucket 1 out of 8 on id) where age > 22;
OK
8 xh8 28 9 14
Time taken: 0.075 seconds, Fetched: 1 row(s)

Random sampling:

select * from u4 order by rand() limit 3;
OK
1 xm1 16 9 14
3 xm3 22 9 14
6 xh6 23 9 14
Time taken: 20.724 seconds, Fetched: 3 row(s)
-- this query runs a MapReduce job

hive> select * from u4 tablesample(3 rows);
OK
8 xh8 28 9 14
4 xh4 20 9 14
9 xh9 32 9 14
Time taken: 0.073 seconds, Fetched: 3 row(s)

hive> select * from u4 tablesample(30 percent);
OK
8 xh8 28 9 14
4 xh4 20 9 14
9 xh9 32 9 14
Time taken: 0.058 seconds, Fetched: 3 row(s)

hive> select * from u4 tablesample(3G);
OK
8 xh8 28 9 14
4 xh4 20 9 14
9 xh9 32 9 14
5 xh5 22 9 14
1 xm1 16 9 14
6 xh6 23 9 14
2 xm2 18 9 14
7 xh7 25 9 14
3 xm3 22 9 14
Time taken: 0.069 seconds, Fetched: 9 row(s)

hive> select * from u4 tablesample(3K);
OK
8 xh8 28 9 14
4 xh4 20 9 14
9 xh9 32 9 14
5 xh5 22 9 14
1 xm1 16 9 14
6 xh6 23 9 14
2 xm2 18 9 14
7 xh7 25 9 14
3 xm3 22 9 14
Time taken: 0.058 seconds, Fetched: 9 row(s)

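* Partitioning vs. bucketing

* Partitioning uses columns outside the table's column list; bucketing uses columns inside it.

* Partitions can be loaded with load, whereas bucketed tables must be loaded with insert into.

* Partitioning is common; bucketing is used less often.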

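Importing data into Hive

* load from the local file system

* load from HDFS

* insert into

* location: point the table at an existing directory

* like: clone an existing table

* ctas statement (create table as select)

* copy data files manually into the table directory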

Exporting data from Hive

* insert into (into another table)

* insert overwrite local directory: export to a local directory

* insert overwrite directory: export to a directory on HDFS
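By default, with no row format clause, insert overwrite [local] directory writes text files that separate fields with the \001 (Ctrl-A) character and write NULL as \N. The Python sketch below reads such output back into rows. `read_export_lines` is a hypothetical helper. To read a real exported file, pass an open file object, for example `with open(path) as f: rows = read_export_lines(f)`, where `path` points at one of the files Hive wrote.

```python
def read_export_lines(lines, sep="\x01", null_marker="\\N"):
    """Parse rows written by INSERT OVERWRITE [LOCAL] DIRECTORY with the default format."""
    rows = []
    for line in lines:
        fields = line.rstrip("\n").split(sep)
        # Hive writes NULL as \N by default; map it to None.
        rows.append([None if f == null_marker else f for f in fields])
    return rows

sample = ["1\x01xm1\x0116\n", "2\x01xm2\x01\\N\n"]
print(read_export_lines(sample))
# [['1', 'xm1', '16'], ['2', 'xm2', None]]
```

All values come back as strings. Converting them to the column types is left to the caller, because the exported files carry no schema.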

Export to a file (-S runs the CLI in silent mode and -e executes the quoted statements):

hive -S -e "use gp1801;select * from u2" > /home/out/02/result

浙公网安备 33010602011771号
