Installing and Configuring Spark

Environment:

Operating system: CentOS 7 (64-bit), 3 machines

centos7-1 192.168.111.10 master

centos7-2 192.168.111.11 slave1

centos7-3 192.168.111.12 slave2

1. Install the JDK and configure the JDK environment variables

https://www.cnblogs.com/zhangjiahao/p/8551362.html

2. Install and configure Scala

https://www.cnblogs.com/zhangjiahao/p/11689268.html

3. Install Spark

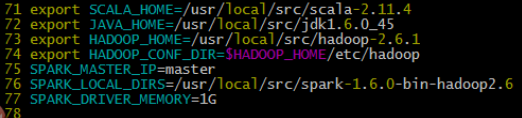

cp spark-env.sh.template spark-env.sh

Edit spark-env.sh so that it contains the following:

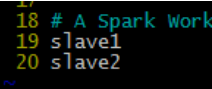
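For readers who cannot see the screenshot, here is a minimal sketch of what a spark-env.sh for this three-node layout might contain. It is not a transcription of the image: the JDK path is a placeholder, the worker sizing values are arbitrary examples, and the sketch assumes that Spark 2.x reads `SPARK_MASTER_HOST` (older guides use `SPARK_MASTER_IP`).

```shell
# spark-env.sh (sketch only; values are assumptions, not the author's settings)

# Placeholder: point this at the JDK installed in step 1.
export JAVA_HOME=/usr/local/src/jdk

# The master node from the environment table above.
export SPARK_MASTER_HOST=master
# 7077 is the default standalone master port.
export SPARK_MASTER_PORT=7077

# Example per-worker limits; tune to the machines.
export SPARK_WORKER_CORES=1
export SPARK_WORKER_MEMORY=1g
```

The same file has to be present on every node, which is why the whole directory is copied to the workers later.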

cp slaves.template slaves

Edit slaves so that it contains the following:
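The slaves file lists one worker hostname per line. Given the environment above, the file would presumably name slave1 and slave2; the sketch below writes exactly that and assumes it is run from the Spark conf directory. It also assumes the master should not run a worker itself, so the `localhost` entry from the template is dropped.

```shell
# Run from the Spark conf directory.
# Overwrites ./slaves with one worker hostname per line.
cat > slaves <<'EOF'
slave1
slave2
EOF
```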

4. Distribute the configured Spark installation directory to the slave1 and slave2 nodes

scp -r /usr/local/src/spark-2.0.2-bin-hadoop2.6 root@slave1:/usr/local/src

(Do the same for slave2, replacing slave1 with slave2.)
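The two copies can also be generated in a loop. The following is a sketch under a few assumptions: the hostnames slave1 and slave2 resolve from the master (for example through /etc/hosts entries for the addresses listed above), and root can SSH to both workers. The function name `distribute_commands` is made up for this example; it only prints the commands so they can be reviewed before running.

```shell
#!/usr/bin/env bash
# Sketch: build the scp command for every worker node.
SPARK_DIR=/usr/local/src/spark-2.0.2-bin-hadoop2.6
WORKERS="slave1 slave2"

# Hypothetical helper: prints one scp command per worker (dry run, nothing is copied).
distribute_commands() {
  for host in $WORKERS; do
    echo "scp -r $SPARK_DIR root@$host:/usr/local/src"
  done
}

distribute_commands
```

Once the printed commands look right, piping the output to `sh` (`distribute_commands | sh`) would execute them.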

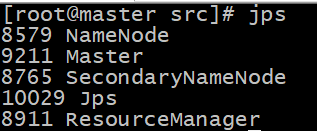

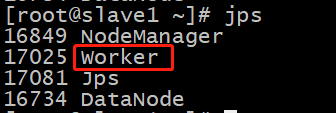

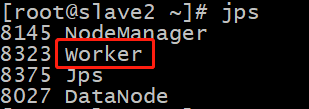

Start Spark (run on master, from the Spark installation directory)

./sbin/start-all.sh
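To check that the cluster came up, one option is to look for the `Master` process on the master node and a `Worker` process on each slave with the JDK's `jps` tool. The helper below is a small sketch for that; `has_daemon` is a made-up name, and it assumes the usual `jps` output of one `<pid> <name>` pair per line.

```shell
# Hypothetical helper: reads a jps-style process list on stdin and
# succeeds only if a process with exactly the given name is listed.
has_daemon() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Usage on the master:
#   jps | has_daemon Master && echo "Master is running"
# Usage for a worker (assumes SSH access as root):
#   ssh root@slave1 jps | has_daemon Worker && echo "Worker on slave1 is running"
```

With default settings, the standalone master also serves a web UI on port 8080 (http://master:8080 in this setup), which should list both workers.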