# Incremental Crawlers

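Concept: monitor a website for data updates and crawl only the data that is new since the last run.

Core idea: deduplication.

Implementation: a Redis set does the job.

-- How is deduplication done with Redis? --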

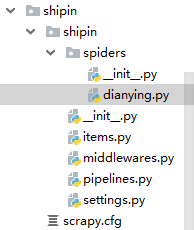
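The core check can be sketched in a few lines of Python. This assumes a redis-py style client whose `sadd(key, member)` returns the number of members actually added. To keep the example runnable without a Redis server, it uses a hypothetical in-memory stand-in, `FakeRedisSet`, that mimics only that return value; with a real server you would pass `redis.Redis(host='127.0.0.1', port=6379)` instead. `filter_new` is likewise a hypothetical helper name.

```python
from typing import Dict, Iterable, List, Set


class FakeRedisSet:
    """Hypothetical in-memory stand-in for the SADD behaviour of a Redis client."""

    def __init__(self) -> None:
        self._sets: Dict[str, Set[str]] = {}

    def sadd(self, key: str, *members: str) -> int:
        # Like Redis SADD: return how many members were newly added.
        s = self._sets.setdefault(key, set())
        added = 0
        for m in members:
            if m not in s:
                s.add(m)
                added += 1
        return added


def filter_new(conn, key: str, urls: Iterable[str]) -> List[str]:
    """Return only the URLs that were not yet recorded under `key`."""
    new_urls = []
    for url in urls:
        # sadd == 1: first time this URL is seen, so crawl it
        # sadd == 0: already recorded, so skip it
        if conn.sadd(key, url) == 1:
            new_urls.append(url)
    return new_urls


conn = FakeRedisSet()
first_run = filter_new(conn, "detail_urls", ["/a.html", "/b.html"])
second_run = filter_new(conn, "detail_urls", ["/b.html", "/c.html"])
print(first_run)   # ['/a.html', '/b.html']
print(second_run)  # ['/c.html']
```

SADD checks membership and inserts in one atomic command, so two crawler processes sharing the same set cannot both treat the same URL as new.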

# Crawling a movie site's updated data (URL deduplication): https://www.4567tv.tv/frim/index1.html

# The code below uses http://www.922dyy.com/dianying/dongzuopian/ as the start page

    # spider.py (spider file)
    # -*- coding: utf-8 -*-
    import scrapy
    from scrapy.linkextractors import LinkExtractor
    from scrapy.spiders import CrawlSpider, Rule
    from redis import Redis
    from shipin.items import ShipinItem


    class DianyingSpider(CrawlSpider):
        conn = Redis(host='127.0.0.1', port=6379)  # Redis connection object
        name = 'dianying'
        # allowed_domains = ['www.xx.com']
        start_urls = ['http://www.922dyy.com/dianying/dongzuopian/']

        # Only extract pagination URLs; set follow=True to crawl every page
        rules = (
            Rule(LinkExtractor(allow=r'/dongzuopian/index\d+\.html'), callback='parse_item', follow=False),
        )

        def parse_item(self, response):
            # Parse the movie detail-page URLs listed on the current page
            li_list = response.xpath('/html/body/div[2]/div[2]/div[2]/ul/li')
            for li in li_list:
                href = li.xpath('./div/a/@href').extract_first()
                if not href:
                    continue  # skip entries without a link
                # Resolve the href against the page URL to get an absolute URL
                detail_url = response.urljoin(href)
                # sadd returns 1: URL was not in the set (never requested)
                # sadd returns 0: URL was already in the set (already requested)
                ex = self.conn.sadd('mp4_detail_url', detail_url)
                if ex == 1:
                    print('New data to crawl...')
                    yield scrapy.Request(url=detail_url, callback=self.parse_detail)
                else:
                    print('No new data to crawl')

        def parse_detail(self, response):
            name = response.xpath('//*[@id="film_name"]/text()').extract_first()
            m_type = response.xpath('//*[@id="left_info"]/p[1]/text()').extract_first()
            print(name, '--', m_type)
            item = ShipinItem()  # instantiate the item
            item['name'] = name
            item['m_type'] = m_type
            yield item
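Which pages reach `parse_item` is decided by the `allow` pattern of the `LinkExtractor`, which Scrapy applies as a regular-expression search against each extracted absolute URL. The sketch below reproduces only that matching step with Python's `re` module; `matching_links` and the sample URLs are hypothetical. Note that the start URL itself does not match, and in a standard `CrawlSpider` the response for a start URL goes to `parse_start_url` rather than to a rule's callback.

```python
import re
from typing import List

# Same allow pattern as the Rule in the spider above
ALLOW = re.compile(r"/dongzuopian/index\d+\.html")


def matching_links(urls: List[str]) -> List[str]:
    """Keep URLs the allow pattern accepts (a search anywhere in the URL, not a full match)."""
    return [u for u in urls if ALLOW.search(u)]


# Hypothetical links found on a listing page
links = [
    "http://www.922dyy.com/dianying/dongzuopian/",
    "http://www.922dyy.com/dianying/dongzuopian/index2.html",
    "http://www.922dyy.com/dianying/dongzuopian/index10.html",
    "http://www.922dyy.com/dianying/xijupian/index2.html",
]
print(matching_links(links))
```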

    # items.py
    # -*- coding: utf-8 -*-
    import scrapy


    class ShipinItem(scrapy.Item):
        name = scrapy.Field()
        m_type = scrapy.Field()

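    # pipelines.py (item pipeline)
    # -*- coding: utf-8 -*-
    import json


    class ShipinPipeline(object):
        def process_item(self, item, spider):
            conn = spider.conn  # reuse the spider's Redis connection
            dic = {
                'name': item['name'],
                'm_type': item['m_type'],
            }
            # redis-py 3.0+ raises DataError for dict values, so serialize first;
            # JSON (unlike str(dic)) is easy to parse back when reading the list
            conn.lpush('movie_data', json.dumps(dic, ensure_ascii=False))
            return item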
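Redis stores strings (bytes), not Python dicts; redis-py 3.0 and later raise a `DataError` when a dict is passed to `lpush`, which is why the item is converted before being pushed. A minimal sketch, assuming JSON as the storage format, of encoding the item dict and recovering it after reading it back (redis-py returns bytes unless the client uses `decode_responses=True`). The helper names and the sample values are hypothetical.

```python
import json
from typing import Dict, Union


def encode_item(item: Dict[str, str]) -> str:
    # ensure_ascii=False stores non-ASCII text as-is instead of \uXXXX escapes
    return json.dumps(item, ensure_ascii=False)


def decode_item(raw: Union[str, bytes]) -> Dict[str, str]:
    # redis-py returns bytes by default, so decode before parsing
    if isinstance(raw, bytes):
        raw = raw.decode("utf-8")
    return json.loads(raw)


sample = {"name": "示例", "m_type": "动作片"}  # hypothetical item
stored = encode_item(sample)
print(decode_item(stored.encode("utf-8")))
```

`str(dic)` also produces a storable string, but reading it back then needs `ast.literal_eval`; JSON can be read by any language.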

    # settings.py
    # Enable the pipeline (lower numbers run earlier)
    ITEM_PIPELINES = {
        'shipin.pipelines.ShipinPipeline': 300,
    }

    BOT_NAME = 'shipin'

    SPIDER_MODULES = ['shipin.spiders']
    NEWSPIDER_MODULE = 'shipin.spiders'

    USER_AGENT = 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/73.0.3683.86 Safari/537.36'
    ROBOTSTXT_OBEY = False
    LOG_LEVEL = 'ERROR'  # only show errors in the log

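Workflow:

1. `scrapy startproject Name`
2. `cd Name`
3. `scrapy genspider -t crawl <spider_name> www.example.com` (the `crawl` template generates a `CrawlSpider`)
4. `scrapy crawl <spider_name>` to run it; on later runs only records not yet in the Redis set are processed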

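Note: the incremental crawler checks whether a URL is already in the set. `sadd` (a set command) returns 1 when the URL was not in the set yet, meaning it is new data, and 0 when it was already there.

`lpush`, `lrange`, and `llen` are Redis list commands; each takes the list's key as its first argument.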
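The pipelines write with `lpush`, and the stored results can be inspected with `llen` and `lrange`. One detail worth knowing: LPUSH inserts at the head of the list, so `lrange key 0 -1` returns the newest record first. The hypothetical stand-in below mimics just these three commands so the ordering can be shown without a server; with redis-py the calls would be `conn.lpush(key, value)`, `conn.llen(key)` and `conn.lrange(key, 0, -1)`.

```python
from typing import Dict, List


class FakeRedisList:
    """Hypothetical in-memory stand-in for LPUSH / LLEN / LRANGE."""

    def __init__(self) -> None:
        self._lists: Dict[str, List[str]] = {}

    def lpush(self, key: str, *values: str) -> int:
        lst = self._lists.setdefault(key, [])
        for v in values:
            lst.insert(0, v)  # LPUSH inserts each value at the head
        return len(lst)       # Redis returns the new list length

    def llen(self, key: str) -> int:
        return len(self._lists.get(key, []))

    def lrange(self, key: str, start: int, end: int) -> List[str]:
        lst = self._lists.get(key, [])
        # The Redis end index is inclusive; -1 means "up to the last element"
        stop = None if end == -1 else end + 1
        return lst[start:stop]


conn = FakeRedisList()
conn.lpush("movie_data", "first")
conn.lpush("movie_data", "second")
print(conn.llen("movie_data"))           # 2
print(conn.lrange("movie_data", 0, -1))  # ['second', 'first']
```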

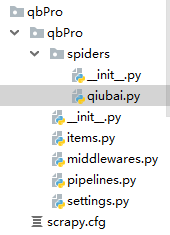

Below is an incremental crawl of the Qiushibaike jokes page (author / joke text).

    # spider.py (spider file)
    # -*- coding: utf-8 -*-
    import hashlib

    import scrapy
    from redis import Redis
    from qbPro.items import QbproItem


    # Only crawls the current page
    class QiubaiSpider(scrapy.Spider):
        name = 'qiubai'
        conn = Redis(host='127.0.0.1', port=6379)
        start_urls = ['https://www.qiushibaike.com/text/']

        def parse(self, response):
            div_list = response.xpath('//*[@id="content-left"]/div')
            for div in div_list:
                # default='' avoids calling .strip() on None when no author is found
                author = div.xpath('./div/a[2]/h2/text() | ./div/span[2]/h2/text()').extract_first(default='').strip()
                content = div.xpath('./a/div/span[1]//text()').extract()
                content = ''.join(content).replace('\n', '')

                item = QbproItem()  # instantiate the item
                item['author'] = author
                item['content'] = content

                # Data fingerprint: a unique identifier for one scraped record
                data = author + content
                hash_key = hashlib.sha256(data.encode()).hexdigest()
                # Store the fingerprint in the set; sadd returns 1 only if it is new
                ex = self.conn.sadd('hash_key', hash_key)
                if ex == 1:
                    print('Data updated')
                    yield item
                else:
                    print('No data updates')
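When records have no stable per-record URL, the record's own content serves as its identity: hash the fields and store the digest in the set instead of a URL. A standalone sketch of that fingerprint step with `hashlib.sha256`; the `fingerprint` helper is hypothetical, and the separator between fields is an addition not present in the spider above, included so that, for example, author `"ab"` + content `"c"` and author `"a"` + content `"bc"` do not produce the same input string.

```python
import hashlib


def fingerprint(author: str, content: str, sep: str = "\x1f") -> str:
    # Join fields with a separator unlikely to appear in the text (ASCII unit separator),
    # so different splits between fields cannot collapse to the same string before hashing.
    data = sep.join([author, content])
    return hashlib.sha256(data.encode("utf-8")).hexdigest()


a = fingerprint("ab", "c")
b = fingerprint("a", "bc")
print(a == b)                       # False
print(a == fingerprint("ab", "c"))  # True
print(len(a))                       # 64
```

The digest is always 64 hex characters regardless of how long the joke is, which keeps the Redis set compact.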

    # items.py
    # -*- coding: utf-8 -*-
    import scrapy


    class QbproItem(scrapy.Item):
        author = scrapy.Field()
        content = scrapy.Field()

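    # pipelines.py (item pipeline)
    # -*- coding: utf-8 -*-
    import json


    class QbproPipeline(object):
        def process_item(self, item, spider):
            conn = spider.conn  # reuse the spider's Redis connection
            dic = {
                'author': item['author'],
                'content': item['content'],
            }
            # Serialize the dict before pushing; Redis list values must be strings/bytes
            conn.lpush('qiubai', json.dumps(dic, ensure_ascii=False))
            return item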

    # settings.py
    ITEM_PIPELINES = {
        'qbPro.pipelines.QbproPipeline': 300,
    }

    USER_AGENT = 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/73.0.3683.86 Safari/537.36'
    ROBOTSTXT_OBEY = False
    LOG_LEVEL = 'ERROR'