import re

import urllib.request, urllib.error

import xlwt

import sqlite3

from bs4 import BeautifulSoup

# 指定url内容

baseurl = "https://movie.douban.com/top250?start="

def askURL(baseurl):

# 伪造请求头功能

headers = {

"user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.132 Safari/537.36"}

# 封装对象

request = urllib.request.Request(baseurl, headers=headers)

html = ""

try:

response = urllib.request.urlopen(request)

html = response.read().decode("utf-8")

except urllib.error.URLError as e:

if hasattr(e, "code"):

print(e.code)

if hasattr(e, "reason"):

print(e.reason)

return html

# 创建电影链接正则表达式对象,表示规则(字符串的模式):以<a href="开头 + 一组(.*?) + 以">结尾

findLink = re.compile(r'<a href="(.*?)">')

# 电影图片链接规则:以<img开头 + 中间匹配多个.* + 以src="开头 + 一组(.*?)", 忽略换行符re.S'

findImgSrc = re.compile(r'<img.*src="(.*?)"', re.S)

# 电影片名规则:以<span class="title"> + (.*?) + </span>

findTitle = re.compile(r'<span class="title">(.*?)</span>')

# 电影评分规则:<span class="rating_num" property="v:average"> + (.*?) + </span>

findRating = re.compile(r'<span class="rating_num" property="v:average">(.*?)</span>')

# 评价人数规则版一:<span> + (.*?) + </span>

# findJudge = re.compile(r'<span>(.*?)</span>')

# 评价人数规则版二:<span> + (.*?) + </span>

findJudge = re.compile(r'<span>(\d*)人评价</span>')

# 概况规则:<span class="inq"> + (.*?) + </span>

findInq = re.compile(r'<span class="inq">(.*?)</span>')

# 相关内容规则:<p class=""> + (.*?) + </p>

findBd = re.compile(r'<p class="">(.*?)</p>', re.S)

def getData(baseurl):

# 定义获取网页数据功能

datalist = []

for i in range(0, 10):

url = baseurl + str(i * 25)

html = askURL(url)

# 逐一解析数据

soup = BeautifulSoup(html, "html.parser")

# 查找符合要求标签是div同时属性class_是item的内容

for item in soup.find_all("div", class_="item"):

# 保存一部电影

data = []

# 电影的所有信息转字符串

item = str(item)

# 电影详情链接

link = re.findall(findLink, item)[0]

data.append(link)

# 图片

img = re.findall(findImgSrc, item)[0]

data.append(img)

# 标题

title = re.findall(findTitle, item)

# 标题判断中英文

if (len(title) == 2):

ctitle = title[0]

data.append(ctitle)

otitle = title[1].replace("/", "")

data.append(otitle)

else:

data.append(title[0])

# 无英文名时用空格填充

data.append(" ")

# 评分数

rating = re.findall(findRating, item)[0]

data.append(rating)

# 评价数

judge = re.findall(findJudge, item)[0]

data.append(judge)

# 概况

inq = re.findall(findInq, item)

# 概况判断是否空

if len(inq) != 0:

inq = inq[0].replace("。", "")

data.append(inq)

else:

data.append(" ")

# 相关内容

bd = re.findall(findBd, item)[0]

# 相关内容替换规则:'<br + 中间包含多个部分(\s+)? + /> + 不止(\s+)?',替换成" ",来着bd

bd = re.sub('<br(\s+)?/>(\s+)?', ' ', bd)

bd = re.sub('/', ' ', bd)

data.append(bd.strip())

datalist.append(data)

return datalist

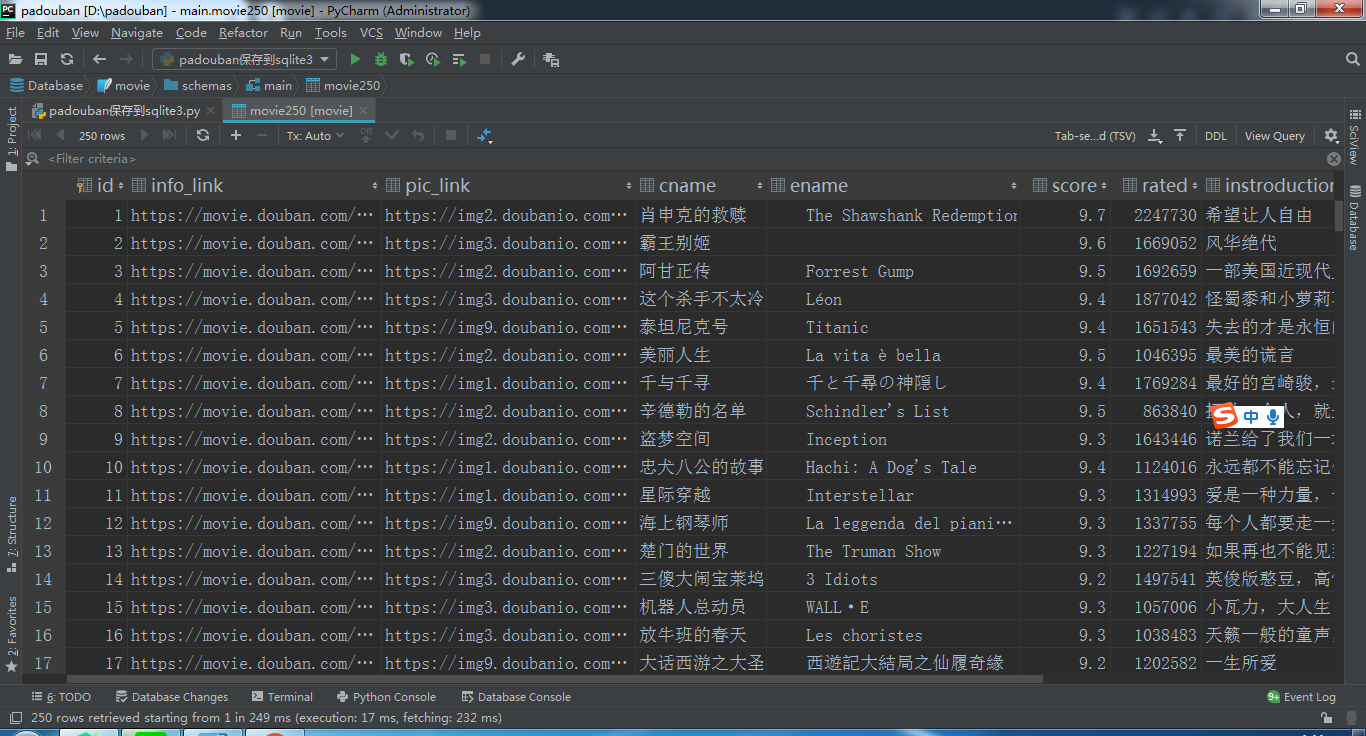

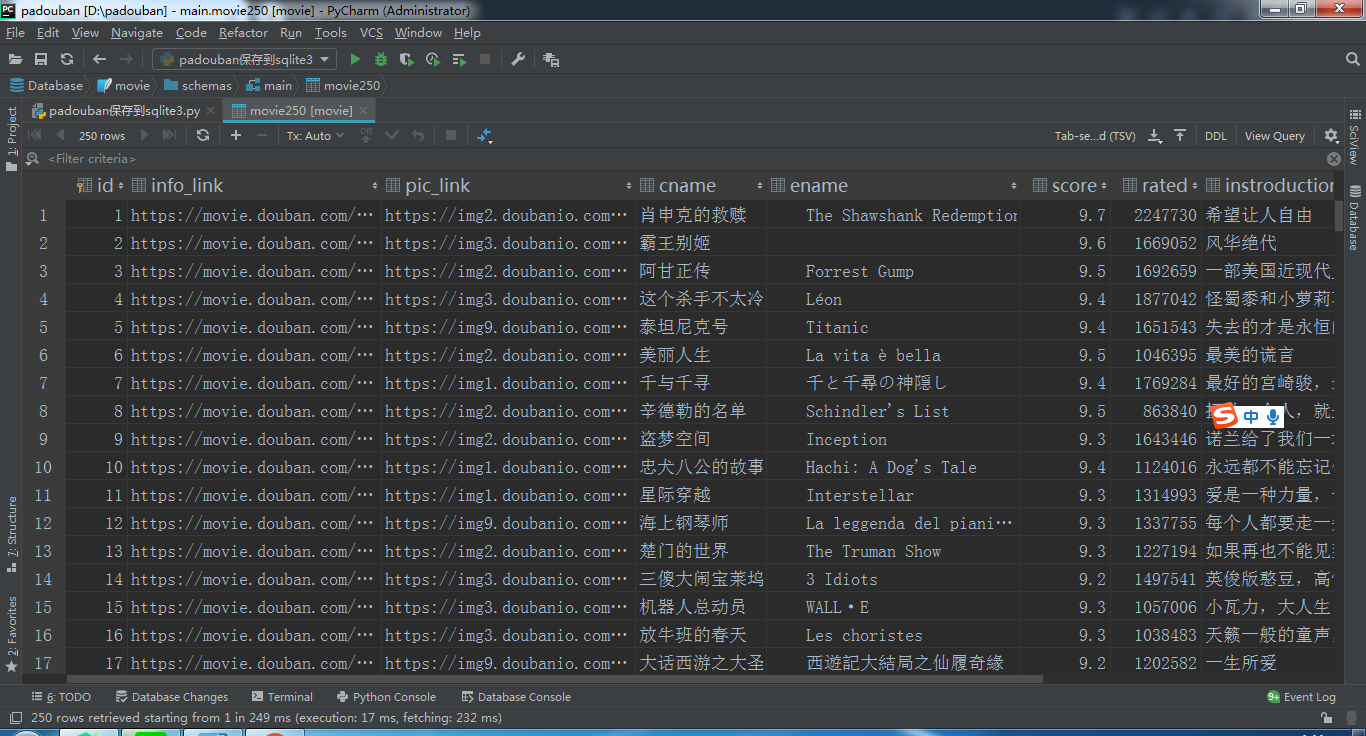

# 初始化数据库相关

def init_db(dbpath):

sql = '''

create table movie250(

id integer primary key autoincrement,

info_link text,

pic_link text,

cname varchar,

ename varchar,

score numeric,

rated numeric,

instroduction text,

info text

);

'''

conn = sqlite3.connect(dbpath)

cursor = conn.cursor()

cursor.execute(sql)

conn.commit()

conn.close()

# 保存数据功能

def saveData(datalist, dbpath):

# 调用创建数据库

init_db(dbpath)

print("开始保存")

# 连接数据库部分

conn = sqlite3.connect(dbpath)

cur = conn.cursor()

# 获取数据

for data in datalist:

for index in range(len(data)):

if index == 4 or index == 5:

continue

data[index] = '"' + data[index] + '"'

sql = '''

insert into movie250(

info_link,pic_link,cname,ename,score,rated,instroduction,info)

values(%s)''' % ",".join(data)

print(sql)

cur.execute(sql)

conn.commit()

cur.close()

conn.close()

if __name__ == '__main__':

# 获取网页数据

datalist = getData(baseurl)

# 保存数据

dbpath = "movie.db"

saveData(datalist, dbpath)

print("保存成功")

【推荐】编程新体验,更懂你的AI,立即体验豆包MarsCode编程助手

【推荐】凌霞软件回馈社区,博客园 & 1Panel & Halo 联合会员上线

【推荐】抖音旗下AI助手豆包,你的智能百科全书,全免费不限次数

【推荐】轻量又高性能的 SSH 工具 IShell:AI 加持,快人一步

· 深入理解 Mybatis 分库分表执行原理

· 如何打造一个高并发系统?

· .NET Core GC压缩(compact_phase)底层原理浅谈

· 现代计算机视觉入门之:什么是图片特征编码

· .NET 9 new features-C#13新的锁类型和语义

· Sdcb Chats 技术博客:数据库 ID 选型的曲折之路 - 从 Guid 到自增 ID,再到

· 语音处理 开源项目 EchoSharp

· 《HelloGitHub》第 106 期

· Spring AI + Ollama 实现 deepseek-r1 的API服务和调用

· 使用 Dify + LLM 构建精确任务处理应用

2020-01-21 读取excel表格.py

2020-01-21 allure的其他参数

2020-01-21 生成allure测试报告:

2020-01-21 Java - 配置Java环境

2020-01-21 Java JDK for Windows

2020-01-21 生成测试报告:

2020-01-21 预处理和后处理