HDFS Command-Line Operations

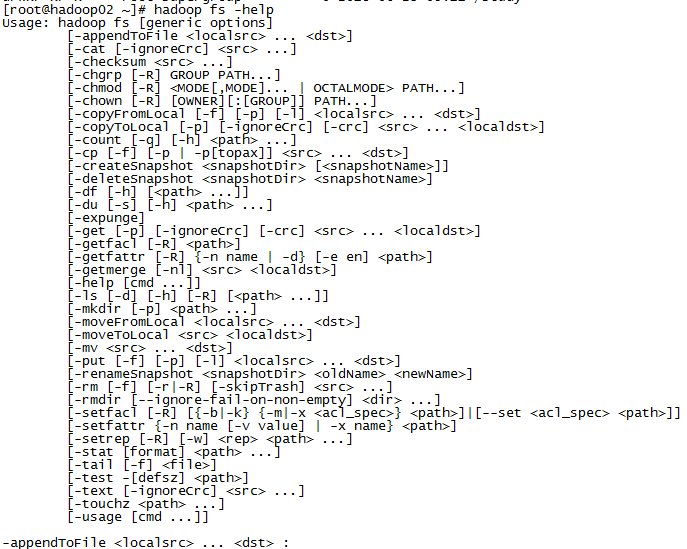

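In a cluster environment, you can operate HDFS from the command line on any node. Many HDFS commands mirror the Linux file system commands, except that each one is prefixed with hadoop fs. Run hadoop fs -help to see the available HDFS commands: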

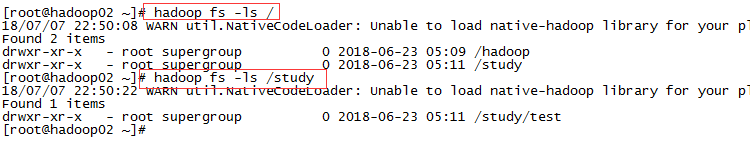

1. List a directory:

hadoop fs -ls /

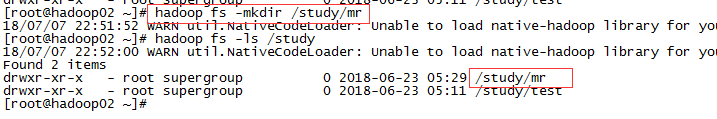

2. Create a directory:

hadoop fs -mkdir /study/mr

Adding -p creates nested directories, including any missing parents:

hadoop fs -mkdir -p /study/mr/wordcount/input

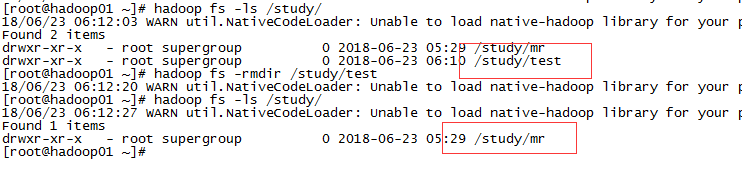
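As a minimal sketch, directory creation can be wrapped in a check so that a script creates the directory only when it is missing. The helper name ensure_hdfs_dir is made up for illustration and is not part of Hadoop; the sketch relies on hadoop fs -test -d, which exits with status 0 when the path is an existing directory.

```shell
# Create an HDFS directory only if it is not already there.
# ensure_hdfs_dir is a hypothetical helper name, not a Hadoop command.
ensure_hdfs_dir() {
    dir="$1"
    # "hadoop fs -test -d" exits with status 0 when the path is a directory.
    if hadoop fs -test -d "$dir"; then
        echo "exists: $dir"
    else
        # -p also creates any missing parent directories.
        hadoop fs -mkdir -p "$dir" && echo "created: $dir"
    fi
}
```

For example, ensure_hdfs_dir /study/mr/wordcount/input could be called at the start of a job script.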

3. Delete a directory (-rmdir removes only an empty directory):

hadoop fs -rmdir /study/test
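Because -rmdir refuses to remove a directory that still has contents, a script may want to report that case clearly. The following is a sketch only: remove_hdfs_dir is a hypothetical helper name, and it assumes that hadoop fs -rmdir returns a non-zero exit status when it fails.

```shell
# Remove an empty HDFS directory and report what happened.
# remove_hdfs_dir is a hypothetical helper name, not a Hadoop command.
remove_hdfs_dir() {
    dir="$1"
    # Assumption: -rmdir exits non-zero if the directory is missing or not empty.
    if hadoop fs -rmdir "$dir"; then
        echo "removed empty directory: $dir"
    else
        # "hadoop fs -rm -r" deletes a directory together with its contents.
        echo "not removed: $dir (use 'hadoop fs -rm -r $dir' to delete recursively)" >&2
        return 1
    fi
}
```

The recursive delete is only suggested in the message, not run, so nothing is removed by accident.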

4. Upload a file:

hadoop fs -put install.log /study/test

You can also use copyFromLocal to copy a file from the local file system to HDFS.

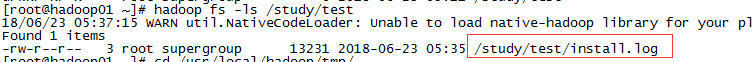

After the upload finishes, check the result with hadoop fs -ls /study/test

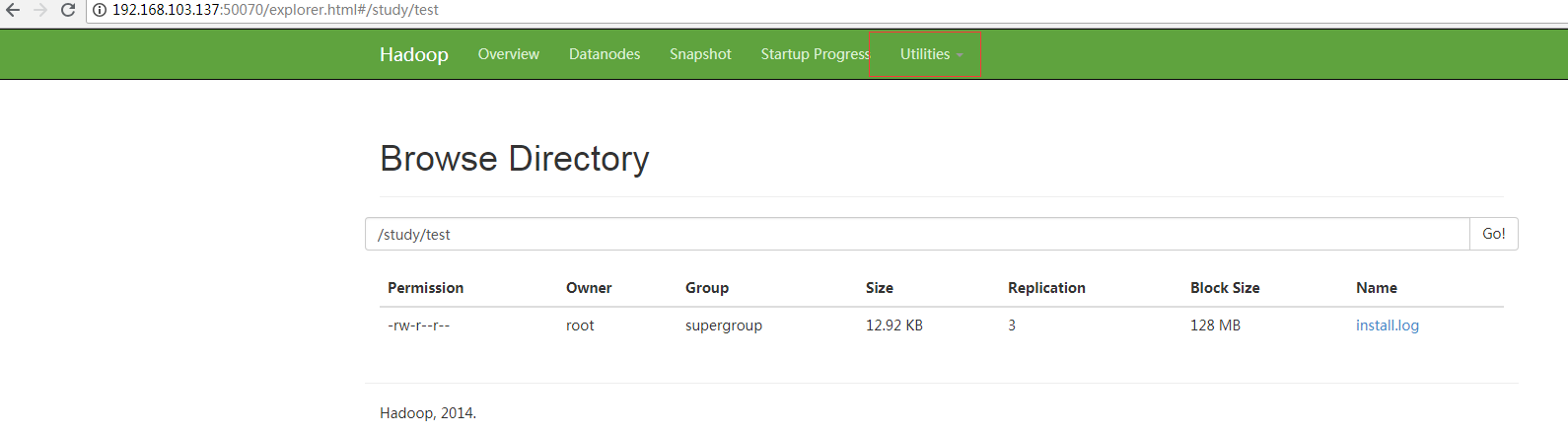
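The upload and the follow-up listing can be combined into one step. This is a rough sketch rather than a fixed recipe: upload_to_hdfs is a hypothetical helper name, and creating the target directory first with -mkdir -p is an added precaution that the original steps do not include.

```shell
# Upload a local file to an HDFS directory, then list the directory.
# upload_to_hdfs is a hypothetical helper name, not a Hadoop command.
upload_to_hdfs() {
    local_file="$1"
    hdfs_dir="$2"
    # Stop early if the local file does not exist.
    if [ ! -f "$local_file" ]; then
        echo "no such local file: $local_file" >&2
        return 1
    fi
    # Added precaution: create the target directory if it is missing.
    hadoop fs -mkdir -p "$hdfs_dir" || return 1
    hadoop fs -put "$local_file" "$hdfs_dir" || return 1
    # List the directory so the uploaded file can be confirmed.
    hadoop fs -ls "$hdfs_dir"
}
```

With the file from this walkthrough, the call would be upload_to_hdfs install.log /study/test.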

The file can also be viewed through the web UI at http://192.168.103.137:50070

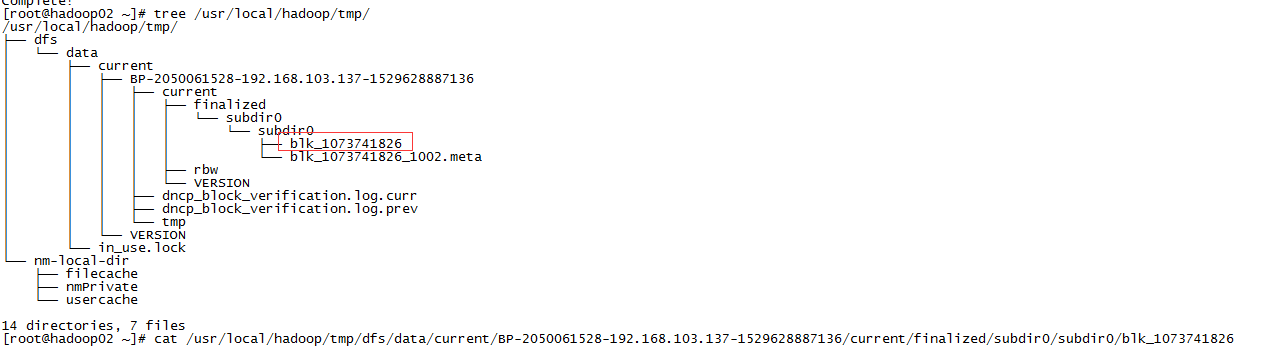

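The uploaded file has 3 replicas (note that a displayed replica count of 3 does not necessarily mean that 3 replica files actually exist), one on each of the three DataNode nodes. The uploaded data is stored under /usr/local/hadoop/tmp/dfs/data/current

Viewing the blk_1073741826 file with cat shows the same content as the originally uploaded file.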

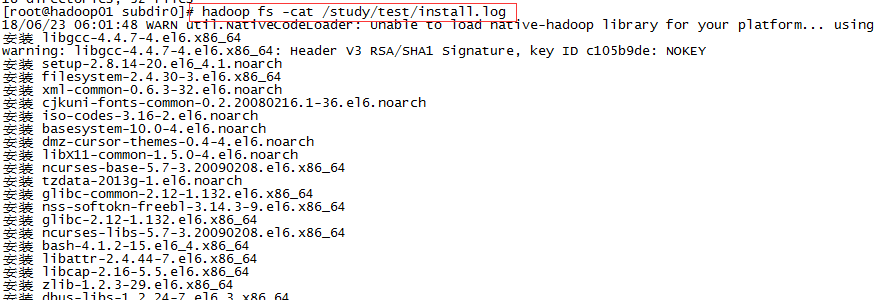

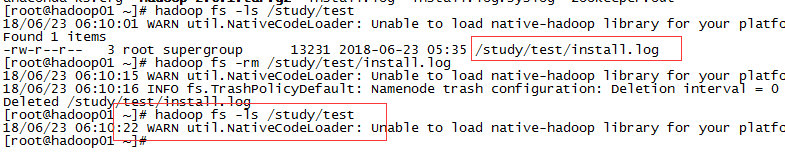
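Whether the replicas really exist, and on which DataNodes, can also be checked from the command line instead of browsing the data directory. The sketch below assumes the hdfs launcher is on the PATH (it ships with Hadoop 2.x); show_replicas is a hypothetical helper name.

```shell
# Print the replication factor of a file and where its blocks are stored.
# show_replicas is a hypothetical helper name, not a Hadoop command.
show_replicas() {
    path="$1"
    # %r prints the replication factor recorded for the file.
    echo "replication factor: $(hadoop fs -stat %r "$path")"
    # fsck lists each block of the file and the DataNodes holding a replica.
    hdfs fsck "$path" -files -blocks -locations
}
```

Running show_replicas /study/test/install.log should list the block IDs of the file, which can be compared with the blk_ file names found under the DataNode data directory.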

5. View a file:

hadoop fs -cat /study/test/install.log

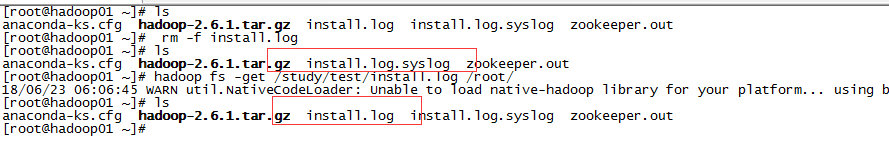

6. Download a file:

hadoop fs -get /study/test/install.log /root/

You can also use copyToLocal to copy a file from HDFS to the local file system.
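To confirm that a downloaded copy matches what is stored in HDFS, the two can be compared by checksum. This is a sketch under a few assumptions: download_and_verify is a hypothetical helper name, md5sum (GNU coreutils) is available on the node, and the file is small enough that streaming it a second time with -cat is acceptable.

```shell
# Download a file from HDFS and compare it with the HDFS copy by MD5.
# download_and_verify is a hypothetical helper name, not a Hadoop command.
download_and_verify() {
    hdfs_file="$1"
    local_dir="$2"
    hadoop fs -get "$hdfs_file" "$local_dir" || return 1
    # Path of the downloaded copy (a trailing slash on local_dir is dropped).
    local_copy="${local_dir%/}/$(basename "$hdfs_file")"
    # Checksum of the content streamed from HDFS.
    remote_sum=$(hadoop fs -cat "$hdfs_file" | md5sum | cut -d' ' -f1)
    # Checksum of the local copy.
    local_sum=$(md5sum < "$local_copy" | cut -d' ' -f1)
    if [ "$remote_sum" = "$local_sum" ]; then
        echo "OK: $local_copy matches $hdfs_file"
    else
        echo "MISMATCH: $local_copy differs from $hdfs_file" >&2
        return 1
    fi
}
```

For the example above, the call would be download_and_verify /study/test/install.log /root/.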

7. Delete a file:

hadoop fs -rm /study/test/install.log
