java.net.ConnectException: Your endpoint configuration is wrong; For more details see: http://wiki.apache.org/hadoop/UnsetHostnameOrPort

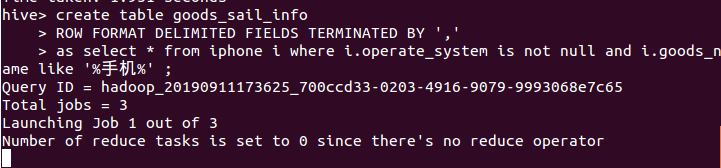

今天使用在hive中建表,并在hive中将查询到的语句插入到新表中时,一直开在如图所示位置不动

等待了20多分钟,然后报了这么个错

java.net.ConnectException: Your endpoint configuration is wrong; For more details see: http://wiki.apache.org/hadoop/UnsetHostnameOrPort

at sun.reflect.GeneratedConstructorAccessor49.newInstance(Unknown Source) at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45) at java.lang.reflect.Constructor.newInstance(Constructor.java:423) at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:824) at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:750) at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1497) at org.apache.hadoop.ipc.Client.call(Client.java:1439) at org.apache.hadoop.ipc.Client.call(Client.java:1349) at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:227) at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116) at com.sun.proxy.$Proxy77.getNewApplication(Unknown Source) at org.apache.hadoop.yarn.api.impl.pb.client.ApplicationClientProtocolPBClientImpl.getNewApplication(ApplicationClientProtocolPBClientImpl.java:262) at sun.reflect.GeneratedMethodAccessor5.invoke(Unknown Source) at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:498) at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422) at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165) at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157) at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95) at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359) at com.sun.proxy.$Proxy78.getNewApplication(Unknown Source) at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.getNewApplication(YarnClientImpl.java:236) at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.createApplication(YarnClientImpl.java:244) at org.apache.hadoop.mapred.ResourceMgrDelegate.getNewJobID(ResourceMgrDelegate.java:193) at org.apache.hadoop.mapred.YARNRunner.getNewJobID(YARNRunner.java:265) at org.apache.hadoop.mapreduce.JobSubmitter.submitJobInternal(JobSubmitter.java:159) at org.apache.hadoop.mapreduce.Job$11.run(Job.java:1570) at org.apache.hadoop.mapreduce.Job$11.run(Job.java:1567) at java.security.AccessController.doPrivileged(Native Method) at javax.security.auth.Subject.doAs(Subject.java:422) at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1889) at org.apache.hadoop.mapreduce.Job.submit(Job.java:1567) at org.apache.hadoop.mapred.JobClient$1.run(JobClient.java:576) at org.apache.hadoop.mapred.JobClient$1.run(JobClient.java:571) at java.security.AccessController.doPrivileged(Native Method) at javax.security.auth.Subject.doAs(Subject.java:422) at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1889) at org.apache.hadoop.mapred.JobClient.submitJobInternal(JobClient.java:571) at org.apache.hadoop.mapred.JobClient.submitJob(JobClient.java:562) at org.apache.hadoop.hive.ql.exec.mr.ExecDriver.execute(ExecDriver.java:423) at org.apache.hadoop.hive.ql.exec.mr.MapRedTask.execute(MapRedTask.java:149) at org.apache.hadoop.hive.ql.exec.Task.executeTask(Task.java:205) at org.apache.hadoop.hive.ql.exec.TaskRunner.runSequential(TaskRunner.java:97) at org.apache.hadoop.hive.ql.Driver.launchTask(Driver.java:2664) at org.apache.hadoop.hive.ql.Driver.execute(Driver.java:2335) at org.apache.hadoop.hive.ql.Driver.runInternal(Driver.java:2011) at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1709) at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1703) at org.apache.hadoop.hive.ql.reexec.ReExecDriver.run(ReExecDriver.java:157) at org.apache.hadoop.hive.ql.reexec.ReExecDriver.run(ReExecDriver.java:218) at org.apache.hadoop.hive.cli.CliDriver.processLocalCmd(CliDriver.java:239) at org.apache.hadoop.hive.cli.CliDriver.processCmd(CliDriver.java:188) at org.apache.hadoop.hive.cli.CliDriver.processLine(CliDriver.java:402) at org.apache.hadoop.hive.cli.CliDriver.executeDriver(CliDriver.java:821) at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:759) at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:683) at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:498) at org.apache.hadoop.util.RunJar.run(RunJar.java:239) at org.apache.hadoop.util.RunJar.main(RunJar.java:153) Caused by: java.net.ConnectException: 拒绝连接 at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method) at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717) at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206) at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531) at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:687) at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:790) at org.apache.hadoop.ipc.Client$Connection.access$3500(Client.java:411) at org.apache.hadoop.ipc.Client.getConnection(Client.java:1554) at org.apache.hadoop.ipc.Client.call(Client.java:1385) ... 55 more Job Submission failed with exception 'java.net.ConnectException(Your endpoint configuration is wrong; For more details see: http://wiki.apache.org/hadoop/UnsetHostnameOrPort)' FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.mr.MapRedTask. Your endpoint configuration is wrong; For more details see: http://wiki.apache.org/hadoop/UnsetHostnameOrPort

在百度的过程中我参考了一篇博客,他的说法是由于没有启动historyserver引起的,于是我就按照他的方法修改了mapred-site.xml,结果还是不行,

于是我就想到了我之前配置过yarn,在开启Hadoop的时候我输入的命令是./sbin/start-dfs.sh,并没有输入./sbin/start-all.sh

于是我就开启了yarn,结果就可以正常的查询插入了。

参考博客:https://www.cnblogs.com/cac2020/p/10274979.html

yarn基本概念:https://baike.baidu.com/item/yarn/16075826?fr=aladdin