Spark: Reading multiple files in an HDFS directory with the Java API

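Requirement:

Loading a single large file in Spark performed poorly, so the file was split into several smaller files that were then uploaded to HDFS. How, then, can Spark 2.2 load all of the files in a directory?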
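The post does not show how the large file was split. The following is only a minimal sketch of one way to do it locally before uploading, assuming a line-oriented text file in UTF-8. The class name `LineFileSplitter`, the `part-%05d.csv` naming scheme, and the lines-per-part parameter are all hypothetical and not from the original setup.

```java
import java.io.BufferedReader;
import java.io.BufferedWriter;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: splits a line-oriented file into smaller part files.
class LineFileSplitter {

    /** Name of the n-th part file, e.g. part-00003.csv (assumed naming scheme). */
    public static String partName(int index) {
        return String.format("part-%05d.csv", index);
    }

    /**
     * Streams the source file line by line and writes at most linesPerPart
     * lines into each part file under outDir. Returns the part files in order.
     */
    public static List<Path> split(Path source, Path outDir, int linesPerPart) throws IOException {
        if (linesPerPart <= 0) {
            throw new IllegalArgumentException("linesPerPart must be positive");
        }
        Files.createDirectories(outDir);
        List<Path> parts = new ArrayList<>();
        try (BufferedReader reader = Files.newBufferedReader(source, StandardCharsets.UTF_8)) {
            BufferedWriter writer = null;
            int linesInPart = 0;
            String line;
            try {
                while ((line = reader.readLine()) != null) {
                    // Start a new part file for the first line and whenever the current one is full.
                    if (writer == null || linesInPart == linesPerPart) {
                        if (writer != null) {
                            writer.close();
                        }
                        Path part = outDir.resolve(partName(parts.size()));
                        parts.add(part);
                        writer = Files.newBufferedWriter(part, StandardCharsets.UTF_8);
                        linesInPart = 0;
                    }
                    writer.write(line);
                    writer.newLine();
                    linesInPart++;
                }
            } finally {
                if (writer != null) {
                    writer.close();
                }
            }
        }
        return parts;
    }

    public static void main(String[] args) throws IOException {
        // Arguments: <source file> <output directory> <lines per part>
        List<Path> parts = split(Paths.get(args[0]), Paths.get(args[1]), Integer.parseInt(args[2]));
        parts.forEach(System.out::println);
    }
}
```

The resulting part files could then be uploaded into a single HDFS directory, for example with `hdfs dfs -put`, and that directory is what gets passed to the Spark job as its path argument.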

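    import java.util.ArrayList;
    import java.util.List;

    import org.apache.spark.sql.Dataset;
    import org.apache.spark.sql.Row;
    import org.apache.spark.sql.SparkSession;

    import scala.collection.JavaConversions;
    import scala.collection.Seq;

    public class SparkJob {
        public static void main(String[] args) {
            // filePath may point to an HDFS directory; Spark then reads every file in it.
            String filePath = args[0];

            // Initialize the Spark session (SparkHelper is a helper class not shown in this post).
            String appName = "Streaming-MRO-Load-Multiple-CSV-Files-Test";
            SparkSession sparkSession = SparkHelper.getInstance().getAndConfigureSparkSession(appName);

            // Read multiple CSV files into one Dataset and apply the column names.
            try {
                Dataset<Row> rows = sparkSession.read().option("delimiter", "|").option("header", false)
                        .csv(filePath).toDF(getNCellSchema());
                rows.show(10);
            } catch (Exception ex) {
                ex.printStackTrace();
            }

            // Read the same files as plain text, one row per line.
            try {
                Dataset<String> rows = sparkSession.read().textFile(filePath);
                rows.show(10);
            } catch (Exception ex) {
                ex.printStackTrace();
            }

            SparkHelper.getInstance().dispose();
        }

        private static Seq<String> getNCellSchema() {
            // The full column list is elided in the original post.
            String ncellColumns = "m_id,m_eid,m_int_id,.....";
            List<String> columns = new ArrayList<String>();
            for (String column : ncellColumns.split(",")) {
                columns.add(column);
            }
            return JavaConversions.asScalaBuffer(columns);
        }
    }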

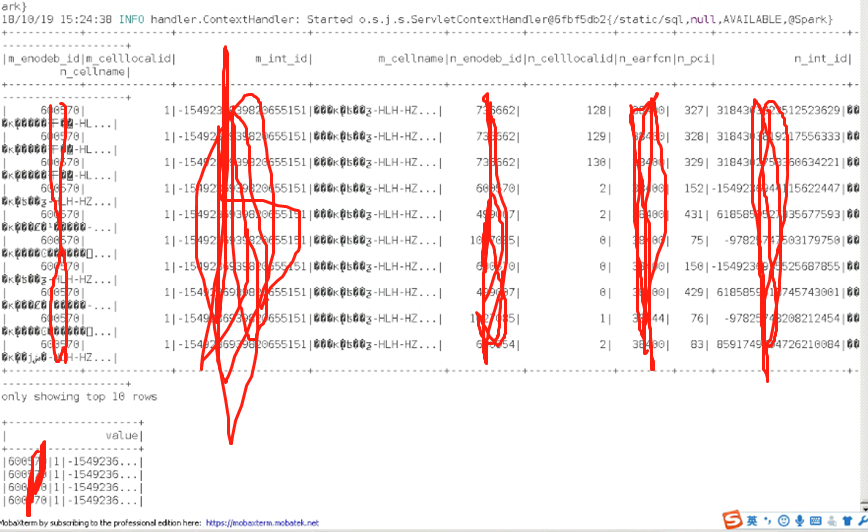

Test results:

