Oracle 19.3 RAC on Red Hat 7.6: Installation Best Practices

2020-03-19 16:26 狂澜与玉昆0950

This article walks through installing Oracle 19.3 RAC on Red Hat Linux 7.6, step by step.

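It draws on the technical blogs and WeChat official accounts of senior engineers 赵庆辉 (Zhao Qinghui), 赵靖宇 (Zhao Jingyu) and others.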

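I. Pre-implementation preparation

- 1.1 Install the operating system on the servers

- 1.2 Oracle installation media

- 1.3 Shared storage planning

- 1.4 Network planning

II. Pre-installation preparation

- 2.0 Server checks

- 2.1 Synchronize the system time on each node

- 2.2 Disable the firewall and SELinux on each node

- 2.3 Check the required system packages on each node

- 2.4 Configure /etc/hosts on each node

- 2.5 Create the required users and groups on each node

- 2.6 Create the installation directories on each node

- 2.7 Modify the system configuration files on each node

- 2.8 Set the user environment variables on each node

III. Grid Infrastructure (GI) installation

- 3.1 Unzip the GI installation package

- 3.2 Install and configure Xmanager

- 3.3 Grant permissions on the shared storage LUNs

- 3.4 Configure GI through the Xmanager GUI

- 3.5 Verify the status with crsctl

- 3.6 Test cluster failover (FAILED OVER)

- 4.1 Create disk groups with ASMCA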

Installation environment for this article: Red Hat 7.6 + Oracle 19.3 GI & RAC

-----------------------------------------------------------------------------------------------------------------------------------

---Environment overview

| Category | Item | Description |
|---|---|---|
| Host | Operating system | Red Hat Enterprise Linux Server release 7.6 (Maipo) |
| | OS kernel version | Linux version 3.10.0-957.el7.x86_64 |
| | Hardware | Intel(R) Core(TM) i5-8250U CPU @ 1.60GHz, 2 CPUs |
| | RAC public NIC | Ethernet, 1000 Mb |
| | CRS network NIC | Ethernet, 1000 Mb |
| | ASM network NIC | Ethernet, 1000 Mb |
| | Network IP address settings | # Public IP |
| Database | Oracle version | Oracle 19.3 64-bit |
| | Run mode | RAC |
| | Oracle ASM | SYS 3 GB |
| | Database name | C193, AL32UTF8 character set |
| | Instance names | C1931 / C1932 |
| | Database user | grid |
| | Local installation path | /u01, 50 GB |

---Database system plan

| Item \ Server | mm1903 | mm1904 |
|---|---|---|
| Public IP (pub-ip) | 192.168.56.56 | 192.168.56.57 |
| Virtual IP (vip) | 192.168.56.58 | 192.168.56.59 |
| Private IP (priv-ip) | 20.20.20.56 | 20.20.20.57 |
| ASM network address | 20.20.20.56 | 20.20.20.57 |
| SCAN IP | 192.168.56.60, 192.168.56.61, 192.168.56.62 (cluster-wide) | |
| SCAN name | mm1903-scan | |
| Cluster name | mm1903-cluster | |
| Cluster database name | C193 | |
| Cluster database instance names | C1931 | C1932 |
| OCR/voting disk group | +SYS: /dev/asm-diskb, /dev/asm-diskc, /dev/asm-diskd | |
| Archive/flashback disk group | /dev/asm-diske | |
| Data disk group | /dev/asm-diskg | |
| Clusterware version | 19.3 | |
| Database version | 19.3 | |
| Clusterware ORACLE_BASE | /u01/app/grid | |
| Clusterware ORACLE_HOME | /u01/app/19.3.0/grid | |
| Database ORACLE_BASE | /u01/app/oracle | |
| Database ORACLE_HOME | /u01/app/oracle/product/19.3.0/db_1 | |
| Listener port | 1521 | |
| Database character set | AL32UTF8 | |
| National character set | AL16UTF16 | |
| Database block size | 8K | |

|

-----------------------------------------------------------------------------------------------------------------------------------


I. Pre-implementation preparation

1.1 Install the operating system on the servers

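Create two identical servers in Oracle VM VirtualBox and install the same Linux release on both. Keep the OS installation DVD or ISO image.

Here I use Red Hat Linux 7.6, and the system directory sizes are identical on both nodes. Place the Red Hat Linux 7.6 ISO image on the servers; it is used later to configure a local yum repository.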
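Here is a minimal sketch of the local yum repository mentioned above. It assumes the ISO is loop-mounted at /mnt/rhel76 and uses a hypothetical repository id, rhel76-local; neither comes from the article. The function only writes the .repo file. Mounting the ISO needs root, so it appears only as comments.

```shell
#!/usr/bin/env bash
# Sketch: write a yum .repo file that points at a loop-mounted RHEL 7.6 ISO.
# Assumptions (not from the article): mount point /mnt/rhel76, repo id "rhel76-local".
# Mount the ISO first, as root, for example:
#   mkdir -p /mnt/rhel76
#   mount -o loop,ro /path/to/rhel-7.6.iso /mnt/rhel76

write_local_repo() {
    local mount_point="$1"   # directory where the ISO is mounted
    local repo_file="$2"     # normally /etc/yum.repos.d/<name>.repo
    # gpgcheck=0 keeps the sketch short; enable signature checks in production.
    cat > "$repo_file" <<EOF
[rhel76-local]
name=RHEL 7.6 local ISO
baseurl=file://${mount_point}
enabled=1
gpgcheck=0
EOF
}
```

For example, run `write_local_repo /mnt/rhel76 /etc/yum.repos.d/rhel76-local.repo` as root, then `yum clean all && yum repolist` to confirm that the repository is visible.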

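1.2 Oracle installation media

The two Oracle 19.3 zip packages (over 6 GB in total, so make sure there is enough space):

LINUX.X64_193000_grid_home.zip

LINUX.X64_193000_db_home.zip

Download them from the Oracle website. They only need to be uploaded to node 1.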

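1.3 Shared storage planning

ASMFD (ASM Filter Driver) was introduced in Oracle 12c R1. Unlike ASMLib, ASMFD filters I/O, which blocks illegal writes and so protects ASM disks from being overwritten by mistake.

Where the software stack supports it, ASMFD is therefore the preferred choice. According to Note 2034681.1, however, using ASMFD here would require upgrading the kernel and installing the GI patch for Bug 27494830.

This installation therefore uses udev instead of ASMFD.

From the storage, carve out shared LUNs that both hosts can see at the same time. Three 1 GB disks are used for OCR and voting disks, and three 12 GB disks are planned for data and the FRA.

Note: when installing GI in 19c, configuring the GIMR is optional and not selected by default. I chose not to configure it, so no space needs to be allocated for the GIMR.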

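--For udev on Red Hat 7, I created one primary partition on each disk with fdisk. I used partition number 2, only to make the partitions easy to tell apart:

| Disk | sdb | sdc | sdd | sde | sdf | sdg |
|---|---|---|---|---|---|---|
| Partition | sdb2 | sdc2 | sdd2 | sde2 | sdf2 | sdg2 |
| Size | 1G | 1G | 1G | 12G | 12G | 12G |

--On Red Hat 7, the udev rules bind to the partition of each disk. The following script reads each disk's SCSI ID (UUID) and prints the matching rule: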

    for i in b c d e f g; do
        echo "KERNEL==\"sd?2\", SUBSYSTEM==\"block\", PROGRAM==\"/usr/lib/udev/scsi_id -g -u -d /dev/\$parent\", RESULT==\"`/usr/lib/udev/scsi_id -g -u -d /dev/sd\$i`\", SYMLINK+=\"asm-disk$i\", OWNER=\"grid\", GROUP=\"asmadmin\", MODE=\"0660\""
    done

--Edit the udev rules file: vi /etc/udev/rules.d/99-oracle-asmdevices.rules

    KERNEL=="sd?2", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d /dev/$parent", RESULT=="1ATA_VBOX_HARDDISK_VBb639722b-ca23600a", SYMLINK+="asm-diskb", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd?2", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d /dev/$parent", RESULT=="1ATA_VBOX_HARDDISK_VB9decb0f2-36c6eaf9", SYMLINK+="asm-diskc", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd?2", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d /dev/$parent", RESULT=="1ATA_VBOX_HARDDISK_VBc164e474-ac1a4998", SYMLINK+="asm-diskd", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd?2", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d /dev/$parent", RESULT=="1ATA_VBOX_HARDDISK_VB630a2ffb-321550c1", SYMLINK+="asm-diske", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd?2", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d /dev/$parent", RESULT=="1ATA_VBOX_HARDDISK_VBf782becb-7f6544c2", SYMLINK+="asm-diskf", OWNER="grid", GROUP="asmadmin", MODE="0660"
    KERNEL=="sd?2", SUBSYSTEM=="block", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d /dev/$parent", RESULT=="1ATA_VBOX_HARDDISK_VB8ebd6644-450da0cd", SYMLINK+="asm-diskg", OWNER="grid", GROUP="asmadmin", MODE="0660"
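A quick consistency check can help before reloading udev or copying the rules file to the other node. This is a hedged sketch, and the function name check_udev_rules is illustrative. It flags any RESULT (SCSI ID) or SYMLINK value that appears on more than one line. That would mean two rules claim the same LUN or the same alias.

```shell
#!/usr/bin/env bash
# Sketch: sanity-check an ASM udev rules file (one rule per line).
# Prints the duplicated RESULT/SYMLINK tokens and returns 1 if any exist.
check_udev_rules() {
    local rules_file="$1"
    local dups
    # grep -o extracts every RESULT=="..." and SYMLINK+="..." token;
    # sort | uniq -d keeps only tokens that occur more than once.
    dups=$(grep -oE 'RESULT=="[^"]+"|SYMLINK\+="[^"]+"' "$rules_file" | sort | uniq -d)
    if [ -n "$dups" ]; then
        echo "duplicate entries:" >&2
        echo "$dups" >&2
        return 1
    fi
    echo "OK: $(grep -c 'SYMLINK+=' "$rules_file") rule(s), no duplicates"
}
```

Usage: `check_udev_rules /etc/udev/rules.d/99-oracle-asmdevices.rules`. With the six rules above, it should report 6 rules and no duplicates.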

--Reload the udev configuration and apply it:

    [root@mm1903 rules.d]# udevadm control --reload
    [root@mm1903 rules.d]# udevadm trigger

or

    [root@mm1903 rules.d]# /sbin/udevadm trigger --type=devices --action=change

--Confirm that the udev binding succeeded and the bound devices exist:

    [root@mm1903 ~]# ls -ltr /dev/asm-disk*
    lrwxrwxrwx. 1 root root 4 3月 18 12:55 /dev/asm-diskb -> sdb2
    lrwxrwxrwx. 1 root root 4 3月 18 12:55 /dev/asm-diskc -> sdc2
    lrwxrwxrwx. 1 root root 4 3月 18 12:55 /dev/asm-diskf -> sdf2
    lrwxrwxrwx. 1 root root 4 3月 18 12:55 /dev/asm-diske -> sde2
    lrwxrwxrwx. 1 root root 4 3月 18 12:55 /dev/asm-diskg -> sdg2
    lrwxrwxrwx. 1 root root 4 3月 18 12:55 /dev/asm-diskd -> sdd2

--Then copy /etc/udev/rules.d/99-oracle-asmdevices.rules to the other node and apply it there.

--On the second node, mm1904, running udevadm directly did not work at first. Running partprobe first and then triggering udevadm succeeds. --partprobe tells the kernel about partition table changes and asks the OS to re-read the partition table:

    [root@mm1903 ~]# partprobe /dev/sdb
    [root@mm1903 ~]# partprobe /dev/sdc
    [root@mm1903 ~]# partprobe /dev/sdd
    [root@mm1903 ~]# partprobe /dev/sde
    [root@mm1903 ~]# partprobe /dev/sdf
    [root@mm1903 ~]# partprobe /dev/sdg

--Reload the udev configuration and apply it:

    [root@mm1903 ~]# udevadm control --reload
    [root@mm1903 ~]# udevadm trigger

--Confirm that the udev binding succeeded:

    [root@mm1904 ~]# ll /dev/asm*
    lrwxrwxrwx. 1 root root 4 3月 18 12:58 /dev/asm-diskb -> sdb2
    lrwxrwxrwx. 1 root root 4 3月 18 12:58 /dev/asm-diskc -> sdc2
    lrwxrwxrwx. 1 root root 4 3月 18 12:58 /dev/asm-diskd -> sdd2
    lrwxrwxrwx. 1 root root 4 3月 18 12:58 /dev/asm-diske -> sde2
    lrwxrwxrwx. 1 root root 4 3月 18 12:58 /dev/asm-diskf -> sdf2
    lrwxrwxrwx. 1 root root 4 3月 18 12:58 /dev/asm-diskg -> sdg2

1.4 Network planning

Public and private networks.

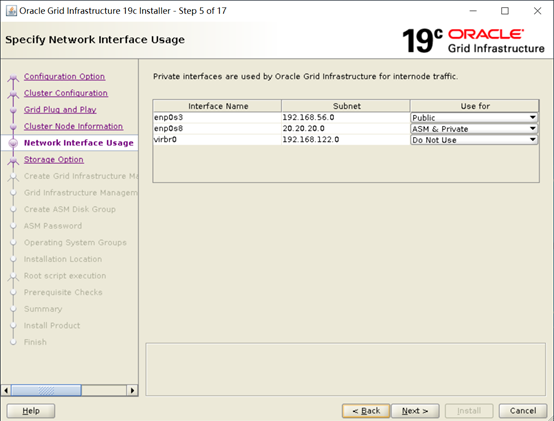

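Network assignment: in this lab, enp0s3 carries the public IP and enp0s8 carries the ASM & private IP.

In production, adapt the plan to the actual environment. Typically the public network uses OS-level bonding and the private network uses HAIP.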

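II. Pre-installation preparation

2.0 Server checks

--Check the CPU information:

    cat /proc/cpuinfo | grep "model name"

--Check the physical memory. The Oracle installation documentation requires at least 8 GB:

    cat /proc/meminfo | grep "MemTotal"

--Check the swap space:

    /usr/sbin/swapon -s
    free -m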

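According to the Oracle installation documentation, the swap space requirements are:

- 4 GB < RAM < 16 GB: swap >= RAM
- RAM >= 16 GB: swap = 16 GB

Note: if HugePages are used, subtract the memory allocated to HugePages from physical memory first, then apply the rule above.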
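The swap rule above can be written as a small function. This is a sketch only. Sizes are in MB, the HugePages amount is passed in by hand, and effective memory of 4096 MB or less is rejected because the rule quoted here does not cover it.

```shell
#!/usr/bin/env bash
# Sketch of the swap-sizing rule above (all sizes in MB).
# Usage: recommended_swap_mb <total_ram_mb> [hugepages_mb]
recommended_swap_mb() {
    local ram_mb="$1"
    local huge_mb="${2:-0}"
    # Memory reserved for HugePages is subtracted first, as the note says.
    local effective=$(( ram_mb - huge_mb ))
    if (( effective >= 16384 )); then
        echo 16384                # 16 GB or more: swap = 16 GB
    elif (( effective > 4096 )); then
        echo "$effective"         # 4 GB to 16 GB: swap >= RAM (the minimum is printed)
    else
        echo "rule above does not cover <= 4096 MB" >&2
        return 1
    fi
}
```

MemTotal in /proc/meminfo is in kB, so a live value can be passed as `recommended_swap_mb $(( $(awk '/MemTotal/ {print $2}' /proc/meminfo) / 1024 ))`.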

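--Check the file system space:

    df -k

According to the Oracle installation documentation, the file systems must provide the following:

/tmp needs at least 1 GB, and for production we recommend 10 GB or more for the temporary directory. On this host /tmp has no separate mount point and lives on the root file system, which has 50 GB, so it meets the requirement. GRID_HOME needs at least 8 GB and ORACLE_HOME at least 6.4 GB. These are only installation minimums, i.e. the space the software itself occupies. Later patching can fail, and runtime logs can fill the file systems. To avoid both, Oracle recommends allocating at least 100 GB to the GI and database installation directories.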
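To check these minimums before starting, a sketch like the following compares the free space reported by df with a required size in whole GB. has_free_gb is a hypothetical helper. Bash arithmetic limits it to integer sizes, so the 6.4 GB ORACLE_HOME minimum has to be rounded up to 7.

```shell
#!/usr/bin/env bash
# Sketch: check whether the file system holding PATH has at least NEED_GB free.
# Usage: has_free_gb <path> <need_gb>   (integer GB only)
has_free_gb() {
    local path="$1" need_gb="$2"
    local avail_kb
    # df -P prints one unwrapped line per file system; column 4 is "Available" in 1K blocks.
    avail_kb=$(df -Pk "$path" | awk 'NR == 2 {print $4}')
    if (( avail_kb >= need_gb * 1024 * 1024 )); then
        echo "OK   $path: $(( avail_kb / 1024 / 1024 )) GB free (need $need_gb GB)"
    else
        echo "LOW  $path: $(( avail_kb / 1024 / 1024 )) GB free (need $need_gb GB)"
        return 1
    fi
}
```

For example, run `has_free_gb /tmp 1` for the temporary directory and `has_free_gb /u01 100` for the recommended GI plus database allocation.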

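--Check the network interfaces:

    ifconfig

Since Oracle 11.2.0.2 the private network must support multicast. Check that the interface configuration shows the MULTICAST flag; Linux enables it by default.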
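The MULTICAST flag can also be tested from sysfs instead of reading ifconfig output by eye. This sketch relies on the standard Linux interface flag IFF_MULTICAST (0x1000). It uses this lab's private interface name, enp0s8, only as an example.

```shell
#!/usr/bin/env bash
# Sketch: test the MULTICAST flag of a network interface.
# /sys/class/net/<if>/flags holds the kernel interface flags as a hex string
# such as "0x1003"; IFF_MULTICAST is bit 0x1000.
flags_have_multicast() {
    local flags="$1"
    (( flags & 0x1000 ))          # bash evaluates the 0x... string arithmetically
}

iface_has_multicast() {
    local iface="$1"              # e.g. enp0s8, the private interconnect in this lab
    flags_have_multicast "$(cat "/sys/class/net/$iface/flags")"
}
```

Usage: `iface_has_multicast enp0s8 && echo "enp0s8: MULTICAST enabled"`.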

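--Check the OS release, kernel version and runlevel:

    cat /etc/redhat-release
    uname -r
    runlevel

The system must run at runlevel 3 or 5. To switch to runlevel 3 and save resources, run the following command. The change takes effect after a reboot.

    systemctl set-default multi-user.target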

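2.1 Synchronize the system time on each node

Synchronize the system time on every node:

--Verify that the date and time zone are correct:

    date

--Configure clock synchronization: stop the chrony service and move its configuration file aside (CTSS is used afterwards).

Oracle Clusterware requires the clocks of all cluster nodes to be synchronized. Common methods are:

1. Cluster Time Synchronization daemon (ctssd)
2. NTP or chrony (Red Hat 7.x)

We still recommend configuring the NTP service in customer environments so that all hosts keep consistent clocks. We do not recommend chronyd, because it does not change the clock directly. It only suggests speeding up or slowing down the system clock, so in some cases the effect is barely noticeable.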

--Stop and disable the chrony service:

    systemctl list-unit-files | grep chronyd
    systemctl status chronyd
    systemctl disable chronyd
    systemctl stop chronyd

--Move its configuration file aside:

    mv /etc/chrony.conf /etc/chrony.conf_bak

--Configure NTP in slew mode:

Edit /etc/sysconfig/ntpd and add the -x and -p options after -g:

    # Command line options for ntpd
    OPTIONS="-g -x -p /var/run/ntpd.pid"

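Notes:

1. ntp is not installed by default. Install it separately to get the configuration file above.
2. -x enables slew mode, which keeps the clock from stepping backward or changing abruptly. -p specifies the pid file. Both options are required.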
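A sketch such as the following confirms that the slew option actually made it into the file. Both function names are illustrative. ensure_ntpd_slew only prepends -x when it is missing and leaves the other options untouched. ntpd still has to be restarted afterwards.

```shell
#!/usr/bin/env bash
# Sketch: check for, and if needed add, the -x (slew) option in an ntpd sysconfig file.
ntpd_has_slew() {
    local conf="${1:-/etc/sysconfig/ntpd}"
    # Match OPTIONS="..." containing a standalone -x (followed by a space or the closing quote).
    grep -Eq '^OPTIONS="([^"]* )?-x( [^"]*)?"' "$conf"
}

ensure_ntpd_slew() {
    local conf="${1:-/etc/sysconfig/ntpd}"
    if ! ntpd_has_slew "$conf"; then
        # Prepend -x to the existing option list.
        sed -i 's/^OPTIONS="/OPTIONS="-x /' "$conf"
    fi
}
```

Usage (as root): run `ensure_ntpd_slew && systemctl restart ntpd`.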

References:

Oracle Linux: NTP Does Not Start Automatically After Server Reboot on OL7 (Doc ID 2422378.1)

Tips on Troubleshooting NTP / chrony Issues (Doc ID 2068875.1)

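2.2 Disable the firewall and SELinux on each node

Disable the firewall on each node:

    systemctl list-unit-files | grep firewalld
    systemctl status firewalld
    systemctl disable firewalld
    systemctl stop firewalld

Disable SELinux on each node:

    getenforce
    cat /etc/selinux/config

Either edit /etc/selinux/config by hand and set SELINUX=disabled, or use the following commands:

    sed -i '/^SELINUX=.*/ s//SELINUX=disabled/' /etc/selinux/config
    setenforce 0

setenforce 0 switches SELinux to permissive mode immediately. The SELINUX=disabled setting takes effect after the next reboot.

Finally, verify that SELinux is disabled on every node.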

2.3 Check the required software packages on each node

Once yum is configured, install the packages directly:

    yum -y install bc binutils compat-libcap1 compat-libstdc++-33 compat-libstdc++-33.i686 \
        dtrace-modules dtrace-modules-headers dtrace-modules-provider-headers dtrace-utils \
        elfutils-libelf.i686 elfutils-libelf elfutils-libelf-devel.i686 elfutils-libelf-devel \
        glibc glibc.i686 glibc-devel glibc-devel.i686 ksh libaio libaio.i686 \
        libaio-devel libaio-devel.i686 libdtrace-ctf-devel libXrender libXrender-devel \
        libX11.i686 libX11 libXau.i686 libXau libXi.i686 libXi libXtst libXtst.i686 \
        libgcc libgcc.i686 librdmacm-devel.i686 librdmacm-devel libstdc++ libstdc++.i686 \
        libstdc++-devel libstdc++-devel.i686 libxcb.i686 libxcb make nfs-utils net-tools \
        smartmontools python python-configshell python-rtslib python-six targetcli \
        gcc gcc-c++ sysstat
    yum -y install compat-libcap1 'libstdc++-devel*' 'libaio-devel*' 'compat-libstdc++-33*'

The dtrace-* packages and libdtrace-ctf-devel come from Oracle Linux (UEK) and are generally not available in Red Hat 7 repositories, so yum reports them as unavailable.
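Because the list is long, it helps to see which packages are still missing after yum finishes. This sketch relies on `rpm -q --quiet`, which prints nothing and returns non-zero for a package that is not installed. missing_packages is an illustrative name.

```shell
#!/usr/bin/env bash
# Sketch: print every package name from the argument list that rpm does not find.
missing_packages() {
    local pkg
    for pkg in "$@"; do
        # --quiet suppresses "package X is not installed"; only the exit status is used.
        rpm -q --quiet "$pkg" || echo "$pkg"
    done
}
```

Usage, with a few names from the list above: `missing_packages bc binutils compat-libcap1 ksh libaio libaio-devel sysstat`. An empty result means all of them are installed.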

2.4 Configure /etc/hosts on each node

Edit /etc/hosts:

    # Public IP
    192.168.56.56 mm1903
    192.168.56.57 mm1904
    # Virtual IP
    192.168.56.58 mm1903-vip
    192.168.56.59 mm1904-vip
    # SCAN IP
    192.168.56.60 mm1903-scan
    192.168.56.61 mm1903-scan
    192.168.56.62 mm1903-scan
    # ASM & Private IP
    20.20.20.56 mm1903-priv
    20.20.20.57 mm1904-priv
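A sketch for confirming that every name from the plan has an entry in /etc/hosts on each node. The function name is illustrative, and comment lines are ignored. It checks only that each name is present, not which address it maps to.

```shell
#!/usr/bin/env bash
# Sketch: print each host name that has no entry in the given hosts file.
# Usage: missing_host_entries <hosts_file> <name>...
missing_host_entries() {
    local hosts_file="$1"; shift
    local name
    for name in "$@"; do
        # On non-comment lines, fields 2..NF are host names and aliases.
        awk -v n="$name" '
            !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }
        ' "$hosts_file" || echo "$name"
    done
}
```

Usage: `missing_host_entries /etc/hosts mm1903 mm1904 mm1903-vip mm1904-vip mm1903-scan mm1903-priv mm1904-priv`. It should print nothing on both nodes.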

Change the hostname (preferably done by the system administrator):

--For example, set the hostname to mm1903:

    hostnamectl status
    hostnamectl set-hostname mm1903
    hostnamectl status

2.5 Create the required users and groups on each node

Create the groups and users, and set passwords for oracle and grid:

    groupadd -g 54321 oinstall
    groupadd -g 54322 dba
    groupadd -g 54323 oper
    groupadd -g 54324 backupdba
    groupadd -g 54325 dgdba
    groupadd -g 54326 kmdba
    groupadd -g 54327 asmdba
    groupadd -g 54328 asmoper
    groupadd -g 54329 asmadmin
    groupadd -g 54330 racdba
    useradd -u 54321 -g oinstall -G dba,asmdba,backupdba,dgdba,kmdba,racdba,oper oracle
    useradd -u 54322 -g oinstall -G asmadmin,asmdba,asmoper,dba grid
    echo oracle | passwd --stdin oracle
    echo oracle | passwd --stdin grid

In this test environment all passwords are set to oracle. In production, use complex passwords that meet your security policy.

2.6 Create the installation directories on each node

As root, create the installation directories on each node:

    mkdir -p /u01/app/19.3.0/grid
    mkdir -p /u01/app/grid
    mkdir -p /u01/app/oracle
    chown -R grid:oinstall /u01
    chown oracle:oinstall /u01/app/oracle
    chmod -R 775 /u01/

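2.7 Modify the system configuration files on each node

Kernel parameters: vi /etc/sysctl.conf

    fs.file-max = 6815744
    fs.aio-max-nr = 1048576
    kernel.shmall = 3145728
    kernel.shmmax = 8589934592
    kernel.shmmni = 4096
    kernel.sem = 250 32000 100 128
    net.ipv4.ip_local_port_range = 9000 65500
    net.core.rmem_default = 262144
    net.core.rmem_max = 4194304
    net.core.wmem_default = 262144
    net.core.wmem_max = 1048576
    vm.min_free_kbytes = 1048576
    net.ipv4.conf.enp0s8.rp_filter = 2

--Apply the settings:

    sysctl -p /etc/sysctl.conf

Notes:

1. rp_filter controls whether the kernel validates the source address of incoming packets: 0 means no validation, 1 strict validation, 2 loose validation. With multiple private interconnect interfaces, set it to 0 or 2. For details, see rp_filter for multiple private interconnects OL7 (Doc ID 2216652.1).
2. min_free_kbytes is the amount of memory the system keeps in reserve, in KB. We recommend reserving 1 GB.
3. shmmax is the maximum size of a single shared memory segment, which for an Oracle database is the SGA. We recommend setting it large enough. A value larger than physical memory does no harm.
4. shmall is the upper limit on the total size of all shared memory segments, measured in pages. With a single database instance it can be set to shmmax/pagesize. The page size is returned by getconf PAGESIZE, which prints 4096 on mm1904.
5. fs.aio-max-nr sets the maximum number of asynchronous I/O handles. The value 1048576 is only the minimum required for an Oracle installation. Processes open different numbers of AIO handles, and the largest observed was 4096. We therefore recommend setting this parameter to the total number of Oracle processes of all instances on the host multiplied by 4096. See What value should kernel parameter AIO-MAX-NR be set to? (Doc ID 2229798.1).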
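Notes 4 and 5 reduce to simple arithmetic, sketched below. Treating each instance's process count as an input (for example its PROCESSES parameter) is an assumption made for illustration. The function names are illustrative too.

```shell
#!/usr/bin/env bash
# Sketch of notes 4 and 5.

# shmall_pages <shmmax_bytes> [page_size]
# Single-instance rule: kernel.shmall = shmmax / page size (shmall counts pages).
shmall_pages() {
    local shmmax="$1"
    local page="${2:-$(getconf PAGESIZE)}"
    echo $(( shmmax / page ))
}

# aio_max_nr <processes_of_instance_1> [<processes_of_instance_2> ...]
# fs.aio-max-nr = (sum of Oracle processes of all instances on the host) * 4096
aio_max_nr() {
    local total=0 n
    for n in "$@"; do
        total=$(( total + n ))
    done
    echo $(( total * 4096 ))
}
```

With the kernel.shmmax used above, `shmall_pages 8589934592 4096` gives 2097152 pages. For two instances of 300 processes each, `aio_max_nr 300 300` gives 2457600.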

Set user shell limits

On both nodes, edit /etc/security/limits.conf as root and add the following:

    grid soft nproc 2047
    grid hard nproc 16384
    grid soft nofile 1024
    grid hard nofile 65536
    grid soft stack 10240
    grid hard stack 32768
    oracle soft nproc 2047
    oracle hard nproc 16384
    oracle soft nofile 1024
    oracle hard nofile 65536
    oracle soft stack 10240
    oracle hard stack 32768
    oracle hard memlock 6291456
    oracle soft memlock 6291456

memlock sets the maximum amount of memory a user may lock, in KB. You can leave it unset for now and configure it later when enabling HugePages.

2.8 Set the user environment variables on each node

grid user on node 1:

    export ORACLE_SID=+ASM1
    export ORACLE_BASE=/u01/app/grid
    export ORACLE_HOME=/u01/app/19.3.0/grid
    export PATH=$ORACLE_HOME/bin:$PATH
    export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

grid user on node 2:

    export ORACLE_SID=+ASM2
    export ORACLE_BASE=/u01/app/grid
    export ORACLE_HOME=/u01/app/19.3.0/grid
    export PATH=$ORACLE_HOME/bin:$PATH
    export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

oracle user on node 1 (the SID matches the planned instance name C1931):

    export ORACLE_SID=C1931
    export ORACLE_BASE=/u01/app/oracle
    export ORACLE_HOME=/u01/app/oracle/product/19.3.0/db_1
    export PATH=$ORACLE_HOME/bin:$PATH
    export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

oracle user on node 2 (the SID matches the planned instance name C1932):

    export ORACLE_SID=C1932
    export ORACLE_BASE=/u01/app/oracle
    export ORACLE_HOME=/u01/app/oracle/product/19.3.0/db_1
    export PATH=$ORACLE_HOME/bin:$PATH
    export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib
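The article does not say where these exports are stored. Assuming they go into each user's ~/.bash_profile, a sketch like the following appends them once per SID. The marker line and the function name add_oracle_env are illustrative.

```shell
#!/usr/bin/env bash
# Sketch: append the per-node Oracle environment to a login profile, once.
# Usage: add_oracle_env <profile_file> <sid> <oracle_base> <oracle_home>
add_oracle_env() {
    local profile="$1" sid="$2" base="$3" home="$4"
    local marker="# oracle env (${sid})"
    # Skip if this block was already added (keeps the function idempotent).
    grep -qxF "$marker" "$profile" 2>/dev/null && return 0
    # \$ keeps ORACLE_HOME and PATH unexpanded so they resolve at login time.
    cat >> "$profile" <<EOF
$marker
export ORACLE_SID=$sid
export ORACLE_BASE=$base
export ORACLE_HOME=$home
export PATH=\$ORACLE_HOME/bin:\$PATH
export LD_LIBRARY_PATH=\$ORACLE_HOME/lib:/lib:/usr/lib
EOF
}
```

For example, as grid on node 1, run `add_oracle_env ~/.bash_profile +ASM1 /u01/app/grid /u01/app/19.3.0/grid`, then log in again or `source ~/.bash_profile`.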

III. Grid Infrastructure (GI) installation

3.1 Unzip the GI installation package

    [grid@mm1903 grid]$ pwd
    /u01/app/19.3.0/grid
    [grid@mm1903 grid]$ unzip /usr/local/src/resource/LINUX.X64_193000_grid_home.zip

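3.2 Install and configure Xmanager

The installation needs a graphical interface, and this example uses Xmanager. Before running the commands below, start Xmanager - Passive. Then set the DISPLAY variable temporarily in the SecureCRT session so that the installer GUI is displayed. The address 192.168.56.1 below belongs to the machine that displays the GUI.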

export DISPLAY=192.168.56.1:0.0

3.3 Grant permissions on the shared storage LUNs

Binding and permissions were already completed in "Oracle 19c RAC Installation on Linux, Part 1: Preparation -> 1.3 Shared storage planning" (section 1.3 of this article), so no further action is needed here:

    [root@mm1903 ~]# ll /dev/sd?2*
    brw-rw---- 1 root disk 8, 2 3月 19 13:06 /dev/sda2
    brw-rw---- 1 grid asmadmin 8, 18 3月 19 13:25 /dev/sdb2
    brw-rw---- 1 grid asmadmin 8, 34 3月 19 13:25 /dev/sdc2
    brw-rw---- 1 grid asmadmin 8, 50 3月 19 13:25 /dev/sdd2
    brw-rw---- 1 grid asmadmin 8, 66 3月 19 13:25 /dev/sde2
    brw-rw---- 1 grid asmadmin 8, 82 3月 19 13:20 /dev/sdf2
    brw-rw---- 1 grid asmadmin 8, 98 3月 19 13:25 /dev/sdg2
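The listing above can also be checked mechanically. This sketch compares the owner, group and mode of each device with the grid:asmadmin 0660 set by the udev rules in 1.3. check_dev_perms is a hypothetical helper built on GNU stat. `-L` follows the /dev/asm-disk* symlinks to the partitions.

```shell
#!/usr/bin/env bash
# Sketch: verify owner:group and octal mode of each device against an expected value.
# Usage: check_dev_perms "<owner>:<group> <mode>" <device>...
check_dev_perms() {
    local want="$1"; shift
    local dev got rc=0
    for dev in "$@"; do
        # %U = owner name, %G = group name, %a = access rights in octal (e.g. 660)
        got=$(stat -L -c '%U:%G %a' "$dev") || { rc=1; continue; }
        if [ "$got" != "$want" ]; then
            echo "$dev: $got (expected $want)"
            rc=1
        fi
    done
    return $rc
}
```

Usage: `check_dev_perms "grid:asmadmin 660" /dev/asm-disk*`. It prints nothing and returns 0 when every device is correct.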

3.4 Configure GI through the Xmanager GUI

Run the following commands one at a time:

    [grid@mm1903 ~]$ export DISPLAY=192.168.56.1:0.0
    [grid@mm1903 ~]$ xhost +
    access control disabled, clients can connect from any host
    xhost: must be on local machine to enable or disable access control.
    [grid@mm1903 ~]$ cd $ORACLE_HOME
    [grid@mm1903 grid]$ ./gridSetup.sh

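Starting with 12c R2, GI configuration differs somewhat from earlier releases, and 19c works the same way. The screenshots below walk through the whole graphical GI configuration. The installer window appears after a few seconds: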

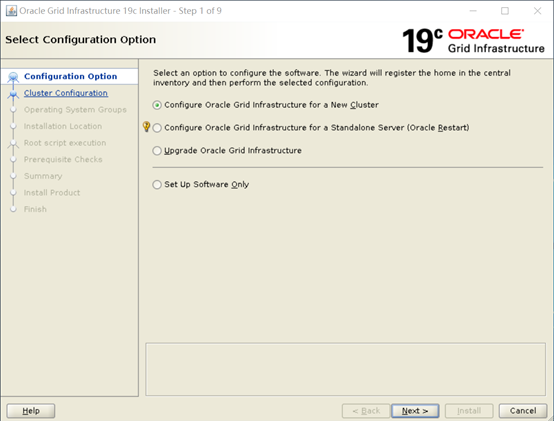

Step 1 of 9: Select the first option (configure a new cluster), then click Next to open the following screen:

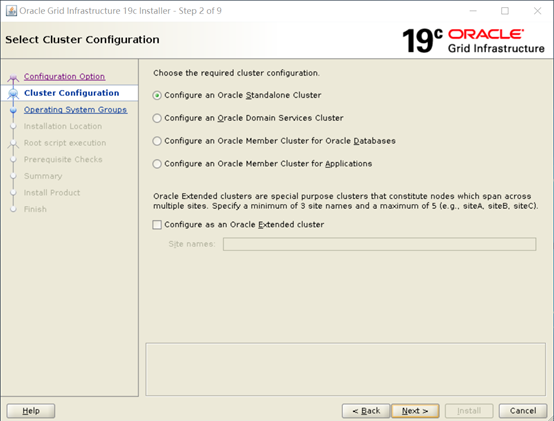

Step 2 of 9: Select the first option, Standalone Cluster (the traditional RAC mode), then click Next to open the following screen:

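Step 3 of 17: Enter the cluster name, SCAN name and related information. The cluster name must not exceed 15 characters. GNS is not used, so make sure it is not selected. Go to the next step: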

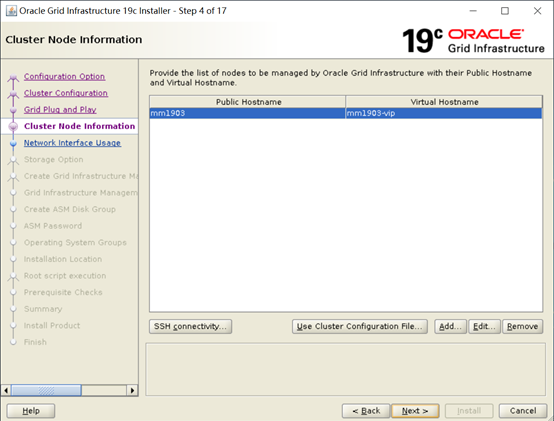

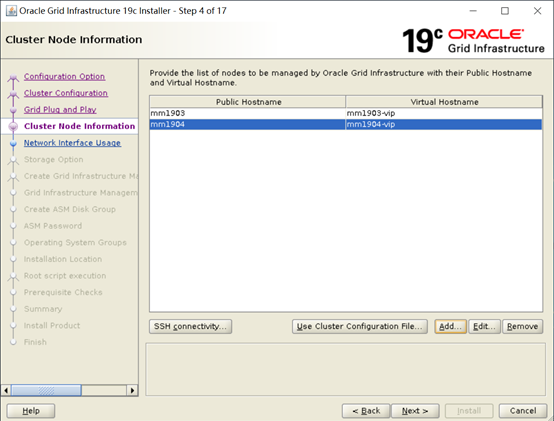
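The 15-character limit from Step 3 can be checked before the installer is started. Here is a minimal sketch that enforces only the length rule stated in the text; the function name is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: check a planned cluster name against the 1-15 character limit.
check_cluster_name() {
    local name="$1"
    if (( ${#name} >= 1 && ${#name} <= 15 )); then
        echo "OK: '$name' (${#name} chars)"
    else
        echo "too long or empty: '$name' (${#name} chars, max 15)" >&2
        return 1
    fi
}
```

For the name used in this article, `check_cluster_name mm1903-cluster` reports 14 characters.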

Step 4 of 17: Only the local node is listed here. Click Add to add the other node; afterwards the screen looks like this:

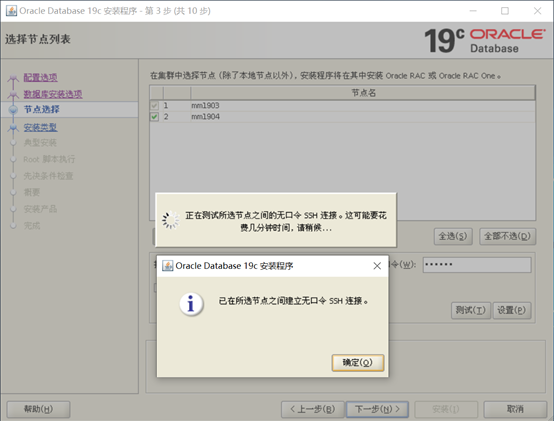

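It is also a good idea to click SSH connectivity here to test connectivity between the nodes. If user equivalence was set up incorrectly earlier, you can reconfigure it here. Once everything checks out, go to the next screen:

Step 5 of 17: Following the plan, set the 192.168.56.0 subnet to Public and the 20.20.20.0 subnet to ASM & Private. Set any other unused subnets to Do Not Use, then go to the next screen: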

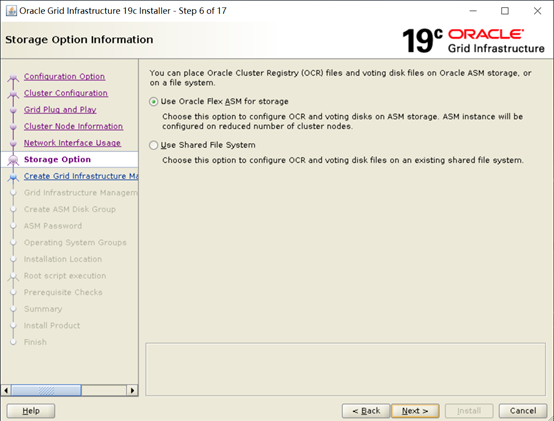

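Step 6 of 17: Select Flex ASM, then go to the next screen:

Step 7 of 17: Since Oracle 19.2 the GIMR has been optional, and most users make little use of it. Select "No" here so that no GIMR is created, then go to the next screen to configure the ASM disk group:

Step 8 of 17: By default no disks are listed on this screen. Click "Change Discovery Path" and change the discovery path to /dev/asm-disk*, and the available disks appear. Create the disk group SYS for OCR and voting disks with Normal redundancy; no failure group needs to be selected. Do not select Configure Oracle ASM Filter Driver. The default kernel does not support ASMFD, so selecting it causes an error at the next step. To use ASMFD anyway, first upgrade the kernel and install GI patch 27494830: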

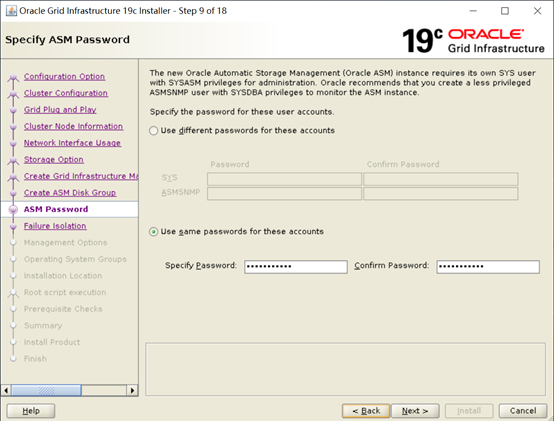

Step 9 of 17: Enter the passwords. Using the same password for all accounts is fine here.

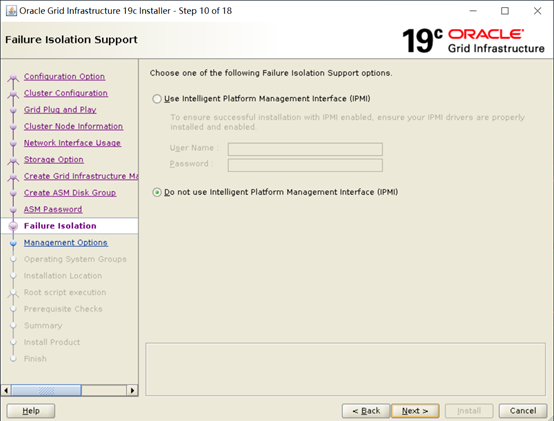

Step 10 of 17: Do not use IPMI; click Next:

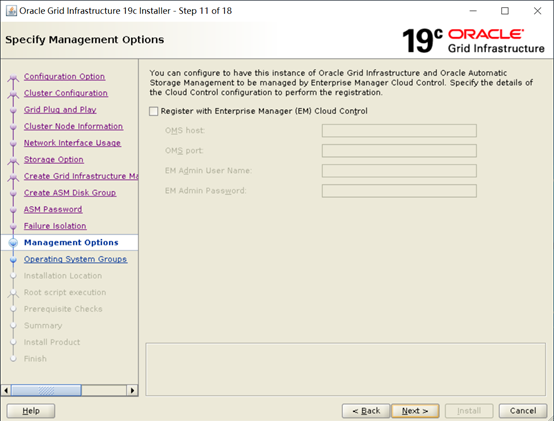

Step 11 of 17: Do not configure Cloud Control for now.

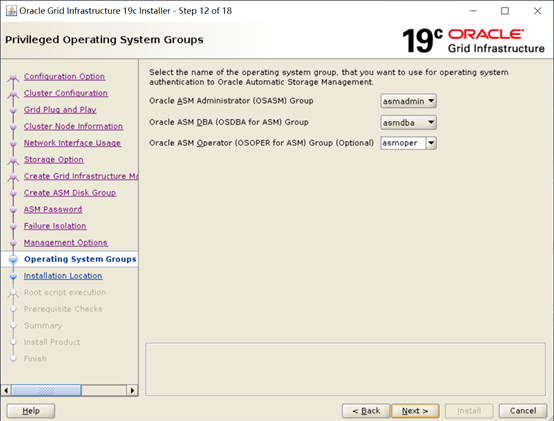

Step 12 of 17: Configure the operating system groups:

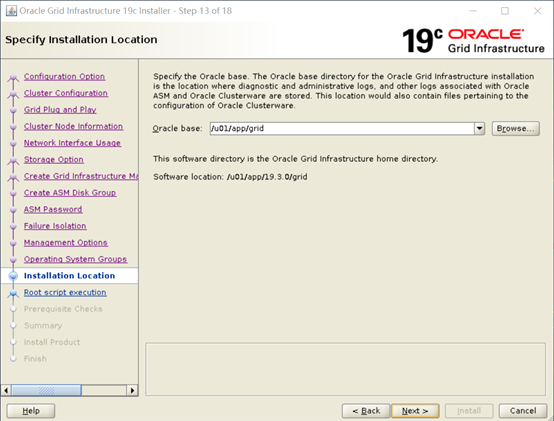

Step 13 of 17: Specify the Oracle base:

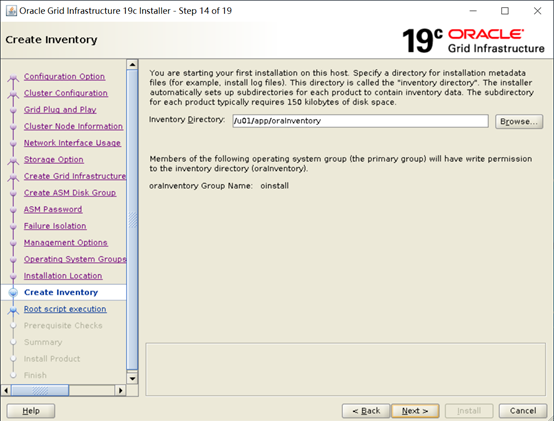

Step 14 of 17: Keep the default inventory directory and go to the next screen:

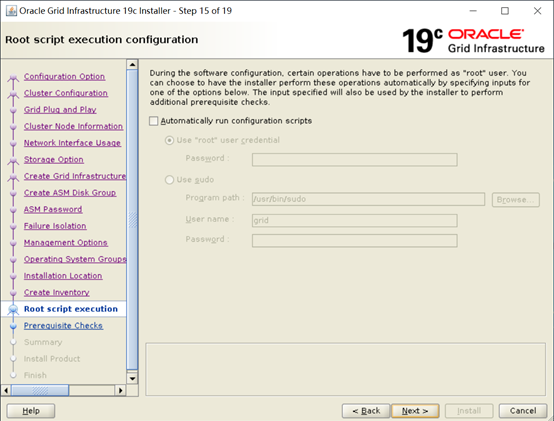

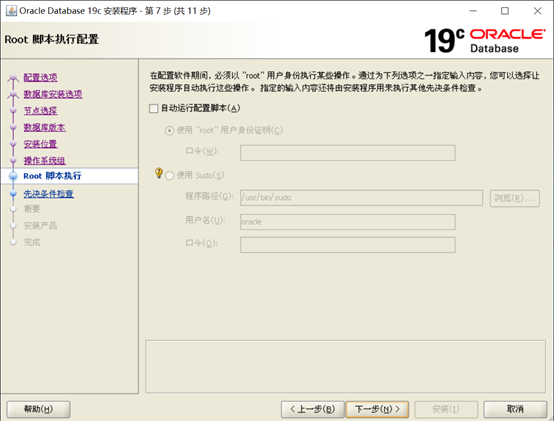

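Step 15 of 17: Choose whether the root.sh scripts that follow should run automatically. If you select this, you must enter the root password. Here I choose not to run them automatically, then click Next: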

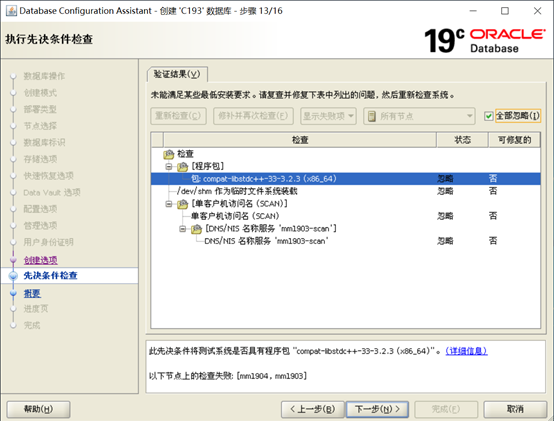

Step 16 of 17: Prerequisite checks:

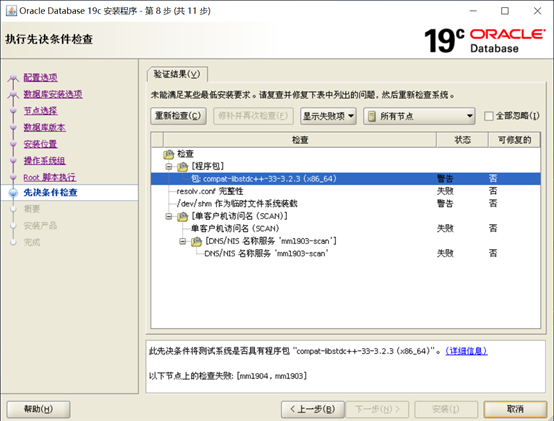

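Check every issue reported on this screen carefully, and click "Ignore All" only once you are sure it can be ignored. If the check reports missing RPM packages, install them with yum.

This is my own test environment with a low-spec configuration, so checks such as memory and DNS fail. This should not happen in a production environment.

You can click Fix & Check Again to generate a fixup script and run it as root to fix the issues.

Once you have confirmed that the remaining issues can be ignored, click Ignore All and continue: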

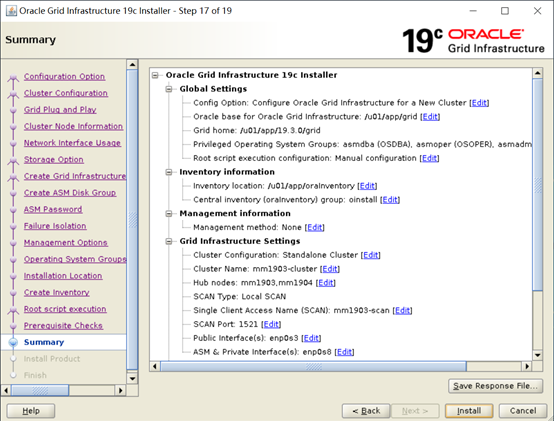

Step 17 of 17: This is the Summary. Review it once more; if there are no problems, click Install to start the installation:

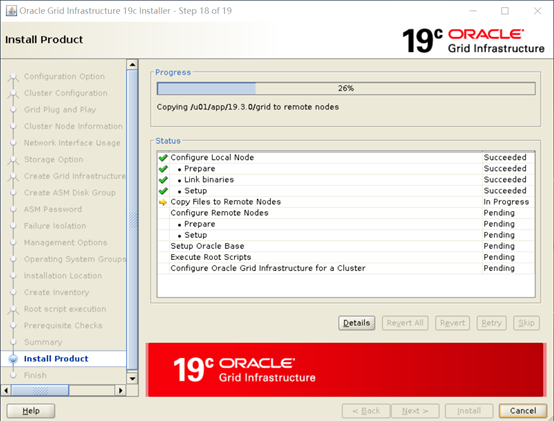

Installation in progress:

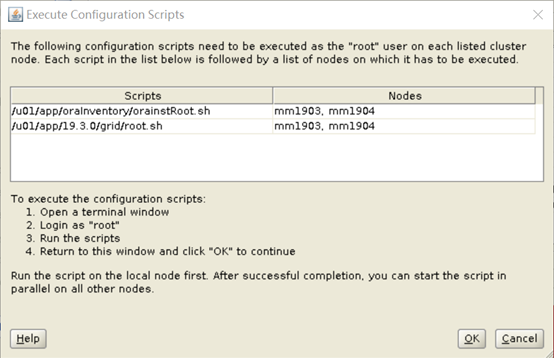

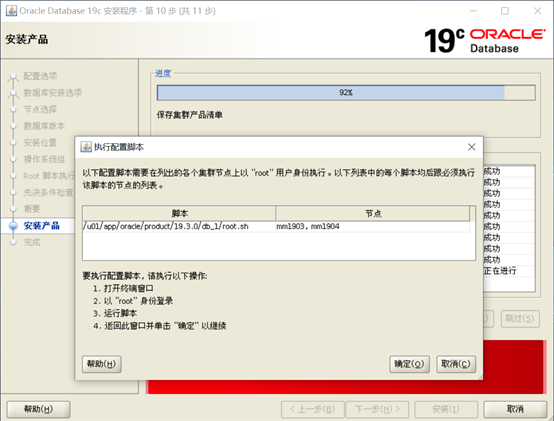

The installation continues until the following window pops up:

This is the most critical part of the Grid installation. As prompted, run two scripts as root on both nodes, in order. The first script behaves exactly the same on both nodes:

    [root@mm1903 ~]# /u01/app/oraInventory/orainstRoot.sh
    Changing permissions of /u01/app/oraInventory.
    Adding read,write permissions for group.
    Removing read,write,execute permissions for world.
    Changing groupname of /u01/app/oraInventory to oinstall.
    The execution of the script is complete.

    [root@mm1904 ~]# /u01/app/oraInventory/orainstRoot.sh
    Changing permissions of /u01/app/oraInventory.
    Adding read,write permissions for group.
    Removing read,write,execute permissions for world.
    Changing groupname of /u01/app/oraInventory to oinstall.
    The execution of the script is complete.

The second script produces different output on each node and must be run in order: let it finish on node 1 before starting it on node 2. As root, run root.sh on node 1:

    [root@mm1903 ~]# /u01/app/19.3.0/grid/root.sh
    Performing root user operation.
    The following environment variables are set as:
        ORACLE_OWNER= grid
        ORACLE_HOME=  /u01/app/19.3.0/grid
    Enter the full pathname of the local bin directory: [/usr/local/bin]:
       Copying dbhome to /usr/local/bin ...
       Copying oraenv to /usr/local/bin ...
       Copying coraenv to /usr/local/bin ...
    Creating /etc/oratab file...
    Entries will be added to the /etc/oratab file as needed by
    Database Configuration Assistant when a database is created
    Finished running generic part of root script.
    Now product-specific root actions will be performed.
    Relinking oracle with rac_on option
    Using configuration parameter file: /u01/app/19.3.0/grid/crs/install/crsconfig_params
    The log of current session can be found at:
      /u01/app/grid/crsdata/mm1903/crsconfig/rootcrs_mm1903_2020-03-18_03-55-26PM.log
    2020/03/18 15:55:52 CLSRSC-594: Executing installation step 1 of 19: 'SetupTFA'.
    2020/03/18 15:55:52 CLSRSC-594: Executing installation step 2 of 19: 'ValidateEnv'.
    2020/03/18 15:55:52 CLSRSC-363: User ignored prerequisites during installation
    2020/03/18 15:55:52 CLSRSC-594: Executing installation step 3 of 19: 'CheckFirstNode'.
    2020/03/18 15:55:55 CLSRSC-594: Executing installation step 4 of 19: 'GenSiteGUIDs'.
    2020/03/18 15:55:56 CLSRSC-594: Executing installation step 5 of 19: 'SetupOSD'.
    2020/03/18 15:55:56 CLSRSC-594: Executing installation step 6 of 19: 'CheckCRSConfig'.
    2020/03/18 15:55:59 CLSRSC-594: Executing installation step 7 of 19: 'SetupLocalGPNP'.
    2020/03/18 15:56:35 CLSRSC-594: Executing installation step 8 of 19: 'CreateRootCert'.
    2020/03/18 15:56:43 CLSRSC-594: Executing installation step 9 of 19: 'ConfigOLR'.
    2020/03/18 15:56:54 CLSRSC-4002: Successfully installed Oracle Trace File Analyzer (TFA) Collector.
    2020/03/18 15:57:03 CLSRSC-594: Executing installation step 10 of 19: 'ConfigCHMOS'.
    2020/03/18 15:57:03 CLSRSC-594: Executing installation step 11 of 19: 'CreateOHASD'.
    2020/03/18 15:57:09 CLSRSC-594: Executing installation step 12 of 19: 'ConfigOHASD'.
    2020/03/18 15:57:09 CLSRSC-330: Adding Clusterware entries to file 'oracle-ohasd.service'
    2020/03/18 15:58:02 CLSRSC-594: Executing installation step 13 of 19: 'InstallAFD'.
    2020/03/18 15:58:08 CLSRSC-594: Executing installation step 14 of 19: 'InstallACFS'.
    2020/03/18 15:59:13 CLSRSC-594: Executing installation step 15 of 19: 'InstallKA'.
    2020/03/18 15:59:19 CLSRSC-594: Executing installation step 16 of 19: 'InitConfig'.
    已成功创建并启动 ASM。
    [DBT-30001] 已成功创建磁盘组。有关详细信息, 请查看 /u01/app/grid/cfgtoollogs/asmca/asmca-200318下午040017.log。
    2020/03/18 16:06:21 CLSRSC-482: Running command: '/u01/app/19.3.0/grid/bin/ocrconfig -upgrade grid oinstall'
    CRS-4256: Updating the profile
    Successful addition of voting disk be8e31c82d2e4f99bfd133b83e46ec15.
    Successful addition of voting disk 8dac91231c6f4f6ebfebe323355bf358.
    Successful addition of voting disk 0a42efb529af4f92bf4a4882811dd259.
    Successfully replaced voting disk group with +SYS.
    CRS-4256: Updating the profile
    CRS-4266: Voting file(s) successfully replaced
    ##  STATE    File Universal Id                File Name Disk group
    --  -----    -----------------                --------- ---------
     1. ONLINE   be8e31c82d2e4f99bfd133b83e46ec15 (/dev/sdb2) [SYS]
     2. ONLINE   8dac91231c6f4f6ebfebe323355bf358 (/dev/sdc2) [SYS]
     3. ONLINE   0a42efb529af4f92bf4a4882811dd259 (/dev/sdd2) [SYS]
    Located 3 voting disk(s).
    2020/03/18 16:14:04 CLSRSC-594: Executing installation step 17 of 19: 'StartCluster'.
    2020/03/18 16:16:44 CLSRSC-343: Successfully started Oracle Clusterware stack
    2020/03/18 16:16:44 CLSRSC-594: Executing installation step 18 of 19: 'ConfigNode'.
    2020/03/18 16:20:26 CLSRSC-594: Executing installation step 19 of 19: 'PostConfig'.
    2020/03/18 16:24:07 CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster ... succeeded

Then run it as root on node 2:

    [root@mm1904 ~]# /u01/app/19.3.0/grid/root.sh
    Performing root user operation.
    The following environment variables are set as:
        ORACLE_OWNER= grid
        ORACLE_HOME=  /u01/app/19.3.0/grid
    Enter the full pathname of the local bin directory: [/usr/local/bin]:
       Copying dbhome to /usr/local/bin ...
       Copying oraenv to /usr/local/bin ...
       Copying coraenv to /usr/local/bin ...
    Creating /etc/oratab file...
    Entries will be added to the /etc/oratab file as needed by
    Database Configuration Assistant when a database is created
    Finished running generic part of root script.
    Now product-specific root actions will be performed.
    Relinking oracle with rac_on option
    Using configuration parameter file: /u01/app/19.3.0/grid/crs/install/crsconfig_params
    The log of current session can be found at:
      /u01/app/grid/crsdata/mm1904/crsconfig/rootcrs_mm1904_2020-03-18_04-29-49PM.log
    2020/03/18 16:31:55 CLSRSC-594: Executing installation step 1 of 19: 'SetupTFA'.
    2020/03/18 16:31:55 CLSRSC-594: Executing installation step 2 of 19: 'ValidateEnv'.
    2020/03/18 16:31:55 CLSRSC-363: User ignored prerequisites during installation
    2020/03/18 16:31:55 CLSRSC-594: Executing installation step 3 of 19: 'CheckFirstNode'.
    2020/03/18 16:32:47 CLSRSC-594: Executing installation step 4 of 19: 'GenSiteGUIDs'.
    2020/03/18 16:32:47 CLSRSC-594: Executing installation step 5 of 19: 'SetupOSD'.
    2020/03/18 16:32:47 CLSRSC-594: Executing installation step 6 of 19: 'CheckCRSConfig'.
    2020/03/18 16:32:50 CLSRSC-594: Executing installation step 7 of 19: 'SetupLocalGPNP'.
    2020/03/18 16:33:52 CLSRSC-594: Executing installation step 8 of 19: 'CreateRootCert'.
    2020/03/18 16:33:52 CLSRSC-594: Executing installation step 9 of 19: 'ConfigOLR'.
    2020/03/18 16:34:28 CLSRSC-4002: Successfully installed Oracle Trace File Analyzer (TFA) Collector.
    2020/03/18 16:35:27 CLSRSC-594: Executing installation step 10 of 19: 'ConfigCHMOS'.
    2020/03/18 16:35:27 CLSRSC-594: Executing installation step 11 of 19: 'CreateOHASD'.
    2020/03/18 16:36:14 CLSRSC-594: Executing installation step 12 of 19: 'ConfigOHASD'.
    2020/03/18 16:36:14 CLSRSC-330: Adding Clusterware entries to file 'oracle-ohasd.service'
    2020/03/18 16:38:37 CLSRSC-594: Executing installation step 13 of 19: 'InstallAFD'.
    2020/03/18 16:40:00 CLSRSC-594: Executing installation step 14 of 19: 'InstallACFS'.
    2020/03/18 16:43:54 CLSRSC-594: Executing installation step 15 of 19: 'InstallKA'.
    2020/03/18 16:44:46 CLSRSC-594: Executing installation step 16 of 19: 'InitConfig'.
    2020/03/18 16:46:09 CLSRSC-594: Executing installation step 17 of 19: 'StartCluster'.
    2020/03/18 16:49:44 CLSRSC-343: Successfully started Oracle Clusterware stack
    2020/03/18 16:49:44 CLSRSC-594: Executing installation step 18 of 19: 'ConfigNode'.
    2020/03/18 16:52:09 CLSRSC-594: Executing installation step 19 of 19: 'PostConfig'.
    2020/03/18 16:55:14 CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster ... succeeded

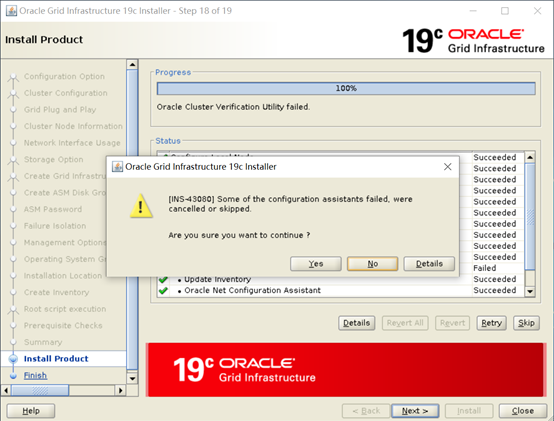

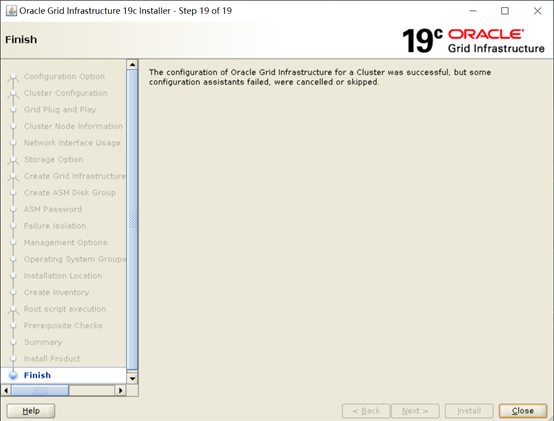

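When the scripts have finished, return to the GUI window and click OK to continue:

At the end the installer reports that the cluster verification failed; click Details to review it. Here the failure occurs because the SCAN IPs were not configured in DNS, so it can be ignored. Click "OK" to continue:

GI installation is now complete. Click Close to exit.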

3.5 Verify the status with crsctl

As the grid user, run crsctl stat res -t to check the status of the cluster resources.

    [root@mm1903 ~]# su - grid
    上一次登录:三 3月 18 17:05:44 CST 2020
    'abrt-cli status' timed out
    [grid@mm1903 ~]$ crsctl stat res -t
    --------------------------------------------------------------------------------
    Name           Target  State        Server                   State details
    --------------------------------------------------------------------------------
    Local Resources
    --------------------------------------------------------------------------------
    ora.LISTENER.lsnr
                   ONLINE  ONLINE       mm1903                   STABLE
                   ONLINE  ONLINE       mm1904                   STABLE
    ora.chad
                   ONLINE  ONLINE       mm1903                   STABLE
                   ONLINE  ONLINE       mm1904                   STABLE
    ora.net1.network
                   ONLINE  ONLINE       mm1903                   STABLE
                   ONLINE  ONLINE       mm1904                   STABLE
    ora.ons
                   ONLINE  ONLINE       mm1903                   STABLE
                   ONLINE  ONLINE       mm1904                   STABLE
    ora.proxy_advm
                   OFFLINE OFFLINE      mm1903                   STABLE
                   OFFLINE OFFLINE      mm1904                   STABLE
    --------------------------------------------------------------------------------
    Cluster Resources
    --------------------------------------------------------------------------------
    ora.ASMNET1LSNR_ASM.lsnr(ora.asmgroup)
          1        ONLINE  ONLINE       mm1903                   STABLE
          2        ONLINE  ONLINE       mm1904                   STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.LISTENER_SCAN1.lsnr
          1        ONLINE  ONLINE       mm1904                   STABLE
    ora.LISTENER_SCAN2.lsnr
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.LISTENER_SCAN3.lsnr
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.SYS.dg(ora.asmgroup)
          1        ONLINE  ONLINE       mm1903                   STABLE
          2        ONLINE  ONLINE       mm1904                   STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.asm(ora.asmgroup)
          1        ONLINE  ONLINE       mm1903                   Started,STABLE
          2        ONLINE  ONLINE       mm1904                   Started,STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.asmnet1.asmnetwork(ora.asmgroup)
          1        ONLINE  ONLINE       mm1903                   STABLE
          2        ONLINE  ONLINE       mm1904                   STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.cvu
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.mm1903.vip
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.mm1904.vip
          1        ONLINE  ONLINE       mm1904                   STABLE
    ora.qosmserver
          1        ONLINE  INTERMEDIATE mm1903                   CHECK TIMED OUT,STAB
                                                                 LE
    ora.scan1.vip
          1        ONLINE  ONLINE       mm1904                   STABLE
    ora.scan2.vip
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.scan3.vip
          1        ONLINE  ONLINE       mm1903                   STABLE
    --------------------------------------------------------------------------------
    [grid@mm1903 ~]$ crsctl stat res -t -init
    --------------------------------------------------------------------------------
    Name           Target  State        Server                   State details
    --------------------------------------------------------------------------------
    Cluster Resources
    --------------------------------------------------------------------------------
    ora.asm
          1        ONLINE  ONLINE       mm1903                   Started,STABLE
    ora.cluster_interconnect.haip
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.crf
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.crsd
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.cssd
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.cssdmonitor
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.ctssd
          1        ONLINE  ONLINE       mm1903                   ACTIVE:0,STABLE
    ora.diskmon
          1        OFFLINE OFFLINE                               STABLE
    ora.drivers.acfs
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.evmd
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.gipcd
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.gpnpd
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.mdnsd
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.storage
          1        ONLINE  ONLINE       mm1903                   STABLE
    --------------------------------------------------------------------------------
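When the status list grows, a filter that prints only resources whose State differs from an ONLINE Target is handy, for example during the failover test in 3.6. This sketch assumes the usual `crsctl stat res -t` layout. In that layout, resource names start in the first column with "ora.", detail lines are indented, and cluster resources carry an instance number before Target. The function name not_online is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: read "crsctl stat res -t" output on stdin and print
# "<resource> <state>" for every detail line whose Target is ONLINE
# but whose State is something else (OFFLINE, INTERMEDIATE, ...).
not_online() {
    awk '
        /^ora\./ { res = $1; next }                          # a new resource name
        /^[[:space:]]/ && res != "" {
            if ($1 ~ /^[0-9]+$/) { target = $2; state = $3 } # cluster resource line
            else                 { target = $1; state = $2 } # local resource line
            if (target == "ONLINE" && state != "ONLINE") print res, state
        }
    '
}
```

Usage: `crsctl stat res -t | not_online`. On the healthy cluster above, this should list only ora.qosmserver (INTERMEDIATE).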

3.6 Test cluster failover (FAILED OVER)

Reboot node 2 and check the status from node 1:

    [grid@mm1903 ~]$ crsctl stat res -t
    --------------------------------------------------------------------------------
    Name           Target  State        Server                   State details
    --------------------------------------------------------------------------------
    Local Resources
    --------------------------------------------------------------------------------
    ora.LISTENER.lsnr
                   ONLINE  ONLINE       mm1903                   STABLE
                   ONLINE  OFFLINE      mm1904                   STABLE
    ora.chad
                   ONLINE  ONLINE       mm1903                   STABLE
                   ONLINE  OFFLINE      mm1904                   STABLE
    ora.net1.network
                   ONLINE  ONLINE       mm1903                   STABLE
                   ONLINE  ONLINE       mm1904                   STABLE
    ora.ons
                   ONLINE  ONLINE       mm1903                   STABLE
                   ONLINE  ONLINE       mm1904                   STABLE
    ora.proxy_advm
                   OFFLINE OFFLINE      mm1903                   STABLE
                   OFFLINE OFFLINE      mm1904                   STABLE
    --------------------------------------------------------------------------------
    Cluster Resources
    --------------------------------------------------------------------------------
    ora.ASMNET1LSNR_ASM.lsnr(ora.asmgroup)
          1        ONLINE  ONLINE       mm1903                   STABLE
          2        ONLINE  ONLINE       mm1904                   STOPPING
          3        OFFLINE OFFLINE                               STABLE
    ora.LISTENER_SCAN1.lsnr
          1        ONLINE  OFFLINE                               STABLE
    ora.LISTENER_SCAN2.lsnr
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.LISTENER_SCAN3.lsnr
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.SYS.dg(ora.asmgroup)
          1        ONLINE  ONLINE       mm1903                   STABLE
          2        ONLINE  OFFLINE                               STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.asm(ora.asmgroup)
          1        ONLINE  ONLINE       mm1903                   Started,STABLE
          2        ONLINE  OFFLINE                               STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.asmnet1.asmnetwork(ora.asmgroup)
          1        ONLINE  ONLINE       mm1903                   STABLE
          2        ONLINE  ONLINE       mm1904                   STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.cvu
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.mm1903.vip
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.mm1904.vip
          1        ONLINE  ONLINE       mm1904                   STOPPING
    ora.qosmserver
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.scan1.vip
          1        ONLINE  OFFLINE                               STABLE
    ora.scan2.vip
          1        ONLINE  ONLINE       mm1903                   STABLE
    ora.scan3.vip
          1        ONLINE  ONLINE       mm1903                   STABLE
    --------------------------------------------------------------------------------

Reboot node 1 and check the status from node 2:

    [grid@mm1904 trace]$ crsctl stat res -t
    --------------------------------------------------------------------------------
    Name           Target  State        Server                   State details
    --------------------------------------------------------------------------------
    Local Resources
    --------------------------------------------------------------------------------
    ora.LISTENER.lsnr
                   ONLINE  OFFLINE      mm1904                   STARTING
    ora.chad
                   ONLINE  OFFLINE      mm1904                   STABLE
    ora.net1.network
                   ONLINE  ONLINE       mm1904                   STABLE
    ora.ons
                   ONLINE  OFFLINE      mm1904                   STARTING
    ora.proxy_advm
                   OFFLINE OFFLINE      mm1904                   STABLE
    --------------------------------------------------------------------------------
    Cluster Resources
    --------------------------------------------------------------------------------
    ora.ASMNET1LSNR_ASM.lsnr(ora.asmgroup)
          1        ONLINE  OFFLINE                               STABLE
          2        ONLINE  OFFLINE      mm1904                   STARTING
          3        ONLINE  OFFLINE                               STABLE
    ora.LISTENER_SCAN1.lsnr
          1        ONLINE  OFFLINE      mm1904                   STARTING
    ora.LISTENER_SCAN2.lsnr
          1        ONLINE  OFFLINE      mm1904                   STARTING
    ora.LISTENER_SCAN3.lsnr
          1        ONLINE  OFFLINE      mm1904                   STARTING
    ora.SYS.dg(ora.asmgroup)
          1        OFFLINE OFFLINE                               STABLE
          2        ONLINE  ONLINE       mm1904                   STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.asm(ora.asmgroup)
          1        ONLINE  OFFLINE                               STABLE
          2        ONLINE  ONLINE       mm1904                   Started,STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.asmnet1.asmnetwork(ora.asmgroup)
          1        ONLINE  OFFLINE                               STABLE
          2        ONLINE  ONLINE       mm1904                   STABLE
          3        OFFLINE OFFLINE                               STABLE
    ora.cvu
          1        ONLINE  ONLINE       mm1904                   STABLE
    ora.mm1903.vip
          1        ONLINE  INTERMEDIATE mm1904                   FAILED OVER,STABLE
    ora.mm1904.vip
          1        ONLINE  ONLINE       mm1904                   STABLE
    ora.qosmserver
          1        ONLINE  OFFLINE      mm1904                   STARTING
    ora.scan1.vip
          1        ONLINE  ONLINE       mm1904                   STABLE
    ora.scan2.vip
          1        ONLINE  ONLINE       mm1904                   STABLE
    ora.scan3.vip
          1        ONLINE  ONLINE       mm1904                   STABLE
    --------------------------------------------------------------------------------

Appendix: location of the cluster alert log:

```
[grid@mm1903 ~]$ cd /u01/app/grid/diag/crs/mm1903/crs/trace/
[grid@mm1903 trace]$ ls -l alert.log
-rw-rw---- 1 grid oinstall 30903 3月 18 17:50 alert.log
[grid@mm1903 trace]$ tail -40f alert.log
ACFS-9549: Kernel and command versions.
Kernel:
    Build version: 19.0.0.0.0
    Build full version: 19.3.0.0.0
    Build hash: 9256567290
    Bug numbers: NoTransactionInformation
Commands:
    Build version: 19.0.0.0.0
    Build full version: 19.3.0.0.0
    Build hash: 9256567290
    Bug numbers: NoTransactionInformation
2020-03-18 17:46:54.141 [CLSECHO(11621)]ACFS-9327: Verifying ADVM/ACFS devices.
2020-03-18 17:46:54.168 [CLSECHO(11633)]ACFS-9156: Detecting control device '/dev/asm/.asm_ctl_spec'.
2020-03-18 17:46:54.438 [CLSECHO(11660)]ACFS-9156: Detecting control device '/dev/ofsctl'.
2020-03-18 17:46:56.196 [CLSECHO(11815)]ACFS-9294: updating file /etc/sysconfig/oracledrivers.conf
2020-03-18 17:46:56.222 [CLSECHO(11827)]ACFS-9322: completed
2020-03-18 17:46:58.465 [CSSDMONITOR(11950)]CRS-8500: Oracle Clusterware CSSDMONITOR process is starting with operating system process ID 11950
2020-03-18 17:46:58.889 [OSYSMOND(11954)]CRS-8500: Oracle Clusterware OSYSMOND process is starting with operating system process ID 11954
2020-03-18 17:46:59.550 [CSSDAGENT(11995)]CRS-8500: Oracle Clusterware CSSDAGENT process is starting with operating system process ID 11995
2020-03-18 17:47:00.561 [OCSSD(12079)]CRS-8500: Oracle Clusterware OCSSD process is starting with operating system process ID 12079
2020-03-18 17:47:01.977 [OCSSD(12079)]CRS-1713: CSSD daemon is started in hub mode
2020-03-18 17:47:11.078 [OCSSD(12079)]CRS-1707: Lease acquisition for node mm1903 number 1 completed
2020-03-18 17:47:12.364 [OCSSD(12079)]CRS-1621: The IPMI configuration data for this node stored in the Oracle registry is incomplete; details at (:CSSNK00002:) in /u01/app/grid/diag/crs/mm1903/crs/trace/ocssd.trc
2020-03-18 17:47:12.364 [OCSSD(12079)]CRS-1617: The information required to do node kill for node mm1903 is incomplete; details at (:CSSNM00004:) in /u01/app/grid/diag/crs/mm1903/crs/trace/ocssd.trc
2020-03-18 17:47:12.564 [OCSSD(12079)]CRS-1605: CSSD voting file is online: /dev/sdc2; details in /u01/app/grid/diag/crs/mm1903/crs/trace/ocssd.trc.
2020-03-18 17:47:12.696 [OCSSD(12079)]CRS-1605: CSSD voting file is online: /dev/sdd2; details in /u01/app/grid/diag/crs/mm1903/crs/trace/ocssd.trc.
2020-03-18 17:47:12.827 [OCSSD(12079)]CRS-1605: CSSD voting file is online: /dev/sdb2; details in /u01/app/grid/diag/crs/mm1903/crs/trace/ocssd.trc.
2020-03-18 17:47:14.236 [OCSSD(12079)]CRS-1601: CSSD Reconfiguration complete. Active nodes are mm1903 mm1904 .
2020-03-18 17:47:16.337 [OCSSD(12079)]CRS-1720: Cluster Synchronization Services daemon (CSSD) is ready for operation.
2020-03-18 17:47:16.871 [OCTSSD(15102)]CRS-8500: Oracle Clusterware OCTSSD process is starting with operating system process ID 15102
2020-03-18 17:47:19.080 [OCTSSD(15102)]CRS-2407: The new Cluster Time Synchronization Service reference node is host mm1904.
2020-03-18 17:47:19.081 [OCTSSD(15102)]CRS-2401: The Cluster Time Synchronization Service started on host mm1903.
2020-03-18 17:47:25.583 [OLOGGERD(16461)]CRS-8500: Oracle Clusterware OLOGGERD process is starting with operating system process ID 16461
2020-03-18 17:47:29.387 [ORAROOTAGENT(9074)]CRS-5019: All OCR locations are on ASM disk groups [SYS], and none of these disk groups are mounted. Details are at "(:CLSN00140:)" in "/u01/app/grid/diag/crs/mm1903/crs/trace/ohasd_orarootagent_root.trc".
2020-03-18 17:49:47.918 [CRSD(17390)]CRS-8500: Oracle Clusterware CRSD process is starting with operating system process ID 17390
2020-03-18 17:50:21.351 [CRSD(17390)]CRS-1012: The OCR service started on node mm1903.
2020-03-18 17:50:21.848 [CRSD(17390)]CRS-1201: CRSD started on node mm1903.
2020-03-18 17:50:23.272 [ORAAGENT(17586)]CRS-8500: Oracle Clusterware ORAAGENT process is starting with operating system process ID 17586
2020-03-18 17:50:23.647 [ORAROOTAGENT(17597)]CRS-8500: Oracle Clusterware ORAROOTAGENT process is starting with operating system process ID 17597
2020-03-18 17:50:42.458 [ORAAGENT(17707)]CRS-8500: Oracle Clusterware ORAAGENT process is starting with operating system process ID 17707
^C
[grid@mm1903 trace]$ pwd
/u01/app/grid/diag/crs/mm1903/crs/trace
```
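You can read the alert log by eye. When checking a restart, though, it helps to pull out only the timestamped message codes, for example CRS-1601 for CSSD reconfiguration. The following is a minimal Python sketch. It assumes every such line has the `timestamp [PROCESS(pid)]CODE: text` shape seen above. `parse_alert_lines` is a hypothetical helper, not an Oracle tool. Multi-line entries such as the ACFS-9549 version block are simply skipped.

```python
import re
from typing import Iterable, NamedTuple

# Matches lines such as:
# 2020-03-18 17:47:14.236 [OCSSD(12079)]CRS-1601: CSSD Reconfiguration complete. ...
ALERT_RE = re.compile(
    r"^(?P<ts>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}\.\d{3}) "
    r"\[(?P<proc>[A-Z_]+)\((?P<pid>\d+)\)\]"
    r"(?P<code>[A-Z]+-\d+): (?P<text>.*)$"
)


class AlertMessage(NamedTuple):
    timestamp: str
    process: str
    pid: int
    code: str
    text: str


def parse_alert_lines(lines: Iterable[str]) -> list[AlertMessage]:
    """Return the timestamped CRS/ACFS messages; all other lines are skipped."""
    messages = []
    for line in lines:
        m = ALERT_RE.match(line.rstrip("\n"))
        if m:
            messages.append(
                AlertMessage(m["ts"], m["proc"], int(m["pid"]), m["code"], m["text"])
            )
    return messages


if __name__ == "__main__":
    sample = [
        "2020-03-18 17:47:14.236 [OCSSD(12079)]CRS-1601: CSSD Reconfiguration complete. Active nodes are mm1903 mm1904 .",
        "    Build version: 19.0.0.0.0",
        "2020-03-18 17:50:21.848 [CRSD(17390)]CRS-1201: CRSD started on node mm1903.",
    ]
    for msg in parse_alert_lines(sample):
        print(msg.timestamp, msg.code, msg.text)
```

For example, `[m for m in parse_alert_lines(open("alert.log")) if m.code == "CRS-1601"]` would list each CSSD reconfiguration together with the active nodes it reported.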

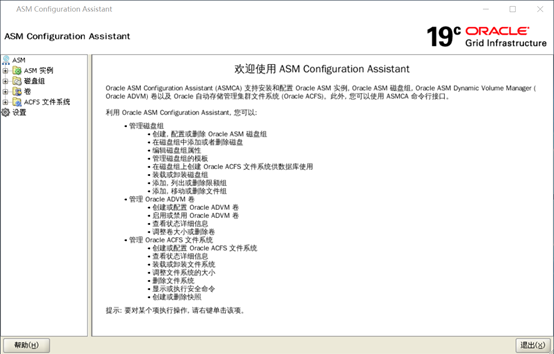

IV. Create the Other ASM Disk Groups

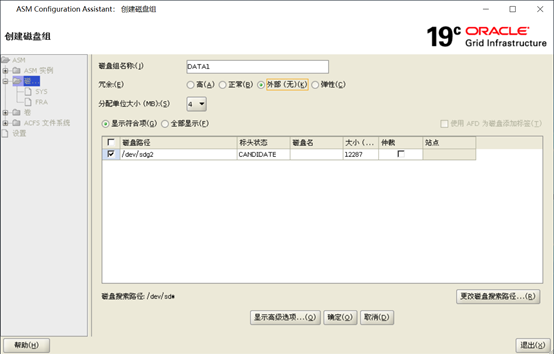

4.1 Create disk groups with ASMCA

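During the GI installation only one disk group, SYS, was created, to hold the OCR and voting files. Next we need to create additional disk groups for the database files.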

The following steps create the additional data and archive disk groups.

Before creating them, you can optionally run kfod to scan the disks and confirm that all of them are discovered.

Run it on both nodes:

```
[grid@mm1903 ~]$ kfod disks=all
--------------------------------------------------------------------------------
 Disk          Size Path                                     User     Group
================================================================================
   1:       1023 MB /dev/sdb2                                grid     asmadmin
   2:       1023 MB /dev/sdc2                                grid     asmadmin
   3:       1023 MB /dev/sdd2                                grid     asmadmin
   4:      12287 MB /dev/sde2                                grid     asmadmin
   5:      12287 MB /dev/sdf2                                grid     asmadmin
   6:      12287 MB /dev/sdg2                                grid     asmadmin
--------------------------------------------------------------------------------
ORACLE_SID ORACLE_HOME
================================================================================
```
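Since kfod is run on both nodes, the real check is that the two nodes discover the same disks. A minimal Python sketch follows. It assumes each node's `kfod disks=all` output was saved as text. `parse_kfod` and `compare_nodes` are hypothetical helpers. Comparing by path is only meaningful when device names are persistent across nodes, for example names created by udev rules. Plain /dev/sdX names can differ from node to node.

```python
import re

# A kfod disk row looks like: "   1:       1023 MB /dev/sdb2    grid     asmadmin"
DISK_ROW = re.compile(
    r"^\s*\d+:\s+(?P<size>\d+) MB (?P<path>\S+)\s+(?P<user>\S+)\s+(?P<group>\S+)\s*$"
)


def parse_kfod(output: str) -> dict[str, int]:
    """Map each disk path found in kfod output to its size in MB."""
    disks = {}
    for line in output.splitlines():
        m = DISK_ROW.match(line)
        if m:
            disks[m["path"]] = int(m["size"])
    return disks


def compare_nodes(node1: str, node2: str) -> dict[str, set[str]]:
    """Report disks seen on only one node, and disks whose size differs."""
    d1, d2 = parse_kfod(node1), parse_kfod(node2)
    return {
        "only_node1": set(d1) - set(d2),
        "only_node2": set(d2) - set(d1),
        "size_mismatch": {p for p in set(d1) & set(d2) if d1[p] != d2[p]},
    }
```

All three sets in the result should be empty before you move on to asmca.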

Set the DISPLAY environment variable, then run asmca to open the GUI:

```
[grid@mm1903 ~]$ export DISPLAY=192.168.56.1:0.0
[grid@mm1903 ~]$ xhost +
access control disabled, clients can connect from any host
xhost:  must be on local machine to enable or disable access control.
[grid@mm1903 ~]$ asmca
```

asmca takes a long time to start (a few minutes). Then the GUI appears.

First comes the brightly colored 19c splash screen:

Then the main asmca window opens:

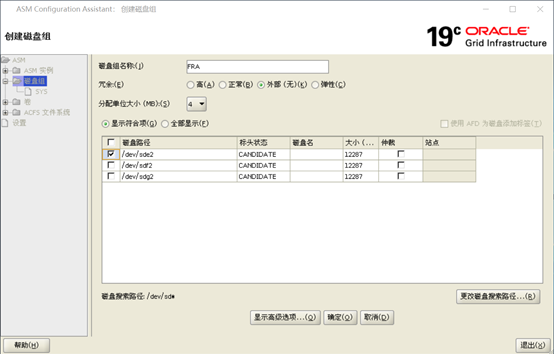

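Click the Create button to create the "FRA" disk group (select disks /dev/asm-diske and /dev/asm-diskf).

For redundancy, select External. In production, choose External only if the underlying storage already provides RAID protection: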

As shown in the figure (on the first attempt one disk was left out; it was added separately later):

Create disk group DATA1 the same way (select disk /dev/asm-diskg):

After creation, the disk groups look like this:

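All initially required disk groups are now in place: SYS holds the OCR and voting disks, FRA holds the fast recovery area and archived logs, and DATA1 holds the data files.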
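The redundancy setting also determines how much of the raw space is usable. With the classic ASM redundancy types, External keeps one copy of each extent, Normal keeps two and High keeps three. Below is a rough Python sketch of that arithmetic. `approx_usable_mb` is a hypothetical helper. It deliberately ignores ASM metadata and the free space needed to re-mirror after a disk failure, so the real usable space for Normal and High is lower.

```python
# Copies ASM keeps of each extent for the classic redundancy types.
COPIES = {"EXTERNAL": 1, "NORMAL": 2, "HIGH": 3}


def approx_usable_mb(disk_sizes_mb: list[int], redundancy: str) -> int:
    """Very rough usable capacity: raw size divided by the number of copies.

    Ignores ASM metadata and the free space needed to restore redundancy
    after a disk failure, so it overestimates NORMAL/HIGH capacity.
    """
    return sum(disk_sizes_mb) // COPIES[redundancy.upper()]


if __name__ == "__main__":
    # Two 12287 MB disks, the size of the larger disks kfod reported earlier.
    fra_disks = [12287, 12287]
    for r in ("external", "normal"):
        print(r, approx_usable_mb(fra_disks, r), "MB")
```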

V. DB (Database) Configuration

5.1 Unzip the DB installation package

As the oracle user, unzip the DB software:

```
[root@mm1903 ~]# su - oracle
[oracle@mm1903 ~]$ cd /u01/app/oracle/product/19.3.0/db_1
[oracle@mm1903 db_1]$ unzip /usr/local/src/resource/LINUX.X64_193000_db_home.zip
```

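Starting with Oracle 18.3, the database software is also installed by unzipping the image directly into the Oracle Home. As with GI, you only need to unzip it on one node.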

5.2 DB software installation

After setting DISPLAY, start runInstaller to install the DB software.

```
[oracle@mm1903 ~]$ cd /u01/app/oracle/product/19.3.0/db_1
[oracle@mm1903 db_1]$ export DISPLAY=192.168.1.102:0.0
[oracle@mm1903 db_1]$ xhost +
access control disabled, clients can connect from any host
[oracle@mm1903 db_1]$ ./runInstaller
Launching Oracle Database Setup Wizard...
```

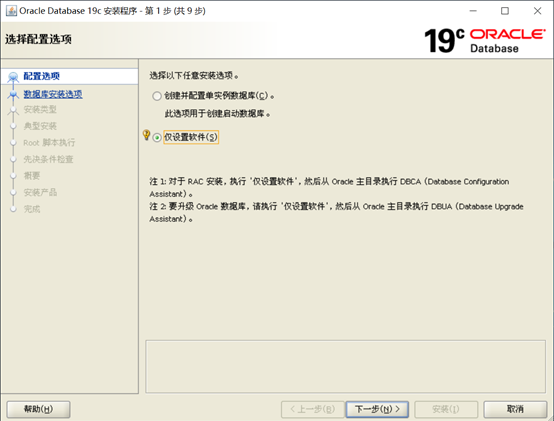

After a long wait, the following screen appears:

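Note: for a RAC installation you must choose Set Up Software Only, and create the database with DBCA after the software is installed. This differs from earlier releases: the database can no longer be created during the software installation.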

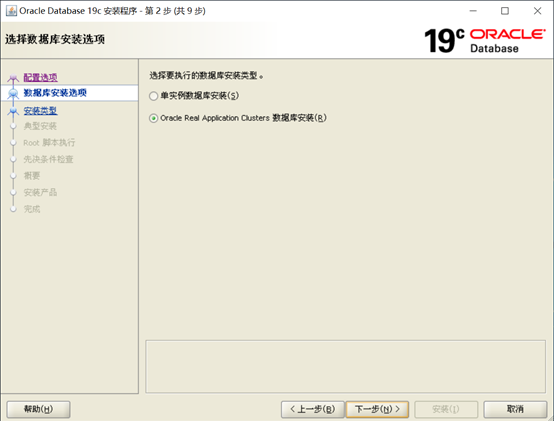

Choose the RAC installation and go to the next step:

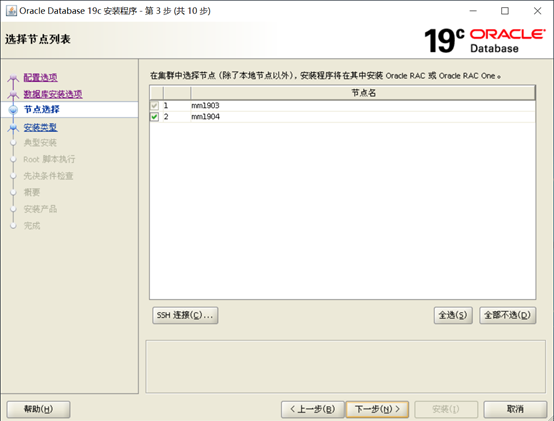

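Select the nodes on which to install the RAC software. If SSH user equivalence for the oracle user has not been configured yet, click the SSH connectivity button on this screen to set it up. Then go to the next screen.

As shown: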

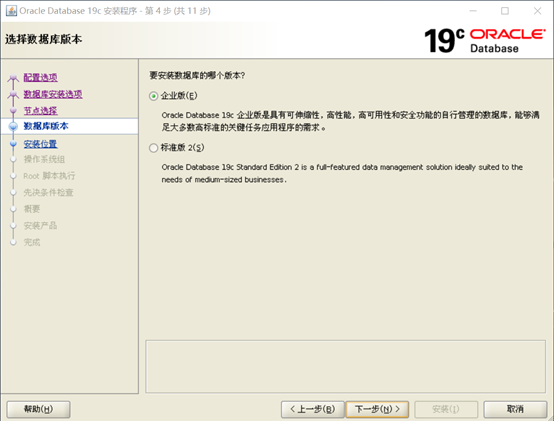

Select Enterprise Edition, then go to the next screen:

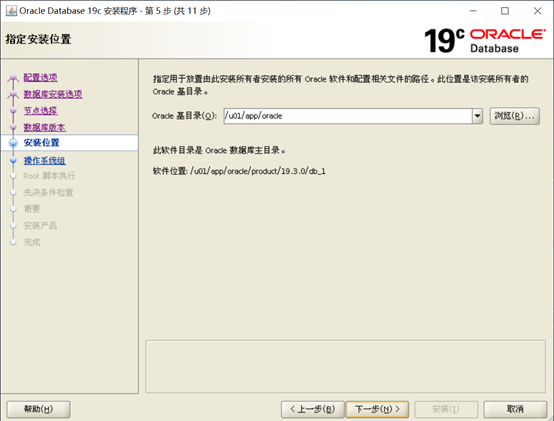

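Set the installation directories. If the oracle user's environment variables are set correctly, Oracle Base and Oracle Home are filled in automatically on this screen; keep the defaults.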

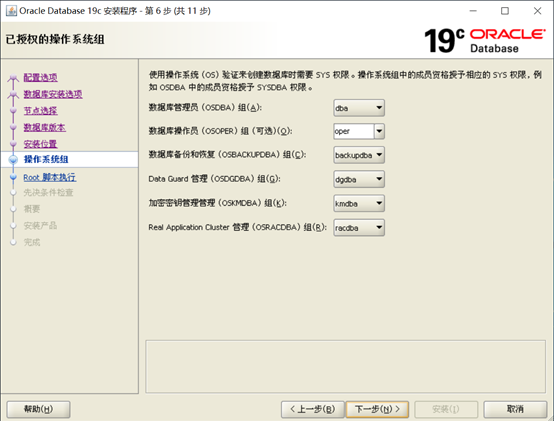

Configure the OS privilege group mappings; the defaults are fine here too.

Choose whether root.sh is run automatically; here we choose not to. Then click Next to run the prerequisite checks:

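Review any issues reported by the prerequisite check carefully. For configuration issues, click "Fix & Check Again" to generate a fixup script that root runs to correct them automatically.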

Finally, once you have confirmed that the remaining warnings can be safely ignored, click Ignore All:

Click Next to start the installation:

Wait until the following screen appears:

Run the root script on each node, then return to the installer and continue until the installation completes:

The script output:

```
[root@mm1903 ~]# /u01/app/oracle/product/19.3.0/db_1/root.sh
Performing root user operation.
The following environment variables are set as:
    ORACLE_OWNER= oracle
    ORACLE_HOME=  /u01/app/oracle/product/19.3.0/db_1
Enter the full pathname of the local bin directory: [/usr/local/bin]:
The contents of "dbhome" have not changed. No need to overwrite.
The contents of "oraenv" have not changed. No need to overwrite.
The contents of "coraenv" have not changed. No need to overwrite.
Entries will be added to the /etc/oratab file as needed by
Database Configuration Assistant when a database is created
Finished running generic part of root script.
Now product-specific root actions will be performed.
[root@mm1903 ~]#
```

```
[root@mm1904 ~]# /u01/app/oracle/product/19.3.0/db_1/root.sh
Performing root user operation.
The following environment variables are set as:
    ORACLE_OWNER= oracle
    ORACLE_HOME=  /u01/app/oracle/product/19.3.0/db_1
Enter the full pathname of the local bin directory: [/usr/local/bin]:
The contents of "dbhome" have not changed. No need to overwrite.
The contents of "oraenv" have not changed. No need to overwrite.
The contents of "coraenv" have not changed. No need to overwrite.
Entries will be added to the /etc/oratab file as needed by
Database Configuration Assistant when a database is created
Finished running generic part of root script.
Now product-specific root actions will be performed.
[root@mm1904 ~]#
```

Click Close:

This completes the DB software installation.

5.3 Create the CDB

Log in as oracle, set DISPLAY, and run dbca to start the GUI:

```
[oracle@mm1903 db_1]$ export DISPLAY=192.168.56.1:0.0
[oracle@mm1903 db_1]$ xhost +
access control disabled, clients can connect from any host
xhost:  must be on local machine to enable or disable access control.
[oracle@mm1903 db_1]$ dbca
```

The initial screen:

Select Create a database and go to the next page:

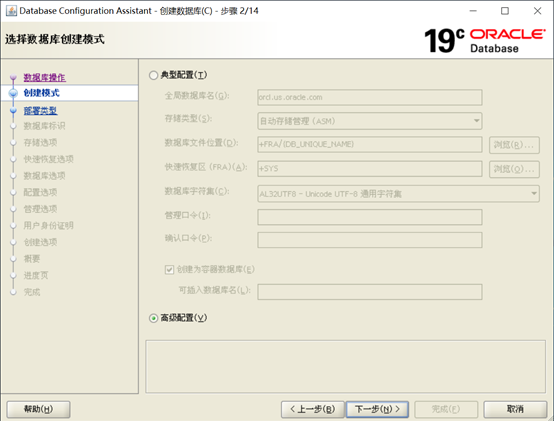

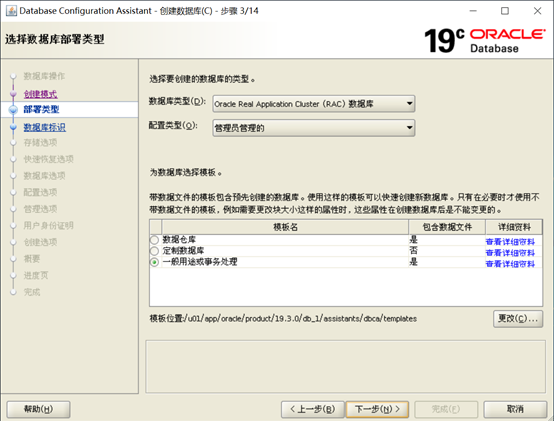

Keep the default Advanced configuration mode and go to the next screen:

Select the configuration (management) type and the General Purpose or Transaction Processing template, then click Next:

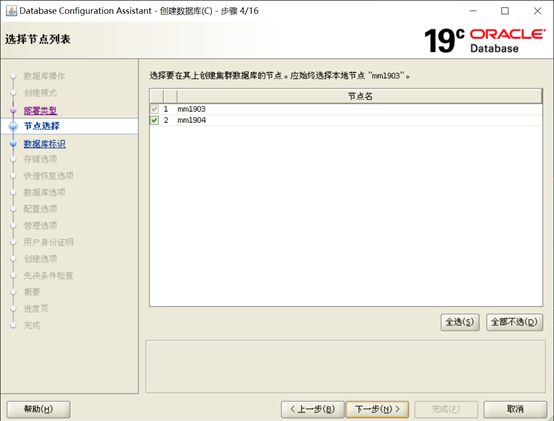

Select the nodes on which to create the database, then go to the next screen:

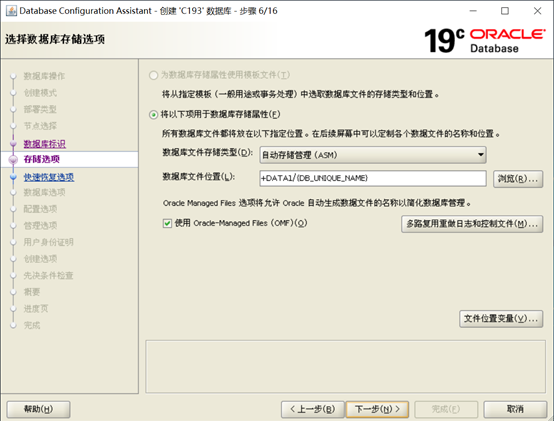

Enter the database name and select Create as Container database.

Select "Use Local Undo tablespace for PDBs" so that each PDB gets its own undo tablespace.

You can create a PDB at the same time or create an empty container database; here we do not create a PDB yet.

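Note: using OMF (Oracle Managed Files) for the data files is recommended here. If you create the database without OMF, the creation fails with the following error: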

Error while restoring PDB backup piece

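This happens because the installer cannot create the pdbseed directory. The workaround is to create the directory structure +DATA1/C193/pdbseed in ASM yourself beforehand.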

Then go to the next screen:

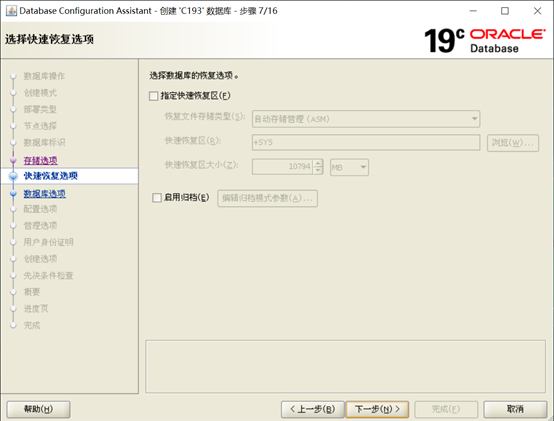

On this screen, leave the fast recovery area and archiving disabled for now, then click Next:

Leave Database Vault and Label Security disabled, then go to the next screen:

Specify the SGA and PGA sizes. As a rule of thumb, give SGA and PGA together about half of physical memory.
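The sketch below turns the half-of-physical-memory rule into numbers by splitting that budget between SGA and PGA. The 80/20 SGA/PGA split is an assumption (a commonly used starting point for OLTP workloads) and is not part of the guidance above. `suggest_memory_mb` is a hypothetical helper. Apply it per node, since each RAC instance has its own SGA and PGA.

```python
def suggest_memory_mb(physical_mb: int, oracle_fraction: float = 0.5,
                      sga_share: float = 0.8) -> tuple[int, int]:
    """Split a share of physical memory between SGA and PGA.

    oracle_fraction: portion of RAM given to SGA + PGA (the "half" rule).
    sga_share: assumed SGA share of that budget; the rest goes to PGA.
    """
    budget = int(physical_mb * oracle_fraction)
    sga = int(budget * sga_share)
    return sga, budget - sga


if __name__ == "__main__":
    sga, pga = suggest_memory_mb(8192)  # a hypothetical node with 8 GB of RAM
    print(f"SGA {sga} MB, PGA {pga} MB")
```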

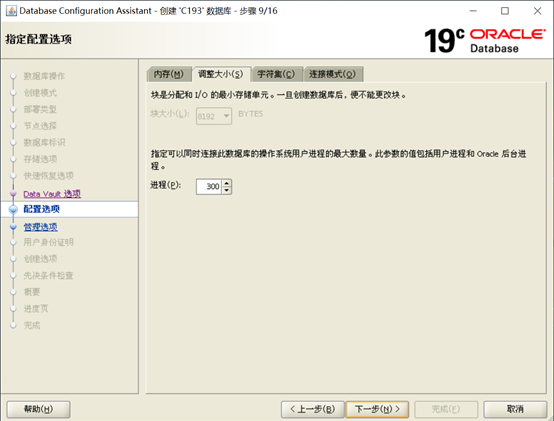

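The database block size (8 KB) cannot be changed here. You can change the processes parameter, but if the SGA is small, do not set processes too high, or the instance may fail to start. You can also adjust processes manually after the database is created.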
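Part of the reason processes has wide effects is that other limits are derived from it. According to the Oracle Database Reference (11.2 and later), the default for SESSIONS is derived as (1.5 * PROCESSES) + 22. The sketch below only evaluates that documented formula. How Oracle rounds odd PROCESSES values is an assumption here (truncation), so check the reference for your release and run `show parameter sessions` on the running instance.

```python
def derived_sessions(processes: int) -> int:
    """Default SESSIONS derived from PROCESSES: (1.5 * PROCESSES) + 22.

    Truncation for odd PROCESSES values is an assumption of this sketch.
    """
    return int(1.5 * processes) + 22


if __name__ == "__main__":
    for p in (300, 640, 1000):
        print(p, derived_sessions(p))
```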

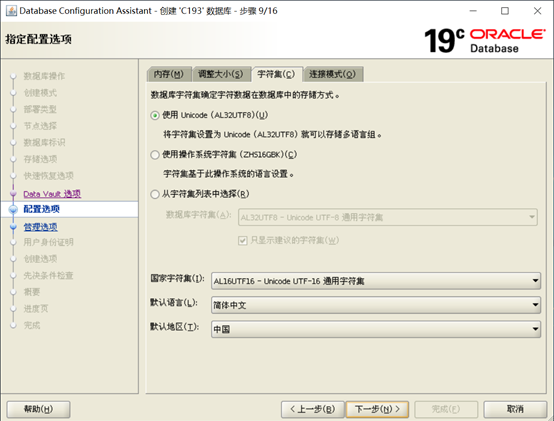

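Select the AL32UTF8 character set. ZHS16GBK is not recommended: a production database using ZHS16GBK will sooner or later run into rare Chinese characters that it cannot store.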
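The rare-character problem is easy to demonstrate. The minimal Python sketch below uses Python's `gbk` codec as a stand-in for Oracle's ZHS16GBK and `utf-8` for AL32UTF8. This is an assumption: the GBK repertoires are close but not guaranteed identical. The character U+20BB7 lies in CJK Extension B, outside GBK's repertoire. `encodable` is a hypothetical helper.

```python
RARE = "\U00020BB7"    # a CJK Extension B character, outside the BMP and outside GBK
COMMON = "\u4e2d"      # the common character "zhong" (middle), present in GBK


def encodable(text: str, codec: str) -> bool:
    """True if every character of text can be encoded with the given codec."""
    try:
        text.encode(codec)
    except UnicodeEncodeError:
        return False
    return True


if __name__ == "__main__":
    print(len(COMMON.encode("gbk")), len(COMMON.encode("utf-8")))  # 2 3
    print(encodable(RARE, "gbk"), encodable(RARE, "utf-8"))        # False True
```

The common character fits in both encodings (2 bytes in GBK, 3 in UTF-8). The rare one can only be stored in UTF-8, where it takes 4 bytes.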

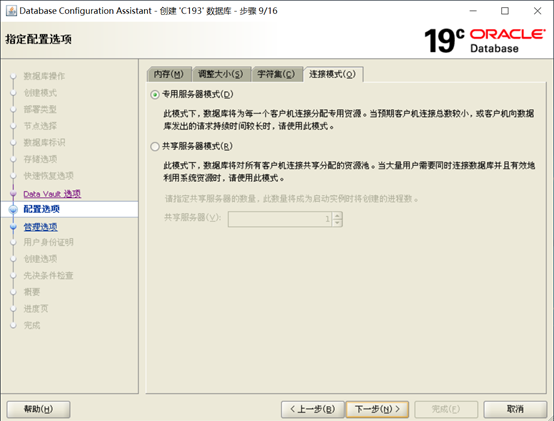

Select Dedicated server mode:

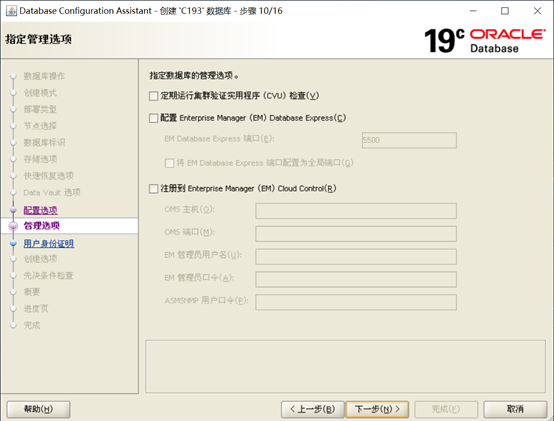

There is no need to run the CVU checks, and Cloud Control registration can be skipped for now; click Next.

Enter the passwords for SYS and SYSTEM (the same password is used for both here), then click Next:

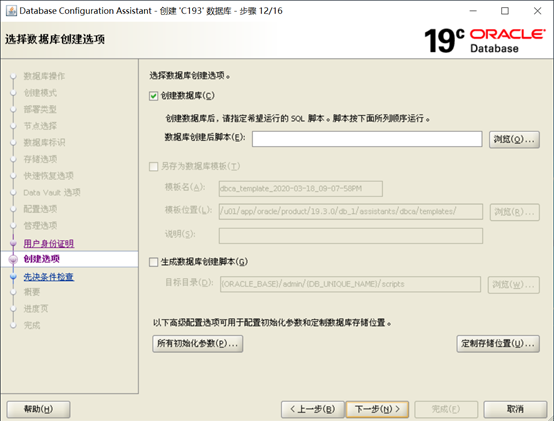

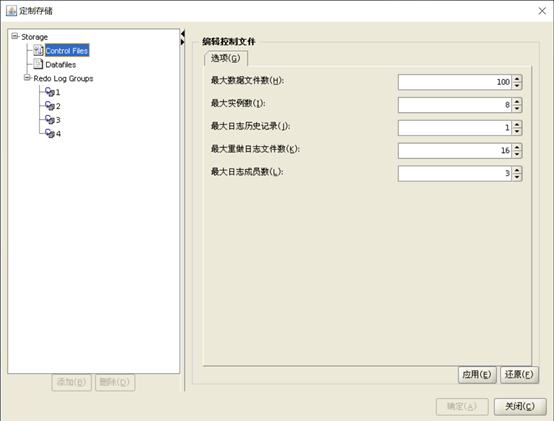

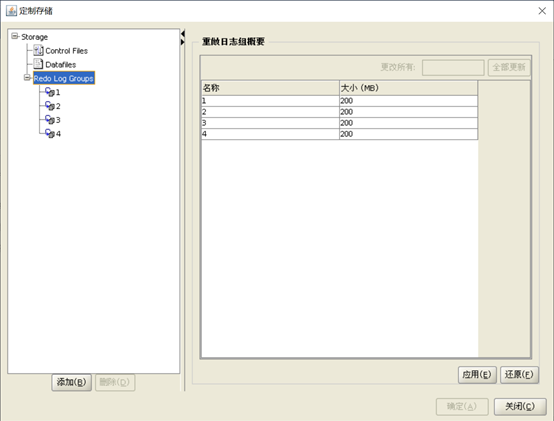

On this screen, click the Customize Storage Locations button to customize the control files and redo log files:

Control files:

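Redo logs: configure at least 3 groups per node, and make the log files reasonably large. Resizing redo logs after the system goes live is troublesome.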
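In RAC each instance writes its own redo thread, so "at least 3 groups per node" means at least three groups per thread. If groups have to be added after the database is created, a minimal sketch like the one below can generate the statements. The helper `redo_log_ddl`, the disk group `+DATA1`, the 1G size and the starting group number are all assumptions for illustration. Query `V$LOG` first so that the new group numbers do not collide with existing ones.

```python
def redo_log_ddl(threads: int, groups_per_thread: int = 3,
                 diskgroup: str = "+DATA1", size: str = "1G",
                 first_group: int = 1) -> list[str]:
    """Build one ADD LOGFILE statement per group, numbering groups consecutively."""
    stmts = []
    group = first_group
    for thread in range(1, threads + 1):
        for _ in range(groups_per_thread):
            stmts.append(
                f"ALTER DATABASE ADD LOGFILE THREAD {thread} "
                f"GROUP {group} ('{diskgroup}') SIZE {size};"
            )
            group += 1
    return stmts


if __name__ == "__main__":
    # Two instances (C1931, C1932) -> two redo threads; start numbering at a
    # hypothetical free group number.
    for stmt in redo_log_ddl(threads=2, first_group=5):
        print(stmt)
```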

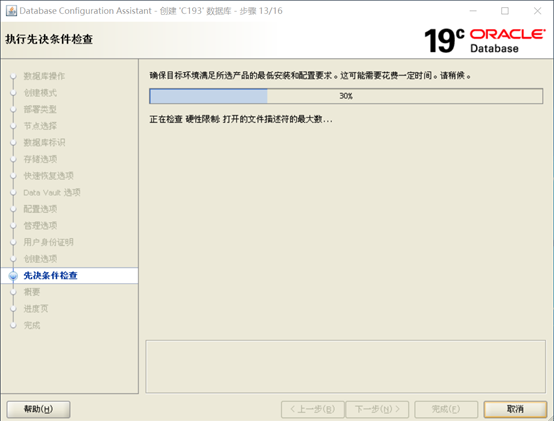

Once everything is confirmed, start the prerequisite checks:

As in the software installation, configuration issues can be handled with Fix & Check Again. This generates a fixup script to run as root.

For issues that can be safely ignored, select Ignore All, then click Next to start the installation:

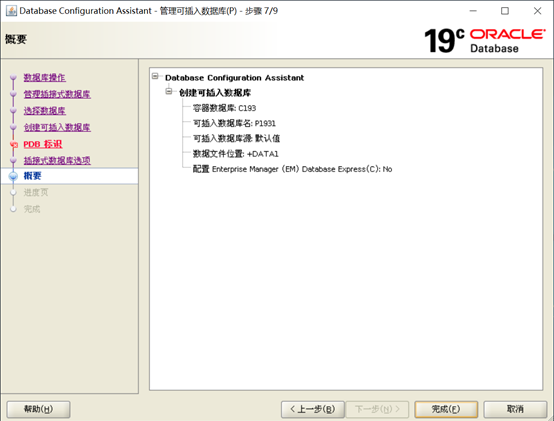

Review the CDB creation summary and click Finish if everything looks right:

Database creation starts:

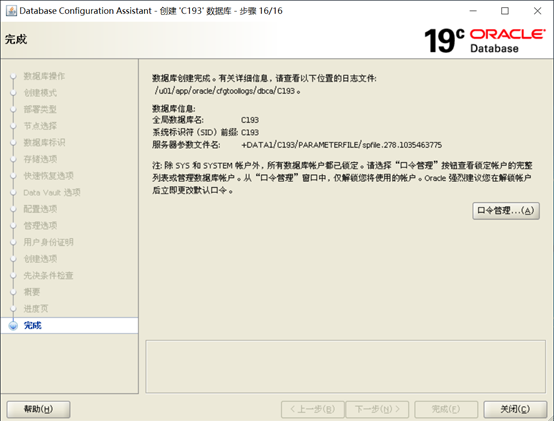

Wait for the database creation to complete:

When creation finishes, the password management screen is shown; click Close.

5.4 Create the PDB

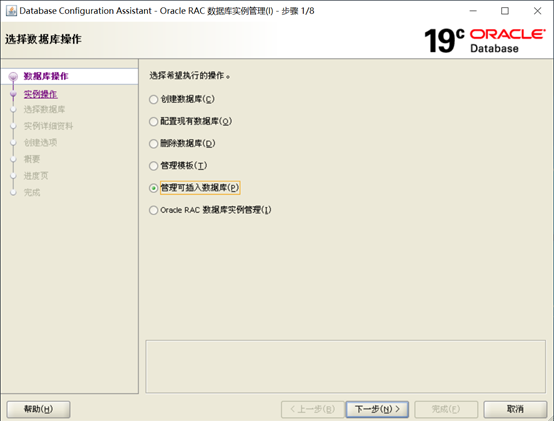

Log in as oracle, set DISPLAY, and run dbca again to start the GUI:

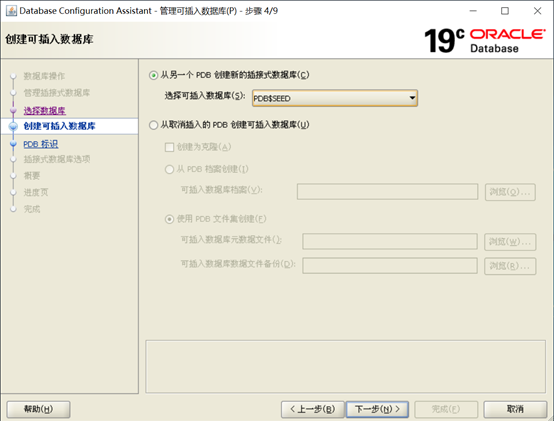

Select Manage Pluggable databases and go to the next page:

Select Create a Pluggable database and go to the next page:

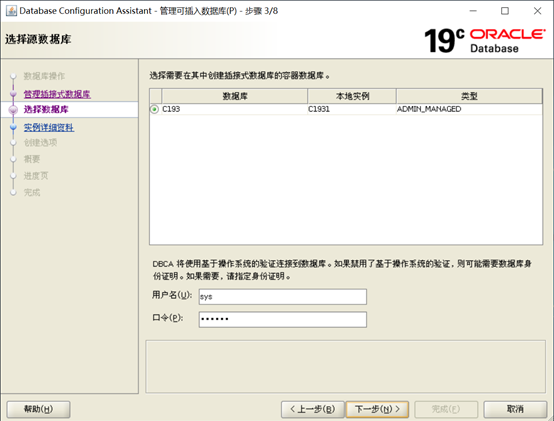

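On this page, first select the CDB in which to create the PDB, then enter a CDB username and password. If the CDB uses operating system authentication, the username and password can be left blank. Then go to the next page: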

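Here you can create the PDB either from the seed PDB or from an unplugged PDB. We have no unplugged PDB to plug in, so choose to create it from the seed, then go to the next screen.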

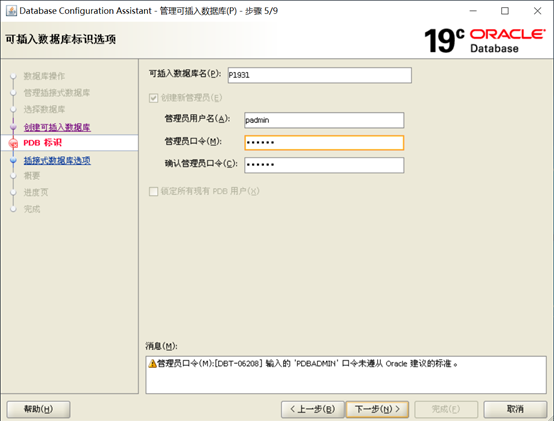

On this screen, enter the PDB name, the PDB administrator user and its password. Then go to the next screen:

Creating the USERS tablespace is optional. Click Next to create the PDB:

Wait until it finishes:

Click Close:

Finally, confirm that the PDB has been created and is registered with the listener:

```
[oracle@mm1903 ~]$ export NLS_LANG="AMERICAN_AMERICA.ZHS16GBK"
[oracle@mm1903 ~]$ sqlplus / as sysdba

SQL*Plus: Release 19.0.0.0.0 - Production on Thu Mar 19 13:20:35 2020
Version 19.3.0.0.0

Copyright (c) 1982, 2019, Oracle.  All rights reserved.

Connected to:
Oracle Database 19c Enterprise Edition Release 19.0.0.0.0 - Production
Version 19.3.0.0.0

SQL> set linesize 100
SQL> col name for a20
SQL> select name,open_mode from v$pdbs;

NAME                 OPEN_MODE
-------------------- --------------------
PDB$SEED             READ ONLY
P1931                READ WRITE

SQL> exit
Disconnected from Oracle Database 19c Enterprise Edition Release 19.0.0.0.0 - Production
Version 19.3.0.0.0
[oracle@mm1903 ~]$
```

```
[grid@mm1903 ~]$ lsnrctl

LSNRCTL for Linux: Version 19.0.0.0.0 - Production on 19-MAR-2020 13:21:50

Copyright (c) 1991, 2019, Oracle.  All rights reserved.

Welcome to LSNRCTL, type "help" for information.

LSNRCTL> status
Connecting to (DESCRIPTION=(ADDRESS=(PROTOCOL=IPC)(KEY=LISTENER)))
STATUS of the LISTENER
------------------------
Alias                     LISTENER
Version                   TNSLSNR for Linux: Version 19.0.0.0.0 - Production
Start Date                19-MAR-2020 11:07:34
Uptime                    0 days 2 hr. 14 min. 17 sec
Trace Level               off
Security                  ON: Local OS Authentication
SNMP                      OFF
Listener Parameter File   /u01/app/19.3.0/grid/network/admin/listener.ora
Listener Log File         /u01/app/grid/diag/tnslsnr/mm1903/listener/alert/log.xml
Listening Endpoints Summary...
  (DESCRIPTION=(ADDRESS=(PROTOCOL=ipc)(KEY=LISTENER)))
  (DESCRIPTION=(ADDRESS=(PROTOCOL=tcp)(HOST=192.168.56.56)(PORT=1521)))
  (DESCRIPTION=(ADDRESS=(PROTOCOL=tcp)(HOST=192.168.56.58)(PORT=1521)))
Services Summary...
Service "+ASM" has 1 instance(s).
  Instance "+ASM1", status READY, has 1 handler(s) for this service...
Service "+ASM_DATA1" has 1 instance(s).
  Instance "+ASM1", status READY, has 1 handler(s) for this service...
Service "+ASM_FRA" has 1 instance(s).
  Instance "+ASM1", status READY, has 1 handler(s) for this service...
Service "+ASM_SYS" has 1 instance(s).
  Instance "+ASM1", status READY, has 1 handler(s) for this service...
Service "C193" has 1 instance(s).
  Instance "C1931", status READY, has 1 handler(s) for this service...
Service "C193XDB" has 1 instance(s).
  Instance "C1931", status READY, has 1 handler(s) for this service...
Service "a12f2bfe86b26588e0550a00272f5be2" has 1 instance(s).
  Instance "C1931", status READY, has 1 handler(s) for this service...
Service "p1931" has 1 instance(s).
  Instance "C1931", status READY, has 1 handler(s) for this service...
The command completed successfully
```
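The listener check above comes down to one question: does the PDB's service (p1931 here) appear with an instance in READY status? The minimal Python sketch below extracts the services summary from saved `lsnrctl status` output. `parse_services` is a hypothetical helper, and its regular expressions assume the line formats shown above.

```python
import re

SERVICE_RE = re.compile(r'Service "(?P<service>[^"]+)" has (?P<count>\d+) instance\(s\)\.')
INSTANCE_RE = re.compile(r'Instance "(?P<instance>[^"]+)", status (?P<status>\w+),')


def parse_services(status_output: str) -> dict[str, list[tuple[str, str]]]:
    """Map each service in `lsnrctl status` output to its (instance, status) pairs."""
    services: dict[str, list[tuple[str, str]]] = {}
    current = None
    for line in status_output.splitlines():
        s = SERVICE_RE.search(line)
        if s:
            current = s["service"]
            services[current] = []
            continue
        i = INSTANCE_RE.search(line)
        if i and current is not None:
            services[current].append((i["instance"], i["status"]))
    return services
```

A check such as `("C1931", "READY") in parse_services(text).get("p1931", [])` then confirms that the PDB service is registered.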

The Oracle 19.3 RAC database has now been created successfully.

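If your organization is planning to adopt a 12c-family database today, go straight to 19c, the final release of the 12c family (12.2.0.3). Compared with 18c, 19c is more stable and has a longer Oracle support period.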

5.5 Verify the cluster status with crsctl

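Log in as grid and run crsctl stat res -t to check the cluster resources. The database resource ora.c193.db is Open. In this output all resources are running on mm1903 only: the mm1904 VIP shows FAILED OVER and the second instances of the ASM-related resources are OFFLINE. This is consistent with the second VM not running at the time (see 5.6).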

```
[grid@mm1903 ~]$ crsctl stat res -t
--------------------------------------------------------------------------------
Name           Target  State        Server                   State details
--------------------------------------------------------------------------------
Local Resources
--------------------------------------------------------------------------------
ora.LISTENER.lsnr
               ONLINE  ONLINE       mm1903                   STABLE
ora.chad
               ONLINE  ONLINE       mm1903                   STABLE
ora.net1.network
               ONLINE  ONLINE       mm1903                   STABLE
ora.ons
               ONLINE  ONLINE       mm1903                   STABLE
ora.proxy_advm
               OFFLINE OFFLINE      mm1903                   STABLE
--------------------------------------------------------------------------------
Cluster Resources
--------------------------------------------------------------------------------
ora.ASMNET1LSNR_ASM.lsnr(ora.asmgroup)
      1        ONLINE  ONLINE       mm1903                   STABLE
      2        ONLINE  OFFLINE                               STABLE
      3        ONLINE  OFFLINE                               STABLE
ora.DATA1.dg(ora.asmgroup)
      1        ONLINE  ONLINE       mm1903                   STABLE
      2        ONLINE  OFFLINE                               STABLE
      3        OFFLINE OFFLINE                               STABLE
ora.FRA.dg(ora.asmgroup)
      1        ONLINE  ONLINE       mm1903                   STABLE
      2        ONLINE  OFFLINE                               STABLE
      3        OFFLINE OFFLINE                               STABLE
ora.LISTENER_SCAN1.lsnr
      1        ONLINE  ONLINE       mm1903                   STABLE
ora.LISTENER_SCAN2.lsnr
      1        ONLINE  ONLINE       mm1903                   STABLE
ora.LISTENER_SCAN3.lsnr
      1        ONLINE  ONLINE       mm1903                   STABLE
ora.SYS.dg(ora.asmgroup)
      1        ONLINE  ONLINE       mm1903                   STABLE
      2        ONLINE  OFFLINE                               STABLE
      3        OFFLINE OFFLINE                               STABLE
ora.asm(ora.asmgroup)
      1        ONLINE  ONLINE       mm1903                   Started,STABLE
      2        ONLINE  OFFLINE                               STABLE
      3        OFFLINE OFFLINE                               STABLE
ora.asmnet1.asmnetwork(ora.asmgroup)
      1        ONLINE  ONLINE       mm1903                   STABLE
      2        ONLINE  OFFLINE                               STABLE
      3        OFFLINE OFFLINE                               STABLE
ora.c193.db
      1        ONLINE  ONLINE       mm1903                   Open,HOME=/u01/app/o
                                                             racle/product/19.3.0
                                                             /db_1,STABLE
ora.cvu
      1        ONLINE  ONLINE       mm1903                   STABLE
ora.mm1903.vip
      1        ONLINE  ONLINE       mm1903                   STABLE
ora.mm1904.vip
      1        ONLINE  INTERMEDIATE mm1903                   FAILED OVER,STABLE
ora.qosmserver
      1        ONLINE  ONLINE       mm1903                   STABLE
ora.scan1.vip
      1        ONLINE  ONLINE       mm1903                   STABLE
ora.scan2.vip
      1        ONLINE  ONLINE       mm1903                   STABLE
ora.scan3.vip
      1        ONLINE  ONLINE       mm1903                   STABLE
--------------------------------------------------------------------------------
```
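On a larger cluster it is easier to let a script list the resources whose state does not match their target. The minimal Python sketch below assumes the `crsctl stat res -t` layout shown above: a resource-name line starting with `ora.`, followed by indented status lines, with an instance number for cluster resources. `find_unhealthy` is a hypothetical helper. On the output above it would flag the `(ora.asmgroup)` instances whose target is ONLINE but state is OFFLINE, as well as the INTERMEDIATE mm1904 VIP.

```python
def find_unhealthy(crsctl_t_output: str) -> list[tuple[str, str, str, str]]:
    """Return (resource, instance, target, state) where target is ONLINE but state is not.

    Local resources have no instance number; "-" is reported for them.
    """
    problems = []
    resource = None
    for raw in crsctl_t_output.splitlines():
        if raw.startswith("ora."):
            resource = raw.strip()
            continue
        fields = raw.split()
        if resource is None or not fields:
            continue
        instance = "-"
        if fields[0].isdigit():
            instance = fields.pop(0)
        # Skip separators, section titles and wrapped "State details" lines.
        if len(fields) < 2 or fields[0] not in ("ONLINE", "OFFLINE"):
            continue
        target, state = fields[0], fields[1]
        if target == "ONLINE" and state != "ONLINE":
            problems.append((resource, instance, target, state))
    return problems
```

Run as grid with crsctl on the PATH, for example: `find_unhealthy(subprocess.run(["crsctl", "stat", "res", "-t"], capture_output=True, text=True).stdout)` after `import subprocess`.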

Log in as oracle and connect with sqlplus / as sysdba:

```
[oracle@mm1903 ~]$ export NLS_LANG="AMERICAN_AMERICA.ZHS16GBK"
[oracle@mm1903 ~]$ sqlplus / as sysdba

SQL*Plus: Release 19.0.0.0.0 - Production on Thu Mar 19 13:22:55 2020
Version 19.3.0.0.0

Copyright (c) 1982, 2019, Oracle.  All rights reserved.

Connected to:
Oracle Database 19c Enterprise Edition Release 19.0.0.0.0 - Production
Version 19.3.0.0.0

SQL> select inst_id, name, open_mode from gv$database;

   INST_ID NAME               OPEN_MODE
---------- ------------------ ----------------------------------------
         1 C193               READ WRITE

SQL> show con_id

CON_ID
------------------------------
1
SQL> show con_name

CON_NAME
------------------------------
CDB$ROOT
SQL> show pdbs

    CON_ID CON_NAME                       OPEN MODE  RESTRICTED
---------- ------------------------------ ---------- ----------
         2 PDB$SEED                       READ ONLY  NO
         3 P1931                          READ WRITE NO
SQL> alter session set container = p1931;

Session altered.

SQL> show pdbs

    CON_ID CON_NAME                       OPEN MODE  RESTRICTED
---------- ------------------------------ ---------- ----------
         3 P1931                          READ WRITE NO
SQL> select name from v$datafile;

NAME
--------------------------------------------------------------------------------
+DATA1/C193/A12F2BFE86B26588E0550A00272F5BE2/DATAFILE/system.280.1035464981
+DATA1/C193/A12F2BFE86B26588E0550A00272F5BE2/DATAFILE/sysaux.281.1035464983
+DATA1/C193/A12F2BFE86B26588E0550A00272F5BE2/DATAFILE/undotbs1.279.1035464981
```

5.6 Add the second instance

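The laptop did not have enough resources to run both virtual machines at once while the PDB was being created. So the RAC database was created with a single instance, and the instance on the second node is added to it afterwards.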

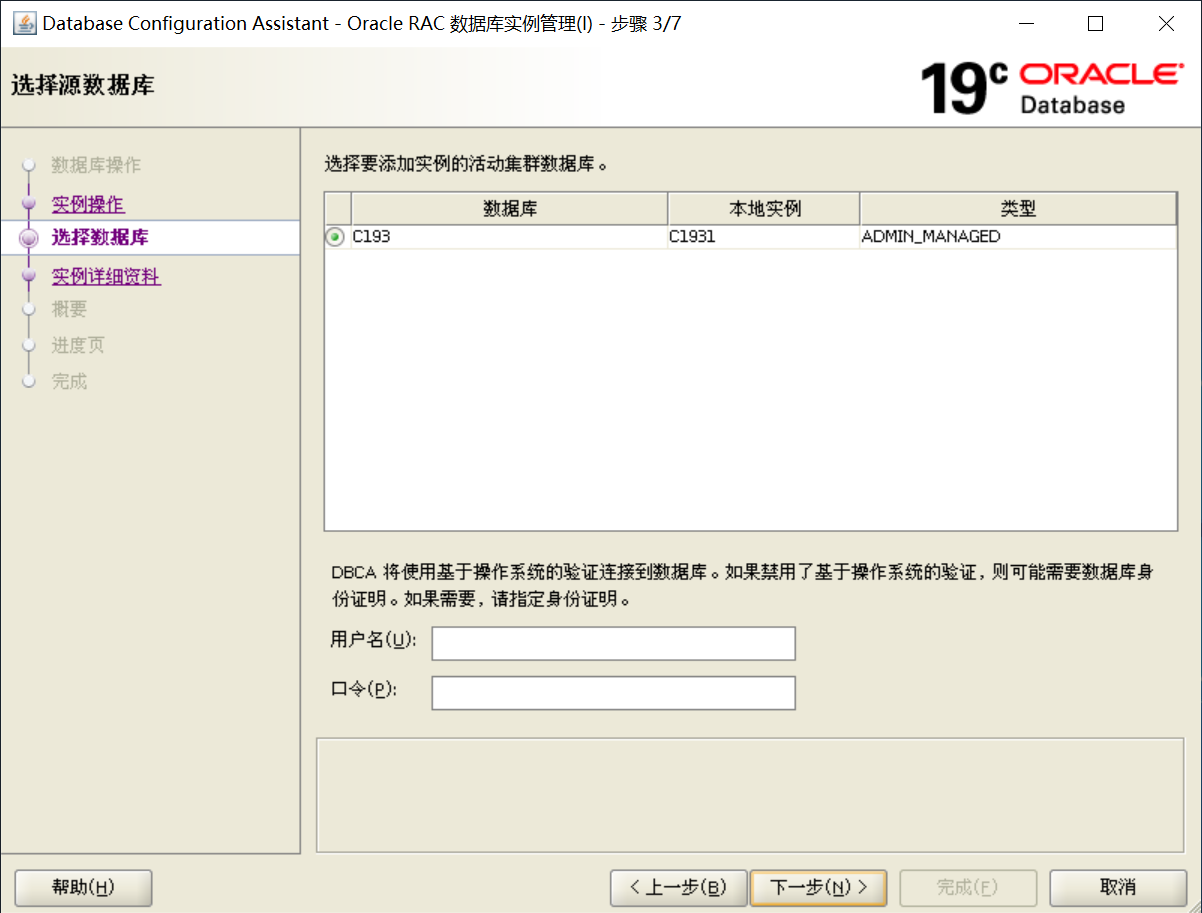

The screenshots below show how the instance is added:

In this step, select Oracle RAC database instance management and click Next:

On this page, select Add an instance and click Next:

This step selects the active cluster database; the default is fine:

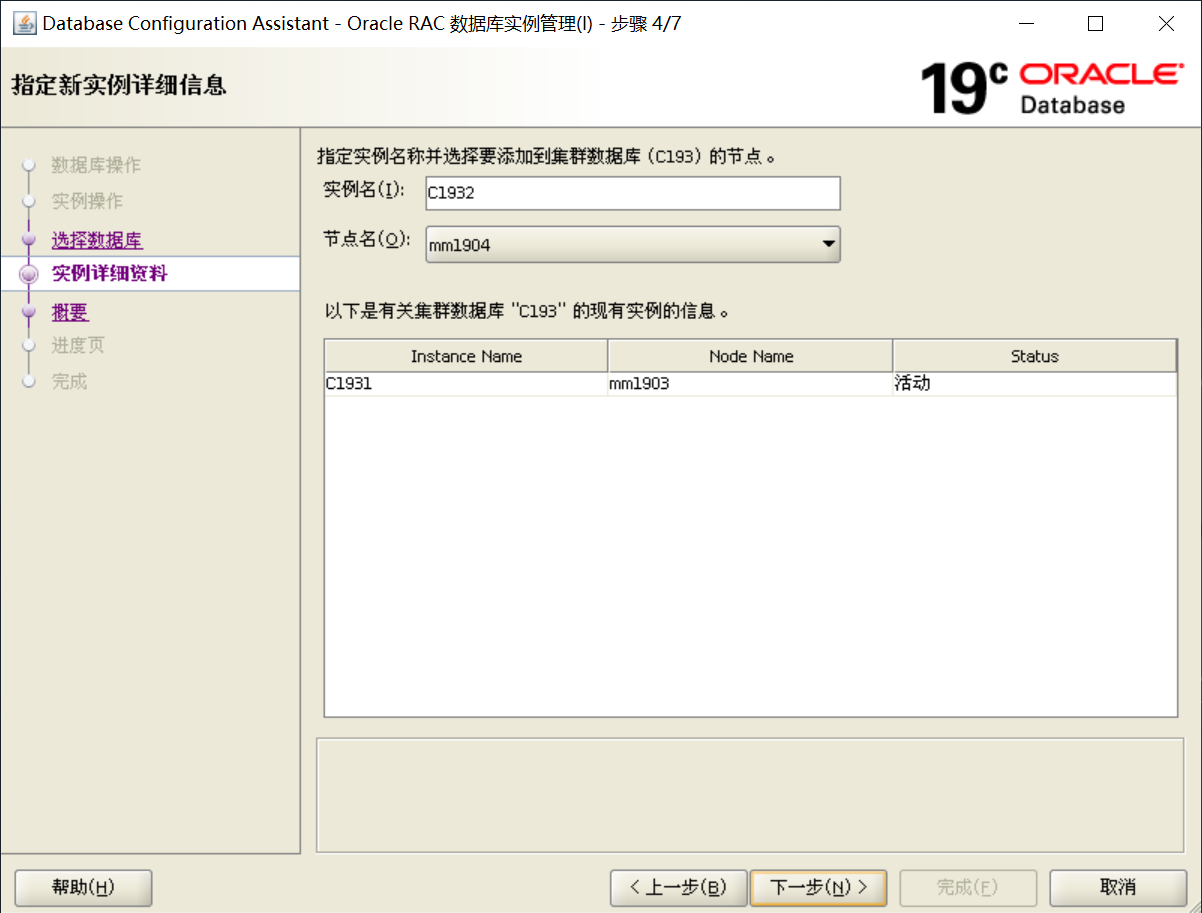

Set the instance name to C1932, select the correct node (mm1904), and click Next:

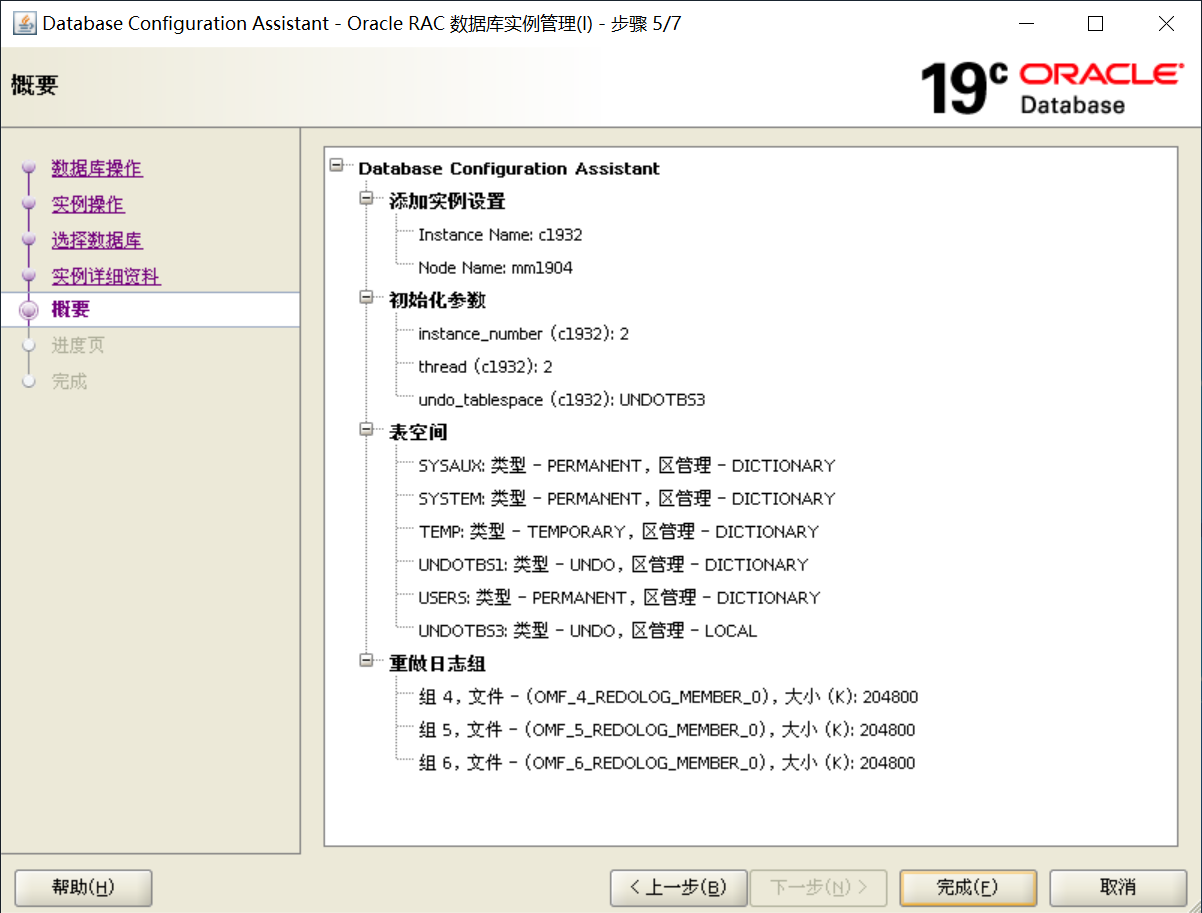

Review the summary and click Finish.

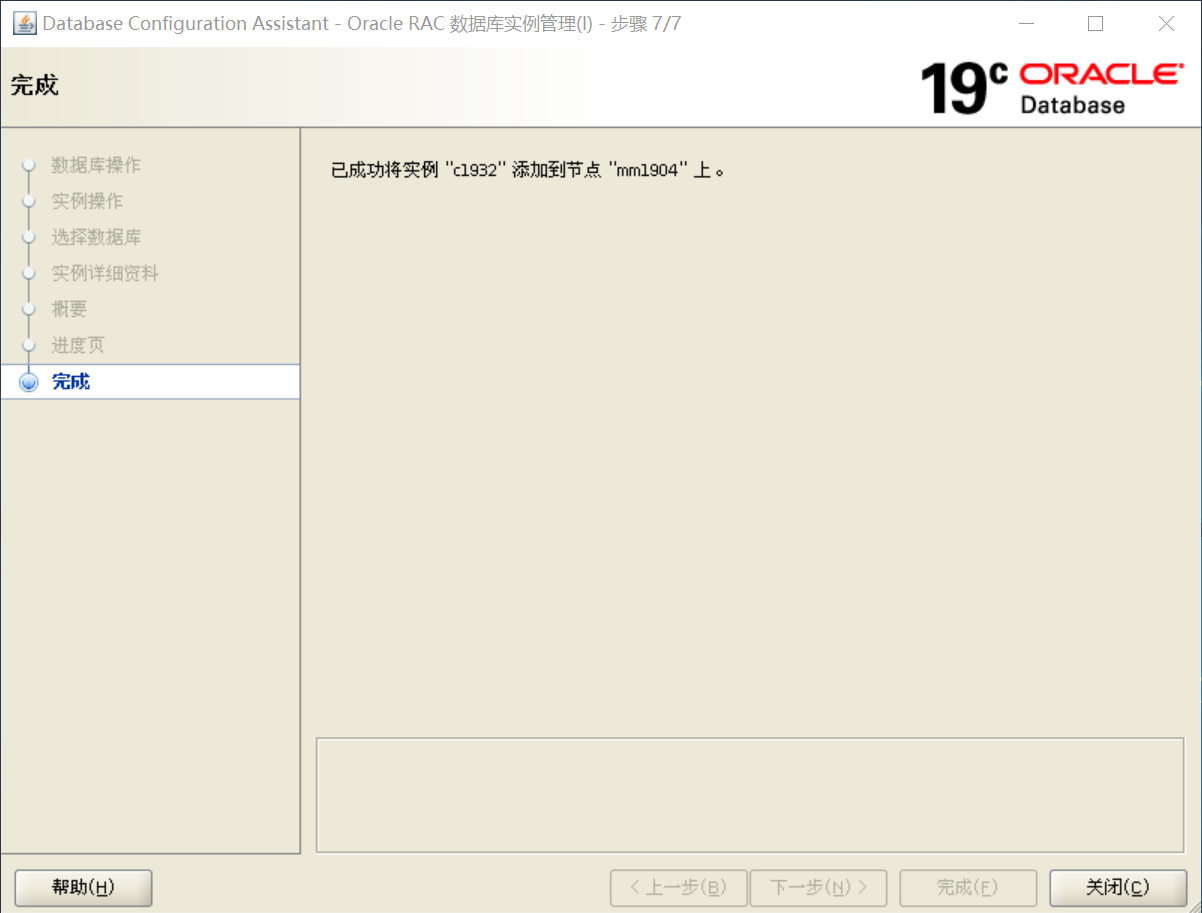

The instance has now been added successfully.

--------------------------------------------------------------------------------------------------------------------

All resources are now shown as healthy.

This completes the entire installation of Oracle 19.3 GI & RAC on Red Hat 7.6.

Zhejiang Public Security Network Registration No. 33010602011771
