Building a Two-Region, Three-Center Disaster Recovery Solution for Application Systems with ACK One

Authors: 宇汇, 壮怀, 先河

Overview

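A two-region, three-center architecture deploys three business processing centers across two cities: a production center, a same-city disaster recovery center, and a remote disaster recovery center. Two environments are deployed in one city to form a same-city dual-center setup; both process business traffic at the same time, synchronize data over high-speed links, and can take over from each other. A third environment is deployed in another city as the remote disaster recovery center for data backup; if both same-city centers fail at once, the remote center can take over business processing. This architecture greatly improves business continuity.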

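With the multi-cluster application distribution feature of ACK One, an enterprise can manage three K8s clusters in a unified way, quickly deploy and upgrade applications across them, and apply differentiated configurations per cluster. Combined with GTM (Global Traffic Manager), business traffic can be switched automatically among the three K8s clusters when a failure occurs. Data replication at the RDS layer is not covered in this practice; see DTS (Data Transmission Service) for details.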

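Solution Architecture

Prerequisites

Enable the multi-cluster management master instance[1]

Add three K8s clusters to the master instance by managing associated clusters[2] to build the two-region, three-center setup. As an example, this practice deploys two K8s clusters in Beijing (cluster1-beijing and cluster2-beijing) and one K8s cluster in Hangzhou (cluster1-hangzhou).

Create a GTM instance[3]

Application Deployment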

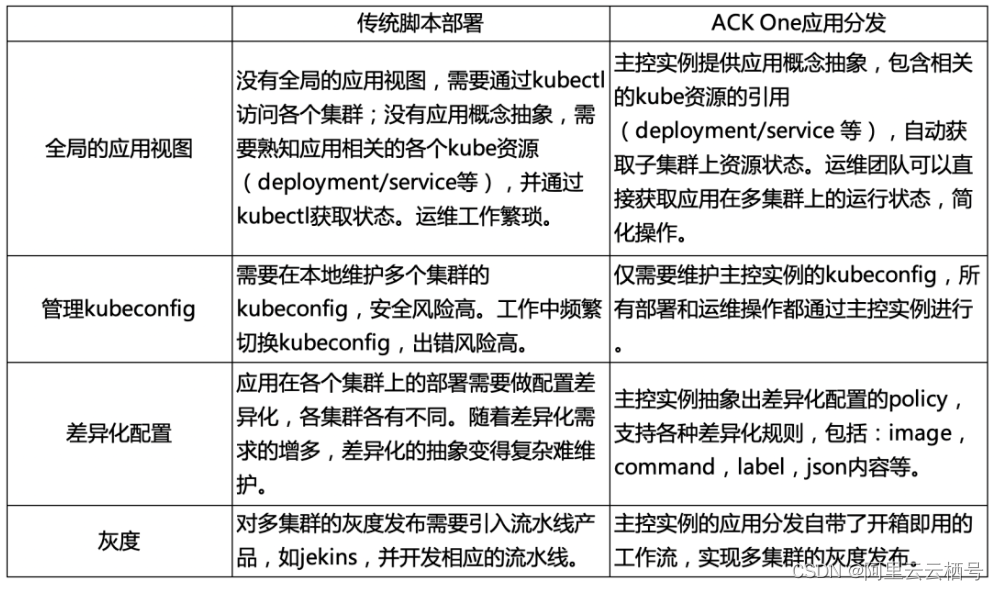

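Use the application distribution feature[4] of the ACK One master instance to distribute the application to the three K8s clusters. Compared with traditional script-based deployment, application distribution with ACK One offers the following benefits.

In this practice, the sample application is a web application made up of K8s Deployment, Service, Ingress, and ConfigMap resources. The Service and Ingress expose the application externally, and the Deployment reads its configuration parameters from the ConfigMap. By creating application distribution rules, the application is distributed to three K8s clusters (two in Beijing and one in Hangzhou) to form the two-region, three-center setup. During distribution, the Deployment and ConfigMap resources are given differentiated configurations to suit clusters in different locations, and a manual approval step provides canary-style control over the rollout to limit the blast radius of errors.

1. Run the following command to create the namespace demo.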
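The differentiated configuration described above can be pictured as a base manifest plus per-cluster overrides. The following is a minimal Python sketch of that idea, not the ACK One implementation; the `render_for_cluster` helper and the dictionary layout are hypothetical and exist only for illustration. The values mirror the ones used later in this practice.

```python
import copy

# Base settings shared by every cluster (values taken from the example in this practice).
BASE = {
    "replicas": 5,
    "env": {"ENV_NAME": "cluster1-beijing"},
    "config": {"database-host": "beijing-db.pg.aliyun.com"},
}

# Per-cluster overrides; a cluster without an entry receives the base settings unchanged.
OVERRIDES = {
    "cluster2-beijing": {"env": {"ENV_NAME": "cluster2-beijing"}},
    "cluster1-hangzhou": {
        "replicas": 1,
        "env": {"ENV_NAME": "cluster1-hangzhou"},
        "config": {"database-address": "hangzhou-db.pg.aliyun.com"},
    },
}


def render_for_cluster(cluster):
    """Return the effective settings for one cluster (hypothetical helper)."""
    rendered = copy.deepcopy(BASE)
    override = OVERRIDES.get(cluster, {})
    if "replicas" in override:
        rendered["replicas"] = override["replicas"]
    # Environment variables are merged key by key.
    rendered["env"].update(override.get("env", {}))
    # The configuration file is replaced as a whole when an override exists.
    if "config" in override:
        rendered["config"] = copy.deepcopy(override["config"])
    return rendered


for name in ("cluster1-beijing", "cluster2-beijing", "cluster1-hangzhou"):
    print(name, render_for_cluster(name))
```

Keeping the base and the overrides separate means a change to the shared settings only has to be made once, while each cluster's differences stay small and easy to review.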

kubectl create namespace demo

2. Create a file named app-meta.yaml with the following content.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        app: web-demo
      name: web-demo
      namespace: demo
    spec:
      replicas: 5
      selector:
        matchLabels:
          app: web-demo
      template:
        metadata:
          labels:
            app: web-demo
        spec:
          containers:
          - image: acr-multiple-clusters-registry.cn-hangzhou.cr.aliyuncs.com/ack-multiple-clusters/web-demo:0.4.0
            name: web-demo
            env:
            - name: ENV_NAME
              value: cluster1-beijing
            volumeMounts:
            - name: config-file
              mountPath: "/config-file"
              readOnly: true
          volumes:
          - name: config-file
            configMap:
              items:
              - key: config.json
                path: config.json
              name: web-demo
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: web-demo
      namespace: demo
      labels:
        app: web-demo
    spec:
      selector:
        app: web-demo
      ports:
      - protocol: TCP
        port: 80
        targetPort: 8080
    ---
    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: web-demo
      namespace: demo
      labels:
        app: web-demo
    spec:
      rules:
      - host: web-demo.example.com
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: web-demo
                port:
                  number: 80
    ---
    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: web-demo
      namespace: demo
      labels:
        app: web-demo
    data:
      config.json: |
        {
          database-host: "beijing-db.pg.aliyun.com"
        }
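One detail worth checking: the `config.json` payload above uses an unquoted key. The demo application appears to echo the file back as plain text, so this is harmless here, but a strict JSON parser would reject it. The small Python check below illustrates the difference, assuming an application that parses the file with a standard JSON library; the quoted variant is only a suggestion and is not part of the original example.

```python
import json

# The payload as it appears in the ConfigMap above (unquoted key).
original = '{\n  database-host: "beijing-db.pg.aliyun.com"\n}'

# A strictly valid variant (assumption: only needed if the application parses the file as JSON).
strict = '{\n  "database-host": "beijing-db.pg.aliyun.com"\n}'


def is_valid_json(text):
    """Return True if text parses as strict JSON, False otherwise."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


print(is_valid_json(original))
print(is_valid_json(strict))
```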

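3. Run the following command to deploy the application web-demo on the master instance. Note: Kubernetes resources created on the master instance are not delivered to the associated clusters. They serve only as source data that the Application created later (step 4b) references.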

kubectl apply -f app-meta.yaml

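4. Create the application distribution rules.

a. Run the following command to list the associated clusters managed by the master instance and determine the distribution targets.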

kubectl amc get managedcluster

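Expected output:

    Name                  Alias               HubAccepted
    managedcluster-cxxx   cluster1-hangzhou   true
    managedcluster-cxxx   cluster2-beijing    true
    managedcluster-cxxx   cluster1-beijing    true

b. Create the application distribution rule file app.yaml with the following content. Replace the managedcluster-cxxx placeholders in the example with the actual names of the clusters to deploy to. Best practices for defining distribution rules are described in the comments.

app.yaml contains the following resource types: Policy (type: topology) for distribution targets, Policy (type: override) for differentiation rules, Workflow, and Application. For details, see application replication and distribution[5], differentiated configuration for application distribution[6], and canary distribution of applications across clusters[7].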

    apiVersion: core.oam.dev/v1alpha1
    kind: Policy
    metadata:
      name: cluster1-beijing
      namespace: demo
    type: topology
    properties:
      clusters: ["<managedcluster-cxxx>"] # distribution target 1: cluster1-beijing
    ---
    apiVersion: core.oam.dev/v1alpha1
    kind: Policy
    metadata:
      name: cluster2-beijing
      namespace: demo
    type: topology
    properties:
      clusters: ["<managedcluster-cxxx>"] # distribution target 2: cluster2-beijing
    ---
    apiVersion: core.oam.dev/v1alpha1
    kind: Policy
    metadata:
      name: cluster1-hangzhou
      namespace: demo
    type: topology
    properties:
      clusters: ["<managedcluster-cxxx>"] # distribution target 3: cluster1-hangzhou
    ---

    apiVersion: core.oam.dev/v1alpha1
    kind: Policy
    metadata:
      name: override-env-cluster2-beijing
      namespace: demo
    type: override
    properties:
      components:
      - name: "deployment"
        traits:
        - type: env
          properties:
            containerName: web-demo
            env:
              ENV_NAME: cluster2-beijing # override the Deployment environment variable for cluster2-beijing
    ---
    apiVersion: core.oam.dev/v1alpha1
    kind: Policy
    metadata:
      name: override-env-cluster1-hangzhou
      namespace: demo
    type: override
    properties:
      components:
      - name: "deployment"
        traits:
        - type: env
          properties:
            containerName: web-demo
            env:
              ENV_NAME: cluster1-hangzhou # override the Deployment environment variable for cluster1-hangzhou
    ---
    apiVersion: core.oam.dev/v1alpha1
    kind: Policy
    metadata:
      name: override-replic-cluster1-hangzhou
      namespace: demo
    type: override
    properties:
      components:
      - name: "deployment"
        traits:
        - type: scaler
          properties:
            replicas: 1 # override the Deployment replica count for cluster1-hangzhou
    ---
    apiVersion: core.oam.dev/v1alpha1
    kind: Policy
    metadata:
      name: override-configmap-cluster1-hangzhou
      namespace: demo
    type: override
    properties:
      components:
      - name: "configmap"
        traits:
        - type: json-merge-patch # override the ConfigMap content for cluster1-hangzhou
          properties:
            data:
              config.json: |
                {
                  database-address: "hangzhou-db.pg.aliyun.com"
                }
    ---

    apiVersion: core.oam.dev/v1alpha1
    kind: Workflow
    metadata:
      name: deploy-demo
      namespace: demo
    steps: # deploy to cluster1-beijing, cluster2-beijing, and cluster1-hangzhou in order
    - type: deploy
      name: deploy-cluster1-beijing
      properties:
        policies: ["cluster1-beijing"]
    - type: deploy
      name: deploy-cluster2-beijing
      properties:
        auto: false # manual approval is required before deploying to cluster2-beijing
        policies: ["override-env-cluster2-beijing", "cluster2-beijing"] # override the environment variable when deploying to cluster2-beijing
    - type: deploy
      name: deploy-cluster1-hangzhou
      properties:
        policies: ["override-env-cluster1-hangzhou", "override-replic-cluster1-hangzhou", "override-configmap-cluster1-hangzhou", "cluster1-hangzhou"]
        # override the environment variable, replica count, and ConfigMap when deploying to cluster1-hangzhou
    ---

    apiVersion: core.oam.dev/v1beta1
    kind: Application
    metadata:
      annotations:
        app.oam.dev/publishVersion: version8
      name: web-demo
      namespace: demo
    spec:
      components:
      - name: deployment # reference the Deployment separately so it can be overridden
        type: ref-objects
        properties:
          objects:
          - apiVersion: apps/v1
            kind: Deployment
            name: web-demo
      - name: configmap # reference the ConfigMap separately so it can be overridden
        type: ref-objects
        properties:
          objects:
          - apiVersion: v1
            kind: ConfigMap
            name: web-demo
      - name: same-resource # resources without differentiated configuration
        type: ref-objects
        properties:
          objects:
          - apiVersion: v1
            kind: Service
            name: web-demo
          - apiVersion: networking.k8s.io/v1
            kind: Ingress
            name: web-demo
      workflow:
        ref: deploy-demo
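The `json-merge-patch` trait name suggests JSON Merge Patch semantics (RFC 7386). Assuming that is how the override is applied, the sketch below shows why the Hangzhou ConfigMap ends up containing only `database-address`: `config.json` is a single string value, so the patch replaces it as a whole instead of merging keys inside it. The `merge_patch` function is an illustrative implementation of the RFC algorithm, not code taken from ACK One.

```python
def merge_patch(target, patch):
    """Apply a JSON Merge Patch (RFC 7386) and return the patched value."""
    if not isinstance(patch, dict):
        # A non-object patch replaces the target entirely.
        return patch
    result = dict(target) if isinstance(target, dict) else {}
    for key, value in patch.items():
        if value is None:
            # A null value removes the key from the result.
            result.pop(key, None)
        else:
            result[key] = merge_patch(result.get(key), value)
    return result


base = {"data": {"config.json": '{\n  database-host: "beijing-db.pg.aliyun.com"\n}\n'}}
patch = {"data": {"config.json": '{\n  database-address: "hangzhou-db.pg.aliyun.com"\n}\n'}}

patched = merge_patch(base, patch)
print(patched["data"]["config.json"])
```

If both keys were needed in the Hangzhou cluster, the override would have to carry the full file content, because the merge stops at the string boundary.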

5. Run the following command to deploy the distribution rules in app.yaml on the master instance.

kubectl apply -f app.yaml

6. Check the deployment status of the application.

kubectl get app web-demo -n demo

Expected output (workflowSuspending means the deployment is paused):

    NAME       COMPONENT    TYPE          PHASE                HEALTHY   STATUS   AGE
    web-demo   deployment   ref-objects   workflowSuspending   true               47h

7. Check the running status of the application in each cluster.

kubectl amc get deployment web-demo -n demo -m all

Expected output:

    Run on ManagedCluster managedcluster-cxxx (cluster1-hangzhou)
    No resources found in demo namespace    # first deployment; the workflow has not yet started deploying to cluster1-hangzhou
    Run on ManagedCluster managedcluster-cxxx (cluster2-beijing)
    No resources found in demo namespace    # first deployment; the workflow has not yet started deploying to cluster2-beijing and is waiting for manual approval
    Run on ManagedCluster managedcluster-cxxx (cluster1-beijing)
    NAME       READY   UP-TO-DATE   AVAILABLE   AGE
    web-demo   5/5     5            5           47h    # the Deployment is running normally on cluster1-beijing

8. After manual approval, continue the deployment to cluster2-beijing and cluster1-hangzhou.

kubectl amc workflow resume web-demo -n demo

Expected output:

    Successfully resume workflow: web-demo

9. Check the deployment status of the application.

kubectl get app web-demo -n demo

Expected output (running means the application is running normally):

    NAME       COMPONENT    TYPE          PHASE     HEALTHY   STATUS   AGE
    web-demo   deployment   ref-objects   running   true               47h

10. Check the running status of the application in each cluster.

kubectl amc get deployment web-demo -n demo -m all

Expected output:

    Run on ManagedCluster managedcluster-cxxx (cluster1-hangzhou)
    NAME       READY   UP-TO-DATE   AVAILABLE   AGE
    web-demo   1/1     1            1           47h
    Run on ManagedCluster managedcluster-cxxx (cluster2-beijing)
    NAME       READY   UP-TO-DATE   AVAILABLE   AGE
    web-demo   5/5     5            5           2d
    Run on ManagedCluster managedcluster-cxxx (cluster1-beijing)
    NAME       READY   UP-TO-DATE   AVAILABLE   AGE
    web-demo   5/5     5            5           47h

11. Check the Ingress status of the application in each cluster.

kubectl amc get ingress -n demo -m all

Expected result: the Ingress in each cluster is running normally and has been assigned a public IP address.

    Run on ManagedCluster managedcluster-cxxx (cluster1-hangzhou)
    NAME       CLASS   HOSTS                  ADDRESS           PORTS   AGE
    web-demo   nginx   web-demo.example.com   47.xxx.xxx.xxx    80      47h
    Run on ManagedCluster managedcluster-cxxx (cluster2-beijing)
    NAME       CLASS   HOSTS                  ADDRESS           PORTS   AGE
    web-demo   nginx   web-demo.example.com   123.xxx.xxx.xxx   80      2d
    Run on ManagedCluster managedcluster-cxxx (cluster1-beijing)
    NAME       CLASS   HOSTS                  ADDRESS           PORTS   AGE
    web-demo   nginx   web-demo.example.com   182.xxx.xxx.xxx   80      2d
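The staged rollout used in the steps above (deploy to the first cluster, pause for manual approval, then continue) can be sketched as a small state machine. This is a simplified Python model for illustration only; the `Workflow` class and its methods are hypothetical and do not reflect how ACK One implements workflows internally.

```python
class Workflow:
    """Toy model of a sequential rollout with manual-approval gates."""

    def __init__(self, steps):
        # Each step is (cluster_name, auto); auto=False means "wait for approval first".
        self.steps = steps
        self.index = 0
        self.deployed = []
        self.phase = "initializing"
        self._approved = False

    def run(self):
        """Execute steps in order until one needs approval or all are done."""
        while self.index < len(self.steps):
            cluster, auto = self.steps[self.index]
            if not auto and not self._approved:
                self.phase = "workflowSuspending"
                return self.phase
            self.deployed.append(cluster)
            self.index += 1
            self._approved = False
        self.phase = "running"
        return self.phase

    def resume(self):
        """Approve the pending step and continue the rollout."""
        self._approved = True
        return self.run()


wf = Workflow([
    ("cluster1-beijing", True),
    ("cluster2-beijing", False),  # mirrors "auto: false" in the Workflow definition
    ("cluster1-hangzhou", True),
])
print(wf.run(), wf.deployed)
print(wf.resume(), wf.deployed)
```

The gate sits in front of the second cluster, so a faulty release is only ever exposed to one cluster before a person has looked at it.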

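Traffic Management

Configure Global Traffic Manager to automatically check the health of the application and, when an exception occurs, automatically switch traffic to a healthy cluster.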

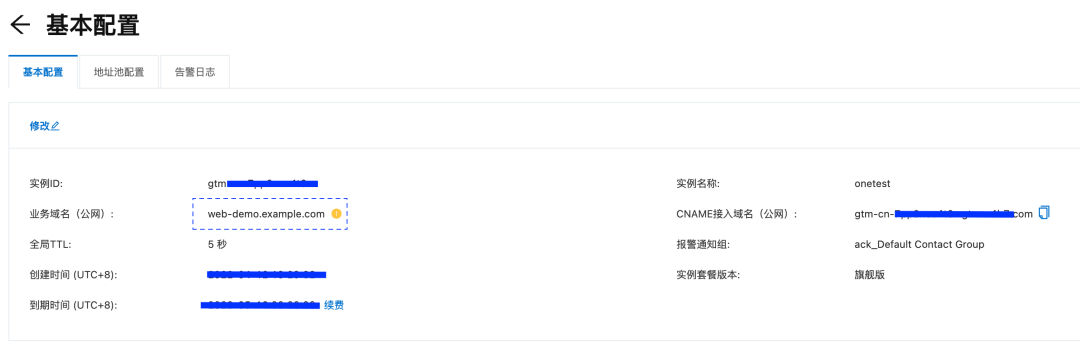

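1. Configure the Global Traffic Manager instance. web-demo.example.com is the domain name of the sample application; replace it with the domain name of your actual application, and point its DNS record to the CNAME access domain name of Global Traffic Manager.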

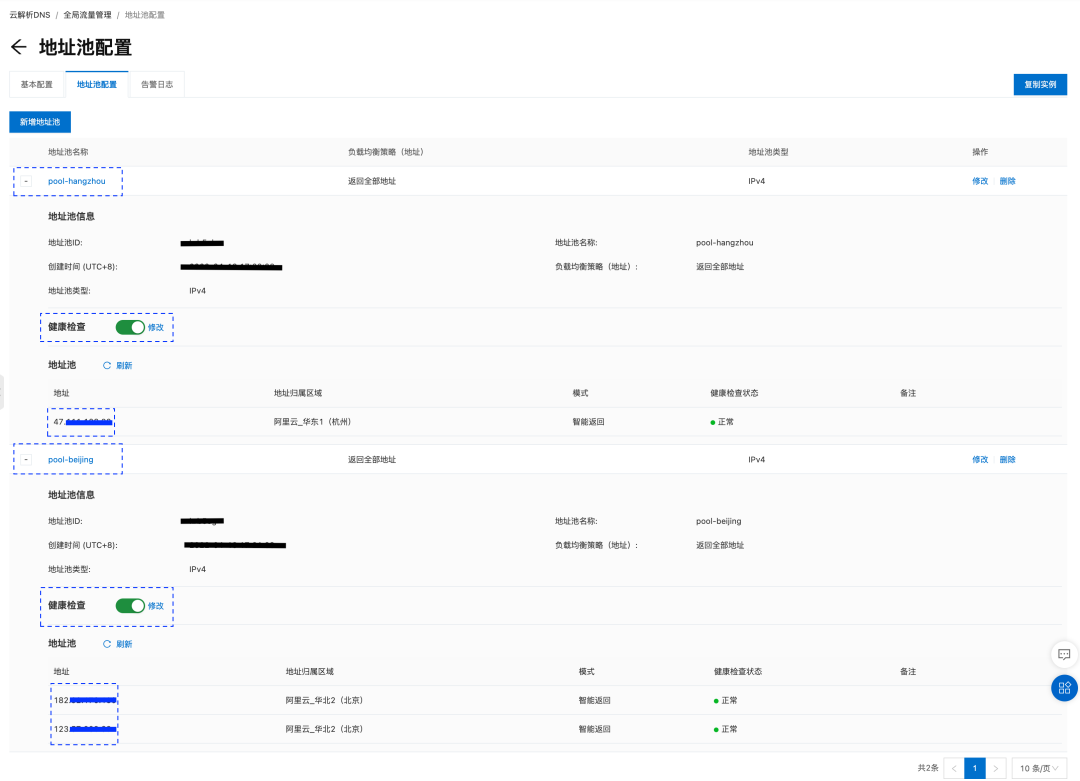

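2. In the GTM instance you created, create two address pools:

a. pool-beijing: contains the Ingress IP addresses of the two Beijing clusters. The load balancing policy is to return all addresses, which balances load across the two Beijing clusters. You can obtain the Ingress IP addresses by running "kubectl amc get ingress -n demo -m all" on the master instance.

b. pool-hangzhou: contains the Ingress IP address of the Hangzhou cluster.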
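Collecting the Ingress addresses for the two pools can be scripted. The sketch below parses text in the layout shown earlier for `kubectl amc get ingress -n demo -m all` and groups the addresses by the region suffix of each cluster alias. It is an illustration only: the helper names are hypothetical, the sample addresses are made-up documentation addresses (203.0.113.x) standing in for the masked ones, and the grouping rule assumes the alias naming used in this practice.

```python
import re

SAMPLE_OUTPUT = """\
Run on ManagedCluster managedcluster-cxxx (cluster1-hangzhou)
NAME       CLASS   HOSTS                  ADDRESS        PORTS   AGE
web-demo   nginx   web-demo.example.com   203.0.113.30   80      47h
Run on ManagedCluster managedcluster-cxxx (cluster2-beijing)
NAME       CLASS   HOSTS                  ADDRESS        PORTS   AGE
web-demo   nginx   web-demo.example.com   203.0.113.20   80      2d
Run on ManagedCluster managedcluster-cxxx (cluster1-beijing)
NAME       CLASS   HOSTS                  ADDRESS        PORTS   AGE
web-demo   nginx   web-demo.example.com   203.0.113.10   80      2d
"""

HEADER = re.compile(r"^Run on ManagedCluster \S+ \((?P<alias>[^)]+)\)$")
IPV4 = re.compile(r"^\d{1,3}(\.\d{1,3}){3}$")


def ingress_addresses(output):
    """Map each cluster alias to the ADDRESS column of its Ingress row."""
    addresses = {}
    alias = None
    for line in output.splitlines():
        match = HEADER.match(line.strip())
        if match:
            alias = match.group("alias")
            continue
        fields = line.split()
        # Keep only rows whose fourth column looks like an IPv4 address.
        if alias is not None and len(fields) >= 4 and IPV4.match(fields[3]):
            addresses[alias] = fields[3]
    return addresses


def build_pools(addresses):
    """Group addresses into pools named after the region suffix of the alias."""
    pools = {}
    for alias, ip in sorted(addresses.items()):
        region = alias.rsplit("-", 1)[-1]
        pools.setdefault("pool-" + region, []).append(ip)
    return pools


print(build_pools(ingress_addresses(SAMPLE_OUTPUT)))
```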

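3. Enable health checks on the address pools. Addresses that fail the health check are removed from the pool and no longer receive traffic.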

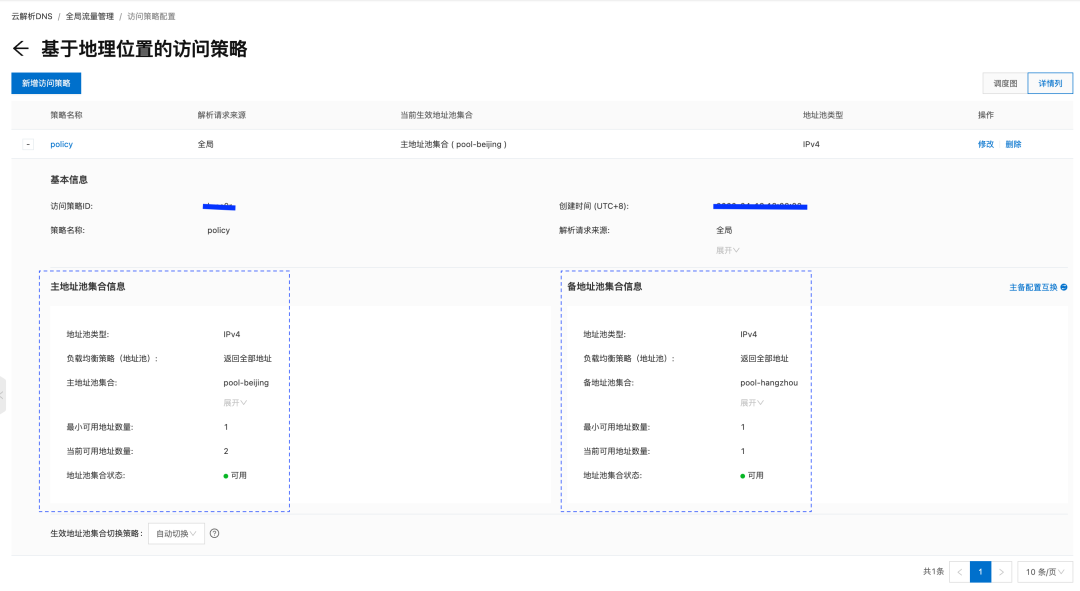

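4. Configure the access policy: set the Beijing address pool as the primary pool and the Hangzhou address pool as the backup pool. Under normal conditions, all traffic is handled by the applications in the Beijing clusters. When the applications in all Beijing clusters become unavailable, traffic automatically switches to the application in the Hangzhou cluster.

Deployment Verification

1. Under normal conditions, all traffic is handled by the applications on the two Beijing clusters, with each cluster handling 50% of the traffic.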
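The combined effect of health checks and the primary/backup access policy can be sketched in a few lines of Python. This models only the decision logic described above and is not how GTM is implemented; the `resolve` function is hypothetical and the addresses are made-up documentation addresses.

```python
# Address pools as configured above (sample addresses are placeholders).
POOLS = {
    "pool-beijing": ["203.0.113.10", "203.0.113.20"],
    "pool-hangzhou": ["203.0.113.30"],
}


def resolve(pools, healthy, primary="pool-beijing", backup="pool-hangzhou"):
    """Return (pool name, addresses) that clients would be directed to."""
    for name in (primary, backup):
        # Addresses that fail the health check are dropped from the pool.
        alive = [ip for ip in pools[name] if ip in healthy]
        if alive:
            return name, alive
    return None, []


all_ips = {ip for ips in POOLS.values() for ip in ips}
print(resolve(POOLS, all_ips))                     # both Beijing clusters healthy
print(resolve(POOLS, all_ips - {"203.0.113.10"}))  # one Beijing cluster down
print(resolve(POOLS, {"203.0.113.30"}))            # both Beijing clusters down
```

The backup pool is only consulted when the primary pool has no healthy address left, which matches the three scenarios exercised in the verification that follows.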

for i in {1..50}; do curl web-demo.example.com; sleep 3; done

    This is env cluster1-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }
    This is env cluster1-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }
    This is env cluster2-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }
    This is env cluster1-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }
    This is env cluster2-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }
    This is env cluster2-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }

2. When the application on cluster1-beijing becomes abnormal, GTM routes all traffic to cluster2-beijing.

for i in {1..50}; do curl web-demo.example.com; sleep 3; done

    ...
    <html>
    <head><title>503 Service Temporarily Unavailable</title></head>
    <body>
    <center><h1>503 Service Temporarily Unavailable</h1></center>
    <hr><center>nginx</center>
    </body>
    </html>
    This is env cluster2-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }
    This is env cluster2-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }
    This is env cluster2-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }
    This is env cluster2-beijing !
    Config file is {
      database-host: "beijing-db.pg.aliyun.com"
    }

3. When the applications on both cluster1-beijing and cluster2-beijing become abnormal at the same time, GTM routes traffic to cluster1-hangzhou.

for i in {1..50}; do curl web-demo.example.com; sleep 3; done

    <html>
    <head><title>503 Service Temporarily Unavailable</title></head>
    <body>
    <center><h1>503 Service Temporarily Unavailable</h1></center>
    <hr><center>nginx</center>
    </body>
    </html>
    <html>
    <head><title>503 Service Temporarily Unavailable</title></head>
    <body>
    <center><h1>503 Service Temporarily Unavailable</h1></center>
    <hr><center>nginx</center>
    </body>
    </html>
    This is env cluster1-hangzhou !
    Config file is {
      database-address: "hangzhou-db.pg.aliyun.com"
    }
    This is env cluster1-hangzhou !
    Config file is {
      database-address: "hangzhou-db.pg.aliyun.com"
    }
    This is env cluster1-hangzhou !
    Config file is {
      database-address: "hangzhou-db.pg.aliyun.com"
    }
    This is env cluster1-hangzhou !
    Config file is {
      database-address: "hangzhou-db.pg.aliyun.com"
    }
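When the curl loop runs for longer, counting responses by eye becomes tedious. The following Python sketch tallies responses per cluster from captured output, assuming the response format shown in the verification steps; the `tally` helper and the short sample text are illustrative and not part of the original practice.

```python
import re
from collections import Counter

# A short made-up capture in the same format as the output shown above.
SAMPLE = """\
This is env cluster1-beijing !
Config file is {
  database-host: "beijing-db.pg.aliyun.com"
}
<html>
<head><title>503 Service Temporarily Unavailable</title></head>
</html>
This is env cluster2-beijing !
Config file is {
  database-host: "beijing-db.pg.aliyun.com"
}
This is env cluster2-beijing !
Config file is {
  database-host: "beijing-db.pg.aliyun.com"
}
"""

ENV_LINE = re.compile(r"^This is env (\S+) !$")


def tally(output):
    """Count successful responses per cluster and 503 error pages."""
    counts = Counter()
    for line in output.splitlines():
        match = ENV_LINE.match(line.strip())
        if match:
            counts[match.group(1)] += 1
        elif "<title>503" in line:
            counts["503"] += 1
    return counts


print(dict(tally(SAMPLE)))
```

Saving the loop output to a file and passing its content to `tally` gives a quick view of how traffic was split and how many requests failed during a switchover.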

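Summary

This article showed how the multi-cluster application distribution feature of ACK One helps enterprises manage multi-cluster environments. The multi-cluster master instance provides a unified entry point for application delivery and supports distribution policies such as multi-cluster distribution, differentiated configuration, and workflow management. Combined with GTM (Global Traffic Manager), it lets you quickly build and manage a two-region, three-center disaster recovery system for applications.

Beyond multi-cluster application distribution, ACK One can connect to and manage Kubernetes clusters in any region and on any infrastructure. It provides consistent management and community-compatible APIs, and supports unified operations and control over compute, networking, storage, security, monitoring, logging, jobs, applications, and traffic. Alibaba Cloud Distributed Cloud Container Platform (ACK One) is an enterprise-grade cloud-native platform for hybrid cloud, multi-cluster, distributed computing, and disaster recovery scenarios. For more information, see the product introduction for Distributed Cloud Container Platform ACK One[8].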

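Related Links

[1] Enable the multi-cluster management master instance:

[2] Manage associated clusters:

[3] Create a GTM instance:

[4] Application distribution feature:

[5] Application replication and distribution:

[6] Differentiated configuration for application distribution:

[7] Canary distribution of applications across clusters:

[8] Distributed Cloud Container Platform ACK One:

This article is original content from Alibaba Cloud and may not be reproduced without permission.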