Quick Kubernetes Deployment with kubeadm (Part 19)

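Installation Requirements

Machines in the Kubernetes cluster must meet the following requirements:

- One or more machines running CentOS 7.x (x86_64)

- Hardware: at least 2 GB of RAM, 2 CPUs, and 30 GB of disk

- Full network connectivity between all machines in the cluster

- Internet access, which is needed to pull images

- Swap disabled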
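The CPU, memory, and swap requirements above can be checked with a small script before installing anything. The following is a minimal sketch, not part of the original procedure: the function names are hypothetical, and the memory threshold is deliberately set a little below 2 GiB because `MemTotal` excludes memory reserved by the kernel (an assumption you may want to tune).

```shell
# Hypothetical preflight helpers; thresholds mirror the requirements listed above.

# meets_minimum ACTUAL REQUIRED: succeeds when ACTUAL >= REQUIRED (integers).
meets_minimum() {
  [ "$1" -ge "$2" ]
}

# swap_is_off [FILE]: succeeds when FILE (default /proc/swaps) lists no swap area.
# The file always has one header line; every further line is an active swap area.
swap_is_off() {
  awk 'NR > 1 { found = 1 } END { exit found }' "${1:-/proc/swaps}"
}

# preflight_report: prints one line per check for the local machine.
preflight_report() {
  local cpus mem_kb
  cpus=$(nproc)
  mem_kb=$(awk '/^MemTotal:/ { print $2 }' /proc/meminfo)

  if meets_minimum "$cpus" 2; then echo "CPU: ok ($cpus)"; else echo "CPU: need 2, found $cpus"; fi
  # 1700 MiB in kB: an assumed tolerance below the nominal 2 GB.
  if meets_minimum "$mem_kb" $((1700 * 1024)); then echo "RAM: ok"; else echo "RAM: too low (${mem_kb} kB)"; fi
  if swap_is_off; then echo "swap: off"; else echo "swap: still enabled"; fi
}
```

Calling `preflight_report` on each machine prints the result of the three checks; disk size and network connectivity are not covered by this sketch.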

Environment Preparation

1. Install Docker and kubeadm on all nodes

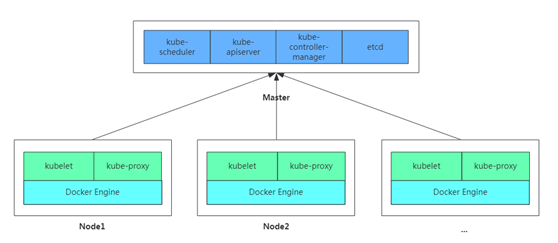

2. Deploy the Kubernetes master

3. Deploy the container network plugin

4. Deploy the Kubernetes nodes and join them to the cluster

5. Deploy the Dashboard web UI to view Kubernetes resources visually

    # Map hostnames to IP addresses (remember to set each machine's hostname to match)
    cat << EOF >> /etc/hosts
    192.168.0.122 k8s-master
    192.168.0.123 k8s-node01
    192.168.0.124 k8s-node02
    EOF

    # Disable the firewall
    systemctl stop firewalld
    systemctl disable firewalld
    iptables -F && iptables -X && iptables -F -t nat && iptables -X -t nat && iptables -P FORWARD ACCEPT

    # Disable SELinux (setenforce applies now, the sed edits apply after reboot)
    setenforce 0
    sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/sysconfig/selinux
    sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config
    sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/sysconfig/selinux
    sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/selinux/config
    getenforce

    # Disable swap now, and comment out swap entries in /etc/fstab so it stays off
    swapoff -a
    sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

    # Install IPVS-related packages
    yum install -y epel-release conntrack ipvsadm ipset jq sysstat curl iptables libseccomp

    # Load kernel modules
    modprobe br_netfilter
    modprobe ip_vs
    modprobe ip_vs_rr
    modprobe ip_vs_wrr
    modprobe ip_vs_sh
    modprobe nf_conntrack_ipv4

    # Load the same modules again on every boot
    cat > /etc/sysconfig/modules/ipvs.modules <<EOF
    #!/bin/bash
    modprobe -- ip_vs
    modprobe -- ip_vs_rr
    modprobe -- ip_vs_wrr
    modprobe -- ip_vs_sh
    modprobe -- nf_conntrack_ipv4
    modprobe -- br_netfilter
    EOF
    chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules

    # Confirm the modules are loaded
    lsmod | grep -e ip_vs -e nf_conntrack_ipv4

    # Set kernel parameters
    cat << EOF | tee /etc/sysctl.d/k8s.conf
    fs.file-max = 1000000
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    net.ipv4.ip_forward = 1
    vm.swappiness = 0
    net.ipv4.tcp_max_tw_buckets = 6000
    net.ipv4.tcp_sack = 1
    net.ipv4.tcp_window_scaling = 1
    net.ipv4.tcp_rmem = 4096 87380 4194304
    net.ipv4.tcp_wmem = 4096 16384 4194304
    net.ipv4.tcp_max_syn_backlog = 16384
    net.core.netdev_max_backlog = 32768
    net.core.somaxconn = 32768
    net.core.wmem_default = 8388608
    net.core.rmem_default = 8388608
    net.core.rmem_max = 16777216
    net.core.wmem_max = 16777216
    net.ipv4.tcp_timestamps = 1
    net.ipv4.tcp_fin_timeout = 20
    net.ipv4.tcp_synack_retries = 2
    net.ipv4.tcp_syn_retries = 2
    net.ipv4.tcp_syncookies = 1
    net.ipv4.tcp_tw_reuse = 1
    net.ipv4.tcp_mem = 94500000 915000000 927000000
    net.ipv4.tcp_max_orphans = 3276800
    net.ipv4.ip_local_port_range = 1024 65000
    net.nf_conntrack_max = 6553500
    net.netfilter.nf_conntrack_max = 6553500
    net.netfilter.nf_conntrack_tcp_timeout_close_wait = 60
    net.netfilter.nf_conntrack_tcp_timeout_fin_wait = 120
    net.netfilter.nf_conntrack_tcp_timeout_time_wait = 120
    net.netfilter.nf_conntrack_tcp_timeout_established = 3600
    EOF
    sysctl --system
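A long sysctl file like the one above is easy to get subtly wrong, for example by listing the same key twice so that the later value silently wins. The helper below is a small sketch for spotting such repeats; the function name `duplicate_sysctl_keys` is hypothetical and the default path is the file written above.

```shell
# duplicate_sysctl_keys [FILE]: prints every key that is assigned more than once.
# Comment lines (# or ;) and blank lines are skipped.
duplicate_sysctl_keys() {
  awk -F= '
    /^[ \t]*[#;]/ { next }
    /^[ \t]*$/    { next }
    {
      key = $1
      gsub(/[ \t]/, "", key)            # trim whitespace around the key
      if (++seen[key] == 2) print key   # report each repeated key once
    }
  ' "${1:-/etc/sysctl.d/k8s.conf}"
}
```

Running `duplicate_sysctl_keys` with no arguments checks `/etc/sysctl.d/k8s.conf`; empty output means no key is repeated. Individual values can then be confirmed with `sysctl -n`, for example `sysctl -n net.ipv4.ip_forward`.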

Install Docker

    yum install -y yum-utils device-mapper-persistent-data lvm2
    yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
    yum makecache fast
    yum install -y docker-ce

    systemctl enable docker

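Configuring an Alibaba Cloud registry mirror for Docker is recommended.

After installation, add a command that runs whenever Docker starts; otherwise Docker sets the default policy of the iptables FORWARD chain to DROP.

In addition, kubeadm recommends systemd as the cgroup driver, so daemon.json has to be modified as well.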

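    # Reset the FORWARD policy to ACCEPT each time Docker starts.
    # "13i" inserts at line 13 of the unit file, which is assumed to fall inside the [Service] section.
    sed -i "13i ExecStartPost=/usr/sbin/iptables -P FORWARD ACCEPT" /usr/lib/systemd/system/docker.service

    # Registry mirror, systemd cgroup driver, log rotation, and overlay2 storage
    tee /etc/docker/daemon.json <<-'EOF'
    {
      "registry-mirrors": ["https://bk6kzfqm.mirror.aliyuncs.com"],
      "exec-opts": ["native.cgroupdriver=systemd"],
      "log-driver": "json-file",
      "log-opts": {
        "max-size": "100m"
      },
      "storage-driver": "overlay2",
      "storage-opts": [
        "overlay2.override_kernel_check=true"
      ]
    }
    EOF

    systemctl daemon-reload
    systemctl restart docker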
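After restarting Docker it is worth confirming that the cgroup driver written to daemon.json is the one the daemon actually uses. A minimal sketch follows; both function names are hypothetical, and the text-based extraction assumes the `native.cgroupdriver=...` form shown above.

```shell
# configured_cgroup_driver [FILE]: prints the driver named in daemon.json.
configured_cgroup_driver() {
  grep -o 'native\.cgroupdriver=[a-z]*' "${1:-/etc/docker/daemon.json}" | cut -d= -f2
}

# running_cgroup_driver: prints the driver reported by the running Docker daemon.
running_cgroup_driver() {
  docker info --format '{{.CgroupDriver}}'
}

# cgroup_driver_matches: succeeds when configuration and daemon agree.
cgroup_driver_matches() {
  [ "$(configured_cgroup_driver)" = "$(running_cgroup_driver)" ]
}
```

On a correctly configured node, `cgroup_driver_matches && echo ok` prints `ok`. The FORWARD policy can be checked separately with `iptables -S FORWARD | head -n 1`.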

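Install kubeadm, kubelet, and kubectl

    # Add the Alibaba Cloud YUM repository
    cat > /etc/yum.repos.d/kubernetes.repo << EOF
    [kubernetes]
    name=Kubernetes
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
    enabled=1
    gpgcheck=1
    repo_gpgcheck=1
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    EOF

    # Install the packages, pinned to the v1.15.0 release used by kubeadm init below.
    # An unpinned install pulls the latest packages, which may not match that version.
    yum install -y kubelet-1.15.0 kubeadm-1.15.0 kubectl-1.15.0

    # Start kubelet on boot
    systemctl enable kubelet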

Deploy the Kubernetes Master

The default image registry, k8s.gcr.io, is not reachable from mainland China, so the Alibaba Cloud image repository is specified instead.

    # Initialize the master
    kubeadm init \
      --apiserver-advertise-address=192.168.0.122 \
      --image-repository registry.aliyuncs.com/google_containers \
      --kubernetes-version v1.15.0 \
      --service-cidr=10.1.0.0/16 \
      --pod-network-cidr=10.244.0.0/16

    # Set up kubectl for the current user
    mkdir -p $HOME/.kube
    sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    sudo chown $(id -u):$(id -g) $HOME/.kube/config
    kubectl get cs
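The flags passed to kubeadm init above can also be kept in a configuration file, which is easier to review and keep under version control. The sketch below writes such a file; the function name `write_kubeadm_config` is hypothetical, and `kubeadm.k8s.io/v1beta2` is assumed to be the config API version that matches kubeadm v1.15 (compare with the output of `kubeadm config print init-defaults` on your installation).

```shell
# write_kubeadm_config PATH: writes a kubeadm config mirroring the init flags above.
write_kubeadm_config() {
  cat > "$1" << 'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.0.122
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.15.0
imageRepository: registry.aliyuncs.com/google_containers
networking:
  serviceSubnet: 10.1.0.0/16
  podSubnet: 10.244.0.0/16
EOF
}
```

A possible usage is `write_kubeadm_config kubeadm-config.yaml` followed by `kubeadm init --config kubeadm-config.yaml`, in place of the flag-based command rather than in addition to it.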

Install the Pod Network Plugin (CNI)

This guide uses canal.

    [root@k8s-master ~]# kubectl apply -f https://docs.projectcalico.org/v3.3/getting-started/kubernetes/installation/hosted/canal/rbac.yaml
    clusterrole.rbac.authorization.k8s.io/calico created
    clusterrole.rbac.authorization.k8s.io/flannel created
    clusterrolebinding.rbac.authorization.k8s.io/canal-flannel created
    clusterrolebinding.rbac.authorization.k8s.io/canal-calico created

    [root@k8s-master ~]# kubectl apply -f https://docs.projectcalico.org/v3.3/getting-started/kubernetes/installation/hosted/canal/canal.yaml
    configmap/canal-config created
    daemonset.extensions/canal created
    serviceaccount/canal created
    customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

    [root@k8s-master ~]# kubectl get pod -n kube-system -o wide
    NAME                                 READY   STATUS    RESTARTS   AGE     IP              NODE         NOMINATED NODE   READINESS GATES
    canal-626dm                          3/3     Running   0          4m20s   192.168.0.122   k8s-master   <none>           <none>
    coredns-bccdc95cf-b7cvx              1/1     Running   0          27m     10.244.0.3      k8s-master   <none>           <none>
    coredns-bccdc95cf-wzd2t              1/1     Running   0          27m     10.244.0.2      k8s-master   <none>           <none>
    etcd-k8s-master                      1/1     Running   0          26m     192.168.0.122   k8s-master   <none>           <none>
    kube-apiserver-k8s-master            1/1     Running   0          27m     192.168.0.122   k8s-master   <none>           <none>
    kube-controller-manager-k8s-master   1/1     Running   1          27m     192.168.0.122   k8s-master   <none>           <none>
    kube-proxy-vbsch                     1/1     Running   0          27m     192.168.0.122   k8s-master   <none>           <none>
    kube-scheduler-k8s-master            1/1     Running   1          27m     192.168.0.122   k8s-master   <none>           <none>

Join Kubernetes Nodes

To add a new node to the cluster, run on that node the kubeadm join command printed by kubeadm init:

    [root@k8s-node02 ~]# kubeadm join 192.168.0.122:6443 --token z11w4p.ztixn53mzj0jcl17 \
        --discovery-token-ca-cert-hash sha256:4a59c419b68c15908be2773e7c610f4bf514cb7188aee4fcc5edbe46f9459987

    [root@k8s-master ~]# kubectl get nodes
    NAME         STATUS   ROLES    AGE   VERSION
    k8s-master   Ready    master   28m   v1.15.0
    k8s-node01   Ready    <none>   17m   v1.15.0
    k8s-node02   Ready    <none>   16m   v1.15.0
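The join command depends on two values copied from the kubeadm init output, and a truncated copy is a common cause of join failures. The helpers below sketch a quick shape check before running the command; the function names are hypothetical, and the patterns only validate the format, not whether the cluster accepts the values.

```shell
# is_bootstrap_token TOKEN: succeeds when TOKEN looks like a kubeadm bootstrap token,
# i.e. 6 lowercase alphanumerics, a dot, then 16 lowercase alphanumerics.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# is_ca_cert_hash VALUE: succeeds for "sha256:" followed by 64 hexadecimal digits.
is_ca_cert_hash() {
  printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}
```

Bootstrap tokens expire (after 24 hours by default). If the original token is no longer valid, running `kubeadm token create --print-join-command` on the master prints a fresh join command.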

Enable IPVS for kube-proxy

    # Export the kube-proxy ConfigMap, switch the mode to ipvs, and apply it
    kubectl get configmap kube-proxy -n kube-system -o yaml > kube-proxy-configmap.yaml
    sed -i 's/mode: ""/mode: "ipvs"/' kube-proxy-configmap.yaml
    kubectl apply -f kube-proxy-configmap.yaml
    rm -f kube-proxy-configmap.yaml

    # Delete the kube-proxy pods so they are recreated with the new mode
    kubectl get pod -n kube-system | grep kube-proxy | awk '{system("kubectl delete pod "$1" -n kube-system")}'
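The mode switch above is a single text substitution, so it can also be written as a filter and used in a pipeline without a temporary file. This is a sketch with a hypothetical function name; like the original sed command, it only acts when the mode is still the empty default.

```shell
# set_proxy_mode_ipvs: reads a kube-proxy ConfigMap on stdin and writes it to stdout
# with an empty proxy mode replaced by "ipvs". Other modes are left untouched.
set_proxy_mode_ipvs() {
  sed 's/mode: ""/mode: "ipvs"/'
}

# Possible usage on the master (not executed here):
#   kubectl get configmap kube-proxy -n kube-system -o yaml \
#     | set_proxy_mode_ipvs \
#     | kubectl apply -f -
```

Once the kube-proxy pods have been recreated, `ipvsadm -Ln` on a node should list virtual servers for the cluster's services, which indicates that IPVS mode is active.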

Test the Kubernetes Cluster

    [root@k8s-master ~]# kubectl create deployment nginx --image=nginx
    deployment.apps/nginx created
    [root@k8s-master ~]# kubectl expose deployment nginx --port=80 --type=NodePort
    service/nginx exposed
    [root@k8s-master ~]# kubectl get pod,svc -o wide
    NAME                         READY   STATUS    RESTARTS   AGE   IP           NODE         NOMINATED NODE   READINESS GATES
    pod/nginx-554b9c67f9-6x7vl   1/1     Running   0          67m   10.244.1.2   k8s-node01   <none>           <none>

    NAME                 TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE     SELECTOR
    service/kubernetes   ClusterIP   10.1.0.1       <none>        443/TCP        3h12m   <none>
    service/nginx        NodePort    10.1.116.158   <none>        80:31509/TCP   67m     app=nginx

Access URL: http://NodeIP:Port, where Port is the NodePort assigned to the service (31509 in the output above).
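The port to use is the second number in the PORT(S) column of the service listing. As an illustration, the hypothetical helpers below extract it from a value such as `80:31509/TCP` and build the URL for a given node IP.

```shell
# node_port_from_ports PORTS: prints the NodePort from a PORT(S) value like "80:31509/TCP".
node_port_from_ports() {
  local ports="$1"
  ports="${ports#*:}"            # drop the service port and the colon
  printf '%s\n' "${ports%%/*}"   # drop the protocol suffix
}

# service_url NODE_IP PORTS: prints the HTTP URL for a NodePort service.
service_url() {
  printf 'http://%s:%s\n' "$1" "$(node_port_from_ports "$2")"
}
```

For example, `curl -I "$(service_url 192.168.0.123 80:31509/TCP)"` requests the nginx welcome page through one of the nodes. The port can also be read directly with `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'`.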

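Deploy the Dashboard (UI)

    kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v1.10.1/src/deploy/recommended/kubernetes-dashboard.yaml

The default image is not reachable from mainland China. Download the manifest and, before applying it, change the image to: registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.10.1

By default the Dashboard is reachable only from inside the cluster. Change its Service to type NodePort to expose it externally: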
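The image change can be scripted rather than edited by hand. The filter below is a sketch that assumes the upstream manifest references the image as `k8s.gcr.io/kubernetes-dashboard-amd64`; the function name is hypothetical.

```shell
# use_mirror_image: reads the Dashboard manifest on stdin and writes it to stdout
# with the k8s.gcr.io image replaced by the Alibaba Cloud mirror. The tag is kept.
use_mirror_image() {
  sed 's#k8s\.gcr\.io/kubernetes-dashboard-amd64#registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64#'
}

# Possible usage (not executed here):
#   curl -fsSL https://raw.githubusercontent.com/kubernetes/dashboard/v1.10.1/src/deploy/recommended/kubernetes-dashboard.yaml \
#     | use_mirror_image > kubernetes-dashboard.yaml
```

The resulting kubernetes-dashboard.yaml can then be edited further and applied with `kubectl apply -f kubernetes-dashboard.yaml`.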

    kind: Service
    apiVersion: v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kube-system
    spec:
      type: NodePort
      ports:
        - port: 443
          targetPort: 8443
          nodePort: 30001
      selector:
        k8s-app: kubernetes-dashboard

Access URL: https://NodeIP:30001
