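MySQL: MHA High Availability and Read/Write Splitting

MHA High Availability

1. MHA introduction and how it works

MHA (Master High Availability) was developed by Yoshinori Matsunobu at the Japanese company DeNA (he later joined Facebook). MHA can complete a database failover automatically within 10 to 30 seconds, and it preserves data consistency as far as possible during the failover.

The software has two parts: MHA Manager (the management node) and MHA Node (the data node).

Semi-synchronous replication greatly reduces the risk of data loss, and MHA can be combined with it.

An MHA cluster requires three database servers in one replication group: one master and two slaves. To save on hardware cost, Taobao built a modified version on top of MHA, TMHA, which supports one master and one slave.

Note: each node must be a standalone database server; multi-instance setups are not supported.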

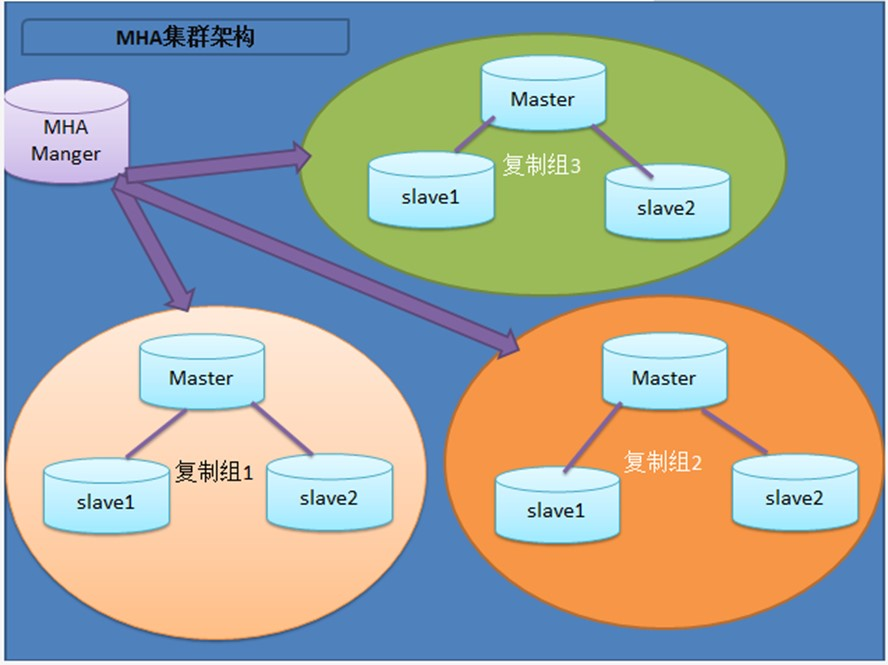

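2. MHA enterprise architecture diagram

3. MHA software components

The Manager package mainly includes the following tools:

- masterha_check_ssh: checks the SSH configuration used by MHA

- masterha_check_repl: checks the MySQL replication status

- masterha_manager: starts MHA

- masterha_check_status: reports the current MHA running state

- masterha_master_monitor: detects whether the master is down

- masterha_conf_host: adds or removes server entries in the configuration

- masterha_master_switch: controls failover (automatic or manual)

The Node package mainly includes the following tools (they are normally invoked by MHA Manager scripts and need no manual operation):

- save_binary_logs: saves and copies the master's binary logs

- apply_diff_relay_logs: identifies differential relay log events and applies them to the other slaves

- filter_mysqlbinlog: strips unnecessary ROLLBACK events (MHA no longer uses this tool)

- purge_relay_logs: purges relay logs (without blocking the SQL thread)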

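4. How MHA works

1. The Manager monitors all known nodes (the master and both slaves).

2. When the master goes down unexpectedly:

2.1 The MySQL instance has failed (the host is still reachable over SSH)

1. The Manager detects that the master is down and picks a new master (its slave role is cleared with reset slave). Selection rule: the slave with the most up-to-date data is chosen, judged from show slave status\G.

2. Using the scripts shipped with MHA, the slaves immediately save the part of the binlog they are missing.

3. The second slave rebuilds replication against the new master and service continues.

4. If a VIP mechanism is configured, the VIP moves from the old master to the new master, so the application does not notice the switch.

2.2 The master host has crashed (SSH is no longer reachable)

1. The Manager detects that the master is down, tries to connect over SSH, and the attempt fails.

2. It picks the slave with the most up-to-date data as the new master (its slave role is cleared with reset slave), judged from show slave status\G.

3. It computes the relay log difference between the slaves and applies the missing events to the second slave.

4. The second slave rebuilds replication against the new master and service continues.

5. If a VIP mechanism is configured, the VIP moves from the old master to the new master, so the application does not notice the switch.

6. If a binlog server is configured, the transactions still missing on the new master are then replayed onto it from the binlog server's records.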
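The selection rule described above (prefer the slave with the newest data, while respecting per-node flags such as candidate_master and no_master) can be sketched in Python. This is only an illustration under simplifying assumptions: the Slave class and pick_new_master are hypothetical names, positions are compared as (file, offset) pairs read from show slave status, and MHA's real logic checks more conditions than this.

```python
from dataclasses import dataclass


@dataclass
class Slave:
    host: str
    master_log_file: str        # Master_Log_File from SHOW SLAVE STATUS
    read_master_log_pos: int    # Read_Master_Log_Pos from SHOW SLAVE STATUS
    candidate_master: bool = False
    no_master: bool = False


def pick_new_master(slaves):
    """Return the slave to promote under the simplified rule described above."""
    eligible = [s for s in slaves if not s.no_master]
    if not eligible:
        raise ValueError("no slave is eligible for promotion")
    # Slaves flagged candidate_master are preferred over the rest.
    preferred = [s for s in eligible if s.candidate_master] or eligible
    # Assumption: binlog file names share a fixed-width numeric suffix,
    # so comparing them as strings orders them correctly.
    return max(preferred, key=lambda s: (s.master_log_file, s.read_master_log_pos))
```

With two slaves at offsets 805 and 600 in the same binlog file, the first is chosen; setting candidate_master on the second makes it win despite holding older data.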

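5. MHA setup (performed on an existing GTID replication environment)

5.1 Add the following to /etc/my.cnf (on every node)

relay_log_purge=0 # keep MySQL relay logs instead of purging them automatically

Every node also needs name resolution for the other nodes (for example in /etc/hosts):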

172.16.1.51 db01

172.16.1.52 db02

172.16.1.53 db03

5.2 Install MHA Node on every node

MHA download page: https://github.com/yoshinorim/mha4mysql-manager/releases

The mha-node package must be installed on every node:

yum -y install mha4mysql-node-0.58-0.el7.centos.noarch.rpm

5.3 Create the MHA management user on the master

grant all privileges on *.* to mha@'172.16.1.%' identified by 'mha';

5.4 Create symlinks for the MySQL client tools

ln -s /application/mysql/bin/mysqlbinlog /usr/bin/mysqlbinlog

ln -s /application/mysql/bin/mysql /usr/bin/mysql

5.5 Deploy the manager node (in production a separate server is usually used as the manager node; db03 is used here)

yum -y install mha4mysql-manager-0.58-0.el7.centos.noarch.rpm

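5.6 Create the manager directories and configuration file

mkdir -p /etc/mha

mkdir -p /var/log/mha/app1 # one directory per application, so one manager can handle several replication groups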

vim /etc/mha/app1.cnf

[server default]

manager_log=/var/log/mha/app1/manager

manager_workdir=/var/log/mha/app1

master_binlog_dir=/data/binlog

user=mha

password=mha

ping_interval=2

repl_password=123

repl_user=repl

ssh_user=root

[server1]

hostname=172.16.1.51

port=3306

[server2]

hostname=172.16.1.52

port=3306

[server3]

hostname=172.16.1.53

port=3306

5.7 Set up passwordless SSH between all nodes (run on every node)

ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa >/dev/null 2>&1

ssh-copy-id -i /root/.ssh/id_dsa.pub root@172.16.1.51

ssh-copy-id -i /root/.ssh/id_dsa.pub root@172.16.1.52

ssh-copy-id -i /root/.ssh/id_dsa.pub root@172.16.1.53

5.8 Check SSH trust

[root@db03 tools]# masterha_check_ssh --conf=/etc/mha/app1.cnf

Tue Apr 30 20:04:52 2019 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping.

Tue Apr 30 20:04:52 2019 - [info] Reading application default configuration from /etc/mha/app1.cnf..

Tue Apr 30 20:04:52 2019 - [info] Reading server configuration from /etc/mha/app1.cnf..

Tue Apr 30 20:04:52 2019 - [info] Starting SSH connection tests..

Tue Apr 30 20:04:53 2019 - [debug]

Tue Apr 30 20:04:52 2019 - [debug] Connecting via SSH from root@172.16.1.51(172.16.1.51:22) to root@172.16.1.52(172.16.1.52:22)..

Tue Apr 30 20:04:52 2019 - [debug] ok.

Tue Apr 30 20:04:52 2019 - [debug] Connecting via SSH from root@172.16.1.51(172.16.1.51:22) to root@172.16.1.53(172.16.1.53:22)..

Tue Apr 30 20:04:53 2019 - [debug] ok.

Tue Apr 30 20:04:53 2019 - [debug]

Tue Apr 30 20:04:52 2019 - [debug] Connecting via SSH from root@172.16.1.52(172.16.1.52:22) to root@172.16.1.51(172.16.1.51:22)..

Tue Apr 30 20:04:53 2019 - [debug] ok.

Tue Apr 30 20:04:53 2019 - [debug] Connecting via SSH from root@172.16.1.52(172.16.1.52:22) to root@172.16.1.53(172.16.1.53:22)..

Tue Apr 30 20:04:53 2019 - [debug] ok.

Tue Apr 30 20:04:53 2019 - [error][/usr/share/perl5/vendor_perl/MHA/SSHCheck.pm, ln63]

Tue Apr 30 20:04:53 2019 - [debug] Connecting via SSH from root@172.16.1.53(172.16.1.53:22) to root@172.16.1.51(172.16.1.51:22)..

Warning: Permanently added '172.16.1.53' (ECDSA) to the list of known hosts.

Permission denied (publickey,password).

Tue Apr 30 20:04:53 2019 - [error][/usr/share/perl5/vendor_perl/MHA/SSHCheck.pm, ln111] SSH connection from root@172.16.1.53(172.16.1.53:22) to root@172.16.1.51(172.16.1.51:22) failed!

SSH Configuration Check Failed!

 at /usr/bin/masterha_check_ssh line 44.

[root@db03 tools]#
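The sample output above ends in a failure: the connection from 172.16.1.53 to 172.16.1.51 is rejected with Permission denied, so that key still has to be copied before the check can pass. When the output is long, a small Python helper can list the failing pairs. This is a sketch only: failed_ssh_pairs is a hypothetical name, and the pattern assumes the "SSH connection from ... to ... failed" wording seen in this log.

```python
import re

# Matches lines such as:
#   SSH connection from root@A(A:22) to root@B(B:22) failed!
FAILED = re.compile(
    r"SSH connection from\s+(\S+?)\(\S+?\)\s+to\s+(\S+?)\(\S+?\)\s+failed"
)


def failed_ssh_pairs(log_text):
    """Return (source, destination) pairs whose SSH check failed."""
    return [(m.group(1), m.group(2)) for m in FAILED.finditer(log_text)]
```

Each returned pair names the direction in which ssh-copy-id still has to be run.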

5.9 Check replication

[root@db03 tools]# masterha_check_repl --conf=/etc/mha/app1.cnf

Tue Apr 30 20:05:38 2019 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping.

Tue Apr 30 20:05:38 2019 - [info] Reading application default configuration from /etc/mha/app1.cnf..

Tue Apr 30 20:05:38 2019 - [info] Reading server configuration from /etc/mha/app1.cnf..

Tue Apr 30 20:05:38 2019 - [info] MHA::MasterMonitor version 0.58.

Tue Apr 30 20:05:40 2019 - [info] GTID failover mode = 1

Tue Apr 30 20:05:40 2019 - [info] Dead Servers:

Tue Apr 30 20:05:40 2019 - [info] Alive Servers:

Tue Apr 30 20:05:40 2019 - [info] 172.16.1.51(172.16.1.51:3306)

Tue Apr 30 20:05:40 2019 - [info] 172.16.1.52(172.16.1.52:3306)

Tue Apr 30 20:05:40 2019 - [info] 172.16.1.53(172.16.1.53:3306)

Tue Apr 30 20:05:40 2019 - [info] Alive Slaves:

Tue Apr 30 20:05:40 2019 - [info] 172.16.1.52(172.16.1.52:3306) Version=5.6.43-log (oldest major version between slaves) log-bin:enabled

Tue Apr 30 20:05:40 2019 - [info] GTID ON

Tue Apr 30 20:05:40 2019 - [info] Replicating from 172.16.1.51(172.16.1.51:3306)

Tue Apr 30 20:05:40 2019 - [info] 172.16.1.53(172.16.1.53:3306) Version=5.6.43-log (oldest major version between slaves) log-bin:enabled

Tue Apr 30 20:05:40 2019 - [info] GTID ON

Tue Apr 30 20:05:40 2019 - [info] Replicating from 172.16.1.51(172.16.1.51:3306)

Tue Apr 30 20:05:40 2019 - [info] Current Alive Master: 172.16.1.51(172.16.1.51:3306)

Tue Apr 30 20:05:40 2019 - [info] Checking slave configurations..

Tue Apr 30 20:05:40 2019 - [info] read_only=1 is not set on slave 172.16.1.52(172.16.1.52:3306).

Tue Apr 30 20:05:40 2019 - [info] read_only=1 is not set on slave 172.16.1.53(172.16.1.53:3306).

Tue Apr 30 20:05:40 2019 - [info] Checking replication filtering settings..

Tue Apr 30 20:05:40 2019 - [info] binlog_do_db= , binlog_ignore_db=

Tue Apr 30 20:05:40 2019 - [info] Replication filtering check ok.

Tue Apr 30 20:05:40 2019 - [info] GTID (with auto-pos) is supported. Skipping all SSH and Node package checking.

Tue Apr 30 20:05:40 2019 - [info] Checking SSH publickey authentication settings on the current master..

Tue Apr 30 20:05:40 2019 - [info] HealthCheck: SSH to 172.16.1.51 is reachable.

Tue Apr 30 20:05:40 2019 - [info]

172.16.1.51(172.16.1.51:3306) (current master)

+--172.16.1.52(172.16.1.52:3306)

+--172.16.1.53(172.16.1.53:3306)

Tue Apr 30 20:05:40 2019 - [info] Checking replication health on 172.16.1.52..

Tue Apr 30 20:05:40 2019 - [info] ok.

Tue Apr 30 20:05:40 2019 - [info] Checking replication health on 172.16.1.53..

Tue Apr 30 20:05:40 2019 - [info] ok.

Tue Apr 30 20:05:40 2019 - [warning] master_ip_failover_script is not defined.

Tue Apr 30 20:05:40 2019 - [warning] shutdown_script is not defined.

Tue Apr 30 20:05:40 2019 - [info] Got exit code 0 (Not master dead).

MySQL Replication Health is OK.

[root@db03 tools]#

5.10 Start MHA

nohup masterha_manager --conf=/etc/mha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null > /var/log/mha/app1/manager.log 2>&1 &

6. Failover drill

6.1 Stop the master (db01)

[root@db01 tools]# /etc/init.d/mysqld stop

Shutting down MySQL...... SUCCESS!

6.2 Check the MHA log

[root@db03 ~]# cat /var/log/mha/app1/manager

......

CHANGE MASTER TO MASTER_HOST='172.16.1.52', MASTER_PORT=3306, MASTER_AUTO_POSITION=1, MASTER_USER='repl', MASTER_PASSWORD='xxx'; # this is the statement for rejoining the failed node later

......

----- Failover Report -----

app1: MySQL Master failover 172.16.1.51(172.16.1.51:3306) to 172.16.1.52(172.16.1.52:3306) succeeded

Master 172.16.1.51(172.16.1.51:3306) is down!

Check MHA Manager logs at db03:/var/log/mha/app1/manager for details.

Started automated(non-interactive) failover.

Selected 172.16.1.52(172.16.1.52:3306) as a new master.

172.16.1.52(172.16.1.52:3306): OK: Applying all logs succeeded.

172.16.1.53(172.16.1.53:3306): OK: Slave started, replicating from 172.16.1.52(172.16.1.52:3306)

172.16.1.52(172.16.1.52:3306): Resetting slave info succeeded.

Master failover to 172.16.1.52(172.16.1.52:3306) completed successfully. # the switch to the .52 host has succeeded

6.3 Check db03

mysql> show slave status\G

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 172.16.1.52 # now replicating from db02

Master_User: repl

Master_Port: 3306

Connect_Retry: 60

Master_Log_File: mysql-bin.000001

Read_Master_Log_Pos: 805

Relay_Log_File: db03-relay-bin.000002

Relay_Log_Pos: 408

Relay_Master_Log_File: mysql-bin.000001

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Replicate_Do_DB:

Replicate_Ignore_DB:

Replicate_Do_Table:

Replicate_Ignore_Table:

Replicate_Wild_Do_Table:

Replicate_Wild_Ignore_Table:

Last_Errno: 0

Last_Error:

Skip_Counter: 0

Exec_Master_Log_Pos: 805

Relay_Log_Space: 611

Until_Condition: None

Until_Log_File:

Until_Log_Pos: 0

Master_SSL_Allowed: No

Master_SSL_CA_File:

Master_SSL_CA_Path:

Master_SSL_Cert:

Master_SSL_Cipher:

Master_SSL_Key:

Seconds_Behind_Master: 0

Master_SSL_Verify_Server_Cert: No

Last_IO_Errno: 0

Last_IO_Error:

Last_SQL_Errno: 0

Last_SQL_Error:

Replicate_Ignore_Server_Ids:

Master_Server_Id: 52

Master_UUID: 901b2d37-6af9-11e9-9c6c-000c29481d4a

Master_Info_File: /application/mysql/data/master.info

SQL_Delay: 0

SQL_Remaining_Delay: NULL

Slave_SQL_Running_State: Slave has read all relay log; waiting for the slave I/O thread to update it

Master_Retry_Count: 86400

Master_Bind:

Last_IO_Error_Timestamp:

Last_SQL_Error_Timestamp:

Master_SSL_Crl:

Master_SSL_Crlpath:

Retrieved_Gtid_Set:

Executed_Gtid_Set: fac6353b-6a35-11e9-9770-000c29c0e349:1-3

Auto_Position: 1

1 row in set (0.00 sec)
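Only three fields of this output matter for confirming the switch: Master_Host, Slave_IO_Running and Slave_SQL_Running. A minimal Python sketch of that check follows, assuming the vertical \G output has been captured as text; parse_slave_status and replicating_from are hypothetical helper names.

```python
def parse_slave_status(text):
    """Turn the vertical (\\G) output of SHOW SLAVE STATUS into a dict."""
    status = {}
    for line in text.splitlines():
        if ":" not in line:
            continue  # skips the row separator and the "1 row in set" footer
        key, _, value = line.partition(":")
        status[key.strip()] = value.strip()
    return status


def replicating_from(status, expected_master):
    """True when both replication threads run and point at expected_master."""
    return (
        status.get("Master_Host") == expected_master
        and status.get("Slave_IO_Running") == "Yes"
        and status.get("Slave_SQL_Running") == "Yes"
    )
```

For the output shown above, replicating_from(status, "172.16.1.52") would be true once the trailing annotation on the Master_Host line is left out of the captured text.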

6.4 Recover db01

1. On db01, rejoin db01 to replication

[root@db01 tools]# /etc/init.d/mysqld start

CHANGE MASTER TO MASTER_HOST='172.16.1.52', MASTER_PORT=3306, MASTER_AUTO_POSITION=1, MASTER_USER='repl',MASTER_PASSWORD='123';

start slave;

2. Add db01 back to the MHA configuration file (its section was removed during failover by --remove_dead_master_conf)

[root@db03 tools]# vim /etc/mha/app1.cnf

[server default]

......

[server1]

hostname=172.16.1.51

port=3306

......

3. Start the MHA manager

masterha_check_ssh --conf=/etc/mha/app1.cnf

masterha_check_repl --conf=/etc/mha/app1.cnf

nohup masterha_manager --conf=/etc/mha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null > /var/log/mha/app1/manager.log 2>&1 &
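The CHANGE MASTER statement used in step 1 is the one MHA printed in the manager log, with the masked password filled in. A small Python sketch of that lookup follows. It assumes the log masks the password as 'xxx', as in the excerpt from section 6.2, and that the password needs no SQL quoting; rejoin_statement is a hypothetical name.

```python
import re


def rejoin_statement(manager_log, repl_password):
    """Extract the CHANGE MASTER statement from the log and unmask the password."""
    match = re.search(r"CHANGE MASTER TO .*?;", manager_log)
    if match is None:
        raise ValueError("no CHANGE MASTER statement found in the log")
    # Assumption: the log prints the password as the literal placeholder 'xxx'.
    return match.group(0).replace(
        "MASTER_PASSWORD='xxx'", f"MASTER_PASSWORD='{repl_password}'"
    )
```

The returned string can then be run on the recovered node, followed by start slave;.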

7. IP failover with the script shipped with MHA (VIP drift, transparent to the application)

7.1 Upload the script

[root@db03 bin]# cat master_ip_failover

#!/usr/bin/env perl

use strict;

use warnings FATAL => 'all';

use Getopt::Long;

my (

$command, $ssh_user, $orig_master_host, $orig_master_ip,

$orig_master_port, $new_master_host, $new_master_ip, $new_master_port

);

my $vip = '10.0.0.55/24';

my $key = '1';

my $ssh_start_vip = "/sbin/ifconfig eth1:$key $vip";

my $ssh_stop_vip = "/sbin/ifconfig eth1:$key down";

GetOptions(

'command=s' => \$command,

'ssh_user=s' => \$ssh_user,

'orig_master_host=s' => \$orig_master_host,

'orig_master_ip=s' => \$orig_master_ip,

'orig_master_port=i' => \$orig_master_port,

'new_master_host=s' => \$new_master_host,

'new_master_ip=s' => \$new_master_ip,

'new_master_port=i' => \$new_master_port,

);

exit &main();

sub main {

print "\n\nIN SCRIPT TEST====$ssh_stop_vip==$ssh_start_vip===\n\n";

if ( $command eq "stop" || $command eq "stopssh" ) {

my $exit_code = 1;

eval {

print "Disabling the VIP on old master: $orig_master_host \n";

&stop_vip();

$exit_code = 0;

};

if ($@) {

warn "Got Error: $@\n";

exit $exit_code;

}

exit $exit_code;

}

elsif ( $command eq "start" ) {

my $exit_code = 10;

eval {

print "Enabling the VIP - $vip on the new master - $new_master_host \n";

&start_vip();

$exit_code = 0;

};

if ($@) {

warn $@;

exit $exit_code;

}

exit $exit_code;

}

elsif ( $command eq "status" ) {

print "Checking the Status of the script.. OK \n";

exit 0;

}

else {

&usage();

exit 1;

}

}

sub start_vip() {

`ssh $ssh_user\@$new_master_host \" $ssh_start_vip \"`;

}

sub stop_vip() {

return 0 unless ($ssh_user);

`ssh $ssh_user\@$orig_master_host \" $ssh_stop_vip \"`;

}

sub usage {

print

"Usage: master_ip_failover --command=start|stop|stopssh|status --orig_master_host=host --orig_master_ip=ip --orig_master_port=port --new_master_host=host --new_master_ip=ip --new_master_port=port\n";

}

[root@db03 bin]# dos2unix /usr/local/bin/master_ip_failover

[root@db03 bin]# chmod +x master_ip_failover

7.2 Add the script to the MHA configuration file

[root@db03 bin]# vim /etc/mha/app1.cnf

[server default]

......

master_ip_failover_script=/usr/local/bin/master_ip_failover

......

7.3 Edit the VIP settings in master_ip_failover

my $vip = '172.16.1.100/24';

my $key = '1';

my $ssh_start_vip = "/sbin/ifconfig eth1:$key $vip";

my $ssh_stop_vip = "/sbin/ifconfig eth1:$key down";

7.4 Restart MHA

masterha_stop --conf=/etc/mha/app1.cnf

nohup masterha_manager --conf=/etc/mha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null > /var/log/mha/app1/manager.log 2>&1 &

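7.5 Bind the VIP manually on the master. The interface must match the ethN used in the script; here it is eth1:1 (1 is the value of $key).

ifconfig eth1:1 172.16.1.100/24 # on a minimal install without ifconfig, install it with yum -y install net-tools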

[root@db02 tools]# ip a

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:48:1d:54 brd ff:ff:ff:ff:ff:ff

inet 172.16.1.52/24 brd 172.16.1.255 scope global eth1

valid_lft forever preferred_lft forever

inet 172.16.1.100/24 brd 172.16.1.255 scope global secondary eth1:1

valid_lft forever preferred_lft forever
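The alias bound by hand has to be the same one the failover script builds from its $key and $vip variables, otherwise the stop command will not remove the address on the old master. The Python sketch below mirrors how the script assembles its two command strings; vip_commands is a hypothetical name and the function only builds strings, it runs nothing.

```python
def vip_commands(interface, key, vip):
    """Build the start/stop commands the failover script would run over SSH."""
    alias = f"{interface}:{key}"
    return {
        "start": f"/sbin/ifconfig {alias} {vip}",
        "stop": f"/sbin/ifconfig {alias} down",
    }
```

For interface eth1, key 1 and VIP 172.16.1.100/24, the start command matches the manual ifconfig line used in 7.5.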

7.6 Failover test

Stop the master

[root@db02 tools]# /etc/init.d/mysqld stop

Shutting down MySQL...... SUCCESS!

The VIP has now moved to db01

[root@db01 tools]# ip a

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:c0:e3:53 brd ff:ff:ff:ff:ff:ff

inet 172.16.1.51/24 brd 172.16.1.255 scope global eth1

valid_lft forever preferred_lft forever

inet 172.16.1.100/24 brd 172.16.1.255 scope global secondary eth1:1

valid_lft forever preferred_lft forever

7.7 Restore replication on db02

mysql> CHANGE MASTER TO MASTER_HOST='172.16.1.51', MASTER_PORT=3306, MASTER_AUTO_POSITION=1, MASTER_USER='repl',MASTER_PASSWORD='123';

mysql> start slave;

Add the following back to the MHA configuration file

[server2]

hostname=172.16.1.52

port=3306

Start MHA

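8. External data compensation (binlog server configuration)

In production a dedicated extra machine is usually used. It must run MySQL 5.6 or later with GTID supported and enabled. Here db03 is used directly.

8.1 Add to the MHA configuration file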

[root@db03 bin]# vim /etc/mha/app1.cnf

[server default]

manager_log=/var/log/mha/app1/manager

manager_workdir=/var/log/mha/app1

master_binlog_dir=/data/binlog

master_ip_failover_script=/usr/local/bin/master_ip_failover

password=mha

ping_interval=2

repl_password=123

repl_user=repl

ssh_user=root

user=mha

[server1]

hostname=172.16.1.51

port=3306

[server2]

hostname=172.16.1.52

port=3306

[binlog1]

no_master=1

hostname=172.16.1.53

# create this directory in advance; it must not be the same as the existing binlog directory

master_binlog_dir=/data/mysql/binlog

mkdir -p /data/mysql/binlog

chown -R mysql.mysql /data/mysql/*
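The one constraint stated above, that the binlog server's directory must differ from the regular binlog directory, is easy to get wrong when editing the file by hand. A minimal Python sketch of a check follows, assuming the configuration can be read as an ini file with Python's configparser and contains no inline comments; load_mha_config and binlog_dir_conflicts are hypothetical names and are not part of MHA.

```python
import configparser


def load_mha_config(text):
    """Parse MHA configuration text as an ini file."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    return parser


def binlog_dir_conflicts(parser):
    """List [binlogN] sections that reuse the default master_binlog_dir."""
    default_dir = parser.get("server default", "master_binlog_dir", fallback=None)
    return [
        name
        for name in parser.sections()
        if name.startswith("binlog")
        and parser.get(name, "master_binlog_dir", fallback=None) == default_dir
    ]
```

An empty list means no binlog server section points at the same directory as [server default].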

8.2 Pull the master's binlog files

cd /data/mysql/binlog # you must be inside the directory created above

mysqlbinlog -R --host=172.16.1.51 --user=mha --password=mha --raw --stop-never mysql-bin.000001 &

8.3 Restart MHA

masterha_stop --conf=/etc/mha/app1.cnf

nohup masterha_manager --conf=/etc/mha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null > /var/log/mha/app1/manager.log 2>&1 &

8.4 Test

Flush the binlog on the master

mysql> flush logs;

Check the binlog server directory

[root@db03 binlog]# ls

mysql-bin.000001 mysql-bin.000002 mysql-bin.000003 mysql-bin.000004

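9. Other parameters

ping_interval=2 (set under [server default]): the interval, in seconds, at which the manager checks whether a node is alive; it probes four times in total.

candidate_master=1 (set in a node section)

# Marks the node as a candidate master. With this set, the slave is promoted to master on failover even if it does not hold the most recent events in the cluster.

check_repl_delay=0 (set in a node section)

# By default MHA will not pick a slave that is more than 100 MB of relay logs behind the master, because recovering that slave would take a long time. With check_repl_delay=0, MHA ignores replication delay when it chooses the new master. This is especially useful for a host with candidate_master=1, because the candidate is then sure to become the new master and the failover does not stall on a lagging slave.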
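The interaction between the relay log lag limit and check_repl_delay can be written as a single predicate. This Python sketch takes the "100M" figure from the text above at face value and treats it as 100 MiB, which is an assumption; can_be_promoted is a hypothetical name and not an MHA function.

```python
# Assumed reading of the "100M of relay logs" limit described above.
RELAY_LAG_LIMIT = 100 * 1024 * 1024


def can_be_promoted(relay_lag_bytes, check_repl_delay=True):
    """Apply the delay rule: lagging slaves are skipped unless the check is off."""
    if not check_repl_delay:
        return True  # check_repl_delay=0: ignore replication delay entirely
    return relay_lag_bytes <= RELAY_LAG_LIMIT
```

A slave that is 200 MiB behind is rejected by default and accepted once check_repl_delay is switched off.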

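10. MySQL with Atlas (read/write splitting)

Atlas is a MySQL-protocol data middle-layer project developed and maintained by the infrastructure team of the Web Platform Department at Qihoo 360. It is built on MySQL-Proxy 0.8.2, the official MySQL release, with a large number of bugs fixed and many features added. The project is widely used inside Qihoo 360: many MySQL workloads run through the Atlas platform, which carries billions of read and write requests per day.

Source on GitHub: https://github.com/Qihoo360/Atlas

10.1 Atlas features and use cases

Atlas features: read/write splitting, load balancing across slaves, automatic table sharding, IP filtering, SQL statement blacklists and whitelists, smooth online/offline switching of DBs by the DBA, and automatic removal of failed DBs.

Use case: Atlas is middleware that sits between the front-end application and the back-end MySQL databases. Application developers no longer have to deal with MySQL details such as read/write splitting and sharding and can concentrate on business logic. The DBA's operations also become transparent to the application: DBs can be brought online or taken offline without the application noticing.

10.2 Installing Atlas

Download page: https://github.com/Qihoo360/Atlas/releases

Notes:

1. Atlas can only be installed and run on 64-bit systems.

2. On CentOS 5.x install Atlas-XX.el5.x86_64.rpm; on CentOS 6.x install Atlas-XX.el6.x86_64.rpm (in testing, the el6 package also works on CentOS 7).

3. The back-end MySQL version should be newer than 5.1; MySQL 5.6 or later is recommended.

1. Install Atlas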

rpm -ivh Atlas-2.2.1.el6.x86_64.rpm

2. Edit the configuration file

cd /usr/local/mysql-proxy/conf/

cp test.cnf test.cnf.bak

vim /usr/local/mysql-proxy/conf/test.cnf

[mysql-proxy]

admin-username = user

admin-password = pwd

# address of the master's VIP, used for writes

proxy-backend-addresses = 172.16.1.100:3306

# addresses of the read-only slaves

proxy-read-only-backend-addresses = 172.16.1.52:3306,172.16.1.53:3306

# database users with encrypted passwords; encrypt a password with: /usr/local/mysql-proxy/bin/encrypt <password>

pwds = repl:3yb5jEku5h4=,mha:O2jBXONX098=

daemon = true

keepalive = true

event-threads = 8

log-level = message

log-path = /usr/local/mysql-proxy/log

sql-log=ON

proxy-address = 0.0.0.0:33060

admin-address = 0.0.0.0:2345

charset=utf8

3. Start Atlas

/usr/local/mysql-proxy/bin/mysql-proxyd test start

10.3 Testing read/write splitting

Read test

mysql -umha -pmha -h172.16.1.53 -P33060

show variables like 'server_id';

mysql> show variables like 'server_id';

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| server_id | 52 |

+---------------+-------+

1 row in set (0.01 sec)

mysql> show variables like 'server_id';

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| server_id | 53 |

+---------------+-------+

1 row in set (0.00 sec)

Write test

set global read_only=1; # run on both slaves to put them in read-only mode

mysql -umha -pmha -h172.16.1.53 -P33060

create database db1;
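The behaviour seen in these two tests, where reads alternate between the slaves while the create database statement can only succeed on the master, can be illustrated with a toy router in Python. This is a deliberately simplified sketch and not Atlas's actual routing code: the Router class is hypothetical, it looks only at the first keyword of the statement, and it ignores transactions and any other rules Atlas applies.

```python
import itertools


class Router:
    """Send reads to the read-only backends in turn, everything else to the master."""

    READ_KEYWORDS = ("SELECT", "SHOW")

    def __init__(self, master, replicas):
        self.master = master
        self._replicas = itertools.cycle(replicas) if replicas else None

    def route(self, sql):
        words = sql.split(None, 1)
        keyword = words[0].upper() if words else ""
        if keyword in self.READ_KEYWORDS and self._replicas is not None:
            return next(self._replicas)  # round-robin over the slaves
        return self.master
```

Routing the same show variables statement twice returns the two slaves in turn, matching the alternating server_id values in the read test.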

10.4 Atlas administration: adding and removing nodes

Connect to the admin interface:

mysql -uuser -ppwd -h127.0.0.1 -P2345

Print the help:

mysql> select * from help;

+----------------------------+---------------------------------------------------------+

| command | description |

+----------------------------+---------------------------------------------------------+

| SELECT * FROM help | shows this help |

| SELECT * FROM backends | lists the backends and their state |

| SET OFFLINE $backend_id | offline backend server, $backend_id is backend_ndx's id |

| SET ONLINE $backend_id | online backend server, ... |

| ADD MASTER $backend | example: "add master 127.0.0.1:3306", ... |

| ADD SLAVE $backend | example: "add slave 127.0.0.1:3306", ... |

| REMOVE BACKEND $backend_id | example: "remove backend 1", ... |

| SELECT * FROM clients | lists the clients |

| ADD CLIENT $client | example: "add client 192.168.1.2", ... |

| REMOVE CLIENT $client | example: "remove client 192.168.1.2", ... |

| SELECT * FROM pwds | lists the pwds |

| ADD PWD $pwd | example: "add pwd user:raw_password", ... |

| ADD ENPWD $pwd | example: "add enpwd user:encrypted_password", ... |

| REMOVE PWD $pwd | example: "remove pwd user", ... |

| SAVE CONFIG | save the backends to config file |

| SELECT VERSION | display the version of Atlas |

+----------------------------+---------------------------------------------------------+

16 rows in set (0.00 sec)

List all nodes

mysql> SELECT * FROM backends;

+-------------+-------------------+-------+------+

| backend_ndx | address | state | type |

+-------------+-------------------+-------+------+

| 1 | 172.16.1.100:3306 | up | rw |

| 2 | 172.16.1.52:3306 | up | ro |

| 3 | 172.16.1.53:3306 | up | ro |

+-------------+-------------------+-------+------+

3 rows in set (0.00 sec)

Remove a node dynamically:

mysql> REMOVE BACKEND 3;

Empty set (0.00 sec)

mysql> SELECT * FROM backends;

+-------------+-------------------+-------+------+

| backend_ndx | address | state | type |

+-------------+-------------------+-------+------+

| 1 | 172.16.1.100:3306 | up | rw |

| 2 | 172.16.1.52:3306 | up | ro |

+-------------+-------------------+-------+------+

2 rows in set (0.00 sec)

Add a node dynamically:

mysql> ADD SLAVE 172.16.1.53:3306;

Empty set (0.00 sec)

mysql> SELECT * FROM backends;

+-------------+-------------------+-------+------+

| backend_ndx | address | state | type |

+-------------+-------------------+-------+------+

| 1 | 172.16.1.100:3306 | up | rw |

| 2 | 172.16.1.52:3306 | up | ro |

| 3 | 172.16.1.53:3306 | up | ro |

+-------------+-------------------+-------+------+

3 rows in set (0.00 sec)

Save the changes to the configuration file

SAVE CONFIG;

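10.5 Automatic table sharding in Atlas

To use Atlas's sharding feature, first set the tables parameter in the configuration file test.cnf.

Format of the tables parameter: database.table.sharding_column.number_of_sub-tables

For example:

If the database is named school, the table stu, the sharding column id, and the data is split into 2 tables, write school.stu.id.2. Additional sharded tables are separated by commas.

The 2 sub-tables must be created by hand (stu_0 and stu_1; note that sub-table numbering starts at 0). All sub-tables must live in the same database.

When a SELECT, DELETE, UPDATE, INSERT, or REPLACE runs through Atlas, Atlas uses the sharding result (id % 2 = k) to locate the matching sub-table (stu_k). For example, select * from stu where id=3; is answered from sub-table stu_1. A statement that does not include id, such as select * from stu;, fails with an error saying that table stu does not exist.

Atlas does not yet support automatic table creation or sharding across databases.

The statements that currently support sharding are SELECT, DELETE, UPDATE, INSERT, and REPLACE.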
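The mapping from a sharding column value to a sub-table is plain modulo arithmetic, and a one-line Python function makes it concrete. shard_table is a hypothetical name used for illustration; it reproduces the id % N = k rule described above and nothing more.

```python
def shard_table(table, shard_key_value, shard_count):
    """Return the sub-table that holds the row, using value % shard_count."""
    return f"{table}_{shard_key_value % shard_count}"
```

With school.stu.id.2, id=3 lands in stu_1 as in the example above. With five sub-tables, ids 1 through 5 land in stu_1, stu_2, stu_3, stu_4 and stu_0.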

10.5.1 Configuring automatic sharding

Configuration file

vim /usr/local/mysql-proxy/conf/test.cnf

tables = school.stu.id.5

Restart Atlas

On the master, manually create the database and sub-tables defined above: school, and stu_0, stu_1, stu_2, stu_3, stu_4

create database school;

use school

create table stu_0 (id int,name varchar(20));

create table stu_1 (id int,name varchar(20));

create table stu_2 (id int,name varchar(20));

create table stu_3 (id int,name varchar(20));

create table stu_4 (id int,name varchar(20));

Test:

insert into stu values (3,'wang5');

insert into stu values (2,'li4');

insert into stu values (1,'zhang3');

insert into stu values (4,'m6');

insert into stu values (5,'zou7');

commit;

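10.6 IP filtering and SQL blacklist

IP filtering: the client-ips parameter implements IP filtering.

In the traditional model the application connects to the DB directly, so the DB grants access to the IPs of the machines running the application (web servers, for example).

Once a middle layer is introduced, it is Atlas that connects to the DB, so the DB grants access to the IP of the Atlas machine instead. If any client could then connect to Atlas, that would be a potential risk.

client-ips controls which client IPs may connect to Atlas. Entries can be exact IPs or IP segments, comma-separated on a single line.

For example:

client-ips=192.168.1.2, 192.168.2 means that the IP 192.168.1.2 and the segment 192.168.2.* may connect to Atlas, and all other IPs may not.

If the parameter is not set, any IP may connect to Atlas.

If client-ips is set and an LVS sits in front of Atlas, lvs-ips must be set as well; otherwise lvs-ips can be left unset.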
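The matching rule described above (exact IPs, or a leading segment that stands for a whole range) can be sketched in Python as follows. This illustrates the rule as the text states it and is not Atlas's own implementation; client_allowed is a hypothetical name.

```python
def client_allowed(client_ip, client_ips):
    """Check a client IP against a comma-separated client-ips value."""
    entries = [e.strip() for e in client_ips.split(",") if e.strip()]
    if not entries:
        return True  # parameter not set: any IP may connect
    for entry in entries:
        prefix = entry.rstrip(".") + "."
        # An entry matches either exactly or as a dotted prefix (IP segment).
        if client_ip == entry or client_ip.startswith(prefix):
            return True
    return False
```

Appending the dot before the prefix comparison keeps 192.168.2 from also matching 192.168.20.x.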

SQL statement blacklist and whitelist

Atlas blocks delete and update statements that have no where clause, as well as the sleep function.
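As a rough illustration of that rule, the Python sketch below flags the three cases the text names. It is a naive textual check written for this example, with a hypothetical function name is_blocked; it does not parse SQL and says nothing about how Atlas itself performs the filtering.

```python
import re


def is_blocked(sql):
    """Flag sleep() calls and DELETE/UPDATE statements without a WHERE clause."""
    statement = sql.strip().rstrip(";").lower()
    if re.search(r"\bsleep\s*\(", statement):
        return True
    if re.match(r"(delete|update)\b", statement):
        return re.search(r"\bwhere\b", statement) is None
    return False
```

A statement such as delete from stu; is flagged, while delete from stu where id=3; passes.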
