Advancing in Scala: Deploying a Spark Standalone-Mode Cluster

Author: Yin Zhengjie (尹正杰)

Copyright notice: this is an original work and may not be reproduced; violations may be pursued legally.

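Hadoop addresses both the storage and the computation side of big data: HDFS provides distributed storage, and MapReduce provides the computation framework. If you have worked with MapReduce, and especially with Hive (which still calls MapReduce under the hood), you have probably found it slow. That is why many people are now moving to Spark, so today we will deploy a Spark cluster together.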

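I. Preparing the environment

If your servers do not have a Hadoop cluster yet, see my earlier notes on deploying Hadoop with high availability: https://www.cnblogs.com/yinzhengjie/p/9154265.html

1>. Start the HDFS distributed file system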

    [yinzhengjie@s101 download]$ more `which xzk.sh`
    #!/bin/bash
    #@author :yinzhengjie
    #blog:http://www.cnblogs.com/yinzhengjie
    #EMAIL:y1053419035@qq.com

    # Check that exactly one argument was passed
    if [ $# -ne 1 ];then
        echo "Invalid argument. Usage: $0 {start|stop|restart|status}"
        exit
    fi

    # Read the command supplied by the user
    cmd=$1

    # Dispatch on the requested command
    function zookeeperManger(){
        case $cmd in
        start)
            echo "Starting service"
            remoteExecution start
            ;;
        stop)
            echo "Stopping service"
            remoteExecution stop
            ;;
        restart)
            echo "Restarting service"
            remoteExecution restart
            ;;
        status)
            echo "Checking status"
            remoteExecution status
            ;;
        *)
            echo "Invalid argument. Usage: $0 {start|stop|restart|status}"
            ;;
        esac
    }

    # Run zkServer.sh with the given command on each ZooKeeper node (s102-s104)
    function remoteExecution(){
        for (( i=102 ; i<=104 ; i++ )) ; do
            tput setaf 2
            echo ========== s$i zkServer.sh $1 ================
            tput setaf 9
            ssh s$i "source /etc/profile ; zkServer.sh $1"
        done
    }

    # Call the function
    zookeeperManger
    [yinzhengjie@s101 download]$

    [yinzhengjie@s101 download]$ more `which xcall.sh`
    #!/bin/bash
    #@author :yinzhengjie
    #blog:http://www.cnblogs.com/yinzhengjie
    #EMAIL:y1053419035@qq.com

    # Check that at least one argument was passed
    if [ $# -lt 1 ];then
        echo "Please provide an argument"
        exit
    fi

    # Read the command supplied by the user
    cmd=$@

    for (( i=101;i<=105;i++ ))
    do
        # Switch the terminal color to green
        tput setaf 2
        echo ============= s$i $cmd ============
        # Switch the terminal back to its usual light-gray color
        tput setaf 7
        # Run the command on the remote host
        ssh s$i $cmd
        # Report whether the command succeeded
        if [ $? == 0 ];then
            echo "Command succeeded"
        fi
    done
    [yinzhengjie@s101 download]$

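    [yinzhengjie@s101 download]$ more `which xrsync.sh`
    #!/bin/bash
    #@author :yinzhengjie
    #blog:http://www.cnblogs.com/yinzhengjie
    #EMAIL:y1053419035@qq.com

    # Check that at least one argument was passed
    if [ $# -lt 1 ];then
        echo "Please provide an argument";
        exit
    fi

    # Path of the file or directory to sync
    file=$@

    # Last path component
    filename=`basename $file`

    # Parent directory
    dirpath=`dirname $file`

    # Resolve the parent directory to its physical absolute path
    cd $dirpath
    fullpath=`pwd -P`

    # Sync to the other nodes (s102-s105)
    for (( i=102;i<=105;i++ ))
    do
        # Switch the terminal color to green
        tput setaf 2
        # Note: %file is printed literally here; $file was probably intended
        echo =========== s$i %file ===========
        # Switch the terminal back to its usual light-gray color
        tput setaf 7
        # Copy to the same absolute path on the remote host
        rsync -lr $filename `whoami`@s$i:$fullpath
        # Report whether the command succeeded
        if [ $? == 0 ];then
            echo "Command succeeded"
        fi
    done
    [yinzhengjie@s101 download]$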
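All three helper scripts above share one pattern: loop over hosts named s<N> and run a command on each over ssh. The following is a minimal sketch of that pattern in dry-run form, so that the generated commands can be inspected before touching a real cluster. The function names `cluster_hosts` and `dry_run` are hypothetical and are not part of the original scripts.

```shell
# Hypothetical helper: print the host names s<first>..s<last>, one per line.
# The function body runs in a subshell, so its variables stay local.
cluster_hosts() (
  i=$1
  last=$2
  while [ "$i" -le "$last" ]; do
    echo "s$i"
    i=$((i + 1))
  done
)

# Hypothetical dry-run wrapper: print the ssh command that would be run
# on each host instead of executing it.
dry_run() (
  first=$1
  last=$2
  shift 2
  for host in $(cluster_hosts "$first" "$last"); do
    echo "ssh $host $*"
  done
)

# Show what a ZooKeeper status check across s102-s104 would execute.
dry_run 102 104 "source /etc/profile ; zkServer.sh status"
```

Separating host enumeration from command execution keeps the host range in one place, which may help if the node numbering ever changes.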

    [yinzhengjie@s101 download]$ xzk.sh start
    Starting service
    ========== s102 zkServer.sh start ================
    ZooKeeper JMX enabled by default
    Using config: /soft/zk/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    ========== s103 zkServer.sh start ================
    ZooKeeper JMX enabled by default
    Using config: /soft/zk/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    ========== s104 zkServer.sh start ================
    ZooKeeper JMX enabled by default
    Using config: /soft/zk/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    [yinzhengjie@s101 download]$
    [yinzhengjie@s101 download]$ xcall.sh jps
    ============= s101 jps ============
    2603 Jps
    Command succeeded
    ============= s102 jps ============
    2316 Jps
    2287 QuorumPeerMain
    Command succeeded
    ============= s103 jps ============
    2284 QuorumPeerMain
    2319 Jps
    Command succeeded
    ============= s104 jps ============
    2305 Jps
    2276 QuorumPeerMain
    Command succeeded
    ============= s105 jps ============
    2201 Jps
    Command succeeded
    [yinzhengjie@s101 download]$

    [yinzhengjie@s101 download]$ start-dfs.sh
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.1-bin/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
    Starting namenodes on [s101 s105]
    s101: starting namenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-namenode-s101.out
    s105: starting namenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-namenode-s105.out
    s103: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s103.out
    s104: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s104.out
    s102: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s102.out
    Starting journal nodes [s102 s103 s104]
    s104: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s104.out
    s102: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s102.out
    s103: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s103.out
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.1-bin/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
    Starting ZK Failover Controllers on NN hosts [s101 s105]
    s101: starting zkfc, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-zkfc-s101.out
    s105: starting zkfc, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-zkfc-s105.out
    [yinzhengjie@s101 download]$
    [yinzhengjie@s101 download]$ xcall.sh jps
    ============= s101 jps ============
    7909 Jps
    7526 NameNode
    7830 DFSZKFailoverController
    Command succeeded
    ============= s102 jps ============
    2817 QuorumPeerMain
    4340 JournalNode
    4412 Jps
    4255 DataNode
    Command succeeded
    ============= s103 jps ============
    4256 JournalNode
    2721 QuorumPeerMain
    4328 Jps
    4171 DataNode
    Command succeeded
    ============= s104 jps ============
    2707 QuorumPeerMain
    4308 Jps
    4151 DataNode
    4236 JournalNode
    Command succeeded
    ============= s105 jps ============
    4388 DFSZKFailoverController
    4284 NameNode
    4446 Jps
    Command succeeded
    [yinzhengjie@s101 download]$

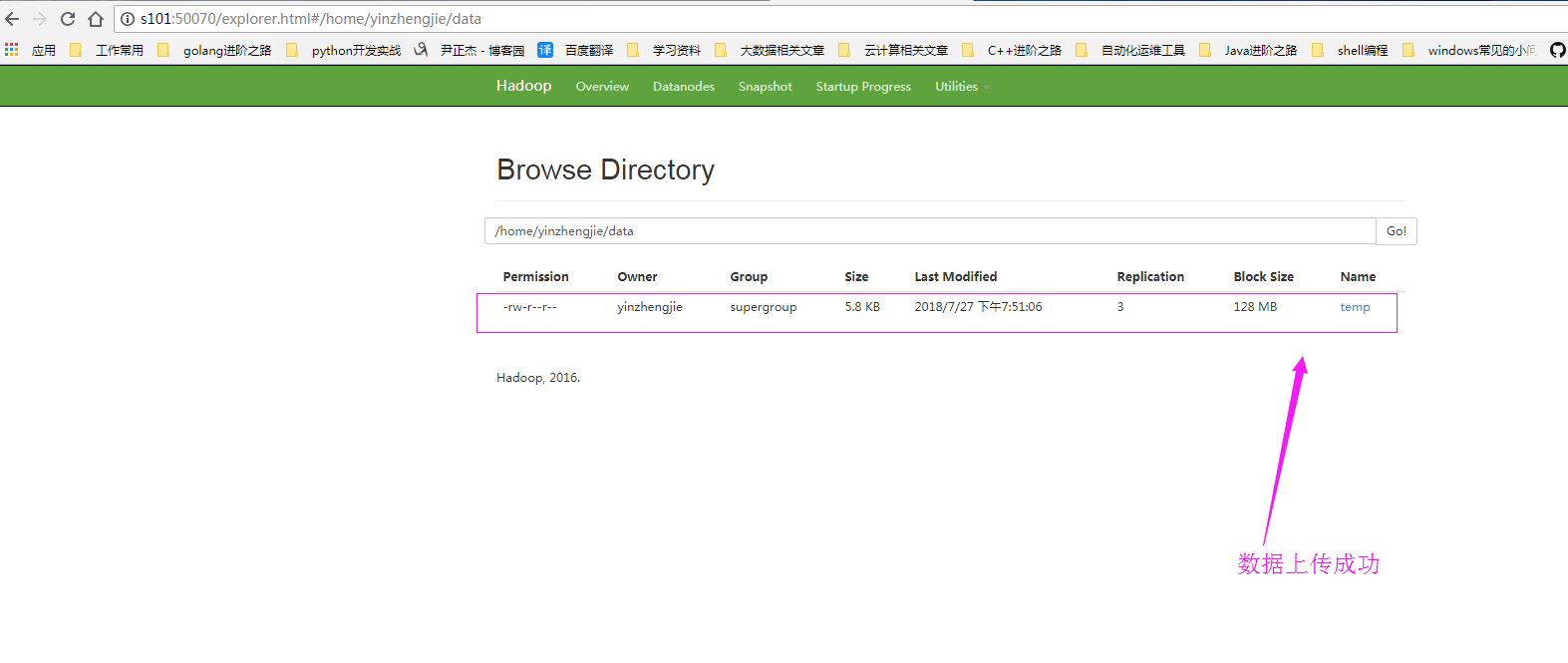

2>. Upload test data to the HDFS cluster

[yinzhengjie@s101 download]$ cat temp 0029029070999991901010106004+64333+023450FM-12+000599999V0202701N015919999999N0000001N9-00781+99999102001ADDGF108991999999999999999999 0029029070999991902010113004+64333+023450FM-12+000599999V0202901N008219999999N0000001N9+00721+99999102001ADDGF104991999999999999999999 0029029070999991903010120004+64333+023450FM-12+000599999V0209991C000019999999N0000001N9-00941+99999102001ADDGF108991999999999999999999 0029029070999991904010206004+64333+023450FM-12+000599999V0201801N008219999999N0000001N9-00611+99999101831ADDGF108991999999999999999999 0029029070999991905010213004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9-00561+99999101761ADDGF108991999999999999999999 0029029070999991906010220004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9+00281+99999101751ADDGF108991999999999999999999 0029029070999991907010306004+64333+023450FM-12+000599999V0202001N009819999999N0000001N9-00671+99999101701ADDGF106991999999999999999999 0029029070999991908010106004+64333+023450FM-12+000599999V0202701N015919999999N0000001N9-00781+99999102001ADDGF108991999999999999999999 0029029070999991909010113004+64333+023450FM-12+000599999V0202901N008219999999N0000001N9-00721+99999102001ADDGF104991999999999999999999 0029029070999991910010120004+64333+023450FM-12+000599999V0209991C000019999999N0000001N9+00941+99999102001ADDGF108991999999999999999999 0029029070999991911010206004+64333+023450FM-12+000599999V0201801N008219999999N0000001N9-00611+99999101831ADDGF108991999999999999999999 0029029070999991912010213004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9+00561+99999101761ADDGF108991999999999999999999 0029029070999991913010220004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9+00281+99999101751ADDGF108991999999999999999999 
0029029070999991914010306004+64333+023450FM-12+000599999V0202001N009819999999N0000001N9+00671+99999101701ADDGF1069919999999999999999990029029070999991901010106004+64333+023450FM-12+000599999V0202701N015919999999N0000001N9-00781+99999102001ADDGF108991999999999999999999 0029029070999991915010113004+64333+023450FM-12+000599999V0202901N008219999999N0000001N9-00721+99999102001ADDGF104991999999999999999999 0029029070999991916010120004+64333+023450FM-12+000599999V0209991C000019999999N0000001N9+00941+99999102001ADDGF108991999999999999999999 0029029070999991917010206004+64333+023450FM-12+000599999V0201801N008219999999N0000001N9+00611+99999101831ADDGF108991999999999999999999 0029029070999991918010213004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9+00561+99999101761ADDGF108991999999999999999999 0029029070999991919010220004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9-00281+99999101751ADDGF108991999999999999999999 0029029070999991920010306004+64333+023450FM-12+000599999V0202001N009819999999N0000001N9+00671+99999101701ADDGF1069919999999999999999990029029070999991901010106004+64333+023450FM-12+000599999V0202701N015919999999N0000001N9-00781+99999102001ADDGF108991999999999999999999 0029029070999991901010106004+64333+023450FM-12+000599999V0202701N015919999999N0000001N9-00781+99999102001ADDGF108991999999999999999999 0029029070999991902010113004+64333+023450FM-12+000599999V0202901N008219999999N0000001N9+00721+99999102001ADDGF104991999999999999999999 0029029070999991903010120004+64333+023450FM-12+000599999V0209991C000019999999N0000001N9-00941+99999102001ADDGF108991999999999999999999 0029029070999991904010206004+64333+023450FM-12+000599999V0201801N008219999999N0000001N9+00611+99999101831ADDGF108991999999999999999999 0029029070999991905010213004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9+00561+99999101761ADDGF108991999999999999999999 
0029029070999991906010220004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9+00281+99999101751ADDGF108991999999999999999999 0029029070999991907010306004+64333+023450FM-12+000599999V0202001N009819999999N0000001N9-00671+99999101701ADDGF106991999999999999999999 0029029070999991908010106004+64333+023450FM-12+000599999V0202701N015919999999N0000001N9+00781+99999102001ADDGF108991999999999999999999 0029029070999991909010113004+64333+023450FM-12+000599999V0202901N008219999999N0000001N9-00721+99999102001ADDGF104991999999999999999999 0029029070999991910010120004+64333+023450FM-12+000599999V0209991C000019999999N0000001N9+00941+99999102001ADDGF108991999999999999999999 0029029070999991911010206004+64333+023450FM-12+000599999V0201801N008219999999N0000001N9+00611+99999101831ADDGF108991999999999999999999 0029029070999991912010213004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9-00561+99999101761ADDGF108991999999999999999999 0029029070999991913010220004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9+00281+99999101751ADDGF108991999999999999999999 0029029070999991914010306004+64333+023450FM-12+000599999V0202001N009819999999N0000001N9+00671+99999101701ADDGF1069919999999999999999990029029070999991901010106004+64333+023450FM-12+000599999V0202701N015919999999N0000001N9-00781+99999102001ADDGF108991999999999999999999 0029029070999991915010113004+64333+023450FM-12+000599999V0202901N008219999999N0000001N9+00721+99999102001ADDGF104991999999999999999999 0029029070999991916010120004+64333+023450FM-12+000599999V0209991C000019999999N0000001N9-00941+99999102001ADDGF108991999999999999999999 0029029070999991917010206004+64333+023450FM-12+000599999V0201801N008219999999N0000001N9+00611+99999101831ADDGF108991999999999999999999 0029029070999991918010213004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9-00561+99999101761ADDGF108991999999999999999999 
0029029070999991919010220004+64333+023450FM-12+000599999V0201801N009819999999N0000001N9+00281+99999101751ADDGF108991999999999999999999 0029029070999991920010306004+64333+023450FM-12+000599999V0202001N009819999999N0000001N9+00671+99999101701ADDGF1069919999999999999999990029029070999991901010106004+64333+023450FM-12+000599999V0202701N015919999999N0000001N9-00781+99999102001ADDGF108991999999999999999999 [yinzhengjie@s101 download]$

    [yinzhengjie@s101 download]$ hdfs dfs -mkdir -p /home/yinzhengjie/data
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.1-bin/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
    [yinzhengjie@s101 download]$
    [yinzhengjie@s101 download]$ hdfs dfs -ls -R /
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.1-bin/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
    drwxr-xr-x   - yinzhengjie supergroup          0 2018-07-27 03:44 /home
    drwxr-xr-x   - yinzhengjie supergroup          0 2018-07-27 03:44 /home/yinzhengjie
    drwxr-xr-x   - yinzhengjie supergroup          0 2018-07-27 03:44 /home/yinzhengjie/data
    [yinzhengjie@s101 download]$

    [yinzhengjie@s101 download]$ hdfs dfs -put temp /home/yinzhengjie/data
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.1-bin/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
    [yinzhengjie@s101 download]$
    [yinzhengjie@s101 download]$
    [yinzhengjie@s101 download]$ hdfs dfs -ls -R /
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.1-bin/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
    drwxr-xr-x   - yinzhengjie supergroup          0 2018-07-27 04:50 /home
    drwxr-xr-x   - yinzhengjie supergroup          0 2018-07-27 04:50 /home/yinzhengjie
    drwxr-xr-x   - yinzhengjie supergroup          0 2018-07-27 04:51 /home/yinzhengjie/data
    -rw-r--r--   3 yinzhengjie supergroup       5936 2018-07-27 04:51 /home/yinzhengjie/data/temp
    [yinzhengjie@s101 download]$

II. Deploying the Spark cluster

1>. Create the slaves file

    [yinzhengjie@s101 ~]$ cp /soft/spark/conf/slaves.template /soft/spark/conf/slaves
    [yinzhengjie@s101 ~]$ more /soft/spark/conf/slaves | grep -v ^# | grep -v ^$
    s102
    s103
    s104
    [yinzhengjie@s101 ~]$
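The `grep -v ^# | grep -v ^$` pipeline above recurs whenever a config file needs checking. It can be wrapped in a small function, sketched below. The name `effective_lines` is hypothetical, and the demo runs against a throwaway file that only mimics conf/slaves, not the real one.

```shell
# Hypothetical helper (not part of Spark): print only the effective lines
# of a config file, skipping comment lines and blank lines. Unlike the
# one-liner above, it also skips comments that are indented.
effective_lines() {
  grep -v '^[[:space:]]*#' "$1" | grep -v '^[[:space:]]*$'
}

# Demo on a temporary file whose layout mimics conf/slaves.
demo_file=$(mktemp)
printf '%s\n' '# sample comment' '' 's102' 's103' 's104' > "$demo_file"
effective_lines "$demo_file"
rm -f "$demo_file"
```

The patterns are quoted so that the shell never gets a chance to expand `^#` or `^$` itself.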

2>. Edit the spark-env.sh configuration file

    [yinzhengjie@s101 ~]$ cp /soft/spark/conf/spark-env.sh.template /soft/spark/conf/spark-env.sh
    [yinzhengjie@s101 ~]$
    [yinzhengjie@s101 ~]$ echo export JAVA_HOME=/soft/jdk >> /soft/spark/conf/spark-env.sh
    [yinzhengjie@s101 ~]$ echo SPARK_MASTER_HOST=s101 >> /soft/spark/conf/spark-env.sh
    [yinzhengjie@s101 ~]$ echo SPARK_MASTER_PORT=7077 >> /soft/spark/conf/spark-env.sh
    [yinzhengjie@s101 ~]$
    [yinzhengjie@s101 ~]$ grep -v ^# /soft/spark/conf/spark-env.sh | grep -v ^$
    export JAVA_HOME=/soft/jdk
    SPARK_MASTER_HOST=s101
    SPARK_MASTER_PORT=7077
    [yinzhengjie@s101 ~]$
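Appending with `echo ... >>` works on the first run, but repeating the step leaves duplicate assignments in spark-env.sh. One possible way to make the step repeatable is sketched below. `set_conf` is a hypothetical helper, and the demo writes to a temporary file rather than the real /soft/spark/conf/spark-env.sh.

```shell
# Hypothetical helper: set KEY=VALUE in a file, replacing any existing
# assignment of KEY (with or without a leading "export") instead of
# appending a duplicate. The body runs in a subshell to keep variables local.
set_conf() (
  file=$1
  key=$2
  value=$3
  touch "$file"
  # Keep every line that does not assign KEY; grep exits non-zero when it
  # prints nothing, which is fine here.
  grep -Ev "^(export )?${key}=" "$file" > "$file.tmp" || true
  echo "${key}=${value}" >> "$file.tmp"
  mv "$file.tmp" "$file"
)

# Demo against a temporary file.
conf=$(mktemp)
set_conf "$conf" SPARK_MASTER_HOST s101
set_conf "$conf" SPARK_MASTER_PORT 7077
set_conf "$conf" SPARK_MASTER_PORT 7077   # repeating does not add a duplicate
cat "$conf"
rm -f "$conf"
```

Writing to a temporary file and renaming it means the config is never left half-written if the script is interrupted.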

3>. Distribute the Spark environment from s101 to the other nodes

    [yinzhengjie@s101 ~]$ xrsync.sh /soft/spark
    spark/                      spark-2.1.0-bin-hadoop2.7/
    [yinzhengjie@s101 ~]$ xrsync.sh /soft/spark
    spark/                      spark-2.1.0-bin-hadoop2.7/
    [yinzhengjie@s101 ~]$ xrsync.sh /soft/spark/
    =========== s102 %file ===========
    Command succeeded
    =========== s103 %file ===========
    Command succeeded
    =========== s104 %file ===========
    Command succeeded
    =========== s105 %file ===========
    Command succeeded
    [yinzhengjie@s101 ~]$ xrsync.sh /soft/spark-2.1.0-bin-hadoop2.7/
    =========== s102 %file ===========
    Command succeeded
    =========== s103 %file ===========
    Command succeeded
    =========== s104 %file ===========
    Command succeeded
    =========== s105 %file ===========
    Command succeeded
    [yinzhengjie@s101 ~]$
    [yinzhengjie@s101 ~]$ su root
    Password:
    [root@s101 yinzhengjie]#
    [root@s101 yinzhengjie]# xrsync.sh /etc/profile
    =========== s102 %file ===========
    Command succeeded
    =========== s103 %file ===========
    Command succeeded
    =========== s104 %file ===========
    Command succeeded
    =========== s105 %file ===========
    Command succeeded
    [root@s101 yinzhengjie]#
    [root@s101 yinzhengjie]# exit
    exit
    [yinzhengjie@s101 ~]$

4>. Create core-site.xml and hdfs-site.xml symlinks in the conf/ directory on every Spark node

    [yinzhengjie@s101 ~]$ xcall.sh "ln -s /soft/hadoop/etc/hadoop/core-site.xml /soft/spark/conf/core-site.xml"
    ============= s101 ln -s /soft/hadoop/etc/hadoop/core-site.xml /soft/spark/conf/core-site.xml ============
    Command succeeded
    ============= s102 ln -s /soft/hadoop/etc/hadoop/core-site.xml /soft/spark/conf/core-site.xml ============
    Command succeeded
    ============= s103 ln -s /soft/hadoop/etc/hadoop/core-site.xml /soft/spark/conf/core-site.xml ============
    Command succeeded
    ============= s104 ln -s /soft/hadoop/etc/hadoop/core-site.xml /soft/spark/conf/core-site.xml ============
    Command succeeded
    ============= s105 ln -s /soft/hadoop/etc/hadoop/core-site.xml /soft/spark/conf/core-site.xml ============
    Command succeeded
    [yinzhengjie@s101 ~]$ xcall.sh "ln -s /soft/hadoop/etc/hadoop/hdfs-site.xml /soft/spark/conf/hdfs-site.xml"
    ============= s101 ln -s /soft/hadoop/etc/hadoop/hdfs-site.xml /soft/spark/conf/hdfs-site.xml ============
    Command succeeded
    ============= s102 ln -s /soft/hadoop/etc/hadoop/hdfs-site.xml /soft/spark/conf/hdfs-site.xml ============
    Command succeeded
    ============= s103 ln -s /soft/hadoop/etc/hadoop/hdfs-site.xml /soft/spark/conf/hdfs-site.xml ============
    Command succeeded
    ============= s104 ln -s /soft/hadoop/etc/hadoop/hdfs-site.xml /soft/spark/conf/hdfs-site.xml ============
    Command succeeded
    ============= s105 ln -s /soft/hadoop/etc/hadoop/hdfs-site.xml /soft/spark/conf/hdfs-site.xml ============
    Command succeeded
    [yinzhengjie@s101 ~]$
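A plain `ln -s` fails with "File exists" if the link is already there, so rerunning the step above reports an error on nodes that were already set up. Below is a minimal sketch of a repeatable variant. `link_conf` is a hypothetical helper, and the demo uses a temporary directory tree in place of /soft/hadoop and /soft/spark. It assumes an `ln` that supports `-n`, as the GNU and BSD versions do.

```shell
# Hypothetical helper: create or refresh a symlink, refusing to link to a
# source that does not exist. The body runs in a subshell, so "exit" only
# ends the function call.
link_conf() (
  src=$1
  dest=$2
  if [ ! -e "$src" ]; then
    echo "missing source: $src" >&2
    exit 1
  fi
  # -f replaces an existing link; -n avoids descending into a link to a directory.
  ln -sfn "$src" "$dest"
)

# Demo in a temporary tree standing in for the Hadoop and Spark directories.
work=$(mktemp -d)
mkdir -p "$work/hadoop" "$work/spark/conf"
echo '<configuration/>' > "$work/hadoop/core-site.xml"
link_conf "$work/hadoop/core-site.xml" "$work/spark/conf/core-site.xml"
link_conf "$work/hadoop/core-site.xml" "$work/spark/conf/core-site.xml"   # safe to repeat
ls -l "$work/spark/conf"
rm -rf "$work"
```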

5>. Start the Spark cluster

    [yinzhengjie@s101 ~]$ /soft/spark/sbin/start-all.sh
    starting org.apache.spark.deploy.master.Master, logging to /soft/spark/logs/spark-yinzhengjie-org.apache.spark.deploy.master.Master-1-s101.out
    s102: starting org.apache.spark.deploy.worker.Worker, logging to /soft/spark/logs/spark-yinzhengjie-org.apache.spark.deploy.worker.Worker-1-s102.out
    s104: starting org.apache.spark.deploy.worker.Worker, logging to /soft/spark/logs/spark-yinzhengjie-org.apache.spark.deploy.worker.Worker-1-s104.out
    s103: starting org.apache.spark.deploy.worker.Worker, logging to /soft/spark/logs/spark-yinzhengjie-org.apache.spark.deploy.worker.Worker-1-s103.out
    [yinzhengjie@s101 ~]$
    [yinzhengjie@s101 ~]$ xcall.sh jps
    ============= s101 jps ============
    7766 NameNode
    8070 DFSZKFailoverController
    8890 Master
    8974 Jps
    Command succeeded
    ============= s102 jps ============
    4336 DataNode
    4114 QuorumPeerMain
    4744 Worker
    4218 JournalNode
    4795 Jps
    Command succeeded
    ============= s103 jps ============
    4736 Worker
    4787 Jps
    4230 JournalNode
    4121 QuorumPeerMain
    4347 DataNode
    Command succeeded
    ============= s104 jps ============
    7489 Worker
    7540 Jps
    6983 JournalNode
    7099 DataNode
    6879 QuorumPeerMain
    Command succeeded
    ============= s105 jps ============
    7456 DFSZKFailoverController
    8038 Jps
    7356 NameNode
    Command succeeded
    [yinzhengjie@s101 ~]$
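Reading the jps listings by eye is fine for five nodes, but the check can also be scripted. The sketch below tests whether a given daemon name appears in jps-style output. `has_daemon` is a hypothetical helper, and the demo feeds it the s101 listing shown above as literal text, so it needs no running cluster.

```shell
# Hypothetical helper: read jps-style output ("<pid> <name>" per line) on
# stdin and succeed only if a process with exactly the given name is listed.
has_daemon() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Demo using the s101 listing from the transcript above.
s101_jps='7766 NameNode
8070 DFSZKFailoverController
8890 Master
8974 Jps'

if printf '%s\n' "$s101_jps" | has_daemon Master; then
  echo "Master is listed on s101"
fi
if ! printf '%s\n' "$s101_jps" | has_daemon Worker; then
  echo "no Worker on s101, as expected for the master node"
fi
```

On a live cluster the same function could presumably be fed from `ssh s101 jps`; that usage is an assumption and is not shown in the original article.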

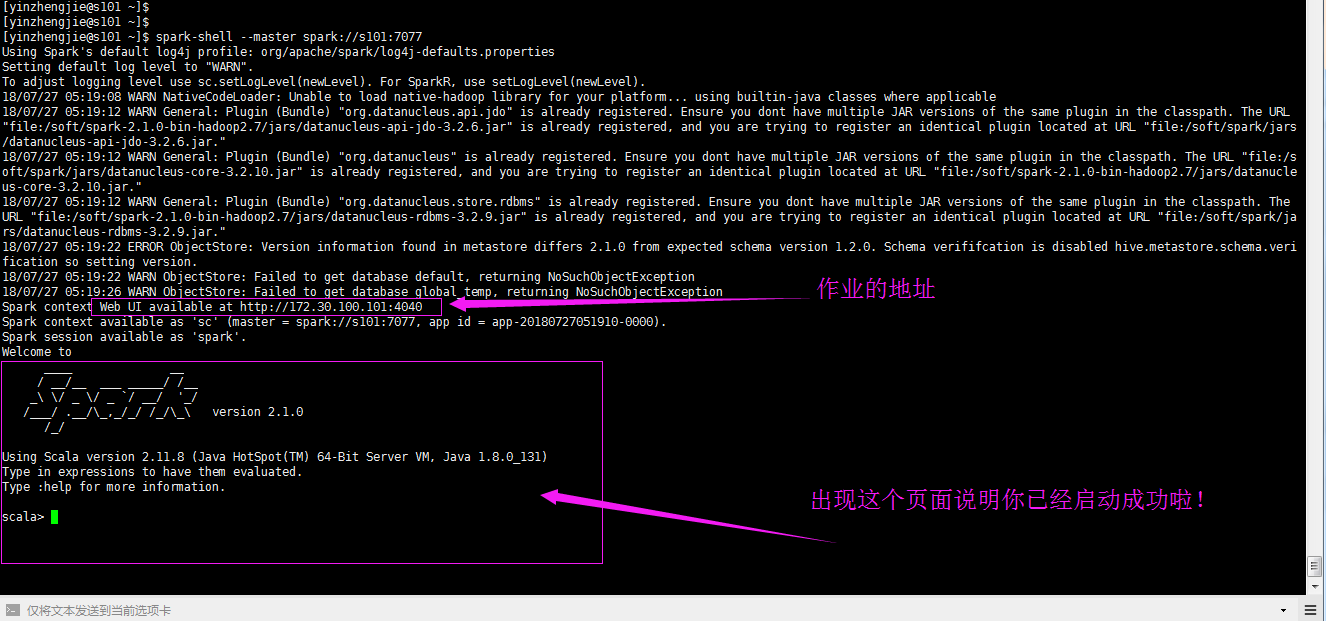

6>. Start spark-shell and connect it to the Spark cluster

    [yinzhengjie@s101 ~]$ spark-shell --master spark://s101:7077
    Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties
    Setting default log level to "WARN".
    To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
    18/07/27 05:19:08 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
    18/07/27 05:19:12 WARN General: Plugin (Bundle) "org.datanucleus.api.jdo" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/soft/spark-2.1.0-bin-hadoop2.7/jars/datanucleus-api-jdo-3.2.6.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/soft/spark/jars/datanucleus-api-jdo-3.2.6.jar."
    18/07/27 05:19:12 WARN General: Plugin (Bundle) "org.datanucleus" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/soft/spark/jars/datanucleus-core-3.2.10.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/soft/spark-2.1.0-bin-hadoop2.7/jars/datanucleus-core-3.2.10.jar."
    18/07/27 05:19:12 WARN General: Plugin (Bundle) "org.datanucleus.store.rdbms" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/soft/spark-2.1.0-bin-hadoop2.7/jars/datanucleus-rdbms-3.2.9.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/soft/spark/jars/datanucleus-rdbms-3.2.9.jar."
    18/07/27 05:19:22 ERROR ObjectStore: Version information found in metastore differs 2.1.0 from expected schema version 1.2.0. Schema verififcation is disabled hive.metastore.schema.verification so setting version.
    18/07/27 05:19:22 WARN ObjectStore: Failed to get database default, returning NoSuchObjectException
    18/07/27 05:19:26 WARN ObjectStore: Failed to get database global_temp, returning NoSuchObjectException
    Spark context Web UI available at http://172.30.100.101:4040
    Spark context available as 'sc' (master = spark://s101:7077, app id = app-20180727051910-0000).
    Spark session available as 'spark'.
    Welcome to
          ____              __
         / __/__  ___ _____/ /__
        _\ \/ _ \/ _ `/ __/  '_/
       /___/ .__/\_,_/_/ /_/\_\   version 2.1.0
          /_/

    Using Scala version 2.11.8 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_131)
    Type in expressions to have them evaluated.
    Type :help for more information.

    scala>

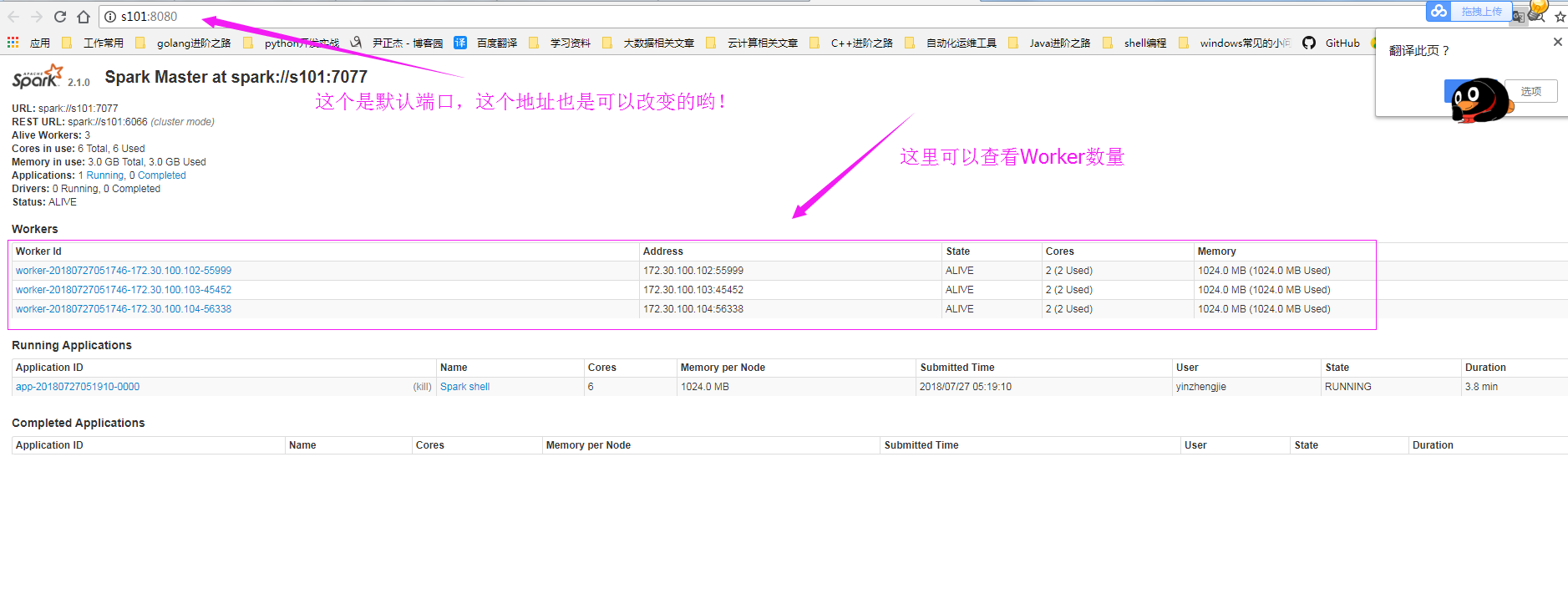

7>. View the web UI

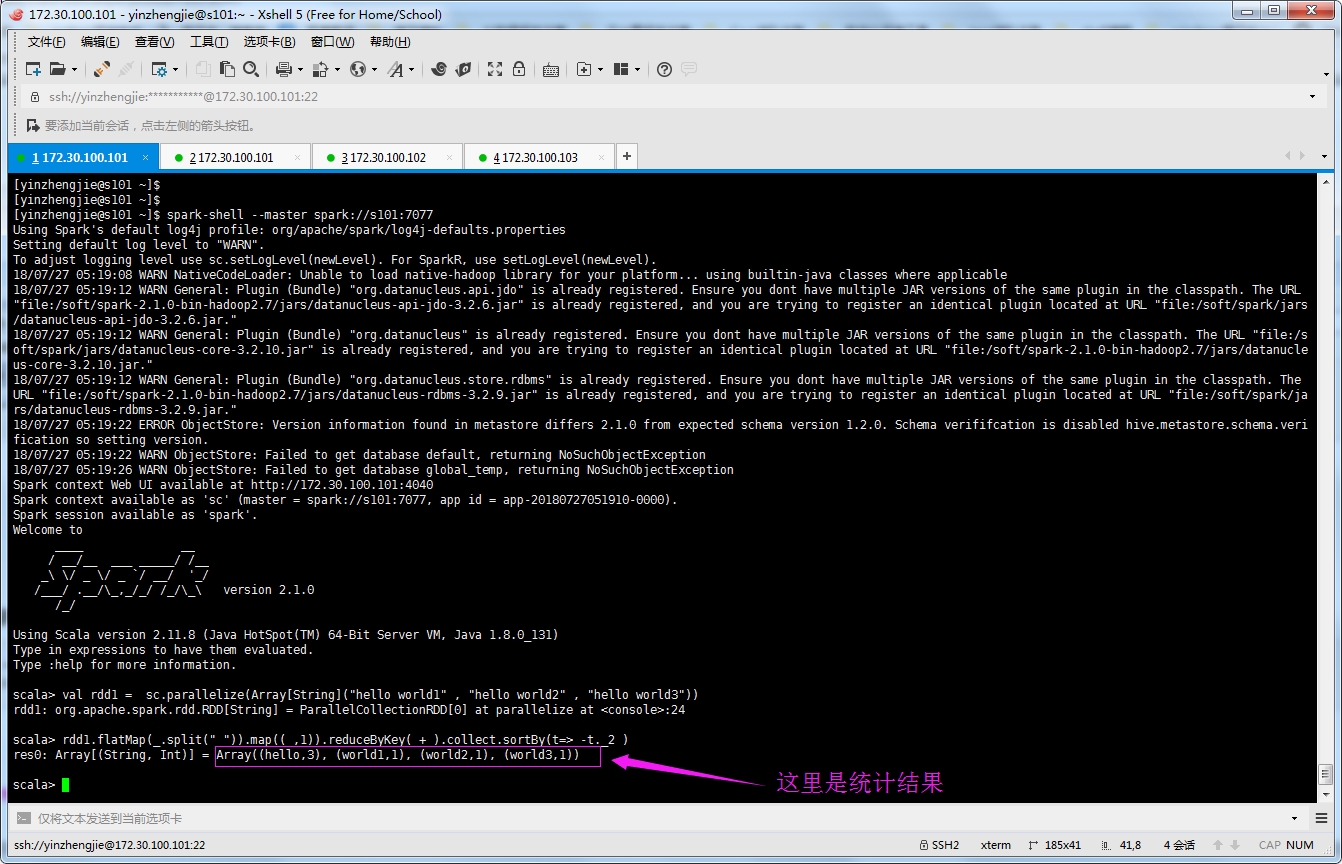

8>. Write a program that implements WordCount on the Spark cluster

    val rdd1 = sc.parallelize(Array[String]("hello world1" , "hello world2" , "hello world3"))
    rdd1.flatMap(_.split(" ")).map((_,1)).reduceByKey(_+_).collect.sortBy(t=> -t._2 )
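To cross-check the counts the Spark job should produce, the same three input strings can be counted locally with standard Unix tools. This is a different technique from the Spark code above (a plain shell pipeline, not an RDD computation), offered only as a quick sanity check. The function name `word_count` is hypothetical.

```shell
# Hypothetical local cross-check: count whitespace-separated words read
# from stdin and print "word count" lines, highest count first.
word_count() {
  tr -s ' ' '\n' \
    | sed '/^$/d' \
    | LC_ALL=C sort \
    | uniq -c \
    | LC_ALL=C sort -k1,1nr -k2,2 \
    | awk '{ print $2, $1 }'
}

# Same three strings as the rdd1 example above.
printf '%s\n' "hello world1" "hello world2" "hello world3" | word_count
```

The pipeline mirrors the stages of the Spark version: `tr` plays the role of `flatMap(_.split(" "))`, `sort | uniq -c` stands in for `map` plus `reduceByKey`, and the final `sort` corresponds to `sortBy`.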

III. Running code on the Spark cluster

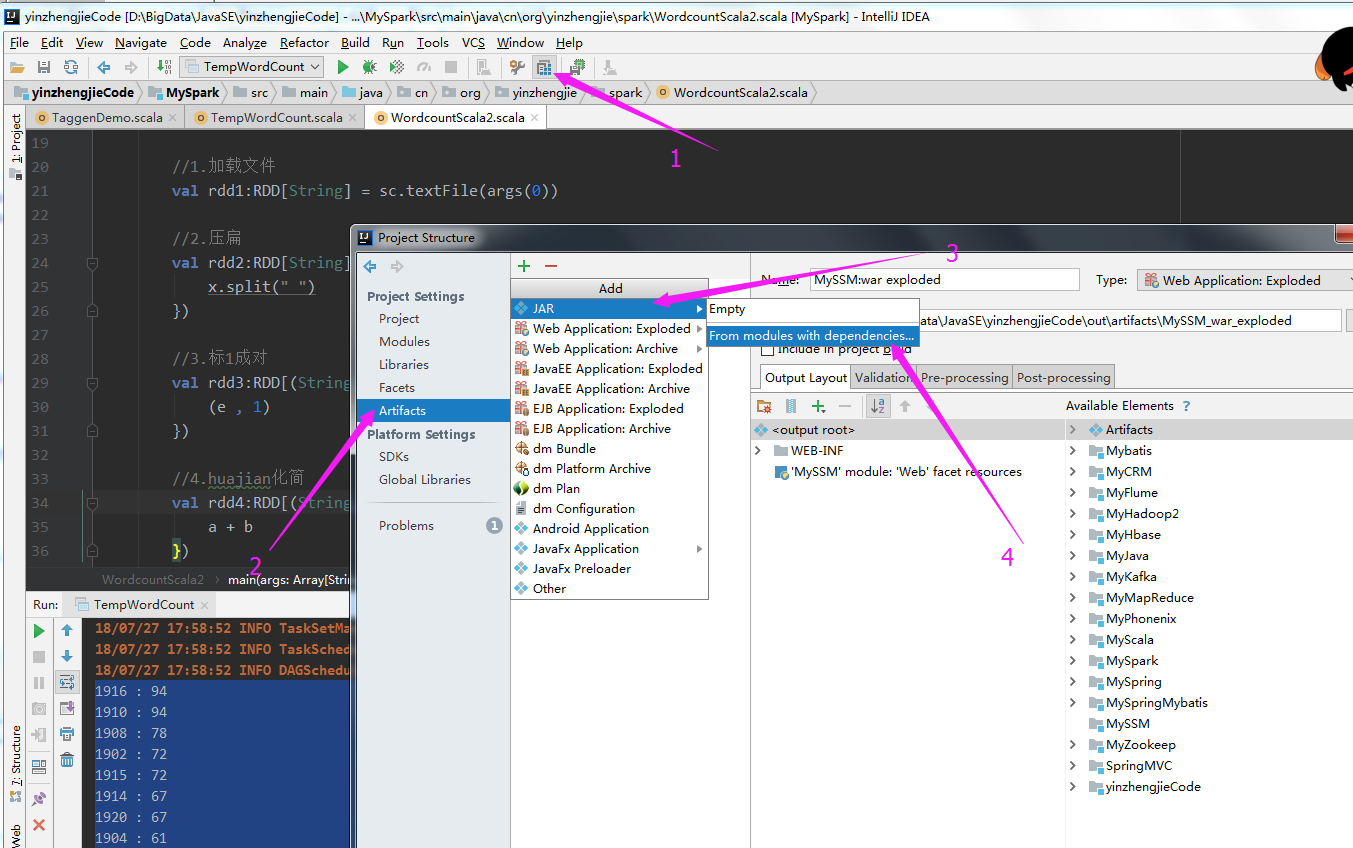

1>. Create JAR from Modules

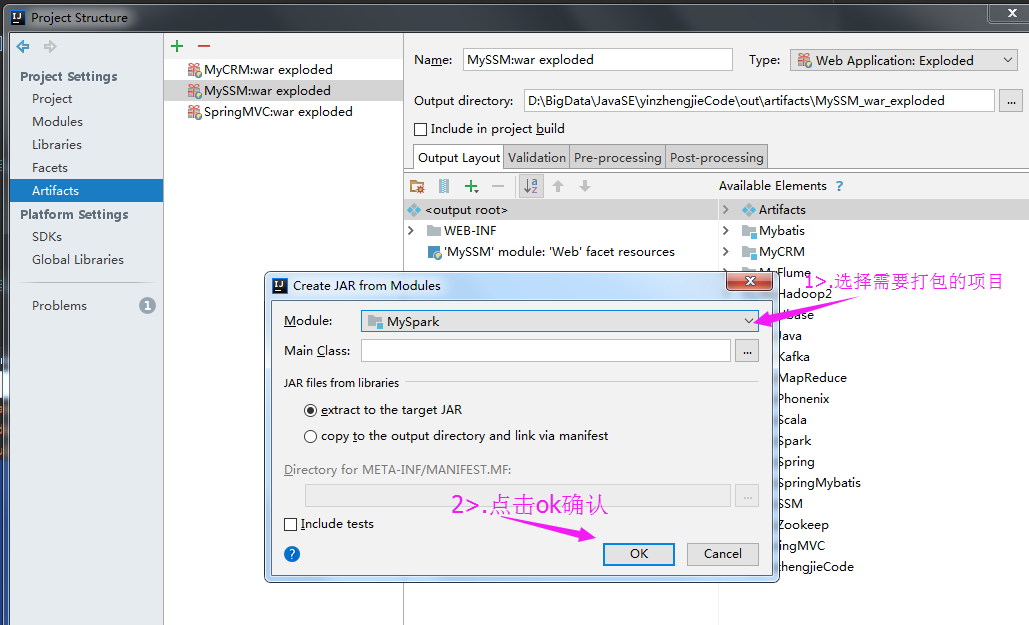

2>. Select the project to package

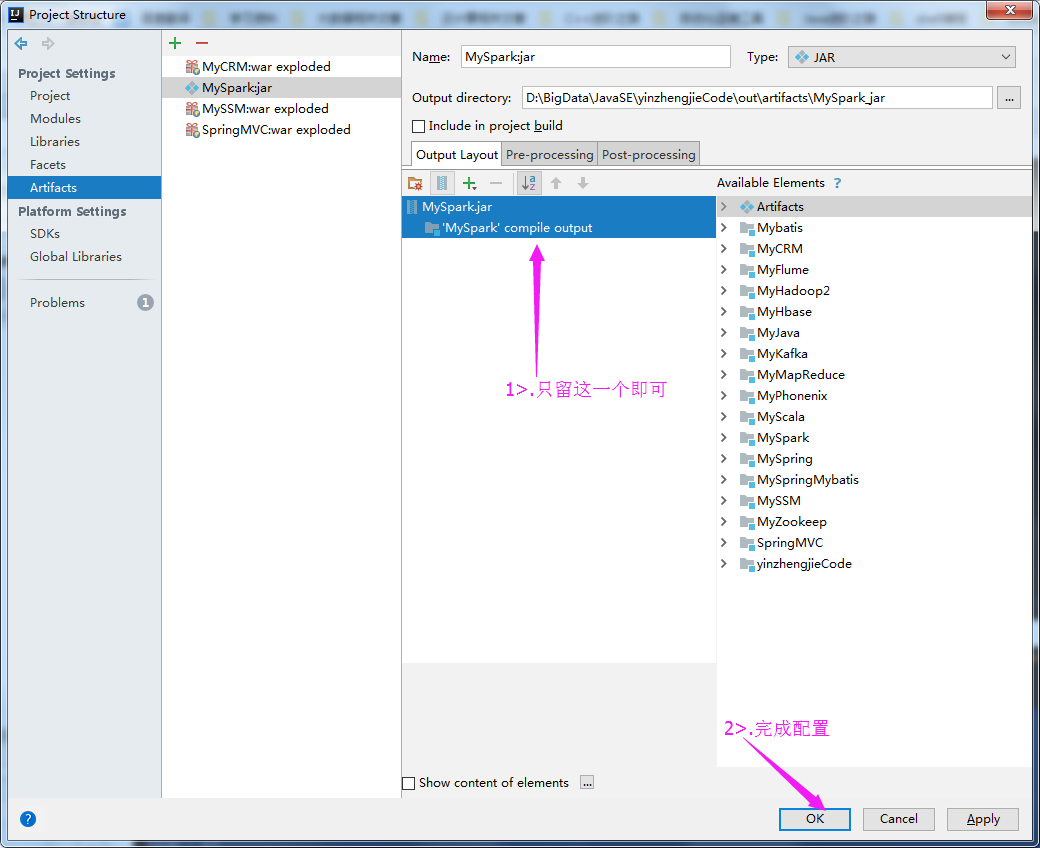

3>. Remove the third-party libraries

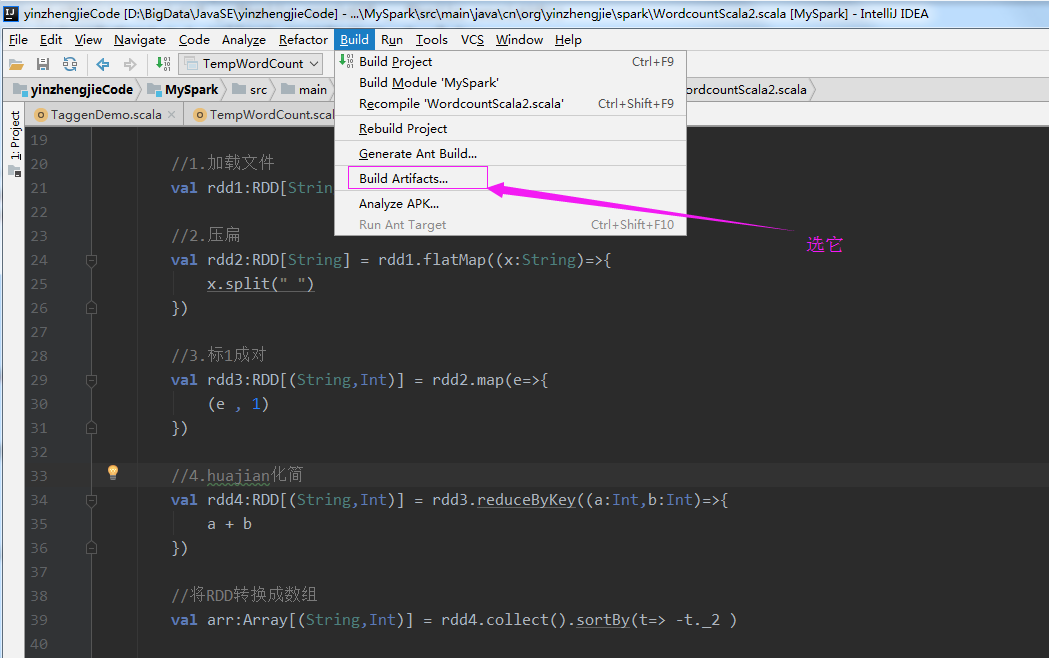

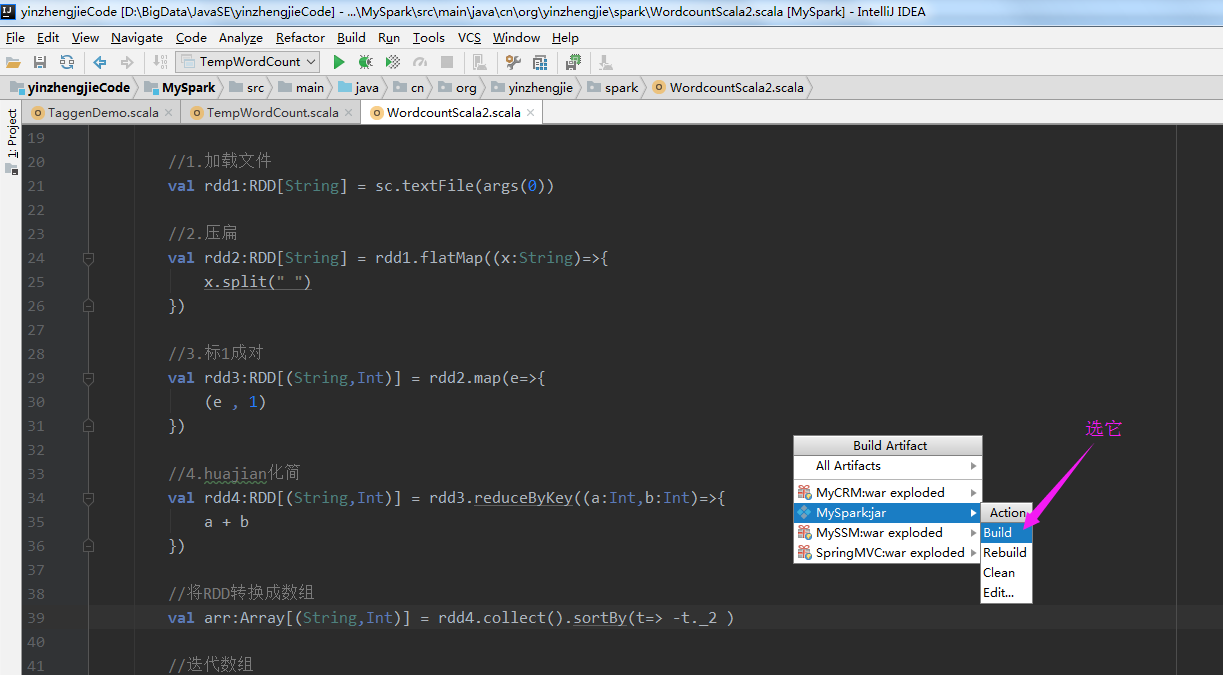

4>. Click Build Artifacts...

5>. Select Build

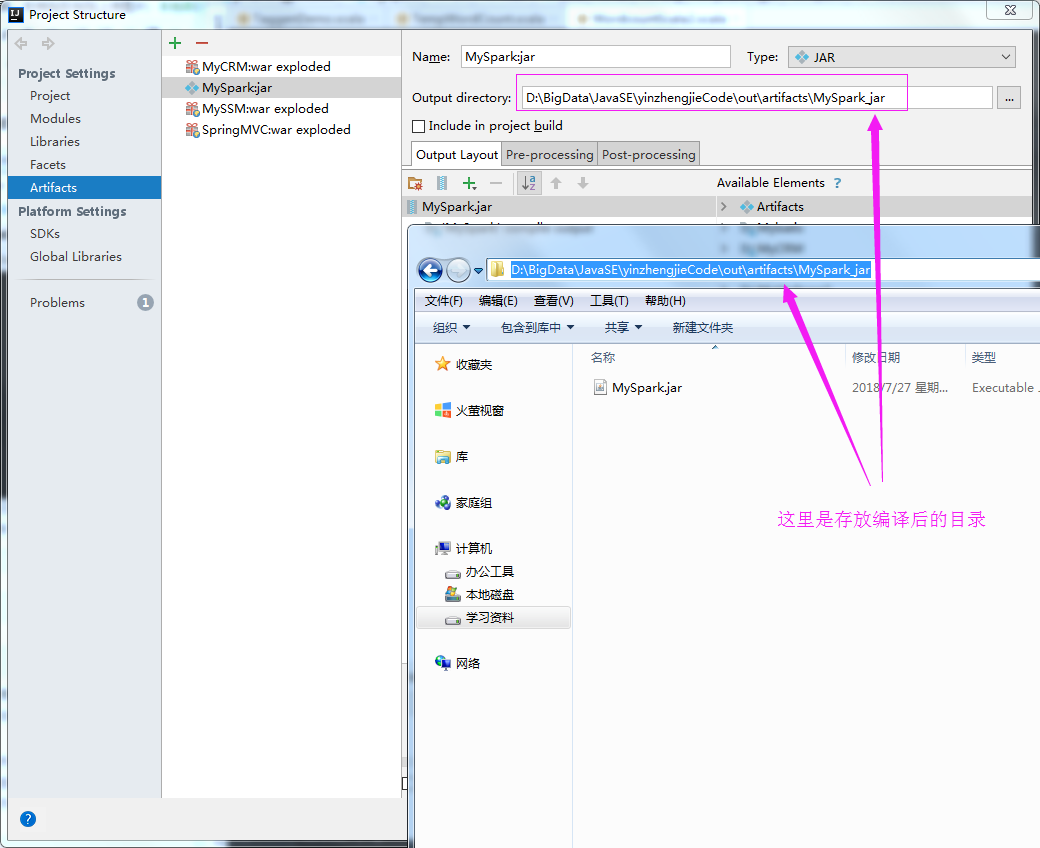

6>. Check the build output

7>. Upload the build output to the server

8>.

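This article comes from cnblogs (博客园), author: Yin Zhengjie (尹正杰). When reposting, please cite the original link: https://www.cnblogs.com/yinzhengjie/p/9379045.html. Personal WeChat: "JasonYin2020" (when adding, please state where you found it and your purpose; paid service).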
