Ceph Reef (18.2.X) CephFS High-Availability Cluster: A Hands-On Case Study

Author: 尹正杰 (Yin Zhengjie)

Copyright notice: original work. Reproduction is not permitted; violations will be pursued legally.

I. CephFS Basics

1. CephFS Overview

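RBD solves the problem of mounting a remote disk, but a single RBD image cannot simply be shared by multiple hosts. What if a piece of data has to be read and written by many clients? This is where CephFS comes in as a file system solution.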

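CephFS is a POSIX-compliant file system that stores its data directly in the Ceph storage cluster. Its access mechanism differs somewhat from those of Ceph Block Devices, Ceph Object Storage (which offers S3 and Swift APIs) and the native library (librados).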

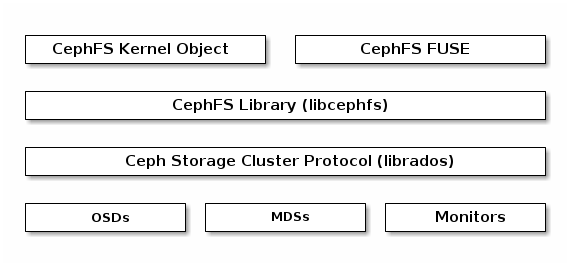

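As the figure above shows, CephFS can be accessed through the kernel module or through FUSE. If the host does not have the ceph kernel module, FUSE is the alternative. Run "modinfo ceph" to check whether the current host has the ceph kernel module.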

[root@ceph141 ~]# modinfo ceph

filename: /lib/modules/5.15.0-119-generic/kernel/fs/ceph/ceph.ko

license: GPL

description: Ceph filesystem for Linux

author: Patience Warnick <patience@newdream.net>

author: Yehuda Sadeh <yehuda@hq.newdream.net>

author: Sage Weil <sage@newdream.net>

alias: fs-ceph

srcversion: 8ABA5D4086D53861D2D72D1

depends: libceph,fscache,netfs

retpoline: Y

intree: Y

name: ceph

vermagic: 5.15.0-119-generic SMP mod_unload modversions

sig_id: PKCS#7

signer: Build time autogenerated kernel key

sig_key: 5F:22:FC:96:FA:F2:9E:F0:76:8D:52:81:4A:3C:6F:F8:61:08:2C:6D

sig_hashalgo: sha512

signature: 76:9E:6E:70:EF:E0:C3:A7:5F:24:C7:C1:2D:EC:24:B9:EE:84:17:64:

F3:18:EC:8F:59:C3:88:64:96:56:1C:FE:97:CE:91:36:C9:BF:98:4B:

A7:F1:63:A5:C8:31:23:5F:12:43:4F:D7:C0:D9:E7:97:72:33:4D:DA:

04:43:60:F9:91:E7:CD:BC:45:30:E4:5A:E0:1B:20:B3:8B:4A:FD:6D:

9F:C6:B8:75:BF:F4:AD:1B:E4:86:FE:85:34:CA:97:DE:17:45:D4:00:

D2:AA:71:3D:18:84:36:D5:33:31:7F:02:8B:1A:26:49:A2:B3:C0:02:

C8:9A:F6:02:7F:B3:82:9C:83:29:3B:F6:ED:E4:BA:BB:0D:F9:E8:27:

04:05:39:29:C8:33:BD:35:8B:77:29:48:89:8F:E9:DD:55:89:3D:CF:

2D:B8:E7:17:8E:20:F6:EE:7D:5B:6D:9A:92:A4:ED:6A:9D:9B:83:1E:

6B:A1:CA:70:28:43:BB:49:88:12:39:65:01:49:2F:0A:4B:D6:9F:26:

EB:D6:E5:FD:35:85:85:E6:CB:E2:87:D1:D3:9D:C8:8F:23:10:DC:D1:

C2:F0:D8:A7:AF:F6:1A:FD:0A:7C:40:1F:40:7B:08:4F:1A:8F:A9:45:

CC:99:57:DB:5E:35:F1:16:49:3A:1B:EF:B4:E6:4B:95:B5:56:A4:D1:

7E:5E:48:10:EE:BC:5C:1A:A7:B0:F1:2E:20:33:5B:55:24:CA:05:A6:

6B:16:20:AE:24:6A:4E:97:1B:81:E0:A1:03:E0:A9:C2:BD:05:36:E6:

09:33:F5:E5:D6:C2:17:CE:28:20:27:59:61:C3:18:06:DB:CC:0F:90:

81:AC:99:26:E1:93:CE:CF:A5:94:56:41:D9:32:C7:6F:FE:F1:8B:9D:

51:4B:82:FC:8B:90:21:B7:96:94:38:4C:DF:EC:0F:85:E5:90:3B:40:

C7:E3:04:7F:D9:F2:81:43:8C:E9:57:18:88:06:B5:3A:F9:E5:A3:01:

52:BD:E7:36:AF:D5:BD:64:16:C2:2A:3D:61:7B:10:09:35:91:C4:5D:

B6:0A:D0:22:2F:8E:A7:E7:48:EE:27:BA:86:E9:BA:96:27:55:E0:F5:

51:36:B7:B0:11:D6:50:6E:85:92:03:DA:95:0E:92:26:A2:7B:5F:AD:

19:C2:A2:CD:71:F3:D3:72:91:8C:79:3D:85:3B:75:C0:AA:81:0F:93:

49:6E:21:F5:38:F5:03:69:17:75:36:04:A8:81:6A:03:2E:7D:0D:10:

81:24:81:4E:78:93:08:8C:1E:CC:BA:5B:47:3A:08:40:A5:CE:4F:3A:

33:DF:A5:1D:4F:8F:0A:62:2A:A3:62:4B

parm: disable_send_metrics:Enable sending perf metrics to ceph cluster (default: on)

[root@ceph141 ~]#
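The choice between the kernel client and ceph-fuse can be scripted around the same `modinfo ceph` check. This is a minimal sketch assuming bash; `pick_mount_method` is a hypothetical name, and its optional first argument (the probe command, `modinfo` by default) exists only so the logic can be tried on a machine without Ceph.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "kernel" if "modinfo ceph" succeeds, otherwise "fuse".
# $1 (optional) overrides the probe command; it defaults to modinfo.
pick_mount_method() {
  local probe="${1:-modinfo}"
  if "$probe" ceph >/dev/null 2>&1; then
    echo kernel
  else
    echo fuse
  fi
}
```

On ceph141 above, `pick_mount_method` would print `kernel`, since `modinfo ceph` finds the module.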

Recommended reading:

https://docs.ceph.com/en/reef/architecture/#ceph-file-system

https://docs.ceph.com/en/reef/cephfs/#ceph-file-system

2. CephFS Architecture

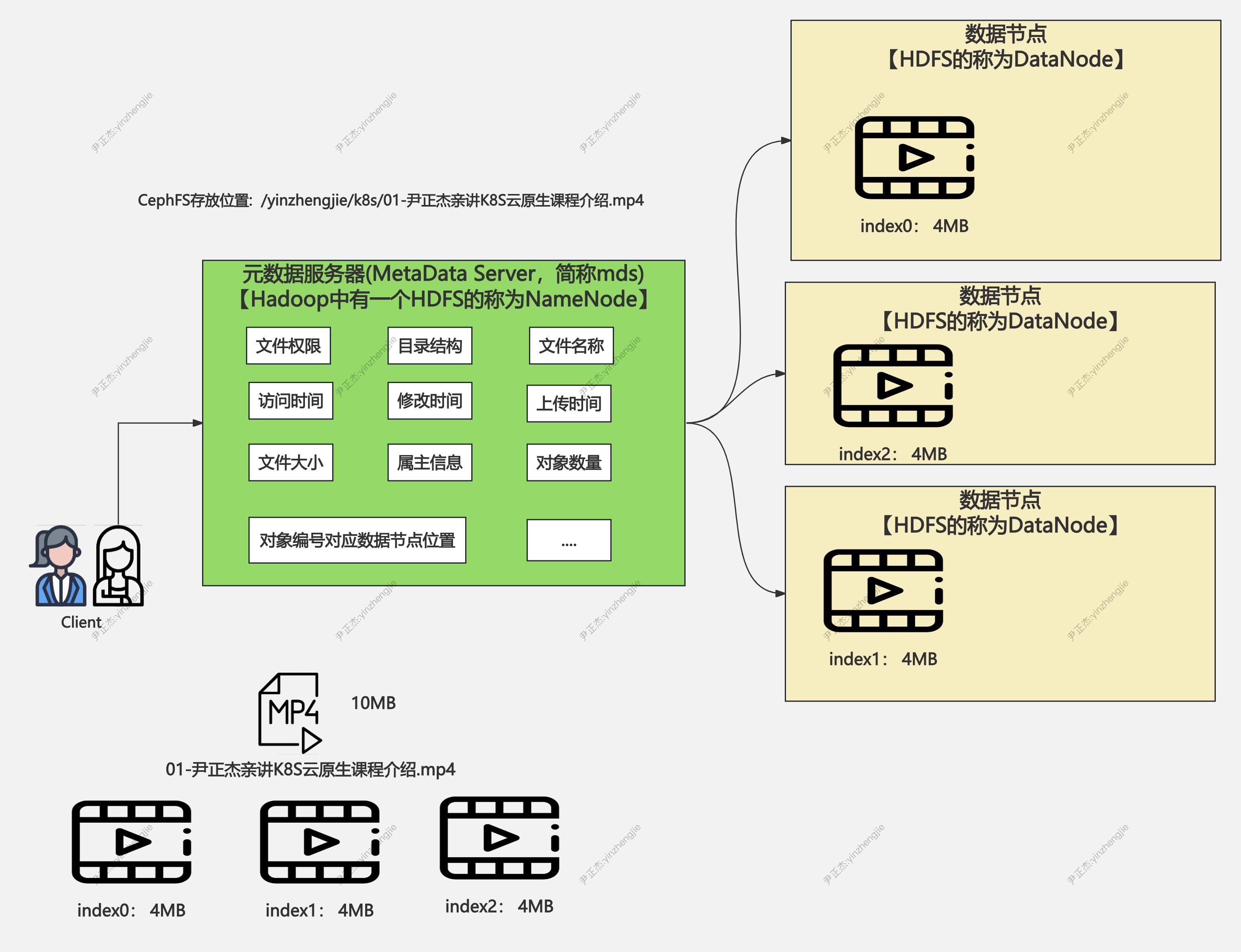

CephFS requires at least one running metadata server (MDS) daemon (ceph-mds), which manages the metadata of the files stored in CephFS.

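Despite its name, the MDS does not keep metadata on its own local disk: the metadata is persisted as objects in the metadata pool in RADOS. The MDS caches it in memory and serves it to clients, acting as the file system interface on top of the RADOS cluster.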

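When a client accesses a file, it first contacts the MDS, which keeps the metadata index in memory, to find out where the file data lives; the client then reads and writes the data directly on the data nodes (OSDs). This is similar to HDFS, where clients get metadata from the NameNode and data from the DataNodes.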
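One way to see that the metadata really lives in RADOS is to list the objects in the file system's metadata pool. The sketch below assumes the `ceph fs ls` output format shown later in this article; `fs_metadata_pool` is a hypothetical helper that extracts the metadata pool name of a given file system from that output.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "ceph fs ls" output on stdin and print the
# metadata pool of the file system named in $1.
fs_metadata_pool() {
  local fs_name="$1"
  awk -v want="name: ${fs_name}," '
    index($0, want) == 1 {
      # Line format: name: X, metadata pool: P, data pools: [D ]
      sub(/.*metadata pool: /, "")
      sub(/,.*/, "")
      print
    }'
}
```

Usage, on a node with admin credentials: `pool="$(ceph fs ls | fs_metadata_pool yinzhengjie-cephfs)"; rados -p "$pool" ls | head`. Once an MDS is running, this should return metadata objects stored in RADOS rather than on the MDS host.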

3. CephFS vs. NFS

Compared with NFS, CephFS has the following advantages:

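- 1. Redundancy at the storage layer: the underlying RADOS provides data redundancy, so there is no single point of failure like a single NFS server;

- 2. The underlying RADOS consists of N storage nodes, so I/O is spread across them and throughput is high;

- 3. For the same reason (RADOS consists of N storage nodes), Ceph scales out very well.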

II. CephFS with One Active and One Standby MDS

Recommended reading:

https://docs.ceph.com/en/reef/cephfs/createfs/

1. Create two pools, one for the CephFS metadata and one for the file data

[root@ceph141 ~]# ceph osd pool create cephfs_data

pool 'cephfs_data' created

[root@ceph141 ~]#

[root@ceph141 ~]# ceph osd pool create cephfs_metadata

pool 'cephfs_metadata' created

[root@ceph141 ~]#

2. Create a file system named "yinzhengjie-cephfs"

[root@ceph141 ~]# ceph fs new yinzhengjie-cephfs cephfs_metadata cephfs_data

Pool 'cephfs_data' (id '6') has pg autoscale mode 'on' but is not marked as bulk.

Consider setting the flag by running

# ceph osd pool set cephfs_data bulk true

new fs with metadata pool 7 and data pool 6

[root@ceph141 ~]#
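The pool creation and `ceph fs new` steps can be bundled into one function. This is a minimal sketch assuming bash; `create_cephfs` is a hypothetical name, and setting `CEPH=echo` turns it into a dry run that only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch: create the metadata pool, the data pool and the file system.
# Usage: create_cephfs FS_NAME METADATA_POOL DATA_POOL
# CEPH defaults to the real ceph CLI; CEPH=echo prints the commands instead.
create_cephfs() {
  local fs_name="$1" meta_pool="$2" data_pool="$3"
  local ceph_cmd="${CEPH:-ceph}"
  "$ceph_cmd" osd pool create "$meta_pool" || return
  "$ceph_cmd" osd pool create "$data_pool" || return
  # "ceph fs new" takes the metadata pool first, then the data pool.
  "$ceph_cmd" fs new "$fs_name" "$meta_pool" "$data_pool"
}
```

For example, `CEPH=echo create_cephfs yinzhengjie-cephfs cephfs_metadata cephfs_data` prints the three commands used above. On cephadm-managed clusters, `ceph fs volume create <name>` can also create the pools (and, via the orchestrator, MDS daemons) in a single step; check the Reef documentation for its options.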

3. Check the file system and its pools

[root@ceph141 ~]# ceph fs ls

name: yinzhengjie-cephfs, metadata pool: cephfs_metadata, data pools: [cephfs_data ]

[root@ceph141 ~]#

[root@ceph141 ~]# ceph mds stat

yinzhengjie-cephfs:0

[root@ceph141 ~]#

[root@ceph141 ~]# ceph -s

cluster:

id: 3cb12fba-5f6e-11ef-b412-9d303a22b70f

health: HEALTH_ERR

1 filesystem is offline

1 filesystem is online with fewer MDS than max_mds

2 pool(s) do not have an application enabled

services:

mon: 3 daemons, quorum ceph141,ceph142,ceph143 (age 23h)

mgr: ceph141.cwgrgj(active, since 27h), standbys: ceph142.ymuzfe

mds: 0/0 daemons up

osd: 7 osds: 7 up (since 23h), 7 in (since 22h)

data:

volumes: 1/1 healthy

pools: 6 pools, 129 pgs

objects: 50 objects, 22 MiB

usage: 553 MiB used, 3.3 TiB / 3.3 TiB avail

pgs: 129 active+clean

[root@ceph141 ~]#

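Tip:

- 1. As this step shows, the file system is not usable yet and the cluster reports an error (HEALTH_ERR): "1 filesystem is offline", because no MDS daemon is running yet ("mds: 0/0 daemons up"). The next steps deploy MDS daemons to fix this.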

4. Deploy MDS daemons for the file system

[root@ceph141 ~]# ceph orch apply mds yinzhengjie-cephfs

Scheduled mds.yinzhengjie-cephfs update...

[root@ceph141 ~]#
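`ceph orch apply mds` also accepts a placement, so the number and location of MDS daemons can be declared up front instead of being added one by one with `ceph orch daemon add`. The sketch assumes the `--placement="<count> <host> ..."` form from the cephadm MDS documentation; the function name is hypothetical and `CEPH=echo` makes it a dry run. A possible, unconfirmed explanation for the ceph143 MDS that "disappears" later in this article: cephadm reconciles the `mds.yinzhengjie-cephfs` service towards its placement, so daemons added by hand beyond that placement may be removed again.

```shell
#!/usr/bin/env bash
# Sketch: deploy MDS daemons for a file system with an explicit placement.
# Usage: apply_mds_placement FS_NAME HOST...   (daemon count = number of hosts)
# CEPH defaults to the real ceph CLI; CEPH=echo prints the command instead.
apply_mds_placement() {
  local fs_name="$1"
  shift
  local ceph_cmd="${CEPH:-ceph}"
  # After the shift, $# is the number of hosts and $* the space-joined host list.
  "$ceph_cmd" orch apply mds "$fs_name" --placement="$# $*"
}
```

For this three-node lab, `apply_mds_placement yinzhengjie-cephfs ceph141 ceph142 ceph143` would request one MDS per host.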

5. Add more MDS daemons

[root@ceph141 ~]# ceph orch daemon add mds yinzhengjie-cephfs ceph143 # add the first MDS

Deployed mds.yinzhengjie-cephfs.ceph143.ykwami on host 'ceph143'

[root@ceph141 ~]#

[root@ceph141 ~]# ceph mds stat

yinzhengjie-cephfs:1 {0=yinzhengjie-cephfs.ceph142.xhqpxn=up:active} 1 up:standby

[root@ceph141 ~]#

[root@ceph141 ~]#

[root@ceph141 ~]# ceph orch daemon add mds yinzhengjie-cephfs ceph141 # add the second MDS

Deployed mds.yinzhengjie-cephfs.ceph141.fvbkym on host 'ceph141'

[root@ceph141 ~]#

[root@ceph141 ~]# ceph mds stat

yinzhengjie-cephfs:1 {0=yinzhengjie-cephfs.ceph142.xhqpxn=up:active} 2 up:standby

[root@ceph141 ~]#
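The one-line `ceph mds stat` output is convenient for scripted checks. The two helpers below are hypothetical and assume the format shown above (`{rank=daemon=up:active}` followed by `<N> up:standby`); they count active ranks and standby daemons.

```shell
#!/usr/bin/env bash
# Hypothetical helpers that read one line of "ceph mds stat" output on stdin.

# Number of ranks reported as up:active.
mds_active_count() {
  grep -o 'up:active' | wc -l | tr -d ' '
}

# Number of standby daemons, taken from the "<N> up:standby" part (0 if absent).
mds_standby_count() {
  awk '{ for (i = 2; i <= NF; i++) if ($i == "up:standby") n += $(i - 1) }
       END { print n + 0 }'
}
```

With the last output above, `ceph mds stat | mds_standby_count` would print `2`.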

6. View the CephFS details

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs # the active MDS is currently on ceph141, the standby on ceph142

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 12 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 96.0k 1076G

cephfs_data data 0 1076G

STANDBY MDS

yinzhengjie-cephfs.ceph142.vpzcat

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

7. Check the cluster status again

[root@ceph141 ~]# ceph -s

cluster:

id: 3cb12fba-5f6e-11ef-b412-9d303a22b70f

health: HEALTH_WARN

2 pool(s) do not have an application enabled

services:

mon: 3 daemons, quorum ceph141,ceph142,ceph143 (age 24h)

mgr: ceph141.cwgrgj(active, since 27h), standbys: ceph142.ymuzfe

mds: 1/1 daemons up, 1 standby # note: one active, one standby

osd: 7 osds: 7 up (since 23h), 7 in (since 22h)

data:

volumes: 1/1 healthy

pools: 6 pools, 129 pgs

objects: 72 objects, 22 MiB

usage: 553 MiB used, 3.3 TiB / 3.3 TiB avail

pgs: 129 active+clean

[root@ceph141 ~]#

8. Verifying high availability of the one-active/one-standby setup

8.1 View the CephFS details

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs # the active MDS is currently on ceph141, the standby on ceph142

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 12 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 96.0k 1076G

cephfs_data data 0 1076G

STANDBY MDS

yinzhengjie-cephfs.ceph142.vpzcat

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

8.2 Power off ceph141

[root@ceph141 ~]# init 0

8.3 Check the CephFS status

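[root@ceph143 ~]# ceph fs status yinzhengjie-cephfs # ceph142 has taken over. [Before the takeover completes, the file system may stall for a while (about 30s here) while the monitors detect the failure and the standby replays the MDS journal from the metadata pool; no file data has to be migrated.]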

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph142.vpzcat Reqs: 0 /s 10 13 12 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 64.0k 1614G

cephfs_data data 0 1076G

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph143 ~]#
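To measure the takeover pause instead of estimating it, you can poll until rank 0 reports `up:active` again. The loop below is a sketch under these assumptions: it greps `ceph mds stat` for the `0=<daemon>=up:active` pattern seen in this article, the function name and the `CEPH` override are hypothetical, and it gives up after a timeout. The pause is mostly the time the monitors wait before declaring the active MDS failed (governed by `mds_beacon_grace`), plus the standby's journal replay.

```shell
#!/usr/bin/env bash
# Sketch: poll "ceph mds stat" until rank 0 is reported as up:active and
# print how long that took. Returns 1 if TIMEOUT seconds pass first.
# Usage: wait_rank0_active [TIMEOUT_SECONDS] [INTERVAL_SECONDS]
# CEPH defaults to the real ceph CLI and can point at a stub for testing.
wait_rank0_active() {
  local timeout="${1:-120}" interval="${2:-1}"
  local ceph_cmd="${CEPH:-ceph}"
  local start=$SECONDS
  while (( SECONDS - start < timeout )); do
    # Matches e.g. "{0=yinzhengjie-cephfs.ceph142.vpzcat=up:active"
    if "$ceph_cmd" mds stat 2>/dev/null | grep -q '[{,]0=[^=,}]*=up:active'; then
      echo "rank 0 active after $(( SECONDS - start ))s"
      return 0
    fi
    sleep "$interval"
  done
  echo "timed out after ${timeout}s" >&2
  return 1
}
```

Run it on a surviving node right after powering off the active MDS host. If the pause matters, `ceph fs set yinzhengjie-cephfs allow_standby_replay true` keeps a standby warm by following the active MDS journal, which typically shortens the takeover.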

III. Configuring CephFS with Multiple Active MDS Daemons and One Standby

1 Status before the change

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph142.vpzcat Reqs: 0 /s 10 13 12 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 105k 1076G

cephfs_data data 0 1076G

STANDBY MDS

yinzhengjie-cephfs.ceph141.fvbkym

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

2 Change the number of active MDS daemons

[root@ceph141 ~]# ceph fs get yinzhengjie-cephfs | grep max_mds # the default is 1, i.e. only one MDS serves clients at a time

max_mds 1

[root@ceph141 ~]#

[root@ceph141 ~]# ceph fs set yinzhengjie-cephfs max_mds 2 # let 2 MDS daemons serve clients at the same time

[root@ceph141 ~]#

[root@ceph141 ~]# ceph fs get yinzhengjie-cephfs | grep max_mds

max_mds 2

[root@ceph141 ~]#

3 Check CephFS again

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph142.vpzcat Reqs: 0 /s 10 13 12 0

1 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 11 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 177k 1076G

cephfs_data data 0 1076G

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

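Tip:

Now ceph142 and ceph141 both serve clients and there is no standby left. If either of them fails, nobody can take over its rank, so the file system becomes degraded and the part of it served by that rank becomes unavailable.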

4 Add a standby MDS

[root@ceph141 ~]# ceph orch daemon add mds yinzhengjie-cephfs ceph143

Deployed mds.yinzhengjie-cephfs.ceph143.rsarvw on host 'ceph143'

[root@ceph141 ~]#

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph142.vpzcat Reqs: 0 /s 10 13 12 0

1 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 11 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 177k 1076G

cephfs_data data 0 1076G

STANDBY MDS

yinzhengjie-cephfs.ceph143.rsarvw

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

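Tip:

Now, if either of the active MDS daemons (ceph142 or ceph141) fails, the standby on ceph143 takes over after a short detection delay, keeping the file system available.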

5 Verify that it works

5.1 Shut down one of the active MDS nodes

[root@ceph142 ~]# init 0

5.2 Check whether CephFS is still working

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 1 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active(laggy) yinzhengjie-cephfs.ceph142.vpzcat 0 0 0 0

1 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 11 1

POOL TYPE USED AVAIL

cephfs_metadata metadata 121k 1614G

cephfs_data data 0 1076G

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

[root@ceph141 ~]# ceph -s

cluster:

id: 3cb12fba-5f6e-11ef-b412-9d303a22b70f

health: HEALTH_WARN

insufficient standby MDS daemons available

1/3 mons down, quorum ceph141,ceph143

3 osds down

1 host (3 osds) down

Degraded data redundancy: 91/273 objects degraded (33.333%), 37 pgs degraded, 129 pgs undersized

2 pool(s) do not have an application enabled

services:

mon: 3 daemons, quorum ceph141,ceph143 (age 2m), out of quorum: ceph142

mgr: ceph141.cwgrgj(active, since 107s)

mds: 2/2 daemons up

osd: 7 osds: 4 up (since 2m), 7 in (since 22h)

data:

volumes: 1/1 healthy

pools: 6 pools, 129 pgs

objects: 91 objects, 22 MiB

usage: 258 MiB used, 1.6 TiB / 1.6 TiB avail

pgs: 91/273 objects degraded (33.333%)

92 active+undersized

37 active+undersized+degraded

[root@ceph141 ~]#

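Tip:

The standby MDS on ceph143 had disappeared for unknown reasons, so nobody could take over from ceph142; its rank therefore stays in the map and is marked "laggy".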
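To spot ranks in this "laggy" state from a script, you can look for `laggy` in the `ceph fs status` output. The helper below is hypothetical and assumes whitespace-separated columns as in the listings in this article (the table layout may differ between releases); on each line mentioning `laggy`, it prints the field that starts with the file system name, i.e. the MDS daemon name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "ceph fs status FS" output on stdin and print the
# daemon names of MDS ranks whose line contains "laggy".
laggy_mds() {
  local fs_name="$1"
  awk -v prefix="${fs_name}." '
    /laggy/ {
      for (i = 1; i <= NF; i++)
        if (index($i, prefix) == 1) print $i
    }'
}
```

Usage: `ceph fs status yinzhengjie-cephfs | laggy_mds yinzhengjie-cephfs`, which for the output above would print `yinzhengjie-cephfs.ceph142.vpzcat`.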

5.3 To fix the problem quickly, redeploy the MDS daemon on ceph143

[root@ceph141 ~]# ceph orch daemon add mds yinzhengjie-cephfs ceph143

Deployed mds.yinzhengjie-cephfs.ceph143.vpvgvx on host 'ceph143'

[root@ceph141 ~]#

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 resolve yinzhengjie-cephfs.ceph143.vpvgvx 0 0 0 0

1 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 11 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 121k 1614G

cephfs_data data 0 1076G

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 1 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph143.vpvgvx Reqs: 0 /s 10 13 12 0

1 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 11 1

POOL TYPE USED AVAIL

cephfs_metadata metadata 121k 1614G

cephfs_data data 0 1076G

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

[root@ceph141 ~]#

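Tip:

After the new daemon starts, rank 0 moves from "resolve" (the recovery phase in which the ranks of a multi-active file system settle uncommitted inter-MDS operations) to "active": the standby MDS has taken over the rank.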

5.4 Bring ceph142 back up and see whether it preempts

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph143.vpvgvx Reqs: 0 /s 10 13 12 0

1 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 11 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 192k 1614G

cephfs_data data 0 1076G

STANDBY MDS

yinzhengjie-cephfs.ceph142.vpzcat

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

Tip:

As you can see, ceph142 comes back as a standby MDS and does not take back its previous role.
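If you do want a preferred placement, Ceph has a per-daemon file system affinity setting, `mds_join_fs`. According to the CephFS standby documentation, the monitors prefer standbys whose `mds_join_fs` matches the file system and may also replace an active MDS with a standby of stronger affinity. This is a sketch only; it assumes cephadm daemon names as shown above (e.g. `yinzhengjie-cephfs.ceph142.vpzcat`), the function name is hypothetical, and the behaviour should be verified on your release before relying on it.

```shell
#!/usr/bin/env bash
# Sketch: give one or more MDS daemons affinity for a file system.
# Usage: set_mds_affinity FS_NAME DAEMON_NAME...
# CEPH defaults to the real ceph CLI; CEPH=echo prints the commands instead.
set_mds_affinity() {
  local fs_name="$1" daemon
  shift
  local ceph_cmd="${CEPH:-ceph}"
  for daemon in "$@"; do
    "$ceph_cmd" config set "mds.${daemon}" mds_join_fs "$fs_name" || return
  done
}
```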

5.5 Some time later, however, the MDS on ceph143 disappeared again for no apparent reason, and ceph142 took over as expected.

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph142.vpzcat Reqs: 0 /s 0 3 2 0

1 active yinzhengjie-cephfs.ceph141.fvbkym Reqs: 0 /s 10 13 11 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 192k 1076G

cephfs_data data 0 1076G

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

[root@ceph141 ~]# ceph -s

cluster:

id: 3cb12fba-5f6e-11ef-b412-9d303a22b70f

health: HEALTH_WARN

insufficient standby MDS daemons available # the MDS on ceph143 died for unknown reasons, so there is no standby MDS, hence this warning

clock skew detected on mon.ceph142

2 pool(s) do not have an application enabled

services:

mon: 3 daemons, quorum ceph141,ceph142,ceph143 (age 2m)

mgr: ceph141.cwgrgj(active, since 8m), standbys: ceph142.ymuzfe

mds: 2/2 daemons up

osd: 7 osds: 7 up (since 2m), 7 in (since 22h)

data:

volumes: 1/1 healthy

pools: 6 pools, 129 pgs

objects: 91 objects, 22 MiB

usage: 394 MiB used, 3.3 TiB / 3.3 TiB avail

pgs: 129 active+clean

[root@ceph141 ~]#
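The `insufficient standby MDS daemons available` warning compares the number of standbys with the file system's `standby_count_wanted` setting, which is normally 1. A rough rule is therefore: daemons needed = `max_mds` + `standby_count_wanted`. The function below only does that arithmetic; its name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: number of MDS daemons needed so that every active rank
# is filled and the wanted number of standbys is still available.
# Usage: required_mds_daemons MAX_MDS [STANDBY_COUNT_WANTED]
required_mds_daemons() {
  local max_mds="$1" standby_wanted="${2:-1}"
  echo $(( max_mds + standby_wanted ))
}
```

With `max_mds 2` as configured here, `required_mds_daemons 2` gives 3, which is why the warning appears as soon as one of the three daemons is gone. In a lab you can lower the expectation with `ceph fs set yinzhengjie-cephfs standby_count_wanted 0`, at the cost of losing the warning.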

IV. CephFS Clients: Mounting with the Kernel Module

1. Check that the cluster is working

[root@ceph141 ~]# ceph fs ls

name: yinzhengjie-cephfs, metadata pool: cephfs_metadata, data pools: [cephfs_data ]

[root@ceph141 ~]#

[root@ceph141 ~]#

[root@ceph141 ~]# ceph fs status yinzhengjie-cephfs

yinzhengjie-cephfs - 0 clients

================

RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS

0 active yinzhengjie-cephfs.ceph142.vpzcat Reqs: 0 /s 10 13 12 0

POOL TYPE USED AVAIL

cephfs_metadata metadata 192k 1076G

cephfs_data data 0 1076G

STANDBY MDS

yinzhengjie-cephfs.ceph141.fvbkym

MDS version: ceph version 18.2.4 (e7ad5345525c7aa95470c26863873b581076945d) reef (stable)

[root@ceph141 ~]#

[root@ceph141 ~]# ceph -s

cluster:

id: 3cb12fba-5f6e-11ef-b412-9d303a22b70f

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph141,ceph142,ceph143 (age 61m)

mgr: ceph141.cwgrgj(active, since 68m), standbys: ceph142.ymuzfe

mds: 1/1 daemons up, 1 standby

osd: 7 osds: 7 up (since 61m), 7 in (since 23h)

data:

volumes: 1/1 healthy

pools: 3 pools, 65 pgs

objects: 43 objects, 455 KiB

usage: 374 MiB used, 3.3 TiB / 3.3 TiB avail

pgs: 65 active+clean

[root@ceph141 ~]#

2. On the admin node, create a user and export its keyring and key file

2.1 Create the user and grant permissions

[root@ceph141 ~]# ceph auth add client.yinzhengjiefs mon 'allow r' mds 'allow rw' osd 'allow rwx'

added key for client.yinzhengjiefs

[root@ceph141 ~]#

[root@ceph141 ~]# ceph auth get client.yinzhengjiefs

[client.yinzhengjiefs]

key = AQAF+8ZmIc85CRAABc/93tPRlyJFamvBR6oCOw==

caps mds = "allow rw"

caps mon = "allow r"

caps osd = "allow rwx"

[root@ceph141 ~]#
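The caps above (`osd 'allow rwx'` without a pool restriction) let this user read and write every pool in the cluster. A narrower alternative is `ceph fs authorize`, which generates caps scoped to one file system and path. A minimal sketch follows; the wrapper name is hypothetical, `CEPH=echo` prints the command instead of running it, and since client.yinzhengjiefs already exists, check how your release treats existing caps (or use a new client name).

```shell
#!/usr/bin/env bash
# Sketch: create a client whose caps are limited to one file system and path.
# Usage: authorize_fs_client FS_NAME CLIENT_ID [PATH] [PERMS]
# CEPH defaults to the real ceph CLI; CEPH=echo prints the command instead.
authorize_fs_client() {
  local fs_name="$1" client_id="$2" path="${3:-/}" perms="${4:-rw}"
  local ceph_cmd="${CEPH:-ceph}"
  "$ceph_cmd" fs authorize "$fs_name" "client.${client_id}" "$path" "$perms"
}
```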

2.2 Export the credentials

[root@ceph141 ~]# ceph auth get client.yinzhengjiefs > ceph.client.yinzhengjiefs.keyring

[root@ceph141 ~]#

[root@ceph141 ~]# cat ceph.client.yinzhengjiefs.keyring

[client.yinzhengjiefs]

key = AQAF+8ZmIc85CRAABc/93tPRlyJFamvBR6oCOw==

caps mds = "allow rw"

caps mon = "allow r"

caps osd = "allow rwx"

[root@ceph141 ~]#

[root@ceph141 ~]# ceph auth print-key client.yinzhengjiefs > yinzhengjiefs.key

[root@ceph141 ~]#

[root@ceph141 ~]# more yinzhengjiefs.key

AQAF+8ZmIc85CRAABc/93tPRlyJFamvBR6oCOw==

[root@ceph141 ~]#
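Both files contain the secret key, yet the client listing below shows the keyring as world-readable (`-rw-r--r--`). A minimal sketch for exporting them readable by the owner only, assuming bash; the function name is hypothetical and `CEPH` can be overridden to try it without a cluster.

```shell
#!/usr/bin/env bash
# Sketch: export a client's keyring and bare key into DIR with mode 600.
# Usage: export_client_secret CLIENT_ID [DIR]
# CEPH defaults to the real ceph CLI.
export_client_secret() {
  local client_id="$1" dir="${2:-.}"
  local ceph_cmd="${CEPH:-ceph}"
  (
    # Newly created files in this subshell get mode 600
    # (existing files keep their current mode).
    umask 077
    "$ceph_cmd" auth get "client.${client_id}" > "${dir}/ceph.client.${client_id}.keyring" &&
    "$ceph_cmd" auth print-key "client.${client_id}" > "${dir}/${client_id}.key"
  )
}
```

After copying the files to a client, check their mode there and run `chmod 600` if needed.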

3. Copy the keyring and key file to the clients' /etc/ceph directory

[root@ceph141 ~]# scp ceph.client.yinzhengjiefs.keyring yinzhengjiefs.key ceph143:/etc/ceph/

[root@ceph141 ~]#

[root@ceph141 ~]# scp ceph.client.yinzhengjiefs.keyring ceph142:/etc/ceph/

4. Mount using a secretfile

4.1 Check the local files

[root@ceph143 ~]# ll /etc/ceph/ | grep yinzhengjie

-rw-r--r-- 1 root root 140 Aug 22 16:49 ceph.client.yinzhengjiefs.keyring

-rw-r--r-- 1 root root 40 Aug 22 16:49 yinzhengjiefs.key

[root@ceph143 ~]#

4.2 Check local name resolution

[root@ceph143 ~]# grep ceph /etc/hosts

10.0.0.141 ceph141

10.0.0.142 ceph142

10.0.0.143 ceph143

[root@ceph143 ~]#

4.3 Mount with the key file and try writing data

[root@ceph143 ~]# df -h | grep mnt

[root@ceph143 ~]#

[root@ceph143 ~]# mount -t ceph ceph141:6789,ceph142:6789,ceph143:6789:/ /mnt -o name=yinzhengjiefs,secretfile=/etc/ceph/yinzhengjiefs.key

[root@ceph143 ~]#

[root@ceph143 ~]# df -h | grep mnt

10.0.0.141:6789,10.0.0.142:6789,10.0.0.143:6789:/ 1.1T 0 1.1T 0% /mnt

[root@ceph143 ~]#

[root@ceph143 ~]# ll /mnt/

total 4

drwxr-xr-x 2 root root 0 Aug 22 15:12 ./

drwxr-xr-x 21 root root 4096 Aug 21 17:52 ../

[root@ceph143 ~]#

[root@ceph143 ~]# cp /etc/os-release /etc/fstab /etc/hosts /mnt/

[root@ceph143 ~]#

[root@ceph143 ~]# ll /mnt/

total 6

drwxr-xr-x 2 root root 3 Aug 22 16:52 ./

drwxr-xr-x 21 root root 4096 Aug 21 17:52 ../

-rw-r--r-- 1 root root 657 Aug 22 16:52 fstab

-rw-r--r-- 1 root root 283 Aug 22 16:52 hosts

-rw-r--r-- 1 root root 386 Aug 22 16:52 os-release

[root@ceph143 ~]#

[root@ceph143 ~]# umount /mnt

[root@ceph143 ~]#

[root@ceph143 ~]# ll /mnt/

total 8

drwxr-xr-x 2 root root 4096 Aug 10 2023 ./

drwxr-xr-x 21 root root 4096 Aug 21 17:52 ../

[root@ceph143 ~]#
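The secretfile mount can be wrapped so that it is safe to rerun. The sketch assumes util-linux `mountpoint` is available (if it is missing, the check simply does not match and the mount is attempted); the function name and the `MOUNT` override are hypothetical. It keeps `secretfile=` rather than `secret=`: with `secret=`, as in the next step, the key appears on the command line and therefore in shell history and, while the command runs, in the process list.

```shell
#!/usr/bin/env bash
# Sketch: mount CephFS with the kernel client unless MNT is already a mount point.
# Usage: mount_cephfs MON_LIST MNT CLIENT_ID SECRETFILE
# MOUNT defaults to mount; MOUNT=echo prints the arguments instead.
mount_cephfs() {
  local mons="$1" mnt="$2" client_id="$3" secretfile="$4"
  local mount_cmd="${MOUNT:-mount}"
  if mountpoint -q "$mnt" 2>/dev/null; then
    echo "already mounted: $mnt" >&2
    return 0
  fi
  "$mount_cmd" -t ceph "${mons}:/" "$mnt" -o "name=${client_id},secretfile=${secretfile}"
}
```

For the setup above: `mount_cephfs ceph141:6789,ceph142:6789,ceph143:6789 /mnt yinzhengjiefs /etc/ceph/yinzhengjiefs.key`.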

5. Mount with the key itself, no need to copy the key file!

[root@ceph143 ~]# more /etc/ceph/yinzhengjiefs.key

AQAF+8ZmIc85CRAABc/93tPRlyJFamvBR6oCOw==

[root@ceph143 ~]#

[root@ceph143 ~]# df -h | grep mnt

[root@ceph143 ~]#

[root@ceph143 ~]# mount -t ceph ceph141:6789,ceph142:6789,ceph143:6789:/ /mnt -o name=yinzhengjiefs,secret=AQAF+8ZmIc85CRAABc/93tPRlyJFamvBR6oCOw==

[root@ceph143 ~]#

[root@ceph143 ~]# df -h | grep mnt

10.0.0.141:6789,10.0.0.142:6789,10.0.0.143:6789:/ 1.1T 0 1.1T 0% /mnt

[root@ceph143 ~]#

[root@ceph143 ~]#

[root@ceph143 ~]# cp /etc/netplan/00-installer-config.yaml /mnt/

[root@ceph143 ~]#

[root@ceph143 ~]# ll /mnt/

total 7

drwxr-xr-x 2 root root 4 Aug 22 16:56 ./

drwxr-xr-x 21 root root 4096 Aug 21 17:52 ../

-rw-r--r-- 1 root root 237 Aug 22 16:56 00-installer-config.yaml

-rw-r--r-- 1 root root 657 Aug 22 16:52 fstab

-rw-r--r-- 1 root root 283 Aug 22 16:52 hosts

-rw-r--r-- 1 root root 386 Aug 22 16:52 os-release

[root@ceph143 ~]#

6. Test with another client

[root@ceph142 ~]# df -h | grep mnt

[root@ceph142 ~]#

[root@ceph142 ~]# mount -t ceph ceph141:6789,ceph142:6789,ceph143:6789:/ /mnt -o name=yinzhengjiefs,secret=AQAF+8ZmIc85CRAABc/93tPRlyJFamvBR6oCOw==

[root@ceph142 ~]#

[root@ceph142 ~]# df -h | grep mnt

10.0.0.141:6789,10.0.0.142:6789,10.0.0.143:6789:/ 1.1T 0 1.1T 0% /mnt

[root@ceph142 ~]#

[root@ceph142 ~]# cp /etc/hostname /mnt/

[root@ceph142 ~]#

[root@ceph142 ~]# ll /mnt/

total 7

drwxr-xr-x 2 root root 5 Aug 22 17:00 ./

drwxr-xr-x 21 root root 4096 Aug 21 17:42 ../

-rw-r--r-- 1 root root 237 Aug 22 16:56 00-installer-config.yaml

-rw-r--r-- 1 root root 657 Aug 22 16:52 fstab

-rw-r--r-- 1 root root 8 Aug 22 17:00 hostname

-rw-r--r-- 1 root root 283 Aug 22 16:52 hosts

-rw-r--r-- 1 root root 386 Aug 22 16:52 os-release

[root@ceph142 ~]#

7. Mounting CephFS automatically at boot

Recommended reading:

https://www.cnblogs.com/yinzhengjie/p/14305987.html#二cephfs开机自动挂载的三种方式
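As a minimal sketch of one approach, a kernel-client line in `/etc/fstab` could look like `CEPHFS_ENTRY` below, assuming the monitor list, user and key file used in this section. The helper name `add_fstab_entry` is hypothetical; it appends the line only if no existing entry uses the same mount point.

```shell
#!/usr/bin/env bash
# Sketch: append an fstab entry once (idempotent), keyed on the mount point.
# Usage: add_fstab_entry FSTAB_FILE "DEVICE MOUNTPOINT TYPE OPTIONS DUMP PASS"
add_fstab_entry() {
  local fstab="$1" entry="$2" mnt
  mnt="$(awk '{ print $2 }' <<< "$entry")"
  # Skip if a non-comment line already uses this mount point.
  if awk -v m="$mnt" '$1 !~ /^#/ && $2 == m { found = 1 } END { exit !found }' "$fstab"; then
    return 0
  fi
  printf '%s\n' "$entry" >> "$fstab"
}

# Kernel-client entry for this article's setup; _netdev waits for the network.
CEPHFS_ENTRY='ceph141:6789,ceph142:6789,ceph143:6789:/ /mnt ceph name=yinzhengjiefs,secretfile=/etc/ceph/yinzhengjiefs.key,_netdev,noatime 0 0'
```

Usage: `add_fstab_entry /etc/fstab "$CEPHFS_ENTRY" && mount /mnt`.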

V. CephFS Clients: Access via FUSE in User Space

1. FUSE Overview

Some operating systems do not provide the ceph kernel module. If we still want to use CephFS on them, we can access it via FUSE.

FUSE stands for "Filesystem in Userspace"; it lets non-privileged users create file systems without touching kernel code. It requires the separate "ceph-fuse" package.

2. FUSE in Practice

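2.1 Install the ceph-fuse package (the node does not need the ceph-common package, which provides the ceph command-line tools and the mount.ceph helper used for kernel mounts)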

[root@ceph143 ~]# apt -y install ceph-fuse

2.2 Mount CephFS with the ceph-fuse tool

1. Create the mount point

[root@ceph143 ~]# mkdir -p /yinzhengjie/cephfs

2. Mount CephFS

[root@ceph143 ~]# ll /etc/ceph/

total 32

drwxr-xr-x 2 root root 4096 Aug 28 21:35 ./

drwxr-xr-x 132 root root 12288 Aug 28 21:32 ../

-rw------- 1 root root 151 Aug 28 21:35 ceph.client.admin.keyring

-rw-r--r-- 1 root root 259 Aug 28 21:35 ceph.conf

-rw-r--r-- 1 root root 595 Aug 28 21:35 ceph.pub

-rw-r--r-- 1 root root 92 Feb 8 2024 rbdmap

[root@ceph143 ~]#

[root@ceph143 ~]# ceph-fuse -n client.admin -m ceph141:6789,ceph142:6789,ceph143:6789 /yinzhengjie/cephfs/

3. Verify the mount

[root@ceph143 ~]# mount | grep cephfs

ceph-fuse on /yinzhengjie/cephfs type fuse.ceph-fuse (rw,nosuid,nodev,relatime,user_id=0,group_id=0,allow_other)

[root@ceph143 ~]#
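The mount above uses `client.admin`, which has full cluster privileges. ceph-fuse can also run as the restricted user created in section IV via `-n` and `-k`. The sketch assumes that user's keyring is present as `/etc/ceph/ceph.client.<id>.keyring` on the client (the listing above does not show it, so copy it first); the function name and the `CEPH_FUSE` override are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: mount CephFS through ceph-fuse as a non-admin client.
# Usage: fuse_mount_cephfs CLIENT_ID MON_LIST MOUNTPOINT
# Assumes /etc/ceph/ceph.conf and /etc/ceph/ceph.client.<CLIENT_ID>.keyring exist.
# CEPH_FUSE defaults to ceph-fuse; CEPH_FUSE=echo prints the arguments instead.
fuse_mount_cephfs() {
  local client_id="$1" mons="$2" mnt="$3"
  local fuse_cmd="${CEPH_FUSE:-ceph-fuse}"
  mkdir -p "$mnt" || return
  "$fuse_cmd" -m "$mons" -n "client.${client_id}" \
    -k "/etc/ceph/ceph.client.${client_id}.keyring" "$mnt"
}
```

For a boot-time FUSE mount with the same user, the fstab entry in step 3 below would use `ceph.id=yinzhengjiefs` instead of `ceph.id=admin`.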

2.3 Check the mount

[root@ceph143 ~]# stat -f /yinzhengjie/cephfs/

File: "/yinzhengjie/cephfs/"

ID: 0 Namelen: 255 Type: fuseblk

Block size: 4194304 Fundamental block size: 4194304

Blocks: Total: 121553 Free: 121553 Available: 121553

Inodes: Total: 1 Free: -1

[root@ceph143 ~]#

2.4 Verify the mount by writing data

[root@ceph143 ~]# ll /yinzhengjie/cephfs/

total 5

drwxr-xr-x 2 root root 0 Aug 31 17:01 ./

drwxr-xr-x 4 root root 4096 Aug 31 16:56 ../

[root@ceph143 ~]#

[root@ceph143 ~]# cp /etc/os-release /yinzhengjie/cephfs/

[root@ceph143 ~]#

[root@ceph143 ~]# ll /yinzhengjie/cephfs/

total 5

drwxr-xr-x 2 root root 0 Aug 31 17:02 ./

drwxr-xr-x 4 root root 4096 Aug 31 16:56 ../

-rw-r--r-- 1 root root 386 Aug 31 17:02 os-release

[root@ceph143 ~]#

[root@ceph143 ~]# mount | grep cephfs

ceph-fuse on /yinzhengjie/cephfs type fuse.ceph-fuse (rw,nosuid,nodev,relatime,user_id=0,group_id=0,allow_other)

[root@ceph143 ~]#

2.5 Unmount CephFS

[root@ceph143 ~]# mount | grep cephfs

ceph-fuse on /yinzhengjie/cephfs type fuse.ceph-fuse (rw,nosuid,nodev,relatime,user_id=0,group_id=0,allow_other)

[root@ceph143 ~]#

[root@ceph143 ~]# umount /yinzhengjie/cephfs

[root@ceph143 ~]#

[root@ceph143 ~]# mount | grep cephfs

[root@ceph143 ~]#

3. Mounting via FUSE automatically at boot

[root@ceph143 ~]# mount | grep cephfs

[root@ceph143 ~]#

[root@ceph143 ~]# echo 'none /yinzhengjie/cephfs fuse.ceph ceph.id=admin,ceph.conf=/etc/ceph/ceph.conf,_netdev,noatime 0 0' >> /etc/fstab

[root@ceph143 ~]#

[root@ceph143 ~]# mount -a

2024-08-31T17:08:09.741+0800 7f64ca2f23c0 -1 init, newargv = 0x557852fc6050 newargc=15

2024-08-31T17:08:09.741+0800 7f64ca2f23c0 -1 init, args.argv = 0x557853108800 args.argc=4

ceph-fuse[86672]: starting ceph client

ceph-fuse[86672]: starting fuse

[root@ceph143 ~]#

[root@ceph143 ~]# mount | grep cephfs

ceph-fuse on /yinzhengjie/cephfs type fuse.ceph-fuse (rw,nosuid,nodev,noatime,user_id=0,group_id=0,allow_other)

[root@ceph143 ~]#

[root@ceph143 ~]# ls -l /yinzhengjie/cephfs/

total 1

-rw-r--r-- 1 root root 386 Aug 31 17:02 os-release

[root@ceph143 ~]#

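This article is from cnblogs (博客园). Author: 尹正杰. When reposting, please credit the original link: https://www.cnblogs.com/yinzhengjie/p/18375087. Personal WeChat: "JasonYin2020" (when adding, please state where you found this and your purpose; paid).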

