Setting Up a YARN Cluster


Author: Yin Zhengjie

Copyright notice: this is an original work. Reproduction is not permitted; violations will be pursued legally.

I. Start the HDFS cluster

1>. Set up the fully distributed HDFS cluster

Recommended reading: https://www.cnblogs.com/yinzhengjie2020/p/12424192.html

2>. Start the HDFS cluster

    [root@hadoop101.yinzhengjie.com ~]# manage-hdfs.sh start
    hadoop101.yinzhengjie.com | CHANGED | rc=0 >>
    starting namenode, logging to /yinzhengjie/softwares/hadoop-2.10.0-fully-mode/logs/hadoop-root-namenode-hadoop101.yinzhengjie.com.out
    hadoop105.yinzhengjie.com | CHANGED | rc=0 >>
    starting secondarynamenode, logging to /yinzhengjie/softwares/hadoop/logs/hadoop-root-secondarynamenode-hadoop105.yinzhengjie.com.out
    hadoop104.yinzhengjie.com | CHANGED | rc=0 >>
    starting datanode, logging to /yinzhengjie/softwares/hadoop/logs/hadoop-root-datanode-hadoop104.yinzhengjie.com.out
    hadoop103.yinzhengjie.com | CHANGED | rc=0 >>
    starting datanode, logging to /yinzhengjie/softwares/hadoop/logs/hadoop-root-datanode-hadoop103.yinzhengjie.com.out
    hadoop102.yinzhengjie.com | CHANGED | rc=0 >>
    starting datanode, logging to /yinzhengjie/softwares/hadoop/logs/hadoop-root-datanode-hadoop102.yinzhengjie.com.out
    Starting HDFS: [ OK ]
    [root@hadoop101.yinzhengjie.com ~]#

    [root@hadoop101.yinzhengjie.com ~]# ansible all -m shell -a 'jps'
    hadoop102.yinzhengjie.com | CHANGED | rc=0 >>
    5565 Jps
    5358 DataNode
    hadoop103.yinzhengjie.com | CHANGED | rc=0 >>
    5314 DataNode
    5518 Jps
    hadoop105.yinzhengjie.com | CHANGED | rc=0 >>
    5291 SecondaryNameNode
    5407 Jps
    hadoop101.yinzhengjie.com | CHANGED | rc=0 >>
    5280 NameNode
    5584 Jps
    hadoop104.yinzhengjie.com | CHANGED | rc=0 >>
    5504 Jps
    5298 DataNode
    [root@hadoop101.yinzhengjie.com ~]#

II. Edit the YARN configuration file (yarn-site.xml)

1>. Edit the yarn-site.xml configuration file

    [root@hadoop101.yinzhengjie.com ~]# vim ${HADOOP_HOME}/etc/hadoop/yarn-site.xml
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]# cat ${HADOOP_HOME}/etc/hadoop/yarn-site.xml
    <?xml version="1.0"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at

        http://www.apache.org/licenses/LICENSE-2.0

      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <configuration>

        <!-- Site specific YARN configuration properties -->

        <!-- Let YARN support the MapReduce framework -->
        <property>
            <name>yarn.nodemanager.aux-services</name>
            <value>mapreduce_shuffle</value>
            <description>
                A list of auxiliary services that supports the different application frameworks running on YARN. It is a comma-separated list of service names; a name may contain only a-zA-Z0-9_ and must not start with a digit.
                Setting this property tells the NodeManager to host an auxiliary service named "mapreduce_shuffle", because MapReduce containers need a shuffle step between the map tasks and the reduce tasks.
                Shuffle is an auxiliary service rather than part of the NodeManager itself, so it has to be set here explicitly; otherwise MR jobs cannot run.
                The default is empty. This example sets mapreduce_shuffle because the cluster currently runs only MapReduce-based jobs.
            </description>
        </property>

        <property>
            <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
            <value>org.apache.hadoop.mapred.ShuffleHandler</value>
            <description>
                Tells MapReduce how to perform the shuffle. The value used here, "org.apache.hadoop.mapred.ShuffleHandler", is in fact the default; it tells YARN to use this class for the shuffle.
                The class named here is the implementation of the service listed in "yarn.nodemanager.aux-services".
            </description>
        </property>

        <!-- ResourceManager (RM) parameters -->
        <property>
            <name>yarn.resourcemanager.hostname</name>
            <value>hadoop101.yinzhengjie.com</value>
            <description>Host name of the RM. Default: "0.0.0.0".</description>
        </property>

        <property>
            <name>yarn.resourcemanager.scheduler.address</name>
            <value>${yarn.resourcemanager.hostname}:8030</value>
            <description>
                Address of the scheduler interface, i.e. the address the RM exposes to ApplicationMasters.
                An ApplicationMaster uses it to request and release resources. Default: "${yarn.resourcemanager.hostname}:8030".
            </description>
        </property>

        <property>
            <name>yarn.resourcemanager.resource-tracker.address</name>
            <value>${yarn.resourcemanager.hostname}:8031</value>
            <description>Address the RM exposes to NMs. An NM uses it to send heartbeats to the RM and to pick up tasks. Default: "${yarn.resourcemanager.hostname}:8031".</description>
        </property>

        <property>
            <name>yarn.resourcemanager.address</name>
            <value>${yarn.resourcemanager.hostname}:8032</value>
            <description>
                Address of the applications manager interface in the RM, i.e. the address the RM exposes to clients.
                Clients use it to submit applications, kill applications and so on. Default: "${yarn.resourcemanager.hostname}:8032".
            </description>
        </property>

        <property>
            <name>yarn.resourcemanager.admin.address</name>
            <value>${yarn.resourcemanager.hostname}:8033</value>
            <description>Address the RM exposes to administrators, who use it to send administrative commands. Default: "${yarn.resourcemanager.hostname}:8033".</description>
        </property>

        <property>
            <name>yarn.resourcemanager.webapp.address</name>
            <value>${yarn.resourcemanager.hostname}:8088</value>
            <description>HTTP address of the RM web application. If only a host is given, the webapp is served on a random port. Default: "${yarn.resourcemanager.hostname}:8088".</description>
        </property>

        <property>
            <name>yarn.resourcemanager.scheduler.class</name>
            <value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.capacity.CapacityScheduler</value>
            <description>Class to use as the resource scheduler. The available schedulers are FIFO, CapacityScheduler and FairScheduler.</description>
        </property>

        <property>
            <name>yarn.resourcemanager.resource-tracker.client.thread-count</name>
            <value>50</value>
            <description>Number of handler threads serving RPC requests from NodeManagers. Default: 50.</description>
        </property>

        <property>
            <name>yarn.resourcemanager.scheduler.client.thread-count</name>
            <value>50</value>
            <description>Number of handler threads serving RPC requests from ApplicationMasters. Default: 50.</description>
        </property>

        <property>
            <name>yarn.resourcemanager.nodes.include-path</name>
            <value></value>
            <description>Path of a file listing the nodes to include, i.e. a whitelist. Default: empty. (This parameter is optional; it is listed here so that it is familiar when needed later.)</description>
        </property>

        <property>
            <name>yarn.resourcemanager.nodes.exclude-path</name>
            <value></value>
            <description>
                Path of a file listing the nodes to exclude, i.e. a blacklist. Default: empty. (This parameter is optional; it is listed here so that it is familiar when needed later.)
                If some NodeManagers turn out to be problematic, for example a high failure rate or many failed tasks, add them to the blacklist.
                Both the whitelist and the blacklist take effect dynamically by running a refresh command such as "yarn rmadmin -refreshNodes".
            </description>
        </property>

        <property>
            <name>yarn.resourcemanager.nodemanagers.heartbeat-interval-ms</name>
            <value>3000</value>
            <description>Heartbeat interval of every NodeManager in the cluster, in milliseconds. Default: 1000 ms (1 second).</description>
        </property>

        <property>
            <name>yarn.scheduler.minimum-allocation-mb</name>
            <value>2048</value>
            <description>
                Minimum allocation for every container request at the RM, in MB. Memory requests below this value are raised to it. In addition, the ResourceManager shuts down any NodeManager configured with less memory than this value.
                Here the minimum memory per container is 2048 MB (2 GB); this value should not be set too high. Default: 1024 MB.
                Because yarn.nodemanager.resource.memory-mb is set to 81920 MB, a node can run at most 40 containers (81920/2048) at any given time.
            </description>
        </property>

        <property>
            <name>yarn.scheduler.maximum-allocation-mb</name>
            <value>81920</value>
            <description>
                Maximum allocation for every container request at the RM, in MB. Memory requests above this value throw InvalidResourceRequestException.
                Here the maximum memory per container is 81920 MB (80 GB); this value should not be set too low. Default: 8192 MB (8 GB).
            </description>
        </property>

        <property>
            <name>yarn.scheduler.minimum-allocation-vcores</name>
            <value>1</value>
            <description>
                Minimum allocation for every container request at the RM, in virtual CPU cores. Requests below this value are raised to it. Default: 1.
                In addition, the ResourceManager shuts down any NodeManager configured with fewer virtual cores than this value.
            </description>
        </property>

        <property>
            <name>yarn.scheduler.maximum-allocation-vcores</name>
            <value>32</value>
            <description>
                Maximum allocation for every container request at the RM, in virtual CPU cores. Default: 4.
                Requests above this value throw InvalidResourceRequestException.
            </description>
        </property>

        <!-- NodeManager (NM) parameters -->
        <property>
            <name>yarn.nodemanager.resource.memory-mb</name>
            <value>81920</value>
            <description>
                Total memory YARN may consume on each node, i.e. the amount of physical memory, in MB, that can be allocated to containers. In production, about 70% of the physical memory is a reasonable setting.
                If set to -1 (the default) and yarn.nodemanager.resource.detect-hardware-capabilities is true, the value is calculated automatically (on Windows and Linux). Otherwise the default is 8192 MB (8 GB).
            </description>
        </property>

        <property>
            <name>yarn.nodemanager.resource.cpu-vcores</name>
            <value>32</value>
            <description>
                Number of CPU cores that can be allocated to YARN containers. In theory it should be lower than the number of physical cores on the node, but in production one physical CPU is often counted as two, especially on CPU-intensive clusters.
                If set to -1 (the default) and yarn.nodemanager.resource.detect-hardware-capabilities is true, the value is calculated automatically (on Windows and Linux). Otherwise the default is 8.
            </description>
        </property>

        <property>
            <name>yarn.nodemanager.vmem-pmem-ratio</name>
            <value>3.0</value>
            <description>
                Upper limit on the ratio of virtual to physical memory for each map and reduce task running in a YARN container (in other words, how much virtual memory may be used per 1 MB of physical memory).
                The default is 2.1; a different value such as 3.0 can be set. In production I usually disable the swap partition so that virtual memory is avoided as far as possible, so with swap disabled I personally consider this parameter negligible.
            </description>
        </property>

        <property>
            <name>yarn.log-aggregation-enable</name>
            <value>true</value>
            <description>
                The NodeManager on each DataNode uses this property to aggregate application logs. Default: "false". With log aggregation enabled, Hadoop collects the logs of every container that is part of an application and moves them to HDFS once the application completes.
                Use "yarn.nodemanager.remote-app-log-dir" and "yarn.nodemanager.remote-app-log-dir-suffix" to set where the logs are aggregated in HDFS.
            </description>
        </property>

        <property>
            <name>yarn.nodemanager.remote-app-log-dir</name>
            <value>/yinzhengjie/logs/hdfs/</value>
            <description>
                HDFS directory in which application log files are aggregated. The JobHistoryServer stores application logs in this HDFS directory. Default: "/tmp/logs".
            </description>
        </property>

        <property>
            <name>yarn.nodemanager.remote-app-log-dir-suffix</name>
            <value>yinzhengjie-logs</value>
            <description>The remote log directory is created at "{yarn.nodemanager.remote-app-log-dir}/${user}/{thisParam}". Default: "logs".</description>
        </property>

        <property>
            <name>yarn.nodemanager.log-dirs</name>
            <value>/yinzhengjie/logs/yarn/container1,/yinzhengjie/logs/yarn/container2,/yinzhengjie/logs/yarn/container3</value>
            <description>
                Where container logs are stored. Default: "${yarn.log.dir}/userlogs". This is the path on the Linux file system to which YARN writes application log files. Several directories on different mount points are commonly configured to improve I/O performance.
                Because log aggregation is enabled above, YARN deletes the local files once an application completes. They remain accessible through the JobHistoryServer (from HDFS, where the logs were aggregated).
                This example uses three directories under "/yinzhengjie/logs/yarn/"; only the NodeManager uses them.
            </description>
        </property>

        <property>
            <name>yarn.nodemanager.local-dirs</name>
            <value>/yinzhengjie/data/hdfs/nm1,/yinzhengjie/data/hdfs/nm2,/yinzhengjie/data/hdfs/nm3</value>
            <description>
                List of directories for localized files (that is, where intermediate results are stored; several directories on different mount points are commonly configured to improve I/O performance). Default: "${hadoop.tmp.dir}/nm-local-dir".
                YARN has to keep its local files, such as MapReduce intermediate output, somewhere on the local file system. Several local directories can be given here; YARN's distributed cache also uses these local resource files.
            </description>
        </property>

        <property>
            <name>yarn.nodemanager.log.retain-seconds</name>
            <value>10800</value>
            <description>Time to retain user logs, in seconds. Applies only when log aggregation is disabled. Default: 10800 s (3 hours).</description>
        </property>

        <property>
            <name>yarn.application.classpath</name>
            <value></value>
            <description>
                Locations on the local file system of the common Hadoop, YARN and HDFS JAR files needed to run applications in the cluster, as a comma-separated list of CLASSPATH entries.
                When the value is empty, the following default CLASSPATH for YARN applications is used.
                For Linux:
                    $HADOOP_CONF_DIR,
                    $HADOOP_COMMON_HOME/share/hadoop/common/*,
                    $HADOOP_COMMON_HOME/share/hadoop/common/lib/*,
                    $HADOOP_HDFS_HOME/share/hadoop/hdfs/*,
                    $HADOOP_HDFS_HOME/share/hadoop/hdfs/lib/*,
                    $HADOOP_YARN_HOME/share/hadoop/yarn/*,
                    $HADOOP_YARN_HOME/share/hadoop/yarn/lib/*
                For Windows:
                    %HADOOP_CONF_DIR%,
                    %HADOOP_COMMON_HOME%/share/hadoop/common/*,
                    %HADOOP_COMMON_HOME%/share/hadoop/common/lib/*,
                    %HADOOP_HDFS_HOME%/share/hadoop/hdfs/*,
                    %HADOOP_HDFS_HOME%/share/hadoop/hdfs/lib/*,
                    %HADOOP_YARN_HOME%/share/hadoop/yarn/*,
                    %HADOOP_YARN_HOME%/share/hadoop/yarn/lib/*
                Both an application's ApplicationMaster and the application itself need to know where the HDFS, YARN and Hadoop common JAR files are on the local file system.
            </description>
        </property>

    </configuration>
    [root@hadoop101.yinzhengjie.com ~]#

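The memory settings in yarn-site.xml interact, so a quick calculation can help before distributing the file. The sketch below only models the two rules stated in the property descriptions (requests below the minimum are raised to it, requests above the maximum are rejected) and the containers-per-node bound. The helper names `max_containers` and `effective_request_mb` are hypothetical, and the real scheduler may additionally round requests, which is not modeled here.

```shell
#!/bin/sh
# Values copied from the yarn-site.xml in this article.
NM_MEMORY_MB=81920   # yarn.nodemanager.resource.memory-mb
MIN_ALLOC_MB=2048    # yarn.scheduler.minimum-allocation-mb
MAX_ALLOC_MB=81920   # yarn.scheduler.maximum-allocation-mb

# Hypothetical helper: upper bound on concurrent containers per node, by memory only.
max_containers() {
    echo $(( $1 / $2 ))
}

# Hypothetical helper: apply the minimum/maximum rules to one memory request (in MB).
# Prints the effective size, or returns 1 when the request exceeds the maximum.
effective_request_mb() {
    if [ "$1" -gt "$3" ]; then
        echo "rejected: ${1} MB exceeds the maximum of ${3} MB" >&2
        return 1
    fi
    if [ "$1" -lt "$2" ]; then
        echo "$2"    # raised to the minimum allocation
    else
        echo "$1"
    fi
}

max_containers "$NM_MEMORY_MB" "$MIN_ALLOC_MB"
effective_request_mb 512 "$MIN_ALLOC_MB" "$MAX_ALLOC_MB"
```

With the values above, the first call reproduces the 81920/2048 figure from the description of yarn.scheduler.minimum-allocation-mb, and the second shows a 512 MB request being raised to the 2048 MB minimum.
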
2>. Distribute the configuration file with ansible

    [root@hadoop101.yinzhengjie.com ~]# tail -17 /etc/ansible/hosts
    #Add by yinzhengjie for Hadoop.
    [nn]
    hadoop101.yinzhengjie.com

    [snn]
    hadoop105.yinzhengjie.com

    [dn]
    hadoop102.yinzhengjie.com
    hadoop103.yinzhengjie.com
    hadoop104.yinzhengjie.com

    [other]
    hadoop102.yinzhengjie.com
    hadoop103.yinzhengjie.com
    hadoop104.yinzhengjie.com
    hadoop105.yinzhengjie.com
    [root@hadoop101.yinzhengjie.com ~]#
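
The group names in this inventory (nn, snn, dn, other) decide which hosts each ansible command touches, so it can be useful to list a group before running anything against it. The following is a minimal sketch that handles only the plain INI layout shown above; `hosts_in_group` is a hypothetical helper, and it does not understand host ranges, variables or `:children` groups.

```shell
#!/bin/sh
# Hypothetical helper: print the hosts listed under one [group] of a simple INI inventory.
hosts_in_group() {
    awk -v g="[$1]" '
        /^\[/          { in_group = ($0 == g); next }  # a section header switches the group
        /^[ \t]*(#|$)/ { next }                        # skip comments and blank lines
        in_group       { print $1 }
    ' "$2"
}

# Demo on a temporary copy of part of the inventory shown in this article.
inventory=$(mktemp)
cat > "$inventory" <<'EOF'
#Add by yinzhengjie for Hadoop.
[nn]
hadoop101.yinzhengjie.com

[dn]
hadoop102.yinzhengjie.com
hadoop103.yinzhengjie.com
hadoop104.yinzhengjie.com
EOF
hosts_in_group dn "$inventory"
rm -f "$inventory"
```

Against the real file, the call would be `hosts_in_group other /etc/ansible/hosts`.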

    [root@hadoop101.yinzhengjie.com ~]# ansible other -m copy -a "src=${HADOOP_HOME}/etc/hadoop/yarn-site.xml dest=${HADOOP_HOME}/etc/hadoop/"
    hadoop102.yinzhengjie.com | CHANGED => {
        "ansible_facts": {
            "discovered_interpreter_python": "/usr/bin/python"
        },
        "changed": true,
        "checksum": "8910e3a8414606ebaeeda440b0fc32cac584e0ed",
        "dest": "/yinzhengjie/softwares/hadoop/etc/hadoop/yarn-site.xml",
        "gid": 0,
        "group": "root",
        "md5sum": "33900cbf0053e7754d9b6b4991c4faf5",
        "mode": "0644",
        "owner": "root",
        "size": 7569,
        "src": "/root/.ansible/tmp/ansible-tmp-1602332056.56-7256-154782649881983/source",
        "state": "file",
        "uid": 0
    }
    hadoop104.yinzhengjie.com | CHANGED => {
        "ansible_facts": {
            "discovered_interpreter_python": "/usr/bin/python"
        },
        "changed": true,
        "checksum": "8910e3a8414606ebaeeda440b0fc32cac584e0ed",
        "dest": "/yinzhengjie/softwares/hadoop/etc/hadoop/yarn-site.xml",
        "gid": 0,
        "group": "root",
        "md5sum": "33900cbf0053e7754d9b6b4991c4faf5",
        "mode": "0644",
        "owner": "root",
        "size": 7569,
        "src": "/root/.ansible/tmp/ansible-tmp-1602332056.6-7259-266144991332982/source",
        "state": "file",
        "uid": 0
    }
    hadoop105.yinzhengjie.com | CHANGED => {
        "ansible_facts": {
            "discovered_interpreter_python": "/usr/bin/python"
        },
        "changed": true,
        "checksum": "8910e3a8414606ebaeeda440b0fc32cac584e0ed",
        "dest": "/yinzhengjie/softwares/hadoop/etc/hadoop/yarn-site.xml",
        "gid": 0,
        "group": "root",
        "md5sum": "33900cbf0053e7754d9b6b4991c4faf5",
        "mode": "0644",
        "owner": "root",
        "size": 7569,
        "src": "/root/.ansible/tmp/ansible-tmp-1602332056.6-7260-5791703664800/source",
        "state": "file",
        "uid": 0
    }
    hadoop103.yinzhengjie.com | CHANGED => {
        "ansible_facts": {
            "discovered_interpreter_python": "/usr/bin/python"
        },
        "changed": true,
        "checksum": "8910e3a8414606ebaeeda440b0fc32cac584e0ed",
        "dest": "/yinzhengjie/softwares/hadoop/etc/hadoop/yarn-site.xml",
        "gid": 0,
        "group": "root",
        "md5sum": "33900cbf0053e7754d9b6b4991c4faf5",
        "mode": "0644",
        "owner": "root",
        "size": 7569,
        "src": "/root/.ansible/tmp/ansible-tmp-1602332056.58-7258-195798408486819/source",
        "state": "file",
        "uid": 0
    }
    [root@hadoop101.yinzhengjie.com ~]#

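Every host in the copy output reports the same "checksum" value, which is what a successful distribution should look like. That check can be automated with a small filter; the sketch below assumes the copy module's "checksum" field is the SHA-1 of the file, and `checksums_match` is a hypothetical helper that only greps the text output rather than parsing JSON.

```shell
#!/bin/sh
# Hypothetical helper: read "ansible ... -m copy" output on stdin and succeed only
# if at least one checksum is reported and every reported checksum equals $1.
checksums_match() {
    found=$(grep -o '"checksum": "[0-9a-f]*"' | cut -d'"' -f4 | sort -u)
    [ -n "$found" ] && [ "$found" = "$1" ]
}

# Demo with two shortened result lines taken from the output in this article.
sample='hadoop102.yinzhengjie.com | CHANGED => { "checksum": "8910e3a8414606ebaeeda440b0fc32cac584e0ed", "size": 7569 }
hadoop103.yinzhengjie.com | CHANGED => { "checksum": "8910e3a8414606ebaeeda440b0fc32cac584e0ed", "size": 7569 }'

if printf '%s\n' "$sample" | checksums_match 8910e3a8414606ebaeeda440b0fc32cac584e0ed; then
    echo "all copies match"
else
    echo "checksum mismatch" >&2
fi
```

On the cluster, the expected value could come from the local file, for example `sha1sum ${HADOOP_HOME}/etc/hadoop/yarn-site.xml | cut -d' ' -f1`, with the ansible command piped into `checksums_match`.
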
III. Start the YARN services and write custom management scripts

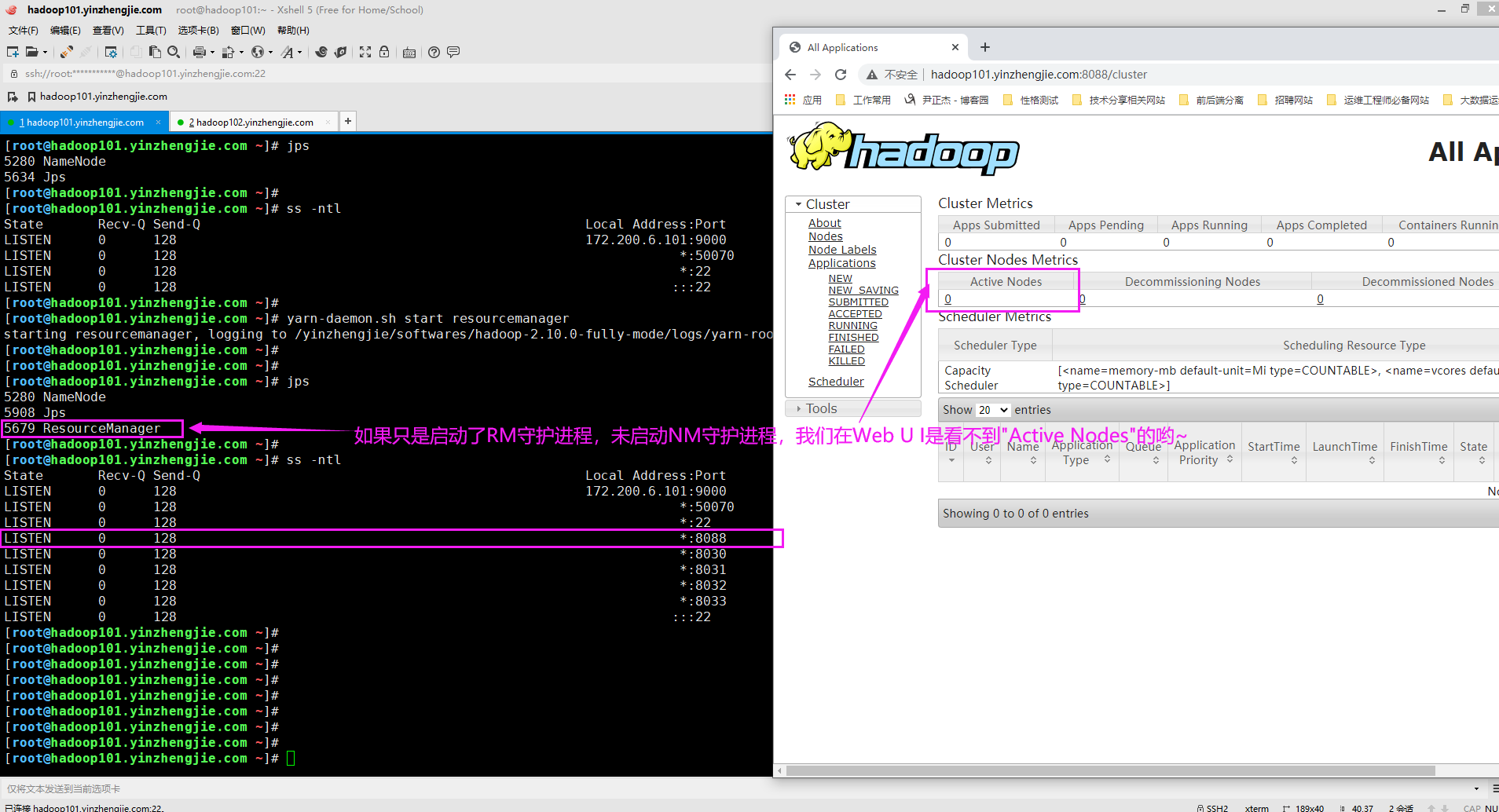

1>. Start the ResourceManager daemon

    [root@hadoop101.yinzhengjie.com ~]# jps
    5280 NameNode
    5634 Jps
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]# ss -ntl
    State      Recv-Q Send-Q      Local Address:Port       Peer Address:Port
    LISTEN     0      128         172.200.6.101:9000                  *:*
    LISTEN     0      128                     *:50070                 *:*
    LISTEN     0      128                     *:22                    *:*
    LISTEN     0      128                    :::22                   :::*
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]# yarn-daemon.sh start resourcemanager
    starting resourcemanager, logging to /yinzhengjie/softwares/hadoop-2.10.0-fully-mode/logs/yarn-root-resourcemanager-hadoop101.yinzhengjie.com.out
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]# jps
    5280 NameNode
    5908 Jps
    5679 ResourceManager
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]# ss -ntl
    State      Recv-Q Send-Q      Local Address:Port       Peer Address:Port
    LISTEN     0      128         172.200.6.101:9000                  *:*
    LISTEN     0      128                     *:50070                 *:*
    LISTEN     0      128                     *:22                    *:*
    LISTEN     0      128                     *:8088                  *:*
    LISTEN     0      128                     *:8030                  *:*
    LISTEN     0      128                     *:8031                  *:*
    LISTEN     0      128                     *:8032                  *:*
    LISTEN     0      128                     *:8033                  *:*
    LISTEN     0      128                    :::22                   :::*
    [root@hadoop101.yinzhengjie.com ~]#
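
After the ResourceManager starts, ports 8030 to 8033 and 8088 from yarn-site.xml appear in the `ss -ntl` listing. A minimal sketch of checking this automatically is shown below; `missing_ports` is a hypothetical helper that takes the text of `ss -ntl` as its first argument and prints every expected port that has no LISTEN row.

```shell
#!/bin/sh
# Ports configured for the ResourceManager in the yarn-site.xml of this article.
RM_PORTS="8030 8031 8032 8033 8088"

# Hypothetical helper: $1 is the output of "ss -ntl", the remaining arguments are ports.
# Prints each port that does not appear as ":PORT" in a LISTEN row.
missing_ports() {
    ss_output=$1
    shift
    for port in "$@"; do
        if ! printf '%s\n' "$ss_output" | grep -Eq "^LISTEN .*:${port}[[:space:]]"; then
            echo "$port"
        fi
    done
}

# Demo with rows copied from the listing taken after the ResourceManager was started.
sample='State  Recv-Q Send-Q Local Address:Port Peer Address:Port
LISTEN 0      128    172.200.6.101:9000 *:*
LISTEN 0      128    *:8088             *:*
LISTEN 0      128    *:8030             *:*
LISTEN 0      128    *:8031             *:*
LISTEN 0      128    *:8032             *:*
LISTEN 0      128    *:8033             *:*'

# RM_PORTS is left unquoted on purpose so that it splits into separate arguments.
missing=$(missing_ports "$sample" $RM_PORTS)
if [ -z "$missing" ]; then
    echo "all ResourceManager ports are listening"
else
    echo "not listening: $missing"
fi
```

On the ResourceManager host the live check would be `missing_ports "$(ss -ntl)" $RM_PORTS`.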

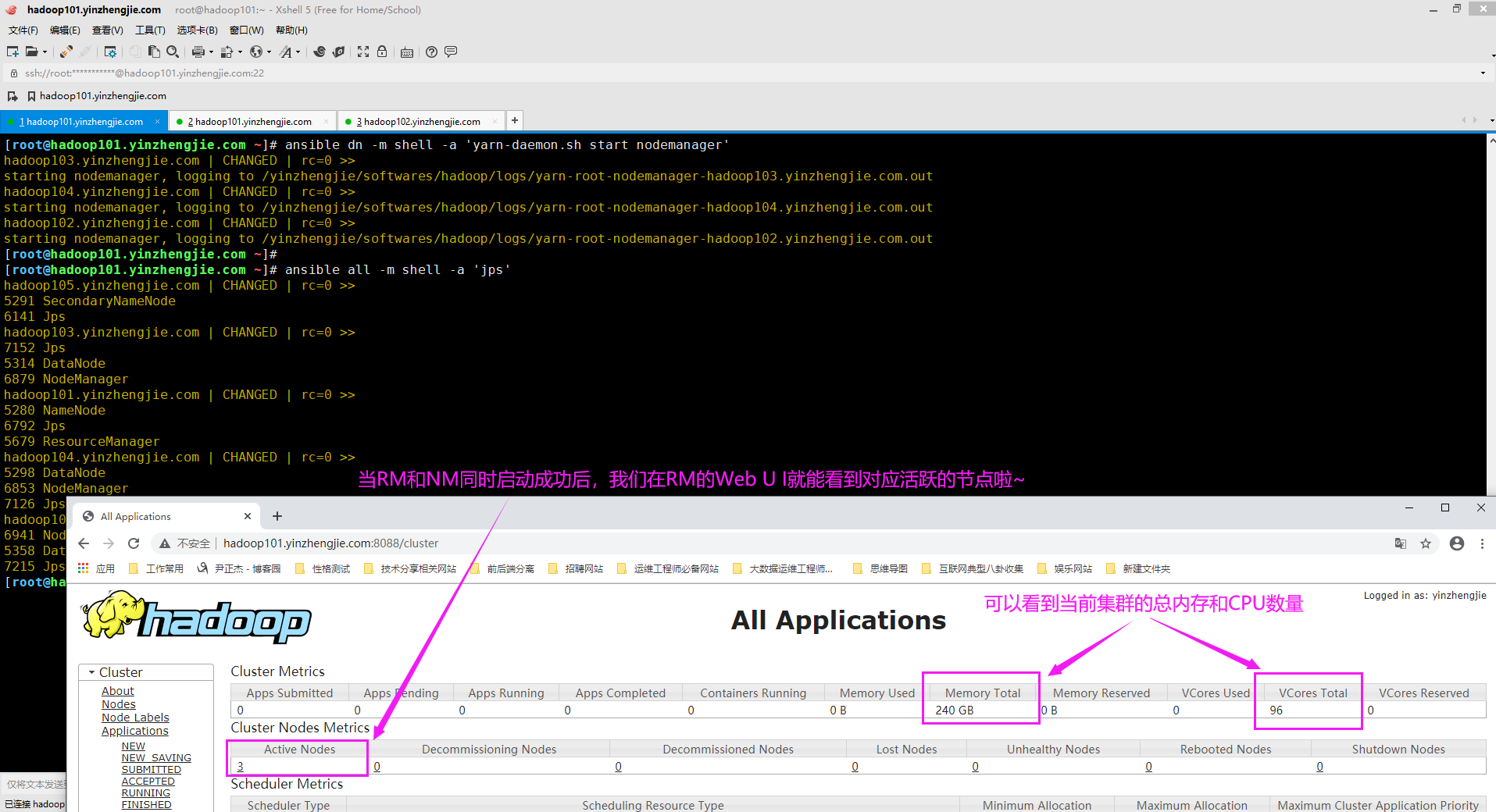

2>. Start the NodeManager nodes

    [root@hadoop101.yinzhengjie.com ~]# ansible all -m shell -a 'jps'
    hadoop105.yinzhengjie.com | CHANGED | rc=0 >>
    5505 Jps
    5291 SecondaryNameNode
    hadoop104.yinzhengjie.com | CHANGED | rc=0 >>
    5298 DataNode
    5591 Jps
    hadoop101.yinzhengjie.com | CHANGED | rc=0 >>
    5280 NameNode
    6061 Jps
    5679 ResourceManager
    hadoop103.yinzhengjie.com | CHANGED | rc=0 >>
    5314 DataNode
    5608 Jps
    hadoop102.yinzhengjie.com | CHANGED | rc=0 >>
    5650 Jps
    5358 DataNode
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]#

    [root@hadoop101.yinzhengjie.com ~]# ansible dn -m shell -a 'yarn-daemon.sh start nodemanager'
    hadoop104.yinzhengjie.com | CHANGED | rc=0 >>
    starting nodemanager, logging to /yinzhengjie/softwares/hadoop/logs/yarn-root-nodemanager-hadoop104.yinzhengjie.com.out
    hadoop102.yinzhengjie.com | CHANGED | rc=0 >>
    starting nodemanager, logging to /yinzhengjie/softwares/hadoop/logs/yarn-root-nodemanager-hadoop102.yinzhengjie.com.out
    hadoop103.yinzhengjie.com | CHANGED | rc=0 >>
    starting nodemanager, logging to /yinzhengjie/softwares/hadoop/logs/yarn-root-nodemanager-hadoop103.yinzhengjie.com.out
    [root@hadoop101.yinzhengjie.com ~]#

    [root@hadoop101.yinzhengjie.com ~]# ansible all -m shell -a 'jps'
    hadoop105.yinzhengjie.com | CHANGED | rc=0 >>
    5291 SecondaryNameNode
    6141 Jps
    hadoop103.yinzhengjie.com | CHANGED | rc=0 >>
    7152 Jps
    5314 DataNode
    6879 NodeManager
    hadoop101.yinzhengjie.com | CHANGED | rc=0 >>
    5280 NameNode
    6792 Jps
    5679 ResourceManager
    hadoop104.yinzhengjie.com | CHANGED | rc=0 >>
    5298 DataNode
    6853 NodeManager
    7126 Jps
    hadoop102.yinzhengjie.com | CHANGED | rc=0 >>
    6941 NodeManager
    5358 DataNode
    7215 Jps
    [root@hadoop101.yinzhengjie.com ~]#
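
Reading the jps listing by eye works for five hosts, but counting daemons is easy to script. The sketch below assumes the one-process-per-line layout that `ansible all -m shell -a 'jps'` prints; `count_daemon` is a hypothetical helper that counts exact process names, so SecondaryNameNode is not counted as NameNode.

```shell
#!/bin/sh
# Hypothetical helper: count lines of the form "<pid> <name>" on stdin.
# "|| true" keeps the exit status at 0 when grep finds nothing and prints 0.
count_daemon() {
    grep -Ec "^[0-9]+ ${1}\$" || true
}

# Demo with the listing taken after the NodeManagers were started.
sample='hadoop105.yinzhengjie.com | CHANGED | rc=0 >>
5291 SecondaryNameNode
6141 Jps
hadoop103.yinzhengjie.com | CHANGED | rc=0 >>
7152 Jps
5314 DataNode
6879 NodeManager
hadoop101.yinzhengjie.com | CHANGED | rc=0 >>
5280 NameNode
6792 Jps
5679 ResourceManager
hadoop104.yinzhengjie.com | CHANGED | rc=0 >>
5298 DataNode
6853 NodeManager
7126 Jps
hadoop102.yinzhengjie.com | CHANGED | rc=0 >>
6941 NodeManager
5358 DataNode
7215 Jps'

for daemon in ResourceManager NodeManager; do
    echo "$daemon: $(printf '%s\n' "$sample" | count_daemon "$daemon")"
done
```

For this cluster the expected counts are one ResourceManager (on the nn host) and three NodeManagers (one per dn host).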

3>. Write scripts to manage the Hadoop cluster

    [root@hadoop101.yinzhengjie.com ~]# vim /usr/local/bin/manage-hdfs.sh
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]# cat /usr/local/bin/manage-hdfs.sh
    #!/bin/bash
    #
    #********************************************************************
    #Author: yinzhengjie
    #QQ: 1053419035
    #Date: 2019-11-27
    #FileName: manage-hdfs.sh
    #URL: http://www.cnblogs.com/yinzhengjie
    #Description: The test script
    #Copyright notice: original works, no reprint! Otherwise, legal liability will be investigated.
    #********************************************************************

    # Check whether the user passed an argument
    if [ $# -lt 1 ];then
        echo "Please provide an argument";
        exit 1
    fi

    # Source the functions shipped with the OS (the action function is needed here; run "declare -f action" to see its definition)
    . /etc/init.d/functions

    function start_hdfs(){
        ansible nn -m shell -a 'hadoop-daemon.sh start namenode'
        ansible snn -m shell -a 'hadoop-daemon.sh start secondarynamenode'
        ansible dn -m shell -a 'hadoop-daemon.sh start datanode'
        # Tell the user that the services were started
        action "Starting HDFS:" true
    }

    function stop_hdfs(){
        ansible nn -m shell -a 'hadoop-daemon.sh stop namenode'
        ansible snn -m shell -a 'hadoop-daemon.sh stop secondarynamenode'
        ansible dn -m shell -a 'hadoop-daemon.sh stop datanode'
        # Tell the user that the services were stopped
        action "Stopping HDFS:" true
    }

    function status_hdfs(){
        ansible all -m shell -a 'jps'
    }

    case $1 in
        "start")
            start_hdfs
            ;;
        "stop")
            stop_hdfs
            ;;
        "restart")
            stop_hdfs
            start_hdfs
            ;;
        "status")
            status_hdfs
            ;;
        *)
            echo "Usage: manage-hdfs.sh start|stop|restart|status"
            ;;
    esac
    [root@hadoop101.yinzhengjie.com ~]#

    [root@hadoop101.yinzhengjie.com ~]# vim /usr/local/bin/manage-yarn.sh
    [root@hadoop101.yinzhengjie.com ~]#
    [root@hadoop101.yinzhengjie.com ~]# cat /usr/local/bin/manage-yarn.sh
    #!/bin/bash
    #
    #********************************************************************
    #Author: yinzhengjie
    #QQ: 1053419035
    #Date: 2019-11-27
    #FileName: manage-yarn.sh
    #URL: http://www.cnblogs.com/yinzhengjie
    #Description: The test script
    #Copyright notice: original works, no reprint! Otherwise, legal liability will be investigated.
    #********************************************************************

    # Check whether the user passed an argument
    if [ $# -lt 1 ];then
        echo "Please provide an argument";
        exit 1
    fi

    # Source the functions shipped with the OS (the action function is needed here; run "declare -f action" to see its definition)
    . /etc/init.d/functions

    function start_yarn(){
        ansible nn -m shell -a 'yarn-daemon.sh start resourcemanager'
        ansible dn -m shell -a 'yarn-daemon.sh start nodemanager'
        # Tell the user that the services were started
        action "Starting YARN:" true
    }

    function stop_yarn(){
        ansible nn -m shell -a 'yarn-daemon.sh stop resourcemanager'
        ansible dn -m shell -a 'yarn-daemon.sh stop nodemanager'
        # Tell the user that the services were stopped
        action "Stopping YARN:" true
    }

    function status_yarn(){
        ansible all -m shell -a 'jps'
    }

    case $1 in
        "start")
            start_yarn
            ;;
        "stop")
            stop_yarn
            ;;
        "restart")
            stop_yarn
            start_yarn
            ;;
        "status")
            status_yarn
            ;;
        *)
            echo "Usage: manage-yarn.sh start|stop|restart|status"
            ;;
    esac
    [root@hadoop101.yinzhengjie.com ~]#
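
In this article HDFS is started before YARN, so the two scripts can be chained in that order. The wrapper below is only a sketch of that idea: `manage_cluster` is a hypothetical function, stopping in the reverse order is an assumption rather than something the article prescribes, and the `HDFS_CMD` and `YARN_CMD` variables exist only so the ordering can be tried without a cluster.

```shell
#!/bin/sh
# Commands to run; they default to the two scripts from this article.
HDFS_CMD=${HDFS_CMD:-manage-hdfs.sh}
YARN_CMD=${YARN_CMD:-manage-yarn.sh}

# Hypothetical wrapper: start HDFS before YARN, stop them in the reverse order (assumption).
manage_cluster() {
    case "$1" in
        start)   "$HDFS_CMD" start && "$YARN_CMD" start ;;
        stop)    "$YARN_CMD" stop; "$HDFS_CMD" stop ;;
        restart) manage_cluster stop; manage_cluster start ;;
        status)  "$YARN_CMD" status ;;   # runs jps on all hosts, so it covers both services
        *)       echo "Usage: manage_cluster start|stop|restart|status" >&2; return 1 ;;
    esac
}

# Dry run in a subshell with stand-in functions, so nothing real is started.
(
    fake_hdfs() { echo "hdfs $1"; }
    fake_yarn() { echo "yarn $1"; }
    HDFS_CMD=fake_hdfs
    YARN_CMD=fake_yarn
    manage_cluster start
)
```

The dry run prints the HDFS step before the YARN step; with the defaults left in place, `manage_cluster start` would call the real scripts in `/usr/local/bin`.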

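This article is from cnblogs (博客园), author: Yin Zhengjie. When reposting, please cite the original link: https://www.cnblogs.com/yinzhengjie/p/13123597.html. Personal WeChat: "JasonYin2020" (when adding me, please state where you found me and your purpose; paid service)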
