23. Redis in Practice

| root@k8s-master1:~/k8s-data/dockerfile/web/magedu/redis |

| |

| FROM harbor.nbrhce.com/baseimages/centos:7.9.2009 |

| |

| MAINTAINER zhangshijie "zhangshijie@magedu.net" |

| |

| ADD redis-4.0.14.tar.gz /usr/local/src |

| RUN ln -sv /usr/local/src/redis-4.0.14 /usr/local/redis \ |

| && yum install -y make gcc \ |

| && cd /usr/local/redis \ |

| && make \ |

| && cp src/redis-cli /usr/local/bin/ \ |

| && cp src/redis-server /usr/local/bin/ \ |

| && mkdir -pv /data/redis-data |

| ADD redis.conf /usr/local/redis/redis.conf |

| ADD run_redis.sh /usr/local/redis/run_redis.sh |

| RUN chmod +x /usr/local/redis/run_redis.sh |

| |

| EXPOSE 6379 |

| |

| CMD ["/usr/local/redis/run_redis.sh"] |

| root@k8s-master1:~/k8s-data/dockerfile/web/magedu/redis |

| |

| bind 0.0.0.0 |

| protected-mode yes |

| port 6379 |

| tcp-backlog 511 |

| timeout 0 |

| tcp-keepalive 300 |

| daemonize yes |

| supervised no |

| pidfile /var/run/redis_6379.pid |

| loglevel notice |

| logfile "" |

| databases 16 |

| always-show-logo yes |

| |

| save 900 1 |

| save 5 1 |

| save 300 10 |

| save 60 10000 |

| |

| stop-writes-on-bgsave-error no |

| rdbcompression yes |

| rdbchecksum yes |

| dbfilename dump.rdb |

| dir /data/redis-data |

| slave-serve-stale-data yes |

| slave-read-only yes |

| repl-diskless-sync no |

| repl-diskless-sync-delay 5 |

| repl-disable-tcp-nodelay no |

| slave-priority 100 |

| requirepass 123456 |

| lazyfree-lazy-eviction no |

| lazyfree-lazy-expire no |

| lazyfree-lazy-server-del no |

| slave-lazy-flush no |

| appendonly no |

| appendfilename "appendonly.aof" |

| appendfsync everysec |

| no-appendfsync-on-rewrite no |

| auto-aof-rewrite-percentage 100 |

| auto-aof-rewrite-min-size 64mb |

| aof-load-truncated yes |

| aof-use-rdb-preamble no |

| lua-time-limit 5000 |

| slowlog-log-slower-than 10000 |

| slowlog-max-len 128 |

| latency-monitor-threshold 0 |

| notify-keyspace-events "" |

| hash-max-ziplist-entries 512 |

| hash-max-ziplist-value 64 |

| list-max-ziplist-size -2 |

| list-compress-depth 0 |

| set-max-intset-entries 512 |

| zset-max-ziplist-entries 128 |

| zset-max-ziplist-value 64 |

| hll-sparse-max-bytes 3000 |

| activerehashing yes |

| client-output-buffer-limit normal 0 0 0 |

| client-output-buffer-limit slave 256mb 64mb 60 |

| client-output-buffer-limit pubsub 32mb 8mb 60 |

| hz 10 |

| aof-rewrite-incremental-fsync yes |
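
The `save <seconds> <changes>` lines in the configuration above define the RDB snapshot triggers: Redis writes `dump.rdb` once at least `<changes>` writes have happened within `<seconds>`, and any single rule is enough to trigger it. With `save 5 1` present, a snapshot can fire five seconds after any write, which is by far the most aggressive of the four rules. The following is a minimal sketch for listing those rules in plain words; `explain_save_rules` is a hypothetical helper name, not part of Redis.

```shell
# Sketch: print every RDB "save <seconds> <changes>" rule of a redis.conf in plain words.
# explain_save_rules is a hypothetical helper, not a Redis tool.
explain_save_rules() {
    # $1: path to a redis.conf file; commented-out and malformed save lines are skipped
    awk '$1 == "save" && NF == 3 {
        printf "snapshot if >= %d change(s) within %d s\n", $3, $2
    }' "$1"
}
```

Usage, assuming the path from the Dockerfile: `explain_save_rules /usr/local/redis/redis.conf`.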

| root@k8s-master1:~/k8s-data/dockerfile/web/magedu/redis |

| #!/bin/bash |

| |

| chmod 777 /usr/local/bin/redis-server |

| /usr/local/bin/redis-server /usr/local/redis/redis.conf |

| |

| tail -f /etc/hosts |

| root@k8s-master1:~/k8s-data/dockerfile/web/magedu/redis |

| |

| TAG=$1 |

| nerdctl build -t harbor.nbrhce.com/demo/redis:${TAG} . |

| sleep 3 |

| nerdctl push harbor.nbrhce.com/demo/redis:${TAG} |
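
The build script above takes the tag from `$1`. If it is run without an argument, `${TAG}` is empty and the image reference ends in a bare colon, and the push runs even when the build failed. The following is a rough sketch of a guarded variant; `image_ref` and `build_and_push` are hypothetical helper names, and the registry path is copied from the script above.

```shell
# Sketch of a guarded build script; image_ref and build_and_push are hypothetical helpers.
REGISTRY_REPO="harbor.nbrhce.com/demo/redis"

image_ref() {
    # Print the full image reference for tag $1, or fail if the tag is empty.
    if [ -z "${1:-}" ]; then
        echo "usage: $0 <tag>" >&2
        return 1
    fi
    printf '%s:%s\n' "$REGISTRY_REPO" "$1"
}

build_and_push() {
    # Push only if the tag is valid and the build succeeded.
    local ref
    ref=$(image_ref "${1:-}") || return 1
    nerdctl build -t "$ref" . && nerdctl push "$ref"
}
```

Usage: `build_and_push v4.0.14`, run from the directory that holds the Dockerfile.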

23.1 Single-node YAML

| root@k8s-master1:~/k8s-data/yaml/magedu/redis |

| kind: Deployment |

| |

| apiVersion: apps/v1 |

| metadata: |

|   labels: |

|     app: devops-redis |

|   name: deploy-devops-redis |

|   namespace: redis |

| spec: |

|   replicas: 1 |

|   selector: |

|     matchLabels: |

|       app: devops-redis |

|   template: |

|     metadata: |

|       labels: |

|         app: devops-redis |

|     spec: |

|       containers: |

|       - name: redis-container |

|         image: harbor.nbrhce.com/demo/redis:v4.0.14 |

|         imagePullPolicy: Always |

|         volumeMounts: |

|         - mountPath: "/data/redis-data/" |

|           name: redis-datadir |

|       volumes: |

|       - name: redis-datadir |

|         persistentVolumeClaim: |

|           claimName: redis-datadir-pvc-1 |

| |

| --- |

| kind: Service |

| apiVersion: v1 |

| metadata: |

|   labels: |

|     app: devops-redis |

|   name: srv-devops-redis |

|   namespace: redis |

| spec: |

|   type: NodePort |

|   ports: |

|   - name: http |

|     port: 6379 |

|     targetPort: 6379 |

|     nodePort: 36379 |

|   selector: |

|     app: devops-redis |

|   sessionAffinity: ClientIP |

|   sessionAffinityConfig: |

|     clientIP: |

|       timeoutSeconds: 10800 |

| |
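
The Service above pins `nodePort: 36379`. The default NodePort range in Kubernetes is 30000-32767, so this value is accepted only if the kube-apiserver was started with a wider `--service-node-port-range`. Which range this cluster uses is not stated here, so the widened range in the usage line below is an assumption. A small sketch for checking a port before applying the manifest (`nodeport_in_range` is a hypothetical helper):

```shell
# Sketch: check whether a nodePort falls inside the allowed range.
# nodeport_in_range is a hypothetical helper; defaults are the Kubernetes default range.
nodeport_in_range() {
    # $1: nodePort, $2/$3: optional lower/upper bound of the configured range
    local port=$1 min=${2:-30000} max=${3:-32767}
    [ "$port" -ge "$min" ] && [ "$port" -le "$max" ]
}
```

Usage: `nodeport_in_range 36379` fails with the defaults, while `nodeport_in_range 36379 30000 65000` succeeds for a cluster whose range was widened to that (assumed) span.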

| root@k8s-master1:~/k8s-data/yaml/magedu/redis/pv |

| --- |

| apiVersion: v1 |

| kind: PersistentVolume |

| metadata: |

|   name: redis-datadir-pv-1 |

| spec: |

|   capacity: |

|     storage: 10Gi |

|   accessModes: |

|   - ReadWriteOnce |

|   nfs: |

|     path: /data/k8sdata/redis-datadir-1 |

|     server: 10.0.0.109 |

| root@k8s-master1:~/k8s-data/yaml/magedu/redis/pv |

| --- |

| apiVersion: v1 |

| kind: PersistentVolumeClaim |

| metadata: |

|   name: redis-datadir-pvc-1 |

|   namespace: redis |

| spec: |

|   volumeName: redis-datadir-pv-1 |

|   accessModes: |

|   - ReadWriteOnce |

|   resources: |

|     requests: |

|       storage: 10Gi |

23.2 Cluster YAML

| root@k8s-master1:~/k8s-data/yaml/magedu/redis-cluster |

| apiVersion: v1 |

| kind: Service |

| metadata: |

|   name: redis |

|   namespace: redis-cluster |

|   labels: |

|     app: redis |

| spec: |

|   selector: |

|     app: redis |

|     appCluster: redis-cluster |

|   ports: |

|   - name: redis |

|     port: 6379 |

|   clusterIP: None |

| |

| --- |

| apiVersion: v1 |

| kind: Service |

| metadata: |

|   name: redis-access |

|   namespace: redis-cluster |

|   labels: |

|     app: redis |

| spec: |

|   selector: |

|     app: redis |

|     appCluster: redis-cluster |

|   ports: |

|   - name: redis-access |

|     protocol: TCP |

|     port: 6379 |

|     targetPort: 6379 |

| |

| --- |

| apiVersion: apps/v1 |

| kind: StatefulSet |

| metadata: |

|   name: redis |

|   namespace: redis-cluster |

| spec: |

|   serviceName: redis |

|   replicas: 3 |

|   selector: |

|     matchLabels: |

|       app: redis |

|       appCluster: redis-cluster |

|   template: |

|     metadata: |

|       labels: |

|         app: redis |

|         appCluster: redis-cluster |

|     spec: |

|       terminationGracePeriodSeconds: 20 |

|       affinity: |

|         podAntiAffinity: |

|           preferredDuringSchedulingIgnoredDuringExecution: |

|           - weight: 100 |

|             podAffinityTerm: |

|               labelSelector: |

|                 matchExpressions: |

|                 - key: app |

|                   operator: In |

|                   values: |

|                   - redis |

|               topologyKey: kubernetes.io/hostname |

|       containers: |

|       - name: redis |

|         image: redis:4.0.14 |

|         command: |

|         - "redis-server" |

|         args: |

|         - "/etc/redis/redis.conf" |

|         - "--protected-mode" |

|         - "no" |

|         resources: |

|           requests: |

|             cpu: "500m" |

|             memory: "500Mi" |

|         ports: |

|         - containerPort: 6379 |

|           name: redis |

|           protocol: TCP |

|         - containerPort: 16379 |

|           name: cluster |

|           protocol: TCP |

|         volumeMounts: |

|         - name: conf |

|           mountPath: /etc/redis |

|         - name: data |

|           mountPath: /var/lib/redis |

|       volumes: |

|       - name: conf |

|         configMap: |

|           name: redis-conf |

|           items: |

|           - key: redis.conf |

|             path: redis.conf |

|   volumeClaimTemplates: |

|   - metadata: |

|       name: data |

|       namespace: redis-cluster |

|     spec: |

|       accessModes: [ "ReadWriteOnce" ] |

|       resources: |

|         requests: |

|           storage: 5Gi |

| root@k8s-master1:~/k8s-data/yaml/magedu/redis-cluster |

| appendonly yes |

| cluster-enabled yes |

| cluster-config-file /var/lib/redis/nodes.conf |

| cluster-node-timeout 5000 |

| dir /var/lib/redis |

| port 6379 |

| kubectl create configmap redis-conf --from-file=redis.conf -n redis-cluster |

| kubectl get configmaps -n redis-cluster redis-conf -o yaml |
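
In the cluster `redis.conf` above, both `dir` and `cluster-config-file` point into `/var/lib/redis`, which is where the StatefulSet mounts the `data` volume. That matters because `nodes.conf` holds the node ID and the cluster topology; if it sat on the container filesystem, a restarted pod would lose that state. A minimal sketch for checking this before creating the ConfigMap (`paths_on_volume` is a hypothetical helper):

```shell
# Sketch: verify that "dir" and "cluster-config-file" both live on the persistent mount.
# paths_on_volume is a hypothetical helper name.
paths_on_volume() {
    # $1: redis.conf path, $2: mount path of the data volume (no trailing slash)
    awk -v m="$2" '
        $1 == "dir" || $1 == "cluster-config-file" {
            seen++
            # the configured path must equal the mount path or sit below it
            if (index($2 "/", m "/") != 1) bad++
        }
        END { exit !(seen == 2 && bad == 0) }
    ' "$1"
}
```

Usage: `paths_on_volume redis.conf /var/lib/redis && kubectl create configmap redis-conf --from-file=redis.conf -n redis-cluster`.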

| |

| root@k8s-master1:~ |

| |

| root@k8s-master1:~/k8s-data/yaml/magedu/redis-cluster/pv |

| apiVersion: v1 |

| kind: PersistentVolume |

| metadata: |

|   name: redis-cluster-pv0 |

| spec: |

|   capacity: |

|     storage: 5Gi |

|   accessModes: |

|   - ReadWriteOnce |

|   nfs: |

|     server: 10.0.0.109 |

|     path: /data/k8sdata/redis0 |

| |

| --- |

| apiVersion: v1 |

| kind: PersistentVolume |

| metadata: |

|   name: redis-cluster-pv1 |

| spec: |

|   capacity: |

|     storage: 5Gi |

|   accessModes: |

|   - ReadWriteOnce |

|   nfs: |

|     server: 10.0.0.109 |

|     path: /data/k8sdata/redis1 |

| |

| --- |

| apiVersion: v1 |

| kind: PersistentVolume |

| metadata: |

|   name: redis-cluster-pv2 |

| spec: |

|   capacity: |

|     storage: 5Gi |

|   accessModes: |

|   - ReadWriteOnce |

|   nfs: |

|     server: 10.0.0.109 |

|     path: /data/k8sdata/redis2 |
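
Each PersistentVolume above points at its own directory on the NFS server, and those directories have to exist before a pod can mount them. The sketch below creates them in one go. It assumes it is run on the NFS server and that the parent directory is already exported; `make_redis_exports` is a hypothetical helper name.

```shell
# Sketch: create one data directory per PV (redis0, redis1, ...) under a base path.
# make_redis_exports is a hypothetical helper; run it on the NFS server.
make_redis_exports() {
    # $1: base directory, $2: number of PVs
    local base=$1 count=$2 i=0
    while [ "$i" -lt "$count" ]; do
        mkdir -p "$base/redis$i"
        i=$((i + 1))
    done
}
```

Usage for the three PVs defined above: `make_redis_exports /data/k8sdata 3`.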

| kubectl run -it ubuntu1804 --image=ubuntu:18.04 --restart=Never -n redis-cluster bash |

| apt update |

| apt install python2.7 python-pip redis-tools dnsutils iputils-ping net-tools |

| pip install --upgrade pip |

| pip install redis-trib==0.5.1 |

| |

| |

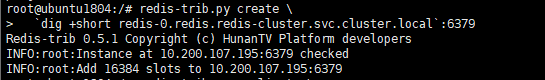

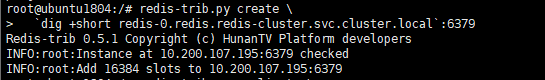

| redis-trib.py create \ |

| `dig +short redis-0.redis.redis-cluster.svc.cluster.local`:6379 |

| |

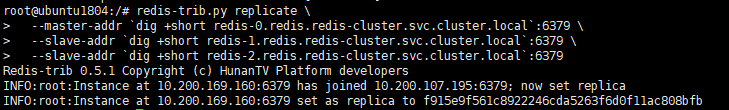

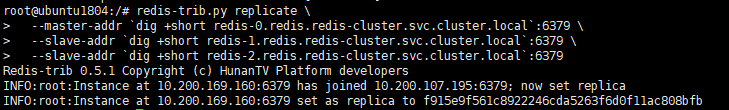

| redis-trib.py replicate \ |

| --master-addr `dig +short redis-0.redis.redis-cluster.svc.cluster.local`:6379 \ |

| --slave-addr `dig +short redis-1.redis.redis-cluster.svc.cluster.local`:6379 |

| |

| redis-trib.py replicate \ |

| --master-addr `dig +short redis-0.redis.redis-cluster.svc.cluster.local`:6379 \ |

| --slave-addr `dig +short redis-2.redis.redis-cluster.svc.cluster.local`:6379 |

| |

| |

| |

| redis-trib.py create \ |

| `dig +short redis-0.redis.redis-cluster.svc.cluster.local`:6379 \ |

| `dig +short redis-1.redis.redis-cluster.svc.cluster.local`:6379 \ |

| `dig +short redis-2.redis.redis-cluster.svc.cluster.local`:6379 |

| |

| |

| redis-trib.py replicate \ |

| --master-addr `dig +short redis-0.redis.redis-cluster.svc.cluster.local`:6379 \ |

| --slave-addr `dig +short redis-3.redis.redis-cluster.svc.cluster.local`:6379 |

| |

| redis-trib.py replicate \ |

| --master-addr `dig +short redis-1.redis.redis-cluster.svc.cluster.local`:6379 \ |

| --slave-addr `dig +short redis-4.redis.redis-cluster.svc.cluster.local`:6379 |

| |

| redis-trib.py replicate \ |

| --master-addr `dig +short redis-2.redis.redis-cluster.svc.cluster.local`:6379 \ |

| --slave-addr `dig +short redis-5.redis.redis-cluster.svc.cluster.local`:6379 |
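
Pods of a StatefulSet behind a headless Service get stable DNS names of the form `<pod>.<service>.<namespace>.svc.<cluster-domain>`, which is what the `dig +short` calls above resolve. The six-node commands reference `redis-3` through `redis-5`, which exist only when the StatefulSet runs six replicas; the manifest shown earlier sets `replicas: 3`. The sketch below derives the names and the master/replica pairing used above. `pod_fqdn` and `replica_pairs` are hypothetical helpers, and `cluster.local` is taken from the commands above.

```shell
# Sketch: derive pod DNS names and master/replica ordinal pairs for the StatefulSet "redis".
# pod_fqdn and replica_pairs are hypothetical helper names.
pod_fqdn() {
    # $1: StatefulSet ordinal (0, 1, 2, ...)
    printf 'redis-%s.redis.redis-cluster.svc.cluster.local\n' "$1"
}

replica_pairs() {
    # $1: number of masters N; prints "master replica" ordinals, replica i+N follows master i
    local n=$1 i=0
    while [ "$i" -lt "$n" ]; do
        echo "$i $((i + n))"
        i=$((i + 1))
    done
}
```

For three masters, `replica_pairs 3` yields the pairs 0/3, 1/4 and 2/5, matching the three `replicate` calls above.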

| 127.0.0.1:6379> CLUSTER INFO |

| cluster_state:ok |

| cluster_slots_assigned:16384 |

| cluster_slots_ok:16384 |

| cluster_slots_pfail:0 |

| cluster_slots_fail:0 |

| cluster_known_nodes:3 |

| cluster_size:1 |

| cluster_current_epoch:2 |

| cluster_my_epoch:0 |

| cluster_stats_messages_ping_sent:1337 |

| cluster_stats_messages_pong_sent:1275 |

| cluster_stats_messages_sent:2612 |

| cluster_stats_messages_ping_received:1275 |

| cluster_stats_messages_pong_received:1337 |

| cluster_stats_messages_received:2612 |

| |
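
In the `CLUSTER INFO` output above, the lines that matter most are `cluster_state:ok` and `cluster_slots_assigned:16384`, meaning every hash slot has an owner. `cluster_size:1` with `cluster_known_nodes:3` corresponds to one master plus two replicas. A minimal sketch that turns this check into an exit code (`cluster_ok` is a hypothetical helper):

```shell
# Sketch: succeed only if CLUSTER INFO reports a healthy, fully assigned cluster.
# cluster_ok is a hypothetical helper; it reads the command output on stdin.
cluster_ok() {
    # strip carriage returns first, since redis-cli output may use CRLF line endings
    tr -d '\r' | awk -F: '
        $1 == "cluster_state"          { state = $2 }
        $1 == "cluster_slots_assigned" { slots = $2 }
        END { exit !(state == "ok" && slots == 16384) }
    '
}
```

Usage from the tool pod: `redis-cli -h redis-0.redis.redis-cluster.svc.cluster.local cluster info | cluster_ok && echo healthy`.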

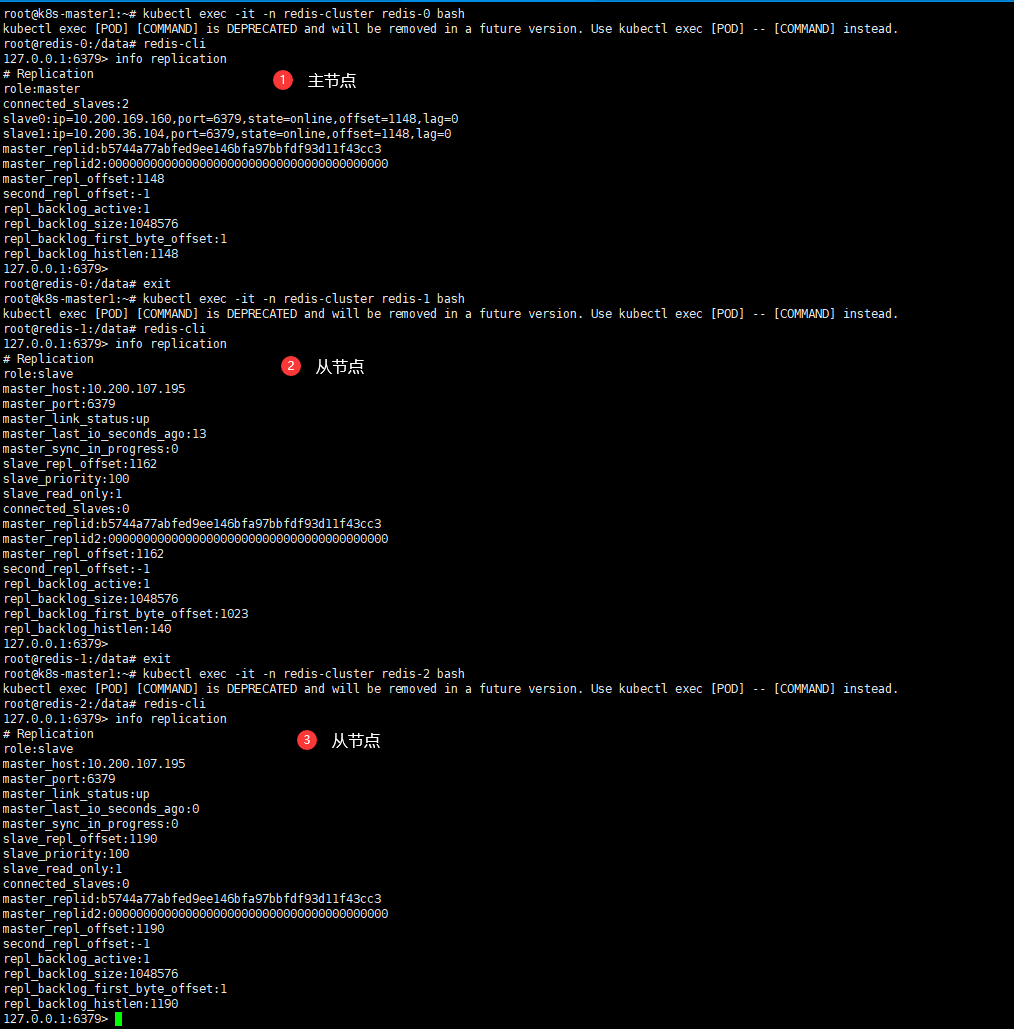

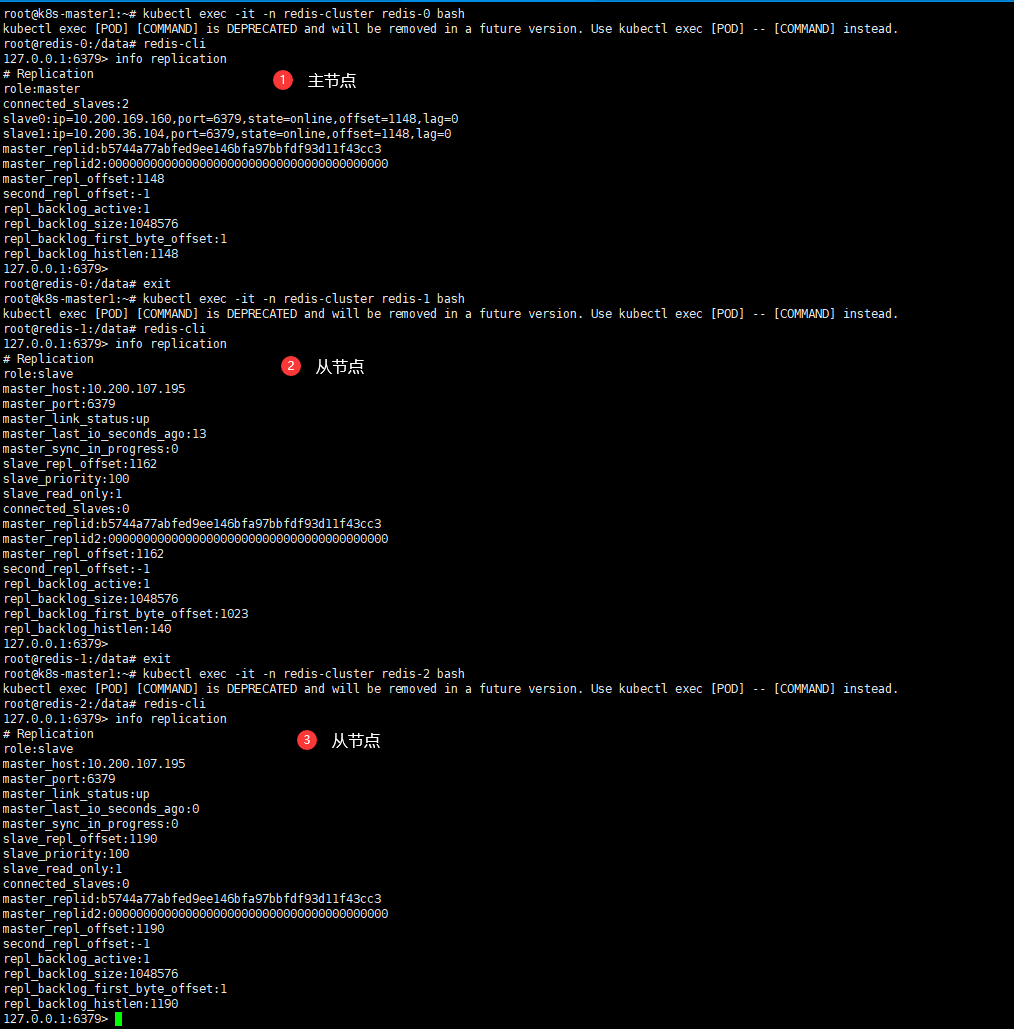

| 127.0.0.1:6379> info replication |

| role:master |

| connected_slaves:2 |

| slave0:ip=10.200.169.160,port=6379,state=online,offset=42,lag=1 |

| slave1:ip=10.200.36.104,port=6379,state=online,offset=42,lag=0 |

| master_replid:b1a3f5758b0741d1591ca58fb1452a3fbf6f7910 |

| master_replid2:0000000000000000000000000000000000000000 |

| master_repl_offset:42 |

| second_repl_offset:-1 |

| repl_backlog_active:1 |

| repl_backlog_size:1048576 |

| repl_backlog_first_byte_offset:1 |

| repl_backlog_histlen:42 |

| 127.0.0.1:6379> |

| |

| 127.0.0.1:6379> CLUSTER NODES |

| a2841545b149a760c6820fd7ce4ed1dcd353dedc 10.200.169.160:6379@16379 slave f915e9f561c8922246cda5263f6d0f11ac808bfb 0 1670735335364 1 connected |

| f915e9f561c8922246cda5263f6d0f11ac808bfb 10.200.107.195:6379@16379 myself,master - 0 1670735333000 0 connected 0-16383 |

| aeccf2ee66fc6ee9d59d86f37b27193b539a1851 10.200.36.104:6379@16379 slave f915e9f561c8922246cda5263f6d0f11ac808bfb 0 1670735335364 2 connected |
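
Each `CLUSTER NODES` line above starts with the node ID, the address, and a flags field such as `myself,master` or `slave`. Counting the flags is a quick way to confirm the topology. The sketch below does this for output piped in on stdin; `count_roles` is a hypothetical helper.

```shell
# Sketch: count masters and replicas in CLUSTER NODES output read from stdin.
# count_roles is a hypothetical helper; field 3 of each line is the flags list.
count_roles() {
    awk '
        $3 ~ /master/ { m++ }
        $3 ~ /slave/  { s++ }
        END { printf "masters=%d slaves=%d\n", m, s }
    '
}
```

Fed the three lines shown above, it reports one master and two slaves.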

- Result screenshot