20. Volume: An Introduction to Storage Volumes

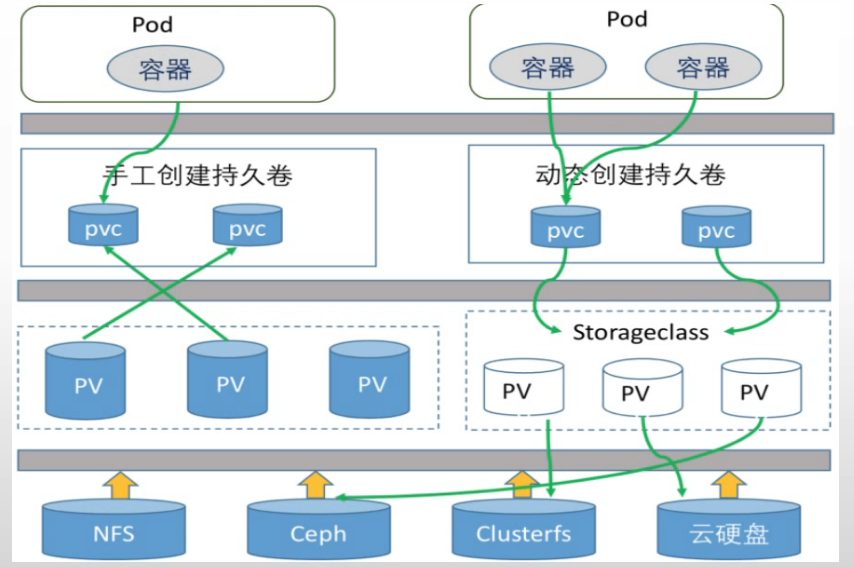

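A Volume decouples specified data from the container and stores it in a designated location. Volume types differ in what they offer; volumes backed by network storage can share data between containers and persist it beyond the life of a Pod.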

Static volumes require the PV and PVC to be created manually before use; the PVC is then mounted into the Pod.

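Commonly used volume types:

- Secret: an object that holds a small amount of sensitive data, such as a password, token, or key
- configmap: configuration files
- emptyDir: local ephemeral volume
- hostPath: local volume on the node
- nfs: network storage volume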
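Secret and configmap are the only types in this list that the following sections do not demonstrate. Below is a minimal sketch of mounting both into a Pod as files, assuming the `default` namespace; the object names (`demo-secret`, `demo-config`, `volume-demo`), the keys, and the mount paths are hypothetical placeholders.

```yaml
# Hypothetical example: every name, key and path below is a placeholder.
apiVersion: v1
kind: Secret
metadata:
  name: demo-secret
  namespace: default
type: Opaque
stringData:                 # plain text here; the API server stores it base64-encoded in .data
  password: "changeme"
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: demo-config
  namespace: default
data:
  app.conf: |
    listen_port=8080
---
apiVersion: v1
kind: Pod
metadata:
  name: volume-demo
  namespace: default        # must be the same namespace as the Secret and ConfigMap
spec:
  containers:
  - name: app
    image: nginx:1.20.0
    volumeMounts:
    - name: secret-vol
      mountPath: /etc/demo-secret   # each key becomes a file, e.g. /etc/demo-secret/password
      readOnly: true
    - name: config-vol
      mountPath: /etc/demo-config   # e.g. /etc/demo-config/app.conf
  volumes:
  - name: secret-vol
    secret:
      secretName: demo-secret
  - name: config-vol
    configMap:
      name: demo-config
```

Each key becomes one file under its mount path; mounting the Secret `readOnly` is a common precaution rather than a requirement.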

20.1 emptyDir

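An emptyDir volume is created when a Pod is assigned to a node, and it exists for as long as that Pod runs on the node. As the name says, it starts out empty. All containers in the Pod can read and write the same files in the emptyDir volume, although each container may mount it at the same or a different path. When the Pod is removed from the node for any reason, the data in the emptyDir is deleted permanently.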

```
root@deploy-harbor:~/kubernetes/deployment
```

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: ng-deploy-80
  template:
    metadata:
      labels:
        app: ng-deploy-80
    spec:
      volumes:
      - name: cache-volume
        emptyDir: {}
      containers:
      - name: ng-deploy-80
        image: nginx:1.20.0
        ports:
        - containerPort: 80
        volumeMounts:
        - mountPath: /cache
          name: cache-volume
```

```
root@deploy-harbor:~/kubernetes/deployment
root@nginx-deployment-6dd4d8f9f7-7q2g4:/
root@k8s-node1:~
/var/lib/containerd/io.containerd.snapshotter.v1.overlayfs/snapshots/1404/fs/70.txt
/run/containerd/io.containerd.runtime.v2.task/k8s.io/ea192eb54114c29e0f811399a836415956c47cab310245fe5cb31ceba0063c93/rootfs/70.txt
```
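The example above uses a single container, so the sharing between containers is not visible. A minimal sketch of two containers in one Pod mounting the same emptyDir at different paths; the Pod name, the `busybox:1.36` image, the writer's shell loop, and the optional `medium`/`sizeLimit` settings are assumptions for illustration.

```yaml
# Hypothetical example: names, images and the writer command are placeholders.
apiVersion: v1
kind: Pod
metadata:
  name: emptydir-share-demo
  namespace: default
spec:
  volumes:
  - name: shared-data
    emptyDir:
      medium: Memory        # optional: back the volume with tmpfs (RAM) instead of node disk
      sizeLimit: 64Mi       # optional: cap on how much the volume may use
  containers:
  - name: writer
    image: busybox:1.36
    # append a timestamp to the shared file every 5 seconds
    command: ["sh", "-c", "while true; do date >> /output/log.txt; sleep 5; done"]
    volumeMounts:
    - name: shared-data
      mountPath: /output                 # mounted at /output in this container
  - name: reader
    image: nginx:1.20.0
    volumeMounts:
    - name: shared-data
      mountPath: /usr/share/nginx/html   # same volume, different path
```

Because both containers see the same directory, nginx should serve the writer's file at `/log.txt`. With `medium: Memory` the data lives in RAM and counts against the containers' memory usage.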

20.2 hostPath

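A hostPath volume mounts a file or directory from the host node's filesystem into the Pod. When the Pod is deleted, the volume and its data on the node are not deleted.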

```
root@deploy-harbor:~/kubernetes/hostPath
```

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: ng-deploy-80
  template:
    metadata:
      labels:
        app: ng-deploy-80
    spec:
      volumes:
      - name: cache-volume
        hostPath:
          path: /data/kubernetes
      containers:
      - name: ng-deploy-80
        image: nginx:1.20.0
        ports:
        - containerPort: 80
        volumeMounts:
        - mountPath: /cache
          name: cache-volume
```

```
root@deploy-harbor:~/kubernetes/hostPath
root@nginx-deployment-58cf79797f-kmnbb:/
root@nginx-deployment-58cf79797f-kmnbb:/cache
```

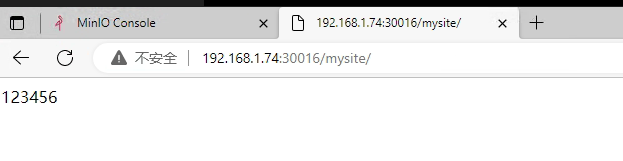

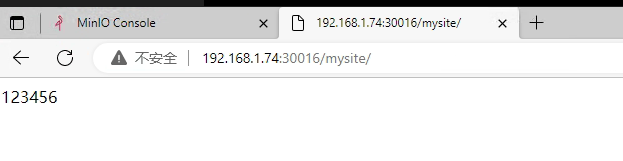
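Because hostPath data lives on a single node, a Pod that is rescheduled onto another node (or a second replica on another node) sees a different, possibly empty, directory. A minimal sketch that pins the Pod to one node and lets the kubelet create the directory if it is missing; the Pod name is hypothetical, and it assumes node `k8s-node1` carries the standard `kubernetes.io/hostname=k8s-node1` label.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: hostpath-demo                    # hypothetical name
  namespace: default
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node1    # assumption: pin to one node so the data is always the same directory
  containers:
  - name: ng-deploy-80
    image: nginx:1.20.0
    volumeMounts:
    - mountPath: /cache
      name: cache-volume
  volumes:
  - name: cache-volume
    hostPath:
      path: /data/kubernetes
      type: DirectoryOrCreate            # create the directory (mode 0755) if it does not exist yet
```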

20.3 NFS Shared Storage

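An nfs volume mounts an existing NFS (Network File System) share into a container. Unlike emptyDir, it does not lose data: when the Pod is deleted, the contents of the nfs volume are preserved and the volume is merely unmounted. This means data can be uploaded to the NFS share in advance and used as soon as the Pod starts. Because it is network storage, multiple Pods can share the same data, i.e. one NFS share can be mounted, read and written by several Pods at the same time.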

```
root@deploy-harbor:~/kubernetes/nfs
```

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: ng-deploy-80
  template:
    metadata:
      labels:
        app: ng-deploy-80
    spec:
      volumes:
      - name: my-nfs-volume
        nfs:
          server: 192.168.1.75
          path: /data/k8sdata
      containers:
      - name: ng-deploy-80
        image: nginx:1.20.0
        ports:
        - containerPort: 80
        volumeMounts:
        - mountPath: /usr/share/nginx/html/mysite
          name: my-nfs-volume
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-deploy-80
spec:
  ports:
  - name: http
    port: 81
    targetPort: 80
    nodePort: 30016
    protocol: TCP
  type: NodePort
  selector:
    app: ng-deploy-80
```

```
root@deploy-harbor:~/kubernetes/nfs
root@deploy-harbor:/data/k8sdata
total 8
drwxr-xr-x 2 root root 4096 Nov 24 14:15 ./
drwxr-xr-x 12 root root 4096 Nov 24 14:15 ../
root@deploy-harbor:/data/k8sdata
```

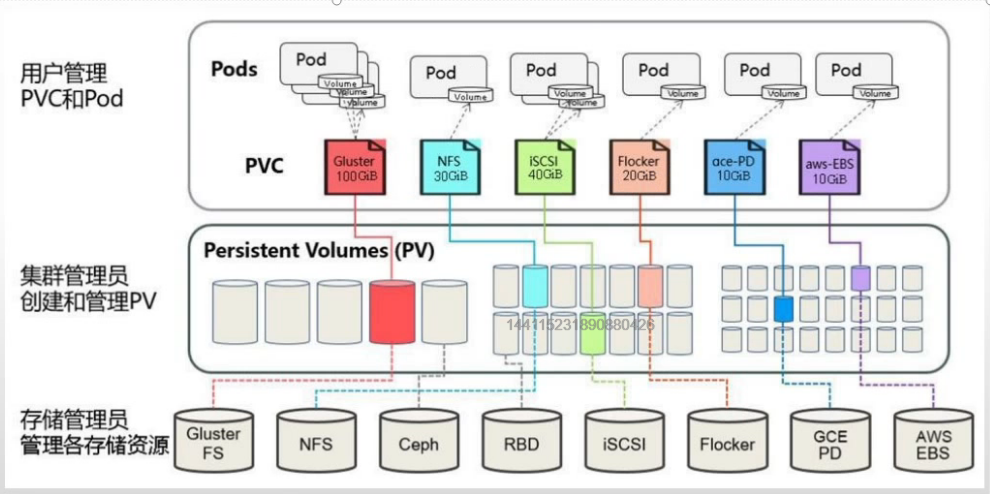

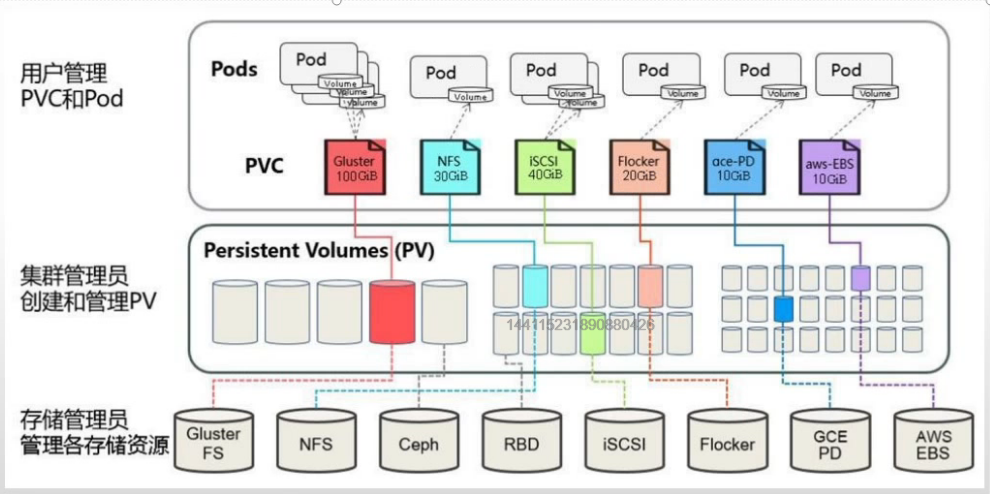
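To see the sharing in practice, a second workload can mount the same export. A minimal sketch, assuming the export `/data/k8sdata` on `192.168.1.75` from the example above and an NFS client installed on every node (for example `nfs-common` on Ubuntu), since the kubelet performs the mount on the node; the Deployment name `nginx-reader` is hypothetical.

```yaml
# Hypothetical second consumer of the same NFS export, mounted read-only.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-reader
  namespace: default
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-reader
  template:
    metadata:
      labels:
        app: nginx-reader
    spec:
      volumes:
      - name: my-nfs-volume
        nfs:
          server: 192.168.1.75
          path: /data/k8sdata
          readOnly: true        # this Pod may read but not modify the shared data
      containers:
      - name: nginx-reader
        image: nginx:1.20.0
        volumeMounts:
        - mountPath: /usr/share/nginx/html/mysite
          name: my-nfs-volume
```

A file placed in `/data/k8sdata` on the NFS server should then be visible under `/usr/share/nginx/html/mysite` in the Pods of both Deployments.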

20.4 PV/PVC

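PV/PVC decouple the Pod from the storage, so the storage can be changed without modifying the Pod.

Compared with mounting NFS directly, PV and PVC also let you manage space allocation on the storage server, storage access permissions, and so on at the PV/PVC level.

Kubernetes has supported PersistentVolume and PersistentVolumeClaim since version 1.0.

PV: a piece of network storage in the cluster that has been provisioned by a Kubernetes administrator. It is a cluster-level resource, i.e. it does not belong to any namespace. The data in a PV is ultimately stored on the backing storage hardware. A Pod cannot mount a PV directly: the PV must be bound to a PVC, and the Pod mounts the PVC. PVs support NFS, Ceph, commercial storage, cloud-provider-specific storage and more, and you can define whether a PV is block or file storage, its capacity, its access modes, and so on. A PV's lifecycle is independent of any Pod: deleting a Pod that uses the PV does not affect the data in the PV.

PVC: a request for storage. The Pod mounts the PVC and stores its data there, and the PVC must be bound to a PV before it can be used. A PVC is namespaced, so it is created in a specific namespace and the Pod must run in the same namespace as the PVC. A PVC can request a specific size and access mode, and deleting a Pod that uses the PVC does not affect the data in the PVC.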

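- PV parameters. The size requested by the PVC must not exceed the PV's capacity, otherwise the PVC cannot bind and stays Pending; giving both the same size, as in these examples, is the simplest.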

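  - `capacity`: the size of the volume
  - `accessModes`:
    - `ReadWriteOnce`: the PV can be mounted read-write by a single node (RWO)
    - `ReadOnlyMany`: the PV can be mounted by many nodes, but read-only (ROX)
    - `ReadWriteMany`: the PV can be mounted read-write by many nodes (RWX)
  - `persistentVolumeReclaimPolicy` (see `kubectl explain PersistentVolume.spec.persistentVolumeReclaimPolicy`):
    - `Retain`: when the bound PVC is deleted, the PV and its data are kept as-is and must be cleaned up manually by an administrator
    - `Recycle`: reclaim the space by deleting all data on the volume (including directories and hidden files); only NFS and hostPath support it, and it is deprecated
    - `Delete`: automatically delete the PV together with its backing storage
  - `volumeMode`: whether the volume is used as a raw block device or as a filesystem; defaults to `Filesystem`
  - `mountOptions`: additional options used when the volume is mounted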

```
root@deploy-harbor:~/kubernetes/pv-pvc
```

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: myserver-myapp-static-pv
  # no namespace: a PV is cluster-scoped, so a namespace field here would be ignored
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteOnce
  nfs:
    path: /data/k8sdata/myserver/myappdata
    server: 192.168.1.75
```
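The PV above sets only the required fields. A sketch of a variant that also sets the optional parameters listed earlier; the PV name, the label, the directory `/data/k8sdata/myserver/myappdata2` (which would have to exist on the NFS server beforehand), and the `nfsvers=4.1` mount option are assumptions.

```yaml
# Hypothetical variant of the static PV with the optional fields spelled out.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: myserver-myapp-static-pv-2        # hypothetical name
  labels:
    app: myserver-myapp                   # hypothetical label, lets a PVC select this PV
spec:
  capacity:
    storage: 5Gi
  volumeMode: Filesystem                  # NFS can only be used as a filesystem, not a raw block device
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain   # keep the PV and its data after the PVC is deleted
  mountOptions:
    - nfsvers=4.1                         # passed to the NFS mount on the node
  nfs:
    path: /data/k8sdata/myserver/myappdata2
    server: 192.168.1.75
```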

- PVC parameters (the requested size must not exceed the capacity of the PV the claim binds to)

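  - `accessModes`: the requested access modes, with the same values as for a PV: `ReadWriteOnce` (RWO), `ReadOnlyMany` (ROX), `ReadWriteMany` (RWX)
  - `resources`: the amount of storage requested (`resources.requests.storage`)
  - `selector`: a label selector that restricts which PVs the claim may bind to, using `matchLabels` or `matchExpressions`
  - `volumeName`: bind to a specific PV by name
  - `volumeMode`: whether the claim uses a raw block device or a filesystem; defaults to `Filesystem`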

```
root@deploy-harbor:~/kubernetes/pv-pvc
```

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: myserver-myapp-static-pvc
  namespace: myserver
spec:
  volumeName: myserver-myapp-static-pv
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
```
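Instead of naming a PV with `volumeName`, a PVC can pick one by label through `selector`. A minimal sketch, assuming a pre-created PV labeled `app=myserver-myapp` with at least 5Gi and `ReadWriteMany`; the PVC name and the label are hypothetical. The empty `storageClassName` keeps a default StorageClass, if the cluster has one, from being assigned to the claim, because claims with a selector are not dynamically provisioned.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: myserver-myapp-selector-pvc   # hypothetical name
  namespace: myserver
spec:
  storageClassName: ""                # bind only to pre-created PVs without a class
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 5Gi                    # must not exceed the capacity of the PV it binds to
  selector:
    matchLabels:
      app: myserver-myapp             # only PVs carrying this (hypothetical) label are candidates
```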

```
root@deploy-harbor:~/kubernetes/pv-pvc
```

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  namespace: myserver
spec:
  replicas: 2
  selector:
    matchLabels:
      app: ng-deploy-80
  template:
    metadata:
      labels:
        app: ng-deploy-80
    spec:
      volumes:
      - name: static-datadir
        persistentVolumeClaim:
          claimName: myserver-myapp-static-pvc
      containers:
      - name: ng-deploy-80
        image: nginx:1.20.0
        ports:
        - containerPort: 80
        volumeMounts:
        - mountPath: /usr/share/nginx/html/mysite
          name: static-datadir
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-deploy-80
  namespace: myserver   # a Service only selects Pods in its own namespace, so it must match the Deployment
spec:
  ports:
  - name: http
    port: 81
    targetPort: 80
    nodePort: 30016
    protocol: TCP
  type: NodePort
  selector:
    app: ng-deploy-80
```

```
root@deploy-harbor:~/kubernetes/pv-pvc
root@nginx-deployment-7d499f5b5f-tt8w7:/
Filesystem 1K-blocks Used Available Use% Mounted on
overlay 19430032 10994160 7423548 60% /
tmpfs 65536 0 65536 0% /dev
tmpfs 999664 0 999664 0% /sys/fs/cgroup
shm 65536 0 65536 0% /dev/shm
/dev/mapper/ubuntu--vg-ubuntu--lv 19430032 10994160 7423548 60% /etc/hosts
tmpfs 1692132 12 1692120 1% /run/secrets/kubernetes.io/serviceaccount
192.168.1.75:/data/k8sdata/myserver/myappdata 19430400 15007232 3410432 82% /usr/share/nginx/html/mysite
tmpfs 999664 0 999664 0% /proc/acpi
tmpfs 999664 0 999664 0% /proc/scsi
tmpfs 999664 0 999664 0% /sys/firmware

root@nginx-deployment-7d499f5b5f-tt8w7:/
index
root@nginx-deployment-7d499f5b5f-tt8w7:/
```

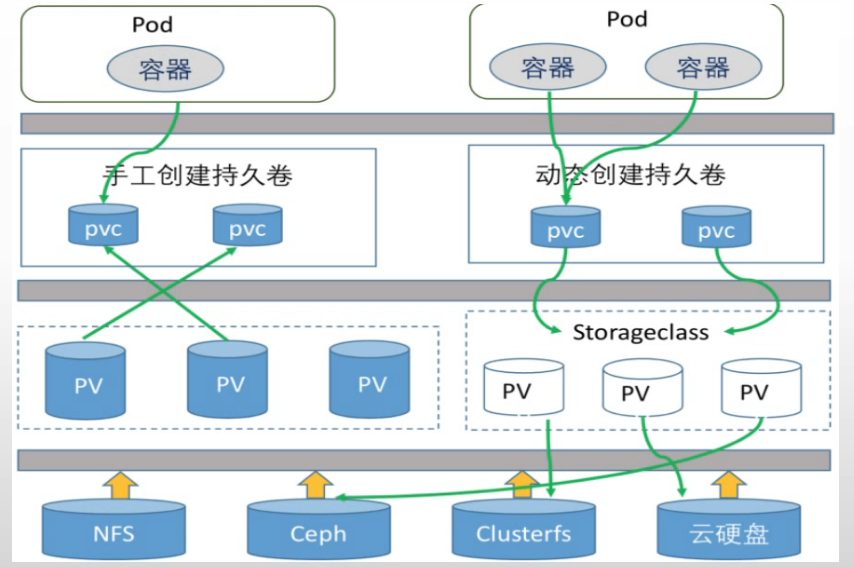

20.5 Volume: Storage Volume Types

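- static: a static volume. The PV must be created manually before use, then a PVC is created and bound to it, and finally the PVC is mounted into the Pod. Suitable for workloads whose PVs and PVCs are relatively fixed.
- dynamic: a dynamic volume. A StorageClass is created first; later, whenever a PVC that references the StorageClass is created for a Pod, the matching PV is provisioned automatically. Suitable for stateful clusters such as MySQL with one primary and multiple replicas, or ZooKeeper clusters.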

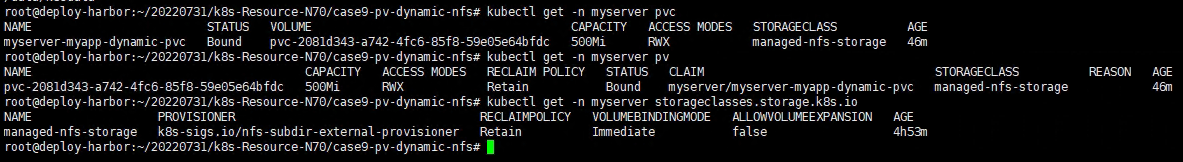
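The stateful-cluster case above is where dynamic provisioning pays off: a StatefulSet's `volumeClaimTemplates` makes the controller create one PVC per replica, and the StorageClass provisions a PV for each. A minimal sketch, assuming the `managed-nfs-storage` class from 20.6 and an existing headless Service named `web`; all names, the replica count, and the size are placeholders.

```yaml
# Hypothetical example: names, replica count and size are placeholders.
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: web
  namespace: myserver
spec:
  serviceName: web                  # assumes a headless Service named "web" exists
  replicas: 3
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - name: nginx
        image: nginx:1.20.0
        volumeMounts:
        - name: data
          mountPath: /usr/share/nginx/html
  volumeClaimTemplates:             # one PVC per replica, each with its own dynamically created PV
  - metadata:
      name: data
    spec:
      storageClassName: managed-nfs-storage
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          storage: 1Gi
```

The PVCs are named `<template>-<statefulset>-<ordinal>`, here `data-web-0` to `data-web-2`, and by default they are kept when the StatefulSet is scaled down or deleted.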

20.6 StorageClass (sc) Dynamic Storage

Download location (the manifests must be edited manually for your environment):

Releases · kubernetes-sigs/nfs-subdir-external-provisioner (github.com)

```
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
```

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: nfs
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: nfs
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: nfs
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: nfs
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: nfs
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: nfs
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
```

- 2-storageclass.yaml: creates the dynamic StorageClass

```
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
```

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
reclaimPolicy: Retain
mountOptions:
  - noatime
parameters:
  archiveOnDelete: "true"
```
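A sketch of a variant of this StorageClass that is marked as the cluster default and removes a volume's data when its claim is deleted. The class name `nfs-storage-default` is hypothetical; the `provisioner` value must equal the `PROVISIONER_NAME` env var of the provisioner Deployment, and only one class in the cluster should carry the default annotation.

```yaml
# Hypothetical variant of managed-nfs-storage; the class name is a placeholder.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storage-default
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"   # PVCs that omit storageClassName get this class
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner   # must match PROVISIONER_NAME in the provisioner Deployment
reclaimPolicy: Delete             # deleting the PVC also deletes its dynamically created PV
mountOptions:
  - noatime
parameters:
  archiveOnDelete: "false"        # on delete, remove the directory instead of renaming it to archived-*
```

With `reclaimPolicy: Retain`, as in the original class, the PV and its directory stay in place after the PVC is deleted, so `archiveOnDelete` does not come into play.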

- 3-nfs-provisioner.yaml: defines the NFS server and export on which the PVs are created

```
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
```

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  namespace: nfs
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-qingdao.aliyuncs.com/zhangshijie/nfs-subdir-external-provisioner:v4.0.2
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s-sigs.io/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 192.168.1.75
            - name: NFS_PATH
              value: /data/volumes
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.1.75
            path: /data/volumes
```

```
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
/data/k8sdata *(rw,no_root_squash)
/data/volumes *(rw,no_root_squash)

root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
Export list for deploy-harbor:
/data/volumes *
/data/k8sdata *
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
```

```yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: myserver-myapp-dynamic-pvc
  namespace: myserver
spec:
  storageClassName: managed-nfs-storage
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 500Mi
```

```
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
```

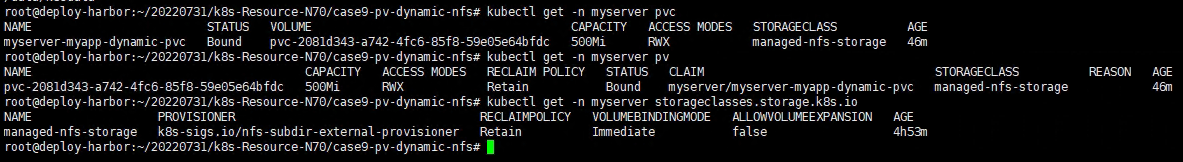

```
NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE
myserver-myapp-dynamic-pvc Bound pvc-2081d343-a742-4fc6-85f8-59e05e64bfdc 500Mi RWX managed-nfs-storage 46m
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE
pvc-2081d343-a742-4fc6-85f8-59e05e64bfdc 500Mi RWX Retain Bound myserver/myserver-myapp-dynamic-pvc managed-nfs-storage 46m
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE
managed-nfs-storage k8s-sigs.io/nfs-subdir-external-provisioner Retain Immediate false

root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
myserver-myserver-myapp-dynamic-pvc-pvc-2081d343-a742-4fc6-85f8-59e05e64bfdc
```

```
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
```

```yaml
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    app: myserver-myapp
  name: myserver-myapp-deployment-name
  namespace: myserver
spec:
  replicas: 1
  selector:
    matchLabels:
      app: myserver-myapp-frontend
  template:
    metadata:
      labels:
        app: myserver-myapp-frontend
    spec:
      containers:
        - name: myserver-myapp-container
          image: nginx:1.20.0
          volumeMounts:
            - mountPath: "/usr/share/nginx/html/statics"
              name: statics-datadir
      volumes:
        - name: statics-datadir
          persistentVolumeClaim:
            claimName: myserver-myapp-dynamic-pvc
---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: myserver-myapp-service
  name: myserver-myapp-service-name
  namespace: myserver
spec:
  type: NodePort
  ports:
  - name: http
    port: 80
    targetPort: 80
    nodePort: 30080
  selector:
    app: myserver-myapp-frontend
```

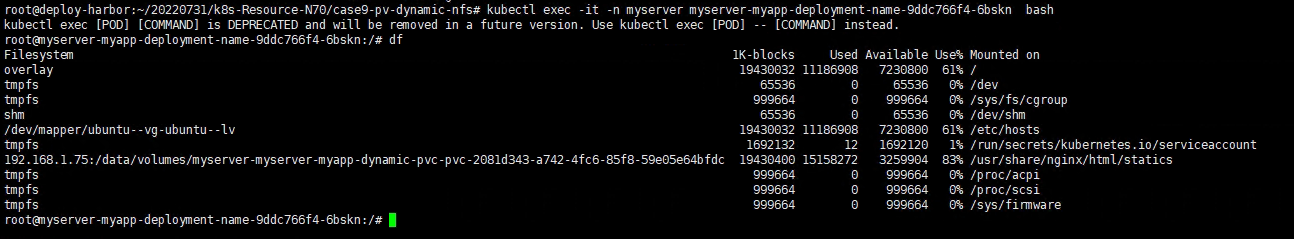

```
root@deploy-harbor:~/20220731/k8s-Resource-N70/case9-pv-dynamic-nfs
```

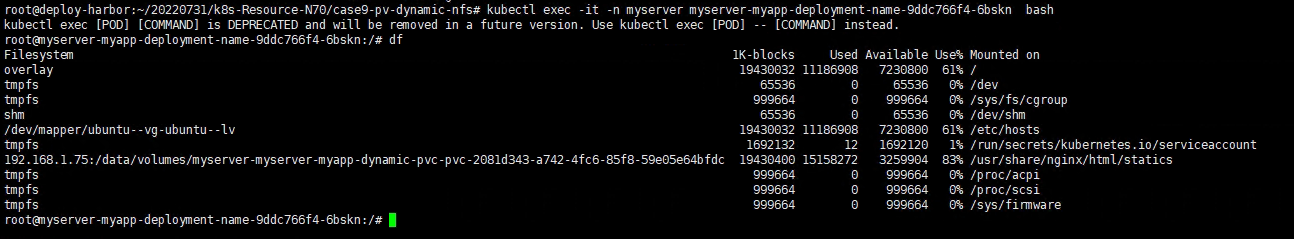

```
kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.
root@myserver-myapp-deployment-name-9ddc766f4-6bskn:/
Filesystem 1K-blocks Used Available Use% Mounted on
overlay 19430032 11186908 7230800 61% /
tmpfs 65536 0 65536 0% /dev
tmpfs 999664 0 999664 0% /sys/fs/cgroup
shm 65536 0 65536 0% /dev/shm
/dev/mapper/ubuntu--vg-ubuntu--lv 19430032 11186908 7230800 61% /etc/hosts
tmpfs 1692132 12 1692120 1% /run/secrets/kubernetes.io/serviceaccount
192.168.1.75:/data/volumes/myserver-myserver-myapp-dynamic-pvc-pvc-2081d343-a742-4fc6-85f8-59e05e64bfdc 19430400 15158272 3259904 83% /usr/share/nginx/html/statics
tmpfs 999664 0 999664 0% /proc/acpi
tmpfs 999664 0 999664 0% /proc/scsi
tmpfs 999664 0 999664 0% /sys/firmware
root@myserver-myapp-deployment-name-9ddc766f4-6bskn:/
```

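- As the `df` output shows, the per-PVC subdirectory that the provisioner created under `/data/volumes` on the NFS server is mounted into the Pod at `/usr/share/nginx/html/statics`.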
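To verify write access end to end, a short-lived Pod can create a marker file through the same PVC. A minimal sketch in the spirit of the test manifest in the provisioner repository; the Pod name and the `busybox:1.36` image are assumptions.

```yaml
# Hypothetical test Pod: writes a marker file into the dynamically provisioned volume, then exits.
apiVersion: v1
kind: Pod
metadata:
  name: nfs-pvc-test          # hypothetical name
  namespace: myserver         # must be the same namespace as the PVC
spec:
  restartPolicy: Never
  containers:
  - name: test
    image: busybox:1.36
    command: ["sh", "-c", "touch /mnt/SUCCESS && exit 0 || exit 1"]
    volumeMounts:
    - name: nfs-pvc
      mountPath: /mnt
  volumes:
  - name: nfs-pvc
    persistentVolumeClaim:
      claimName: myserver-myapp-dynamic-pvc
```

Once the Pod completes, a `SUCCESS` file should appear in the PVC's subdirectory under `/data/volumes` on the NFS server.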