Kubernetes Study Notes

1. Fundamentals

1.1 Admission control

1.1.1 Overview

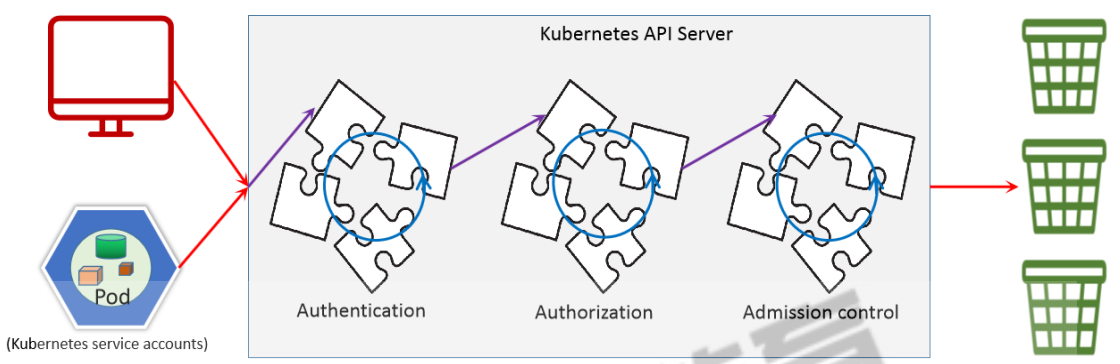

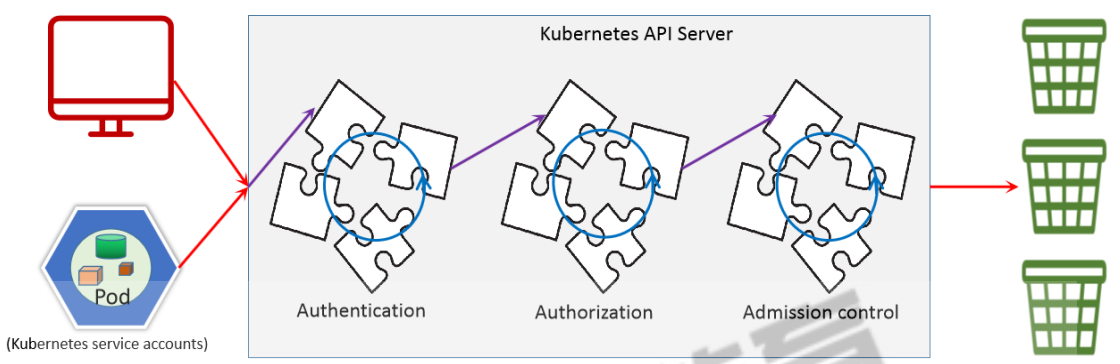

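"Admission control" refers to the rules a request must satisfy after it has passed user authentication and role authorization, whenever it performs a write operation (create, update, or delete; read requests are not subject to admission control). It is made up of a number of admission controllers, which are compiled into the kube-apiserver binary and configured by the cluster administrator.

The two most important of these controllers are MutatingAdmissionWebhook (mutation) and ValidatingAdmissionWebhook (validation). Mutating controllers may modify the objects they admit; validating controllers may not.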

1.1.2 Admission flow diagram

1.1.3 Phases of the admission process

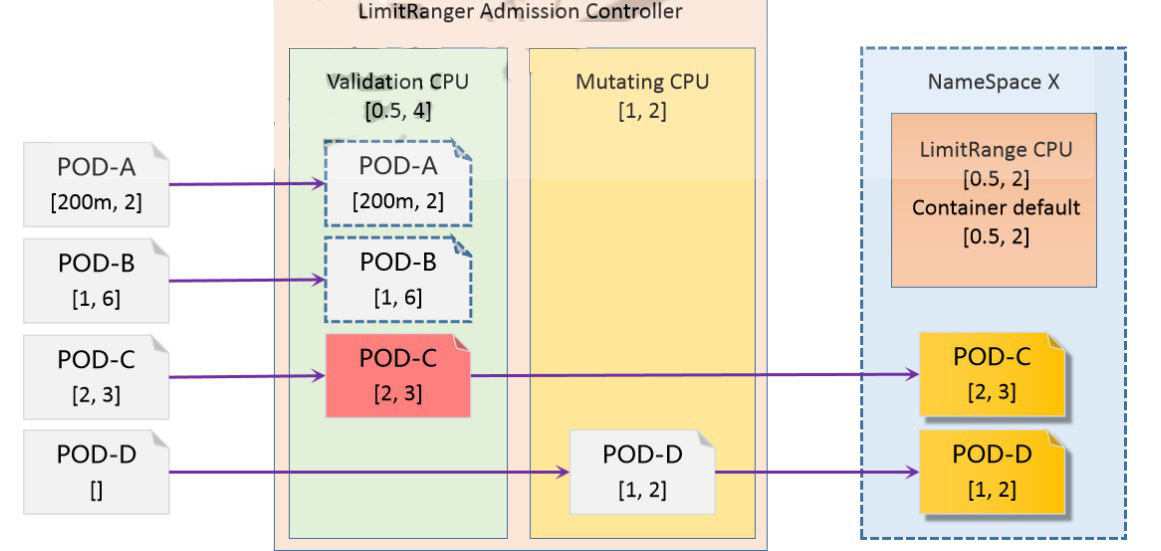

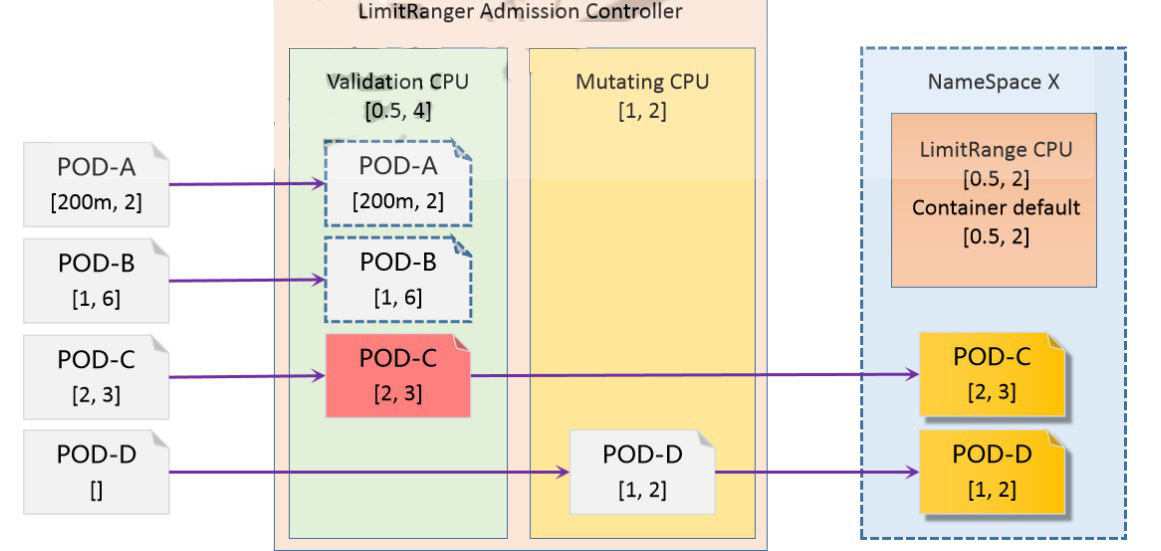

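The admission process runs in two phases: the mutating admission controllers run first, followed by the validating admission controllers. Some controllers are in fact both mutating and validating. If any controller in either phase rejects the request, the whole request is rejected immediately and an error is returned to the end user.

Finally, besides mutating the object in question, admission controllers can have other side effects: they may change related resources as part of request processing. Incrementing quota usage is the canonical example of why this is necessary. Any such side effect needs a corresponding reclamation or reconciliation process, because a given admission controller cannot know whether the request will pass all the other admission controllers.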
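
The two-phase flow described above can be sketched in a few lines of Python. This is only an illustration of the control flow, not how kube-apiserver is implemented: the `Controller` class, the `run_admission` function, and the two example hooks are all hypothetical names invented for this sketch.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# A hook returns an error message to reject the request, or None to let it pass.
Hook = Callable[[dict], Optional[str]]


@dataclass
class Controller:
    name: str
    mutate: Optional[Hook] = None    # may modify the object
    validate: Optional[Hook] = None  # must only inspect the object


def run_admission(obj: dict, controllers: List[Controller]) -> dict:
    """Run all mutating hooks, then all validating hooks; stop at the first rejection."""
    for phase in ("mutate", "validate"):
        for controller in controllers:
            hook = getattr(controller, phase)
            if hook is None:
                continue
            error = hook(obj)
            if error is not None:
                raise PermissionError(f"{controller.name} ({phase}): {error}")
    return obj


def add_default_cpu(obj: dict) -> Optional[str]:
    # Hypothetical mutating hook: fill in a CPU request when none is set.
    obj.setdefault("cpu_request", "500m")
    return None


def require_name(obj: dict) -> Optional[str]:
    # Hypothetical validating hook: checks the already mutated object.
    return None if obj.get("name") else "name is required"


chain = [
    Controller("defaulter", mutate=add_default_cpu),
    Controller("name-check", validate=require_name),
]

print(run_admission({"name": "pod"}, chain))

try:
    run_admission({}, chain)
except PermissionError as exc:
    print("rejected:", exc)
```

Running validation only after every mutation has finished is what lets a validating controller judge the final form of the object.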

1.2 Enabling and disabling admission controllers

1.2.1 Enabling

]# grep -i 'admission' /etc/kubernetes/manifests/kube-apiserver.yaml

- --enable-admission-plugins=NodeRestriction

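# In a kubeadm cluster, the kube-apiserver manifest already enables admission controllers through this flag.

1.2.2 Disabling

# Disable admission controllers

--disable-admission-plugins=<comma-separated list of controllers>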

1.3 Listing the admission controllers enabled by default

1.3.1 Listing the defaults

]# kubectl -n kube-system exec -it kube-apiserver-master1 -- kube-apiserver -h | grep 'enable-admission-plugins strings'

--enable-admission-plugins strings admission plugins that should be enabled in addition to default enabled ones
(NamespaceLifecycle, LimitRanger, ServiceAccount, TaintNodesByCondition, PodSecurity, Priority, DefaultTolerationSeconds,
DefaultStorageClass, StorageObjectInUseProtection, PersistentVolumeClaimResize, RuntimeClass, CertificateApproval,
CertificateSigning, CertificateSubjectRestriction, DefaultIngressClass, MutatingAdmissionWebhook,
ValidatingAdmissionPolicy, ValidatingAdmissionWebhook, ResourceQuota). Comma-delimited list of
admission plugins: AlwaysAdmit, AlwaysDeny, AlwaysPullImages, CertificateApproval, CertificateSigning,
CertificateSubjectRestriction, DefaultIngressClass, DefaultStorageClass, DefaultTolerationSeconds,
DenyServiceExternalIPs, EventRateLimit, ExtendedResourceToleration, ImagePolicyWebhook,
LimitPodHardAntiAffinityTopology, LimitRanger, MutatingAdmissionWebhook,
NamespaceAutoProvision, NamespaceExists, NamespaceLifecycle, NodeRestriction, OwnerReferencesPermissionEnforcement,
PersistentVolumeClaimResize, PersistentVolumeLabel, PodNodeSelector, PodSecurity, PodTolerationRestriction, Priority,
ResourceQuota, RuntimeClass, SecurityContextDeny, ServiceAccount, StorageObjectInUseProtection, TaintNodesByCondition,
ValidatingAdmissionPolicy, ValidatingAdmissionWebhook. The order of plugins in this flag does not matter.

The output above lists 19 admission controllers enabled by default, out of 35 that can be enabled.
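
Counting the entries by eye is error-prone, so the tally can be checked mechanically. The sketch below parses the help text shown above, pasted in as a string. The `parse_plugins` helper and the regular expressions are assumptions written for this exact wording of the help text, and may need adjusting for other kube-apiserver versions.

```python
import re

# Help text of --enable-admission-plugins, copied from the output above.
HELP_TEXT = """
admission plugins that should be enabled in addition to default enabled ones
(NamespaceLifecycle, LimitRanger, ServiceAccount, TaintNodesByCondition, PodSecurity, Priority, DefaultTolerationSeconds,
DefaultStorageClass, StorageObjectInUseProtection, PersistentVolumeClaimResize, RuntimeClass, CertificateApproval,
CertificateSigning, CertificateSubjectRestriction, DefaultIngressClass, MutatingAdmissionWebhook,
ValidatingAdmissionPolicy, ValidatingAdmissionWebhook, ResourceQuota). Comma-delimited list of
admission plugins: AlwaysAdmit, AlwaysDeny, AlwaysPullImages, CertificateApproval, CertificateSigning,
CertificateSubjectRestriction, DefaultIngressClass, DefaultStorageClass, DefaultTolerationSeconds,
DenyServiceExternalIPs, EventRateLimit, ExtendedResourceToleration, ImagePolicyWebhook,
LimitPodHardAntiAffinityTopology, LimitRanger, MutatingAdmissionWebhook,
NamespaceAutoProvision, NamespaceExists, NamespaceLifecycle, NodeRestriction, OwnerReferencesPermissionEnforcement,
PersistentVolumeClaimResize, PersistentVolumeLabel, PodNodeSelector, PodSecurity, PodTolerationRestriction, Priority,
ResourceQuota, RuntimeClass, SecurityContextDeny, ServiceAccount, StorageObjectInUseProtection, TaintNodesByCondition,
ValidatingAdmissionPolicy, ValidatingAdmissionWebhook. The order of plugins in this flag does not matter.
"""


def split_names(chunk):
    """Split a comma-separated list into clean plugin names."""
    return [name.strip() for name in chunk.split(",") if name.strip()]


def parse_plugins(help_text):
    """Return (default_plugins, available_plugins) found in the help text."""
    flat = " ".join(help_text.split())  # undo the line wrapping
    defaults = re.search(r"default enabled ones \((.*?)\)", flat).group(1)
    available = re.search(r"list of admission plugins: (.*?)\. The order", flat).group(1)
    return split_names(defaults), split_names(available)


defaults, available = parse_plugins(HELP_TEXT)
print("enabled by default:", len(defaults))
print("available in total:", len(available))
print("not enabled by default:", sorted(set(available) - set(defaults)))
```

NodeRestriction appears in the last list, which is consistent with kubeadm enabling it explicitly in the manifest.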

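1.4 Admission controllers related to Pod resource control

1.4.1 LimitRanger

Applies default compute resource requests and limits to Pods, as well as storage requests and limits, and enforces the configured minimum and maximum values. Constraints can be set at both the container level and the Pod level.

1.4.2 ResourceQuota

Limits the number of objects and the total amount of compute and storage resources in a namespace. The resource kind is ResourceQuota.

1.4.3 PodSecurityPolicy

Restricts, at cluster level, every securityContext setting that users may configure on a Pod. The feature was deprecated in Kubernetes v1.21 and removed in v1.25; the built-in PodSecurity admission controller replaces it.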

2. LimitRanger in practice

2.1 Flow diagram

2.2 Creating LimitRange policies

2.2.1 Define and apply the manifests

kubectl apply -f - <<EOF
apiVersion: v1
kind: LimitRange
metadata:
  name: storagelimits
spec:
  limits:
  - type: PersistentVolumeClaim
    max:
      storage: "10Gi"
    min:
      storage: "1Gi"
---
apiVersion: v1
kind: LimitRange
metadata:
  name: core-resource-limits
spec:
  limits:
  - type: Pod
    max:
      cpu: "4"
      memory: "4Gi"
    min:
      cpu: "500m"
      memory: "100Mi"
  - type: Container
    max:
      cpu: "4"
      memory: "1Gi"
    min:
      cpu: "100m"
      memory: "100Mi"
    default:
      cpu: "2"
      memory: "512Mi"
    defaultRequest:
      cpu: "500m"
      memory: "100Mi"
    maxLimitRequestRatio:
      cpu: "4"
  - type: PersistentVolumeClaim
    max:
      storage: "10Gi"
    min:
      storage: "1Gi"
    default:
      storage: "5Gi"
    defaultRequest:
      storage: "1Gi"
    maxLimitRequestRatio:
      storage: "5"
EOF

# maxLimitRequestRatio caps the ratio between a resource's limit and its request (limit / request).
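
To make the Container entry of core-resource-limits concrete, here is a simplified Python model of what the LimitRanger plugin does with it: fill in missing values, then check min, max, and the limit/request ratio. It is a sketch under stated assumptions, not the plugin's actual code. `parse_quantity` only understands the suffixes used in these notes, `admit_container` is a hypothetical name, and Pod-level and PersistentVolumeClaim rules are left out.

```python
from fractions import Fraction

# Multipliers for the quantity suffixes that appear in these notes only.
SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "m": Fraction(1, 1000)}


def parse_quantity(text):
    """Turn '500m', '2' or '512Mi' into an exact number (cores or bytes)."""
    text = str(text)
    for suffix, factor in SUFFIXES.items():
        if text.endswith(suffix):
            return Fraction(text[: -len(suffix)]) * factor
    return Fraction(text)


# The Container entry of core-resource-limits, copied from the manifest above.
CONTAINER_RULE = {
    "min": {"cpu": "100m", "memory": "100Mi"},
    "max": {"cpu": "4", "memory": "1Gi"},
    "default": {"cpu": "2", "memory": "512Mi"},
    "defaultRequest": {"cpu": "500m", "memory": "100Mi"},
    "maxLimitRequestRatio": {"cpu": "4"},
}


def admit_container(resources, rule=CONTAINER_RULE):
    """Return (resources with defaults applied, list of violations)."""
    requests = dict(resources.get("requests", {}))
    limits = dict(resources.get("limits", {}))

    # Mutating step: fill in whatever the container left unset.
    for name, value in rule["defaultRequest"].items():
        requests.setdefault(name, value)
    for name, value in rule["default"].items():
        limits.setdefault(name, value)

    # Validating step: compare the final values against min, max and ratio.
    violations = []
    for name in sorted(set(requests) | set(limits)):
        request = parse_quantity(requests[name]) if name in requests else None
        limit = parse_quantity(limits[name]) if name in limits else None
        if request is not None and name in rule["min"]:
            if request < parse_quantity(rule["min"][name]):
                violations.append(f"{name}: request is below min {rule['min'][name]}")
        if limit is not None and name in rule["max"]:
            if limit > parse_quantity(rule["max"][name]):
                violations.append(f"{name}: limit is above max {rule['max'][name]}")
        ratio = rule["maxLimitRequestRatio"].get(name)
        if ratio is not None and request and limit:
            if limit / request > parse_quantity(ratio):
                violations.append(f"{name}: limit/request ratio {limit / request} exceeds {ratio}")
    return {"requests": requests, "limits": limits}, violations


# A container with no resources section receives the defaults.
print(admit_container({}))

# cpu limit 1 with cpu request 100m is a ratio of 10, above the allowed 4.
print(admit_container({"requests": {"cpu": "100m"}, "limits": {"cpu": "1"}}))
```

With the defaults, the CPU ratio is 2 / 500m = 4, which sits exactly on the allowed maximum and therefore passes.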

2.2.2 Check the result

]# kubectl get limitranges
NAME                   CREATED AT
core-resource-limits   2023-03-31T14:24:43Z
storagelimits          2023-03-31T14:24:43Z

]# kubectl describe limitranges core-resource-limits
Name:       core-resource-limits
Namespace:  default
Type                   Resource  Min    Max   Default Request  Default Limit  Max Limit/Request Ratio
----                   --------  ---    ---   ---------------  -------------  -----------------------
Pod                    memory    100Mi  4Gi   -                -              -
Pod                    cpu       500m   4     -                -              -
Container              cpu       100m   4     500m             2              4
Container              memory    100Mi  1Gi   100Mi            512Mi          -
PersistentVolumeClaim  storage   1Gi    10Gi  1Gi              5Gi            5

]# kubectl describe limitranges storagelimits
Name:       storagelimits
Namespace:  default
Type                   Resource  Min  Max   Default Request  Default Limit  Max Limit/Request Ratio
----                   --------  ---  ---   ---------------  -------------  -----------------------
PersistentVolumeClaim  storage   1Gi  10Gi  -                -              -

2.3 Create a Pod from the command line and check whether resource limits were applied

]# kubectl run pod --image=192.168.10.33:80/k8s/pod_test:v0.1
]# kubectl describe pod pod
...
Containers:
  pod:
    ...
    Limits:
      cpu:     2
      memory:  512Mi
    Requests:
      cpu:     500m
      memory:  100Mi
    Environment:  <none>

2.4 Define a test Pod that asks for more resources than are available

2.4.1 Define and apply the manifest

kubectl apply -f - <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: pod-test
spec:
  containers:
  - name: pod-test
    image: 192.168.10.33:80/k8s/pod_test:v0.1
    resources:
      requests:
        memory: 128Mi
        cpu: 1
      limits:
        memory: 1Gi
        cpu: 2
EOF

# In this example, no node has enough unallocated CPU to schedule the Pod.

2.4.2 Observe the Pod status

]# kubectl get pods
NAME       READY   STATUS    RESTARTS   AGE
pod        1/1     Running   0          96m
pod-test   0/1     Pending   0          91m   # stays in Pending

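2.4.3 Inspect the scheduling events

]# kubectl describe pod pod-test
Events:
  Type     Reason            Age    From               Message
  ----     ------            ----   ----               -------
  Warning  FailedScheduling  2m23s  default-scheduler  0/5 nodes are available: 2 Insufficient cpu,
    3 node(s) had untolerated taint {node-role.kubernetes.io/control-plane: }. preemption: 0/5 nodes are available: 2
    No preemption victims found for incoming pod, 3 Preemption is not helpful for scheduling.

# The Pod stays Pending because neither worker node has enough unallocated CPU for its 1-CPU request, so the scheduler cannot place it. The check is made against CPU requests already reserved on the node, not against live CPU utilization.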
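
"Insufficient cpu" is decided from requests, not from measured load: a Pod fits on a node only if its request is no larger than the node's allocatable CPU minus the requests of the Pods already placed there. A minimal sketch of that comparison follows. The node size and the existing requests are made-up numbers for illustration, and `cpu` and `fits` are hypothetical helper names.

```python
from fractions import Fraction


def cpu(text):
    """Parse a CPU quantity such as '500m' or '2' into cores."""
    text = str(text)
    if text.endswith("m"):
        return Fraction(text[:-1]) / 1000
    return Fraction(text)


def fits(node_allocatable, requests_on_node, pod_request):
    """True if the Pod's CPU request fits into the node's unreserved CPU."""
    free = cpu(node_allocatable) - sum(cpu(r) for r in requests_on_node)
    return cpu(pod_request) <= free


# Hypothetical worker node: 2 allocatable CPUs, 1250m already requested.
existing = ["500m", "250m", "500m"]

print(fits("2", existing, "1"))     # pod-test asks for 1 CPU
print(fits("2", existing, "100m"))  # a much smaller request
```

Under these assumed numbers only 750m remains unreserved, so a 1-CPU request does not fit even if the node is idle in practice.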

3. ResourceQuota in practice

3.1 Purpose

3.2 Define and apply the manifest

kubectl apply -f - <<EOF
apiVersion: v1
kind: ResourceQuota
metadata:
  name: resourcequota-test
spec:
  hard:
    pods: "5"
    count/services: "5"
    count/configmaps: "5"
    count/secrets: "5"
    count/cronjobs.batch: "2"
    requests.cpu: "2"
    requests.memory: "4Gi"
    limits.cpu: "4"
    limits.memory: "8Gi"
    count/deployments.apps: "2"
    count/statefulsets.apps: "2"
    persistentvolumeclaims: "6"
    requests.storage: "20Gi"
EOF

3.3 Check the result

]# kubectl get resourcequotas
]# kubectl describe resourcequotas
Name:                    resourcequota-test
Namespace:               default
Resource                 Used       Hard
--------                 ----       ----
count/configmaps         1          5
count/cronjobs.batch     0          2
count/deployments.apps   0          2
count/secrets            3          5
count/services           2          5
count/statefulsets.apps  0          2
limits.cpu               4          4
limits.memory            1536Mi     8Gi
persistentvolumeclaims   8          6
pods                     2          5
requests.cpu             1500m      2
requests.memory          228Mi      4Gi
requests.storage         1562500Ki  20Gi

3.4 Create more than 5 Pods and see whether the quota is enforced

3.4.1 Define and apply the manifest

kubectl apply -f - << EOF
apiVersion: v1
kind: Pod
metadata:
  name: nginx-2
spec:
  containers:
  - image: 192.168.10.33:80/k8s/my_nginx:v1
    name: my-nginx
    resources:
      requests:
        memory: 100Mi
        cpu: 1
      limits:
        memory: 128Mi
        cpu: 2
EOF

3.4.2 Create several Pods

# Creating the third Pod fails, because the CPU quota is already used up.
Error from server (Forbidden): error when creating "STDIN": pods "nginx-2"
is forbidden: exceeded quota: resourcequota-test, requested: limits.cpu=2,
requests.cpu=1, used: limits.cpu=4,requests.cpu=2, limited: limits.cpu=4,requests.cpu=2

]# kubectl describe pod nginx
# Scheduling fails as well, because the nodes do not have enough unallocated CPU.
Warning FailedScheduling 84s default-scheduler 0/5 nodes are available: 2
Insufficient cpu, 3 node(s) had untolerated taint {node-role.kubernetes.io/control-plane: }.
preemption: 0/5 nodes are available: 2 No preemption victims found for incoming pod,
3 Preemption is not helpful for scheduling..
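
The Forbidden error above follows from simple arithmetic: for each quota-tracked resource, the request is rejected when used + requested would exceed the hard limit. The sketch below replays that check with the numbers from the error message. `cpu` and `exceeded` are hypothetical helper names, and only CPU quantities are handled.

```python
from fractions import Fraction


def cpu(text):
    """Parse a CPU quantity such as '1500m' or '2' into cores."""
    text = str(text)
    if text.endswith("m"):
        return Fraction(text[:-1]) / 1000
    return Fraction(text)


def exceeded(hard, used, requested):
    """Return the resources whose hard limit the new request would break."""
    return [
        name
        for name in sorted(requested)
        if name in hard
        and cpu(used.get(name, "0")) + cpu(requested[name]) > cpu(hard[name])
    ]


hard = {"limits.cpu": "4", "requests.cpu": "2"}       # from resourcequota-test
per_pod = {"limits.cpu": "2", "requests.cpu": "1"}    # from the nginx-2 manifest

# Usage after one such Pod: a second Pod still fits.
print(exceeded(hard, {"limits.cpu": "2", "requests.cpu": "1"}, per_pod))

# Usage after two such Pods (the "used" values in the error): the third is refused.
print(exceeded(hard, {"limits.cpu": "4", "requests.cpu": "2"}, per_pod))
```

The quota check happens at admission time, so the third Pod is never created; the scheduling failure shown afterwards is a separate, later check against node capacity.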

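4. PodSecurityPolicy (PSP) in practice [for reference only]

4.1 Overview

This feature was deprecated in Kubernetes v1.21 and removed in v1.25.

Once the PSP admission plugin is enabled, every Pod is rejected unless a policy allows it. Some preparation is therefore needed: define the PSP policies first, and only then enable the plugin.

A policy has no effect by itself; it must be bound to a user or ServiceAccount to grant its use. The basic PSP workflow is therefore:

1. Define the PSP policies

2. Bind roles that grant use of the PSP resources

3. Enable the PSP plugin on the cluster

Test it thoroughly before using it in production; otherwise it is not recommended, because it has many pitfalls.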

4.2 PSP rules: manifest

apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: privileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: '*'
spec:
  privileged: true
  allowPrivilegeEscalation: true
  allowedCapabilities:
  - '*'
  allowedUnsafeSysctls:
  - '*'
  volumes:
  - '*'
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  hostIPC: true
  hostPID: true
  runAsUser:
    rule: 'RunAsAny'
  runAsGroup:
    rule: 'RunAsAny'
  seLinux:
    rule: 'RunAsAny'
  supplementalGroups:
    rule: 'RunAsAny'
  fsGroup:
    rule: 'RunAsAny'
---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: restricted
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: 'docker/default'
    apparmor.security.beta.kubernetes.io/allowedProfileNames: 'runtime/default'
    seccomp.security.alpha.kubernetes.io/defaultProfileName: 'docker/default'
    apparmor.security.beta.kubernetes.io/defaultProfileName: 'runtime/default'
spec:
  privileged: false
  allowPrivilegeEscalation: false
  allowedUnsafeSysctls: []
  requiredDropCapabilities:
  - ALL
  # Allow core volume types.
  volumes:
  - 'configMap'
  - 'emptyDir'
  - 'projected'
  - 'secret'
  - 'downwardAPI'
  - 'persistentVolumeClaim'
  hostNetwork: false
  hostIPC: false
  hostPID: false
  runAsUser:
    rule: 'MustRunAsNonRoot'
  seLinux:
    rule: 'RunAsAny'
  supplementalGroups:
    rule: 'MustRunAs'
    ranges:
    # Forbid adding the root group.
    - min: 1
      max: 65535
  fsGroup:
    rule: 'MustRunAs'
    ranges:
    # Forbid adding the root group.
    - min: 1
      max: 65535
  readOnlyRootFilesystem: false

4.3 Cluster role bindings: manifest

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: psp-restricted
rules:
- apiGroups: ['policy']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames:
  - restricted
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: psp-privileged
rules:
- apiGroups: ['policy']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames:
  - privileged
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: privileged-psp-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: psp-privileged
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:masters
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  # The group that kubelets belong to is system:nodes (plural).
  name: system:nodes
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:serviceaccounts:kube-system
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: restricted-psp-user
roleRef:
  kind: ClusterRole
  name: psp-restricted
  apiGroup: rbac.authorization.k8s.io
subjects:
- kind: Group
  apiGroup: rbac.authorization.k8s.io
  name: system:authenticated

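4.4 Enable PSP on the cluster

Add PodSecurityPolicy to the apiserver's admission plugins.

Edit /etc/kubernetes/manifests/kube-apiserver.yaml and set --enable-admission-plugins=NodeRestriction,PodSecurityPolicy

In a kubeadm cluster the api-server runs as a static Pod, so no manual restart is needed: the kubelet picks up the manifest change and recreates the Pod, and after a short wait PSP is active.

4.5 Test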

kubectl apply -f - << EOF
apiVersion: v1
kind: Pod
metadata:
  name: nginx
spec:
  containers:
  - image: 192.168.10.33:80/k8s/my_nginx:v1
    name: my-nginx
EOF