Hive: query Hive table data and output it as JSON objects and JSON arrays

I. Query Hive table data and output it as JSON objects

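1. Flatten each queried row into a single string, with the columns joined by a chosen delimiter.

2. Pass each of these strings into a UDF, which converts it to JSON and returns the result.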
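As a rough illustration of the two steps above, the following stdlib-only Java sketch takes rows that have already been flattened into `key,value` strings and turns each one into a JSON object. The class `RowToJsonSketch`, its methods and the hand-written escaping are hypothetical stand-ins for what the Hive UDF does with org.json; they are not part of Hive or of the original code.

```java
/**
 * Hypothetical stdlib-only sketch of "delimited row string -> JSON object".
 * The real conversion runs inside a Hive UDF using org.json.
 */
class RowToJsonSketch {

    // Minimal JSON string escaping: quotes, backslashes and control characters.
    static String quote(String s) {
        StringBuilder sb = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"':  sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n"); break;
                case '\r': sb.append("\\r"); break;
                case '\t': sb.append("\\t"); break;
                default:
                    if (c < 0x20) {
                        sb.append(String.format("\\u%04x", (int) c));
                    } else {
                        sb.append(c);
                    }
            }
        }
        return sb.append('"').toString();
    }

    // Step 2 stand-in: one delimited line in, one JSON object out.
    static String rowToJson(String line) {
        if (line == null) {
            return null; // a NULL row stays NULL
        }
        // Split on the first comma only, so commas inside the value survive.
        String[] kv = line.split(",", 2);
        String value = kv.length > 1 ? quote(kv[1]) : "null";
        return "{\"key\":" + quote(kv[0]) + ",\"value\":" + value + "}";
    }

    public static void main(String[] args) {
        // Step 1 stand-in: rows already flattened with "," as the delimiter.
        for (String line : new String[] {"a,1", "b,x,y"}) {
            System.out.println(rowToJson(line));
        }
        // prints {"key":"a","value":"1"} then {"key":"b","value":"x,y"}
    }
}
```

Splitting on the first comma only (`split(",", 2)`) is a choice made in this sketch so that a value containing commas is kept whole.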

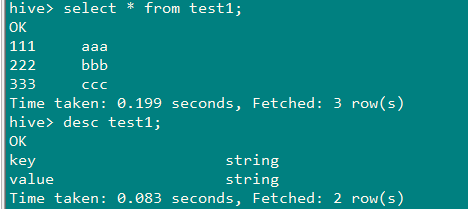

1. Prepare the data

2. Query the data, flattening each row into a single string with a specified delimiter

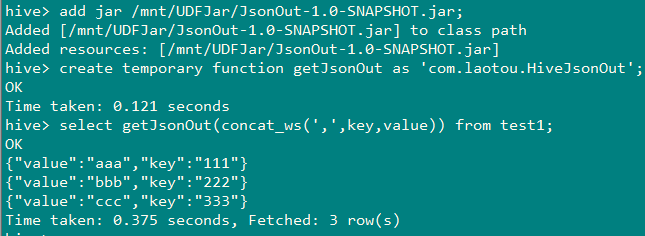
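The delimited line is usually built with `concat_ws`. The sketch below emulates it in plain Java, assuming Hive's behaviour of skipping NULL arguments and returning NULL only when the separator is NULL (worth confirming on your Hive version). `ConcatWsSketch` is a hypothetical name. It shows why a row whose value column is NULL reaches the UDF as just `key`, without the comma that a `key,value` parser expects.

```java
/**
 * Hypothetical stdlib emulation of Hive's concat_ws(sep, a, b, ...),
 * assuming NULL arguments are skipped and a NULL separator yields NULL.
 */
class ConcatWsSketch {

    static String concatWs(String sep, String... parts) {
        if (sep == null) {
            return null;
        }
        StringBuilder sb = new StringBuilder();
        boolean first = true;
        for (String p : parts) {
            if (p == null) {
                continue; // a NULL column is dropped together with its separator
            }
            if (!first) {
                sb.append(sep);
            }
            sb.append(p);
            first = false;
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        System.out.println(concatWs(",", "a", "1"));  // a,1
        System.out.println(concatWs(",", "b", null)); // b  (no comma left to split on)
    }
}
```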

3. Prepare the UDF

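    package com.laotou;

    import org.apache.hadoop.hive.ql.exec.UDF;
    import org.json.JSONException;
    import org.json.JSONObject;

    /**
     * Converts a "key,value" string into {"key": ..., "value": ...}.
     * @Author:
     * @Date: 2019/8/9
     */
    public class HiveJsonOut extends UDF {
        public String evaluate(String jsonStr) throws JSONException {
            // Hive passes NULL as null; return NULL instead of throwing
            if (jsonStr == null) {
                return null;
            }
            // limit 2: only the first comma separates key from value
            String[] split = jsonStr.split(",", 2);
            JSONObject result = new JSONObject();
            result.put("key", split[0]);
            // a line without a comma gets "value": null instead of an exception
            result.put("value", split.length > 1 ? split[1] : JSONObject.NULL);
            return result.toString();
        }
    }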

A more general variant, Record2Notify, builds a nested notification object from alternating keys and values separated by "!":

    package com.laotou;

    import org.apache.hadoop.hive.ql.exec.UDF;
    import org.json.JSONException;
    import org.json.JSONObject;

    /**
     * @Author:
     * Converts a string to JSON, e.g.
     * {"notifyType":13,"notifyEntity":{"school":"小学","name":"张三","age":"13"}}
     * @Date: 2019/8/14
     */
    public class Record2Notify extends UDF {
        // delimiter between keys and values
        private static final String SPLIT_CHAR = "!";
        // a field equal to \002 is treated as missing
        private static final String NULL_CHAR = "\002";

        public String evaluate(int type, String line) throws JSONException {
            if (line == null) {
                return null;
            }
            JSONObject notify = new JSONObject();
            JSONObject entity = new JSONObject();
            notify.put("notifyType", type);
            // limit -1 keeps trailing empty fields in their positions
            String[] columns = line.split(SPLIT_CHAR, -1);
            int size = columns.length / 2;
            for (int i = 0; i < size; i++) {
                String key = columns[i * 2];
                String value = columns[i * 2 + 1];
                if (isNull(key)) {
                    throw new JSONException("Null key.");
                }
                // empty or \002 values are left out of the entity
                if (!isNull(value)) {
                    entity.put(key, value);
                }
            }
            notify.put("notifyEntity", entity);
            return notify.toString();
        }

        private static boolean isNull(String value) {
            return value == null || value.isEmpty() || value.equals(NULL_CHAR);
        }

        public static void main(String[] args) throws JSONException {
            System.out.println(new Record2Notify().evaluate(13, "name!张三!age!13!school!小学"));
        }
    }

II. Query Hive table data and output it as a JSON array

Approach:

1. Use the UDF from Part I (called getJsonOut in the queries below) to convert each queried row into a JSON object:

    select getJsonOut(concat_ws(',', key, value)) as content from test1;

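2. Merge the rows from step 1 into a single value with collect_list and join them with "!!" as the separator (a comma would not work, because each JSON object already contains commas). This yields one string: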

    select concat_ws('!!', collect_list(bb.content)) as new_value
    from (
        select getJsonOut(concat_ws(',', key, value)) as content
        from test1
    ) bb;
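To see why the separator matters, this hypothetical Java sketch (`JoinSplitSketch`, not part of the original code) joins per-row JSON objects with "!!" the way `concat_ws('!!', collect_list(...))` does, then compares splitting the result on "!!" with splitting it on a comma.

```java
import java.util.Arrays;
import java.util.List;

/**
 * Hypothetical sketch of step 2: join JSON objects with "!!" and split them back.
 */
class JoinSplitSketch {

    static String join(List<String> objects) {
        return String.join("!!", objects);
    }

    static String[] splitBack(String joined) {
        // String.split takes a regex; "!!" has no special meaning, so it matches literally.
        return joined.split("!!");
    }

    public static void main(String[] args) {
        List<String> rows = Arrays.asList(
                "{\"key\":\"a\",\"value\":\"1\"}",
                "{\"key\":\"b\",\"value\":\"2\"}");
        String joined = join(rows);
        System.out.println(joined);
        System.out.println(splitBack(joined).length); // 2: one piece per object
        System.out.println(joined.split(",").length); // 3: commas cut through the objects
    }
}
```

The approach still assumes that "!!" never occurs inside a key or value; if it can, a different separator is needed.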

The result is shown in the figure: a single string in which the JSON objects are separated by "!!".

3. Prepare a UDF (HiveJson, registered as getJsonArray) that puts the pieces of the step 2 string into a JSON array (JSONArray):

    package com.laotou;

    import org.apache.hadoop.hive.ql.exec.UDF;
    import org.json.JSONArray;
    import org.json.JSONException;
    import org.json.JSONObject;

    /**
     * create temporary function getJsonArray as 'com.laotou.HiveJson';
     * @Author:
     * @Date: 2019/8/9
     */
    public class HiveJson extends UDF {
        // Return a String: Hive maps it to STRING, whereas JSONArray is not a Hive type
        public String evaluate(String jsonStr) throws JSONException {
            if (jsonStr == null) {
                return null;
            }
            JSONArray jsonArray = new JSONArray();
            // one element per "!!"-separated piece, however many rows there are
            for (String part : jsonStr.split("!!")) {
                if (!part.isEmpty()) {
                    // parse each piece so the array holds objects, not quoted strings
                    jsonArray.put(new JSONObject(part));
                }
            }
            return jsonArray.toString();
        }
    }
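Hard-coding `split[0]` through `split[3]` only works when there are at least four pieces and silently drops anything after the fourth. The stdlib-only sketch below handles any number of pieces. The class `JsonArraySketch` is hypothetical, and it assumes each "!!"-separated piece is already a valid JSON object, so pieces are copied verbatim into the array rather than re-encoded as quoted strings.

```java
/**
 * Hypothetical stdlib sketch: build a JSON array from a "!!"-joined string
 * of JSON objects, for any number of pieces.
 */
class JsonArraySketch {

    static String toJsonArray(String joined) {
        if (joined == null) {
            return null;
        }
        StringBuilder sb = new StringBuilder("[");
        boolean first = true;
        for (String part : joined.split("!!")) {
            if (part.isEmpty()) {
                continue; // an empty input contributes no element
            }
            if (!first) {
                sb.append(',');
            }
            sb.append(part); // already JSON, so no extra quoting
            first = false;
        }
        return sb.append(']').toString();
    }

    public static void main(String[] args) {
        System.out.println(toJsonArray("{\"key\":\"a\"}!!{\"key\":\"b\"}!!{\"key\":\"c\"}"));
        // prints [{"key":"a"},{"key":"b"},{"key":"c"}]
    }
}
```

Copying the pieces verbatim is what keeps them as objects; adding a JSON text to an array as a plain string would double-encode it.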

4. Test

    select getJsonArray(new_value)
    from (
        select cast(concat_ws('!!', collect_list(bb.content)) as string) as new_value
        from (
            select getJsonOut(concat_ws(',', key, value)) as content
            from test1
        ) bb
    ) cc;

浙公网安备 33010602011771号