Kafka Cluster Setup

Use Cases

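Peak shaving: Xiaomi sells phones with scarcity marketing, so just opening its official home page means waiting in line. User requests are stored in a message queue, and the backend servers process them a little later, at a rate they can sustain.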

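Asynchronous decoupling: when users place orders on JD.com during the Double 11 sale, the order volume far exceeds what the backend database can handle. Orders are first written to a message queue (Kafka), and a consumer service then reads from the queue and writes them to the database at its own pace. As soon as the order is queued, the user gets an "order placed" response and does not have to wait for the order to reach the database.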

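Ordered Delivery

Many everyday scenarios require ordering: time priority in securities trading, the create, pay, and refund steps of an order in a trading system, boarding messages for airline passengers, and so on. Ordered messages in a message queue follow the first-in, first-out (FIFO) principle: messages are consumed in the order they were sent.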

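Distributed Transaction Consistency

Trading systems, payment red envelopes, and similar scenarios need eventual consistency of data. Running distributed transactions through a message queue both decouples the systems and ensures that the data ends up consistent.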

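Big Data Analytics

Data produces value while it is "in motion". Traditional analytics is mostly built on batch computation and cannot analyze data in real time. Combining a message queue with a stream processing engine makes real-time analysis of business data straightforward.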

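Distributed Cache Synchronization

During big e-commerce promotions, the many products across the venue pages need to reflect price changes in real time. Heavy concurrent access to the database makes the venue pages slow, and a centralized cache limits product-update traffic because of its bandwidth bottleneck. Building a distributed cache on a message queue lets product data changes be pushed out in real time.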

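Features

Distributed: Kafka runs as a cluster of multiple brokers. A single broker works for testing but provides no fault tolerance.

Partitioning: the messages of a topic are split across multiple partitions, which are stored on different brokers.

Multiple replicas: each partition can have several copies to prevent data loss.

Multiple subscribers: many applications can connect to Kafka and consume the same data.

ZooKeeper: earlier Kafka versions depend on ZooKeeper. Kafka 2.8.0, released on April 19, 2021, included many important changes, the most significant being a self-managed quorum (KRaft) that replaces ZooKeeper. In 2.8.0 this mode was early access and not meant for production; later releases made it production-ready, after which Kafka no longer needs ZooKeeper. The 2.1.0 cluster built below still uses ZooKeeper.

Advantages

Persistence: Kafka persists messages using O(1) disk data structures, which keep performance stable over long periods even with terabytes of stored messages.

High throughput: even on very ordinary hardware, Kafka can handle millions of messages per second. Messages are partitioned across the Kafka servers, and because partition leaders are spread over the brokers, all nodes can serve reads.

Distributed: Kafka builds a highly available, fault-tolerant distributed cluster with automatic failover.

Ordering guarantee: in most use cases the order of processing matters. Most message queues are ordered by nature and guarantee that data is processed in a specific order. Kafka guarantees ordering within a partition, not across partitions. If strict global ordering is required, set the number of partitions to 1 when creating the topic.

Supports parallel data loading into Hadoop.

Kafka is typically used in big data scenarios, where single messages are relatively large, whereas RabbitMQ mostly carries business commands, where single messages are small.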
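The per-partition ordering guarantee can be illustrated with a small, self-contained sketch. It assumes that messages with the same key always go to the same partition; `pick_partition`, `route`, and the order events are hypothetical names made up for this illustration, and CRC32 stands in for the hash that a real Kafka producer uses.

```python
import zlib
from collections import defaultdict


def pick_partition(key: str, num_partitions: int) -> int:
    """Map a key to a partition (CRC32 is a stand-in, not Kafka's real hash)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def route(events, num_partitions):
    """Append each (key, value) event to the log of the partition its key maps to."""
    logs = defaultdict(list)
    for key, value in events:
        logs[pick_partition(key, num_partitions)].append((key, value))
    return logs


# Hypothetical order events, interleaved the way a busy producer would send them.
events = [
    ("order-1", "create"),
    ("order-2", "create"),
    ("order-1", "pay"),
    ("order-3", "create"),
    ("order-2", "pay"),
    ("order-1", "refund"),
]

partitions = route(events, num_partitions=3)


def steps_for(key):
    """Read back the steps of one order from the single partition that holds it."""
    log = partitions[pick_partition(key, 3)]
    return [value for k, value in log if k == key]


for pid in sorted(partitions):
    print(pid, partitions[pid])
print(steps_for("order-1"))
```

Every step of one order lands in one partition and keeps its send order there, while nothing can be said about the relative order of events that sit in different partitions. That is why a topic that needs strict global ordering is created with a single partition.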

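The default number of partitions can be set in the configuration file with num.partitions=1. Developers can also specify the number of partitions and replicas in code when creating a topic. The more replicas, the more disk space is used.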

In Kafka, reads and writes are served by the leader replica of each partition; follower replicas only synchronize data from the leader.

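Each Kafka topic can be configured with multiple partitions and replicas. Each partition has one leader and zero or more followers; the leader is one of the partition's replicas. Clients read and write through the leader. If the leader becomes unavailable, in-flight client requests fail with an error, Kafka elects a new leader from the in-sync replicas (ISR), and clients refresh their metadata and retry against the new leader. If no in-sync replica is available, the partition stays offline and requests keep returning errors until a replica comes back.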
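A minimal sketch of how a replacement leader could be chosen when the current one fails. It assumes the simplified rule "take the first assigned replica that is both in sync and alive"; the function name `elect_leader` and the broker ids are illustrative, and the real controller logic has more cases than this.

```python
def elect_leader(replicas, isr, alive):
    """Return the first assigned replica that is in the ISR and alive, else None."""
    for broker in replicas:
        if broker in isr and broker in alive:
            return broker
    return None  # no eligible replica: the partition is offline


# One partition whose replicas live on brokers 1, 2 and 3.
replicas = [1, 2, 3]

# All brokers healthy: the first replica in the list leads.
healthy = elect_leader(replicas, isr={1, 2, 3}, alive={1, 2, 3})

# Broker 1 dies and drops out of the ISR: leadership moves to broker 2.
failover = elect_leader(replicas, isr={2, 3}, alive={2, 3})

# Only broker 1 was in sync and it is down: nobody can take over safely.
offline = elect_leader(replicas, isr={1}, alive={2, 3})

print(healthy, failover, offline)
```

The third case is the reason replicas that lag behind are not promoted by default: a follower outside the ISR may be missing messages that the old leader had already acknowledged.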

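Production Use

When too many messages pile up and Kafka runs on Kubernetes, watch the memory usage of the Kafka pods. If all three pods show high memory usage, increase their memory. Also scale the consumer pods and match the topic's partition count to the number of consumers, for example 6 partitions for 6 consumers, because within a consumer group each partition is read by only one consumer. After scaling the consumers and the Kafka pod memory, watch the number of backlogged messages to confirm that consumption is catching up.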
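Why the partition count should match the consumer count can be seen in a short sketch. It assumes a plain round-robin assignment inside one consumer group, which is a simplification of Kafka's real assignors; `assign`, `idle_consumers`, and the consumer names are hypothetical.

```python
def assign(partitions, consumers):
    """Round-robin partitions over consumers; each partition gets exactly one owner."""
    if not consumers:
        raise ValueError("at least one consumer is required")
    assignment = {consumer: [] for consumer in consumers}
    for index, partition in enumerate(partitions):
        owner = consumers[index % len(consumers)]
        assignment[owner].append(partition)
    return assignment


def idle_consumers(assignment):
    """Consumers that received no partition and therefore do no work."""
    return [consumer for consumer, owned in assignment.items() if not owned]


consumers = [f"consumer-{i}" for i in range(6)]

# 6 partitions, 6 consumers: every consumer pod owns one partition.
balanced = assign(list(range(6)), consumers)

# 3 partitions, 6 consumers: half of the consumer pods sit idle.
oversized = assign(list(range(3)), consumers)

print(balanced)
print(idle_consumers(oversized))
```

Adding consumer pods beyond the number of partitions does not speed up draining a backlog, so the topic's partitions and the consumer replicas are usually scaled together.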

Cluster Setup

ZooKeeper setup is omitted here; see the ZooKeeper cluster setup guide.

# Upload the Kafka package and extract it

tar -xf kafka_2.12-2.1.0.tgz

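# Edit the Kafka configuration file. The trailing # notes in the listing below are annotations for this article and are not part of the file: a .properties file treats # as a comment marker only at the start of a line.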

root@zk1:/tools# cat /tools/kafka_2.12-2.1.0/config/server.properties|grep -v "#"|grep -v '^$'

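broker.id=1 # must be unique per broker; give each of the 3 nodes a different id

listeners=PLAINTEXT://10.0.0.70:9092 # listening address and port; use each node's own IP

num.network.threads=3 # number of network threads that receive client requests; increase under heavy traffic

num.io.threads=8 # number of I/O threads that process requests, including disk reads and writes; the default is 8 and can be raised

socket.send.buffer.bytes=102400 # socket send buffer size, in bytes

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/data/kafka # directory where Kafka stores its data

num.partitions=1 # default number of partitions per topic (default 1); can also be set by the application. It can be set to the number of Kafka nodes, for example 3 for 3 nodes: writing 100 GB then puts about 33 GB on node 1, 33 GB on node 2, and 34 GB on node 3. With many producing applications, writes run in parallel, which improves write I/O throughput. Writing everything to node 1 could drive its I/O very high; spreading the writes shortens write time and lowers the I/O load on each node.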

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=168 # how long Kafka keeps data; the default is 168 hours (one week), after which it is deleted automatically

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=10.0.0.70:2181,10.0.0.72:2181,10.0.0.73:2181 # addresses of the ZooKeeper cluster

zookeeper.connection.timeout.ms=6000 # ZooKeeper connection timeout in milliseconds; 6000 is 6 seconds

group.initial.rebalance.delay.ms=0
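Only broker.id and listeners differ between the three nodes, so the per-node values can be generated rather than edited by hand. The sketch below is one possible way to do that. It takes the three IPs from the listing above, assumes (this is not stated in the listing) that broker ids 2 and 3 belong to 10.0.0.72 and 10.0.0.73, and uses a made-up helper name `render_overrides`.

```python
# Broker id -> IP. Id 1 on 10.0.0.70 matches the listing; ids 2 and 3 are assumed.
BROKERS = {1: "10.0.0.70", 2: "10.0.0.72", 3: "10.0.0.73"}

# The same ZooKeeper connection string is used on every broker.
ZOOKEEPER = ",".join(f"{ip}:2181" for ip in BROKERS.values())


def render_overrides(broker_id: int, ip: str) -> str:
    """Return the node-specific lines of server.properties as text."""
    lines = [
        f"broker.id={broker_id}",
        f"listeners=PLAINTEXT://{ip}:9092",
        "log.dirs=/data/kafka",
        f"zookeeper.connect={ZOOKEEPER}",
    ]
    return "\n".join(lines) + "\n"


configs = {bid: render_overrides(bid, ip) for bid, ip in BROKERS.items()}

for bid, text in configs.items():
    print(f"# --- broker {bid} ---")
    print(text)
```

The output contains no trailing annotations, so it can be merged into each node's server.properties as is.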

# Create the data directory

mkdir -p /data/kafka

# Create a symbolic link

root@zk3:/tools# ln -sv /tools/kafka_2.12-2.1.0 /tools/kafka

'/tools/kafka' -> '/tools/kafka_2.12-2.1.0'

# Start Kafka with the configuration file /tools/kafka/config/server.properties

root@zk1:/tools# /tools/kafka/bin/kafka-server-start.sh /tools/kafka/config/server.properties &

[1] 72882

root@zk2:/tools# /tools/kafka/bin/kafka-server-start.sh /tools/kafka/config/server.properties &

[1] 72311

root@zk3:/tools# /tools/kafka/bin/kafka-server-start.sh /tools/kafka/config/server.properties &

[1] 72663

# Check the log: a "started" line means the broker started successfully

[2023-09-24 06:59:15,836] INFO [ThrottledChannelReaper-Produce]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[2023-09-24 06:59:15,838] INFO [ThrottledChannelReaper-Request]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[2023-09-24 06:59:15,838] INFO [ThrottledChannelReaper-Fetch]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[2023-09-24 06:59:15,897] INFO Loading logs. (kafka.log.LogManager)

[2023-09-24 06:59:15,912] INFO Logs loading complete in 15 ms. (kafka.log.LogManager)

[2023-09-24 06:59:15,935] INFO Starting log cleanup with a period of 300000 ms. (kafka.log.LogManager)

[2023-09-24 06:59:15,952] INFO Starting log flusher with a default period of 9223372036854775807 ms. (kafka.log.LogManager)

[2023-09-24 06:59:18,346] INFO Awaiting socket connections on 10.0.0.70:9092. (kafka.network.Acceptor)

[2023-09-24 06:59:18,389] INFO [SocketServer brokerId=1] Started 1 acceptor threads (kafka.network.SocketServer)

[2023-09-24 06:59:18,429] INFO [ExpirationReaper-1-Fetch]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2023-09-24 06:59:18,430] INFO [ExpirationReaper-1-Produce]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2023-09-24 06:59:18,433] INFO [ExpirationReaper-1-DeleteRecords]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2023-09-24 06:59:18,466] INFO [LogDirFailureHandler]: Starting (kafka.server.ReplicaManager$LogDirFailureHandler)

[2023-09-24 06:59:18,562] INFO Creating /brokers/ids/1 (is it secure? false) (kafka.zk.KafkaZkClient)

[2023-09-24 06:59:18,568] INFO Result of znode creation at /brokers/ids/1 is: OK (kafka.zk.KafkaZkClient)

[2023-09-24 06:59:18,569] INFO Registered broker 1 at path /brokers/ids/1 with addresses: ArrayBuffer(EndPoint(10.0.0.70,9092,ListenerName(PLAINTEXT),PLAINTEXT)) (kafka.zk.KafkaZkClient)

[2023-09-24 06:59:18,572] WARN No meta.properties file under dir /data/kafka/meta.properties (kafka.server.BrokerMetadataCheckpoint)

[2023-09-24 06:59:18,671] INFO [ExpirationReaper-1-topic]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2023-09-24 06:59:18,677] INFO [ExpirationReaper-1-Heartbeat]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2023-09-24 06:59:18,679] INFO [ExpirationReaper-1-Rebalance]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2023-09-24 06:59:18,702] INFO [GroupCoordinator 1]: Starting up. (kafka.coordinator.group.GroupCoordinator)

[2023-09-24 06:59:18,704] INFO [GroupCoordinator 1]: Startup complete. (kafka.coordinator.group.GroupCoordinator)

[2023-09-24 06:59:18,718] INFO [GroupMetadataManager brokerId=1] Removed 0 expired offsets in 13 milliseconds. (kafka.coordinator.group.GroupMetadataManager)

[2023-09-24 06:59:18,730] INFO [ProducerId Manager 1]: Acquired new producerId block (brokerId:1,blockStartProducerId:2000,blockEndProducerId:2999) by writing to Zk with path version 3 (kafka.coordinator.transaction.ProducerIdManager)

[2023-09-24 06:59:18,761] INFO [TransactionCoordinator id=1] Starting up. (kafka.coordinator.transaction.TransactionCoordinator)

[2023-09-24 06:59:18,764] INFO [TransactionCoordinator id=1] Startup complete. (kafka.coordinator.transaction.TransactionCoordinator)

[2023-09-24 06:59:18,765] INFO [Transaction Marker Channel Manager 1]: Starting (kafka.coordinator.transaction.TransactionMarkerChannelManager)

[2023-09-24 06:59:18,838] INFO [/config/changes-event-process-thread]: Starting (kafka.common.ZkNodeChangeNotificationListener$ChangeEventProcessThread)

[2023-09-24 06:59:18,867] INFO [SocketServer brokerId=1] Started processors for 1 acceptors (kafka.network.SocketServer)

[2023-09-24 06:59:18,874] INFO Kafka version : 2.1.0 (org.apache.kafka.common.utils.AppInfoParser)

[2023-09-24 06:59:18,874] INFO Kafka commitId : 809be928f1ae004e (org.apache.kafka.common.utils.AppInfoParser)

[2023-09-24 06:59:18,875] INFO [KafkaServer id=1] started (kafka.server.KafkaServer) # indicates a successful start

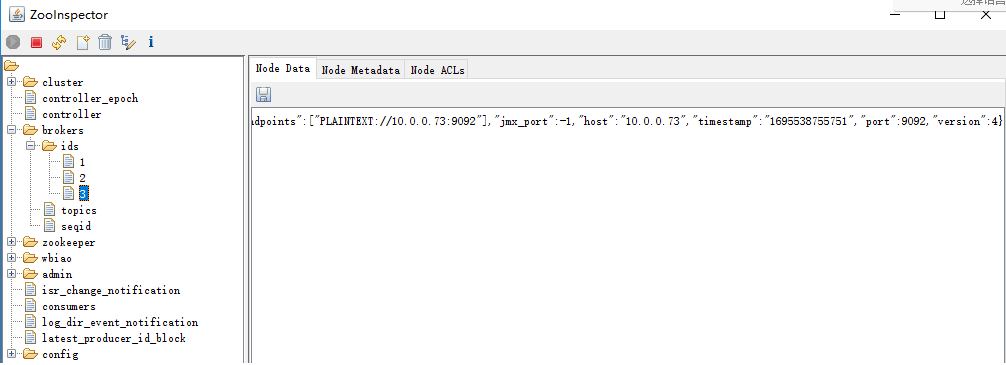

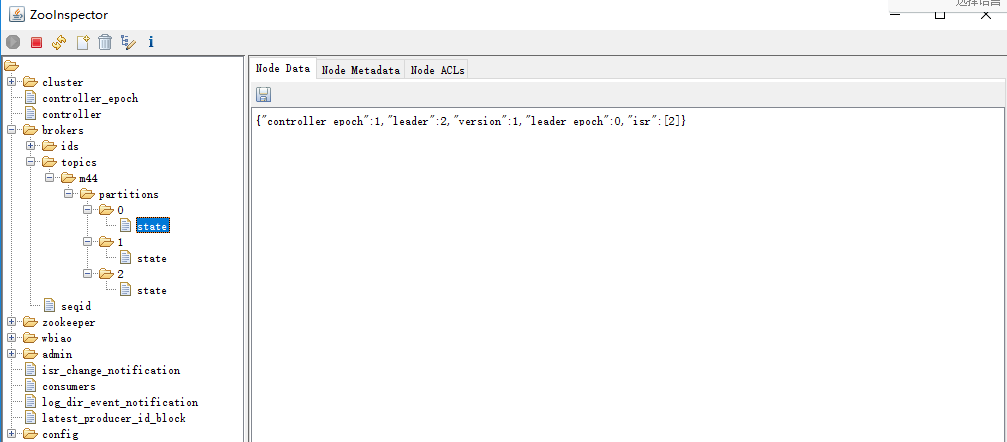

Log in to ZooKeeper and check the data

# Create a topic

# --partitions 3: use 3 partitions

# --replication-factor 1: keep 1 replica; 3 replicas would use three times the disk space

# --topic m44: topic name

# In a company, topics are usually created by the developers' programs, and the developers decide the number of partitions.

# Run on any node: create topic m44 with 3 partitions (--partitions 3) and 1 replica (--replication-factor 1)

/tools/kafka/bin/kafka-topics.sh --create --zookeeper 10.0.0.70:2181,10.0.0.72:2181,10.0.0.73:2181 --partitions 3 --replication-factor 1 --topic m44

root@zk1:/tools# /tools/kafka/bin/kafka-topics.sh --create --zookeeper 10.0.0.70:2181,10.0.0.72:2181,10.0.0.73:2181 --partitions 3 --replication-factor 1 --topic m44

Created topic "m44".

# Check each node

root@zk1:/tools# ls -l /data/kafka/

total 16

-rw-r--r-- 1 root root 0 Sep 24 06:59 cleaner-offset-checkpoint

-rw-r--r-- 1 root root 4 Sep 24 07:08 log-start-offset-checkpoint

drwxr-xr-x 2 root root 141 Sep 24 07:07 m44-2

-rw-r--r-- 1 root root 54 Sep 24 06:59 meta.properties

-rw-r--r-- 1 root root 12 Sep 24 07:08 recovery-point-offset-checkpoint

-rw-r--r-- 1 root root 12 Sep 24 07:09 replication-offset-checkpoint

root@zk2:/tools# ls -l /data/kafka/

total 16

-rw-r--r-- 1 root root 0 Sep 24 06:59 cleaner-offset-checkpoint

-rw-r--r-- 1 root root 4 Sep 24 07:09 log-start-offset-checkpoint

drwxr-xr-x 2 root root 141 Sep 24 07:07 m44-0

-rw-r--r-- 1 root root 54 Sep 24 06:59 meta.properties

-rw-r--r-- 1 root root 12 Sep 24 07:09 recovery-point-offset-checkpoint

-rw-r--r-- 1 root root 12 Sep 24 07:09 replication-offset-checkpoint

root@zk3:/tools# ls -l /data/kafka/

total 16

-rw-r--r-- 1 root root 0 Sep 24 06:59 cleaner-offset-checkpoint

-rw-r--r-- 1 root root 4 Sep 24 07:09 log-start-offset-checkpoint

drwxr-xr-x 2 root root 141 Sep 24 07:07 m44-1

-rw-r--r-- 1 root root 54 Sep 24 06:59 meta.properties

-rw-r--r-- 1 root root 12 Sep 24 07:09 recovery-point-offset-checkpoint

-rw-r--r-- 1 root root 12 Sep 24 07:09 replication-offset-checkpoint
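In the listings above, each broker ends up with exactly one m44-N directory. A simplified sketch of such a placement is a rotation over the broker list. The real Kafka assignment picks a random starting broker and applies extra shifting, so `assign_replicas` and its `start` parameter are illustrative assumptions, as is the mapping of hosts zk1, zk2, zk3 to broker ids 1, 2, 3.

```python
def assign_replicas(brokers, num_partitions, replication_factor, start=0):
    """Rotate through the broker list so partitions and replicas spread evenly."""
    if replication_factor > len(brokers):
        raise ValueError("replication factor cannot exceed the number of brokers")
    count = len(brokers)
    return {
        partition: [
            brokers[(start + partition + offset) % count]
            for offset in range(replication_factor)
        ]
        for partition in range(num_partitions)
    }


def directories_on(broker, topic, assignment):
    """Names of the partition directories that would appear under log.dirs."""
    return [f"{topic}-{p}" for p, replicas in assignment.items() if broker in replicas]


brokers = [1, 2, 3]

# 3 partitions, 1 replica each; start=1 is chosen to mirror the listings above.
m44 = assign_replicas(brokers, num_partitions=3, replication_factor=1, start=1)

# 3 partitions, 3 replicas each: every broker stores every partition.
three_copies = assign_replicas(brokers, num_partitions=3, replication_factor=3)

print(directories_on(1, "m44", m44))
print(three_copies)
```

With one replica the data is spread but not duplicated, so losing a broker makes its partition unavailable. With three replicas every broker stores all three partitions, at three times the disk usage.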

# Create a topic with 3 partitions and 3 replicas per partition

root@zk2:/tools# /tools/kafka/bin/kafka-topics.sh --create --zookeeper 10.0.0.70:2181,10.0.0.72:2181,10.0.0.73:2181 --partitions 3 --replication-factor 3 --topic m45

Created topic "m45".

Omitted.............

# Check each node

root@zk1:/tools# ll /data/kafka/

total 16

drwxr-xr-x 6 root root 239 Sep 24 07:11 ./

drwxr-xr-x 3 root root 19 Sep 24 06:57 ../

-rw-r--r-- 1 root root 0 Sep 24 06:59 cleaner-offset-checkpoint

-rw-r--r-- 1 root root 0 Sep 24 06:59 .lock

-rw-r--r-- 1 root root 4 Sep 24 07:11 log-start-offset-checkpoint

drwxr-xr-x 2 root root 141 Sep 24 07:07 m44-2/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-0/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-1/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-2/

-rw-r--r-- 1 root root 54 Sep 24 06:59 meta.properties

-rw-r--r-- 1 root root 36 Sep 24 07:11 recovery-point-offset-checkpoint

-rw-r--r-- 1 root root 36 Sep 24 07:11 replication-offset-checkpoint

root@zk2:/tools# ll /data/kafka/

total 16

drwxr-xr-x 6 root root 239 Sep 24 07:11 ./

drwxr-xr-x 3 root root 19 Sep 24 06:57 ../

-rw-r--r-- 1 root root 0 Sep 24 06:59 cleaner-offset-checkpoint

-rw-r--r-- 1 root root 0 Sep 24 06:59 .lock

-rw-r--r-- 1 root root 4 Sep 24 07:11 log-start-offset-checkpoint

drwxr-xr-x 2 root root 141 Sep 24 07:07 m44-0/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-0/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-1/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-2/

-rw-r--r-- 1 root root 54 Sep 24 06:59 meta.properties

-rw-r--r-- 1 root root 36 Sep 24 07:11 recovery-point-offset-checkpoint

-rw-r--r-- 1 root root 36 Sep 24 07:11 replication-offset-checkpoint

root@zk3:/tools# ll /data/kafka/

total 16

drwxr-xr-x 6 root root 239 Sep 24 07:12 ./

drwxr-xr-x 3 root root 19 Sep 24 06:57 ../

-rw-r--r-- 1 root root 0 Sep 24 06:59 cleaner-offset-checkpoint

-rw-r--r-- 1 root root 0 Sep 24 06:59 .lock

-rw-r--r-- 1 root root 4 Sep 24 07:11 log-start-offset-checkpoint

drwxr-xr-x 2 root root 141 Sep 24 07:07 m44-1/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-0/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-1/

drwxr-xr-x 2 root root 141 Sep 24 07:11 m45-2/

-rw-r--r-- 1 root root 54 Sep 24 06:59 meta.properties

-rw-r--r-- 1 root root 36 Sep 24 07:11 recovery-point-offset-checkpoint

-rw-r--r-- 1 root root 36 Sep 24 07:12 replication-offset-checkpoint

# Describe the topics

root@zk3:/tools# /tools/kafka/bin/kafka-topics.sh --describe --zookeeper 10.0.0.70:2181 --topic m44

Topic:m44 PartitionCount:3 ReplicationFactor:1 Configs:

Topic: m44 Partition: 0 Leader: 2 Replicas: 2 Isr: 2

Topic: m44 Partition: 1 Leader: 3 Replicas: 3 Isr: 3

Topic: m44 Partition: 2 Leader: 1 Replicas: 1 Isr: 1

root@zk2:/tools# /tools/kafka/bin/kafka-topics.sh --describe --zookeeper 10.0.0.70:2181 --topic m45

Topic:m45 PartitionCount:3 ReplicationFactor:3 Configs:

Topic: m45 Partition: 0 Leader: 1 Replicas: 1,2,3 Isr: 1,2,3

Topic: m45 Partition: 1 Leader: 2 Replicas: 2,3,1 Isr: 2,3,1

Topic: m45 Partition: 2 Leader: 3 Replicas: 3,1,2 Isr: 3,1,2

How to read the output:

Topic: m45 (topic name) Partition: 0 (partition) Leader: 1 (id of the broker hosting the partition leader) Replicas: 1,2,3 (number of replicas and the brokers that hold them) Isr: 1,2,3

Topic: m45 (topic name) Partition: 1 (partition) Leader: 2 (id of the broker hosting the partition leader) Replicas: 2,3,1 (number of replicas and the brokers that hold them) Isr: 2,3,1

Topic: m45 (topic name) Partition: 2 (partition) Leader: 3 (id of the broker hosting the partition leader) Replicas: 3,1,2 (number of replicas and the brokers that hold them) Isr: 3,1,2

Isr: 1,2,3 means these replicas are in sync. An in-sync replica is eligible to be elected leader: if the leader fails, a new leader is chosen from this list and continues to serve reads and writes.

# List all topics

root@zk2:/tools# /tools/kafka/bin/kafka-topics.sh --list --zookeeper 10.0.0.70:2181

m44

m45

# Write messages

root@zk2:/tools# /tools/kafka/bin/kafka-console-producer.sh --broker-list 10.0.0.70:9092,10.0.0.72:9092,10.0.0.73:9092 --topic m44

test1

test2

>>>test1

>test2

>test3

>mest

>

# Consume messages from the command line

# Send test messages

root@zk2:/tools# /tools/kafka/bin/kafka-console-producer.sh --broker-list 10.0.0.70:9092,10.0.0.72:9092,10.0.0.73:9092 --topic m44

>test

>tesr1

>111

# Read the test messages

/tools/kafka/bin/kafka-console-consumer.sh --topic m44 --bootstrap-server 10.0.0.72:9092 --from-beginning

111

test2

test3

jkj

tesr1

test1

test2

kk

test

# List topics before deleting m44

root@zk3:/tools# /tools/kafka/bin/kafka-topics.sh --list --zookeeper 10.0.0.70:2181

__consumer_offsets

m44

m45

# Delete the m44 topic

root@zk3:/tools# /tools/kafka/bin/kafka-topics.sh --delete --zookeeper 10.0.0.70:2181 --topic m44

Topic m44 is marked for deletion.

Note: This will have no impact if delete.topic.enable is not set to true.

[2023-09-24 07:33:42,195] INFO [GroupCoordinator 3]: Removed 0 offsets associated with deleted partitions: m44-1, m44-2, m44-0. (kafka.coordinator.group.GroupCoordinator)

[2023-09-24 07:33:42,244] INFO [ReplicaFetcherManager on broker 3] Removed fetcher for partitions Set() (kafka.server.ReplicaFetcherManager)

[2023-09-24 07:33:42,244] INFO [ReplicaAlterLogDirsManager on broker 3] Removed fetcher for partitions Set() (kafka.server.ReplicaAlterLogDirsManager)

[2023-09-24 07:33:42,247] INFO [ReplicaFetcherManager on broker 3] Removed fetcher for partitions Set(m44-1) (kafka.server.ReplicaFetcherManager)

[2023-09-24 07:33:42,247] INFO [ReplicaAlterLogDirsManager on broker 3] Removed fetcher for partitions Set(m44-1) (kafka.server.ReplicaAlterLogDirsManager)

[2023-09-24 07:33:42,250] INFO [ReplicaFetcherManager on broker 3] Removed fetcher for partitions Set() (kafka.server.ReplicaFetcherManager)

[2023-09-24 07:33:42,250] INFO [ReplicaAlterLogDirsManager on broker 3] Removed fetcher for partitions Set() (kafka.server.ReplicaAlterLogDirsManager)

[2023-09-24 07:33:42,252] INFO [ReplicaFetcherManager on broker 3] Removed fetcher for partitions Set(m44-1) (kafka.server.ReplicaFetcherManager)

[2023-09-24 07:33:42,252] INFO [ReplicaAlterLogDirsManager on broker 3] Removed fetcher for partitions Set(m44-1) (kafka.server.ReplicaAlterLogDirsManager)

[2023-09-24 07:33:42,271] INFO Log for partition m44-1 is renamed to /data/kafka/m44-1.8ab418351dd3472798c291bcec908ae1-delete and is scheduled for deletion (kafka.log.LogManager)

# Check after deletion

root@zk3:/tools# /tools/kafka/bin/kafka-topics.sh --list --zookeeper 10.0.0.70:2181

__consumer_offsets
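The --describe output shown earlier is regular enough to check with a script, for example to spot partitions whose ISR is smaller than the replica list. The sketch below parses text in that format. `HEALTHY` is copied from the m45 output above, `DEGRADED` is an invented sample for illustration, and the function names are hypothetical.

```python
import re

# Matches one partition row of `kafka-topics.sh --describe` (format as shown above).
ROW = re.compile(
    r"Topic:\s*(?P<topic>\S+)\s+Partition:\s*(?P<partition>\d+)\s+"
    r"Leader:\s*(?P<leader>-?\d+)\s+Replicas:\s*(?P<replicas>[\d,]+)\s+"
    r"Isr:\s*(?P<isr>[\d,]*)"
)


def to_ids(csv: str):
    """Turn '1,2,3' into [1, 2, 3]; an empty string becomes an empty list."""
    return [int(item) for item in csv.split(",") if item]


def parse_describe(text: str):
    """Return one dict per partition row; header lines are skipped."""
    rows = []
    for line in text.splitlines():
        match = ROW.search(line)
        if match is None:
            continue
        rows.append({
            "topic": match["topic"],
            "partition": int(match["partition"]),
            "leader": int(match["leader"]),
            "replicas": to_ids(match["replicas"]),
            "isr": to_ids(match["isr"]),
        })
    return rows


def under_replicated(rows):
    """Partitions where at least one assigned replica is missing from the ISR."""
    return [row["partition"] for row in rows if set(row["isr"]) != set(row["replicas"])]


HEALTHY = """Topic:m45 PartitionCount:3 ReplicationFactor:3 Configs:
Topic: m45 Partition: 0 Leader: 1 Replicas: 1,2,3 Isr: 1,2,3
Topic: m45 Partition: 1 Leader: 2 Replicas: 2,3,1 Isr: 2,3,1
Topic: m45 Partition: 2 Leader: 3 Replicas: 3,1,2 Isr: 3,1,2"""

# Invented sample: broker 1 has fallen out of sync for partition 0.
DEGRADED = "Topic: m45 Partition: 0 Leader: 2 Replicas: 1,2,3 Isr: 2,3"

print(under_replicated(parse_describe(HEALTHY)))
print(under_replicated(parse_describe(DEGRADED)))
```

In practice the text would come from running the describe command and capturing its output; newer Kafka versions may print the rows in a slightly different layout, so the pattern should be checked against the actual output first.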
