Kubernetes cluster upgrade

1. Upgrade versions and upgrade plans

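Notes on version upgrades

Patch-level upgrade: for example 1.21 to 1.21.5. A patch release is a stable update that generally fixes bugs in that version, so this kind of upgrade is low risk.

Upgrade across feature releases: for example 1.21 to 1.24 or 1.26. An upgrade like this can bring API changes and changes in other add-ons. Before doing it, take the YAML files of every pod running in production and test them on a cluster that already runs the target version (for example a 1.26 cluster). Fix every API and add-on that needs changing in the test environment, and upgrade only once everything works. Note that an in-place upgrade is normally done one minor version at a time; a direct jump such as 1.21 to 1.26 is realistic only with plan 1 below.

Upgrade plan 1: two clusters side by side

Prepare two environments for the production upgrade. One is the existing cluster (1.21.0) that currently carries all business traffic. The other is a newly deployed production cluster on the new version (1.24.0). Migrate the pods from the old cluster to the new one step by step and test the business in the new environment. If everything behaves normally, switch the business traffic to the new cluster. Keep the old cluster for a while, and decommission it only after the new cluster has run without problems.

For this plan it is best to deploy an nginx in front to route business traffic. Clients reach nginx first and the Kubernetes environment second, which makes it easy to switch between the old and new clusters at any time.

Upgrade plan 2: rolling upgrade

Before upgrading, all production pods must likewise be tested on a cluster running the target version, covering APIs and add-ons, to make sure every pod runs correctly there.

Upgrade the masters one at a time, keeping at least one master in service throughout. Then upgrade the nodes, again one at a time, so that the business is never interrupted.

Master upgrade: on every node, remove one master from the nginx load balancer configuration file kube-lb.conf and restart nginx. The master that has been taken out of rotation can now be upgraded by replacing its binaries with the new version. When the replacement is done, add the master back to kube-lb.conf on the nodes and restart nginx. Repeat the same steps until all masters are upgraded.

Node upgrade: evict the business pods from the node being upgraded, and make sure the other nodes have enough resources for the pods that move over. Then stop kubelet and kube-proxy, replace the node's binaries, and the upgrade of that node is done.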
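The pre-upgrade check described above (run every production YAML against the target version) is easier with an inventory of which API versions the manifests actually use. The following is a minimal sketch, not part of the original procedure: the function name `list_api_kinds` and the directory argument are hypothetical, and it only looks at top-level `apiVersion:` and `kind:` keys.

```shell
# Print each distinct "apiVersion kind" pair found in the YAML files under a directory.
# Only top-level keys are considered; quoted values or nested objects are not handled.
list_api_kinds() {
  dir="$1"
  find "$dir" -type f \( -name '*.yaml' -o -name '*.yml' \) -exec awk '
    # Emit the pair collected for the current YAML document, then reset.
    function emit() { if (api != "" && kind != "") print api, kind; api = ""; kind = "" }
    FNR == 1       { emit() }      # a new file starts a new document
    /^---/         { emit() }      # so does a document separator
    /^apiVersion:/ { api = $2 }
    /^kind:/       { kind = $2 }
    END            { emit() }
  ' {} + | sort -u
}
```

The resulting list can be compared by hand with the output of `kubectl api-versions` on the test cluster; any pair that the new version no longer serves is a manifest to fix before the production upgrade.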

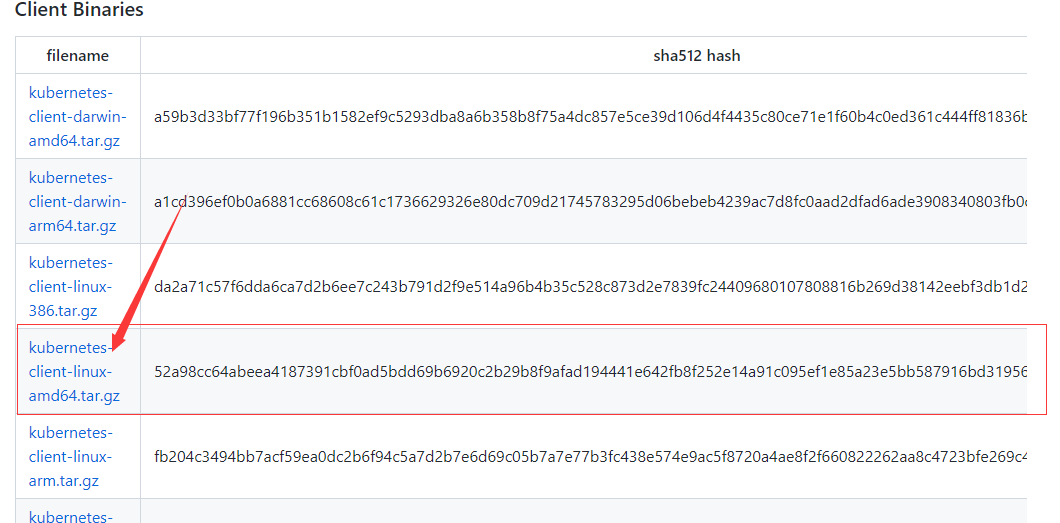

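The binaries for the upgrade have to be downloaded manually from the kubernetes project on GitHub.

For the client binaries, download the Linux amd64 (64-bit) package.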
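As a small aid for the download step, the sketch below prints the URLs of the client, server and node tarballs for one release. It assumes the `https://dl.k8s.io/<version>/<file>` layout that the project's CHANGELOG pages link to; the function name `release_urls` is hypothetical, and the links should be checked against the release page before use.

```shell
# Print the download URLs of the three release tarballs for a given version.
# Assumption: the dl.k8s.io URL layout linked from the Kubernetes CHANGELOG pages.
release_urls() {
  version="$1"          # e.g. v1.21.4
  arch="${2:-amd64}"    # defaults to amd64
  for part in client server node; do
    printf 'https://dl.k8s.io/%s/kubernetes-%s-linux-%s.tar.gz\n' "$version" "$part" "$arch"
  done
}
```

For example, `release_urls v1.21.4 | xargs -n1 curl -fLO` would fetch the three packages into the current directory.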

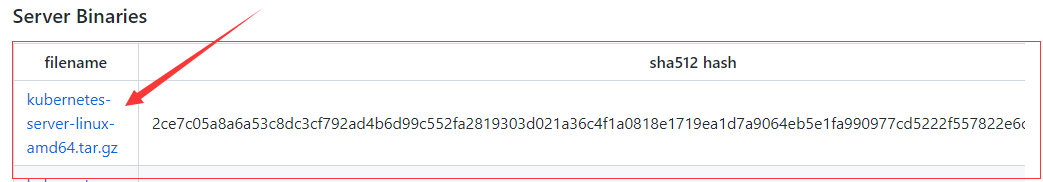

Server package download

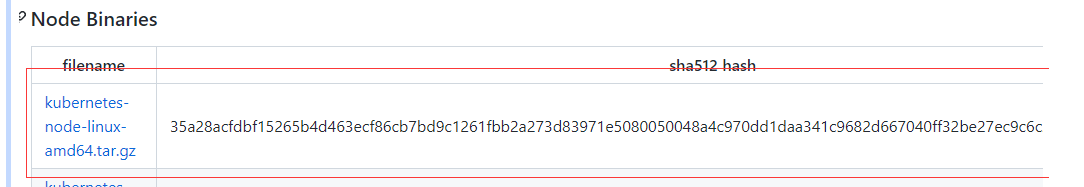

Node package download

2. Upgrading the master nodes

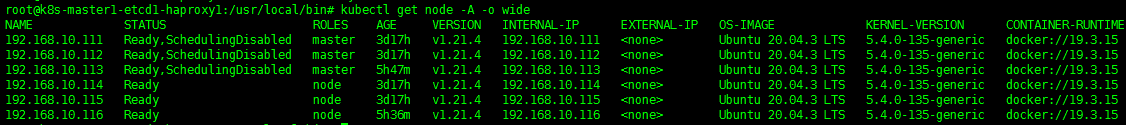

Upgrading a master means replacing the prebuilt binaries kube-apiserver, kube-controller-manager, kube-proxy, kube-scheduler, kubectl and kubelet. Of these, kubectl is only a command-line client. The other five run as services, and each of those services has to be stopped first, because a binary cannot be replaced while its service is still running.

Upgrade the test environment before production. After the test environment is upgraded, run every pod again and fix the YAML files wherever something breaks. Once the API compatibility of all pod YAML files has been confirmed there, upgrade production and deploy the YAML files from the test environment to it.

Check the cluster before upgrading:

    root@k8s-master1-etcd1-haproxy1:/usr/local/src# kubectl get node -A -o wide
    NAME             STATUS                     ROLES    AGE     VERSION   INTERNAL-IP      EXTERNAL-IP   OS-IMAGE             KERNEL-VERSION      CONTAINER-RUNTIME
    192.168.10.111   Ready,SchedulingDisabled   master   3d11h   v1.21.0   192.168.10.111   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.112   Ready,SchedulingDisabled   master   3d11h   v1.21.0   192.168.10.112   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.113   Ready,SchedulingDisabled   master   5m2s    v1.21.0   192.168.10.113   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.114   Ready                      node     3d11h   v1.21.0   192.168.10.114   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.115   Ready                      node     3d11h   v1.21.0   192.168.10.115   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.116   Ready                      node     5h6m    v1.21.0   192.168.10.116   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15

The masters are upgraded in a rolling fashion. First, on all node and master hosts, comment out 192.168.10.113 and 192.168.10.111 in the load balancer configuration so that these two masters can be upgraded, then restart the load balancer:

    cat >/etc/kube-lb/conf/kube-lb.conf<<'EOF'
    user root;
    worker_processes 1;

    error_log /etc/kube-lb/logs/error.log warn;

    events {
        worker_connections 3000;
    }

    stream {
        upstream backend {
            #server 192.168.10.113:6443 max_fails=2 fail_timeout=3s;
            #server 192.168.10.111:6443 max_fails=2 fail_timeout=3s;
            server 192.168.10.112:6443 max_fails=2 fail_timeout=3s;
        }

        server {
            listen 127.0.0.1:6443;
            proxy_connect_timeout 1s;
            proxy_pass backend;
        }
    }
    EOF

    # Restart the load balancer
    systemctl restart kube-lb.service

Upload the release archives to the deploy host and unpack them:

    root@k8s-harbor-deploy:/tools# ls -la kubernetes*
    -rw-r--r-- 1 root root  29154332 Oct 18 03:05 kubernetes-client-linux-amd64.tar.gz
    -rw-r--r-- 1 root root 118151109 Oct 18 03:18 kubernetes-node-linux-amd64.tar.gz
    -rw-r--r-- 1 root root 325784034 Oct 18 03:43 kubernetes-server-linux-amd64.tar.gz
    -rw-r--r-- 1 root root    525314 Oct 18 03:19 kubernetes.tar.gz

    # Unpack
    tar -xf kubernetes-client-linux-amd64.tar.gz
    tar -xf kubernetes-node-linux-amd64.tar.gz
    tar -xf kubernetes-server-linux-amd64.tar.gz
    tar -xf kubernetes.tar.gz

Confirm the directory on each master that holds the files to be upgraded. The six binaries to replace are kube-apiserver, kube-controller-manager, kube-proxy, kube-scheduler, kubectl and kubelet:

    root@k8s-master1-etcd1-haproxy1:/usr/local/bin# ls -l kube*
    -rwxr-xr-x 1 root root 122064896 Jan 2 22:31 kube-apiserver
    -rwxr-xr-x 1 root root 116281344 Jan 2 22:31 kube-controller-manager
    -rwxr-xr-x 1 root root  43130880 Jan 2 22:32 kube-proxy
    -rwxr-xr-x 1 root root  47104000 Jan 2 22:31 kube-scheduler
    -rwxr-xr-x 1 root root  46436352 Jan 2 22:31 kubectl
    -rwxr-xr-x 1 root root 118062928 Jan 2 22:32 kubelet

The new binaries are in /tools/kubernetes/server/bin on the deploy host:

    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# ls -l /tools/kubernetes/server/bin
    total 1072912
    -rwxr-xr-x 1 root root  50581504 Aug 12 2021 apiextensions-apiserver
    -rwxr-xr-x 1 root root  48529408 Aug 12 2021 kube-aggregator
    -rwxr-xr-x 1 root root 122101760 Aug 12 2021 kube-apiserver
    -rw-r--r-- 1 root root         8 Aug 12 2021 kube-apiserver.docker_tag
    -rw------- 1 root root 126893056 Aug 12 2021 kube-apiserver.tar
    -rwxr-xr-x 1 root root 116314112 Aug 12 2021 kube-controller-manager
    -rw-r--r-- 1 root root         8 Aug 12 2021 kube-controller-manager.docker_tag
    -rw------- 1 root root 121105408 Aug 12 2021 kube-controller-manager.tar
    -rwxr-xr-x 1 root root  43134976 Aug 12 2021 kube-proxy
    -rw-r--r-- 1 root root         8 Aug 12 2021 kube-proxy.docker_tag
    -rw------- 1 root root 105137664 Aug 12 2021 kube-proxy.tar
    -rwxr-xr-x 1 root root  47112192 Aug 12 2021 kube-scheduler
    -rw-r--r-- 1 root root         8 Aug 12 2021 kube-scheduler.docker_tag
    -rw------- 1 root root  51903488 Aug 12 2021 kube-scheduler.tar
    -rwxr-xr-x 1 root root  44642304 Aug 12 2021 kubeadm
    -rwxr-xr-x 1 root root  46424064 Aug 12 2021 kubectl
    -rwxr-xr-x 1 root root  54984840 Aug 12 2021 kubectl-convert
    -rwxr-xr-x 1 root root 118164016 Aug 12 2021 kubelet
    -rwxr-xr-x 1 root root   1593344 Aug 12 2021 mounter

Check the version of each component:

    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# ./kube-apiserver --version
    Kubernetes v1.21.4
    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# ./kube-scheduler --version
    Kubernetes v1.21.4
    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# ./kube-proxy --version
    Kubernetes v1.21.4
    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# ./kube-controller-manager --version
    Kubernetes v1.21.4
    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# ./kubelet --version
    Kubernetes v1.21.4

Stop the services on the two masters being upgraded (192.168.10.111 and 192.168.10.113):

    systemctl stop kube-apiserver kube-controller-manager kube-proxy kube-scheduler kubelet

Check the cluster state from the master that is still in service. The two stopped masters now show NotReady:

    root@k8s-master2-etcd2-haproxy2:~# kubectl get node -A
    NAME             STATUS                        ROLES    AGE     VERSION
    192.168.10.111   NotReady,SchedulingDisabled   master   3d17h   v1.21.0
    192.168.10.112   Ready,SchedulingDisabled      master   3d17h   v1.21.0
    192.168.10.113   NotReady,SchedulingDisabled   master   5h16m   v1.21.0
    192.168.10.114   Ready                         node     3d16h   v1.21.0
    192.168.10.115   Ready                         node     3d16h   v1.21.0
    192.168.10.116   Ready                         node     5h6m    v1.21.0

Copy the new binaries from /tools/kubernetes/server/bin on the deploy host to both masters:

    scp kube-apiserver kube-controller-manager kube-proxy kube-scheduler kubelet kubectl 192.168.10.111:/usr/local/bin/
    scp kube-apiserver kube-controller-manager kube-proxy kube-scheduler kubelet kubectl 192.168.10.113:/usr/local/bin/

Start the services again on 192.168.10.111 and 192.168.10.113:

    systemctl start kube-apiserver kube-controller-manager kube-proxy kube-scheduler kubelet

Check the result. 192.168.10.111 and 192.168.10.113 now report v1.21.4:

    root@k8s-master3-etcd3-haproxy3:~# kubectl get node -A -o wide
    NAME             STATUS                     ROLES    AGE     VERSION   INTERNAL-IP      EXTERNAL-IP   OS-IMAGE             KERNEL-VERSION      CONTAINER-RUNTIME
    192.168.10.111   Ready,SchedulingDisabled   master   3d17h   v1.21.4   192.168.10.111   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.112   Ready,SchedulingDisabled   master   3d17h   v1.21.0   192.168.10.112   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.113   Ready,SchedulingDisabled   master   5h19m   v1.21.4   192.168.10.113   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.114   Ready                      node     3d17h   v1.21.0   192.168.10.114   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.115   Ready                      node     3d17h   v1.21.0   192.168.10.115   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.116   Ready                      node     5h9m    v1.21.0   192.168.10.116   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15

Next, upgrade the remaining master, 192.168.10.112. Change the configuration file on all hosts so that the two upgraded masters are back in rotation and 192.168.10.112 is commented out, then restart the load balancer:

    cat >/etc/kube-lb/conf/kube-lb.conf<<'EOF'
    user root;
    worker_processes 1;

    error_log /etc/kube-lb/logs/error.log warn;

    events {
        worker_connections 3000;
    }

    stream {
        upstream backend {
            server 192.168.10.113:6443 max_fails=2 fail_timeout=3s;
            server 192.168.10.111:6443 max_fails=2 fail_timeout=3s;
            #server 192.168.10.112:6443 max_fails=2 fail_timeout=3s;
        }

        server {
            listen 127.0.0.1:6443;
            proxy_connect_timeout 1s;
            proxy_pass backend;
        }
    }
    EOF

    # Restart the load balancer
    systemctl restart kube-lb.service

Stop the services on 192.168.10.112, copy the binaries from the deploy host, and start the services again:

    # On 192.168.10.112: stop the services
    systemctl stop kube-apiserver kube-controller-manager kube-proxy kube-scheduler kubelet

    # On the deploy host: copy the binaries
    scp kube-apiserver kube-controller-manager kube-proxy kube-scheduler kubelet kubectl 192.168.10.112:/usr/local/bin/

    # On 192.168.10.112: start the services
    systemctl start kube-apiserver kube-controller-manager kube-proxy kube-scheduler kubelet

Check again. All three masters are now on v1.21.4:

    root@k8s-master2-etcd2-haproxy2:~# kubectl get node -A -o wide
    NAME             STATUS                     ROLES    AGE     VERSION   INTERNAL-IP      EXTERNAL-IP   OS-IMAGE             KERNEL-VERSION      CONTAINER-RUNTIME
    192.168.10.111   Ready,SchedulingDisabled   master   3d17h   v1.21.4   192.168.10.111   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.112   Ready,SchedulingDisabled   master   3d17h   v1.21.4   192.168.10.112   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.113   Ready,SchedulingDisabled   master   5h24m   v1.21.4   192.168.10.113   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.114   Ready                      node     3d17h   v1.21.0   192.168.10.114   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.115   Ready                      node     3d17h   v1.21.0   192.168.10.115   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.116   Ready                      node     5h13m   v1.21.0   192.168.10.116   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
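The master rotation above rewrites the whole kube-lb.conf each time a master is taken out of or put back into the backend list. As an alternative, the same change can be made by commenting a single `server` line in and out. This is only a sketch: the function names `lb_disable_master` and `lb_enable_master` are hypothetical, port 6443 is taken from the configuration shown in this article, and `sed -i` without a suffix assumes GNU sed, as shipped with the Ubuntu hosts used here.

```shell
# Comment out the "server <ip>:6443 ..." line of one master in the given config file.
lb_disable_master() {
  conf="$1"
  # Escape the dots so the IP address is matched literally.
  ip_pat=$(printf '%s' "$2" | sed 's/\./\\./g')
  # Lines that are already commented do not match, so running this twice is harmless.
  sed -i -E "s/^([[:space:]]*)(server[[:space:]]+${ip_pat}:6443[[:space:]].*)\$/\1#\2/" "$conf"
}

# Remove the leading "#" from the "server <ip>:6443 ..." line of one master.
lb_enable_master() {
  conf="$1"
  ip_pat=$(printf '%s' "$2" | sed 's/\./\\./g')
  sed -i -E "s/^([[:space:]]*)#[[:space:]]*(server[[:space:]]+${ip_pat}:6443[[:space:]].*)\$/\1\2/" "$conf"
}
```

A call such as `lb_disable_master /etc/kube-lb/conf/kube-lb.conf 192.168.10.113` followed by `systemctl restart kube-lb.service` would then have the same effect as the heredoc rewrite, and would have to be run on every host that runs kube-lb.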

3. Upgrading the worker nodes

On a worker node, the components to upgrade are kubelet and kube-proxy. The procedure differs slightly from the masters: the masters run no business containers, but the nodes do. A node therefore has to be drained first. Once its business pods have been evicted, the node can be upgraded safely.

Confirm the installation path on the node. The kubectl command depends on the file /root/.kube/config, which it uses to communicate with the apiserver.

    root@k8s-node1:/usr/local/bin# pwd
    /usr/local/bin
    root@k8s-node1:/usr/local/bin# ls -l kube*
    -rwxr-xr-x 1 root root  43130880 Jan 2 22:35 kube-proxy
    -rwxr-xr-x 1 root root  46436352 Jan 2 22:35 kubectl
    -rwxr-xr-x 1 root root 118062928 Jan 2 22:35 kubelet

Draining the nodes

Every node runs DaemonSet pods such as calico-node. These pods cannot be evicted to another node, because every node already has its own copy. They do not need to be rebuilt elsewhere either, so they can simply be ignored during the drain.

    root@k8s-node1:/usr/local/bin# kubectl get pod -o wide -n kube-system
    NAME                                       READY   STATUS    RESTARTS   AGE     IP               NODE             NOMINATED NODE   READINESS GATES
    calico-kube-controllers-78bc9689fb-22s58   1/1     Running   0          3d17h   192.168.10.115   192.168.10.115   <none>           <none>
    calico-node-4nxsv                          1/1     Running   0          5h30m   192.168.10.115   192.168.10.115   <none>           <none>
    calico-node-bzccb                          1/1     Running   0          5h31m   192.168.10.111   192.168.10.111   <none>           <none>
    calico-node-msvg7                          1/1     Running   0          5h22m   192.168.10.116   192.168.10.116   <none>           <none>
    calico-node-wfv28                          1/1     Running   0          5h30m   192.168.10.114   192.168.10.114   <none>           <none>
    calico-node-xr2df                          1/1     Running   0          5h32m   192.168.10.113   192.168.10.113   <none>           <none>
    calico-node-zbtc8                          1/1     Running   0          5h32m   192.168.10.112   192.168.10.112   <none>           <none>
    coredns-7b47c747d7-wnpxg                   1/1     Running   0          2d22h   10.200.36.68     192.168.10.114   <none>           <none>

Drain 192.168.10.114 and 192.168.10.115. In production, make sure the remaining nodes have enough resources to take over the evicted pods. Draining first and upgrading afterwards is the more rigorous order for a production environment that carries live business.

    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# kubectl drain 192.168.10.114 --ignore-daemonsets --force
    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# kubectl drain 192.168.10.115 --ignore-daemonsets --force

    root@k8s-harbor-deploy:/etc/kubeasz# kubectl get node
    NAME             STATUS                     ROLES    AGE     VERSION
    192.168.10.111   Ready,SchedulingDisabled   master   3d17h   v1.21.4
    192.168.10.112   Ready,SchedulingDisabled   master   3d17h   v1.21.4
    192.168.10.113   Ready,SchedulingDisabled   master   5h35m   v1.21.4
    192.168.10.114   Ready,SchedulingDisabled   node     3d17h   v1.21.0
    192.168.10.115   Ready,SchedulingDisabled   node     3d17h   v1.21.0

192.168.10.114 and 192.168.10.115 are now marked SchedulingDisabled as well. Their business containers have been moved away and no new pods will be scheduled onto these hosts.

    # On 192.168.10.114 and 192.168.10.115: stop the services
    systemctl stop kubelet kube-proxy

    # On the deploy host: copy the binaries to 192.168.10.114 and 192.168.10.115
    scp kubectl kube-proxy kubelet 192.168.10.114:/usr/local/bin/
    scp kubectl kube-proxy kubelet 192.168.10.115:/usr/local/bin/

    # On 192.168.10.114 and 192.168.10.115: start the services
    systemctl start kubelet kube-proxy

Check the node versions:

    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# kubectl get node
    NAME             STATUS                     ROLES    AGE     VERSION
    192.168.10.111   Ready,SchedulingDisabled   master   3d17h   v1.21.4
    192.168.10.112   Ready,SchedulingDisabled   master   3d17h   v1.21.4
    192.168.10.113   Ready,SchedulingDisabled   master   5h38m   v1.21.4
    192.168.10.114   Ready,SchedulingDisabled   node     3d17h   v1.21.4
    192.168.10.115   Ready,SchedulingDisabled   node     3d17h   v1.21.4
    192.168.10.116   Ready                      node     5h28m   v1.21.0

Make 192.168.10.114 and 192.168.10.115 schedulable again so that they return to normal operation:

    kubectl uncordon 192.168.10.114
    kubectl uncordon 192.168.10.115

    # Check the scheduling state of the cluster nodes
    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# kubectl get node
    NAME             STATUS                     ROLES    AGE     VERSION
    192.168.10.111   Ready,SchedulingDisabled   master   3d17h   v1.21.4
    192.168.10.112   Ready,SchedulingDisabled   master   3d17h   v1.21.4
    192.168.10.113   Ready,SchedulingDisabled   master   5h39m   v1.21.4
    192.168.10.114   Ready                      node     3d17h   v1.21.4
    192.168.10.115   Ready                      node     3d17h   v1.21.4
    192.168.10.116   Ready                      node     5h29m   v1.21.0

Upgrade the last node, 192.168.10.116, in the same way:

    # Drain the node
    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# kubectl drain 192.168.10.116 --ignore-daemonsets --force

    # On 192.168.10.116: stop the services
    systemctl stop kubelet kube-proxy

    # On the deploy host: copy the binaries
    scp kubectl kube-proxy kubelet 192.168.10.116:/usr/local/bin/

    # On 192.168.10.116: start the services
    systemctl start kubelet kube-proxy

    # Make 192.168.10.116 schedulable again
    kubectl uncordon 192.168.10.116

    # Check the scheduling state of the cluster nodes
    root@k8s-harbor-deploy:/tools/kubernetes/server/bin# kubectl get node
    NAME             STATUS                     ROLES    AGE     VERSION
    192.168.10.111   Ready,SchedulingDisabled   master   3d17h   v1.21.4
    192.168.10.112   Ready,SchedulingDisabled   master   3d17h   v1.21.4
    192.168.10.113   Ready,SchedulingDisabled   master   5h45m   v1.21.4
    192.168.10.114   Ready                      node     3d17h   v1.21.4
    192.168.10.115   Ready                      node     3d17h   v1.21.4
    192.168.10.116   Ready                      node     5h35m   v1.21.4

The cluster upgrade is complete. Finally, uncomment all apiserver entries in /etc/kube-lb/conf/kube-lb.conf and restart the load balancer:

    cat >/etc/kube-lb/conf/kube-lb.conf<<'EOF'
    user root;
    worker_processes 1;

    error_log /etc/kube-lb/logs/error.log warn;

    events {
        worker_connections 3000;
    }

    stream {
        upstream backend {
            server 192.168.10.113:6443 max_fails=2 fail_timeout=3s;
            server 192.168.10.111:6443 max_fails=2 fail_timeout=3s;
            server 192.168.10.112:6443 max_fails=2 fail_timeout=3s;
        }

        server {
            listen 127.0.0.1:6443;
            proxy_connect_timeout 1s;
            proxy_pass backend;
        }
    }
    EOF

    # Restart the load balancer
    systemctl restart kube-lb.service

Final check:

    root@k8s-master1-etcd1-haproxy1:/usr/local/bin# kubectl get node -A -o wide
    NAME             STATUS                     ROLES    AGE     VERSION   INTERNAL-IP      EXTERNAL-IP   OS-IMAGE             KERNEL-VERSION      CONTAINER-RUNTIME
    192.168.10.111   Ready,SchedulingDisabled   master   3d17h   v1.21.4   192.168.10.111   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.112   Ready,SchedulingDisabled   master   3d17h   v1.21.4   192.168.10.112   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.113   Ready,SchedulingDisabled   master   5h47m   v1.21.4   192.168.10.113   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.114   Ready                      node     3d17h   v1.21.4   192.168.10.114   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.115   Ready                      node     3d17h   v1.21.4   192.168.10.115   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
    192.168.10.116   Ready                      node     5h36m   v1.21.4   192.168.10.116   <none>        Ubuntu 20.04.3 LTS   5.4.0-135-generic   docker://19.3.15
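The per-node sequence used in this section (drain, stop services, copy binaries, start services, uncordon) can be collected into one function. The sketch below is an illustration, not the author's tooling: the names `run` and `upgrade_node`, the `DRY_RUN` switch, and root ssh access from the deploy host to the nodes are all assumptions. With `DRY_RUN=1`, the default, it only prints the commands it would run.

```shell
# Directory holding the new binaries on the deploy host (path taken from this article).
BIN_DIR="${BIN_DIR:-/tools/kubernetes/server/bin}"
# DRY_RUN=1 prints the commands; DRY_RUN=0 executes them.
DRY_RUN="${DRY_RUN:-1}"

# Print or execute a single command, depending on DRY_RUN.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Upgrade one worker node; the && chain stops at the first failing step.
upgrade_node() {
  node="$1"
  run kubectl drain "$node" --ignore-daemonsets --force &&
  run ssh "root@$node" systemctl stop kubelet kube-proxy &&
  run scp "$BIN_DIR/kubectl" "$BIN_DIR/kube-proxy" "$BIN_DIR/kubelet" "root@$node:/usr/local/bin/" &&
  run ssh "root@$node" systemctl start kubelet kube-proxy &&
  run kubectl uncordon "$node"
}
```

Calling `upgrade_node 192.168.10.116` in dry-run mode lists the five steps for review. Stopping at the first failure matters most for the drain step, so that the services on a node are never stopped while business pods are still running on it.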

