Advanced TensorFlow, Part 4 (Loss Functions 2)

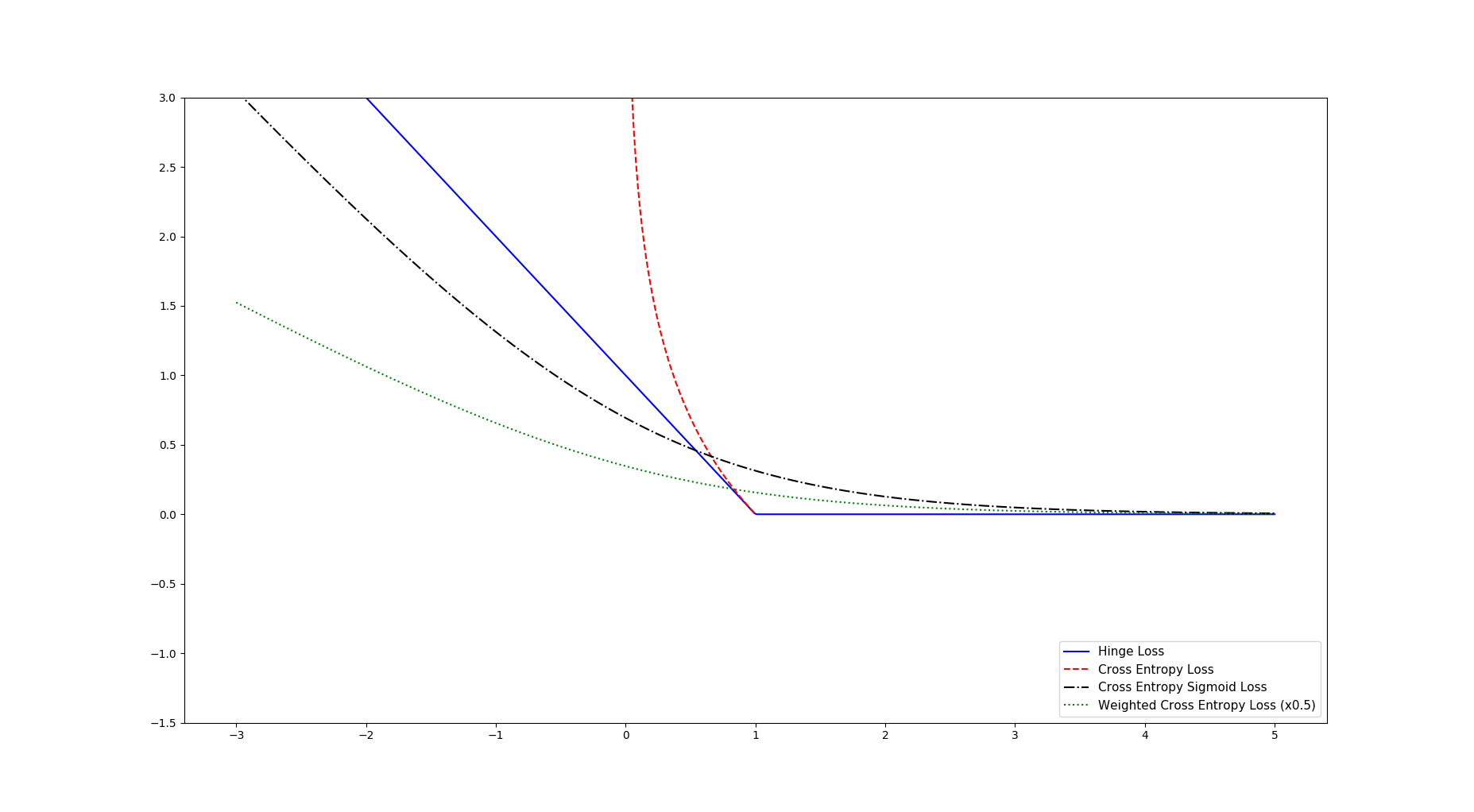

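Hinge loss is mainly used to evaluate support vector machines, but it is sometimes used for neural networks as well. The example below computes the loss between two target classes (-1 and 1). The code uses a target value of 1, so the loss shrinks as the prediction approaches 1 and is zero for any prediction of 1 or more (`x_vals`, `target`, `targets`, and `sess` are defined in the complete code further down):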

    # Use for predicting binary (-1, 1) classes
    # L = max(0, 1 - (pred * actual))
    hinge_y_vals = tf.maximum(0., 1. - tf.multiply(target, x_vals))
    hinge_y_out = sess.run(hinge_y_vals)
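To make the formula in the comment concrete, here is a minimal plain-Python sketch of the same computation for a single prediction. The function name `hinge_loss` is illustrative only and is not part of TensorFlow.

```python
def hinge_loss(pred, actual=1.0):
    """Hinge loss for a label in {-1, 1}: max(0, 1 - pred * actual)."""
    return max(0.0, 1.0 - pred * actual)


# With actual = 1 the loss falls linearly as pred rises
# and stays at 0 once pred reaches 1.
for pred in (-1.0, 0.0, 0.5, 1.0, 2.0):
    print(pred, hinge_loss(pred))
```

A prediction on the wrong side of the margin is penalized in proportion to its distance from it, while a prediction past the margin costs nothing.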

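Binary cross-entropy loss is sometimes also called logistic loss. When predicting one of two classes, 0 or 1, we want to measure the distance from the predicted value to the true class value (0 or 1); the prediction is usually a real number between 0 and 1.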

    # L = -actual * (log(pred)) - (1-actual)(log(1-pred))
    # Only defined for 0 < pred < 1; values of x_vals outside that range yield NaN
    xentropy_y_vals = - tf.multiply(target, tf.log(x_vals)) - tf.multiply((1. - target), tf.log(1. - x_vals))
    xentropy_y_out = sess.run(xentropy_y_vals)
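The same formula can be sketched in plain Python for a single prediction. This is an illustration under the assumption that the prediction is already a probability strictly between 0 and 1; the name `binary_cross_entropy` and the explicit range check are not part of the original code.

```python
import math


def binary_cross_entropy(pred, actual):
    """-actual*log(pred) - (1-actual)*log(1-pred), for pred strictly in (0, 1)."""
    if not 0.0 < pred < 1.0:
        raise ValueError("pred must lie strictly between 0 and 1")
    return -actual * math.log(pred) - (1.0 - actual) * math.log(1.0 - pred)


# With actual = 1, a confident correct prediction costs little
# and a confident wrong one costs a lot.
print(binary_cross_entropy(0.9, 1.0))
print(binary_cross_entropy(0.1, 1.0))
```

The range check makes the domain restriction explicit, which is also why only the part of the curve between 0 and 1 is meaningful for this loss.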

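Sigmoid cross-entropy loss is very similar to the previous loss function. The one difference is that it first transforms the `x_vals` values with the sigmoid function and then computes the cross-entropy loss: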

    # L = -actual * (log(sigmoid(pred))) - (1-actual)(log(1-sigmoid(pred)))
    # or, in the numerically stable form,
    # L = max(pred, 0) - pred * actual + log(1 + exp(-abs(pred)))
    xentropy_sigmoid_y_vals = tf.nn.sigmoid_cross_entropy_with_logits(logits=x_vals, labels=targets)
    xentropy_sigmoid_y_out = sess.run(xentropy_sigmoid_y_vals)
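The two formulas in the comments describe the same quantity. The plain-Python sketch below checks this for a few logits, where `pred` is the raw logit and `actual` is the label. The function names are illustrative, and this is not TensorFlow's implementation.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_xent_naive(logit, label):
    """Direct form: apply the sigmoid, then the binary cross-entropy."""
    p = sigmoid(logit)
    return -label * math.log(p) - (1.0 - label) * math.log(1.0 - p)


def sigmoid_xent_stable(logit, label):
    """Rearranged form: max(x, 0) - x*z + log(1 + exp(-|x|))."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))


for logit in (-3.0, 0.0, 2.0, 5.0):
    print(logit, sigmoid_xent_naive(logit, 1.0), sigmoid_xent_stable(logit, 1.0))
```

The rearranged form never takes the logarithm of a value that has rounded to 0, so it stays finite for logits of large magnitude where the direct form fails.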

Weighted cross-entropy loss is a weighted version of the sigmoid cross-entropy loss in which the positive target is weighted.

    # L = -actual * (log(sigmoid(pred))) * weights - (1-actual)(log(1-sigmoid(pred)))
    # or
    # L = (1 - actual) * pred + (1 + (weights - 1) * actual) * log(1 + exp(-pred))
    weight = tf.constant(0.5)  # weight applied to the positive target: 0.5
    # Note: newer TensorFlow releases name the `targets` argument `labels`
    xentropy_weighted_y_vals = tf.nn.weighted_cross_entropy_with_logits(logits=x_vals, targets=targets, pos_weight=weight)
    xentropy_weighted_y_out = sess.run(xentropy_weighted_y_vals)
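The effect of the positive weight is easier to see on a single logit. The plain-Python sketch below follows the second formula in the comments, reading `pred` as the logit and `actual` as the label. The function name and the stable evaluation of `log(1 + exp(-pred))` are assumptions of this sketch, not the library's code.

```python
import math


def weighted_sigmoid_xent(logit, label, pos_weight):
    """(1 - z) * x + (1 + (w - 1) * z) * log(1 + exp(-x))."""
    # log(1 + exp(-x)), evaluated without overflow for large |x|
    softplus_neg = max(-logit, 0.0) + math.log1p(math.exp(-abs(logit)))
    return (1.0 - label) * logit + (1.0 + (pos_weight - 1.0) * label) * softplus_neg


unweighted = weighted_sigmoid_xent(0.0, 1.0, 1.0)
halved = weighted_sigmoid_xent(0.0, 1.0, 0.5)
print(unweighted, halved)
```

With a positive label and a weight of 0.5, the loss is half of the unweighted sigmoid cross-entropy. For a label of 0 the weight has no effect.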

The loss functions above can be plotted with matplotlib.

Complete code:

    # Written against the TensorFlow 1.x API (tf.Session, tf.log)
    import matplotlib.pyplot as plt
    import tensorflow as tf
    from tensorflow.python.framework import ops

    ops.reset_default_graph()

    # Create graph
    sess = tf.Session()

    # Predictions to evaluate, and the target as a scalar and as a vector
    x_vals = tf.linspace(-3., 5., 500)
    target = tf.constant(1.)
    targets = tf.fill([500,], 1.)

    # Hinge loss
    # Use for predicting binary (-1, 1) classes
    # L = max(0, 1 - (pred * actual))
    hinge_y_vals = tf.maximum(0., 1. - tf.multiply(target, x_vals))
    hinge_y_out = sess.run(hinge_y_vals)

    # Cross entropy loss
    # L = -actual * (log(pred)) - (1-actual)(log(1-pred))
    # Only defined for 0 < pred < 1; values of x_vals outside that range yield NaN
    xentropy_y_vals = - tf.multiply(target, tf.log(x_vals)) - tf.multiply((1. - target), tf.log(1. - x_vals))
    xentropy_y_out = sess.run(xentropy_y_vals)

    # Sigmoid entropy loss
    # L = -actual * (log(sigmoid(pred))) - (1-actual)(log(1-sigmoid(pred)))
    # or
    # L = max(pred, 0) - pred * actual + log(1 + exp(-abs(pred)))
    xentropy_sigmoid_y_vals = tf.nn.sigmoid_cross_entropy_with_logits(logits=x_vals, labels=targets)
    xentropy_sigmoid_y_out = sess.run(xentropy_sigmoid_y_vals)

    # Weighted (sigmoid) cross entropy loss
    # L = -actual * (log(sigmoid(pred))) * weights - (1-actual)(log(1-sigmoid(pred)))
    # or
    # L = (1 - actual) * pred + (1 + (weights - 1) * actual) * log(1 + exp(-pred))
    weight = tf.constant(0.5)
    xentropy_weighted_y_vals = tf.nn.weighted_cross_entropy_with_logits(logits=x_vals, targets=targets, pos_weight=weight)
    xentropy_weighted_y_out = sess.run(xentropy_weighted_y_vals)

    # Plot the output
    x_array = sess.run(x_vals)
    plt.plot(x_array, hinge_y_out, 'b-', label='Hinge Loss')
    plt.plot(x_array, xentropy_y_out, 'r--', label='Cross Entropy Loss')
    plt.plot(x_array, xentropy_sigmoid_y_out, 'k-.', label='Cross Entropy Sigmoid Loss')
    plt.plot(x_array, xentropy_weighted_y_out, 'g:', label='Weighted Cross Entropy Loss (x0.5)')
    plt.ylim(-1.5, 3)
    #plt.xlim(-1, 3)
    plt.legend(loc='lower right', prop={'size': 11})
    plt.show()

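Softmax cross-entropy loss operates on unnormalized outputs and is used when each example belongs to exactly one target class. The softmax function converts the outputs into a probability distribution, and the loss is then computed against the true probability distribution: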

    # Softmax entropy loss
    # L = -sum(actual * log(softmax(pred)))
    unscaled_logits = tf.constant([[1., -3., 10.]])
    target_dist = tf.constant([[0.1, 0.02, 0.88]])
    softmax_xentropy = tf.nn.softmax_cross_entropy_with_logits(logits=unscaled_logits, labels=target_dist)
    print(sess.run(softmax_xentropy))

Output: [ 1.16012561]
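The value above can be reproduced by hand. The following plain-Python sketch uses the same logits and target distribution; the helper names `log_softmax` and `softmax_cross_entropy` are illustrative, and the max-subtraction is a common stability trick assumed here rather than taken from the original.

```python
import math


def log_softmax(logits):
    """Log of the softmax probabilities, shifted by the max for stability."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_sum for v in logits]


def softmax_cross_entropy(logits, target_dist):
    """-sum(actual * log(softmax(pred))) for one example."""
    return -sum(t * lp for t, lp in zip(target_dist, log_softmax(logits)))


loss = softmax_cross_entropy([1.0, -3.0, 10.0], [0.1, 0.02, 0.88])
print(loss)  # approximately 1.1601
```

Almost all of the softmax probability falls on the third class, so most of the loss comes from the 0.1 and 0.02 of target mass placed on the first two classes.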

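Sparse softmax cross-entropy loss is similar to the previous loss function, except that the target is given as the index of the true class, whereas softmax cross-entropy loss takes the target as a probability distribution: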

    # Sparse entropy loss
    # L = -log(softmax(pred)[actual]), where actual is the index of the true class
    unscaled_logits = tf.constant([[1., -3., 10.]])
    sparse_target_dist = tf.constant([2])
    sparse_xentropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=unscaled_logits, labels=sparse_target_dist)
    print(sess.run(sparse_xentropy))

Output: [ 0.00012564]
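A class index is shorthand for a one-hot probability distribution. The plain-Python sketch below computes the loss both ways for the same logits and confirms that they agree; the helper names are illustrative and not part of TensorFlow.

```python
import math


def log_softmax(logits):
    """Log of the softmax probabilities, shifted by the max for stability."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_sum for v in logits]


def sparse_softmax_cross_entropy(logits, class_index):
    """-log(softmax(pred)[class_index]) for one example."""
    return -log_softmax(logits)[class_index]


logits = [1.0, -3.0, 10.0]
sparse_loss = sparse_softmax_cross_entropy(logits, 2)

# The same target written as a one-hot distribution
one_hot = [0.0, 0.0, 1.0]
dense_loss = -sum(t * lp for t, lp in zip(one_hot, log_softmax(logits)))

print(sparse_loss, dense_loss)  # both approximately 0.000126
```

The loss is tiny here because the logit of the true class (10) dominates the other two.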
