Envoy Part 6: Cluster Management in Envoy

1) The cluster manager and service discovery mechanisms

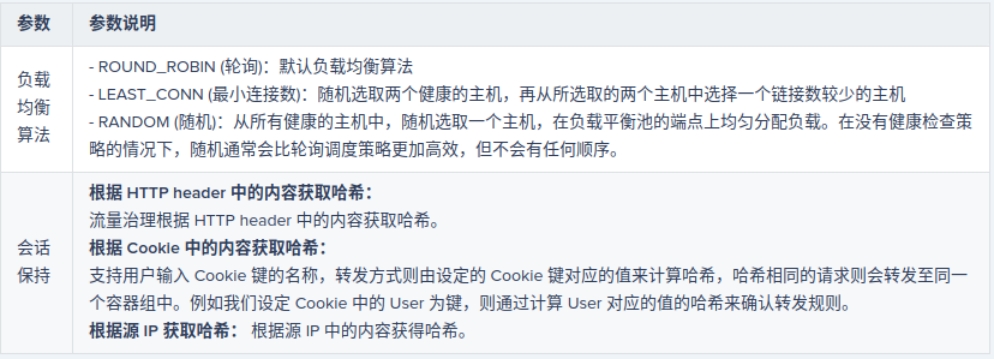

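3) Load balancing policies

(1) Distributed load balancing: load balancing algorithms (weighted round robin, weighted least request, ring hash, Maglev, random, and so on) and zone aware routing.
(2) Global load balancing: locality priority, locality weight, and load balancer subsets.

II. Cluster Manager

1) Envoy supports any number of upstream clusters configured at the same time and manages them through the Cluster Manager

(1) For each cluster, the Cluster Manager manages the health status of upstream hosts, the load balancing mechanism, the connection type, the applicable protocol, and so on;
(2) Cluster configuration can be generated in two ways: statically or dynamically (CDS);

2) Cluster warming

(1) A cluster goes through a warming phase when the server starts or when the cluster is initialized through CDS. During warming the cluster is unavailable until both of the following complete:
◆ the initial service discovery load (for example DNS resolution or an EDS update);
◆ the initial active health check pass, if active health checking is configured: Envoy sends a health check request to every discovered upstream host, and the cluster stays unavailable until that first pass succeeds.
(2) As a result, a newly added cluster is invisible to the rest of Envoy until its initialization completes; a cluster that is being updated is atomically swapped with the old one once it has warmed, so that no traffic-interrupting errors occur;

Configuring a single cluster

Overview of the v3 cluster configuration skeleton

    {
      "transport_socket_matches": [],
      "name": "...",
      "alt_stat_name": "...",
      "type": "...",
      "cluster_type": "{...}",
      "eds_cluster_config": "{...}",
      "connect_timeout": "{...}",
      "per_connection_buffer_limit_bytes": "{...}",
      "lb_policy": "...",
      "load_assignment": "{...}",
      "health_checks": [],
      "max_requests_per_connection": "{...}",
      "circuit_breakers": "{...}",
      "upstream_http_protocol_options": "{...}",
      "common_http_protocol_options": "{...}",
      "http_protocol_options": "{...}",
      "http2_protocol_options": "{...}",
      "typed_extension_protocol_options": "{...}",
      "dns_refresh_rate": "{...}",
      "dns_failure_refresh_rate": "{...}",
      "respect_dns_ttl": "...",
      "dns_lookup_family": "...",
      "dns_resolvers": [],
      "use_tcp_for_dns_lookups": "...",
      "outlier_detection": "{...}",
      "cleanup_interval": "{...}",
      "upstream_bind_config": "{...}",
      "lb_subset_config": "{...}",
      "ring_hash_lb_config": "{...}",
      "maglev_lb_config": "{...}",
      "original_dst_lb_config": "{...}",
      "least_request_lb_config": "{...}",
      "common_lb_config": "{...}",
      "transport_socket": "{...}",
      "metadata": "{...}",
      "protocol_selection": "...",
      "upstream_connection_options": "{...}",
      "close_connections_on_host_health_failure": "...",
      "ignore_health_on_host_removal": "...",
      "filters": [],
      "track_timeout_budgets": "...",
      "upstream_config": "{...}",
      "track_cluster_stats": "{...}",
      "preconnect_policy": "{...}",
      "connection_pool_per_downstream_connection": "..."
    }

1. When the cluster manager configures an upstream cluster, it needs to know how to resolve the cluster members; that resolution mechanism is service discovery

(1) Each cluster member is identified by an endpoint, which can be configured statically by the user or discovered dynamically through EDS or DNS:
◆ Static: static configuration, that is, the resolved network name of every upstream host (IP address/port or Unix domain socket file) is specified explicitly;
◆ Strict DNS: Envoy continuously and asynchronously resolves the specified DNS targets and treats every IP address returned in the DNS result as an available member of the upstream cluster;
◆ Logical DNS: the cluster only uses the first IP address returned when a new connection needs to be initiated, instead of strictly taking the DNS query result and assuming it makes up the whole upstream cluster; suited to large-scale web services that must be accessed through DNS;
◆ Original destination: an original destination cluster can be used when incoming connections are redirected to Envoy through an iptables REDIRECT or TPROXY target, or with the proxy protocol;
◆ Endpoint discovery service (EDS): a service discovery method that fetches cluster members from an xDS management server over a gRPC or REST-JSON API;
◆ Custom cluster: Envoy also supports a custom cluster discovery mechanism specified in the cluster_type field of the cluster configuration;

2. Envoy's service discovery does not rely on a fully consistent mechanism. Instead it assumes that hosts join and leave the mesh in an eventually consistent way, and it combines discovery with active health checking to determine the health of a cluster;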

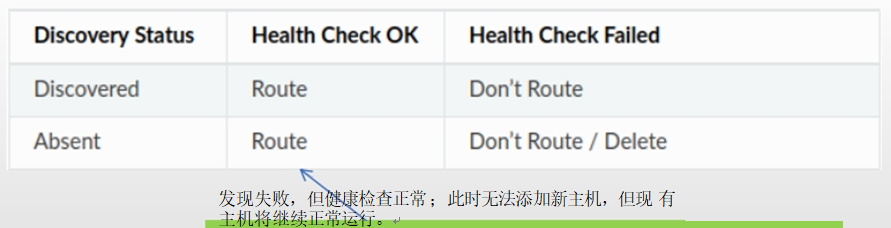

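(1) Health decisions are made in a fully distributed way, so network partitions are handled well;
(2) Once host health checking is enabled for a cluster, Envoy combines the discovery status and the health check result of a host to decide whether to route requests to it.

1. Fault handling mechanisms

1) Envoy provides a set of out-of-the-box fault handling mechanisms

Timeouts

A bounded number of retries, with support for variable retry delays

Active health checking and outlier detection

Connection pools

Circuit breakers

3) Combined with the traffic management mechanisms, users can tailor the failure recovery behavior for each service/version

2. Upstream health checking

1) Health checking ensures that the proxy does not forward requests from downstream clients to upstream hosts that are not working properly

2) Envoy supports two kinds of health checking, both defined per cluster

(1) Active health checking: Envoy periodically sends probes to the upstream hosts and judges their health from the responses. Envoy currently supports three types of active checks (the configuration syntax further down additionally lists a gRPC-specific check and a custom check):
◆ HTTP: sends an HTTP request to the upstream host;
◆ L3/L4: sends an L3/L4 request to the upstream host and judges health from the response, or from the connection status alone;
◆ Redis: sends a Redis PING to the upstream Redis server;
(2) Passive health checking: Envoy performs passive health checking through the outlier detection mechanism.
◆ Currently only the http router, tcp proxy, and redis proxy filters support outlier detection;
◆ Envoy supports the following outlier detection types:
  - Consecutive 5xx: covers all error types; errors generated by filters other than the http router are mapped internally to 5xx error codes;
  - Consecutive gateway failure: a subset of consecutive 5xx that only counts HTTP 502, 503, or 504 errors, that is, gateway failures;
  - Consecutive local origin failure: Envoy cannot connect to the upstream host, or communication with it is repeatedly interrupted;
  - Success rate: a threshold on the aggregated success rate data of the hosts;

Health checking must be defined explicitly for a cluster; otherwise every discovered upstream host is treated as available. Configuration syntax:

    clusters:
    - name: ...
      ...
      load_assignment:
        endpoints:
        - lb_endpoints:
          - endpoint:
              health_check_config:
                port_value: ...          # custom port used for health checking
      ...
      health_checks:
      - timeout: ...                     # check timeout
        interval: ...                    # interval between checks
        initial_jitter: ...              # jitter added to the first check, in milliseconds
        interval_jitter: ...             # jitter added to every check interval, in milliseconds
        unhealthy_threshold: ...         # number of failed checks required before a host is marked unhealthy
        healthy_threshold: ...           # number of successful checks required before a host is marked healthy; at startup a single successful check is enough
        http_health_check: {...}         # HTTP check; exactly one of this and the following three check types must be set
        tcp_health_check: {...}          # TCP check
        grpc_health_check: {...}         # gRPC-specific check
        custom_health_check: {...}       # custom check
        reuse_connection: ...            # boolean, whether to reuse the connection between checks; default true
        unhealthy_interval: ...          # check interval for endpoints marked "unhealthy"; reverts to the normal interval once the endpoint is "healthy" again
        unhealthy_edge_interval: ...     # check interval right after an endpoint is marked "unhealthy"; afterwards unhealthy_interval applies
        healthy_edge_interval: ...       # check interval right after an endpoint is marked "healthy"; afterwards interval applies
        tls_options: {...}               # TLS-related settings
        transport_socket_match_criteria: {...}   # optional key/value pairs used to match a transport socket from the cluster's transport_socket_matches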
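The interaction between `unhealthy_threshold` and `healthy_threshold` can be sketched as a small state machine. This is a simplified model for illustration, not Envoy's implementation: the class name `HostHealth` and its fields are hypothetical, and the model assumes that the very first check result sets the initial state directly.

```python
from dataclasses import dataclass


@dataclass
class HostHealth:
    """Hypothetical model of one upstream host under active health checking."""
    unhealthy_threshold: int = 2
    healthy_threshold: int = 2
    healthy: bool = False
    first_check_done: bool = False
    _streak: int = 0  # consecutive results that contradict the current state

    def observe(self, check_passed: bool) -> bool:
        """Feed one check result and return the resulting health state."""
        if not self.first_check_done:
            # Assumption: the first result decides the initial state at once,
            # mirroring "a single successful check is enough at startup".
            self.first_check_done = True
            self.healthy = check_passed
            return self.healthy
        if check_passed == self.healthy:
            self._streak = 0  # result agrees with current state, reset streak
            return self.healthy
        self._streak += 1
        needed = self.healthy_threshold if check_passed else self.unhealthy_threshold
        if self._streak >= needed:
            self.healthy = check_passed
            self._streak = 0
        return self.healthy


host = HostHealth(unhealthy_threshold=2, healthy_threshold=2)
states = [host.observe(result) for result in [True, False, False, True, True]]
print(states)
```

With both thresholds set to 2, a single failed check does not remove the host, and a single successful check does not bring it back.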

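TCP health checks

    clusters:
    - name: local_service
      connect_timeout: 0.25s
      lb_policy: ROUND_ROBIN
      type: EDS
      eds_cluster_config:
        eds_config:
          api_config_source:
            api_type: GRPC
            grpc_services:
            - envoy_grpc:
                cluster_name: xds_cluster
      health_checks:
      - timeout: 5s
        interval: 10s
        unhealthy_threshold: 2
        healthy_threshold: 2
        tcp_health_check: {}

A TCP check with a non-empty payload can use send and receive to specify the request payload and the content expected (as a fuzzy match) in the response, respectively

    {
      "send": "{...}",
      "receive": []
    }

HTTP health checks can customize the path, host, and expected response codes, and can modify (add/remove) request headers when necessary.

The configuration syntax is as follows:

    health_checks:
    - ...
      http_health_check:
        host: ...                        # Host header used for the check; empty by default, in which case the cluster name is used
        path: ...                        # path used for the check, for example /healthz; required
        service_name_matcher: ...        # optional matcher used to validate the service name of the checked cluster
        request_headers_to_add: []       # custom headers to add to the check request
        request_headers_to_remove: []    # headers to remove from the check request
        expected_statuses: []            # list of expected response codes

Configuration example

    clusters:
    - name: local_service
      connect_timeout: 0.25s
      lb_policy: ROUND_ROBIN
      type: EDS
      eds_cluster_config:
        eds_config:
          api_config_source:
            api_type: GRPC
            grpc_services:
            - envoy_grpc:
                cluster_name: xds_cluster
      health_checks:
      - timeout: 5s
        interval: 10s
        unhealthy_threshold: 2
        healthy_threshold: 2
        http_health_check:
          host: ...                      # empty by default, in which case the cluster name is used
          path: ...                      # path to check, for example /healthz
          expected_statuses: ...         # expected response codes; default 200

1) Outlier ejection workflow

A host is determined to be an outlier -> if the host has not been ejected yet and the number of ejected hosts is below the allowed threshold, the host is ejected -> the host stays ejected for a certain period -> once the period has elapsed the host is automatically brought back into service

2) Outlier detection is defined in the cluster context through the outlier_detection field

    clusters:
    - name: ...
      ...
      outlier_detection:
        consecutive_5xx: ...                           # number of consecutive 5xx responses (or local origin errors) before a consecutive-5xx ejection occurs; default 5
        interval: ...                                  # time between ejection analysis sweeps; default 10000ms (10s)
        base_ejection_time: ...                        # base ejection duration; the actual duration is the base duration multiplied by the number of times the host has been ejected; default 30000ms (30s)
        max_ejection_percent: ...                      # maximum percentage of hosts in the upstream cluster that may be ejected by outlier detection; default 10%; at least one host is ejected regardless
        enforcing_consecutive_5xx: ...                 # percent chance that a host is actually ejected when detected through consecutive 5xx; can be used to disable ejection or ramp it up slowly; default 100
        enforcing_success_rate: ...                    # percent chance that a host is actually ejected when detected through success rate statistics; can be used to disable ejection or ramp it up slowly; default 100
        success_rate_minimum_hosts: ...                # minimum number of hosts in the cluster required to run success rate detection; default 5
        success_rate_request_volume: ...               # minimum number of total requests that must be collected in one interval; default 100
        success_rate_stdev_factor: ...                 # factor used to determine the ejection threshold for success rate outliers; threshold = mean - (factor * standard deviation of the success rate); the configured value is divided by 1000 to obtain the factor, so use 1300 for a factor of 1.3
        consecutive_gateway_failure: ...               # number of consecutive gateway failures before a host is ejected; default 5
        enforcing_consecutive_gateway_failure: ...     # percent chance that a host is actually ejected when detected through consecutive gateway failures; default 0
        split_external_local_origin_errors: ...        # whether to distinguish locally originated failures from external ones; default false; the next three settings only take effect when this is true
        consecutive_local_origin_failure: ...          # number of consecutive locally originated failures before a host is ejected; default 5
        enforcing_consecutive_local_origin_failure: ...  # percent chance that a host is actually ejected when detected through consecutive local origin failures; default 100
        enforcing_local_origin_success_rate: ...       # percent chance that a host is actually ejected when detected through the success rate of locally originated failures; default 100
        failure_percentage_threshold: {...}            # failure percentage used by failure-percentage-based detection; a host whose failure percentage is greater than or equal to this value is ejected; default 85
        enforcing_failure_percentage: {...}            # percent chance that a host is actually ejected when detected through failure percentage statistics; can be used to disable ejection or ramp it up slowly; default 0
        enforcing_failure_percentage_local_origin: {...}  # percent chance that a host is actually ejected when detected through local-origin failure percentage statistics; default 0
        failure_percentage_minimum_hosts: {...}        # minimum number of hosts in the cluster required for failure-percentage-based ejection; no such ejection happens when the cluster has fewer hosts; default 5
        failure_percentage_request_volume: {...}       # minimum number of total requests that must be collected for a host in one interval (the interval defined above) for failure-percentage-based ejection; below this volume the host is not considered; default 50
        max_ejection_time: {...}                       # maximum ejection duration; if unspecified, the larger of the default (300000ms or 300s) and base_ejection_time is used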
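A minimal sketch of the two pieces of arithmetic described in the comments above: the ejection duration grows with the number of ejections and is capped by `max_ejection_time`, and `max_ejection_percent` still lets at least one host be ejected. The function names are hypothetical, and rounding the percentage down is an assumption made for this illustration.

```python
def ejection_seconds(base_ejection_time: float, times_ejected: int,
                     max_ejection_time: float = 300.0) -> float:
    """Ejection duration = base duration * number of ejections, capped."""
    # The cap is the larger of max_ejection_time and base_ejection_time.
    cap = max(max_ejection_time, base_ejection_time)
    return min(base_ejection_time * times_ejected, cap)


def max_ejectable_hosts(cluster_size: int, max_ejection_percent: int = 10) -> int:
    """Hosts that may be ejected at once; never fewer than one."""
    # Assumption: the percentage is rounded down before the floor of 1 applies.
    return max(1, cluster_size * max_ejection_percent // 100)


for n in (1, 2, 3, 20):
    print(n, ejection_seconds(30.0, n))
print(max_ejectable_hosts(3), max_ejectable_hosts(50))
```

For a three-host cluster with `max_ejection_percent: 10`, one host can still be ejected, which matches the "at least one host" rule stated above.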

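1) Like active health checking, outlier detection is configured at the cluster level. The following example ejects a host for 30 seconds after it returns 3 consecutive 5xx errors:

    consecutive_5xx: "3"
    base_ejection_time: "30s"

2) When enabling outlier detection on a new service, start with a less strict rule set, so that only hosts with gateway connection errors (HTTP 503) are ejected, and only 10% of the time

    consecutive_gateway_failure: "3"
    base_ejection_time: "30s"
    enforcing_consecutive_gateway_failure: "10"

3) High-traffic, stable services, on the other hand, can use statistics to eject hosts that misbehave frequently. The following example ejects any endpoint whose success rate is more than 1 standard deviation below the cluster average; statistics are evaluated every 10 seconds, and the algorithm does not run for any host that received fewer than 500 requests within those 10 seconds

    interval: "10s"
    base_ejection_time: "30s"
    success_rate_minimum_hosts: "10"
    success_rate_request_volume: "500"
    success_rate_stdev_factor: "1000"   # divided by 1000 to get a double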
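The success rate rule can be sketched as follows, using the formula given earlier (threshold = mean - factor * standard deviation, with the configured factor divided by 1000). The function name and the input shape are hypothetical, and the use of the population standard deviation is an assumption of this sketch rather than a statement about Envoy internals.

```python
from statistics import mean, pstdev


def success_rate_outliers(stats, stdev_factor, minimum_hosts, request_volume):
    """Return hosts whose success rate falls below mean - factor * stdev.

    stats maps host name -> (successful_requests, total_requests) collected
    during one detection interval.
    """
    # Hosts with too few requests in the interval are not evaluated.
    eligible = {
        host: 100.0 * ok / total
        for host, (ok, total) in stats.items()
        if total >= request_volume
    }
    # Detection is skipped when too few hosts have enough traffic.
    if len(eligible) < minimum_hosts:
        return []
    factor = stdev_factor / 1000.0  # e.g. 1000 -> 1.0, 1300 -> 1.3
    rates = list(eligible.values())
    threshold = mean(rates) - factor * pstdev(rates)
    return sorted(host for host, rate in eligible.items() if rate < threshold)


interval_stats = {
    "a": (990, 1000), "b": (980, 1000), "c": (990, 1000),
    "d": (1000, 1000), "e": (600, 1000), "f": (10, 20),
}
print(success_rate_outliers(interval_stats, stdev_factor=1000,
                            minimum_hosts=5, request_volume=500))
```

In this made-up data set host `f` is ignored because it saw too few requests, and host `e` is the only one far enough below the mean to be reported.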

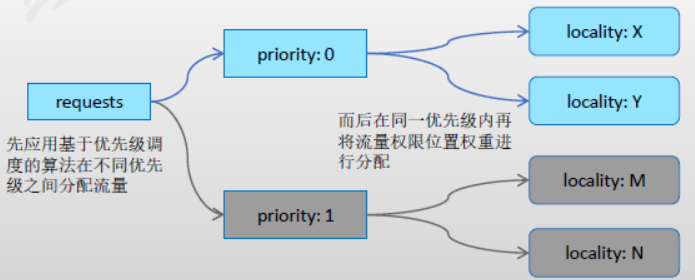

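1) Envoy provides several load balancing strategies, which fall broadly into two categories: global load balancing and distributed load balancing

(1) Distributed load balancing: Envoy itself decides how to distribute load across the endpoints, based on the location (zone awareness) and health of the upstream hosts:
◆ active health checking
◆ zone aware routing
◆ load balancing algorithms
(2) Global load balancing: a single component with global authority makes the load decisions centrally. Envoy's control plane is one such component; it can adjust the load applied to each endpoint by specifying various parameters:
◆ priority
◆ locality weight
◆ endpoint weight
◆ endpoint health status

3) Load-balancing-related configuration parameters in a Cluster

    ...
    load_assignment: {...}
      cluster_name: ...
      endpoints: []                      # list of LocalityLbEndpoints; each item consists mainly of a locality, an endpoint list, a weight, and a priority
      - locality: {...}                  # locality definition
          region: ...
          zone: ...
          sub_zone: ...
        lb_endpoints: []                 # endpoint list
        - endpoint: {...}                # endpoint definition
            address: {...}               # endpoint address
            health_check_config: {...}   # health-check-related settings for this endpoint
          load_balancing_weight: ...     # load balancing weight of this endpoint; optional
          metadata: {...}                # metadata that gives filters extra information based on the matched listener, filter chain, route, and endpoint; commonly used to carry service configuration or to assist load balancing
          health_status: ...             # when the endpoint is discovered through EDS, this administratively sets its health status; valid values are UNKNOWN, HEALTHY, UNHEALTHY, DRAINING, TIMEOUT, and DEGRADED
        load_balancing_weight: {...}     # weight
        priority: ...                    # priority
      policy: {...}                      # load balancing policy settings
        drop_overloads: []               # overload protection: how overload traffic is dropped
        overprovisioning_factor: ...     # integer, the overprovisioning factor as a percentage; default 140, that is 1.4
        endpoint_stale_after: ...        # staleness timeout; if no new assignment is received before it expires, the endpoints are considered stale and marked unhealthy; the default 0 means they never go stale
    lb_subset_config: {...}
    ring_hash_lb_config: {...}
    original_dst_lb_config: {...}
    least_request_lb_config: {...}
    common_lb_config: {...}
      healthy_panic_threshold: ...       # panic threshold; default 50%
      zone_aware_lb_config: {...}        # zone aware routing settings
      locality_weighted_lb_config: {...} # locality weighted load balancing settings
      ignore_new_hosts_until_first_hc: ...   # whether newly added hosts are excluded from load balancing until they have passed their first health check

1) The Cluster Manager uses a load balancing policy to dispatch downstream requests to a selected upstream host. The following algorithms are supported

(1) Weighted round robin: algorithm name ROUND_ROBIN;
(2) Weighted least request: algorithm name LEAST_REQUEST;
(3) Ring hash: algorithm name RING_HASH; it works like a consistent hashing algorithm;
(4) Maglev: similar to ring hash, but the table size is fixed at 65537 and the entries mapped from the hosts must fill the whole table; regardless of the configured host and locality weights, the algorithm tries to ensure that every host is mapped at least once; algorithm name MAGLEV;
(5) Random: algorithm name RANDOM; when no health checking policy is configured, random load balancing generally performs better than round robin;

2. Load balancing algorithm: weighted least request

1) The weighted least request algorithm behaves differently depending on whether the hosts have the same or different weights

(1) All hosts have the same weight
◆ This is an O(1) scheduling algorithm: it randomly selects N available hosts (2 by default, configurable) and picks the one with the fewest active requests;
◆ Research has shown that this approach, known as P2C (power of two choices), performs nearly as well as an O(N) full scan; it ensures that the endpoint with the highest number of active requests in the cluster never receives a new request until its count is less than or equal to that of the other hosts;
(2) The hosts do not all have the same weight, that is, two or more hosts in the cluster have different weights
◆ The scheduler switches to a weighted round robin mode in which the weights are adjusted dynamically according to each host's request load at selection time, by dividing the weight by the current active request count; for example, a host with weight 2 and an active request count of 4 has a synthetic weight of 2/4 = 0.5;
◆ This algorithm balances well at steady state but may not adapt quickly to uneven load;
◆ Unlike P2C, a host never truly drains, although it receives fewer requests over time.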
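Both modes of the least request policy can be illustrated with a few lines of Python. This is a sketch under stated assumptions, not Envoy's code: the function names are hypothetical, candidates are sampled without replacement, and an idle host is treated as having one active request so that the division is defined.

```python
import random


def pick_p2c(active_requests, choice_count=2, rng=random):
    """Equal weights: sample choice_count hosts, keep the least loaded one."""
    hosts = sorted(active_requests)
    # Assumption: sampling without replacement among the available hosts.
    candidates = rng.sample(hosts, k=min(choice_count, len(hosts)))
    return min(candidates, key=lambda host: active_requests[host])


def synthetic_weight(weight, active_requests):
    """Unequal weights: the configured weight divided by the active requests."""
    # Assumption: an idle host counts as one request to avoid dividing by zero.
    return weight / max(active_requests, 1)


load = {"a": 10, "b": 1, "c": 5}
picks = {pick_p2c(load) for _ in range(200)}
print(sorted(picks))            # the busiest host "a" never appears
print(synthetic_weight(2, 4))   # the weight 2, four active requests example
```

Because two distinct hosts are compared on every pick, the host with the most active requests can never win, which is the property described for P2C above.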

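2) Configuration parameters for LEAST_REQUEST

    least_request_lb_config:
      choice_count: "{...}"    # how many healthy hosts are randomly sampled and compared by active request count

1) Envoy uses a ring/modulo algorithm to apply consistent hashing to the upstream hosts of a cluster, but this only takes effect when a corresponding hash policy is defined in the route.

(1) Each host is mapped onto a ring by hashing its address;
(2) Each request is then mapped onto the ring by hashing some property of the request, and routing completes by walking clockwise to the nearest host;
(3) This technique is also commonly known as "Ketama" hashing and, like all hash-based load balancers, it is only effective when protocol routing specifies a value to hash on;

2) To avoid a skewed ring, each host is hashed and placed on the ring a number of times proportional to its weight.

Best practice is to set the minimum_ring_size and maximum_ring_size parameters explicitly, and to monitor the min_hashes_per_host and max_hashes_per_host metrics to make sure requests are well balanced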
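The ring construction and lookup described above can be sketched in a few lines. This is an illustration only: the helper names are hypothetical, MD5 from the standard library stands in for the hash functions Envoy actually offers, and the way ring entries are split between hosts is a simple proportional rule chosen for the sketch.

```python
import bisect
import hashlib


def _hash(key: str) -> int:
    """64-bit hash of a string (MD5 is a stand-in chosen for this sketch)."""
    return int.from_bytes(hashlib.md5(key.encode()).digest()[:8], "big")


def build_ring(weights, ring_size=1024):
    """Place each host on the ring in proportion to its weight."""
    total = sum(weights.values())
    ring = []
    for host, weight in weights.items():
        entries = max(1, ring_size * weight // total)
        for i in range(entries):
            ring.append((_hash(f"{host}_{i}"), host))
    ring.sort()
    return ring


def pick_host(ring, request_key: str) -> str:
    """Walk clockwise from the request's hash to the next host entry."""
    points = [point for point, _ in ring]
    index = bisect.bisect_left(points, _hash(request_key)) % len(ring)
    return ring[index][1]


ring = build_ring({"10.0.0.1:80": 1, "10.0.0.2:80": 3})
print(len(ring), pick_host(ring, "client-192.168.1.7"))
```

The same request key always lands on the same host as long as the ring is unchanged, and a larger ring brings the share of entries per host closer to the configured weight ratio.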

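3) Configuration parameters

    ring_hash_lb_config:
      "minimum_ring_size": "{...}",   # minimum ring size; the larger the ring, the closer the distribution is to the weight ratio; default 1024, maximum 8M
      "hash_function": "...",         # hash function; XX_HASH and MURMUR_HASH_2 are supported, the former is the default
      "maximum_ring_size": "{...}"    # maximum ring size; default 8M; larger values consume more compute resources

1) route.RouteAction.HashPolicy

(1) A list of hash policies used by the consistent hashing algorithms; it specifies which properties of the request are hashed and mapped onto the hash ring to complete routing;
(2) Each hash policy in the list is evaluated individually and the combined result is used to route the request. The combination is deterministic, so that the same list of hash policies always produces the same hash;
(3) A hash policy cannot produce a hash when the part of the request it inspects is absent. If (and only if) none of the configured hash policies produces a hash, no hash is generated for the route, in which case the behavior is the same as if no hash policy were specified (that is, the ring hash load balancer picks a random backend);
(4) If a hash policy has its "terminal" attribute set to true and a hash has already been generated, the hashing algorithm returns immediately and ignores the rest of the hash policy list;

2) Route hash policies are defined in the route configuration

    route_config:
      ...
      virtual_hosts:
      - ...
        routes:
        - match: ...
          route:
            ...
            hash_policy: []               # list of hash policies; each item may set only one of header, cookie, or connection_properties
            - header: {...}
                header_name: ...          # name of the header to hash
              cookie: {...}
                name: ...                 # name of the cookie whose value is hashed; required
                ttl: ...                  # lifetime; if no cookie exists, one with this TTL is generated automatically; if the TTL is present and zero, the generated cookie is a session cookie
                path: ...                 # cookie path
              connection_properties: {...}
                source_ip: ...            # boolean, whether to hash the source IP address
              terminal: ...               # short-circuit flag: once this policy produces a hash, later policies in the list are not considered

The following example hashes the source IP address and the User-Agent header of the request:

    static_resources:
      listeners:
      - address: ...
        filter_chains:
        - filters:
          - name: envoy.http_connection_manager
            ...
                  route:
                    cluster: webcluster1
                    hash_policy:
                    - connection_properties:
                        source_ip: true
                    - header:
                        header_name: User-Agent
            http_filters:
            - name: envoy.router
      clusters:
      - name: webcluster1
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: RING_HASH
        ring_hash_lb_config:
          maximum_ring_size: 1048576
          minimum_ring_size: 512
        load_assignment:
          ...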

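1) Maglev is a special form of the ring hash algorithm that uses a fixed table size of 65537;

(1) The table building algorithm places each host in the table in proportion to its weight until the table is completely filled; for example, if host A has a weight of 1 and host B a weight of 2, host A gets 21,846 entries and host B gets 43,691 entries (65,537 in total);
(2) The algorithm tries to place every host in the table at least once regardless of the configured host and locality weights, so in some extreme cases the actual proportions may differ from the configured weights;
(3) Best practice is to monitor the min_entries_per_host and max_entries_per_host metrics to make sure no host is misconfigured;

2) Maglev can replace ring hash anywhere consistent hashing is needed; like ring hash, Maglev is only effective when protocol routing specifies a value to hash on;

(1) In general, compared with the ring hash (Ketama) algorithm, Maglev has significantly faster table build times and host selection times;
(2) It is slightly less stable than ring hash when the set of upstream hosts changes;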

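VII. Endpoint priority and priority-based scheduling

(1) locality: identified hierarchically, from largest to smallest, by region, zone, and sub_zone;
(2) load_balancing_weight: optional; assigns a weight to each priority/region/zone/sub_zone, with a minimum value of 1; in general, the weight of a locality divided by the sum of the weights of all localities at the same priority gives the share of traffic for that locality; this setting only takes effect when locality weighted load balancing is enabled;
(3) priority: the priority of this LocalityLbEndpoints group; defaults to 0, the highest priority;

3) Note that several LbEndpoints groups can be configured for the same locality, but this is usually only needed when the groups require different load balancing weights or different priorities;

    # endpoint.LocalityLbEndpoints
    {
      "locality": "{...}",
      "lb_endpoints": [],
      "load_balancing_weight": "{...}",
      "priority": "..."     # 0 is the highest priority; the valid range is [0, N], but priorities must be used in order without skipping any; default 0
    }

1) When scheduling, Envoy sends traffic only to the group of endpoints (LocalityLbEndpoints) with the highest priority

(1) Traffic shifts proportionally to the next priority only when endpoints at the highest priority become unhealthy; for example, when 20% of the endpoints at a priority are unhealthy, 20% of the traffic moves to the next priority;
(2) Overprovisioning factor: an overprovisioning factor can be set for a group of endpoints so that a larger share of the traffic stays with the group even when some of its endpoints have failed.
◆ Formula: shifted traffic = 100% - healthy endpoint ratio * overprovisioning factor; so with a factor of 1.4 and a 20% failure ratio, all traffic stays in the current group (80% * 1.4 = 112%, capped at 100%); only when the healthy ratio drops below roughly 72% (1/1.4 is about 71.4%) does some traffic move to the next priority;
◆ The capacity of a priority level to handle traffic at a given moment is also called its health score (healthy host ratio * overprovisioning factor, capped at 100%);
(3) If the sum of the health scores of all priorities (also called the normalized total health) is less than 100, Envoy considers that there are not enough healthy endpoints to handle all pending traffic; in that case 100% of the traffic is redistributed across the levels in proportion to their health scores; for example, two groups with health scores {20, 30} (normalized total health 50) are normalized to a load split of 40% and 60%;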
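The health score and normalization rules above can be checked with a short calculation. This is a sketch, assuming that each priority's load is its health score scaled by the normalized total health and limited by whatever traffic the higher priorities have not already taken; the function name is hypothetical.

```python
def priority_loads(healthy_percent, overprovisioning_factor=1.4):
    """Split 100% of traffic across priorities, highest priority first.

    healthy_percent lists the percentage of healthy endpoints per priority,
    index 0 being the highest priority.
    """
    # Health score: healthy ratio * overprovisioning factor, capped at 100.
    health = [min(100.0, p * overprovisioning_factor) for p in healthy_percent]
    normalized_total = min(100.0, sum(health))
    if normalized_total == 0:
        return [0.0] * len(health)
    loads, remaining = [], 100.0
    for score in health:
        share = min(remaining, score * 100.0 / normalized_total)
        loads.append(share)
        remaining -= share
    return loads


print(priority_loads([80, 100]))                   # 20% failed, factor 1.4
print([round(x, 6) for x in priority_loads([50, 100])])
print(priority_loads([20, 30], overprovisioning_factor=1.0))
```

The last call feeds health scores of 20 and 30 directly (factor 1.0) and reproduces the 40%/60% split from the text.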

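2) Priority scheduling also supports a DEGRADED state for endpoints within the same priority; it works much like the way traffic is split between endpoints of two different priorities

(1) When the healthy ratio of non-degraded endpoints * overprovisioning factor is greater than or equal to 100%, degraded endpoints receive no traffic;
(2) When the healthy ratio of non-degraded endpoints * overprovisioning factor is less than 100%, degraded endpoints receive the share of traffic that makes up the difference to 100%;

1) During scheduling Envoy only considers the available (healthy or degraded) endpoints in the upstream host list. When the percentage of available endpoints becomes too low, however, Envoy ignores the health status of all endpoints and schedules traffic to all of them; this percentage is the panic threshold;

(1) The default panic threshold is 50%;
(2) The panic threshold prevents host failures from cascading as traffic grows;

When the number of available endpoints in a given priority drops, Envoy shifts some traffic to endpoints at lower priorities;

◆ If endpoints able to carry all of the traffic are found at the lower priorities, the panic threshold is ignored;

◆ Otherwise, Envoy distributes traffic across all priorities, and when the availability of a given priority is below the panic threshold, that priority's share of traffic is spread across all of its hosts;

    # Cluster.CommonLbConfig
    {
      "healthy_panic_threshold": "{...}",    # percentage value that defines the panic threshold; default 50%
      "zone_aware_lb_config": "{...}",
      "locality_weighted_lb_config": "{...}",
      "update_merge_window": "{...}",
      "ignore_new_hosts_until_first_hc": "..."
    }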
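A single-priority view of the panic threshold can be sketched as below. The function name and the input shape are hypothetical, and the sketch deliberately ignores the multi-priority interaction described in the bullets above.

```python
def hosts_for_balancing(hosts, panic_threshold=50.0):
    """Return the hosts the load balancer may choose from.

    hosts maps host name -> True when the host is available
    (healthy or degraded) and False otherwise.
    """
    if not hosts:
        return []
    available = [name for name, ok in hosts.items() if ok]
    available_percent = 100.0 * len(available) / len(hosts)
    if available_percent < panic_threshold:
        # Panic mode: health status is ignored and every host is eligible.
        return sorted(hosts)
    return sorted(available)


print(hosts_for_balancing({"a": True, "b": True, "c": False, "d": False}))
print(hosts_for_balancing({"a": True, "b": False, "c": False, "d": False}))
```

With half of the hosts available the threshold of 50% is not crossed, so only the available hosts are used; with a quarter available, all four hosts become eligible again.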

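The following example defines two endpoint groups with different priorities, each in a different locality

    clusters:
    - name: webcluster1
      connect_timeout: 0.25s
      type: STRICT_DNS
      lb_policy: ROUND_ROBIN
      load_assignment:
        cluster_name: webcluster1
        endpoints:
        - locality:
            region: cn-north-1
          priority: 0
          lb_endpoints:
          - endpoint:
              address:
                socket_address:
                  address: webservice1
                  port_value: 80
        - locality:
            region: cn-north-2
          priority: 1
          lb_endpoints:
          - endpoint:
              address:
                socket_address:
                  address: webservice2
                  port_value: 80
      health_checks:
      - ...

1. Locality weighted load balancing

1) Locality weighted load balancing explicitly assigns a weight to a specific Locality and its LbEndpoints group, and distributes traffic across the Localities according to the ratio of those weights;

When all Endpoints of all Localities are available, weighted round robin is performed across the Localities according to their weights;

For example, if the regions cn-north-1 and cn-north-2 have weights 1 and 2 and all endpoints in both regions are healthy, traffic is split 1:2, that is, roughly 33% and 67%;

How to enable locality weighted load balancing and define locality weights

    clusters:
    - name: ...
      ...
      common_lb_config:
        locality_weighted_lb_config: {}    # enables locality weighted load balancing; it has no sub-parameters
      ...
      load_assignment:
        endpoints:
        - locality: "{...}"
          lb_endpoints: []
          load_balancing_weight: "{}"      # integer, the weight of this locality or priority; minimum 1
          priority: "..."

2) When some Endpoints of a Locality are unavailable, Envoy dynamically adjusts the weight of that Locality in proportion;

Locality weighted load balancing also honors the overprovisioning factor (set in the policy of the load assignment), which defaults to 1.4;

The effective weight of a Locality (call it X) is therefore computed as follows:

    health(L_X) = 140 * healthy_X_backends / total_X_backends
    effective_weight(L_X) = locality_weight_X * min(100, health(L_X))
    load to L_X = effective_weight(L_X) / Σ_c(effective_weight(L_c))

For example, suppose localities X and Y have weights 1 and 2. When only 50% of the endpoints in Y are healthy, its weight is adjusted to 2 × (1.4 × 0.5) = 1.4, so the traffic split becomes 1:1.4;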
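The three formulas above translate directly into code. The sketch below reproduces the X/Y example; the function name and the tuple layout are hypothetical.

```python
def locality_loads(localities, overprovisioning_factor=140):
    """Fraction of traffic per locality at one priority level.

    localities maps name -> (locality_weight, healthy_backends, total_backends).
    """
    effective = {}
    for name, (weight, healthy, total) in localities.items():
        health = overprovisioning_factor * healthy / total
        effective[name] = weight * min(100.0, health)
    weight_sum = sum(effective.values())
    return {name: value / weight_sum for name, value in effective.items()}


all_healthy = locality_loads({"X": (1, 2, 2), "Y": (2, 2, 2)})
half_of_y_down = locality_loads({"X": (1, 2, 2), "Y": (2, 1, 2)})
print({k: round(v, 4) for k, v in all_healthy.items()})
print({k: round(v, 4) for k, v in half_of_y_down.items()})
print(round(half_of_y_down["Y"] / half_of_y_down["X"], 6))
```

The ratio printed on the last line is the 1:1.4 split from the example: X keeps an effective weight of 100 while Y drops from 200 to 140.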

With priorities and locality weights combined, a host is selected in three steps:
(1) select a priority;
(2) select a locality within the selected priority;
(3) select an Endpoint within the selected locality;

    clusters:
    - name: webcluster1
      connect_timeout: 0.25s
      type: STRICT_DNS
      lb_policy: ROUND_ROBIN
      common_lb_config:
        locality_weighted_lb_config: {}
      load_assignment:
        cluster_name: webcluster1
        policy:
          overprovisioning_factor: 140
        endpoints:
        - locality:
            region: cn-north-1
          priority: 0
          load_balancing_weight: 1
          lb_endpoints:
          - endpoint:
              address:
                socket_address:
                  address: colored
                  port_value: 80
        - locality:
            region: cn-north-2
          priority: 0
          load_balancing_weight: 2
          lb_endpoints:
          - endpoint:
              address:
                socket_address:
                  address: myservice
                  port_value: 80

Notes

(1) The cluster defines two Localities, cn-north-1 and cn-north-2, with weights 1 and 2 respectively; both have the same priority, 0;
(2) When all endpoints are healthy, the cluster's traffic is therefore split 1:2 between cn-north-1 and cn-north-2;
(3) Suppose cn-north-2 has two endpoints and one of them fails its health check; the traffic split then changes to 1:1.4;

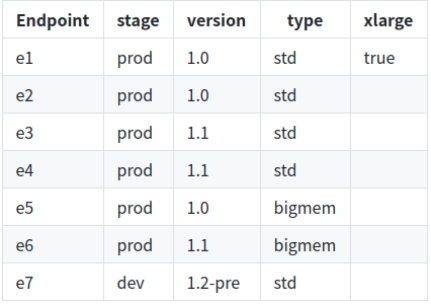

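1) Envoy also supports finer-grained traffic distribution within a cluster based on subsets

(1) First, add metadata (key/value labels) to the upstream hosts of the cluster and use subset selectors (which classify the metadata) to divide the upstream hosts into subsets;
(2) Then, in the route configuration, specify the metadata that an upstream host must match for the load balancer to select it, which routes traffic to a specific subset;
(3) Load balancing among the hosts inside a subset uses the policy defined on the cluster (lb_policy);

2) When subsets are configured but the route specifies no metadata, or no subset matches the specified metadata, the subset load balancer applies a "fallback policy"

(1) NO_FALLBACK: the request fails, as if the cluster had no hosts; this is the default policy;
(2) ANY_ENDPOINT: schedule across all hosts, ignoring host metadata;
(3) DEFAULT_SUBSET: schedule to the default subset, which must be defined in advance;

1) Subsets must be predefined before the subset load balancer can use them for scheduling

Define host metadata: key/value data

(1) The subset metadata of a host must be defined under the "envoy.lb" filter;
(2) Host metadata is only supported when hosts are defined through ClusterLoadAssignments, that is:
◆ endpoints discovered through EDS;
◆ endpoints defined through the load_assignment field;

Configuration example

    load_assignment:
      cluster_name: webcluster1
      endpoints:
      - lb_endpoints:
        - endpoint:
            address:
              socket_address:
                protocol: TCP
                address: ep1
                port_value: 80
          metadata:
            filter_metadata:
              envoy.lb:
                version: '1.0'
                stage: 'prod'

2) Define the subset selectors on the cluster

    clusters:
    - name: ...
      ...
      lb_subset_config:
        fallback_policy: "..."            # fallback policy; default NO_FALLBACK
        default_subset: "{...}"           # default subset used by the DEFAULT_SUBSET fallback policy
        subset_selectors: []              # subset selectors
        - keys: []                        # defines one selector: the list of metadata keys used to classify hosts
          fallback_policy: ...            # fallback policy specific to this selector
        locality_weight_aware: "..."      # whether endpoint locality and locality weight are considered when routing to a subset; has some potential pitfalls
        scale_locality_weight: "..."      # whether to scale each locality's weight by the ratio of hosts in the subset to hosts in the original set
        panic_mode_any: "..."             # whether to try selecting a host from the entire cluster when a fallback policy is configured but its subset has no hosts
        list_as_any: "..."

(1) For each selector, Envoy iterates over the hosts, inspects their "envoy.lb" filter metadata, and creates one subset for every unique combination of key values;
(2) A host whose metadata contains every key of a selector is added to the matching subset of that selector; this also means that a host can satisfy several subset selectors at once, in which case it belongs to several subsets;
(3) If no host defines any metadata, no subsets are generated;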

3) Route metadata matching (metadata_match)

(1) Traffic is only routed when the upstream cluster has a subset that matches the metadata set in metadata_match;
(2) When weighted_clusters is used as the route target, each target cluster inside it can also define its own metadata_match;

    routes:
    - name: ...
      match: {...}
      route: {...}                        # route target; only one of cluster and weighted_clusters may be used
        cluster: ...
        metadata_match: {...}             # endpoint metadata match criteria used by the subset load balancer; if weighted_clusters is used and defines its own metadata_match,
                                          # the metadata is merged and the values defined in weighted_clusters take precedence; the filter name should be envoy.lb
          filter_metadata: {...}          # metadata filter
            envoy.lb: {...}
              key1: value1
              key2: value2
              ...
        weighted_clusters: {...}
          clusters: []
          - name: ...
            weight: ...
            metadata_match: {...}

When no subset matches the route metadata, the fallback policy applies;

5. Subset selector configuration example

Subset selector definition

    clusters:
    - name: webclusters
      lb_policy: ROUND_ROBIN
      lb_subset_config:
        fallback_policy: DEFAULT_SUBSET
        default_subset:
          stage: prod
          version: '1.0'
          type: std
        subset_selectors:
        - keys: [stage, type]
        - keys: [stage, version]
        - keys: [version]
        - keys: [xlarge, version]

Cluster host metadata

This maps to ten subsets

    stage=prod, type=std (e1, e2, e3, e4)
    stage=prod, type=bigmem (e5, e6)
    stage=dev, type=std (e7)
    stage=prod, version=1.0 (e1, e2, e5)
    stage=prod, version=1.1 (e3, e4, e6)
    stage=dev, version=1.2-pre (e7)
    version=1.0 (e1, e2, e5)
    version=1.1 (e3, e4, e6)
    version=1.2-pre (e7)
    version=1.0, xlarge=true (e1)

In addition there is one default subset

    stage=prod, type=std, version=1.0 (e1, e2)
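How selectors turn host metadata into subsets can be sketched as follows. The host metadata table is not shown in this section, so the `HOSTS` dictionary below is reconstructed from the subset listing above and should be read as an assumption; the function name is hypothetical as well.

```python
# Assumed host metadata, inferred from the ten subsets listed above.
HOSTS = {
    "e1": {"stage": "prod", "version": "1.0", "type": "std", "xlarge": "true"},
    "e2": {"stage": "prod", "version": "1.0", "type": "std"},
    "e3": {"stage": "prod", "version": "1.1", "type": "std"},
    "e4": {"stage": "prod", "version": "1.1", "type": "std"},
    "e5": {"stage": "prod", "version": "1.0", "type": "bigmem"},
    "e6": {"stage": "prod", "version": "1.1", "type": "bigmem"},
    "e7": {"stage": "dev", "version": "1.2-pre", "type": "std"},
}
SELECTORS = [["stage", "type"], ["stage", "version"], ["version"],
             ["xlarge", "version"]]


def build_subsets(hosts, selectors):
    """One subset per unique key/value combination of each selector."""
    subsets = {}
    for keys in selectors:
        for name, metadata in hosts.items():
            # A host joins a selector's subset only if it has every key.
            if all(key in metadata for key in keys):
                subset_id = tuple(sorted((key, metadata[key]) for key in keys))
                subsets.setdefault(subset_id, []).append(name)
    return subsets


subsets = build_subsets(HOSTS, SELECTORS)
print(len(subsets))
for subset_id, members in subsets.items():
    print(", ".join(f"{k}={v}" for k, v in subset_id), members)
```

Only e1 carries the `xlarge` key, so the last selector yields a single subset, which brings the total to ten.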

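1) Envoy typically performs zone aware routing in deployments where the originating cluster and the upstream cluster span different zones

2) Zone aware routing sends as much traffic as possible to the local zone of the upstream cluster while keeping traffic roughly balanced across all relevant upstream endpoints; it depends on the following prerequisites

(1) Neither the originating cluster (client side) nor the upstream cluster (server side) is in panic mode;
(2) Zone aware routing is enabled;
(3) The originating cluster has the same number of zones as the upstream cluster;
(4) The upstream cluster has enough hosts to carry all of the request traffic;

3) Whether Envoy routes traffic to the local zone or across zones depends on the percentage of healthy hosts in the originating cluster and in the upstream cluster

(1) The local zone percentage of the originating cluster is greater than that of the upstream cluster: Envoy calculates the percentage of requests that can be routed directly to the local zone of the upstream cluster; the remaining requests are routed to other zones;
(2) The local zone percentage of the originating cluster is smaller than that of the upstream cluster: all requests can be routed within the local zone, and the local zone can additionally take some cross-zone traffic from other zones;

4) Currently zone aware routing only supports priority 0;

    common_lb_config:
      zone_aware_lb_config:
        "routing_enabled": "{...}",     # percentage; the share of request traffic for which zone aware routing is enabled; default 100%
        "min_cluster_size": "{...}"     # minimum upstream cluster size required for zone aware routing; if the upstream cluster is smaller, zone aware routing is not performed even when configured; default 6; 64-bit integer

1) When scheduling, the set of eligible upstream hosts is determined from metadata on the connection of the downstream request, and the request is dispatched to a host within that set;

(1) The connection is forwarded to the original destination address it had before being redirected to Envoy; in other words, the connection is forwarded directly to the destination address of the client connection, and no load balancing takes place. Before reaching Envoy, the original connection is redirected by an iptables REDIRECT or TPROXY target, which changes its destination address;
(2) This is the load balancer dedicated to original destination clusters (clusters whose type parameter is ORIGINAL_DST);
(3) New destinations found in requests are added to the cluster on demand by the load balancer, and the cluster periodically cleans up destination hosts that are no longer in use;

3) Note that original destination clusters are not compatible with the other load balancing policies;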

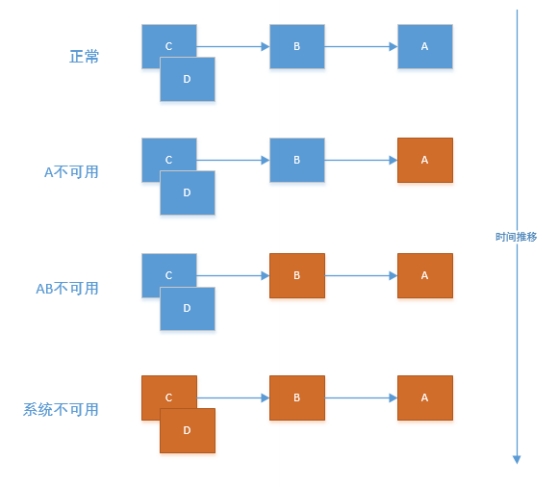

1) 多级服务调度用场景中,某上游服务因网络 故障或服务繁忙无法响应请求时很可能会导 致多级上游调用者大规模级联故障,进而导 致整个系统不可用, 此 即为服务的雪崩效应 ;

2)服务雪崩效应是一种因“服务提供者”的 不可用导致“服务消费者”的不可用,并将不可用逐渐放大的过程;

服务网格之上的微服务应用中,多级调用 的长调用链并不鲜见;

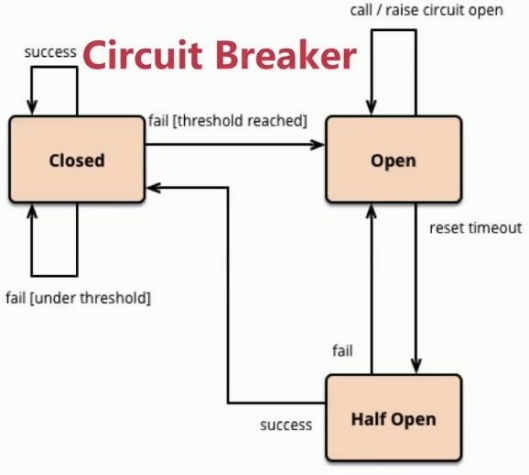

3) 熔断:上游服务(被调用者,即服务提供者)因压 力 过大而变得响应过慢甚至失败时,下游服务(服务消费 者)通过暂时切断对上游的请求调用达到牺牲局 部,保全上游甚至是整体之目的;

(1)熔断打开(Open):在固定时间窗口内,检测到的失败指标 达到指定的阈值时启动熔断; 所有请求会直接失败而不再发往后端端点; (2)熔断半打开(Half Open):断路器在工作一段时间后自动切 换至半打开状态,并根据下一次请求的返回结果判定状态 切换 请求成功:转为熔断关闭状态; 请求失败:切回熔断打开状态; (3)熔断关闭(Closed):一定时长后上游服务可能会变得再次可 用,此时下游即可关闭熔断,并再次请求其服务;
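The three states can be sketched as a small state machine. This illustrates the generic circuit breaker pattern described above, not Envoy's threshold-based circuit breakers; the class name and parameters are hypothetical, and a count of consecutive failures stands in for the "failure metric within a time window".

```python
import time


class CircuitBreaker:
    """Generic closed / open / half-open breaker (illustrative only)."""

    def __init__(self, failure_threshold=3, open_seconds=10.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.open_seconds = open_seconds
        self.clock = clock
        self.state = "closed"
        self.failures = 0
        self.opened_at = 0.0

    def allow_request(self) -> bool:
        """Return True if a request may be sent upstream right now."""
        if self.state == "open":
            if self.clock() - self.opened_at >= self.open_seconds:
                self.state = "half_open"  # let one trial request through
                return True
            return False  # fail fast while the circuit is open
        return True

    def record(self, success: bool) -> None:
        """Report the outcome of a request that was allowed through."""
        if self.state == "half_open":
            if success:
                self.state, self.failures = "closed", 0
            else:
                self._trip()
            return
        if success:
            self.failures = 0
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self._trip()

    def _trip(self) -> None:
        self.state, self.failures, self.opened_at = "open", 0, self.clock()


now = [0.0]  # fake clock so the example runs instantly
breaker = CircuitBreaker(failure_threshold=2, open_seconds=10.0, clock=lambda: now[0])
breaker.record(False)
breaker.record(False)
print(breaker.state, breaker.allow_request())
now[0] = 10.0
print(breaker.allow_request(), breaker.state)
breaker.record(True)
print(breaker.state)
```

Injecting the clock keeps the example deterministic; in real use the default `time.monotonic` would drive the transition from open to half open.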

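In short, circuit breaking is a traffic management pattern commonly used in distributed applications; it protects an application from upstream service failures, latency spikes, and other network anomalies.

XII. Envoy circuit breakers

1) Envoy supports several types of fully distributed circuit breaking; when a threshold defined by one of them is reached, the corresponding circuit breaker overflows:

(1) Cluster maximum connections: the maximum number of connections Envoy establishes to the upstream cluster; only applies to HTTP/1.1, because HTTP/2 multiplexes requests over a connection;
(2) Cluster maximum requests: the maximum number of requests outstanding to all hosts in the cluster at any given time; only applies to HTTP/2;
(3) Cluster maximum pending requests: the maximum length of the waiting queue allowed when the connection pool is fully loaded;
(4) Cluster maximum active retries: the maximum number of concurrent retries that can be outstanding to all hosts in the cluster at any given time;
(5) Cluster maximum concurrent connection pools: the maximum number of connection pools that can be instantiated at the same time;

Note: in Istio, circuit breaking is defined through connection pools (connection pool management) and faulty instance isolation (outlier detection), whereas Envoy's circuit breakers generally correspond only to the connection pool function in Istio;

◆ Connection pool: limits the number of connections, requests, queue length, and retries from a client to a target service, to avoid overloading the service;
◆ Faulty instance isolation: temporarily ejects a service instance that times out or fails frequently, so that it does not affect the whole service;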

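XIII. Connection pools and circuit breakers

1) Common connection pool metrics

(1) Maximum connections: the maximum number of connections Envoy establishes to the upstream cluster at any given time; applies to HTTP/1.1;
(2) Maximum requests per connection: the maximum number of requests a single connection may carry; setting it to 1 disables keep-alive;
(3) Maximum request retries: the maximum number of retries against the target host within a given period;
(4) Connect timeout: the TCP connection timeout; it must be greater than 1ms; maximum connections and connect timeout are generic connection settings that apply to both TCP and HTTP;
(5) Maximum pending requests: the length of the pending request queue; when this circuit breaker overflows, the cluster's upstream_rq_pending_overflow counter is incremented;

2) Common circuit breaker metrics (Istio context)

(6) Consecutive errors: the number of consecutive 5xx errors, for example 502 or 503 status codes, within one check interval;
(7) Check interval: response codes within the interval are screened;
(8) Maximum ejection percent: the maximum share of upstream instances that may be isolated; the value is rounded up, so with 10 instances and 13% at most 2 instances are isolated;
(9) Minimum ejection time: how long an instance is isolated the first time; each later isolation lasts the number of isolations multiplied by the minimum ejection time;

3) Two of the connection-pool-related parameters are defined directly in the cluster context

    ---
    clusters:
    - name: ...
      ...
      connect_timeout: ...                # TCP connection timeout, that is, the network connect timeout to the host; a sensible value helps when a slow service would otherwise slow down the whole call chain
      max_requests_per_connection: ...    # maximum number of requests per connection; applies to both HTTP/1.1 and HTTP/2 connection pools; unlimited if unset; 1 disables keep-alive
      ...
      circuit_breakers: {...}             # circuit breaking settings; optional
        thresholds: []                    # list of metrics and thresholds, each applying to a specific routing priority
        - priority: ...                   # routing priority this circuit breaker applies to
          max_connections: ...            # maximum number of concurrent connections to the upstream cluster; only applies to HTTP/1; default 1024; connections beyond the limit are short-circuited
          max_pending_requests: ...       # maximum number of requests allowed to be pending; default 1024; requests beyond the limit are short-circuited
          max_requests: ...               # maximum number of concurrent requests Envoy may dispatch to the upstream cluster; default 1024; only applies to HTTP/2
          max_retries: ...                # maximum number of concurrent retries to the upstream cluster (assuming a retry_policy is configured); default 3
          track_remaining: ...            # when true, statistics are published showing the resources remaining before the circuit breaker opens; default false
        max_connection_pools: ...         # maximum number of connection pools each cluster may open concurrently; unlimited by default

4) Cluster-level circuit breaker configuration example

    clusters:
    - name: service_httpbin
      connect_timeout: 2s
      type: LOGICAL_DNS
      dns_lookup_family: V4_ONLY
      lb_policy: ROUND_ROBIN
      load_assignment:
        cluster_name: service_httpbin
        endpoints:
        - lb_endpoints:
          - endpoint:
              address:
                socket_address:
                  address: httpbin.org
                  port_value: 80
      circuit_breakers:
        thresholds:
        - max_connections: 1
          max_pending_requests: 1
          max_retries: 3

5) The fortio tool can be used for load testing

    fortio load -c 2 -qps 0 -n 20 -loglevel Warning URL

Project page: https://github.com/fortio/fortio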

1. health-check

Lab environment

    envoy: Front Proxy, address 172.31.18.2
    webserver01: the first backend service
    webserver01-sidecar: Sidecar Proxy of the first backend service, address 172.31.18.11
    webserver02: the second backend service
    webserver02-sidecar: Sidecar Proxy of the second backend service, address 172.31.18.12

front-envoy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address: { address: 0.0.0.0, port_value: 9901 }
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: webservice
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: web_cluster_01 }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: web_cluster_01
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: web_cluster_01
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: myservice, port_value: 80 }
        health_checks:
        - timeout: 5s
          interval: 10s
          unhealthy_threshold: 2
          healthy_threshold: 2
          http_health_check:
            path: /livez
            expected_statuses:
              start: 200
              end: 399

front-envoy-with-tcp-check.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address: { address: 0.0.0.0, port_value: 9901 }
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: webservice
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: web_cluster_01 }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: web_cluster_01
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: web_cluster_01
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: myservice, port_value: 80 }
        health_checks:
        - timeout: 5s
          interval: 10s
          unhealthy_threshold: 2
          healthy_threshold: 2
          tcp_health_check: {}

envoy-sidecar-proxy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: local_cluster }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: local_cluster
        connect_timeout: 0.25s
        type: STATIC
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: local_cluster
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: 127.0.0.1, port_value: 8080 }

docker-compose.yaml

    version: '3.3'
    services:
      envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        # - ./front-envoy-with-tcp-check.yaml:/etc/envoy/envoy.yaml
        networks:
          envoymesh:
            ipv4_address: 172.31.18.2
            aliases:
            - front-proxy
        depends_on:
        - webserver01-sidecar
        - webserver02-sidecar
      webserver01-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: red
        networks:
          envoymesh:
            ipv4_address: 172.31.18.11
            aliases:
            - myservice
      webserver01:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver01-sidecar"
        depends_on:
        - webserver01-sidecar
      webserver02-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: blue
        networks:
          envoymesh:
            ipv4_address: 172.31.18.12
            aliases:
            - myservice
      webserver02:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver02-sidecar"
        depends_on:
        - webserver02-sidecar
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.18.0/24

Verification

    docker-compose up

Test from a second terminal window

    # Continuously request the service through the front proxy
    root@test:~# while true; do curl 172.31.18.2; sleep 1; done
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.18.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.18.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.18.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.18.11!
    ......
    # Once scheduling has settled, open another terminal and change the /livez response of one of the services to a value other than "OK", for example on the first backend endpoint
    root@test:~# curl -X POST -d 'livez=FAIL' http://172.31.18.11/livez
    # The scheduling and the responses can then be observed in the output of the request loop
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    # Requests are no longer scheduled to 172.31.18.11
    # The output shows that the first endpoint failed active health checking and was therefore removed from the cluster automatically until it becomes healthy again
    # A command like the following restores the normal response
    root@test:~# curl -X POST -d 'livez=OK' http://172.31.18.11/livez
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.18.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.18.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.18.11!
    # 172.31.18.11 has recovered and takes part in scheduling again

2、outlier-detection

实验环境

envoy:Front Proxy,地址为172.31.20.2 webserver01:第一个后端服务 webserver01-sidecar:第一个后端服务的Sidecar Proxy,地址为172.31.20.11 webserver02:第二个后端服务 webserver02-sidecar:第二个后端服务的Sidecar Proxy,地址为172.31.20.12 webserver03:第三个后端服务 webserver03-sidecar:第三个后端服务的Sidecar Proxy,地址为172.31.20.13

front-envoy.yaml

admin: profile_path: /tmp/envoy.prof access_log_path: /tmp/admin_access.log address: socket_address: { address: 0.0.0.0, port_value: 9901 } static_resources: listeners: - name: listener_0 address: socket_address: { address: 0.0.0.0, port_value: 80 } filter_chains: - filters: - name: envoy.filters.network.http_connection_manager typed_config: "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager stat_prefix: ingress_http codec_type: AUTO route_config: name: local_route virtual_hosts: - name: webservice domains: ["*"] routes: - match: { prefix: "/" } route: { cluster: web_cluster_01 } http_filters: - name: envoy.filters.http.router clusters: - name: web_cluster_01 connect_timeout: 0.25s type: STRICT_DNS lb_policy: ROUND_ROBIN load_assignment: cluster_name: web_cluster_01 endpoints: - lb_endpoints: - endpoint: address: socket_address: { address: myservice, port_value: 80 } outlier_detection: consecutive_5xx: 3 base_ejection_time: 10s max_ejection_percent: 10

envoy-sidecar-proxy.yaml

admin: profile_path: /tmp/envoy.prof access_log_path: /tmp/admin_access.log address: socket_address: address: 0.0.0.0 port_value: 9901 static_resources: listeners: - name: listener_0 address: socket_address: { address: 0.0.0.0, port_value: 80 } filter_chains: - filters: - name: envoy.filters.network.http_connection_manager typed_config: "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager stat_prefix: ingress_http codec_type: AUTO route_config: name: local_route virtual_hosts: - name: local_service domains: ["*"] routes: - match: { prefix: "/" } route: { cluster: local_cluster } http_filters: - name: envoy.filters.http.router clusters: - name: local_cluster connect_timeout: 0.25s type: STATIC lb_policy: ROUND_ROBIN load_assignment: cluster_name: local_cluster endpoints: - lb_endpoints: - endpoint: address: socket_address: { address: 127.0.0.1, port_value: 8080 }

docker-compose.yaml

    version: '3.3'
    services:
      envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        networks:
          envoymesh:
            ipv4_address: 172.31.20.2
            aliases:
            - front-proxy
        depends_on:
        - webserver01-sidecar
        - webserver02-sidecar
        - webserver03-sidecar
      webserver01-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: red
        networks:
          envoymesh:
            ipv4_address: 172.31.20.11
            aliases:
            - myservice
      webserver01:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver01-sidecar"
        depends_on:
        - webserver01-sidecar
      webserver02-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: blue
        networks:
          envoymesh:
            ipv4_address: 172.31.20.12
            aliases:
            - myservice
      webserver02:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver02-sidecar"
        depends_on:
        - webserver02-sidecar
      webserver03-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: green
        networks:
          envoymesh:
            ipv4_address: 172.31.20.13
            aliases:
            - myservice
      webserver03:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver03-sidecar"
        depends_on:
        - webserver03-sidecar
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.20.0/24

Experiment verification

docker-compose up

Test from a cloned terminal window

    # Continuously request the /livez path on the service
    root@test:~# while true; do curl 172.31.20.2/livez && echo; sleep 1; done
    OK
    OK
    OK
    OK
    OK
    ......
    # Once requests are being served normally, open another terminal and change the /livez response of any one backend to a non-"OK" value, for example on the first endpoint:
    root@test:~# curl -X POST -d 'livez=FAIL' http://172.31.20.11/livez
    # Then return to the docker-compose console, or watch the responses to the requests, to observe how requests are scheduled and answered:
    webserver01_1 | 127.0.0.1 - - [02/Dec/2021 13:43:54] "POST /livez HTTP/1.1" 200 -
    webserver02_1 | 127.0.0.1 - - [02/Dec/2021 13:43:55] "GET /livez HTTP/1.1" 200 -
    webserver03_1 | 127.0.0.1 - - [02/Dec/2021 13:43:56] "GET /livez HTTP/1.1" 200 -
    webserver01_1 | 127.0.0.1 - - [02/Dec/2021 13:43:57] "GET /livez HTTP/1.1" 506 -
    webserver02_1 | 127.0.0.1 - - [02/Dec/2021 13:43:58] "GET /livez HTTP/1.1" 200 -
    webserver03_1 | 127.0.0.1 - - [02/Dec/2021 13:43:59] "GET /livez HTTP/1.1" 200 -
    webserver01_1 | 127.0.0.1 - - [02/Dec/2021 13:44:00] "GET /livez HTTP/1.1" 506 -
    webserver02_1 | 127.0.0.1 - - [02/Dec/2021 13:44:01] "GET /livez HTTP/1.1" 200 -
    webserver03_1 | 127.0.0.1 - - [02/Dec/2021 13:44:02] "GET /livez HTTP/1.1" 200 -
    webserver01_1 | 127.0.0.1 - - [02/Dec/2021 13:44:03] "GET /livez HTTP/1.1" 506 -
    webserver02_1 | 127.0.0.1 - - [02/Dec/2021 13:44:04] "GET /livez HTTP/1.1" 200 -
    webserver03_1 | 127.0.0.1 - - [02/Dec/2021 13:44:05] "GET /livez HTTP/1.1" 200 -
    # The requests show that the first endpoint, because it answers with 5xx status codes, is ejected again each time it is added back, until its response is restored with a command like the following:
    root@test:~# curl -X POST -d 'livez=OK' http://172.31.20.11/livez
    webserver03_1 | 127.0.0.1 - - [02/Dec/2021 13:45:32] "GET /livez HTTP/1.1" 200 -
    webserver03_1 | 127.0.0.1 - - [02/Dec/2021 13:45:33] "GET /livez HTTP/1.1" 200 -
    webserver01_1 | 127.0.0.1 - - [02/Dec/2021 13:45:34] "GET /livez HTTP/1.1" 200 -
    webserver02_1 | 127.0.0.1 - - [02/Dec/2021 13:45:35] "GET /livez HTTP/1.1" 200 -
    webserver03_1 | 127.0.0.1 - - [02/Dec/2021 13:45:36] "GET /livez HTTP/1.1" 200 -
    webserver01_1 | 127.0.0.1 - - [02/Dec/2021 13:45:37] "GET /livez HTTP/1.1" 200 -
    webserver02_1 | 127.0.0.1 - - [02/Dec/2021 13:45:38] "GET /livez HTTP/1.1" 200 -
    webserver03_1 | 127.0.0.1 - - [02/Dec/2021 13:45:39] "GET /livez HTTP/1.1" 200 -
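The ejection rule exercised above (consecutive_5xx: 3 in front-envoy.yaml) can be sketched as a small counter. This is only an illustration of the idea, not Envoy's implementation; the helper name ejected_at is hypothetical, and ejection time and max_ejection_percent are ignored.

```shell
#!/bin/bash
# Hypothetical helper: given a threshold and the status codes one host
# returned in order, print the 1-based index of the request at which the
# host would be ejected, or 0 if the threshold is never reached.
ejected_at() {
  local threshold=$1; shift
  local streak=0 n=0 code
  for code in "$@"; do
    n=$((n + 1))
    if (( code >= 500 && code <= 599 )); then
      streak=$((streak + 1))   # another consecutive 5xx
    else
      streak=0                 # any non-5xx response resets the streak
    fi
    if (( streak >= threshold )); then
      echo "$n"
      return 0
    fi
  done
  echo 0
}

# One 200 followed by three 506 responses, threshold 3
ejected_at 3 200 506 506 506 200   # prints 4
```

A sequence such as 506 506 200 506 never reaches three 5xx responses in a row, so the helper prints 0 for it.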

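3. least-requests

Experiment environment

envoy: Front Proxy, address 172.31.22.2
webserver01: the first backend service
webserver01-sidecar: Sidecar Proxy of the first backend service, address 172.31.22.11
webserver02: the second backend service
webserver02-sidecar: Sidecar Proxy of the second backend service, address 172.31.22.12
webserver03: the third backend service
webserver03-sidecar: Sidecar Proxy of the third backend service, address 172.31.22.13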

front-envoy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address: { address: 0.0.0.0, port_value: 9901 }
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: webservice
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: web_cluster_01 }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: web_cluster_01
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: LEAST_REQUEST
        load_assignment:
          cluster_name: web_cluster_01
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: red
                    port_value: 80
              load_balancing_weight: 1
            - endpoint:
                address:
                  socket_address:
                    address: blue
                    port_value: 80
              load_balancing_weight: 3
            - endpoint:
                address:
                  socket_address:
                    address: green
                    port_value: 80
              load_balancing_weight: 5

envoy-sidecar-proxy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: local_cluster }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: local_cluster
        connect_timeout: 0.25s
        type: STATIC
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: local_cluster
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: 127.0.0.1, port_value: 8080 }

send-request.sh

    #!/bin/bash
    # Usage: ./send-request.sh <front-envoy address>
    declare -i red=0
    declare -i blue=0
    declare -i green=0
    #interval="0.1"
    counts=300
    echo "Send ${counts} requests, and print the result. This will take a while."
    echo ""
    echo "Weight of all endpoints:"
    echo "Red:Blue:Green = 1:3:5"
    for ((i=1; i<=counts; i++)); do
        # $1 is the host address of the front-envoy.
        # Send exactly one request per iteration and classify its response,
        # so that each counted response corresponds to a single request.
        host=$(curl -s "http://$1/hostname")
        if echo "$host" | grep -q "red"; then
            red=$((red+1))
        elif echo "$host" | grep -q "blue"; then
            blue=$((blue+1))
        else
            green=$((green+1))
        fi
        # sleep $interval
    done
    echo ""
    echo "Response from:"
    echo "Red:Blue:Green = $red:$blue:$green"

docker-compose.yaml

    version: '3.3'
    services:
      envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        networks:
          envoymesh:
            ipv4_address: 172.31.22.2
            aliases:
            - front-proxy
        depends_on:
        - webserver01-sidecar
        - webserver02-sidecar
        - webserver03-sidecar
      webserver01-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: red
        networks:
          envoymesh:
            ipv4_address: 172.31.22.11
            aliases:
            - myservice
            - red
      webserver01:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver01-sidecar"
        depends_on:
        - webserver01-sidecar
      webserver02-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: blue
        networks:
          envoymesh:
            ipv4_address: 172.31.22.12
            aliases:
            - myservice
            - blue
      webserver02:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver02-sidecar"
        depends_on:
        - webserver02-sidecar
      webserver03-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: green
        networks:
          envoymesh:
            ipv4_address: 172.31.22.13
            aliases:
            - myservice
            - green
      webserver03:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver03-sidecar"
        depends_on:
        - webserver03-sidecar
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.22.0/24

Experiment verification

docker-compose up

Test from a cloned terminal window

    # Run the script below to send requests, then use the per-endpoint response counts it reports to judge whether the distribution roughly follows weighted least-request scheduling:
    ./send-request.sh 172.31.22.2
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/least-requests# ./send-request.sh 172.31.22.2
    Send 300 requests, and print the result. This will take a while.
    Weight of all endpoints:
    Red:Blue:Green = 1:3:5
    Response from:
    Red:Blue:Green = 56:80:164
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/least-requests# ./send-request.sh 172.31.22.2
    Send 300 requests, and print the result. This will take a while.
    Weight of all endpoints:
    Red:Blue:Green = 1:3:5
    Response from:
    Red:Blue:Green = 59:104:137
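When endpoints carry different weights, the LEAST_REQUEST policy is, as far as I understand the Envoy documentation, a weighted schedule whose weights are scaled down by each host's in-flight requests, roughly weight / (active_requests + 1) with the default bias of 1. The sketch below only illustrates that arithmetic; the helper name effective_weight and the fixed-point scale of 1000 are assumptions made here for integer math in bash.

```shell
#!/bin/bash
# Hypothetical helper: effective weight of a host under a least-request
# style schedule, scaled by 1000 so that bash integer math is enough.
#   effective_weight WEIGHT ACTIVE_REQUESTS
effective_weight() {
  local weight=$1 active=$2
  echo $(( weight * 1000 / (active + 1) ))
}

# With no requests in flight the configured 1:3:5 weights apply unchanged.
effective_weight 1 0   # red
effective_weight 3 0   # blue
effective_weight 5 0   # green
# A busy host loses weight: green with 4 active requests drops to the
# same effective weight as an idle host of weight 1.
effective_weight 5 4
```

Because send-request.sh issues its requests one after another, few requests are in flight at any moment, so the observed split is expected to stay close to the static weights.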

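4. weighted-rr

envoy: Front Proxy, address 172.31.27.2
webserver01: the first backend service
webserver01-sidecar: Sidecar Proxy of the first backend service, address 172.31.27.11
webserver02: the second backend service
webserver02-sidecar: Sidecar Proxy of the second backend service, address 172.31.27.12
webserver03: the third backend service
webserver03-sidecar: Sidecar Proxy of the third backend service, address 172.31.27.13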

front-envoy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address: { address: 0.0.0.0, port_value: 9901 }
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: webservice
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: web_cluster_01 }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: web_cluster_01
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: web_cluster_01
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: red
                    port_value: 80
              load_balancing_weight: 1
            - endpoint:
                address:
                  socket_address:
                    address: blue
                    port_value: 80
              load_balancing_weight: 3
            - endpoint:
                address:
                  socket_address:
                    address: green
                    port_value: 80
              load_balancing_weight: 5

envoy-sidecar-proxy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: local_cluster }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: local_cluster
        connect_timeout: 0.25s
        type: STATIC
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: local_cluster
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: 127.0.0.1, port_value: 8080 }

send-request.sh

    #!/bin/bash
    # Usage: ./send-request.sh <front-envoy address>
    declare -i red=0
    declare -i blue=0
    declare -i green=0
    #interval="0.1"
    counts=300
    echo "Send ${counts} requests, and print the result. This will take a while."
    echo ""
    echo "Weight of all endpoints:"
    echo "Red:Blue:Green = 1:3:5"
    for ((i=1; i<=counts; i++)); do
        # $1 is the host address of the front-envoy.
        # Send exactly one request per iteration and classify its response,
        # so that each counted response corresponds to a single request.
        host=$(curl -s "http://$1/hostname")
        if echo "$host" | grep -q "red"; then
            red=$((red+1))
        elif echo "$host" | grep -q "blue"; then
            blue=$((blue+1))
        else
            green=$((green+1))
        fi
        # sleep $interval
    done
    echo ""
    echo "Response from:"
    echo "Red:Blue:Green = $red:$blue:$green"

docker-compose.yaml

    version: '3.3'
    services:
      envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        networks:
          envoymesh:
            ipv4_address: 172.31.27.2
            aliases:
            - front-proxy
        depends_on:
        - webserver01-sidecar
        - webserver02-sidecar
        - webserver03-sidecar
      webserver01-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: red
        networks:
          envoymesh:
            ipv4_address: 172.31.27.11
            aliases:
            - myservice
            - red
      webserver01:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver01-sidecar"
        depends_on:
        - webserver01-sidecar
      webserver02-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: blue
        networks:
          envoymesh:
            ipv4_address: 172.31.27.12
            aliases:
            - myservice
            - blue
      webserver02:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver02-sidecar"
        depends_on:
        - webserver02-sidecar
      webserver03-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: green
        networks:
          envoymesh:
            ipv4_address: 172.31.27.13
            aliases:
            - myservice
            - green
      webserver03:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver03-sidecar"
        depends_on:
        - webserver03-sidecar
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.27.0/24

Experiment verification

docker-compose up

Test from a cloned terminal window

    # Run the script below to send requests, then use the per-endpoint response counts it reports to judge whether the distribution roughly follows weighted round-robin scheduling:
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/weighted-rr# ./send-request.sh 172.31.27.2
    Send 300 requests, and print the result. This will take a while.
    Weight of all endpoints:
    Red:Blue:Green = 1:3:5
    Response from:
    Red:Blue:Green = 55:81:164
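For comparison with the recorded counts, the ideal split for weights 1:3:5 can be computed offline. The sketch below uses the generic "smooth weighted round robin" technique; it is not taken from Envoy, whose internal scheduler may order picks differently, and the function name wrr_counts is hypothetical.

```shell
#!/bin/bash
# Smooth weighted round robin (illustrative only).
#   wrr_counts PICKS WEIGHT...  -> prints per-host pick counts joined by ':'
wrr_counts() {
  local picks=$1; shift
  local -a weight=("$@") current=() count=()
  local total=0 i n best
  for i in "${!weight[@]}"; do
    current[$i]=0
    count[$i]=0
    total=$((total + weight[i]))
  done
  for ((n = 0; n < picks; n++)); do
    # Every host earns its weight on each round.
    for i in "${!weight[@]}"; do
      current[$i]=$((current[i] + weight[i]))
    done
    # The host with the highest running score is picked (first wins ties).
    best=0
    for i in "${!weight[@]}"; do
      if (( current[i] > current[best] )); then best=$i; fi
    done
    count[$best]=$((count[best] + 1))
    # The picked host pays back the sum of all weights.
    current[$best]=$((current[best] - total))
  done
  local IFS=:
  echo "${count[*]}"
}

wrr_counts 9 1 3 5     # one full cycle: prints 1:3:5
wrr_counts 300 1 3 5   # prints 33:100:167
```

Under these assumptions an ideal 300-request run would come out at about 33:100:167 for red, blue and green.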

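Experiment environment

envoy: Front Proxy, address 172.31.31.2
webserver01: the first backend service
webserver01-sidecar: Sidecar Proxy of the first backend service, address 172.31.31.11, aliases red and webservice1
webserver02: the second backend service
webserver02-sidecar: Sidecar Proxy of the second backend service, address 172.31.31.12, aliases blue and webservice1
webserver03: the third backend service
webserver03-sidecar: Sidecar Proxy of the third backend service, address 172.31.31.13, aliases green and webservice1
webserver04: the fourth backend service
webserver04-sidecar: Sidecar Proxy of the fourth backend service, address 172.31.31.14, aliases gray and webservice2
webserver05: the fifth backend service
webserver05-sidecar: Sidecar Proxy of the fifth backend service, address 172.31.31.15, aliases black and webservice2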

front-envoy.yaml

    admin:
      access_log_path: "/dev/null"
      address:
        socket_address: { address: 0.0.0.0, port_value: 9901 }
    static_resources:
      listeners:
      - address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        name: listener_http
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              codec_type: auto
              stat_prefix: ingress_http
              route_config:
                name: local_route
                virtual_hosts:
                - name: backend
                  domains:
                  - "*"
                  routes:
                  - match:
                      prefix: "/"
                    route:
                      cluster: webcluster1
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: webcluster1
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        http2_protocol_options: {}
        load_assignment:
          cluster_name: webcluster1
          policy:
            overprovisioning_factor: 140
          endpoints:
          - locality:
              region: cn-north-1
            priority: 0
            load_balancing_weight: 10
            lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: webservice1, port_value: 80 }
          - locality:
              region: cn-north-2
            priority: 0
            load_balancing_weight: 20
            lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: webservice2, port_value: 80 }
        health_checks:
        - timeout: 5s
          interval: 10s
          unhealthy_threshold: 2
          healthy_threshold: 1
          http_health_check:
            path: /livez
            expected_statuses:
              start: 200
              end: 399

envoy-sidecar-proxy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: local_cluster }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: local_cluster
        connect_timeout: 0.25s
        type: STATIC
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: local_cluster
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: 127.0.0.1, port_value: 8080 }

send-request.sh

    #!/bin/bash
    declare -i colored=0
    declare -i colorless=0
    interval="0.1"
    while true; do
        # $1 is the host address of the front-envoy.
        if curl -s "http://$1/hostname" | grep -E "red|blue|green" &> /dev/null; then
            colored=$((colored+1))
        else
            colorless=$((colorless+1))
        fi
        echo $colored:$colorless
        sleep $interval
    done

docker-compose.yaml

    version: '3'
    services:
      front-envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        networks:
        - envoymesh
        expose:
        # Expose ports 80 (for general traffic) and 9901 (for the admin server)
        - "80"
        - "9901"
      webserver01-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: red
        networks:
          envoymesh:
            ipv4_address: 172.31.31.11
            aliases:
            - webservice1
            - red
      webserver01:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver01-sidecar"
        depends_on:
        - webserver01-sidecar
      webserver02-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: blue
        networks:
          envoymesh:
            ipv4_address: 172.31.31.12
            aliases:
            - webservice1
            - blue
      webserver02:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver02-sidecar"
        depends_on:
        - webserver02-sidecar
      webserver03-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: green
        networks:
          envoymesh:
            ipv4_address: 172.31.31.13
            aliases:
            - webservice1
            - green
      webserver03:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver03-sidecar"
        depends_on:
        - webserver03-sidecar
      webserver04-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: gray
        networks:
          envoymesh:
            ipv4_address: 172.31.31.14
            aliases:
            - webservice2
            - gray
      webserver04:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver04-sidecar"
        depends_on:
        - webserver04-sidecar
      webserver05-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: black
        networks:
          envoymesh:
            ipv4_address: 172.31.31.15
            aliases:
            - webservice2
            - black
      webserver05:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver05-sidecar"
        depends_on:
        - webserver05-sidecar
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.31.0/24

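Experiment verification

docker-compose up

Test from a cloned terminal window

    # Test with the send-request.sh script. Requests are spread across the localities, and within each locality they are scheduled by the cluster's load-balancing algorithm.
    # Note that the counts recorded below are close to 3:2, which matches the endpoint counts of the two localities (three versus two) rather than the configured locality weights of 10:20. Envoy applies locality weights only when locality_weighted_lb_config is set under the cluster's common_lb_config, which the front-envoy.yaml above does not do.
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/locality-weighted# ./send-request.sh 172.31.31.2
    ......
    283:189
    283:190
    283:191
    284:191
    285:191
    286:191
    286:192
    286:193
    287:193
    ......
    # You can also try marking one host in the higher-weight group as unhealthy and observe how the distribution changes.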
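To judge which ratio a recorded colored:colorless count follows, it helps to compute the ideal split of the same number of requests under each candidate ratio. This is plain arithmetic, not an Envoy API; the helper name split_by_ratio is hypothetical. The last sample above, 287:193, totals 480 requests.

```shell
#!/bin/bash
# Hypothetical helper: split TOTAL requests between two groups in ratio A:B.
#   split_by_ratio TOTAL A B  -> prints "first:second"
split_by_ratio() {
  local total=$1 a=$2 b=$3
  local first=$(( total * a / (a + b) ))
  echo "${first}:$(( total - first ))"
}

# Split by endpoint count (3 hosts behind webservice1, 2 behind webservice2)
split_by_ratio 480 3 2     # prints 288:192
# Split by the configured locality weights (10 versus 20)
split_by_ratio 480 10 20   # prints 160:320
```

Comparing both results with the recorded counts shows which of the two ratios the run actually tracked.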

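Experiment environment

envoy: Front Proxy, address 172.31.25.2
webserver01: the first backend service
webserver01-sidecar: Sidecar Proxy of the first backend service, address 172.31.25.11
webserver02: the second backend service
webserver02-sidecar: Sidecar Proxy of the second backend service, address 172.31.25.12
webserver03: the third backend service
webserver03-sidecar: Sidecar Proxy of the third backend service, address 172.31.25.13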

front-envoy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address: { address: 0.0.0.0, port_value: 9901 }
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: webservice
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route:
                      cluster: web_cluster_01
                      hash_policy:
                      # - connection_properties:
                      #     source_ip: true
                      - header:
                          header_name: User-Agent
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: web_cluster_01
        connect_timeout: 0.5s
        type: STRICT_DNS
        lb_policy: RING_HASH
        ring_hash_lb_config:
          maximum_ring_size: 1048576
          minimum_ring_size: 512
        load_assignment:
          cluster_name: web_cluster_01
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: myservice
                    port_value: 80
        health_checks:
        - timeout: 5s
          interval: 10s
          unhealthy_threshold: 2
          healthy_threshold: 2
          http_health_check:
            path: /livez
            expected_statuses:
              start: 200
              end: 399

envoy-sidecar-proxy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: local_cluster }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: local_cluster
        connect_timeout: 0.25s
        type: STATIC
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: local_cluster
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: 127.0.0.1, port_value: 8080 }

send-request.sh

    #!/bin/bash
    # Usage: ./send-request.sh <front-envoy address>
    declare -i red=0
    declare -i blue=0
    declare -i green=0
    interval="0.1"
    counts=200
    # Report the actual request count instead of a hard-coded number.
    echo "Send ${counts} requests, and print the result. This will take a while."
    for ((i=1; i<=counts; i++)); do
        # $1 is the host address of the front-envoy.
        # Send exactly one request per iteration and classify its response.
        host=$(curl -s "http://$1/hostname")
        if echo "$host" | grep -q "red"; then
            red=$((red+1))
        elif echo "$host" | grep -q "blue"; then
            blue=$((blue+1))
        else
            green=$((green+1))
        fi
        sleep $interval
    done
    echo ""
    echo "Response from:"
    echo "Red:Blue:Green = $red:$blue:$green"

docker-compose.yaml

    version: '3.3'
    services:
      envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        networks:
          envoymesh:
            ipv4_address: 172.31.25.2
            aliases:
            - front-proxy
        depends_on:
        - webserver01-sidecar
        - webserver02-sidecar
        - webserver03-sidecar
      webserver01-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: red
        networks:
          envoymesh:
            ipv4_address: 172.31.25.11
            aliases:
            - myservice
            - red
      webserver01:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver01-sidecar"
        depends_on:
        - webserver01-sidecar
      webserver02-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: blue
        networks:
          envoymesh:
            ipv4_address: 172.31.25.12
            aliases:
            - myservice
            - blue
      webserver02:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver02-sidecar"
        depends_on:
        - webserver02-sidecar
      webserver03-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: green
        networks:
          envoymesh:
            ipv4_address: 172.31.25.13
            aliases:
            - myservice
            - green
      webserver03:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver03-sidecar"
        depends_on:
        - webserver03-sidecar
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.25.0/24

Experiment verification

docker-compose up

Test from a cloned terminal window

    # The route's hash policy hashes the client's User-Agent header, so the requests sent by the loop below are always directed to the same backend endpoint, because their User-Agent never changes.
    while true; do curl 172.31.25.2; sleep .3; done
    # We can simulate a different browser and send requests again; they may be scheduled to another node or to the same node as before, depending on the result of the hash computation.
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/ring-hash# while true; do curl 172.31.25.2; sleep .3; done
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: red, ServerIP: 172.31.25.11!
    ......
    # The following loop can also be used to verify that requests from the same browser all reach the same backend endpoint, while different browsers may be scheduled elsewhere:
    root@test:~# while true; do index=$[$RANDOM%10]; curl -H "User-Agent: Browser_${index}" 172.31.25.2/user-agent && curl -H "User-Agent: Browser_${index}" 172.31.25.2/hostname && echo ; sleep .1; done
    User-Agent: Browser_0
    ServerName: green
    User-Agent: Browser_0
    ServerName: green
    User-Agent: Browser_2
    ServerName: red
    User-Agent: Browser_2
    ServerName: red
    User-Agent: Browser_5
    ServerName: blue
    User-Agent: Browser_9
    ServerName: red
    # You can also use the following command to make one backend endpoint fail its health check, which changes the endpoint set dynamically; then check the scheduling result again to verify that requests previously sent to that node are redistributed to other nodes:
    root@test:~# curl -X POST -d 'livez=FAIL' http://172.31.25.11/livez
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.25.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.25.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.25.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.25.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.25.12!
    iKubernetes demoapp v1.0 !! ClientIP: 127.0.0.1, ServerName: blue, ServerIP: 172.31.25.12!
    # 172.31.25.11 has failed, so its requests are now scheduled to 172.31.25.12
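The behaviour observed above (same User-Agent, same endpoint; a failed endpoint's keys move elsewhere while the other keys stay put) is the defining property of consistent hashing. The sketch below shows the idea with a tiny hash ring. It is an illustration only: POSIX cksum stands in for the real hash function, the 16 virtual nodes per host are an arbitrary choice, and the names hash_of and pick_host are hypothetical.

```shell
#!/bin/bash
# Hash a string to an unsigned integer with POSIX cksum (a stand-in hash).
hash_of() {
  printf '%s' "$1" | cksum | cut -d' ' -f1
}

# pick_host KEY HOST...  -> prints the host that owns KEY on the ring.
pick_host() {
  local key=$1; shift
  local replicas=16 host i key_hash
  key_hash=$(hash_of "$key")
  # Emit "point host" pairs for every virtual node, sort them by point,
  # then take the first point at or after the key's hash. If the key
  # hashes past the last point, wrap around to the smallest point.
  for host in "$@"; do
    for ((i = 0; i < replicas; i++)); do
      echo "$(hash_of "${host}-${i}") ${host}"
    done
  done | sort -n | awk -v k="$key_hash" '
    NR == 1 { first = $2 }
    $1 >= k && !found { print $2; found = 1 }
    END { if (!found) print first }'
}

# The same key always lands on the same host while the host set is stable.
pick_host "Browser_1" red blue green
pick_host "Browser_1" red blue green
```

Removing a host only remaps the keys that host owned; keys owned by the remaining hosts keep their assignment, which is why only the requests previously served by the failed endpoint move.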

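Experiment environment

envoy: Front Proxy, address 172.31.29.2
webserver01: the first backend service
webserver01-sidecar: Sidecar Proxy of the first backend service, address 172.31.29.11, aliases red and webservice1
webserver02: the second backend service
webserver02-sidecar: Sidecar Proxy of the second backend service, address 172.31.29.12, aliases blue and webservice1
webserver03: the third backend service
webserver03-sidecar: Sidecar Proxy of the third backend service, address 172.31.29.13, aliases green and webservice1
webserver04: the fourth backend service
webserver04-sidecar: Sidecar Proxy of the fourth backend service, address 172.31.29.14, aliases gray and webservice2
webserver05: the fifth backend service
webserver05-sidecar: Sidecar Proxy of the fifth backend service, address 172.31.29.15, aliases black and webservice2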

front-envoy.yaml

    admin:
      access_log_path: "/dev/null"
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - address:
          socket_address:
            address: 0.0.0.0
            port_value: 80
        name: listener_http
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              codec_type: auto
              stat_prefix: ingress_http
              route_config:
                name: local_route
                virtual_hosts:
                - name: backend
                  domains:
                  - "*"
                  routes:
                  - match:
                      prefix: "/"
                    route:
                      cluster: webcluster1
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: webcluster1
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        http2_protocol_options: {}
        load_assignment:
          cluster_name: webcluster1
          policy:
            overprovisioning_factor: 140
          endpoints:
          - locality:
              region: cn-north-1
            priority: 0
            lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: webservice1
                    port_value: 80
          - locality:
              region: cn-north-2
            priority: 1
            lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: webservice2
                    port_value: 80
        health_checks:
        - timeout: 5s
          interval: 10s
          unhealthy_threshold: 2
          healthy_threshold: 1
          http_health_check:
            path: /livez
            expected_statuses:
              start: 200
              end: 399

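front-envoy-v2.yaml (legacy v2 API variant, kept for reference; the envoy v1.20.0 image used here no longer accepts v2 configuration)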

    admin:
      access_log_path: "/dev/null"
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - address:
          socket_address:
            address: 0.0.0.0
            port_value: 80
        name: listener_http
        filter_chains:
        - filters:
          - name: envoy.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.config.filter.network.http_connection_manager.v2.HttpConnectionManager
              codec_type: auto
              stat_prefix: ingress_http
              route_config:
                name: local_route
                virtual_hosts:
                - name: backend
                  domains:
                  - "*"
                  routes:
                  - match:
                      prefix: "/"
                    route:
                      cluster: webcluster1
              http_filters:
              - name: envoy.router
      clusters:
      - name: webcluster1
        connect_timeout: 0.5s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        http2_protocol_options: {}
        load_assignment:
          cluster_name: webcluster1
          policy:
            overprovisioning_factor: 140
          endpoints:
          - locality:
              region: cn-north-1
            priority: 0
            lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: webservice1
                    port_value: 80
          - locality:
              region: cn-north-2
            priority: 1
            lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: webservice2
                    port_value: 80
        health_checks:
        - timeout: 5s
          interval: 10s
          unhealthy_threshold: 2
          healthy_threshold: 1
          http_health_check:
            path: /livez

envoy-sidecar-proxy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: local_cluster }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: local_cluster
        connect_timeout: 0.25s
        type: STATIC
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: local_cluster
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: 127.0.0.1, port_value: 8080 }

docker-compose.yaml

    version: '3'
    services:
      front-envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        # Mount the v3 configuration; envoy v1.20.0 cannot load the v2 file.
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        networks:
          envoymesh:
            ipv4_address: 172.31.29.2
            aliases:
            - front-proxy
        expose:
        # Expose ports 80 (for general traffic) and 9901 (for the admin server)
        - "80"
        - "9901"
      webserver01-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: red
        networks:
          envoymesh:
            ipv4_address: 172.31.29.11
            aliases:
            - webservice1
            - red
      webserver01:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver01-sidecar"
        depends_on:
        - webserver01-sidecar
      webserver02-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: blue
        networks:
          envoymesh:
            ipv4_address: 172.31.29.12
            aliases:
            - webservice1
            - blue
      webserver02:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver02-sidecar"
        depends_on:
        - webserver02-sidecar
      webserver03-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: green
        networks:
          envoymesh:
            ipv4_address: 172.31.29.13
            aliases:
            - webservice1
            - green
      webserver03:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver03-sidecar"
        depends_on:
        - webserver03-sidecar
      webserver04-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: gray
        networks:
          envoymesh:
            ipv4_address: 172.31.29.14
            aliases:
            - webservice2
            - gray
      webserver04:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver04-sidecar"
        depends_on:
        - webserver04-sidecar
      webserver05-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: black
        networks:
          envoymesh:
            ipv4_address: 172.31.29.15
            aliases:
            - webservice2
            - black
      webserver05:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver05-sidecar"
        depends_on:
        - webserver05-sidecar
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.29.0/24

Experiment verification

docker-compose up

Test from a cloned terminal window

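    # Send requests continuously. All of them are scheduled to the backend endpoints of webservice1, which have priority 0.
    while true; do curl 172.31.29.2; sleep .5; done
    # Once the scheduling pattern is clear, open another terminal and change the /livez response of any webservice1 endpoint to a value other than "OK", for example on the first endpoint (red).
    curl -X POST -d 'livez=FAIL' http://172.31.29.11/livez
    # The responses then show that, with an overprovisioning factor of 1.4, requests are still sent almost exclusively to the remaining webservice1 endpoints, blue and green.
    # Assuming all three webservice1 endpoints sit in priority 0, two healthy endpoints out of three give 66.7% x 1.4 = about 93%, so an occasional response from webservice2 is possible.
    # Once the scheduling pattern is clear again, change the /livez response of another webservice1 endpoint to a value other than "OK", for example on the second endpoint (blue).
    curl -X POST -d 'livez=FAIL' http://172.31.29.12/livez
    # While the requests are running, a failed endpoint keeps answering with 5xx status codes, so it is ejected again every time it is added back, unless its response is restored with a command such as the following.
    curl -X POST -d 'livez=OK' http://172.31.29.11/livez
    # The responses then show that, with an overprovisioning factor of 1.4, the priority-0 webservice1 can no longer hold all client requests, so part of the traffic is forwarded to the webservice2 endpoints.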
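The effect of the overprovisioning factor in the steps above can be worked out numerically. The following is a minimal sketch, assuming Envoy's documented priority-level formula (level health = healthy share × factor, capped at 100%, with load handed to lower priorities only for the remainder) and assuming webservice1 contributes three endpoints to priority 0 while priority 1 stays fully healthy. The function name `priority_loads` is made up for illustration, and Envoy itself works with truncated integer percentages rather than floats.

```python
def priority_loads(healthy_fractions, overprovisioning_factor=1.4):
    """Return the share of traffic (in percent) per priority level.

    healthy_fractions: fraction of healthy endpoints per level, priority 0 first.
    """
    # Health of each level: healthy share x factor, capped at 100%.
    health = [min(100.0, 100.0 * f * overprovisioning_factor)
              for f in healthy_fractions]
    total = min(100.0, sum(health))
    if total == 0:
        return [0.0] * len(health)
    loads, remaining = [], 100.0
    for h in health:
        # Each level takes what its health allows, limited by what is left.
        load = min(remaining, h * 100.0 / total)
        loads.append(load)
        remaining -= load
    return loads


# Priority 0 = webservice1 (3 endpoints), priority 1 = webservice2 (all healthy).
for healthy in (3, 2, 1):
    p0, p1 = priority_loads([healthy / 3, 1.0])
    print(f"{healthy}/3 healthy in priority 0 -> P0 {p0:.1f}%, P1 {p1:.1f}%")
```

Under these assumptions priority 0 keeps all traffic while fully healthy, roughly 93% with one endpoint down, and only about 47% with two endpoints down, which is the point where a visible share of requests reaches webservice2.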

Experiment environment

envoy: Front Proxy, address 172.31.33.2
[e1, e7]: seven backend services

front-envoy.yaml

    admin:
      access_log_path: "/dev/null"
      address:
        socket_address: { address: 0.0.0.0, port_value: 9901 }
    static_resources:
      listeners:
      - address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        name: listener_http
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              codec_type: auto
              stat_prefix: ingress_http
              route_config:
                name: local_route
                virtual_hosts:
                - name: backend
                  domains:
                  - "*"
                  routes:
                  - match:
                      prefix: "/"
                      headers:
                      - name: x-custom-version
                        exact_match: pre-release
                    route:
                      cluster: webcluster1
                      metadata_match:
                        filter_metadata:
                          envoy.lb:
                            version: "1.2-pre"
                            stage: "dev"
                  - match:
                      prefix: "/"
                      headers:
                      - name: x-hardware-test
                        exact_match: memory
                    route:
                      cluster: webcluster1
                      metadata_match:
                        filter_metadata:
                          envoy.lb:
                            type: "bigmem"
                            stage: "prod"
                  - match:
                      prefix: "/"
                    route:
                      weighted_clusters:
                        clusters:
                        - name: webcluster1
                          weight: 90
                          metadata_match:
                            filter_metadata:
                              envoy.lb:
                                version: "1.0"
                        - name: webcluster1
                          weight: 10
                          metadata_match:
                            filter_metadata:
                              envoy.lb:
                                version: "1.1"
                      metadata_match:
                        filter_metadata:
                          envoy.lb:
                            stage: "prod"
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: webcluster1
        connect_timeout: 0.5s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: webcluster1
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: e1
                    port_value: 80
              metadata:
                filter_metadata:
                  envoy.lb:
                    stage: "prod"
                    version: "1.0"
                    type: "std"
                    xlarge: true
            - endpoint:
                address:
                  socket_address:
                    address: e2
                    port_value: 80
              metadata:
                filter_metadata:
                  envoy.lb:
                    stage: "prod"
                    version: "1.0"
                    type: "std"
            - endpoint:
                address:
                  socket_address:
                    address: e3
                    port_value: 80
              metadata:
                filter_metadata:
                  envoy.lb:
                    stage: "prod"
                    version: "1.1"
                    type: "std"
            - endpoint:
                address:
                  socket_address:
                    address: e4
                    port_value: 80
              metadata:
                filter_metadata:
                  envoy.lb:
                    stage: "prod"
                    version: "1.1"
                    type: "std"
            - endpoint:
                address:
                  socket_address:
                    address: e5
                    port_value: 80
              metadata:
                filter_metadata:
                  envoy.lb:
                    stage: "prod"
                    version: "1.0"
                    type: "bigmem"
            - endpoint:
                address:
                  socket_address:
                    address: e6
                    port_value: 80
              metadata:
                filter_metadata:
                  envoy.lb:
                    stage: "prod"
                    version: "1.1"
                    type: "bigmem"
            - endpoint:
                address:
                  socket_address:
                    address: e7
                    port_value: 80
              metadata:
                filter_metadata:
                  envoy.lb:
                    stage: "dev"
                    version: "1.2-pre"
                    type: "std"
        lb_subset_config:
          fallback_policy: DEFAULT_SUBSET
          default_subset:
            stage: "prod"
            version: "1.0"
            type: "std"
          subset_selectors:
          - keys: ["stage", "type"]
          - keys: ["stage", "version"]
          - keys: ["version"]
          - keys: ["xlarge", "version"]
        health_checks:
        - timeout: 5s
          interval: 10s
          unhealthy_threshold: 2
          healthy_threshold: 1
          http_health_check:
            path: /livez
            expected_statuses:
              start: 200
              end: 399
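To see which endpoints each route in this configuration can reach, the subset lookup can be modelled in a few lines. This is a simplified sketch, not Envoy's implementation: it assumes a subset is found only when the keys of the route's `metadata_match` equal the keys of one `subset_selectors` entry, and that the `DEFAULT_SUBSET` fallback is used otherwise. The names `ENDPOINTS`, `matching` and `select_hosts` are hypothetical; the metadata values are copied from the configuration above.

```python
# Endpoint metadata under the envoy.lb namespace, copied from front-envoy.yaml.
ENDPOINTS = {
    "e1": {"stage": "prod", "version": "1.0", "type": "std", "xlarge": True},
    "e2": {"stage": "prod", "version": "1.0", "type": "std"},
    "e3": {"stage": "prod", "version": "1.1", "type": "std"},
    "e4": {"stage": "prod", "version": "1.1", "type": "std"},
    "e5": {"stage": "prod", "version": "1.0", "type": "bigmem"},
    "e6": {"stage": "prod", "version": "1.1", "type": "bigmem"},
    "e7": {"stage": "dev", "version": "1.2-pre", "type": "std"},
}
SUBSET_SELECTORS = [
    {"stage", "type"},
    {"stage", "version"},
    {"version"},
    {"xlarge", "version"},
]
DEFAULT_SUBSET = {"stage": "prod", "version": "1.0", "type": "std"}


def matching(criteria):
    """Endpoints whose metadata contains every key/value pair in criteria."""
    return sorted(
        name for name, md in ENDPOINTS.items()
        if all(md.get(k) == v for k, v in criteria.items())
    )


def select_hosts(metadata_match):
    """Simplified lookup: exact selector key match, else the default subset."""
    if set(metadata_match) in SUBSET_SELECTORS:
        hosts = matching(metadata_match)
        if hosts:
            return hosts
    return matching(DEFAULT_SUBSET)


print(select_hosts({"stage": "dev", "version": "1.2-pre"}))   # x-custom-version route
print(select_hosts({"stage": "prod", "type": "bigmem"}))      # x-hardware-test route
print(select_hosts({"stage": "prod", "version": "1.0"}))      # weight 90 branch
print(select_hosts({"stage": "prod", "version": "1.1"}))      # weight 10 branch
```

The last two calls combine the route-level `stage: "prod"` with the per-cluster `version`, since the weighted cluster's `metadata_match` is merged with the one on the route.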

test.sh

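    #!/bin/bash
    # Usage: ./test.sh <front-envoy address>
    # Counts how many of 200 requests are served by v1.0 endpoints (e1, e2, e5) versus the rest.
    declare -i v10=0
    declare -i v11=0
    for ((counts=0; counts<200; counts++)); do
        # $1 is the host address of the front-envoy.
        if curl -s "http://$1/hostname" | grep -E "e[125]" &> /dev/null; then
            v10=$((v10 + 1))
        else
            v11=$((v11 + 1))
        fi
    done
    echo "Requests: v1.0:v1.1 = $v10:$v11"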

docker-compose.yaml

    version: '3'
    services:
      front-envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        networks:
          envoymesh:
            ipv4_address: 172.31.33.2
        expose:
        # Expose ports 80 (for general traffic) and 9901 (for the admin server)
        - "80"
        - "9901"
      e1:
        image: ikubernetes/demoapp:v1.0
        hostname: e1
        networks:
          envoymesh:
            ipv4_address: 172.31.33.11
            aliases:
            - e1
        expose:
        - "80"
      e2:
        image: ikubernetes/demoapp:v1.0
        hostname: e2
        networks:
          envoymesh:
            ipv4_address: 172.31.33.12
            aliases:
            - e2
        expose:
        - "80"
      e3:
        image: ikubernetes/demoapp:v1.0
        hostname: e3
        networks:
          envoymesh:
            ipv4_address: 172.31.33.13
            aliases:
            - e3
        expose:
        - "80"
      e4:
        image: ikubernetes/demoapp:v1.0
        hostname: e4
        networks:
          envoymesh:
            ipv4_address: 172.31.33.14
            aliases:
            - e4
        expose:
        - "80"
      e5:
        image: ikubernetes/demoapp:v1.0
        hostname: e5
        networks:
          envoymesh:
            ipv4_address: 172.31.33.15
            aliases:
            - e5
        expose:
        - "80"
      e6:
        image: ikubernetes/demoapp:v1.0
        hostname: e6
        networks:
          envoymesh:
            ipv4_address: 172.31.33.16
            aliases:
            - e6
        expose:
        - "80"
      e7:
        image: ikubernetes/demoapp:v1.0
        hostname: e7
        networks:
          envoymesh:
            ipv4_address: 172.31.33.17
            aliases:
            - e7
        expose:
        - "80"
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.33.0/24

Experiment verification

docker-compose up

Test from a cloned terminal window

    # The test.sh script takes the address of front-envoy, sends a series of requests to it, and then prints how the traffic was distributed.
    # According to the routing rules, requests that do not carry an x-hardware-test or x-custom-version header with the matching value fall through to the last route, which is restricted to stage "prod" and splits traffic 90:10 between the version 1.0 and version 1.1 groups.
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# ./test.sh 172.31.33.2
    Requests: v1.0:v1.1 = 183:17
    # A specific header selects a specific subset. For example, requests carrying "x-hardware-test: memory" are sent to the subset whose type label is bigmem and whose stage label is prod. This subset has two endpoints, e5 and e6.
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# curl -H "x-hardware-test: memory" 172.31.33.2/hostname
    ServerName: e6
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# curl -H "x-hardware-test: memory" 172.31.33.2/hostname
    ServerName: e5
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# curl -H "x-hardware-test: memory" 172.31.33.2/hostname
    ServerName: e6
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# curl -H "x-hardware-test: memory" 172.31.33.2/hostname
    ServerName: e5
    # Likewise, requests carrying "x-custom-version: pre-release" are sent to the subset whose version label is 1.2-pre and whose stage label is dev. This subset has a single endpoint, e7.
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# curl -H "x-custom-version: pre-release" 172.31.33.2/hostname
    ServerName: e7
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# curl -H "x-custom-version: pre-release" 172.31.33.2/hostname
    ServerName: e7
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# curl -H "x-custom-version: pre-release" 172.31.33.2/hostname
    ServerName: e7
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/lb-subsets# curl -H "x-custom-version: pre-release" 172.31.33.2/hostname
    ServerName: e7
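The 183:17 result is not exactly 90:10, which is expected for a sample of 200 requests. A quick check, assuming each request independently picks a weighted cluster with probability proportional to its weight (a simplifying assumption about the selection, not a statement about Envoy internals), shows how much deviation is ordinary. The function name `expected_split` is made up for this sketch.

```python
import math


def expected_split(total_requests, weight_a, weight_b):
    """Mean and standard deviation of the number of requests sent to cluster A."""
    p = weight_a / (weight_a + weight_b)
    mean = total_requests * p
    std = math.sqrt(total_requests * p * (1 - p))  # binomial standard deviation
    return mean, std


mean, std = expected_split(200, 90, 10)
observed = 183  # v1.0 count reported by test.sh above
z = (observed - mean) / std
print(f"expected v1.0 requests: {mean:.0f} +/- {std:.2f}")
print(f"observed {observed} is {z:.2f} standard deviations from the mean")
```

With an expected value of 180 and a standard deviation of a little over 4, an observed 183 lies well within one standard deviation, so the measured split is consistent with the configured weights.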

9、circuit-breaker

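envoy: Front Proxy, address 172.31.35.2
webserver01: the first backend service
webserver01-sidecar: Sidecar Proxy of the first backend service, address 172.31.35.11, aliases red and webservice1
webserver02: the second backend service
webserver02-sidecar: Sidecar Proxy of the second backend service, address 172.31.35.12, aliases blue and webservice1
webserver03: the third backend service
webserver03-sidecar: Sidecar Proxy of the third backend service, address 172.31.35.13, aliases green and webservice1
webserver04: the fourth backend service
webserver04-sidecar: Sidecar Proxy of the fourth backend service, address 172.31.35.14, aliases gray and webservice2
webserver05: the fifth backend service
webserver05-sidecar: Sidecar Proxy of the fifth backend service, address 172.31.35.15, aliases black and webservice2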

front-envoy.yaml

    admin:
      access_log_path: "/dev/null"
      address:
        socket_address: { address: 0.0.0.0, port_value: 9901 }
    static_resources:
      listeners:
      - address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        name: listener_http
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              codec_type: auto
              stat_prefix: ingress_http
              route_config:
                name: local_route
                virtual_hosts:
                - name: backend
                  domains:
                  - "*"
                  routes:
                  - match:
                      prefix: "/livez"
                    route:
                      cluster: webcluster2
                  - match:
                      prefix: "/"
                    route:
                      cluster: webcluster1
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: webcluster1
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: webcluster1
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: webservice1
                    port_value: 80
        circuit_breakers:
          thresholds:
            max_connections: 1
            max_pending_requests: 1
            max_retries: 3
      - name: webcluster2
        connect_timeout: 0.25s
        type: STRICT_DNS
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: webcluster2
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: webservice2
                    port_value: 80
        outlier_detection:
          interval: "1s"
          consecutive_5xx: "3"
          consecutive_gateway_failure: "3"
          base_ejection_time: "10s"
          enforcing_consecutive_gateway_failure: "100"
          max_ejection_percent: "30"
          success_rate_minimum_hosts: "2"
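The `circuit_breakers` thresholds on webcluster1 are deliberately tiny so that a burst of parallel requests trips them. The sketch below is a deliberately simplified mental model, not Envoy's connection-pool logic: it assumes HTTP/1.1 upstream connections that carry one request at a time, and a burst in which every request arrives before any of them completes. The names `Thresholds` and `admit` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Thresholds:
    # Values taken from the webcluster1 circuit_breakers block above.
    max_connections: int = 1
    max_pending_requests: int = 1


def admit(concurrent_requests, thresholds=None):
    """Classify a burst of simultaneous requests under the toy model."""
    t = thresholds or Thresholds()
    # One request per connection can be in flight.
    active = min(concurrent_requests, t.max_connections)
    # Requests without a connection wait in the pending queue, up to its limit.
    pending = min(concurrent_requests - active, t.max_pending_requests)
    # Everything beyond both limits overflows and is answered with 503.
    rejected = concurrent_requests - active - pending
    return {"active": active, "pending": pending, "rejected_503": rejected}


for burst in (1, 2, 5):
    print(burst, admit(burst))
```

In this model a burst of five requests leaves one in flight, one queued and three rejected. In a real run far fewer requests are rejected, because requests complete quickly and free the connection, and the exact accounting inside Envoy differs from this toy model.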

envoy-sidecar-proxy.yaml

    admin:
      profile_path: /tmp/envoy.prof
      access_log_path: /tmp/admin_access.log
      address:
        socket_address:
          address: 0.0.0.0
          port_value: 9901
    static_resources:
      listeners:
      - name: listener_0
        address:
          socket_address: { address: 0.0.0.0, port_value: 80 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              codec_type: AUTO
              route_config:
                name: local_route
                virtual_hosts:
                - name: local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route: { cluster: local_cluster }
              http_filters:
              - name: envoy.filters.http.router
      clusters:
      - name: local_cluster
        connect_timeout: 0.25s
        type: STATIC
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: local_cluster
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address: { address: 127.0.0.1, port_value: 8080 }
        circuit_breakers:
          thresholds:
            max_connections: 1
            max_pending_requests: 1
            max_retries: 2

send-requests.sh

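    #!/bin/bash
    #
    # Sends COUNT requests to URL in parallel and prints one HTTP status code per request.
    if [ $# -ne 2 ]
    then
        echo "USAGE: $0 <URL> <COUNT>"
        exit 1;
    fi
    URL=$1
    COUNT=$2
    c=1
    #interval="0.2"
    while [[ ${c} -le ${COUNT} ]];
    do
        #echo "Sending GET request: ${URL}"
        # Run curl in the background so the requests overlap.
        curl -o /dev/null -w '%{http_code}\n' -s "${URL}" &
        (( c++ ))
        # sleep $interval
    done
    # Wait for all background requests to finish.
    wait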

docker-compose.yaml

    version: '3'
    services:
      front-envoy:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./front-envoy.yaml:/etc/envoy/envoy.yaml
        networks:
        - envoymesh
        expose:
        # Expose ports 80 (for general traffic) and 9901 (for the admin server)
        - "80"
        - "9901"
      webserver01-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: red
        networks:
          envoymesh:
            ipv4_address: 172.31.35.11
            aliases:
            - webservice1
            - red
      webserver01:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver01-sidecar"
        depends_on:
        - webserver01-sidecar
      webserver02-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: blue
        networks:
          envoymesh:
            ipv4_address: 172.31.35.12
            aliases:
            - webservice1
            - blue
      webserver02:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver02-sidecar"
        depends_on:
        - webserver02-sidecar
      webserver03-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: green
        networks:
          envoymesh:
            ipv4_address: 172.31.35.13
            aliases:
            - webservice1
            - green
      webserver03:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver03-sidecar"
        depends_on:
        - webserver03-sidecar
      webserver04-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: gray
        networks:
          envoymesh:
            ipv4_address: 172.31.35.14
            aliases:
            - webservice2
            - gray
      webserver04:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver04-sidecar"
        depends_on:
        - webserver04-sidecar
      webserver05-sidecar:
        image: envoyproxy/envoy-alpine:v1.20.0
        environment:
        - ENVOY_UID=0
        volumes:
        - ./envoy-sidecar-proxy.yaml:/etc/envoy/envoy.yaml
        hostname: black
        networks:
          envoymesh:
            ipv4_address: 172.31.35.15
            aliases:
            - webservice2
            - black
      webserver05:
        image: ikubernetes/demoapp:v1.0
        environment:
        - PORT=8080
        - HOST=127.0.0.1
        network_mode: "service:webserver05-sidecar"
        depends_on:
        - webserver05-sidecar
    networks:
      envoymesh:
        driver: bridge
        ipam:
          config:
          - subnet: 172.31.35.0/24

Experiment verification

docker-compose up

Test from a cloned terminal window

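    # Use the send-requests.sh script to send requests to webcluster1. Some of the responses carry a 5xx status code; these are the requests rejected by the circuit breaker.
    root@test:/apps/servicemesh_in_practise-develop/Cluster-Manager/circuit-breaker# ./send-requests.sh http://172.31.35.2/ 300
    200
    200
    200
    503    # circuit breaker tripped
    200
    200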
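With 300 status codes scrolling past, it is easier to judge how often the circuit breaker tripped from a tally than by eye. The following is a small sketch that counts the status codes printed by send-requests.sh; the function name `tally` is hypothetical, and the sample input is just the six lines visible in the transcript above, not a full run.

```python
from collections import Counter


def tally(output):
    """Count status codes in the script output, one code per line."""
    # Drop any trailing annotation after the code and skip empty lines.
    codes = [line.split()[0] for line in output.splitlines() if line.strip()]
    counts = Counter(codes)
    rejected = sum(n for code, n in counts.items() if code.startswith("5"))
    return counts, rejected, len(codes)


sample = "200\n200\n200\n503\n200\n200\n"
counts, rejected, total = tally(sample)
print(dict(counts))
print(f"{rejected} of {total} requests returned 5xx")
```

For a real run, the same function could be fed the captured output of the script, for example by redirecting it to a file first and reading that file in Python.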