8. Docker cross-host network communication: flanneld

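Flannel is a network planning service designed by the CoreOS team for Kubernetes. Put simply, it gives the Docker containers created on different node hosts in a cluster virtual IP addresses that are unique across the whole cluster, and it lets those containers find each other by IP address, that is, ping each other. In the default Docker configuration, however, the Docker daemon on each node allocates IP addresses for the containers on that node independently. One consequence is that containers on different nodes may end up with the same internal IP address. Flannel is designed to re-plan how IP addresses are used across all nodes in the cluster, so that containers on different nodes get addresses that "belong to the same internal network" and "do not repeat", and so that containers on different nodes can communicate directly over those internal IPs.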

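Flannel is essentially an "overlay network", meaning a network that runs on top of another network (an application-layer network). It does not rely on the underlying IP addresses alone to deliver messages. Instead it uses a mapping mechanism that maps IP addresses to identifiers in order to locate resources. In other words, it wraps the original packet (TCP data, for instance) inside another kind of network packet for routing, forwarding, and communication. It currently supports UDP, VXLAN, AWS VPC, and GCE routing as data forwarding methods.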

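Flannel uses etcd to store its configuration data and subnet allocation information. After flannel starts, the daemon first retrieves the configuration and the list of subnets already in use, then picks an available subnet and tries to register it. etcd also stores the IP address of each host. Flannel uses etcd's watch mechanism to monitor changes to every entry under /coreos.com/network/subnets (the default prefix; this walkthrough configures /atomic.io/network instead) and maintains a routing table based on those changes. To improve performance, flannel optimizes the Universal TAP/TUN device and proxies the IP fragmentation between TUN and UDP.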
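The watch-and-update loop described above can be sketched in a few lines of Python. This is only an illustration, not flannel's code: the key layout (`<prefix>/subnets/<ip>-<prefixlen>`), the `PublicIP` field, and the event names `set`, `delete`, and `expire` are assumptions made for the example, and the events are fed in by hand instead of coming from a real etcd watch.

```python
import json


def subnet_from_key(key: str) -> str:
    """Turn '/coreos.com/network/subnets/10.1.15.0-24' into '10.1.15.0/24'."""
    return key.rsplit("/", 1)[-1].replace("-", "/")


def apply_event(routes: dict, action: str, key: str, value: str = "") -> dict:
    """Update the subnet -> host IP table for a single (hypothetical) watch event."""
    subnet = subnet_from_key(key)
    if action == "set":
        # A host registered or renewed its subnet lease.
        routes[subnet] = json.loads(value)["PublicIP"]
    elif action in ("delete", "expire"):
        # The lease went away, so the route must be removed.
        routes.pop(subnet, None)
    return routes


# Assumed key prefix and sample events, for illustration only.
PREFIX = "/coreos.com/network/subnets/"
routes = {}
apply_event(routes, "set", PREFIX + "10.1.15.0-24", '{"PublicIP": "192.168.32.211"}')
apply_event(routes, "set", PREFIX + "10.1.20.0-24", '{"PublicIP": "192.168.32.212"}')
apply_event(routes, "expire", PREFIX + "10.1.20.0-24")
print(routes)
```

After the three events only the first subnet remains in the table, which is the behaviour a route table driven by watch events needs.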

2. How flannel works

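The overlay is configured with an IP range and the size of the subnet each host receives. For example, the overlay network can use the 10.1.0.0/16 range with each host receiving a /24 subnet. Host A could then receive packets for 10.1.15.0/24 and host B packets for 10.1.20.0/24. Flannel uses etcd to maintain the mapping between the allocated subnets and the hosts' real IP addresses. For the data path, flannel uses UDP to encapsulate IP datagrams and forward them to the remote host. UDP was chosen as the transport protocol because it passes through firewalls easily. For example, AWS Classic cannot forward IPoIP or GRE packets because its security groups only support TCP/UDP/ICMP.

The flannel workflow is shown in the diagram below (the default inter-node transport is UDP forwarding; flannel uses port 8285 for UDP-encapsulated packets by default, and VXLAN uses port 8472).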
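The arithmetic behind "a /16 overlay with one /24 per host" can be checked with Python's standard `ipaddress` module. The helper name `per_host_subnets` is made up for this sketch, and flannel's real allocator may skip or reserve some of these candidates.

```python
import ipaddress


def per_host_subnets(overlay: str, host_prefix: int) -> list:
    """List every candidate per-host subnet inside the overlay range."""
    return list(ipaddress.ip_network(overlay).subnets(new_prefix=host_prefix))


subnets = per_host_subnets("10.1.0.0/16", 24)
host_a = ipaddress.ip_network("10.1.15.0/24")
host_b = ipaddress.ip_network("10.1.20.0/24")

print(len(subnets))                          # number of /24 subnets in the /16
print(host_a in subnets, host_b in subnets)  # both examples come from the overlay
print(host_a.overlaps(host_b))               # the two hosts never share an address
print(host_a.num_addresses)                  # addresses in one host subnet
```

A /16 splits into 256 subnets of size /24, so this layout supports at most 256 hosts with 256 addresses each.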

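That completes the delivery of the whole packet. Three questions need explaining here:

1) What is UDP encapsulation? The payload of the UDP datagram is actually another packet, in this case an ICMP packet (the kind the ping command sends). The original data is wrapped in UDP by the Flannel service on the source node. Once it is delivered to the destination node, the Flannel service on the other end restores it to the original packet. The Docker daemons on both sides never notice that this happened.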

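2) Why does Docker on each node use a different IP range? This looks mysterious, but the truth is simple. After Flannel allocates each node's usable IP range through etcd, it quietly changes Docker's startup parameters. On a node running the Flannel service you can inspect the parameters of the Docker daemon process (ps aux|grep docker|grep "bip"). A parameter such as "--bip=10.1.15.1/24" limits the IP range that containers on that node can obtain. Flannel allocates this range automatically, and the records it keeps in etcd ensure that ranges never repeat.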

3) Why is data routed from docker0 to the flannel0 virtual interface on the sending node, and from flannel0 to the docker0 virtual interface on the destination node?

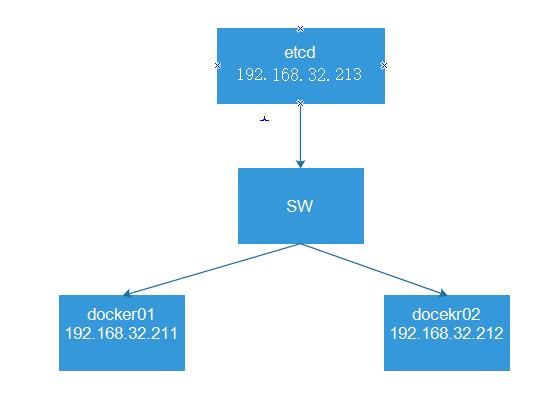
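The UDP encapsulation from question 1 can be made concrete with a small Python sketch. It builds the 8-byte UDP header by hand and carries the inner packet as the payload. This is a simplified illustration: a real flanneld sends the payload through a socket and lets the kernel build the headers, the source port 40000 and the inner bytes are arbitrary, and the checksum is left at 0 (which IPv4 UDP allows).

```python
import struct

FLANNEL_UDP_PORT = 8285  # default UDP backend port mentioned in the text
UDP_HEADER_LEN = 8


def encapsulate(inner_packet: bytes, src_port: int,
                dst_port: int = FLANNEL_UDP_PORT) -> bytes:
    """Wrap an inner packet in a UDP header (checksum 0 means 'not computed')."""
    length = UDP_HEADER_LEN + len(inner_packet)
    header = struct.pack("!HHHH", src_port, dst_port, length, 0)
    return header + inner_packet


def decapsulate(datagram: bytes) -> bytes:
    """Strip the UDP header and return the original inner packet."""
    _src, _dst, length, _checksum = struct.unpack("!HHHH", datagram[:UDP_HEADER_LEN])
    return datagram[UDP_HEADER_LEN:length]


# Stand-in bytes for the inner ICMP packet; the content is arbitrary.
inner = b"inner ICMP echo request from 10.1.15.2 to 10.1.20.3"
datagram = encapsulate(inner, src_port=40000)
print(len(datagram) - len(inner))      # bytes added by the UDP header
print(decapsulate(datagram) == inner)  # the receiver recovers the original packet
```

The receiving side gets back exactly the bytes that were sent, which is why the containers at both ends do not notice the tunnel.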

1. Network topology

System OS: CentOS Linux release 7.6.1810 (Core)
Docker version: Docker version 20.10.1, build 831ebea

    node1    192.168.32.211    docker01    docker+flanneld
    node2    192.168.32.212    docker02    docker+flanneld
    node3    192.168.32.213    etcd01      etcd

node1 and node2

Enable IPv4 forwarding in the kernel:

    echo "net.ipv4.ip_forward = 1" >> /etc/sysctl.conf
    sysctl -p

Clear the default iptables rules and allow forwarding:

    iptables -P INPUT ACCEPT
    iptables -P FORWARD ACCEPT
    iptables -F
    iptables -L -n

Disable SELinux and the firewall.

4. Deploy etcd

yum install -y etcd

node3

    #[Member]
    #ETCD_CORS=""
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    #ETCD_WAL_DIR=""
    #ETCD_LISTEN_PEER_URLS="http://localhost:2380"
    ETCD_LISTEN_CLIENT_URLS="http://192.168.32.213:2379"
    #ETCD_MAX_SNAPSHOTS="5"
    #ETCD_MAX_WALS="5"
    ETCD_NAME="default"
    #ETCD_SNAPSHOT_COUNT="100000"
    #ETCD_HEARTBEAT_INTERVAL="100"
    #ETCD_ELECTION_TIMEOUT="1000"
    #ETCD_QUOTA_BACKEND_BYTES="0"
    #ETCD_MAX_REQUEST_BYTES="1572864"
    #ETCD_GRPC_KEEPALIVE_MIN_TIME="5s"
    #ETCD_GRPC_KEEPALIVE_INTERVAL="2h0m0s"
    #ETCD_GRPC_KEEPALIVE_TIMEOUT="20s"
    #
    #[Clustering]
    #ETCD_INITIAL_ADVERTISE_PEER_URLS="http://localhost:2380"
    ETCD_ADVERTISE_CLIENT_URLS="http://192.168.32.213:2379"
    #ETCD_DISCOVERY=""
    #ETCD_DISCOVERY_FALLBACK="proxy"
    #ETCD_DISCOVERY_PROXY=""
    #ETCD_DISCOVERY_SRV=""
    #ETCD_INITIAL_CLUSTER="default=http://localhost:2380"
    #ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    #ETCD_INITIAL_CLUSTER_STATE="new"
    #ETCD_STRICT_RECONFIG_CHECK="true"
    #ETCD_ENABLE_V2="true"
    #
    #[Proxy]
    #ETCD_PROXY="off"
    #ETCD_PROXY_FAILURE_WAIT="5000"
    #ETCD_PROXY_REFRESH_INTERVAL="30000"
    #ETCD_PROXY_DIAL_TIMEOUT="1000"
    #ETCD_PROXY_WRITE_TIMEOUT="5000"
    #ETCD_PROXY_READ_TIMEOUT="0"
    #
    #[Security]
    #ETCD_CERT_FILE=""
    #ETCD_KEY_FILE=""
    #ETCD_CLIENT_CERT_AUTH="false"
    #ETCD_TRUSTED_CA_FILE=""
    #ETCD_AUTO_TLS="false"
    #ETCD_PEER_CERT_FILE=""
    #ETCD_PEER_KEY_FILE=""
    #ETCD_PEER_CLIENT_CERT_AUTH="false"
    #ETCD_PEER_TRUSTED_CA_FILE=""
    #ETCD_PEER_AUTO_TLS="false"
    #
    #[Logging]
    #ETCD_DEBUG="false"
    #ETCD_LOG_PACKAGE_LEVELS=""
    #ETCD_LOG_OUTPUT="default"
    #
    #[Unsafe]
    #ETCD_FORCE_NEW_CLUSTER="false"
    #
    #[Version]
    #ETCD_VERSION="false"
    #ETCD_AUTO_COMPACTION_RETENTION="0"
    #
    #[Profiling]
    #ETCD_ENABLE_PPROF="false"
    #ETCD_METRICS="basic"
    #
    #[Auth]
    #ETCD_AUTH_TOKEN="simple"

3) Start etcd

systemctl start etcd

systemctl enable etcd

node3

    [root@node3 ~]# systemctl status etcd
    ● etcd.service - Etcd Server
       Loaded: loaded (/usr/lib/systemd/system/etcd.service; enabled; vendor preset: disabled)
       Active: active (running) since Thu 2020-12-24 23:31:48 CST; 2min 38s ago
     Main PID: 7205 (etcd)
       CGroup: /system.slice/etcd.service
               └─7205 /usr/bin/etcd --name=default --data-dir=/var/lib/etcd/default.etcd --listen-client-urls=http://192.168.32.213:2379

    Dec 24 23:31:48 node3 etcd[7205]: 8e9e05c52164694d received MsgVoteResp from 8e9e05c52164694d at term 2
    Dec 24 23:31:48 node3 etcd[7205]: 8e9e05c52164694d became leader at term 2
    Dec 24 23:31:48 node3 etcd[7205]: raft.node: 8e9e05c52164694d elected leader 8e9e05c52164694d at term 2
    Dec 24 23:31:48 node3 etcd[7205]: published {Name:default ClientURLs:[http://192.168.32.213:2379]} to cluster cdf818194e3a8c32
    Dec 24 23:31:48 node3 etcd[7205]: setting up the initial cluster version to 3.3
    Dec 24 23:31:48 node3 systemd[1]: Started Etcd Server.
    Dec 24 23:31:48 node3 etcd[7205]: set the initial cluster version to 3.3
    Dec 24 23:31:48 node3 etcd[7205]: enabled capabilities for version 3.3
    Dec 24 23:31:48 node3 etcd[7205]: ready to serve client requests
    Dec 24 23:31:48 node3 etcd[7205]: serving insecure client requests on 192.168.32.213:2379, this is strongly discouraged!

5) Configure the flannel key in etcd (do this only on the machine where etcd is installed)

    [root@node3 ~]# etcdctl --endpoints="http://192.168.32.213:2379" set /atomic.io/network/config '{"Network": "10.1.0.0/16", "Backend":{"Type":"vxlan"}}'
    {"Network": "10.1.0.0/16", "Backend":{"Type":"vxlan"}}
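Before writing a value like this into etcd, it can help to confirm that the JSON parses and that the network is a valid CIDR. The following is a minimal sketch of such a check. The function name `describe_config` is made up, the port table only covers the two backends whose ports are stated earlier in this article, and falling back to `udp` when no backend is given follows the article's statement that UDP is the default.

```python
import ipaddress
import json

# Default ports as stated earlier in the article; other backends are not listed here.
DEFAULT_PORTS = {"udp": 8285, "vxlan": 8472}


def describe_config(raw: str):
    """Parse a flannel network config string and return (network, backend, port)."""
    config = json.loads(raw)                            # raises ValueError on bad JSON
    network = ipaddress.ip_network(config["Network"])   # raises ValueError on a bad CIDR
    backend = config.get("Backend", {}).get("Type", "udp")
    return network, backend, DEFAULT_PORTS.get(backend)


raw = '{"Network": "10.1.0.0/16", "Backend":{"Type":"vxlan"}}'
network, backend, port = describe_config(raw)
print(network, backend, port)
```

With the value used above, the check reports the 10.1.0.0/16 overlay, the vxlan backend, and port 8472.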

5. Deploy flannel

1) Install flannel on node1 and node2

yum install -y flannel

    # Flanneld configuration options

    # etcd url location. Point this to the server where etcd runs
    FLANNEL_ETCD_ENDPOINTS="http://192.168.32.213:2379"

    # etcd config key. This is the configuration key that flannel queries
    # For address range assignment
    FLANNEL_ETCD_PREFIX="/atomic.io/network"

    # Any additional options that you want to pass
    #FLANNEL_OPTIONS=""

node1 and node2

systemctl start flanneld.service

systemctl enable flanneld.service

4) Check the flannel status

ps -ef|grep flannel

root 8191 1 0 23:55 ? 00:00:00 /usr/bin/flanneld -etcd-endpoints=http://192.168.32.213:2379 -etcd-prefix=/atomic.io/network

root 8237 6556 0 23:55 pts/0 00:00:00 grep --color=auto flanne

node1

    [root@node1 ~]# cat /var/run/flannel/docker
    DOCKER_OPT_BIP="--bip=10.1.83.1/24"
    DOCKER_OPT_IPMASQ="--ip-masq=true"
    DOCKER_OPT_MTU="--mtu=1450"
    DOCKER_NETWORK_OPTIONS=" --bip=10.1.83.1/24 --ip-masq=true --mtu=1450"
    # node1 was allocated the 10.1.83.0/24 subnet; the Docker bridge takes 10.1.83.1/24

Check the flannel interface

    [root@node1 docker]# ifconfig flannel.1
    flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450
            inet 10.1.83.0 netmask 255.255.255.255 broadcast 0.0.0.0
            inet6 fe80::98a4:88ff:fec9:22c1 prefixlen 64 scopeid 0x20<link>
            ether 9a:a4:88:c9:22:c1 txqueuelen 0 (Ethernet)
            RX packets 0 bytes 0 (0.0 B)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 0 bytes 0 (0.0 B)
            TX errors 0 dropped 8 overruns 0 carrier 0 collisions 0
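The mtu 1450 shown on flannel.1 (and passed to Docker as --mtu=1450) is consistent with the usual VXLAN overhead subtracted from a 1500-byte link. The sketch below does that subtraction. The 1500-byte physical MTU is an assumption, since the article does not show the MTU of the hosts' physical interface, and the header sizes are the standard ones for VXLAN over IPv4.

```python
# Standard header sizes for VXLAN over IPv4, in bytes.
OUTER_IPV4_HEADER = 20
OUTER_UDP_HEADER = 8
VXLAN_HEADER = 8
INNER_ETHERNET_HEADER = 14

VXLAN_OVERHEAD = (OUTER_IPV4_HEADER + OUTER_UDP_HEADER
                  + VXLAN_HEADER + INNER_ETHERNET_HEADER)


def vxlan_mtu(physical_mtu: int) -> int:
    """Largest inner IP packet that still fits in one outer packet."""
    return physical_mtu - VXLAN_OVERHEAD


print(VXLAN_OVERHEAD)   # bytes added around each inner IP packet
print(vxlan_mtu(1500))  # assumed physical MTU of 1500
```

If the containers kept the default MTU of 1500, full-size packets would no longer fit into one encapsulated packet on the physical link, which is why the smaller value is handed to Docker.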

node2

    [root@node2 ~]# cat /var/run/flannel/docker
    DOCKER_OPT_BIP="--bip=10.1.24.1/24"
    DOCKER_OPT_IPMASQ="--ip-masq=true"
    DOCKER_OPT_MTU="--mtu=1450"
    DOCKER_NETWORK_OPTIONS=" --bip=10.1.24.1/24 --ip-masq=true --mtu=1450"
    # node2 was allocated the 10.1.24.0/24 subnet; the Docker bridge takes 10.1.24.1/24

Check the flannel interface

    [root@node2 ~]# ifconfig flannel.1
    flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450
            inet 10.1.24.0 netmask 255.255.255.255 broadcast 0.0.0.0
            inet6 fe80::b825:6bff:fe00:f651 prefixlen 64 scopeid 0x20<link>
            ether ba:25:6b:00:f6:51 txqueuelen 0 (Ethernet)
            RX packets 0 bytes 0 (0.0 B)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 0 bytes 0 (0.0 B)
            TX errors 0 dropped 8 overruns 0 carrier 0 collisions 0
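With both /var/run/flannel/docker files in hand, a short script can confirm what flannel promises: each node's bridge subnet lies inside the 10.1.0.0/16 overlay and the two subnets do not overlap. This is a sketch for checking by hand, with the file contents pasted in as strings; the function name `docker_bridge` is made up.

```python
import ipaddress
import shlex

NODE1_FILE = '''DOCKER_OPT_BIP="--bip=10.1.83.1/24"
DOCKER_OPT_IPMASQ="--ip-masq=true"
DOCKER_OPT_MTU="--mtu=1450"
DOCKER_NETWORK_OPTIONS=" --bip=10.1.83.1/24 --ip-masq=true --mtu=1450"
'''
NODE2_FILE = NODE1_FILE.replace("10.1.83.1", "10.1.24.1")


def docker_bridge(env_text: str) -> ipaddress.IPv4Interface:
    """Extract the --bip value from the DOCKER_NETWORK_OPTIONS line."""
    for line in env_text.splitlines():
        key, _, value = line.partition("=")
        if key.strip() != "DOCKER_NETWORK_OPTIONS":
            continue
        for option in shlex.split(value.strip().strip('"')):
            if option.startswith("--bip="):
                return ipaddress.ip_interface(option[len("--bip="):])
    raise ValueError("no --bip option found")


overlay = ipaddress.ip_network("10.1.0.0/16")
bridge1 = docker_bridge(NODE1_FILE)
bridge2 = docker_bridge(NODE2_FILE)

print(bridge1.network, bridge2.network)
print(bridge1.network.subnet_of(overlay), bridge2.network.subnet_of(overlay))
print(bridge1.network.overlaps(bridge2.network))
```

Both subnets fall inside the overlay and they are disjoint, so a container address on node1 can never collide with one on node2.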

On node1 and node2

    # Download the docker-ce yum repo file
    wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.tuna.tsinghua.edu.cn/docker-ce/linux/centos/docker-ce.repo
    # Point the docker-ce repo at the Tsinghua mirror
    sed -i 's#download.docker.com#mirrors.tuna.tsinghua.edu.cn/docker-ce#g' /etc/yum.repos.d/docker-ce.repo
    # Refresh the yum cache
    yum makecache fast
    # Install docker-ce
    yum install -y docker-ce
    systemctl start docker

node1 and node2

Method 1:

Edit /usr/lib/systemd/system/docker.service (for example with vim) and append $DOCKER_NETWORK_OPTIONS to the ExecStart line:

    ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock $DOCKER_NETWORK_OPTIONS
    # $DOCKER_NETWORK_OPTIONS comes from this node's /var/run/flannel/docker file.
    # systemd only expands it if the unit loads that file as environment, e.g. through an EnvironmentFile= setting.
    #[root@node2 ~]# cat /var/run/flannel/docker
    #DOCKER_OPT_BIP="--bip=10.1.24.1/24"
    #DOCKER_OPT_IPMASQ="--ip-masq=true"
    #DOCKER_OPT_MTU="--mtu=1450"
    #DOCKER_NETWORK_OPTIONS=" --bip=10.1.24.1/24 --ip-masq=true --mtu=1450"

    [root@node2 ~]# systemctl daemon-reload
    [root@node2 ~]# systemctl restart docker
    [root@node2 ~]# docker run -itd --name test02 busybox
    725d4f01dde74090d45e1bbc4b03e59e4ec27c54dd3aff35170bb076ffba496a
    [root@node2 ~]# docker exec -it test02 /bin/sh
    / # ifconfig
    eth0      Link encap:Ethernet HWaddr 02:42:0A:01:18:02
              inet addr:10.1.24.2 Bcast:10.1.24.255 Mask:255.255.255.0
              UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1
              RX packets:8 errors:0 dropped:0 overruns:0 frame:0
              TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:656 (656.0 B) TX bytes:0 (0.0 B)

    lo        Link encap:Local Loopback
              inet addr:127.0.0.1 Mask:255.0.0.0
              UP LOOPBACK RUNNING MTU:65536 Metric:1
              RX packets:0 errors:0 dropped:0 overruns:0 frame:0
              TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:1000
              RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

Method 2: write the value of $DOCKER_NETWORK_OPTIONS directly into the ExecStart line of /usr/lib/systemd/system/docker.service

    ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --bip=10.1.83.1/24 --ip-masq=true --mtu=1450
    #[root@node1 ~]# cat /var/run/flannel/docker
    #DOCKER_OPT_BIP="--bip=10.1.83.1/24"
    #DOCKER_OPT_IPMASQ="--ip-masq=true"
    #DOCKER_OPT_MTU="--mtu=1450"
    #DOCKER_NETWORK_OPTIONS=" --bip=10.1.83.1/24 --ip-masq=true --mtu=1450"

    [root@node1 docker]# systemctl daemon-reload
    [root@node1 docker]# systemctl restart docker
    [root@node1 docker]# docker run -itd --name test01 busybox
    87f57e4248d8043f2caf704bd351e063283c74963ae2b385dff33998af34f099
    [root@node1 docker]# docker ps
    CONTAINER ID   IMAGE     COMMAND   CREATED         STATUS         PORTS     NAMES
    87f57e4248d8   busybox   "sh"      3 seconds ago   Up 2 seconds             test01
    [root@node1 docker]# docker exec -it test01 /bin/sh
    / # ifconfig
    eth0      Link encap:Ethernet HWaddr 02:42:0A:01:53:02
              inet addr:10.1.83.2 Bcast:10.1.83.255 Mask:255.255.255.0
              UP BROADCAST RUNNING MULTICAST MTU:1450 Metric:1
              RX packets:8 errors:0 dropped:0 overruns:0 frame:0
              TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:656 (656.0 B) TX bytes:0 (0.0 B)

    lo        Link encap:Local Loopback
              inet addr:127.0.0.1 Mask:255.0.0.0
              UP LOOPBACK RUNNING MTU:65536 Metric:1
              RX packets:0 errors:0 dropped:0 overruns:0 frame:0
              TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:1000
              RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)
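The two ways of passing the flannel options to dockerd should produce the same command line. The sketch below models this with a deliberately simplified version of how systemd treats an unbraced `$VAR` word in ExecStart (the value is split on whitespace into separate arguments). It is not systemd's full expansion logic, and `expand_exec_start` is a made-up helper.

```python
def expand_exec_start(exec_start: str, env: dict) -> list:
    """Expand whole-word $VAR references, splitting each value on whitespace."""
    words = []
    for word in exec_start.split():
        if word.startswith("$") and word[1:] in env:
            words.extend(env[word[1:]].split())
        else:
            words.append(word)
    return words


# Value taken from node1's /var/run/flannel/docker file shown above.
env = {"DOCKER_NETWORK_OPTIONS": " --bip=10.1.83.1/24 --ip-masq=true --mtu=1450"}

with_variable = ("/usr/bin/dockerd -H fd:// "
                 "--containerd=/run/containerd/containerd.sock $DOCKER_NETWORK_OPTIONS")
with_literals = ("/usr/bin/dockerd -H fd:// "
                 "--containerd=/run/containerd/containerd.sock "
                 "--bip=10.1.83.1/24 --ip-masq=true --mtu=1450")

print(expand_exec_start(with_variable, env) == with_literals.split())
```

The practical difference is maintenance: the variable form follows whatever subnet flannel writes to the file, while the literal form must be edited by hand if the node's allocated subnet ever changes.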


docker02: start a busybox container named test02 on node2 (the test02 container created above, with address 10.1.24.2)


test01 can ping test02

    [root@node1 docker]# docker exec -it test01 /bin/sh
    / # ping 10.1.24.2
    PING 10.1.24.2 (10.1.24.2): 56 data bytes
    64 bytes from 10.1.24.2: seq=0 ttl=62 time=2.147 ms
    64 bytes from 10.1.24.2: seq=1 ttl=62 time=0.539 ms
    64 bytes from 10.1.24.2: seq=2 ttl=62 time=0.481 ms