Spark之RDD算子练习

RDD算子的分类

Transformation(转换):根据数据集创建一个新的 数据集,计算后返回一个新的RDD。例如,一个RDD进行map操作后,生成了新的RDD。

Action(动作):对RDD结果计算返回一个数值value给驱动程序,或者把结果存储到外部存储系统中;

例如:collect算子将数据集的所有元数据收集完成返回给驱动程序。

Transformation

RDD中的所有转换都是延迟加载的,也就是说,他们并不会直接计算结果。相反的,他们只是记住这些应用到基础数据集(例如一个文件)上转换动作。只有当发生一个要求返回结果给Driver的动作或者将结果写入到外部存储中,这写转换才会真正的运行,这种设计让Spark更加有效率的运行。

Transformation算子练习

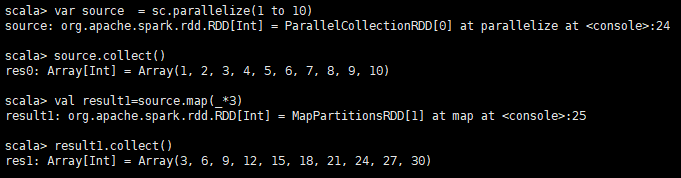

说明:返回一个新的RDD,该RDD由每一个输入元素经过func函数转换后组成

scala> var source = sc.parallelize(1 to 10) source: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[0] at parallelize at <console>:24 scala> source.collect() res0: Array[Int] = Array(1, 2, 3, 4, 5, 6, 7, 8, 9, 10) scala> val result1 = source.map(_ * 3) result1: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[1] at map at <console>:25 scala> result1.collect()

res1: Array[Int] = Array(3, 6, 9, 12, 15, 18, 21, 24, 27, 30)

l类似于map,但独立地在RDD的每一个分片上运行,因此在类型为T的RDD上运行时,func的函数类型必须是Iterator[T] => Iterator[U]。

假设有N个元素,有M个分区,那么map的函数的将被调用N次,而mapPartitions被调用M次,一个函数一次处理所有分区

scala> val rdd=sc.parallelize(List(("zhangsan","male"),("xiaohong","female"),("lisi","male"),("rose","female"))) rdd: org.apache.spark.rdd.RDD[(String, String)] = ParallelCollectionRDD[2] at parallelize at <console>:24 scala> :paste // Entering paste mode (ctrl-D to finish) def partitionsFun(iter : Iterator[(String,String)]) : Iterator[String] = { var woman = List[String]() while (iter.hasNext){ val next = iter.next() next match { case (_,"female") => woman = next._1 :: woman case _ => } } woman.iterator } // Exiting paste mode, now interpreting. partitionsFun: (iter: Iterator[(String, String)])Iterator[String] scala> val result=rdd.mapPartitions(partitionsFun) result: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[3] at mapPartitions at <console>:27 scala> result.collect() res0: Array[String] = Array(xiaohong, rose)

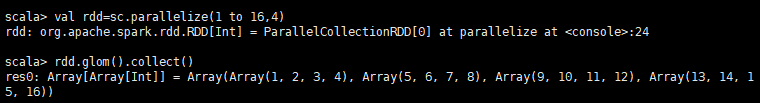

将每一个分区形成一个数组,形成新的RDD类型时RDD[Array[T]]

scala> val rdd=sc.parallelize(1 to 16,4) rdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[0] at parallelize at <console>:24 scala> rdd.glom().collect() res0: Array[Array[Int]] = Array(Array(1, 2, 3, 4), Array(5, 6, 7, 8), Array(9, 10, 11, 12), Array(13, 14, 15, 16))

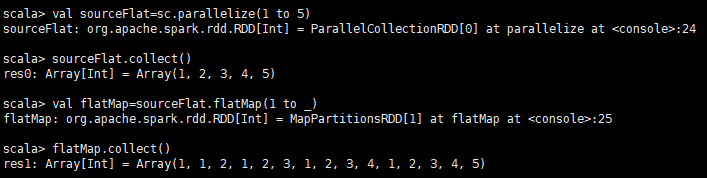

类似于map,但是每一个输入元素可以被映射为0或多个输出元素(所以func应该返回一个序列,而不是单一元素)

scala> val sourceFlat=sc.parallelize(1 to 5) sourceFlat: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[0] at parallelize at <console>:24 scala> sourceFlat.collect() res0: Array[Int] = Array(1, 2, 3, 4, 5) scala> val flatMap=sourceFlat.flatMap(1 to _) flatMap: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[1] at flatMap at <console>:25 scala> flatMap.collect() res1: Array[Int] = Array(1, 1, 2, 1, 2, 3, 1, 2, 3, 4, 1, 2, 3, 4, 5)

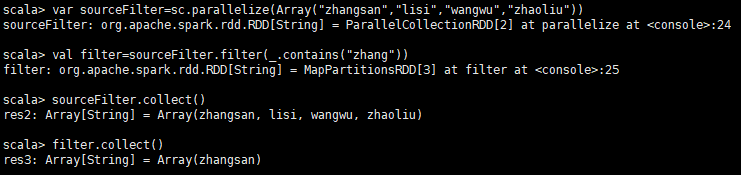

返回一个新的RDD,该RDD由经过func函数计算后返回值为true的输入元素组成

scala> var sourceFilter=sc.parallelize(Array("zhangsan","lisi","wangwu","zhaoliu")) sourceFilter: org.apache.spark.rdd.RDD[String] = ParallelCollectionRDD[2] at parallelize at <console>:24 scala> val filter=sourceFilter.filter(_.contains("zhang")) filter: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[3] at filter at <console>:25 scala> sourceFilter.collect() res2: Array[String] = Array(zhangsan, lisi, wangwu, zhaoliu) scala> filter.collect() res3: Array[String] = Array(zhangsan)

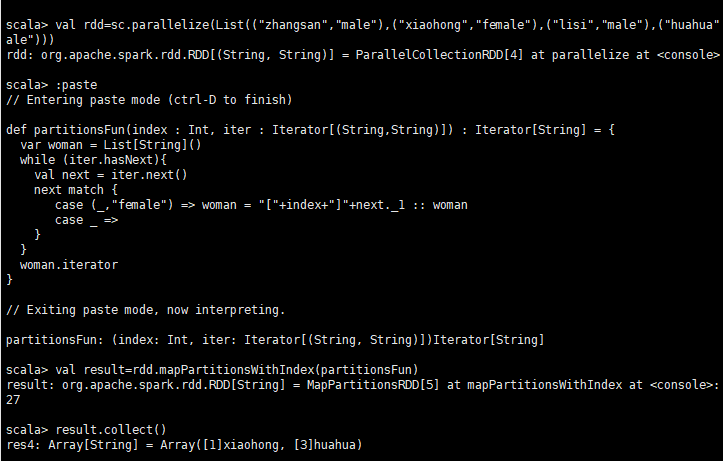

类似于mapPartitions,但func带有一个整数参数表示分片的索引值,因此在类型为T的RDD上运行时,

func的函数类型必须是(Int, Interator[T]) => Iterator[U]

scala> val rdd=sc.parallelize(List(("zhangsan","male"),("xiaohong","female"),("lisi","male"),("huahua",ale"))) rdd: org.apache.spark.rdd.RDD[(String, String)] = ParallelCollectionRDD[4] at parallelize at <console>: scala> :paste // Entering paste mode (ctrl-D to finish) def partitionsFun(index : Int, iter : Iterator[(String,String)]) : Iterator[String] = { var woman = List[String]() while (iter.hasNext){ val next = iter.next() next match { case (_,"female") => woman = "["+index+"]"+next._1 :: woman case _ => } } woman.iterator } // Exiting paste mode, now interpreting. partitionsFun: (index: Int, iter: Iterator[(String, String)])Iterator[String] scala> val result=rdd.mapPartitionsWithIndex(partitionsFun) result: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[5] at mapPartitionsWithIndex at <console>:27 scala> result.collect() res4: Array[String] = Array([1]xiaohong, [3]huahua)

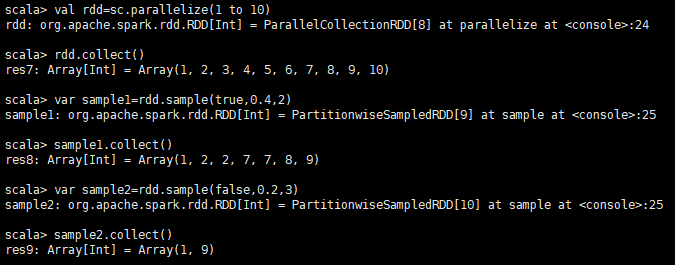

以指定的随机种子随机抽样出数量为fraction的数据,withReplacement表示是抽出的数据是否放回,true为有放回的抽样,false为无放回的抽样

,seed用于指定随机数生成器种子。例子从RDD中随机且有放回的抽出50%的数据,随机种子值为3(即可能以1 2 3的其中一个起始值)

scala> val rdd=sc.parallelize(1 to 10) rdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[8] at parallelize at <console>:24 scala> rdd.collect() res7: Array[Int] = Array(1, 2, 3, 4, 5, 6, 7, 8, 9, 10) scala> var sample1=rdd.sample(true,0.4,2) sample1: org.apache.spark.rdd.RDD[Int] = PartitionwiseSampledRDD[9] at sample at <console>:25 scala> sample1.collect() res8: Array[Int] = Array(1, 2, 2, 7, 7, 8, 9) scala> var sample2=rdd.sample(false,0.2,3) sample2: org.apache.spark.rdd.RDD[Int] = PartitionwiseSampledRDD[10] at sample at <console>:25 scala> sample2.collect() res9: Array[Int] = Array(1, 9)

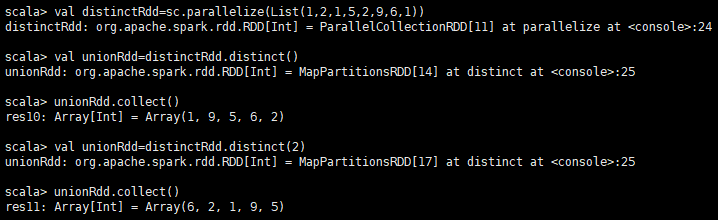

对源RDD进行去重后返回一个新的RDD. 默认情况下,只有8个并行任务来操作,但是可以传入一个可选的numTasks参数改变它。

scala> val distinctRdd=sc.parallelize(List(1,2,1,5,2,9,6,1)) distinctRdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[11] at parallelize at <console>:24 scala> val unionRdd=distinctRdd.distinct() unionRdd: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[14] at distinct at <console>:25 scala> unionRdd.collect() res10: Array[Int] = Array(1, 9, 5, 6, 2) scala> val unionRdd=distinctRdd.distinct(2) unionRdd: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[17] at distinct at <console>:25 scala> unionRdd.collect() res11: Array[Int] = Array(6, 2, 1, 9, 5)

对RDD进行分区操作,如果原有的partionRDD和现有的partionRDD是一致的话就不进行分区, 否则会生成ShuffleRDD。

scala> val rdd=sc.parallelize(Array((1,"aa"),(2,"bb"),(3,"cc"),(4,"dd")),4) rdd: org.apache.spark.rdd.RDD[(Int, String)] = ParallelCollectionRDD[20] at parallelize at <console>:24 scala> rdd.partitions.size res15: Int = 4 scala> var rdd2=rdd.partitionBy(new org.apache.spark.HashPartitioner(2)) rdd2: org.apache.spark.rdd.RDD[(Int, String)] = ShuffledRDD[21] at partitionBy at <console>:25 scala> rdd2.partitions.size res16: Int = 2

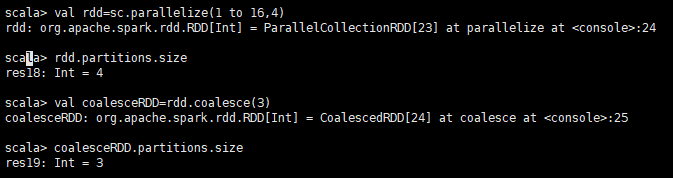

与repartition的区别: repartition(numPartitions:Int):RDD[T]和coalesce(numPartitions:Int,shuffle:Boolean=false):RDD[T] repartition只是coalesce接口中shuffle为true的实现. 缩减分区数,用于大数据集过滤后,提高小数据集的执行效率。

scala> val rdd=sc.parallelize(1 to 16,4) rdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[23] at parallelize at <console>:24 scala> rdd.partitions.size res18: Int = 4 scala> val coalesceRDD=rdd.coalesce(3) coalesceRDD: org.apache.spark.rdd.RDD[Int] = CoalescedRDD[24] at coalesce at <console>:25 scala> coalesceRDD.partitions.size res19: Int = 3

根据分区数,从新通过网络随机洗牌所有数据。

scala> val rdd = sc.parallelize(1 to 16,4) rdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[25] at parallelize at <console>:24 scala> rdd.partitions.size res20: Int = 4 scala> val rerdd = rdd.repartition(2) rerdd: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[29] at repartition at <console>:25 scala> rerdd.partitions.size res21: Int = 2 scala> val rerdd = rdd.repartition(4) rerdd: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[33] at repartition at <console>:25 scala> rerdd.partitions.size res22: Int = 4

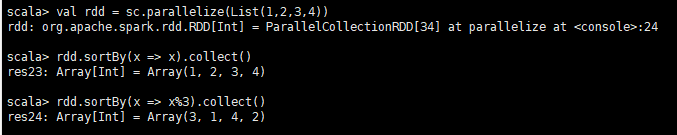

用func先对数据进行处理,按照处理后的数据比较结果排序。

scala> val rdd = sc.parallelize(List(1,2,3,4)) rdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[34] at parallelize at <console>:24 scala> rdd.sortBy(x => x).collect() res23: Array[Int] = Array(1, 2, 3, 4) scala> rdd.sortBy(x => x%3).collect() res24: Array[Int] = Array(3, 1, 4, 2)

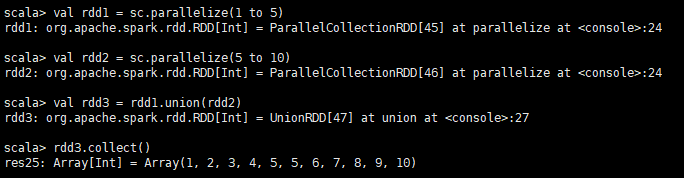

对源RDD和参数RDD求并集后返回一个新的RDD 不去重

scala> val rdd1 = sc.parallelize(1 to 5) rdd1: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[45] at parallelize at <console>:24 scala> val rdd2 = sc.parallelize(5 to 10) rdd2: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[46] at parallelize at <console>:24 scala> val rdd3 = rdd1.union(rdd2) rdd3: org.apache.spark.rdd.RDD[Int] = UnionRDD[47] at union at <console>:27 scala> rdd3.collect() res25: Array[Int] = Array(1, 2, 3, 4, 5, 5, 6, 7, 8, 9, 10)

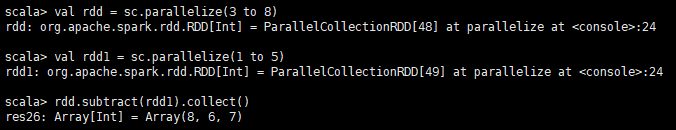

计算差的一种函数,去除两个RDD中相同的元素,不同的RDD将保留下来

scala> val rdd = sc.parallelize(3 to 8) rdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[48] at parallelize at <console>:24 scala> val rdd1 = sc.parallelize(1 to 5) rdd1: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[49] at parallelize at <console>:24 scala> rdd.subtract(rdd1).collect() res26: Array[Int] = Array(8, 6, 7)

对源RDD和参数RDD求交集后返回一个新的RDD

scala> val rdd1 = sc.parallelize(1 to 7) rdd1: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[54] at parallelize at <console>:24 scala> val rdd2 = sc.parallelize(5 to 10) rdd2: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[55] at parallelize at <console>:24 scala> val rdd3 = rdd1.intersection(rdd2) rdd3: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[61] at intersection at <console>:27 scala> rdd3.collect() res27: Array[Int] = Array(5, 6, 7)

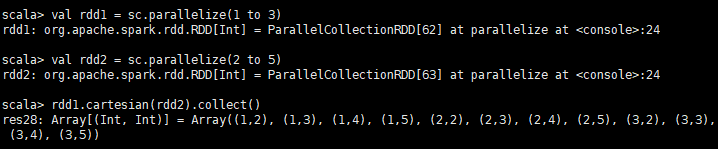

笛卡尔积

scala> val rdd1 = sc.parallelize(1 to 3) rdd1: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[62] at parallelize at <console>:24 scala> val rdd2 = sc.parallelize(2 to 5) rdd2: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[63] at parallelize at <console>:24 scala> rdd1.cartesian(rdd2).collect() res28: Array[(Int, Int)] = Array((1,2), (1,3), (1,4), (1,5), (2,2), (2,3), (2,4), (2,5), (3,2), (3,3), (3,4), (3,5))

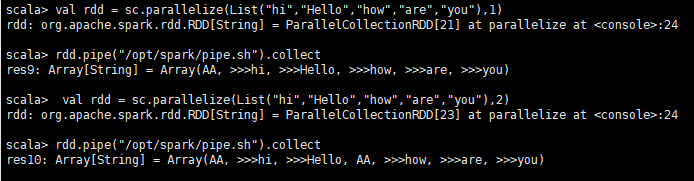

管道,对于每个分区,都执行一个perl或者shell脚本,返回输出的RDD

Shell脚本pipe.sh: #!/bin/sh echo "AA" while read LINE; do echo ">>>"${LINE} done scala> val rdd = sc.parallelize(List("hi","Hello","how","are","you"),1) rdd: org.apache.spark.rdd.RDD[String] = ParallelCollectionRDD[21] at parallelize at <console>:24 scala> rdd.pipe("/opt/spark/pipe.sh").collect res9: Array[String] = Array(AA, >>>hi, >>>Hello, >>>how, >>>are, >>>you) scala> val rdd = sc.parallelize(List("hi","Hello","how","are","you"),2) rdd: org.apache.spark.rdd.RDD[String] = ParallelCollectionRDD[23] at parallelize at <console>:24 scala> rdd.pipe("/opt/spark/pipe.sh").collect res10: Array[String] = Array(AA, >>>hi, >>>Hello, AA, >>>how, >>>are, >>>you)

运行rdd.pipe("/opt/spark/pipe.sh").collect可能出现权限问题

给权限:chmod 777 /opt/spark/pipr.sh

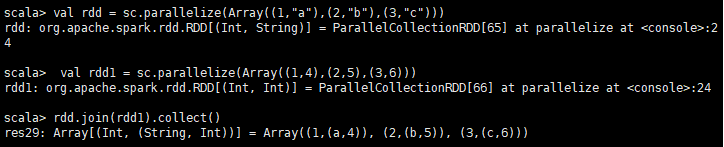

在类型为(K,V)和(K,W)的RDD上调用,返回一个相同key对应的所有元素对在一起的(K,(V,W))的RDD

scala> val rdd = sc.parallelize(Array((1,"a"),(2,"b"),(3,"c"))) rdd: org.apache.spark.rdd.RDD[(Int, String)] = ParallelCollectionRDD[65] at parallelize at <console>:24 scala> val rdd1 = sc.parallelize(Array((1,4),(2,5),(3,6))) rdd1: org.apache.spark.rdd.RDD[(Int, Int)] = ParallelCollectionRDD[66] at parallelize at <console>:24 scala> rdd.join(rdd1).collect() res29: Array[(Int, (String, Int))] = Array((1,(a,4)), (2,(b,5)), (3,(c,6)))

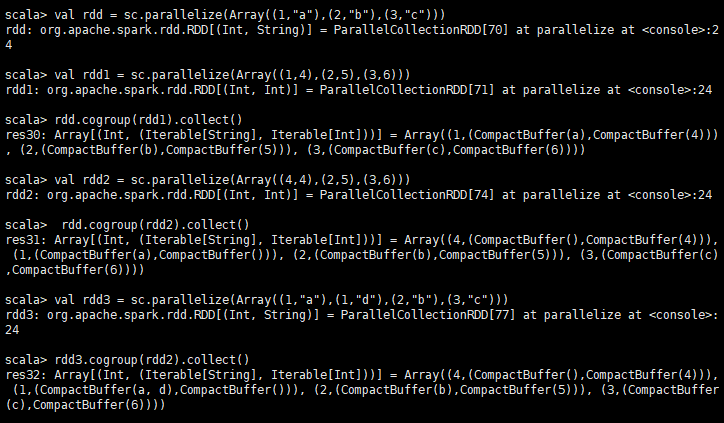

在类型为(K,V)和(K,W)的RDD上调用,返回一个(K,(Iterable<V>,Iterable<W>))类型的RDD

scala> val rdd = sc.parallelize(Array((1,"a"),(2,"b"),(3,"c"))) rdd: org.apache.spark.rdd.RDD[(Int, String)] = ParallelCollectionRDD[70] at parallelize at <console>:24 scala> val rdd1 = sc.parallelize(Array((1,4),(2,5),(3,6))) rdd1: org.apache.spark.rdd.RDD[(Int, Int)] = ParallelCollectionRDD[71] at parallelize at <console>:24 scala> rdd.cogroup(rdd1).collect() res30: Array[(Int, (Iterable[String], Iterable[Int]))] = Array((1,(CompactBuffer(a),CompactBuffer(4))), (2,(CompactBuffer(b),CompactBuffer(5))), (3,(CompactBuffer(c),CompactBuffer(6)))) scala> val rdd2 = sc.parallelize(Array((4,4),(2,5),(3,6))) rdd2: org.apache.spark.rdd.RDD[(Int, Int)] = ParallelCollectionRDD[74] at parallelize at <console>:24 scala> rdd.cogroup(rdd2).collect() res31: Array[(Int, (Iterable[String], Iterable[Int]))] = Array((4,(CompactBuffer(),CompactBuffer(4))), (1,(CompactBuffer(a),CompactBuffer())), (2,(CompactBuffer(b),CompactBuffer(5))), (3,(CompactBuffer(c),CompactBuffer(6)))) scala> val rdd3 = sc.parallelize(Array((1,"a"),(1,"d"),(2,"b"),(3,"c"))) rdd3: org.apache.spark.rdd.RDD[(Int, String)] = ParallelCollectionRDD[77] at parallelize at <console>:24 scala> rdd3.cogroup(rdd2).collect() res32: Array[(Int, (Iterable[String], Iterable[Int]))] = Array((4,(CompactBuffer(),CompactBuffer(4))), (1,(CompactBuffer(a, d),CompactBuffer())), (2,(CompactBuffer(b),CompactBuffer(5))), (3,(CompactBuffer(c),CompactBuffer(6))))

在一个(K,V)的RDD上调用,返回一个(K,V)的RDD,使用指定的reduce函数,将相同key的值聚合到一起,reduce任务的个数可以通过第二个可选的参数来设置。

scala> val rdd = sc.parallelize(List(("female",1),("male",5),("female",5),("male",2))) rdd: org.apache.spark.rdd.RDD[(String, Int)] = ParallelCollectionRDD[80] at parallelize at <console>:24 scala> val reduce = rdd.reduceByKey((x,y) => x+y) reduce: org.apache.spark.rdd.RDD[(String, Int)] = ShuffledRDD[81] at reduceByKey at <console>:25 scala> reduce.collect() res33: Array[(String, Int)] = Array((female,6), (male,7))

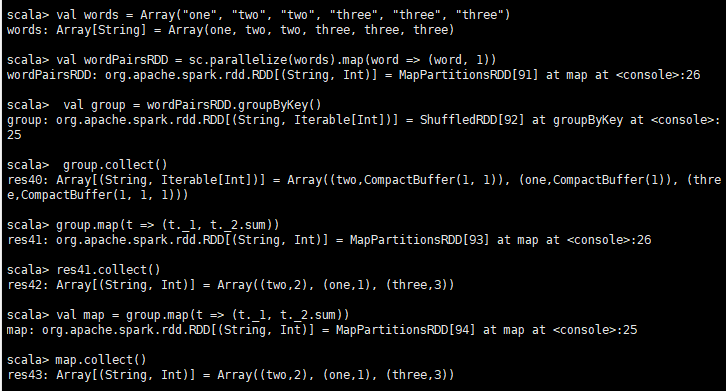

groupByKey也是对每个key进行操作,但只生成一个sequence。

scala> val words = Array("one", "two", "two", "three", "three", "three") words: Array[String] = Array(one, two, two, three, three, three) scala> val wordPairsRDD = sc.parallelize(words).map(word => (word, 1)) wordPairsRDD: org.apache.spark.rdd.RDD[(String, Int)] = MapPartitionsRDD[91] at map at <console>:26 scala> val group = wordPairsRDD.groupByKey() group: org.apache.spark.rdd.RDD[(String, Iterable[Int])] = ShuffledRDD[92] at groupByKey at <console>:25 scala> group.collect() res40: Array[(String, Iterable[Int])] = Array((two,CompactBuffer(1, 1)), (one,CompactBuffer(1)), (three,CompactBuffer(1, 1, 1))) scala> group.map(t => (t._1, t._2.sum)) res41: org.apache.spark.rdd.RDD[(String, Int)] = MapPartitionsRDD[93] at map at <console>:26 scala> res41.collect() res42: Array[(String, Int)] = Array((two,2), (one,1), (three,3)) scala> val map = group.map(t => (t._1, t._2.sum)) map: org.apache.spark.rdd.RDD[(String, Int)] = MapPartitionsRDD[94] at map at <console>:25 scala> map.collect() res43: Array[(String, Int)] = Array((two,2), (one,1), (three,3))

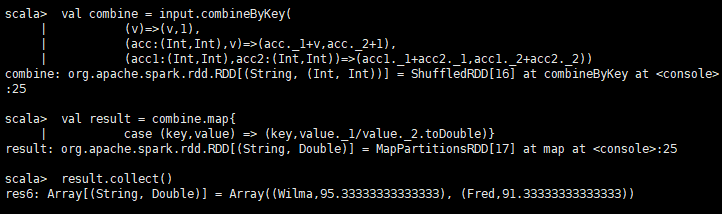

createCombiner: V => C, mergeValue: (C, V) => C, mergeCombiners: (C, C) => C)

对相同K,把V合并成一个集合。

createCombiner: combineByKey() 会遍历分区中的所有元素,因此每个元素的键要么还没有遇到过,要么就 和之前的某个元素的键相同。

如果这是一个新的元素,combineByKey() 会使用一个叫作 createCombiner() 的函数来创建 那个键对应的累加器的初始值

mergeValue: 如果这是一个在处理当前分区之前已经遇到的键, 它会使用 mergeValue() 方法将该键的累加器对应的当前值与这个新的值进行合并

mergeCombiners: 由于每个分区都是独立处理的, 因此对于同一个键可以有多个累加器。如果有两个或者更多的分区都有对应同一个键的累加器,

就需要使用用户提供的 mergeCombiners() 方法将各个分区的结果进行合并。

scala> val input = sc.parallelize(scores) input: org.apache.spark.rdd.RDD[(String, Int)] = ParallelCollectionRDD[15] at parallelize at <console>:26 scala> val combine = input.combineByKey( | (v)=>(v,1), | (acc:(Int,Int),v)=>(acc._1+v,acc._2+1), | (acc1:(Int,Int),acc2:(Int,Int))=>(acc1._1+acc2._1,acc1._2+acc2._2)) combine: org.apache.spark.rdd.RDD[(String, (Int, Int))] = ShuffledRDD[16] at combineByKey at <console>:25 scala> val result = combine.map{ | case (key,value) => (key,value._1/value._2.toDouble)} result: org.apache.spark.rdd.RDD[(String, Double)] = MapPartitionsRDD[17] at map at <console>:25 scala> result.collect() res6: Array[(String, Double)] = Array((Wilma,95.33333333333333), (Fred,91.33333333333333))

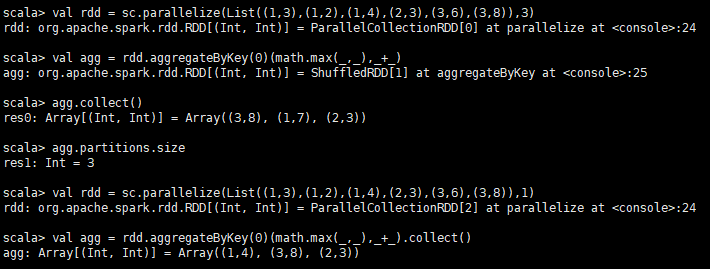

(zeroValue:U,[partitioner: Partitioner]) (seqOp: (U, V) => U,combOp: (U, U) => U)

在kv对的RDD中,,按key将value进行分组合并,合并时,将每个value和初始值作为seq函数的参数,进行计算,返回的结果作为一个新的kv对,

然后再将结果按照key进行合并,最后将每个分组的value传递给combine函数进行计算(先将前两个value进行计算,

将返回结果和下一个value传给combine函数,以此类推),将key与计算结果作为一个新的kv对输出。

seqOp函数用于在每一个分区中用初始值逐步迭代value,combOp函数用于合并每个分区中的结果。

scala> val rdd = sc.parallelize(List((1,3),(1,2),(1,4),(2,3),(3,6),(3,8)),3) rdd: org.apache.spark.rdd.RDD[(Int, Int)] = ParallelCollectionRDD[0] at parallelize at <console>:24 scala> val agg = rdd.aggregateByKey(0)(math.max(_,_),_+_) agg: org.apache.spark.rdd.RDD[(Int, Int)] = ShuffledRDD[1] at aggregateByKey at <console>:25 scala> agg.collect() res0: Array[(Int, Int)] = Array((3,8), (1,7), (2,3)) scala> agg.partitions.size res1: Int = 3 scala> val rdd = sc.parallelize(List((1,3),(1,2),(1,4),(2,3),(3,6),(3,8)),1) rdd: org.apache.spark.rdd.RDD[(Int, Int)] = ParallelCollectionRDD[2] at parallelize at <console>:24 scala> val agg = rdd.aggregateByKey(0)(math.max(_,_),_+_).collect() agg: Array[(Int, Int)] = Array((1,4), (3,8), (2,3))

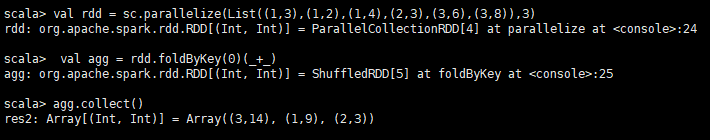

(zeroValue: V)(func: (V, V) => V): RDD[(K, V)]

aggregateByKey的简化操作,seqop和combop相同

scala> val rdd = sc.parallelize(List((1,3),(1,2),(1,4),(2,3),(3,6),(3,8)),3) rdd: org.apache.spark.rdd.RDD[(Int, Int)] = ParallelCollectionRDD[4] at parallelize at <console>:24 scala> val agg = rdd.foldByKey(0)(_+_) agg: org.apache.spark.rdd.RDD[(Int, Int)] = ShuffledRDD[5] at foldByKey at <console>:25 scala> agg.collect() res2: Array[(Int, Int)] = Array((3,14), (1,9), (2,3))

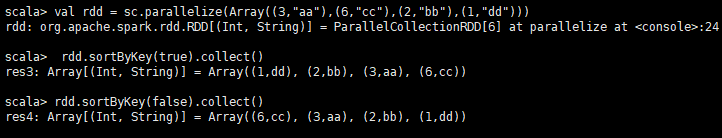

在一个(K,V)的RDD上调用,K必须实现Ordered接口,返回一个按照key进行排序的(K,V)的RDD

scala> val rdd = sc.parallelize(Array((3,"aa"),(6,"cc"),(2,"bb"),(1,"dd"))) rdd: org.apache.spark.rdd.RDD[(Int, String)] = ParallelCollectionRDD[6] at parallelize at <console>:24 scala> rdd.sortByKey(true).collect() res3: Array[(Int, String)] = Array((1,dd), (2,bb), (3,aa), (6,cc)) scala> rdd.sortByKey(false).collect() res4: Array[(Int, String)] = Array((6,cc), (3,aa), (2,bb), (1,dd))

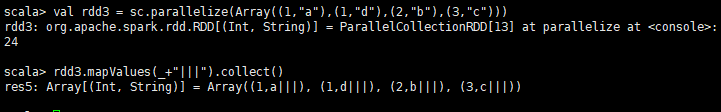

针对于(K,V)形式的类型只对V进行操作

scala> val rdd3 = sc.parallelize(Array((1,"a"),(1,"d"),(2,"b"),(3,"c"))) rdd3: org.apache.spark.rdd.RDD[(Int, String)] = ParallelCollectionRDD[13] at parallelize at <console>:24 scala> rdd3.mapValues(_+"|||").collect() res5: Array[(Int, String)] = Array((1,a|||), (1,d|||), (2,b|||), (3,c|||))

浙公网安备 33010602011771号

浙公网安备 33010602011771号