BytePS源码解析

# 导入BytePS模块 import byteps.torch as bps

# 初始化BytePS bps.init()

# 设置训练进程使用的GPU torch.cuda.set_device(bps.local_rank())

local_rank: """A function that returns the local BytePS rank of the calling process, within the node that it is running on. For example, if there are seven processes running on a node, their local ranks will be zero through six, inclusive. Returns: An integer scalar with the local BytePS rank of the calling process.

"""

# 在push和pull过程中,把32位梯度压缩成16位。(注:精度损失问题怎么解决的?) compression = bps.Compression.fp16 if args.fp16_pushpull else bps.Compression.none

compression: """Optional gradient compression algorithm used during push_pull.""" """Compress all floating point gradients to 16-bit.""" 注:梯度压缩是这个意思?

# optimizer = bps.DistributedOptimizer(optimizer, named_parameters=model.named_parameters(), compression=compression)

#

bps.broadcast_parameters(model.state_dict(), root_rank=0)

root_rank: The rank of the process from which parameters will be broadcasted to all other processes. 注:这里的root_rank是本地的还是全局的?本地的,通常是0号进程。

push_pull_async: """ A function that performs asynchronous averaging or summation of the input tensor over all the BytePS processes. The input tensor is not modified. The reduction operation is keyed by the name. If name is not provided, an incremented auto-generated name is used. The tensor type and shape must be the same on all BytePS processes for a given name. The reduction will not start until all processes are ready to send and receive the tensor. Arguments: tensor: A tensor to average or sum. average: A flag indicating whether to compute average or summation, defaults to average. name: A name of the reduction operation. Returns: A handle to the push_pull operation that can be used with `poll()` or `synchronize()`. """

#

bps.broadcast_optimizer_state(optimizer, root_rank=0)

Python通过ctypes函数库调用C/C++。

节点之间的通信格式是key-value。

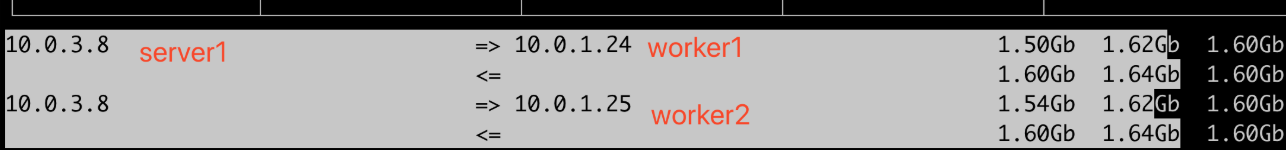

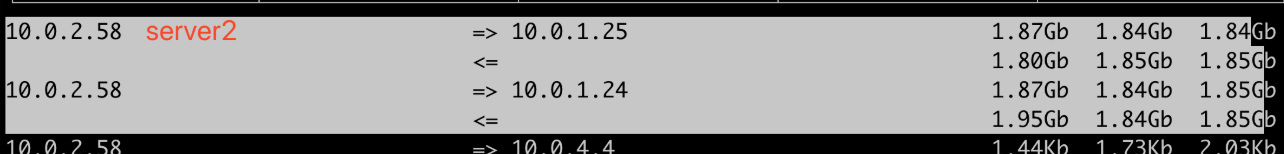

一个节点中,只有0号进程才参与网络通信。

scheduler和server都是直接用MXNet代码,没用BytePS。

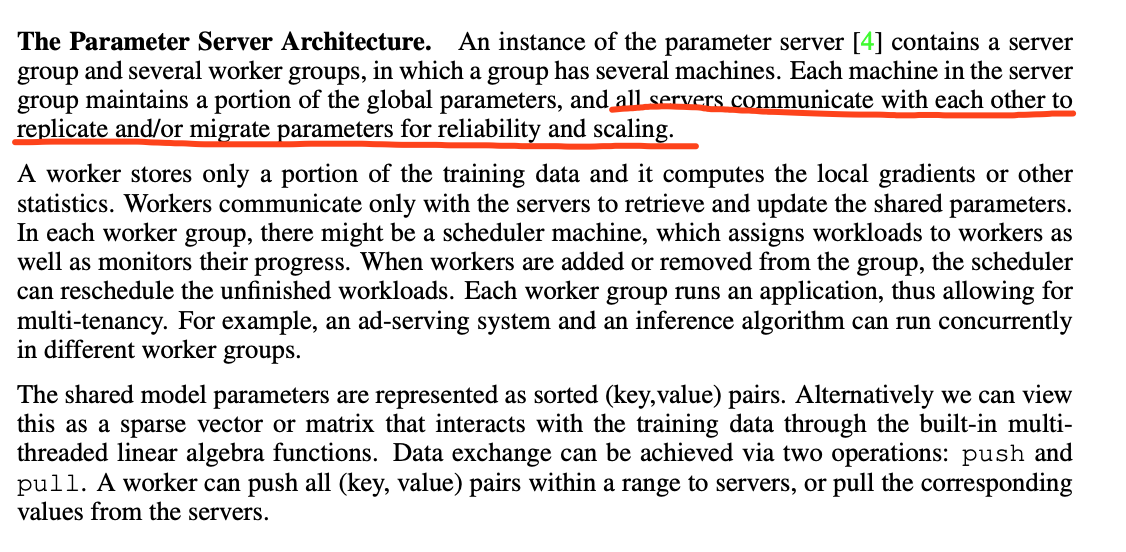

worker之间没有通信,server之间也没有通信。(注:李沐论文中说的Parameter Server之间有通信,是为了备份容错。)

rank: # A function that returns the BytePS rank of the calling process. 注:全局进程编号,通常用于控制日志打印。

size: # A function that returns the number of BytePS processes.

local_size: # A function that returns the number of BytePS processes within the node the current process is running on.

""" An optimizer that wraps another torch.optim.Optimizer, using an push_pull to average gradient values before applying gradients to model weights. push_pull operations are executed after each gradient is computed by `loss.backward()` in parallel with each other. The `step()` method ensures that all push_pull operations are finished before applying gradients to the model. DistributedOptimizer exposes the `synchronize()` method, which forces push_pull operations to finish before continuing the execution. It's useful in conjunction with gradient clipping, or other operations that modify gradients in place before `step()` is executed. Example of gradient clipping: ``` output = model(data) loss = F.nll_loss(output, target) loss.backward() optimizer.synchronize() torch.nn.utils.clip_grad_norm(model.parameters(), args.clip) optimizer.step() ``` Arguments: optimizer: Optimizer to use for computing gradients and applying updates. named_parameters: A mapping between parameter names and values. Used for naming of push_pull operations. Typically just `model.named_parameters()`. compression: Compression algorithm used during push_pull to reduce the amount of data sent during the each parameter update step. Defaults to not using compression. backward_passes_per_step: Number of expected backward passes to perform before calling step()/synchronize(). This allows accumulating gradients over multiple mini-batches before executing averaging and applying them. """ # We dynamically create a new class that inherits from the optimizer that was passed in. # The goal is to override the `step()` method with an push_pull implementation.

common/__init__.py:C++基础API的Python封装,如BytePSBasics local_rank。

communicator.h和communicator.cc:附加的基于socket的信号通信,用于同步,如BytePSCommSocket。

global.h和global.cc:如全局初始化BytePSGlobal::Init,worker的pslite单例BytePSGlobal::GetPS。

core_loops.h和core_loops.cc:死循环,如PushLoop和PullLoop,处理队列中的任务,即TensorTableEntry。(注:改成事件循环,理论上可以减少CPU占用)

logging.h和logging.cc:日志组件。(注:无关逻辑,先忽略)

nccl_manager.h和nccl_manager.cc:管理NCCL,如IsCrossPcieSwitch。

operations.h和operations.cc:如GetPullQueueList、GetPushQueueList、

ready_table.h和ready_table.cc:维护key对应的任务的准备状态。根据功能分为PUSH、COPY、PCIE_REDUCE和NCCL_REDUCE等。

schedule_queue.h和schedule_queue.cc:任务调度队列,提供任务给事件循环。

shared_memory.h和shared_memory.cc:共享内存,用于存储CPU中的张量。(注:用的是POSIX API,即共享内存文件shm_open,结合内存映射mmap,相比System V API,有更好的可移植性)

ops.h和ops.cc:如DoPushPull。

adapter.h和adapter.cc:C++和Python的张量数据类型适配。

cpu_reducer.h和cpu_reducer.cpp:

common.h和common.cc:

ops.py:如_push_pull_function_factory。

torch/__init__.py:如DistributedOptimizer。

// Total key space is 0 to 2^64 - 1 // It will be divided to N PS servers, for now we assume N <= 2^16

ps::KVWorker,继承SimpleApp,用于向server Push,或者从server Pull key-value数据,还有Wait函数。

ps is_recovery,节点是不是恢复的。(注:有可能中途断掉过?)

ps::Postoffice,全局管理单例。

ps::StartAsync,异步初始化节点。

ZPush/ZPull:zero-copy Push/Pull, This function is similar to Push except that all data will not be copied into system for better performance. It is the caller's responsibility to keep the content to be not changed before actually finished.

ADD_NODE

BARRIER

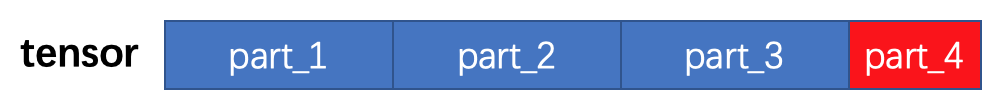

Tensor Partition

张量划分,可以让多个server并行分担计算和网络带宽,同时有利于异步pipeline。

_name_to_cxt:哈希表,保存初始化过的张量(能用于PS通信)。

declared_key:初始化过的张量的编号,从0递增。

GetPartitionBound:张量划分的单块字节数。

key = declared_key * 2^16 + part_num。

共享内存

_key_shm_addr:哈希表,每块共享内存的起始地址。

_key_shm_size:哈希表,每块共享内存的大小。

cudaHostRegister:把host内存注册为pin memory,用于CUDA。这样CPU->GPU,只需要一次copy。(注:pin memory就是page locked和non pageable,不使用虚拟内存,直接物理内存,也就不会有内存页交换到硬盘上,自然不会有缺页中断)

numa_max_node() returns the highest node number available on the current system. (See the node numbers in /sys/devices/system/node/ ). Also see numa_num_configured_nodes(). numa_set_preferred() sets the preferred node for the current task to node. The system will attempt to allocate memory from the preferred node, but will fall back to other nodes if no memory is available on the the preferred node. Passing a node of -1 argument specifies local allocation and is equivalent to calling numa_set_localalloc(). numa_set_interleave_mask() sets the memory interleave mask for the current task to nodemask. All new memory allocations are page interleaved over all nodes in the interleave mask. Interleaving can be turned off again by passing an empty mask (numa_no_nodes). The page interleaving only occurs on the actual page fault that puts a new page into the current address space. It is also only a hint: the kernel will fall back to other nodes if no memory is available on the interleave target.

注:priority的作用是啥?值都为0。

注:named_parameters的作用是啥?

注:param_groups是啥?

注:梯度reduce和权值更新分别在哪里做?

参考链接

https://github.com/bytedance/byteps

https://pytorch.org/docs/stable/distributed.html

https://www.cs.cmu.edu/~muli/file/parameter_server_nips14.pdf

https://mxnet.incubator.apache.org/versions/master/faq/distributed_training.html