PyTorch Siamese network: training and prediction

# coding: utf-8

# # One Shot Learning with Siamese Networks

#

# This is the jupyter notebook that accompanies

# ## Imports

# All the imports are defined here

# In[2]:

#get_ipython().run_line_magic('matplotlib', 'inline')

import torchvision

import torchvision.datasets as dset

import torchvision.transforms as transforms

from torch.utils.data import DataLoader,Dataset

import matplotlib.pyplot as plt

import torchvision.utils

import numpy as np

import random

from PIL import Image

import torch

from torch.autograd import Variable

import PIL.ImageOps

import torch.nn as nn

from torch import optim

import torch.nn.functional as F

# ## Helper functions

# Set of helper functions

# In[3]:

def imshow(img,text=None,should_save=False):
    npimg = img.numpy()
    plt.axis("off")
    if text:
        plt.text(75, 8, text, style='italic',fontweight='bold',
                 bbox={'facecolor':'white', 'alpha':0.8, 'pad':10})
    plt.imshow(np.transpose(npimg, (1, 2, 0)))
    plt.show()

def show_plot(iteration,loss):
    plt.plot(iteration,loss)
    plt.show()
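The `imshow` helper transposes the array because `make_grid` returns a channels-first tensor (C, H, W), while `plt.imshow` expects channels-last (H, W, C). A minimal NumPy sketch of just that axis reordering, using a small dummy array in place of a real image grid:

```python
import numpy as np

# Dummy "grid" in channels-first layout: 3 channels, 2 rows, 4 columns.
chw = np.arange(3 * 2 * 4, dtype=np.float32).reshape(3, 2, 4)

# Same reordering as imshow(): move the channel axis to the end.
hwc = np.transpose(chw, (1, 2, 0))

print(chw.shape, "->", hwc.shape)
# The pixel at row 1, column 3 keeps its three channel values after the transpose.
assert np.array_equal(hwc[1, 3], chw[:, 1, 3])
```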

# ## Configuration Class

# A simple class to manage configuration

# In[4]:

class Config():
    training_dir = "./data/faces/training/"
    testing_dir = "./data/faces/testing/"
    train_batch_size = 8  # 64
    train_number_epochs = 201  # 100

# ## Custom Dataset Class

# This dataset generates a pair of images: label 0 for a genuine pair (same person) and 1 for an impostor pair (different people).

# In[5]:

class SiameseNetworkDataset(Dataset):

    def __init__(self,imageFolderDataset,transform=None,should_invert=True):
        self.imageFolderDataset = imageFolderDataset
        self.transform = transform
        self.should_invert = should_invert

    def __getitem__(self,index):
        # index is ignored: every call draws a random pair
        img0_tuple = random.choice(self.imageFolderDataset.imgs)
        #we need to make sure approx 50% of images are in the same class
        should_get_same_class = random.randint(0,1)
        if should_get_same_class:
            while True:
                #keep looping till the same class image is found
                img1_tuple = random.choice(self.imageFolderDataset.imgs)
                if img0_tuple[1]==img1_tuple[1]:
                    break
        else:
            while True:
                #keep looping till a different class image is found
                img1_tuple = random.choice(self.imageFolderDataset.imgs)
                if img0_tuple[1] !=img1_tuple[1]:
                    break

        img0 = Image.open(img0_tuple[0])
        img1 = Image.open(img1_tuple[0])
        img0 = img0.convert("L")
        img1 = img1.convert("L")

        if self.should_invert:
            img0 = PIL.ImageOps.invert(img0)
            img1 = PIL.ImageOps.invert(img1)

        if self.transform is not None:
            img0 = self.transform(img0)
            img1 = self.transform(img1)

        # label is 1.0 if the two images come from different classes, else 0.0
        return img0, img1 , torch.from_numpy(np.array([int(img1_tuple[1]!=img0_tuple[1])],dtype=np.float32))

    def __len__(self):
        return len(self.imageFolderDataset.imgs)
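The pair-sampling logic in `__getitem__` can be checked without any images. Below is a pure-Python sketch of the same idea working on `(path, class_index)` tuples like `ImageFolder.imgs`; the helper name `sample_pair` and the toy sample list are hypothetical. It flips a fair coin, keeps drawing a second sample until its class matches (or differs from) the first, and returns label 0 for a same-person pair and 1 for a different-person pair:

```python
import random

def sample_pair(samples, rng):
    """Return (sample0, sample1, label): label 0 = same class, 1 = different class."""
    s0 = rng.choice(samples)
    want_same = rng.randint(0, 1)  # about 50% genuine pairs
    while True:
        s1 = rng.choice(samples)
        # stop once "same class?" agrees with what the coin asked for
        if (s0[1] == s1[1]) == bool(want_same):
            break
    label = int(s0[1] != s1[1])
    return s0, s1, label

# Toy stand-in for ImageFolder.imgs: three people, two images each.
samples = [("a1", 0), ("a2", 0), ("b1", 1), ("b2", 1), ("c1", 2), ("c2", 2)]
rng = random.Random(0)
pairs = [sample_pair(samples, rng) for _ in range(1000)]
same_fraction = sum(1 for _, _, label in pairs if label == 0) / len(pairs)
print(f"fraction of genuine pairs: {same_fraction:.2f}")
```

As in the original class, a "same" pair may contain the very same image twice, and the "different" loop never ends if the dataset holds only one class.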

# ## Using Image Folder Dataset

# In[6]:

folder_dataset = dset.ImageFolder(root=Config.training_dir)
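`dset.ImageFolder` expects one sub-directory per person under `training_dir`; it sorts the sub-directory names, gives each one an integer class index, and stores the samples in `imgs` as `(path, class_index)` tuples. That index is what `SiameseNetworkDataset` compares to decide whether two images show the same person. The sketch below imitates only that labelling convention on a hypothetical list of relative paths (the helper `build_imgs` and the folder names are made up), so it skips `ImageFolder`'s file-extension filtering and image loading:

```python
def build_imgs(relative_paths):
    """Mimic ImageFolder labelling: class = first path component, indices follow sorted class names."""
    classes = sorted({p.split("/")[0] for p in relative_paths})
    class_to_idx = {name: i for i, name in enumerate(classes)}
    imgs = [(p, class_to_idx[p.split("/")[0]]) for p in sorted(relative_paths)]
    return class_to_idx, imgs

# Hypothetical folder layout with three people.
paths = ["s2/1.pgm", "s1/1.pgm", "s1/2.pgm", "s10/1.pgm"]
class_to_idx, imgs = build_imgs(paths)
print(class_to_idx)
print(imgs)
```

Note that the sort is lexical, so a folder named `s10` gets a smaller index than `s2`; this does not matter for pair sampling, which only compares indices for equality.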

# In[7]:

siamese_dataset = SiameseNetworkDataset(imageFolderDataset=folder_dataset,
                                        transform=transforms.Compose([transforms.Resize((100,100)),
                                                                      transforms.ToTensor()
                                                                      ]),
                                        should_invert=False)
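With `should_invert=False`, each image goes through `convert("L")`, `Resize((100,100))` and `ToTensor()`. `ToTensor` turns an 8-bit grayscale PIL image into a float tensor of shape (1, H, W) scaled to [0, 1]; `ImageOps.invert`, used only when `should_invert=True`, maps each 8-bit pixel p to 255 - p. The NumPy sketch below mimics those two steps on a dummy 100x100 array (resizing is skipped, and the function name `to_tensor_like` is hypothetical):

```python
import numpy as np

def to_tensor_like(gray_uint8, invert=False):
    """Mimic optional ImageOps.invert followed by ToTensor for a single-channel uint8 image."""
    img = gray_uint8.astype(np.int32)
    if invert:
        img = 255 - img  # per-pixel inversion of an 8-bit image
    # ToTensor-like step: HxW values in [0, 255] -> 1xHxW float32 in [0.0, 1.0]
    return (img.astype(np.float32) / 255.0)[np.newaxis, :, :]

dummy = np.full((100, 100), 255, dtype=np.uint8)  # an all-white 100x100 image
x = to_tensor_like(dummy)
x_inv = to_tensor_like(dummy, invert=True)
print(x.shape, x.max(), x_inv.max())
```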

# ## Visualising some of the data

# The top row and the bottom row of any column form one pair. The printed 0s and 1s are the labels of the columns, from left to right.

# 1 indicates dissimilar, and 0 indicates similar.

# In[8]:

vis_dataloader = DataLoader(siamese_dataset,

shuffle=True,

num_workers=8,

batch_size=8)

dataiter = iter(vis_dataloader)

example_batch = next(dataiter)

concatenated = torch.cat((example_batch[0],example_batch[1]),0)

imshow(torchvision.utils.make_grid(concatenated))

print(example_batch[2].numpy())
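Each column shows one pair because `torch.cat` stacks the 8 first images followed by the 8 second images, and `torchvision.utils.make_grid` lays images out row by row with `nrow=8` images per row by default, so image k lands in row k // 8 and column k % 8. A plain-Python check of that index arithmetic for the batch size of 8 used here (`grid_position` is a hypothetical helper):

```python
batch_size = 8
nrow = 8  # make_grid's default number of images per row

def grid_position(k, nrow=nrow):
    """Row and column of the k-th image in a row-major grid."""
    return k // nrow, k % nrow

for i in range(batch_size):
    first = grid_position(i)                # img0 of pair i
    second = grid_position(i + batch_size)  # img1 of pair i, placed after all img0s by torch.cat
    print(f"pair {i}: img0 at {first}, img1 at {second}")
```

The column alignment only holds while the batch size equals `nrow`; with a batch of 16 and the default `nrow`, the two halves of a pair would no longer share a column.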

# ## Neural Net Definition

# We will use a standard convolutional neural network

# In[9]:

class SiameseNetwork(nn.Module):
    def __init__(self):
        super(SiameseNetwork, self).__init__()
        # Three pad + 3x3 conv blocks; the padding keeps the 100x100 spatial size
        self.cnn1 = nn.Sequential(
            nn.ReflectionPad2d(1),
            nn.Conv2d(1, 4, kernel_size=3),
            nn.ReLU(inplace=True),
            nn.BatchNorm2d(4),

            nn.ReflectionPad2d(1),
            nn.Conv2d(4, 8, kernel_size=3),
            nn.ReLU(inplace=True),
            nn.BatchNorm2d(8),

            nn.ReflectionPad2d(1),
            nn.Conv2d(8, 8, kernel_size=3),
            nn.ReLU(inplace=True),
            nn.BatchNorm2d(8),
        )

        # Flattened 8 x 100 x 100 feature map -> 5-dimensional embedding
        self.fc1 = nn.Sequential(
            nn.Linear(8*100*100, 500),
            nn.ReLU(inplace=True),

            nn.Linear(500, 500),
            nn.ReLU(inplace=True),

            nn.Linear(500, 5))

    def forward_once(self, x):
        output = self.cnn1(x)
        output = output.view(output.size()[0], -1)
        output = self.fc1(output)
        return output

    def forward(self, input1, input2):
        # Both images pass through the same weights (shared branch)
        output1 = self.forward_once(input1)
        output2 = self.forward_once(input2)
        return output1, output2
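The input size of the first `nn.Linear` follows from convolution arithmetic: each `ReflectionPad2d(1)` adds one pixel on every side and each 3x3 convolution with the default stride 1 and no padding removes one, so the 100x100 input stays 100x100 through all three blocks, and the last block outputs 8 channels. The standard output-size formula, checked in plain Python (`conv2d_out` is a hypothetical helper, not a PyTorch function):

```python
def conv2d_out(size, kernel, padding=0, stride=1):
    """Output length of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1

size = 100
for _ in range(3):  # three ReflectionPad2d(1) -> Conv2d(kernel_size=3) blocks
    size = conv2d_out(size, kernel=3, padding=1)

channels = 8
flat = channels * size * size  # features fed to nn.Linear(8*100*100, 500)
print(size, flat)
```

If `Resize((100,100))` were changed to another size, the `8*100*100` in `fc1` would have to change with it.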

# ## Contrastive Loss

# In[10]:

class ContrastiveLoss(torch.nn.Module):
    """
    Contrastive loss function.
    Based on: http://yann.lecun.com/exdb/publis/pdf/hadsell-chopra-lecun-06.pdf
    """

    def __init__(self, margin=2.0):
        super(ContrastiveLoss, self).__init__()
        self.margin = margin

    def forward(self, output1, output2, label):
        euclidean_distance = F.pairwise_distance(output1, output2, keepdim = True)
        loss_contrastive = torch.mean((1-label) * torch.pow(euclidean_distance, 2) +
                                      (label) * torch.pow(torch.clamp(self.margin - euclidean_distance, min=0.0), 2))
        return loss_contrastive
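Written out, the loss for a pair with distance d and label y (0 = same, 1 = different) is (1 - y)·d² + y·max(0, m - d)², averaged over the batch, with margin m = 2.0 here. Genuine pairs are pulled together, while impostor pairs are pushed apart only until they are at least m apart. A plain-Python sketch of the same formula on hand-picked distances (the function name mirrors the class but is a standalone illustration):

```python
def contrastive_loss(distances, labels, margin=2.0):
    """Mean contrastive loss; label 0 = similar pair, 1 = dissimilar pair."""
    terms = []
    for d, y in zip(distances, labels):
        similar_term = (1 - y) * d ** 2                  # penalise any distance between genuine pairs
        dissimilar_term = y * max(0.0, margin - d) ** 2  # penalise impostors closer than the margin
        terms.append(similar_term + dissimilar_term)
    return sum(terms) / len(terms)

# Genuine pair at d=1 costs 1.0; impostor at d=1 costs (2-1)^2 = 1.0;
# impostor already beyond the margin (d=3) costs 0.
print(contrastive_loss([1.0, 1.0, 3.0], [0, 1, 1]))
```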

# ## Training Time!

# In[22]:

train_dataloader = DataLoader(siamese_dataset,

shuffle=True,

num_workers=8,

batch_size=Config.train_batch_size)

# In[23]:

##train

if True:
    net = SiameseNetwork().cuda()
    criterion = ContrastiveLoss()
    optimizer = optim.Adam(net.parameters(),lr = 0.0005 )

# In[24]:

counter = []

loss_history = []

iteration_number= 0

# In[25]:

#train

for epoch in range(0,Config.train_number_epochs):
    for i, data in enumerate(train_dataloader,0):
        img0, img1 , label = data
        img0, img1 , label = img0.cuda(), img1.cuda() , label.cuda()
        optimizer.zero_grad()
        output1,output2 = net(img0,img1)
        loss_contrastive = criterion(output1,output2,label)
        loss_contrastive.backward()
        optimizer.step()
        if i %10 == 0 :
            print("Epoch number {}\n Current loss {}\n".format(epoch,loss_contrastive.item()))
            iteration_number +=10
            counter.append(iteration_number)
            loss_history.append(loss_contrastive.item())
        if 0 == epoch % 100 and 0 != epoch and 0==i:
            torch.save(net.state_dict(),'/media/data_1/Yang/project/2019/project/Facial-Similarity-with-Siamese-Networks-in-Pytorch/Face-Siamese.pt')

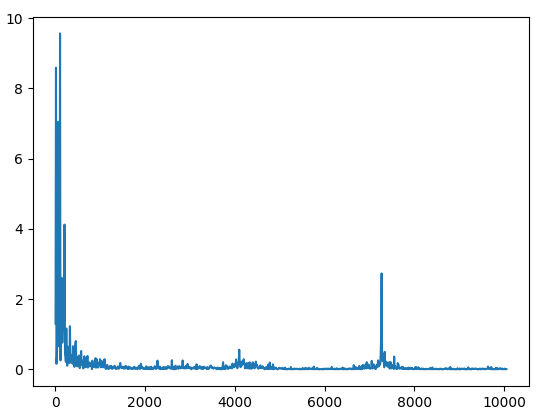

show_plot(counter,loss_history)
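The bookkeeping in the training loop is easy to misread: `counter` advances by 10 on every 10th batch, so the x-axis of the loss plot counts batches rather than epochs, and the checkpoint is written only on the first batch of epochs 100 and 200 (epoch 0 is excluded). The sketch below replays that control flow without a model; `batches_per_epoch` is a hypothetical value, since the real number depends on how many images are in `training_dir`:

```python
train_number_epochs = 201  # Config.train_number_epochs
batches_per_epoch = 25     # hypothetical: ceil(len(dataset) / train_batch_size)

counter, saved_epochs = [], []
iteration_number = 0
for epoch in range(train_number_epochs):
    for i in range(batches_per_epoch):
        if i % 10 == 0:  # log every 10th batch
            iteration_number += 10
            counter.append(iteration_number)
        if epoch % 100 == 0 and epoch != 0 and i == 0:
            saved_epochs.append(epoch)  # where torch.save(...) would run

print(saved_epochs, len(counter))
```

Because every save overwrites the same file, the checkpoint loaded in the test cell is the one written after the first batch of epoch 200; the rest of that final epoch is never saved.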

# ## Some simple testing

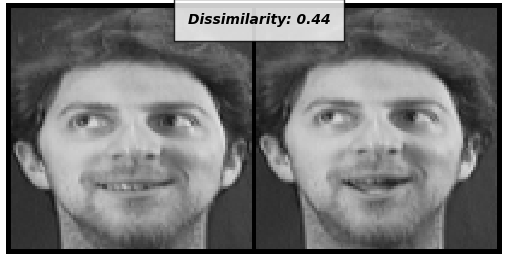

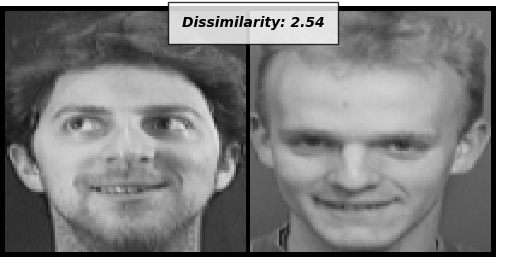

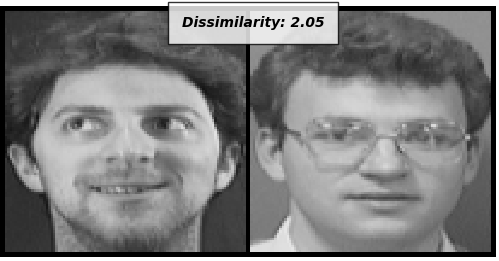

# The last 3 subjects were held out from training and are used for testing. The distance between the two images of each pair reflects how similar the model considers them: a lower value means more similar, a higher value means more dissimilar.
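The test cell only displays the distance. One way to turn a distance into a same/different decision is a threshold: pairs closer than it are called "same person". The threshold is not part of the original code; the value 1.0 below (half the training margin of 2.0) and the example distances are hypothetical, and in practice the threshold would be tuned on held-out pairs:

```python
def predict_labels(distances, threshold=1.0):
    """0 = predicted similar (distance below threshold), 1 = predicted dissimilar."""
    return [0 if d < threshold else 1 for d in distances]

def accuracy(predicted, actual):
    """Fraction of pairs whose predicted label matches the true label."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)

# Hypothetical distances and ground-truth labels (0 = same person, 1 = different).
distances = [0.3, 1.7, 0.8, 2.4]
labels = [0, 1, 1, 1]
pred = predict_labels(distances)
print(pred, accuracy(pred, labels))
```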

# In[28]:

###test
if True:
    net = SiameseNetwork().cuda()
    net.load_state_dict(torch.load('/media/data_1/Yang/project/2019/project/Facial-Similarity-with-Siamese-Networks-in-Pytorch/Face-Siamese.pt'))
    net.eval()

folder_dataset_test = dset.ImageFolder(root=Config.testing_dir)
siamese_dataset = SiameseNetworkDataset(imageFolderDataset=folder_dataset_test,
                                        transform=transforms.Compose([transforms.Resize((100,100)),
                                                                      transforms.ToTensor()
                                                                      ]),
                                        should_invert=False)

test_dataloader = DataLoader(siamese_dataset,num_workers=6,batch_size=1,shuffle=True)
dataiter = iter(test_dataloader)
x0,_,_ = next(dataiter)

# Compare the fixed image x0 with the second image of 10 random pairs
with torch.no_grad():
    for i in range(10):
        _,x1,label2 = next(dataiter)
        concatenated = torch.cat((x0,x1),0)
        # Variable is deprecated since PyTorch 0.4; tensors can be passed directly
        output1,output2 = net(x0.cuda(),x1.cuda())
        euclidean_distance = F.pairwise_distance(output1, output2)
        imshow(torchvision.utils.make_grid(concatenated),'Dissimilarity: {:.2f}'.format(euclidean_distance.item()))

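Output of `print(example_batch[2].numpy())` for the visualised batch:

[[1.]
 [0.]
 [1.]
 [0.]
 [1.]
 [1.]
 [0.]
 [0.]]

0 means similar, 1 means dissimilar.

Loss curve:

Testing: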


浙公网安备 33010602011771号
