1 # Smooth Support Vector Regression之实现

2

3 import numpy

4 from matplotlib import pyplot as plt

5 from mpl_toolkits.mplot3d import Axes3D

6

7

8 numpy.random.seed(0)

9

10 def peaks(x1, x2):

11 term1 = 3 * (1 - x1) ** 2 * numpy.exp(-x1 ** 2 - (x2 + 1) ** 2)

12 term2 = -10 * (x1 / 5 - x1 ** 3 - x2 ** 5) * numpy.exp(-x1 ** 2 - x2 ** 2)

13 term3 = -numpy.exp(-(x1 + 1) ** 2 - x2 ** 2) / 3

14 val = term1 + term2 + term3

15 return val

16

17

18 # 生成回归数据

19 X1 = numpy.linspace(-3, 3, 30)

20 X2 = numpy.linspace(-3, 3, 30)

21 X1, X2 = numpy.meshgrid(X1, X2)

22 X = numpy.hstack((X1.reshape((-1, 1)), X2.reshape((-1, 1)))) # 待回归数据之样本点

23 Y = peaks(X1, X2).reshape((-1, 1))

24 Yerr = Y + numpy.random.normal(0, 0.4, size=Y.shape) # 待回归数据之观测值

25

26

27

28 class SSVR(object):

29

30 def __init__(self, X, Y_, c=50, mu=1, epsilon=0.5, beta=10):

31 '''

32 X: 样本点数据集, 1行代表1个样本

33 Y_: 观测值数据集, 1行代表1个观测值

34 '''

35 self.__X = X # 待回归数据之样本点

36 self.__Y_ = Y_ # 待回归数据之观测值

37 self.__c = c # 误差项权重参数

38 self.__mu = mu # gaussian kernel参数

39 self.__epsilon = epsilon # 管道残差参数

40 self.__beta = beta # 光滑化参数

41

42 self.__A = X.T

43

44

45 def get_estimation(self, x, alpha, b):

46 '''

47 获取估计值

48 '''

49 A = self.__A

50 mu = self.__mu

51

52 x = numpy.array(x).reshape((-1, 1))

53 KAx = self.__get_KAx(A, x, mu)

54 regVal = self.__calc_hVal(KAx, alpha, b)

55 return regVal

56

57

58 def get_MAE(self, alpha, b):

59 '''

60 获取平均绝对误差

61 '''

62 X = self.__X

63 Y_ = self.__Y_

64

65 Y = numpy.array(list(self.get_estimation(x, alpha, b) for x in X)).reshape((-1, 1))

66 RES = Y_ - Y

67 MAE = numpy.linalg.norm(RES, ord=1) / alpha.shape[0]

68 return MAE

69

70

71 def get_GE(self):

72 '''

73 获取泛化误差

74 '''

75 X = self.__X

76 Y_ = self.__Y_

77

78 cnt = 0

79 GE = 0

80 for idx in range(X.shape[0]):

81 x = X[idx:idx+1, :]

82 y_ = Y_[idx, 0]

83

84 self.__X = numpy.vstack((X[:idx, :], X[idx+1:, :]))

85 self.__Y_ = numpy.vstack((Y_[:idx, :], Y_[idx+1:, :]))

86 self.__A = self.__X.T

87 alpha, b, tab = self.optimize()

88 if not tab:

89 continue

90 cnt += 1

91 y = self.get_estimation(x, alpha, b)

92 GE += (y_ - y) ** 2

93 GE /= cnt

94

95 self.__X = X

96 self.__Y_ = Y_

97 self.__A = X.T

98 return GE

99

100

101 def optimize(self, maxIter=1000, EPSILON=1.e-9):

102 '''

103 maxIter: 最大迭代次数

104 EPSILON: 收敛判据, 梯度趋于0则收敛

105 '''

106 A, Y_ = self.__A, self.__Y_

107 c = self.__c

108 mu = self.__mu

109 epsilon = self.__epsilon

110 beta = self.__beta

111

112 alpha, b = self.__init_alpha_b((A.shape[1], 1))

113 KAA = self.__get_KAA(A, mu)

114 JVal = self.__calc_JVal(KAA, Y_, c, epsilon, beta, alpha, b)

115 grad = self.__calc_grad(KAA, Y_, c, epsilon, beta, alpha, b)

116 Hess = self.__calc_Hess(KAA, Y_, c, epsilon, beta, alpha, b)

117

118 for i in range(maxIter):

119 print("iterCnt: {:3d}, JVal: {}".format(i, JVal))

120 if self.__converged1(grad, EPSILON):

121 return alpha, b, True

122

123 dCurr = -numpy.matmul(numpy.linalg.inv(Hess), grad)

124 ALPHA = self.__calc_ALPHA_by_ArmijoRule(alpha, b, JVal, grad, dCurr, KAA, Y_, c, epsilon, beta)

125

126 delta = ALPHA * dCurr

127 alphaNew = alpha + delta[:-1, :]

128 bNew = b + delta[-1, -1]

129 JValNew = self.__calc_JVal(KAA, Y_, c, epsilon, beta, alphaNew, bNew)

130 if self.__converged2(delta, JValNew - JVal, EPSILON):

131 return alphaNew, bNew, True

132

133 alpha, b, JVal = alphaNew, bNew, JValNew

134 grad = self.__calc_grad(KAA, Y_, c, epsilon, beta, alpha, b)

135 Hess = self.__calc_Hess(KAA, Y_, c, epsilon, beta, alpha, b)

136 else:

137 if self.__converged1(grad, EPSILON):

138 return alpha, b, True

139 return alpha, b, False

140

141

142 def __converged2(self, delta, JValDelta, EPSILON):

143 val1 = numpy.linalg.norm(delta)

144 val2 = numpy.linalg.norm(JValDelta)

145 if val1 <= EPSILON or val2 <= EPSILON:

146 return True

147 return False

148

149

150 def __calc_ALPHA_by_ArmijoRule(self, alphaCurr, bCurr, JCurr, gCurr, dCurr, KAA, Y_, c, epsilon, beta, C=1.e-4, v=0.5):

151 i = 0

152 ALPHA = v ** i

153 delta = ALPHA * dCurr

154 alphaNext = alphaCurr + delta[:-1, :]

155 bNext = bCurr + delta[-1, -1]

156 JNext = self.__calc_JVal(KAA, Y_, c, epsilon, beta, alphaNext, bNext)

157 while True:

158 if JNext <= JCurr + C * ALPHA * numpy.matmul(dCurr.T, gCurr)[0, 0]: break

159 i += 1

160 ALPHA = v ** i

161 delta = ALPHA * dCurr

162 alphaNext = alphaCurr + delta[:-1, :]

163 bNext = bCurr + delta[-1, -1]

164 JNext = self.__calc_JVal(KAA, Y_, c, epsilon, beta, alphaNext, bNext)

165 return ALPHA

166

167

168 def __converged1(self, grad, EPSILON):

169 if numpy.linalg.norm(grad) <= EPSILON:

170 return True

171 return False

172

173

174 def __calc_Hess(self, KAA, Y_, c, epsilon, beta, alpha, b):

175 Hess_J1 = self.__calc_Hess_J1(alpha)

176 Hess_J2 = self.__calc_Hess_J2(KAA, Y_, c, epsilon, beta, alpha, b)

177 Hess = Hess_J1 + Hess_J2

178 return Hess

179

180

181 def __calc_Hess_J2(self, KAA, Y_, c, epsilon, beta, alpha, b):

182 Hess_J2_alpha_alpha = numpy.zeros((KAA.shape[0], KAA.shape[0]))

183 Hess_J2_alpha_b = numpy.zeros((KAA.shape[0], 1))

184 Hess_J2_b_alpha = numpy.zeros((1, KAA.shape[0]))

185 Hess_J2_b_b = 0

186

187 z = Y_ - numpy.matmul(KAA, alpha) - b

188 term1 = z - epsilon

189 term2 = -z - epsilon

190 for i in range(z.shape[0]):

191 term3 = self.__s(term1[i, 0], beta) ** 2 + self.__p(term1[i, 0], beta) * self.__d(term1[i, 0], beta)

192 term4 = self.__s(term2[i, 0], beta) ** 2 + self.__p(term2[i, 0], beta) * self.__d(term2[i, 0], beta)

193 term5 = term3 + term4

194 Hess_J2_alpha_alpha += term5 * numpy.matmul(KAA[:, i:i+1], KAA[i:i+1, :])

195 Hess_J2_alpha_b += term5 * KAA[:, i:i+1]

196 Hess_J2_b_b += term5

197 Hess_J2_alpha_alpha *= c

198 Hess_J2_alpha_b *= c

199 Hess_J2_b_alpha = Hess_J2_alpha_b.reshape((1, -1))

200 Hess_J2_b_b *= c

201

202 Hess_J2_upper = numpy.hstack((Hess_J2_alpha_alpha, Hess_J2_alpha_b))

203 Hess_J2_lower = numpy.hstack((Hess_J2_b_alpha, [[Hess_J2_b_b]]))

204 Hess_J2 = numpy.vstack((Hess_J2_upper, Hess_J2_lower))

205 return Hess_J2

206

207

208 def __calc_Hess_J1(self, alpha):

209 I = numpy.identity(alpha.shape[0])

210 term = numpy.hstack((I, numpy.zeros((I.shape[0], 1))))

211 Hess_J1 = numpy.vstack((term, numpy.zeros((1, term.shape[1]))))

212 return Hess_J1

213

214

215 def __calc_grad(self, KAA, Y_, c, epsilon, beta, alpha, b):

216 grad_J1 = self.__calc_grad_J1(alpha)

217 grad_J2 = self.__calc_grad_J2(KAA, Y_, c, epsilon, beta, alpha, b)

218 grad = grad_J1 + grad_J2

219 return grad

220

221

222 def __calc_grad_J2(self, KAA, Y_, c, epsilon, beta, alpha, b):

223 grad_J2_alpha = numpy.zeros((KAA.shape[0], 1))

224 grad_J2_b = 0

225

226 z = Y_ - numpy.matmul(KAA, alpha) - b

227 term1 = z - epsilon

228 term2 = -z - epsilon

229 for i in range(z.shape[0]):

230 term3 = self.__p(term1[i, 0], beta) * self.__s(term1[i, 0], beta) - self.__p(term2[i, 0], beta) * self.__s(term2[i, 0], beta)

231 grad_J2_alpha += term3 * KAA[:, i:i+1]

232 grad_J2_b += term3

233 grad_J2_alpha *= -c

234 grad_J2_b *= -c

235

236 grad_J2 = numpy.vstack((grad_J2_alpha, [[grad_J2_b]]))

237 return grad_J2

238

239

240 def __calc_grad_J1(self, alpha):

241 grad_J1 = numpy.vstack((alpha, [[0]]))

242 return grad_J1

243

244

245 def __calc_JVal(self, KAA, Y_, c, epsilon, beta, alpha, b):

246 J1 = self.__calc_J1(alpha)

247 J2 = self.__calc_J2(KAA, Y_, c, epsilon, beta, alpha, b)

248 JVal = J1 + J2

249 return JVal

250

251

252 def __calc_J2(self, KAA, Y_, c, epsilon, beta, alpha, b):

253 z = Y_ - numpy.matmul(KAA, alpha) - b

254 term2 = numpy.sum(self.__p_epsilon_square(item[0], epsilon, beta) for item in z)

255 J2 = term2 * c / 2

256 return J2

257

258

259 def __calc_J1(self, alpha):

260 J1 = numpy.sum(alpha * alpha) / 2

261 return J1

262

263

264 def __p(self, x, beta):

265 val = numpy.log(numpy.exp(beta * x) + 1) / beta

266 return val

267

268

269 def __s(self, x, beta):

270 val = 1 / (numpy.exp(-beta * x) + 1)

271 return val

272

273

274 def __d(self, x, beta):

275 term = numpy.exp(beta * x)

276 val = beta * term / (term + 1) ** 2

277 return val

278

279

280 def __p_epsilon_square(self, x, epsilon, beta):

281 term1 = self.__p(x - epsilon, beta) ** 2

282 term2 = self.__p(-x - epsilon, beta) ** 2

283 val = term1 + term2

284 return val

285

286

287 def __get_KAA(self, A, mu):

288 KAA = numpy.zeros((A.shape[1], A.shape[1]))

289 for rowIdx in range(KAA.shape[0]):

290 for colIdx in range(rowIdx + 1):

291 x1 = A[:, rowIdx:rowIdx+1]

292 x2 = A[:, colIdx:colIdx+1]

293 val = self.__calc_gaussian(x1, x2, mu)

294 KAA[rowIdx, colIdx] = KAA[colIdx, rowIdx] = val

295 return KAA

296

297

298 def __calc_gaussian(self, x1, x2, mu):

299 val = numpy.exp(-mu * numpy.linalg.norm(x1 - x2) ** 2)

300 # val = numpy.sum(x1 * x2)

301 return val

302

303

304 def __init_alpha_b(self, shape):

305 '''

306 alpha、b之初始化

307 '''

308 alpha, b = numpy.zeros(shape), 0

309 return alpha, b

310

311

312 def __calc_hVal(self, KAx, alpha, b):

313 hVal = numpy.matmul(alpha.T, KAx)[0, 0] + b

314 return hVal

315

316

317 def __get_KAx(self, A, x, mu):

318 KAx = numpy.zeros((A.shape[1], 1))

319 for rowIdx in range(KAx.shape[0]):

320 x1 = A[:, rowIdx:rowIdx+1]

321 val = self.__calc_gaussian(x1, x, mu)

322 KAx[rowIdx, 0] = val

323 return KAx

324

325

326

327 class PeaksPlot(object):

328

329 def peaks_plot(self, X, Y_, ssvrObj, alpha, b):

330 surfX1 = numpy.linspace(numpy.min(X[:, 0]), numpy.max(X[:, 0]), 50)

331 surfX2 = numpy.linspace(numpy.min(X[:, 1]), numpy.max(X[:, 1]), 50)

332 surfX1, surfX2 = numpy.meshgrid(surfX1, surfX2)

333 surfY_ = peaks(surfX1, surfX2)

334

335 surfY = numpy.zeros(surfX1.shape)

336 for rowIdx in range(surfY.shape[0]):

337 for colIdx in range(surfY.shape[1]):

338 surfY[rowIdx, colIdx] = ssvrObj.get_estimation((surfX1[rowIdx, colIdx], surfX2[rowIdx, colIdx]), alpha, b)

339

340 fig = plt.figure(figsize=(10, 3))

341 ax1 = plt.subplot(1, 2, 1, projection="3d")

342 ax2 = plt.subplot(1, 2, 2, projection="3d")

343

344 norm = plt.Normalize(surfY_.min(), surfY_.max())

345 colors = plt.cm.rainbow(norm(surfY_))

346 surf = ax1.plot_surface(surfX1, surfX2, surfY_, facecolors=colors, shade=True, alpha=0.5)

347 surf.set_facecolor("white")

348 ax1.scatter3D(X[:, 0:1], X[:, 1:2], Y_, s=1, c="r")

349 ax1.set(xlabel="$x_1$", ylabel="$x_2$", zlabel="$f(x_1, x_2)$", xlim=(-3, 3), ylim=(-3, 3), zlim=(-8, 8))

350 ax1.set(title="Original Function")

351 ax1.view_init(0, 0)

352

353 norm2 = plt.Normalize(surfY.min(), surfY.max())

354 colors2 = plt.cm.rainbow(norm2(surfY))

355 surf2 = ax2.plot_surface(surfX1, surfX2, surfY, facecolors=colors2, shade=True, alpha=0.5)

356 surf2.set_facecolor("white")

357 ax2.scatter3D(X[:, 0:1], X[:, 1:2], Y_, s=1, c="r")

358 ax2.set(xlabel="$x_1$", ylabel="$x_2$", zlabel="$f(x_1, x_2)$", xlim=(-3, 3), ylim=(-3, 3), zlim=(-8, 8))

359 ax2.set(title="Estimated Function")

360 ax2.view_init(0, 0)

361

362 fig.tight_layout()

363 fig.savefig("peaks_plot.png", dpi=100)

364

365

366

367 if __name__ == "__main__":

368 ssvrObj = SSVR(X, Yerr, c=50, mu=1, epsilon=0.5)

369 alpha, b, tab = ssvrObj.optimize()

370

371 ppObj = PeaksPlot()

372 ppObj.peaks_plot(X, Yerr, ssvrObj, alpha, b)

下面给出$p_{\epsilon}^2(x, \beta)$的1阶及2阶导数:

下面给出$p_{\epsilon}^2(x, \beta)$的1阶及2阶导数:

View Code

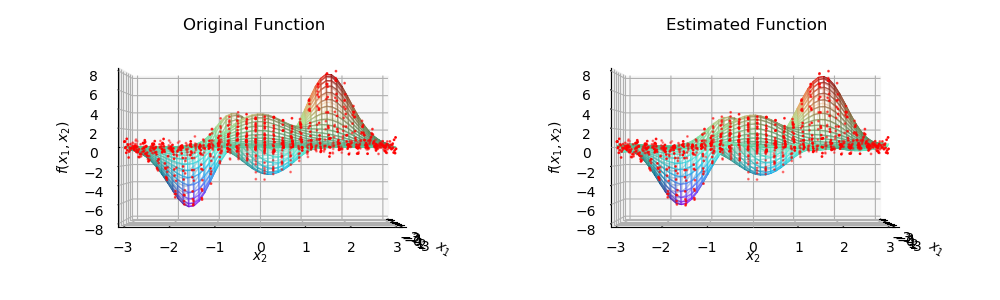

View Code 左侧为样本点分布及原始函数图像, 右侧为样本点分布及估计函数图像.

左侧为样本点分布及原始函数图像, 右侧为样本点分布及估计函数图像.