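Deploying ZooKeeper and Kafka clusters on Kubernetes

This article uses a publicly available image: mirrorgooglecontainers/kubernetes-zookeeper:1.0-3.4.10

Prepare shared storage (NFS, GlusterFS, SeaweedFS, or similar) and mount it on the worker nodes.

This walkthrough uses the SeaweedFS (seaweed) distributed file system.

Mount it on the node:

    ./weed mount -filer=192.168.11.103:8801 -dir=/data/ -filer.path=/ &

Prepare the Kubernetes cluster environment: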

    [root@k8s-master sts]# kubectl get nodes -o wide
    NAME         STATUS   ROLES    AGE   VERSION   INTERNAL-IP      EXTERNAL-IP   OS-IMAGE                KERNEL-VERSION          CONTAINER-RUNTIME
    k8s-master   Ready    master   97d   v1.15.0   192.168.10.171   <none>        CentOS Linux 7 (Core)   3.10.0-862.el7.x86_64   docker://19.3.1
    k8s-node1    Ready    node     97d   v1.15.0   192.168.11.63    <none>        CentOS Linux 7 (Core)   3.10.0-862.el7.x86_64   docker://19.3.1
    [root@k8s-master sts]#

Create the PVs

The PVs are backed by hostPath directories. Make sure the node has already mounted the shared storage; a plain local directory also works.

    [root@k8s-master sts]# cat zk-pv.yaml
    # PersistentVolumes are cluster-scoped, so metadata.namespace is not set.
    kind: PersistentVolume
    apiVersion: v1
    metadata:
      name: pv-zk1
      annotations:
        volume.beta.kubernetes.io/storage-class: "anything"
      labels:
        type: local
    spec:
      capacity:
        storage: 3Gi
      accessModes:
        - ReadWriteOnce
      hostPath:
        path: "/data/zookeeper1"
      persistentVolumeReclaimPolicy: Recycle
    ---
    kind: PersistentVolume
    apiVersion: v1
    metadata:
      name: pv-zk2
      annotations:
        volume.beta.kubernetes.io/storage-class: "anything"
      labels:
        type: local
    spec:
      capacity:
        storage: 3Gi
      accessModes:
        - ReadWriteOnce
      hostPath:
        path: "/data/zookeeper2"
      persistentVolumeReclaimPolicy: Recycle
    ---
    kind: PersistentVolume
    apiVersion: v1
    metadata:
      name: pv-zk3
      annotations:
        volume.beta.kubernetes.io/storage-class: "anything"
      labels:
        type: local
    spec:
      capacity:
        storage: 3Gi
      accessModes:
        - ReadWriteOnce
      hostPath:
        path: "/data/zookeeper3"
      persistentVolumeReclaimPolicy: Recycle
    [root@k8s-master sts]#
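The three PVs differ only in name and host path, so they can also be generated rather than written by hand. The following is a minimal sketch, not part of the original setup: the function names `gen_pv` and `gen_zk_pvs` are hypothetical, and the field values are copied from the manifest in this article.

```shell
# Hypothetical helper: print one hostPath PersistentVolume manifest.
# Arguments: <pv-name> <host-path> <size>
gen_pv() {
  cat <<EOF
---
kind: PersistentVolume
apiVersion: v1
metadata:
  name: $1
  annotations:
    volume.beta.kubernetes.io/storage-class: "anything"
  labels:
    type: local
spec:
  capacity:
    storage: $3
  accessModes:
    - ReadWriteOnce
  hostPath:
    path: "$2"
  persistentVolumeReclaimPolicy: Recycle
EOF
}

# Print the three ZooKeeper PVs used in this article.
gen_zk_pvs() {
  for i in 1 2 3; do
    gen_pv "pv-zk$i" "/data/zookeeper$i" 3Gi
  done
}
```

On a machine with cluster access, the output could be piped straight into kubectl, for example `gen_zk_pvs | kubectl apply -f -`.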

The result:

    [root@k8s-master sts]# kubectl get pv
    NAME     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
    pv-zk1   3Gi        RWO            Recycle          Available           anything                5s
    pv-zk2   3Gi        RWO            Recycle          Available           anything                5s
    pv-zk3   3Gi        RWO            Recycle          Available           anything                5s

Create the ZooKeeper StatefulSet:

    [root@k8s-master sts]# cat zk-sts.yaml
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: zk-hs
      namespace: bigdata
      labels:
        app: zk
    spec:
      ports:
      - port: 2888
        name: server
      - port: 3888
        name: leader-election
      clusterIP: None
      selector:
        app: zk
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: zk-cs
      namespace: bigdata
      labels:
        app: zk
    spec:
      ports:
      - port: 2181
        name: client
      selector:
        app: zk
    ---
    apiVersion: policy/v1beta1
    kind: PodDisruptionBudget
    metadata:
      name: zk-pdb
      namespace: bigdata
    spec:
      selector:
        matchLabels:
          app: zk
      maxUnavailable: 1
    ---
    # apps/v1beta1 works on the v1.15 cluster used here. It was removed in
    # Kubernetes 1.16; newer clusters need apps/v1, which also requires spec.selector.
    apiVersion: apps/v1beta1
    kind: StatefulSet
    metadata:
      name: zk
      namespace: bigdata
    spec:
      serviceName: zk-hs
      replicas: 3
      podManagementPolicy: Parallel
      updateStrategy:
        type: RollingUpdate
      template:
        metadata:
          labels:
            app: zk
        spec:
          containers:
          - name: kubernetes-zookeeper
            imagePullPolicy: IfNotPresent
            image: "mirrorgooglecontainers/kubernetes-zookeeper:1.0-3.4.10"
            resources:
              requests:
                memory: "128Mi"
                cpu: "0.1"
            ports:
            - containerPort: 2181
              name: client
            - containerPort: 2888
              name: server
            - containerPort: 3888
              name: leader-election
            command:
            - sh
            - -c
            - "start-zookeeper \
              --servers=3 \
              --data_dir=/var/lib/zookeeper/data \
              --data_log_dir=/var/lib/zookeeper/data/log \
              --conf_dir=/opt/zookeeper/conf \
              --client_port=2181 \
              --election_port=3888 \
              --server_port=2888 \
              --tick_time=2000 \
              --init_limit=10 \
              --sync_limit=5 \
              --heap=512M \
              --max_client_cnxns=60 \
              --snap_retain_count=3 \
              --purge_interval=12 \
              --max_session_timeout=40000 \
              --min_session_timeout=4000 \
              --log_level=INFO"
            readinessProbe:
              exec:
                command:
                - sh
                - -c
                - "zookeeper-ready 2181"
              initialDelaySeconds: 10
              timeoutSeconds: 5
            livenessProbe:
              exec:
                command:
                - sh
                - -c
                - "zookeeper-ready 2181"
              initialDelaySeconds: 10
              timeoutSeconds: 5
            volumeMounts:
            - name: datadir
              mountPath: /var/lib/zookeeper
          securityContext:
            runAsUser: 1000
            fsGroup: 1000
      volumeClaimTemplates:
      - metadata:
          name: datadir
          annotations:
            volume.beta.kubernetes.io/storage-class: "anything"
        spec:
          accessModes: [ "ReadWriteOnce" ]
          resources:
            requests:
              storage: 3Gi
    [root@k8s-master sts]#

Source template: https://github.com/kow3ns/kubernetes-zookeeper/blob/master/manifests/zookeeper_mini.yaml

Note: the manifest above has been modified from the template.

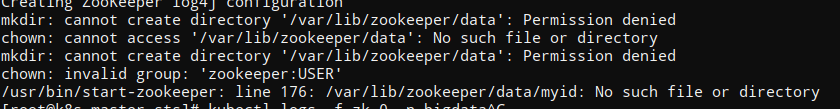

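Because the image runs as the zookeeper user by default, the pod may lack permission to create the zookeeper directory on the mounted volume.

Changing the permissions of the mounted directory fixes this. The quick and dirty way: chmod 777 ${dirname}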
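A narrower alternative to `chmod 777` is to hand the directory to the uid and gid the pod runs as (the manifest sets `runAsUser: 1000` and `fsGroup: 1000`). This is only a sketch: the helper name `prepare_data_dir` is hypothetical, and it assumes the mounted file system honours `chown`, which was not verified here for the SeaweedFS mount.

```shell
# Hypothetical helper: create a data directory and give it to one uid/gid
# instead of opening it to every user with chmod 777.
# Arguments: <dir> <uid> <gid>
prepare_data_dir() {
  mkdir -p "$1" || return 1
  chown "$2:$3" "$1" || return 1
  chmod 770 "$1"
}
```

Run as root on the node, a call such as `prepare_data_dir /data/zookeeper1 1000 1000` would prepare the first ZooKeeper directory.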

Once ZooKeeper has started successfully, the PVCs are bound to the PVs:

    [root@k8s-master sts]# kubectl get pods -n bigdata
    NAME   READY   STATUS    RESTARTS   AGE
    zk-0   1/1     Running   0          18m
    zk-1   1/1     Running   3          18m
    zk-2   1/1     Running   2          18m
    [root@k8s-master sts]# kubectl get pv
    NAME     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                  STORAGECLASS   REASON   AGE
    pv-zk1   3Gi        RWO            Recycle          Bound    bigdata/datadir-zk-2   anything                23m
    pv-zk2   3Gi        RWO            Recycle          Bound    bigdata/datadir-zk-0   anything                23m
    pv-zk3   3Gi        RWO            Recycle          Bound    bigdata/datadir-zk-1   anything                23m
    [root@k8s-master sts]# kubectl get pvc -n bigdata
    NAME           STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    datadir-zk-0   Bound    pv-zk2   3Gi        RWO            anything       23m
    datadir-zk-1   Bound    pv-zk3   3Gi        RWO            anything       23m
    datadir-zk-2   Bound    pv-zk1   3Gi        RWO            anything       23m
    [root@k8s-master sts]#

On the node, the data directory layout looks like this:

    [root@k8s-node1 data]# tree -L 3 /data/
    /data/
    ├── test
    │   ├── a.txt
    │   └── aaa.jpg
    ├── zookeeper1
    │   └── data
    │       ├── log
    │       ├── myid
    │       └── version-2
    ├── zookeeper2
    │   └── data
    │       ├── log
    │       ├── myid
    │       └── version-2
    └── zookeeper3
        └── data
            ├── log
            ├── myid
            └── version-2

    13 directories, 5 files
    [root@k8s-node1 data]#

Check the hostnames:

    [root@k8s-master sts]# for i in 0 1 2; do kubectl exec zk-$i -n bigdata -- hostname; done
    zk-0
    zk-1
    zk-2

Check myid:

    [root@k8s-master sts]# for i in 0 1 2; do echo "myid zk-$i"; kubectl exec zk-$i -n bigdata -- cat /var/lib/zookeeper/data/myid; done
    myid zk-0
    1
    myid zk-1
    2
    myid zk-2
    3

Check the fully qualified domain names:

    [root@k8s-master sts]# for i in 0 1 2; do kubectl exec zk-$i -n bigdata -- hostname -f; done
    zk-0.zk-hs.bigdata.svc.cluster.local
    zk-1.zk-hs.bigdata.svc.cluster.local
    zk-2.zk-hs.bigdata.svc.cluster.local

Check the configuration:

    [root@k8s-master sts]# kubectl exec zk-0 -n bigdata -- cat /opt/zookeeper/conf/zoo.cfg
    #This file was autogenerated DO NOT EDIT
    clientPort=2181
    dataDir=/var/lib/zookeeper/data
    dataLogDir=/var/lib/zookeeper/data/log
    tickTime=2000
    initLimit=10
    syncLimit=5
    maxClientCnxns=60
    minSessionTimeout=4000
    maxSessionTimeout=40000
    autopurge.snapRetainCount=3
    autopurge.purgeInteval=12
    server.1=zk-0.zk-hs.bigdata.svc.cluster.local:2888:3888
    server.2=zk-1.zk-hs.bigdata.svc.cluster.local:2888:3888
    server.3=zk-2.zk-hs.bigdata.svc.cluster.local:2888:3888
    [root@k8s-master sts]#
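The output above follows a simple pattern: each pod's myid is its StatefulSet ordinal plus one, and each `server.N` entry points at the pod's DNS name behind the headless Service `zk-hs`. The sketch below only reproduces that pattern for illustration; it is not the image's actual `start-zookeeper` script, and the function names `zk_myid` and `zk_servers` are made up for this example.

```shell
# Illustrative only: mirrors the pattern visible in the output above.

# myid for a pod name such as zk-2: the ordinal suffix plus one.
zk_myid() {
  echo $(( ${1##*-} + 1 ))
}

# server.N lines for <replicas> pods of StatefulSet "zk" behind the
# headless Service "zk-hs" in namespace <namespace>.
zk_servers() {
  i=0
  while [ "$i" -lt "$1" ]; do
    echo "server.$((i + 1))=zk-$i.zk-hs.$2.svc.cluster.local:2888:3888"
    i=$((i + 1))
  done
}

zk_myid zk-2
zk_servers 3 bigdata
```

Comparing this output with the real zoo.cfg is a quick sanity check after changing the replica count or the namespace.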

Test that the ZooKeeper ensemble works as a whole:

Write data on zk-1 and read it back:

    [root@k8s-master sts]# kubectl exec -ti zk-1 -n bigdata -- bash
    zookeeper@zk-1:/$ zkCli.sh
    Connecting to localhost:2181
    [zk: localhost:2181(CONNECTED) 0] create /hello world
    Created /hello
    [zk: localhost:2181(CONNECTED) 1] get /hello
    world
    cZxid = 0x100000004
    ctime = Wed Nov 13 02:41:56 UTC 2019
    mZxid = 0x100000004
    mtime = Wed Nov 13 02:41:56 UTC 2019
    pZxid = 0x100000004
    cversion = 0
    dataVersion = 0
    aclVersion = 0
    ephemeralOwner = 0x0
    dataLength = 5
    numChildren = 0
    [zk: localhost:2181(CONNECTED) 2]

Check on another node that the data has been replicated.

For example, read it on zk-0:

    [root@k8s-master sts]# kubectl exec -ti zk-0 -n bigdata -- bash
    zookeeper@zk-0:/$ zkCli.sh
    Connecting to localhost:2181
    [zk: localhost:2181(CONNECTED) 0] ls /
    [hello, zookeeper]
    [zk: localhost:2181(CONNECTED) 1] ls /hello
    []
    [zk: localhost:2181(CONNECTED) 2] get /hello
    world
    cZxid = 0x100000004
    ctime = Wed Nov 13 02:41:56 UTC 2019
    mZxid = 0x100000004
    mtime = Wed Nov 13 02:41:56 UTC 2019
    pZxid = 0x100000004
    cversion = 0
    dataVersion = 0
    aclVersion = 0
    ephemeralOwner = 0x0
    dataLength = 5
    numChildren = 0
    [zk: localhost:2181(CONNECTED) 3]

Usage:

Point your applications at the ZooKeeper cluster.

Deploying the Kafka cluster

Create the PVs

    [root@k8s-master sts]# cat kafka-pv.yaml
    # PersistentVolumes are cluster-scoped, so metadata.namespace is not set.
    kind: PersistentVolume
    apiVersion: v1
    metadata:
      name: pv-kafka1
      annotations:
        volume.beta.kubernetes.io/storage-class: "anything"
      labels:
        type: local
    spec:
      capacity:
        storage: 5Gi
      accessModes:
        - ReadWriteOnce
      hostPath:
        path: "/data/kafka1"
      persistentVolumeReclaimPolicy: Recycle
    ---
    kind: PersistentVolume
    apiVersion: v1
    metadata:
      name: pv-kafka2
      annotations:
        volume.beta.kubernetes.io/storage-class: "anything"
      labels:
        type: local
    spec:
      capacity:
        storage: 5Gi
      accessModes:
        - ReadWriteOnce
      hostPath:
        path: "/data/kafka2"
      persistentVolumeReclaimPolicy: Recycle
    ---
    kind: PersistentVolume
    apiVersion: v1
    metadata:
      name: pv-kafka3
      annotations:
        volume.beta.kubernetes.io/storage-class: "anything"
      labels:
        type: local
    spec:
      capacity:
        storage: 5Gi
      accessModes:
        - ReadWriteOnce
      hostPath:
        path: "/data/kafka3"
      persistentVolumeReclaimPolicy: Recycle
    [root@k8s-master sts]#

Create the Kafka StatefulSet

    [root@k8s-master sts]# cat kafka-sts.yaml
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: kafka-hs
      namespace: bigdata
      labels:
        app: kafka
    spec:
      ports:
      - port: 9093
        name: server
      clusterIP: None
      selector:
        app: kafka
    ---
    apiVersion: policy/v1beta1
    kind: PodDisruptionBudget
    metadata:
      name: kafka-pdb
      namespace: bigdata
    spec:
      selector:
        matchLabels:
          app: kafka
      maxUnavailable: 1
    ---
    # apps/v1beta1 works on the v1.15 cluster used here. It was removed in
    # Kubernetes 1.16; newer clusters need apps/v1, which also requires spec.selector.
    apiVersion: apps/v1beta1
    kind: StatefulSet
    metadata:
      name: kafka
      namespace: bigdata
    spec:
      serviceName: kafka-hs
      replicas: 3
      podManagementPolicy: Parallel
      updateStrategy:
        type: RollingUpdate
      template:
        metadata:
          labels:
            app: kafka
        spec:
          terminationGracePeriodSeconds: 300
          containers:
          - name: k8skafka
            imagePullPolicy: IfNotPresent
            image: mirrorgooglecontainers/kubernetes-kafka:1.0-10.2.1
            resources:
              requests:
                memory: "256Mi"
                cpu: "0.1"
            ports:
            - containerPort: 9093
              name: server
            command:
            - sh
            - -c
            - "exec kafka-server-start.sh /opt/kafka/config/server.properties --override broker.id=${HOSTNAME##*-} \
              --override listeners=PLAINTEXT://:9093 \
              --override zookeeper.connect=zk-cs.bigdata.svc.cluster.local:2181 \
              --override log.dir=/var/lib/kafka \
              --override auto.create.topics.enable=true \
              --override auto.leader.rebalance.enable=true \
              --override background.threads=10 \
              --override compression.type=producer \
              --override delete.topic.enable=false \
              --override leader.imbalance.check.interval.seconds=300 \
              --override leader.imbalance.per.broker.percentage=10 \
              --override log.flush.interval.messages=9223372036854775807 \
              --override log.flush.offset.checkpoint.interval.ms=60000 \
              --override log.flush.scheduler.interval.ms=9223372036854775807 \
              --override log.retention.bytes=-1 \
              --override log.retention.hours=168 \
              --override log.roll.hours=168 \
              --override log.roll.jitter.hours=0 \
              --override log.segment.bytes=1073741824 \
              --override log.segment.delete.delay.ms=60000 \
              --override message.max.bytes=1000012 \
              --override min.insync.replicas=1 \
              --override num.io.threads=8 \
              --override num.network.threads=3 \
              --override num.recovery.threads.per.data.dir=1 \
              --override num.replica.fetchers=1 \
              --override offset.metadata.max.bytes=4096 \
              --override offsets.commit.required.acks=-1 \
              --override offsets.commit.timeout.ms=5000 \
              --override offsets.load.buffer.size=5242880 \
              --override offsets.retention.check.interval.ms=600000 \
              --override offsets.retention.minutes=1440 \
              --override offsets.topic.compression.codec=0 \
              --override offsets.topic.num.partitions=50 \
              --override offsets.topic.replication.factor=3 \
              --override offsets.topic.segment.bytes=104857600 \
              --override queued.max.requests=500 \
              --override quota.consumer.default=9223372036854775807 \
              --override quota.producer.default=9223372036854775807 \
              --override replica.fetch.min.bytes=1 \
              --override replica.fetch.wait.max.ms=500 \
              --override replica.high.watermark.checkpoint.interval.ms=5000 \
              --override replica.lag.time.max.ms=10000 \
              --override replica.socket.receive.buffer.bytes=65536 \
              --override replica.socket.timeout.ms=30000 \
              --override request.timeout.ms=30000 \
              --override socket.receive.buffer.bytes=102400 \
              --override socket.request.max.bytes=104857600 \
              --override socket.send.buffer.bytes=102400 \
              --override unclean.leader.election.enable=true \
              --override zookeeper.session.timeout.ms=6000 \
              --override zookeeper.set.acl=false \
              --override broker.id.generation.enable=true \
              --override connections.max.idle.ms=600000 \
              --override controlled.shutdown.enable=true \
              --override controlled.shutdown.max.retries=3 \
              --override controlled.shutdown.retry.backoff.ms=5000 \
              --override controller.socket.timeout.ms=30000 \
              --override default.replication.factor=1 \
              --override fetch.purgatory.purge.interval.requests=1000 \
              --override group.max.session.timeout.ms=300000 \
              --override group.min.session.timeout.ms=6000 \
              --override inter.broker.protocol.version=0.10.2-IV0 \
              --override log.cleaner.backoff.ms=15000 \
              --override log.cleaner.dedupe.buffer.size=134217728 \
              --override log.cleaner.delete.retention.ms=86400000 \
              --override log.cleaner.enable=true \
              --override log.cleaner.io.buffer.load.factor=0.9 \
              --override log.cleaner.io.buffer.size=524288 \
              --override log.cleaner.io.max.bytes.per.second=1.7976931348623157E308 \
              --override log.cleaner.min.cleanable.ratio=0.5 \
              --override log.cleaner.min.compaction.lag.ms=0 \
              --override log.cleaner.threads=1 \
              --override log.cleanup.policy=delete \
              --override log.index.interval.bytes=4096 \
              --override log.index.size.max.bytes=10485760 \
              --override log.message.timestamp.difference.max.ms=9223372036854775807 \
              --override log.message.timestamp.type=CreateTime \
              --override log.preallocate=false \
              --override log.retention.check.interval.ms=300000 \
              --override max.connections.per.ip=2147483647 \
              --override num.partitions=3 \
              --override producer.purgatory.purge.interval.requests=1000 \
              --override replica.fetch.backoff.ms=1000 \
              --override replica.fetch.max.bytes=1048576 \
              --override replica.fetch.response.max.bytes=10485760 \
              --override reserved.broker.max.id=1000 "
            env:
            - name: KAFKA_HEAP_OPTS
              value: "-Xmx256M -Xms256M"
            - name: KAFKA_OPTS
              value: "-Dlogging.level=INFO"
            volumeMounts:
            - name: datadir
              mountPath: /var/lib/kafka
            readinessProbe:
              exec:
                command:
                - sh
                - -c
                - "/opt/kafka/bin/kafka-broker-api-versions.sh --bootstrap-server=localhost:9093"
          securityContext:
            runAsUser: 1000
            fsGroup: 1000
      volumeClaimTemplates:
      - metadata:
          name: datadir
          annotations:
            volume.beta.kubernetes.io/storage-class: "anything"
        spec:
          accessModes: [ "ReadWriteOnce" ]
          resources:
            requests:
              storage: 5Gi
    [root@k8s-master sts]#

Note: adjust the following for your environment:

--override zookeeper.connect=zk-cs.bigdata.svc.cluster.local:2181 (the ZooKeeper address)

image: mirrorgooglecontainers/kubernetes-kafka:1.0-10.2.1 (the image location)
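One detail of the manifest worth spelling out is `broker.id=${HOSTNAME##*-}`. This is standard shell parameter expansion: `##*-` strips the longest prefix ending in `-`, leaving the StatefulSet ordinal, so kafka-0 gets broker id 0, kafka-1 gets 1, and so on. A minimal sketch, with a hypothetical function name `broker_id` used only for this illustration:

```shell
# Illustration of the ${HOSTNAME##*-} expansion used in the manifest.
# Argument: a pod hostname such as kafka-2
broker_id() {
  echo "${1##*-}"
}

broker_id kafka-0
broker_id kafka-2
```

Because the longest matching prefix is removed, a hostname with several hyphens still yields only the trailing ordinal.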

If all goes well, the pods come up:

    [root@k8s-master sts]# kubectl get pods -n bigdata
    NAME      READY   STATUS    RESTARTS   AGE
    kafka-0   1/1     Running   0          3m30s
    kafka-1   1/1     Running   0          3m30s
    kafka-2   1/1     Running   0          3m30s
    zk-0      1/1     Running   0          139m
    zk-1      1/1     Running   3          139m
    zk-2      1/1     Running   2          139m
    [root@k8s-master sts]#

Check the brokers through ZooKeeper:

    [root@k8s-master sts]# kubectl exec -ti zk-1 -n bigdata -- bash
    zookeeper@zk-1:/$ zkCli.sh
    Connecting to localhost:2181
    [zk: localhost:2181(CONNECTED) 0] ls /
    [cluster, controller, controller_epoch, brokers, zookeeper, admin, isr_change_notification, consumers, hello, config]
    [zk: localhost:2181(CONNECTED) 1] ls /brokers
    [ids, topics, seqid]
    [zk: localhost:2181(CONNECTED) 2] ls /brokers/ids
    [0, 1, 2]
    [zk: localhost:2181(CONNECTED) 3]
    [zk: localhost:2181(CONNECTED) 3] get /brokers/ids/0
    {"listener_security_protocol_map":{"PLAINTEXT":"PLAINTEXT"},"endpoints":["PLAINTEXT://kafka-0.kafka-hs.bigdata.svc.cluster.local:9093"],"jmx_port":-1,"host":"kafka-0.kafka-hs.bigdata.svc.cluster.local","timestamp":"1573619460916","port":9093,"version":4}
    cZxid = 0x10000004b
    ctime = Wed Nov 13 04:31:00 UTC 2019
    mZxid = 0x10000004b
    mtime = Wed Nov 13 04:31:00 UTC 2019
    pZxid = 0x10000004b
    cversion = 0
    dataVersion = 0
    aclVersion = 0
    ephemeralOwner = 0x16e628b4c0a0006
    dataLength = 254
    numChildren = 0
    [zk: localhost:2181(CONNECTED) 4] get /brokers/ids/1
    {"listener_security_protocol_map":{"PLAINTEXT":"PLAINTEXT"},"endpoints":["PLAINTEXT://kafka-1.kafka-hs.bigdata.svc.cluster.local:9093"],"jmx_port":-1,"host":"kafka-1.kafka-hs.bigdata.svc.cluster.local","timestamp":"1573619460909","port":9093,"version":4}
    cZxid = 0x100000048
    ctime = Wed Nov 13 04:31:00 UTC 2019
    mZxid = 0x100000048
    mtime = Wed Nov 13 04:31:00 UTC 2019
    pZxid = 0x100000048
    cversion = 0
    dataVersion = 0
    aclVersion = 0
    ephemeralOwner = 0x36e628b4c540004
    dataLength = 254
    numChildren = 0
    [zk: localhost:2181(CONNECTED) 5] get /brokers/ids/2
    {"listener_security_protocol_map":{"PLAINTEXT":"PLAINTEXT"},"endpoints":["PLAINTEXT://kafka-2.kafka-hs.bigdata.svc.cluster.local:9093"],"jmx_port":-1,"host":"kafka-2.kafka-hs.bigdata.svc.cluster.local","timestamp":"1573619460932","port":9093,"version":4}
    cZxid = 0x10000004e
    ctime = Wed Nov 13 04:31:00 UTC 2019
    mZxid = 0x10000004e
    mtime = Wed Nov 13 04:31:00 UTC 2019
    pZxid = 0x10000004e
    cversion = 0
    dataVersion = 0
    aclVersion = 0
    ephemeralOwner = 0x26e628b922c0005
    dataLength = 254
    numChildren = 0
    [zk: localhost:2181(CONNECTED) 6]

Basic Kafka operations test

    [root@k8s-master sts]# kubectl exec -it kafka-0 -n bigdata -- sh
    $ pwd
    /opt/kafka/bin
    # Create the test topic
    $ ./kafka-topics.sh --create --topic test --zookeeper zk-cs.bigdata.svc.cluster.local:2181 --partitions 3 --replication-factor 3
    Created topic "test".
    # List topics
    $ ./kafka-topics.sh --zookeeper zk-cs.bigdata.svc.cluster.local:2181 --list
    test
    $

Simulate a producer and a consumer:

    # Producer
    $ ./kafka-console-producer.sh --topic test --broker-list kafka-0.kafka-hs.bigdata.svc.cluster.local:9093,kafka-1.kafka-hs.bigdata.svc.cluster.local:9093,kafka-2.kafka-hs.bigdata.svc.cluster.local:9093
    this is a test message
    hell world
    # Consumer reads the data
    $ ./kafka-console-consumer.sh --topic test --zookeeper zk-cs.bigdata.svc.cluster.local:2181 --from-beginning
    Using the ConsoleConsumer with old consumer is deprecated and will be removed in a future major release. Consider using the new consumer by passing [bootstrap-server] instead of [zookeeper].
    hell world
    this is a test message

Example addresses for connecting applications to Kafka:

From inside the Kubernetes cluster:

broker-list: kafka-0.kafka-hs.bigdata.svc.cluster.local:9093,kafka-1.kafka-hs.bigdata.svc.cluster.local:9093,kafka-2.kafka-hs.bigdata.svc.cluster.local:9093

zookeeper: zk-cs.bigdata.svc.cluster.local:2181
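The in-cluster broker list is just the per-pod DNS names behind the headless Service `kafka-hs`, joined with commas. If the replica count or namespace changes, it can be rebuilt with a small loop. This is a sketch with a hypothetical function name `kafka_brokers`; the naming pattern is taken from the addresses listed above.

```shell
# Hypothetical helper: build the comma-separated broker list for the
# StatefulSet "kafka" behind the headless Service "kafka-hs".
# Arguments: <replicas> <namespace> <port>
kafka_brokers() {
  i=0
  list=""
  while [ "$i" -lt "$1" ]; do
    # ${list:+$list,} adds a comma only when the list is non-empty.
    list="${list:+$list,}kafka-$i.kafka-hs.$2.svc.cluster.local:$3"
    i=$((i + 1))
  done
  echo "$list"
}

kafka_brokers 3 bigdata 9093
```

The result can be passed directly to client options such as `--broker-list`.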

From outside the Kubernetes cluster:

broker-list:

zookeeper:

Via NodePort:

zk:

    apiVersion: v1
    kind: Service
    metadata:
      name: zk-cs
      namespace: bigdata
      labels:
        app: zk
    spec:
      type: NodePort
      ports:
      - port: 2181
        targetPort: 2181
        name: client
        nodePort: 32181
      selector:
        app: zk

Access from outside the cluster:

    ./bin/zkCli.sh -server 192.168.100.102:32181

    [zk: 192.168.100.102:32181(CONNECTED) 1] ls /
    [cluster, controller_epoch, brokers, zookeeper, admin, isr_change_notification, consumers, hello, config]
    [zk: 192.168.100.102:32181(CONNECTED) 2] get /hello
    world
    cZxid = 0x100000004
    ctime = Wed Nov 13 10:41:56 CST 2019
    mZxid = 0x100000004
    mtime = Wed Nov 13 10:41:56 CST 2019
    pZxid = 0x100000004
    cversion = 0
    dataVersion = 0
    aclVersion = 0
    ephemeralOwner = 0x0
    dataLength = 5
    numChildren = 0
    [zk: 192.168.100.102:32181(CONNECTED) 3]