A quick look at TabularSemanticParsing

Machine

- Our implementation has been tested with Pytorch 1.7 and Cuda 11 with a single GPU. So a fresh machine had to be configured.

root@haomeiya006:~# nvidia-smi

Wed Dec 30 17:53:46 2020

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 450.80.02 Driver Version: 450.80.02 CUDA Version: 11.0 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|===============================+======================+======================|

| 0 Tesla T4 On | 00000000:00:07.0 Off | 0 |

| N/A 29C P8 9W / 70W | 0MiB / 15109MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=============================================================================|

| No running processes found |

+-----------------------------------------------------------------------------+
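To compare the machine against the tested configuration (CUDA 11), the versions can be read from the `nvidia-smi` header in code. This is only a minimal sketch: the function names are invented for illustration, and it assumes the header layout shown above. Note that the "CUDA Version" field is the highest CUDA version the driver supports, not necessarily the installed toolkit.

```python
import re
import subprocess


def parse_nvidia_smi_header(text):
    """Return (driver_version, cuda_version) from nvidia-smi output, or None."""
    match = re.search(
        r"Driver Version:\s*([\d.]+)\s+CUDA Version:\s*([\d.]+)", text
    )
    return (match.group(1), match.group(2)) if match else None


def cuda_major_at_least(text, required_major=11):
    """True if the CUDA version in the header is at least required_major."""
    parsed = parse_nvidia_smi_header(text)
    if parsed is None:
        return False
    return int(parsed[1].split(".")[0]) >= required_major


def read_nvidia_smi():
    """Run nvidia-smi and return its text output (needs nvidia-smi on PATH)."""
    result = subprocess.run(
        ["nvidia-smi"], capture_output=True, text=True, check=True
    )
    return result.stdout
```

On the GPU machine, `cuda_major_at_least(read_nvidia_smi())` would then report whether the driver meets the CUDA 11 requirement.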

Virtual environment

(base) xuehp@haomeiya006:~/git/TabularSemanticParsing$ conda create --name TabularSemanticParsing python=3.7.5

conda activate TabularSemanticParsing

(TabularSemanticParsing) xuehp@haomeiya006:~/git/TabularSemanticParsing$ pip install -r requirements.txt

Code

xuehp@haomeiya006:~/git$ git clone --depth=1 https://github.com/xuehuiping/TabularSemanticParsing.git

Datasets

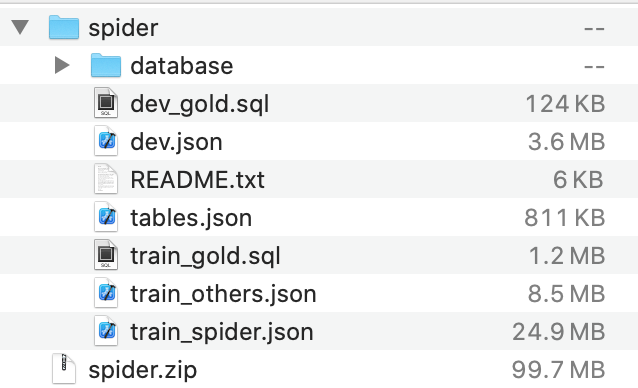

- Downloaded the Spider dataset from https://drive.google.com/u/1/uc?export=download&confirm=pft3&id=1_AckYkinAnhqmRQtGsQgUKAnTHxxX5J0 (spider.zip, 95M): success

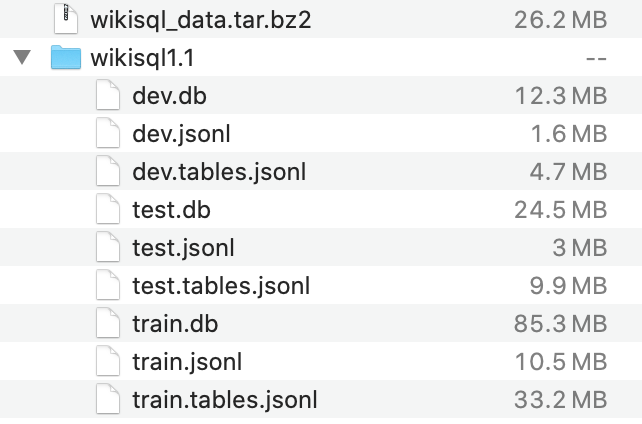

- Downloaded the WikiSQL dataset from https://github.com/salesforce/WikiSQL/blob/master/data.tar.bz2 (data.tar.bz2, 25M): success
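Both downloads still have to be unpacked before processing. The sketch below is one possible way to do that with the standard library; `extract_archive` is a hypothetical helper, and the destination directories in the usage note are placeholders that should be adjusted to whatever layout the repository expects. It also rejects files that are not real archives, which catches the case where a browser or `wget` saved an HTML page instead of the archive.

```python
import tarfile
import zipfile
from pathlib import Path


def extract_archive(archive_path, dest_dir):
    """Extract a .zip or tar archive (e.g. .tar.bz2) into dest_dir.

    Raises ValueError if the file is neither kind of archive.
    """
    archive = str(archive_path)
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)

    if zipfile.is_zipfile(archive):
        with zipfile.ZipFile(archive) as zf:
            zf.extractall(str(dest))
    elif tarfile.is_tarfile(archive):
        # "r:*" lets tarfile detect the compression (bz2, gz, ...) itself.
        with tarfile.open(archive, "r:*") as tf:
            tf.extractall(str(dest))
    else:
        raise ValueError(f"{archive} is not a zip or tar archive")
    return dest
```

Usage would look like `extract_archive("spider.zip", "data")` and `extract_archive("data.tar.bz2", "data")`, with both destinations being assumptions rather than paths confirmed by these notes.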

Data processing

./experiment-bridge.sh configs/bridge/wikisql-bridge-bert-large.sh --process_data 0

The WikiSQL dataset was processed successfully.

(The script in the source code contains a spelling error.)

Training

(TabularSemanticParsing) xuehp@haomeiya006:~/git/TabularSemanticParsing$ ./experiment-bridge.sh configs/bridge/wikisql-bridge-bert-large.sh --train 0

The command actually executed:

run python3 -m src.experiments --train --data_dir data/wikisql1.1/ --db_dir data/wikisql1.1/ --dataset_name wikisql --question_split --question_only --denormalize_sql --omit_from_clause --table_shuffling --use_lstm_encoder --use_meta_data_encoding --use_picklist --anchor_text_match_threshold 0.85 --top_k_picklist_matches 2 --num_random_tables_added 0 --save_best_model_only --schema_augmentation_factor 1 --data_augmentation_factor 1 --vocab_min_freq 0 --text_vocab_min_freq 0 --program_vocab_min_freq 0 --num_values_per_field 0 --max_in_seq_len 512 --max_out_seq_len 60 --model bridge --num_steps 30000 --curriculum_interval 0 --num_peek_steps 400 --num_accumulation_steps 3 --train_batch_size 16 --dev_batch_size 24 --encoder_input_dim 1024 --encoder_hidden_dim 512 --decoder_input_dim 512 --num_rnn_layers 1 --num_const_attn_layers 0 --emb_dropout_rate 0.3 --pretrained_lm_dropout_rate 0 --rnn_layer_dropout_rate 0.1 --rnn_weight_dropout_rate 0 --cross_attn_dropout_rate 0 --cross_attn_num_heads 8 --res_input_dropout_rate 0.2 --res_layer_dropout_rate 0 --ff_input_dropout_rate 0.4 --ff_hidden_dropout_rate 0.0 --pretrained_transformer bert-large-uncased --bert_finetune_rate 0.00005 --learning_rate 0.0003 --learning_rate_scheduler inverse-square --trans_learning_rate_scheduler inverse-square --warmup_init_lr 0.0003 --warmup_init_ft_lr 0 --num_warmup_steps 3000 --grad_norm 0.3 --decoding_algorithm beam-search --beam_size 64 --bs_alpha 1.0 --gpu 0
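The command above is hard to read as a single line. A small helper can turn it into a flag-to-value mapping for inspection; this is just a sketch, `parse_flags` is a made-up name, and it assumes every value-taking flag is followed by exactly one value, which holds for the command shown. The excerpt below is a shortened copy of that command.

```python
import shlex


def parse_flags(command):
    """Map each --flag in a command line to its value (True for bare switches)."""
    tokens = shlex.split(command)
    flags = {}
    i = 0
    while i < len(tokens):
        token = tokens[i]
        if token.startswith("--"):
            name = token[2:]
            has_value = i + 1 < len(tokens) and not tokens[i + 1].startswith("--")
            flags[name] = tokens[i + 1] if has_value else True
            i += 2 if has_value else 1
        else:
            i += 1  # skip non-flag tokens such as "python3" or "-m"
    return flags


# Shortened excerpt of the training command, for illustration only.
excerpt = (
    "python3 -m src.experiments --train --data_dir data/wikisql1.1/ "
    "--dataset_name wikisql --question_split --train_batch_size 16 "
    "--num_accumulation_steps 3 --pretrained_transformer bert-large-uncased "
    "--gpu 0"
)
flags = parse_flags(excerpt)
for name in sorted(flags):
    print(f"{name:28s} {flags[name]}")
```

Pasting the full command in place of `excerpt` gives a sorted table of all settings, which makes it easier to spot values such as `pretrained_transformer` that trigger a model download.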

```

Model directory created: /home/xuehp/git/TabularSemanticParsing/model/wikisql.bridge.lstm.meta.ts.ppl-0.85.2.dn.no_from.feat.bert-large-uncased.xavier-1024-512-512-16-3-0.0003-inv-sqr-0.0003-3000-5e-05-inv-sqr-0.0-3000-0.3-0.3-0.0-0.0-1-8-0.1-0.0-res-0.2-0.0-ff-0.4-0.0.201230-193241.ywos

Visualization directory created: /home/xuehp/git/TabularSemanticParsing/viz/wikisql.bridge.lstm.meta.ts.ppl-0.85.2.dn.no_from.feat.bert-large-uncased.xavier-1024-512-512-16-3-0.0003-inv-sqr-0.0003-3000-5e-05-inv-sqr-0.0-3000-0.3-0.3-0.0-0.0-1-8-0.1-0.0-res-0.2-0.0-ff-0.4-0.0.201230-193241.ywos

* text vocab size = 30522

* program vocab size = 99

pretrained_transformer = bert-large-uncased

fix_pretrained_transformer_parameters = False

Downloading: 0%| | 260k/1.34G [00:15<28:47:34, 13.0kB/s]

It hangs here...

It is probably downloading something, but this machine cannot reach the external network.

```
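One way to test the "no external network" guess is to try opening a TCP connection to the download host. The following is a rough sketch only: `can_reach` is a hypothetical helper, and the host name in the usage note is an assumption about where the pretrained weights come from, not something the log confirms. A successful connection also says nothing about throughput, so it can only rule out a complete block.

```python
import socket


def can_reach(host, port=443, timeout=5.0):
    """Return True if a TCP connection to host:port opens within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS failures.
        return False
```

For example, `can_reach("huggingface.co")` (assumed host) returning `False` would support the guess above, while `True` would point to a slow link instead.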

Tried again; same result.

```

Model directory created: /home/xuehp/git/TabularSemanticParsing/model/wikisql.bridge.lstm.meta.ts.ppl-0.85.2.dn.no_from.feat.bert-large-uncased.xavier-1024-512-512-16-3-0.0003-inv-sqr-0.0003-3000-5e-05-inv-sqr-0.0-3000-0.3-0.3-0.0-0.0-1-8-0.1-0.0-res-0.2-0.0-ff-0.4-0.0.201230-194649.y3mh

Visualization directory created: /home/xuehp/git/TabularSemanticParsing/viz/wikisql.bridge.lstm.meta.ts.ppl-0.85.2.dn.no_from.feat.bert-large-uncased.xavier-1024-512-512-16-3-0.0003-inv-sqr-0.0003-3000-5e-05-inv-sqr-0.0-3000-0.3-0.3-0.0-0.0-1-8-0.1-0.0-res-0.2-0.0-ff-0.4-0.0.201230-194649.y3mh

* text vocab size = 30522

* program vocab size = 99

pretrained_transformer = bert-large-uncased

fix_pretrained_transformer_parameters = False

Downloading: 0%| | 16.4k/1.34G [00:20<3000:45:50, 125B/s]

```
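The two progress bars can be turned into a rough time estimate to show why waiting is not an option. This is a back-of-the-envelope sketch: `eta_hours` is an illustrative name, the figures are the rounded values printed in the logs, and SI units (1G = 1e9 bytes) are assumed.

```python
def eta_hours(total_bytes, done_bytes, rate_bytes_per_s):
    """Hours left to fetch the remaining bytes at a constant rate."""
    if rate_bytes_per_s <= 0:
        raise ValueError("rate must be positive")
    return (total_bytes - done_bytes) / rate_bytes_per_s / 3600


# Figures copied from the two progress bars above.
first_try = eta_hours(1.34e9, 260e3, 13.0e3)   # 260k done at 13.0 kB/s
second_try = eta_hours(1.34e9, 16.4e3, 125)    # 16.4k done at 125 B/s
print(f"first attempt:  about {first_try:.1f} hours")
print(f"second attempt: about {second_try:.0f} hours")
```

The results come out near 29 hours and near 3,000 hours, in line with the 28:47:34 and 3000:45:50 remaining times in the logs, so the 1.34G file has to be obtained some other way (for example over a faster link or a proxy) before training can start.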