一次可行性测试

Windows:

IDEA、Navicat Premium15

Linux:

Hadoop伪分布式集群、PostgreSQL(Timescale)、Hive、Sqoop、Presto

创建关系表

hadoop01:

新建demo数据库:

su postgres

psql

create database demo;

\c demo

create extension timescaledb;

-- 用户表

-- id(主键)、姓名、性别、年龄、出生日期

create table person(

id int primary key,

name char(20) not null,

sex char(20) not null,

age int not null,

birthday char(20) not null

);

-- 地区表

-- 邮编、省份、城市

create table address(

zipcode int primary key,

province char(20) not null,

city char(20) not null

);

-- 订单表

-- 订单编号、商品、价格、邮编

create table orderID(

noun char(20) primary key,

goods char(20) not null,

price int not null,

zipcode int not null,

foreign key(zipcode) references address(zipcode)

);

-- 记录表

-- time、id、订单编号、电话

create table record(

time timestamptz not null,

id int not null,

noun char(20) not null,

tel char(20) not null,

foreign key(id) references person(id),

foreign key(noun) references orderID(noun),

primary key(time,noun)

);

SELECT create_hypertable('record', 'time');

在navicat中查看数据库与表是否创建完成

插入数据

向用户表插入数据

public class Person {

public static void main(String[] args) throws ClassNotFoundException, SQLException {

Class.forName("org.postgresql.Driver");

Connection conn = DriverManager.getConnection("jdbc:postgresql://192.168.115.130:5432/demo", "postgres", "201314");

PreparedStatement st = null;

for (int i = 1000; i < 10000; i++) {

String a =getBirthday();

String sql = "INSERT INTO person(id,name,sex,age,birthday) VALUES (?,?,?,?,?)";

st = conn.prepareStatement(sql); //预编译

st.setInt(1, i);

st.setString(2, getName());

st.setString(3, getSex());

st.setInt(4, getAge(a));

st.setString(5, getBirthday());

st.executeUpdate();

}

System.out.println("完成");

st.close();

conn.close();

}

//随机姓名

public static String getName() {

Random random = new Random();

String[] Surname = "赵, 钱, 孙, 李, 周, 吴, 郑, 王, 冯, 陈, 褚, 卫, 蒋, 沈, 韩, 杨, 朱, 秦, 尤, 许".split(",");

int index = random.nextInt(Surname.length - 1);

String name = Surname[index]; // 获得一个随机的姓氏

/* 从常用字中选取一个或两个字作为名 */

if (random.nextBoolean()) {

name += Person.getChinese() + Person.getChinese();

} else {

name += Person.getChinese();

}

return name;

}

public static String getChinese() {

String str = null;

int highPos, lowPos;

Random random = new Random();

highPos = (176 + Math.abs(random.nextInt(71)));// 区码,0xA0打头,从第16区开始,即0xB0=11*16=176,16~55一级汉字,56~87二级汉字

random = new Random();

lowPos = 161 + Math.abs(random.nextInt(94));// 位码,0xA0打头,范围第1~94列

byte[] bArr = new byte[2];

bArr[0] = (new Integer(highPos)).byteValue();

bArr[1] = (new Integer(lowPos)).byteValue();

try {

str = new String(bArr, "GB2312"); // 区位码组合成汉字

} catch (UnsupportedEncodingException e) {

e.printStackTrace();

}

return str;

}

//随机性别

public static String getSex() {

int randNum = new Random().nextInt(2) + 1;

return randNum == 1 ? "男" : "女";

}

//年龄

public static Integer getAge(String birthday) {

if ("".equals(birthday) || birthday == null) {

return null;

}

SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd");

String nowDate = sdf.format(new Date());

String[] nowDates = nowDate.split("-");// 当前时间

String[] dates = birthday.split("-");// 分割时间线 - 年[0],月[1],日[2]

int age = Integer.parseInt(nowDates[0]) - Integer.parseInt(dates[0]);// 年龄默认为当前时间和生日相减

if (dates.length >= 2) {// 根据月推算出年龄是否需要增加

// 如果当前月份大于生日月份,岁数不变,否则加一

Integer nowMonth = Integer.parseInt(nowDate.substring(5,7));

Integer birthMonth = Integer.parseInt(birthday.substring(5,7));

if (nowMonth < birthMonth)

age++;

if (!"dates.length >= 3 && nowMonth".equals(birthMonth)) {// 月份相同才计算对应年龄

// 如果天数大于当前生日月份,岁数不变,否则加一

Integer nowDay = Integer.parseInt(nowDates[2]);// 当前天数

Integer birDay = Integer.parseInt(dates[2]);// 生日天数

if (nowDay < birDay)

age++;

}

}

return age;

}

//出生日期

public static String getBirthday(){

Random rand = new Random();

SimpleDateFormat format = new SimpleDateFormat("yyyy-MM-dd");

Calendar cal = Calendar.getInstance();

cal.set(1961, 0, 1);

long start = cal.getTimeInMillis();

cal.set(2001, 0, 1);

long end = cal.getTimeInMillis();

java.util.Date d = new java.util.Date(start + (long)(rand.nextDouble() * (end - start)));

return format.format(d);

}

}

向地区表插入数据:

public class Address {

public static void main(String[] args) throws ClassNotFoundException, SQLException {

Class.forName("org.postgresql.Driver");

Connection conn = DriverManager.getConnection("jdbc:postgresql://192.168.115.130:5432/demo", "postgres", "201314");

PreparedStatement st = null;

String[] zipcode = ("100000,100010,100032,100080,100020," +

"300041,300171,300100,300202,300300," +

"400010,400080,404000,408000,402260," +

"430000,441000,434000,438000,442400," +

"210000,214000,213000,225000,215000," +

"510000,518000,519000,515000,512000," +

"610000,643000,621000,629000,510400," +

"310000,312000,314000,315000,325000," +

"201900,200003,201800,201200,200040," +

"140100,140200,140300,140400,140500").split(",");

String[] provice = "北京市,天津市,重庆市,湖北省,江苏省,广东省,四川省,浙江省,上海市,山西省".split(",");

String[] city = ("北京市,东城区,西城区,海淀区,朝阳区," +

"和平区,河东区,南开区,河西区,东丽区," +

"渝中区,大渡口,万州区,南川区,江津区," +

"武汉市,襄樊市,荆州市,黄冈市,神农架区," +

"南京市,无锡市,常州市,扬州市,苏州市," +

"广州市,深圳市,珠海市,汕头市,韶关市," +

"成都市,自贡市,绵阳市,遂宁市,攀枝花市," +

"杭州市,绍兴市,嘉兴市,宁波市,温州市," +

"宝山区,黄埔区,嘉定区,浦东新区,静安区," +

"太原市,大同市,阳泉市,长治市,晋城市").split(",");

for (int i = 0; i < 50; i++) {

String sql = "INSERT INTO address(zipcode,province,city) VALUES (?,?,?)";

st = conn.prepareStatement(sql);

st.setInt(1, Integer.valueOf(zipcode[i]));

st.setString(2, provice[i / 5]);

st.setString(3, city[i]);

st.executeUpdate();

}

System.out.println("完成");

st.close();

conn.close();

}

}

向订单表插入数据:

public class OrderID {

public static void main(String[] args) throws ClassNotFoundException, SQLException {

Class.forName("org.postgresql.Driver");

Connection conn = DriverManager.getConnection("jdbc:postgresql://192.168.115.130:5432/demo", "postgres", "201314");

PreparedStatement st = null;

String[] zipcode = ("100000,100010,100032,100080,100020," +

"300041,300171,300100,300202,300300," +

"400010,400080,404000,408000,402260," +

"430000,441000,434000,438000,442400," +

"210000,214000,213000,225000,215000," +

"510000,518000,519000,515000,512000," +

"610000,643000,621000,629000,510400," +

"310000,312000,314000,315000,325000," +

"201900,200003,201800,201200,200040," +

"140100,140200,140300,140400,140500").split(",");

String[] g = "苹果,雪梨,西瓜,柠檬,金桔,榴莲,火龙果,龙眼,青蛇果,芒果,桂圆,菠萝,车厘子,山竹,草莓".split(",");

int[] p = {4,2,1,4,3,6,5,5,9,7,10,2,25,16,12};

for (int i = 10000; i < 100000; i++) {

int randNum1 = new Random().nextInt(15);

int randNum2 = new Random().nextInt(50);

String sql = "INSERT INTO orderid(noun,goods,price,zipcode) VALUES (?,?,?,?)";

st = conn.prepareStatement(sql);

st.setString(1,"sf"+i);

st.setString(2,g[randNum1]);

st.setInt(3, p[randNum1]);

st.setInt(4, Integer.valueOf(zipcode[randNum2]));

st.executeUpdate();

}

System.out.println("完成");

st.close();

conn.close();

}

}

向记录表插入数据:

public class Record {

public static void main(String[] args) throws ClassNotFoundException, SQLException, InterruptedException {

Class.forName("org.postgresql.Driver");

Connection conn = DriverManager.getConnection("jdbc:postgresql://192.168.115.130:5432/demo", "postgres", "201314");

PreparedStatement st = null;

int a[] = new int[10000];

String b[] = new String[10000];

for (int i = 0; i < 10000; i++) {

a[i]=i;

b[i] = getTel();

}

for (int i = 10000; i < 50000; i++) {

Random random = new Random();

int s = random.nextInt(9999)%(9000) + 1000;

String sql = "INSERT INTO record(time,id,noun,tel) VALUES (NOW(),?,?,?)";

st = conn.prepareStatement(sql);

st.setInt(1,a[s]);

st.setString(2,"sf"+i);

st.setString(3, b[s]);

st.executeUpdate();

Thread.sleep(100);

}

System.out.println("完成");

st.close();

conn.close();

}

//随机电话号码

private static String getTel() {

String[] telFirst="134,135,136,137,138,139,150,151,152,157,158,159,130,131,132,155,156,133,153".split(",");

int index=getNum(0,telFirst.length-1);

String first=telFirst[index];

String second=String.valueOf(getNum(1,888)+10000).substring(1);

String third=String.valueOf(getNum(1,9100)+10000).substring(1);

return first+second+third;

}

public static int getNum(int start,int end)

{

return (int)(Math.random()*(end-start+1)+start);

}

}

数据迁移

用sqoop将数据迁移到hdfs中

cd /usr/local/sqoop/bin

./sqoop list-tables --connect jdbc:postgresql://hadoop01:5432/demo --driver org.postgresql.Driver --username postgres --password 201314

查看数据表中数据

./sqoop eval --connect jdbc:postgresql://hadoop01:5432/demo --driver org.postgresql.Driver --username postgres --password 201314 -e 'select * from person'

hdfs dfs -mkdir /user/demo

hdfs dfs -chmod 777 /user/demo

hdfs dfs -ls /user

导入使用查询语句查询的数据到指定目录,并指定分隔符

./sqoop import --connect jdbc:postgresql://hadoop01:5432/demo --username postgres --password 201314 --query 'select * from person where $CONDITIONS and 1=1' --target-dir /user/demo/person --fields-terminated-by '\t' --m 1

进入浏览器输入网址http://hadoop01:9870/explorer.html#/user/demo查看

继续导入:

./sqoop import --connect jdbc:postgresql://hadoop01:5432/demo --username postgres --password 201314 --query 'select * from address where $CONDITIONS and 1=1' --target-dir /user/demo/address --fields-terminated-by '\t' --m 1

./sqoop import --connect jdbc:postgresql://hadoop01:5432/demo --username postgres --password 201314 --query 'select * from orderid where $CONDITIONS and 1=1' --target-dir /user/demo/orderid --fields-terminated-by '\t' --m 1

./sqoop import --connect jdbc:postgresql://hadoop01:5432/demo --username postgres --password 201314 --query 'select * from record where $CONDITIONS and 1=1' --target-dir /user/demo/record --fields-terminated-by '\t' --m 1

完成。

用sqoop将数据迁移到hive中

cp /usr/local/hive/lib/hive-common-3.1.2.jar /usr/local/sqoop/lib

./sqoop import --connect jdbc:postgresql://hadoop01:5432/demo --username postgres --password 201314 --table person --hive-import --hive-overwrite --lines-terminated-by "\n" --fields-terminated-by "\t" --m 1

进入浏览器输入网址查看:http://hadoop01:9870/explorer.html#/user/hive/warehouse/

在navicat中查看hive数据库

继续导入:

./sqoop import --connect jdbc:postgresql://hadoop01:5432/demo --username postgres --password 201314 --table address --hive-import --hive-overwrite --lines-terminated-by "\n" --fields-terminated-by "\t" --m 1

./sqoop import --connect jdbc:postgresql://hadoop01:5432/demo --username postgres --password 201314 --table orderid --hive-import --hive-overwrite --lines-terminated-by "\n" --fields-terminated-by "\t" --m 1

./sqoop import --connect jdbc:postgresql://hadoop01:5432/demo --username postgres --password 201314 --table record --hive-import --hive-overwrite --lines-terminated-by "\n" --fields-terminated-by "\t" --m 1

完成。

查询数据

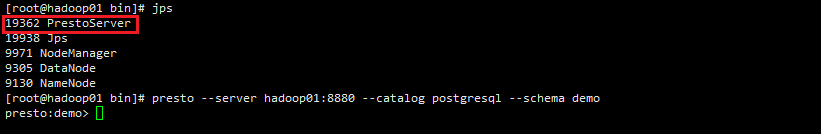

使用presto查询pgsql中的数据,idea连接presto查询pgsql中的数据

修改配置文件postgresql.properties

cd /usr/local/presto/etc/catalog

vim postgresql.properties

useUnicode=true&characterEncoding=utf8

launcher start //打开presto

presto --server hadoop01:8880 --catalog postgresql --schema demo //连接pgsql

单表:

1.查询用户表的前10条记录

select * from postgresql.public.person limit 10;

2.统计用户表中有多少条数据,其中男性多少,女性多少

select count(*) from postgresql.public.person;

select count(*) from postgresql.public.person where sex like '男%';

select count(*) from postgresql.public.person where sex like '女%';

select count(*) from person

union

select count(*) from person where sex like '男%'

union

select count(*) from person where sex like '女%';

此处不能使用sex='男',查询结果为0

3.查询地区表中的所有省份

select distinct province from postgresql.public.address;

4.查询订单表中价格高于10块的订单有多少条

select count(*) from postgresql.public.orderid where price>10;

5.查询记录表中id为8888的订单

select * from postgresql.public.record where id=8888;

多表:

6.查询id为8888的顾客的总消费

select record.noun,goods,price from postgresql.public.record,postgresql.public.orderid

where orderid.noun=record.noun and record.id=8888;

select sum(price) from postgresql.public.record,postgresql.public.orderid

where orderid.noun=record.noun and record.id=8888;

7.查询有多少人购买了西瓜

select count(*) from orderid where goods like '西瓜%';

select count(distinct id) from postgresql.public.record,postgresql.public.orderid

where orderid.noun=record.noun and orderid.goods like '西瓜%';

8.所有购买西瓜的这些人中,有多少来自北京的男性

select distinct person.id,sex,province from

postgresql.public.record,postgresql.public.orderid,postgresql.public.person,postgresql.public.address

where orderid.noun=record.noun and person.id=record.id

and address.zipcode=orderid.zipcode and orderid.goods like '西瓜%'

and person.sex like '男%' and address.province like '北京%';

统计人数

select count(distinct person.id) as count from

postgresql.public.record,postgresql.public.orderid,postgresql.public.person,postgresql.public.address

where orderid.noun=record.noun and person.id=record.id

and address.zipcode=orderid.zipcode and orderid.goods like '西瓜%'

and person.sex like '男%' and address.province like '北京%';

9.重庆渝中区的人在10:30~10:40这个时间段里购买最多的商品是什么,购买了多少次

select goods,count(goods) from orderid,address

where orderid.zipcode=address.zipcode and address.province like '重庆%' and address.city like '渝中%' group by goods order by count(goods) desc;

select goods,count(goods) from orderid,address,record

where orderid.zipcode=address.zipcode and address.province like '重庆%' and address.city like '渝中%'

and orderid.noun=record.noun and record.time Between '2021-11-15 10:30:00' AND '2021-11-15 10:40:00'

group by goods order by count(goods) desc;

select * from postgresql.public.record where time > cast('2021-11-15 10:30:00' as timestamp) and time < cast('2021-11-15 10:40:00' as timestamp);

select * from postgresql.public.record where time between cast('2021-11-15 10:30:00' as timestamp) and cast('2021-11-15 10:40:00' as timestamp);

select goods,count(goods) from postgresql.public.orderid,postgresql.public.address,postgresql.public.record

where orderid.zipcode=address.zipcode and address.province like '重庆%' and address.city like '渝中%'

and orderid.noun=record.noun and record.time between cast('2021-11-15 10:30:00' as timestamp) and cast('2021-11-15 10:40:00' as timestamp)

group by goods order by count(orderid.goods) desc limit 1;

10.查询id为1000的顾客的所有信息

select * from person,record,orderid,address where record.noun=orderid.noun and

address.zipcode=orderid.zipcode and person.id=record.id and person.id=1000;

select * from postgresql.public.person,postgresql.public.record,postgresql.public.orderid,postgresql.public.address

where record.noun=orderid.noun and address.zipcode=orderid.zipcode and person.id=record.id and person.id=1000;

发现问题:单个顾客的不同订单所在地址不同。在设计表时,应该将邮编与用户绑定。

查询hive

hdfs dfsadmin -safemode leave //关闭hdfs的安全模式

hive //打开hive

退出hive,使用presto进行连接查询

nohup hive --service metastore >/dev/null 2>&1 & //启动hive的metastore服务

launcher start //启动presto

presto --server hadoop01:8880 --catalog hive --schema default

show schemas;

show tables from default;

select * from person limit 10;

发现莫名其妙查不到数据,检查一番后,原因是hive里面的数据被清空了,所有重新导入了一次

再次查询:

1.查询用户表的前10条数据

select * from person limit 10;

2.统计用户表中有多少条数据,其中男性多少,女性多少

select count(*) from person

union

select count(*) from person where sex like '男%'

union

select count(*) from person where sex like '女%';

3.查询地区表中的所有省份

select distinct province from address;

4.查询订单表中价格高于10块的订单有多少条

select count(*) from orderid where price>10;

5.查询记录表中id为8888的订单

select * from record where id=8888;

6.查询id为8888的顾客的总消费

select sum(price) from record,orderid where orderid.noun=record.noun and id=8888;

7.查询有多少人购买了西瓜

select count(distinct id) from record,orderid where orderid.noun=record.noun and goods like '西瓜%';

8.所有购买西瓜的这些人中,有多少来自北京的男性

select count(distinct person.id) as count from record,orderid,person,address

where orderid.noun=record.noun and person.id=record.id and address.zipcode=orderid.zipcode

and goods like '西瓜%' and sex like '男%' and province like '北京%';

9.重庆渝中区的人在10:30~10:40这个时间段里购买最多的商品是什么,购买了多少次

select goods,count(goods) from orderid,address,record

where orderid.zipcode=address.zipcode and province like '重庆%' and city like '渝中%'

and orderid.noun=record.noun and time between '2021-11-15 10:30:00' and '2021-11-15 10:40:00'

group by goods order by count(orderid.goods) desc limit 1;

10.查询id为1000的顾客的所有信息

select * from person,record,orderid,address where record.noun=orderid.noun and address.zipcode=orderid.zipcode and person.id=record.id and person.id=1000;

可以看出在使用presto在查询hive的数据时,语句比查询postgresql要简洁一些,可以直接使用sql语句,同时在查询时间时不再需要使用cast()函数进行数据类型转换。

JDBC连接

连接postgresql:

测试:

public class JdbcDemo01 {

public static void main(String[] args) throws SQLException, ClassNotFoundException{

Connection conn = null;

//String url = "jdbc:postgresql://hadoop01:5432/demo";

//String user = "postgres";

//String pwd = "201314";

//conn = DriverManager.getConnection(url, user, pwd);

conn = DriverManager.getConnection("jdbc:postgresql://hadoop01:5432/demo", "postgres", "201314");

Class.forName("org.postgresql.Driver");

Statement st = conn.createStatement();

ResultSet rs = st.executeQuery("select id,name from Person limit 10");

while (rs.next()) {

System.out.println("id="+rs.getString(1)+",name is "+rs.getString(2));

}

rs.close();

st.close();

conn.close();

}

}

连接presto:

测试:

public class PrestoDemo01 {

public static void main(String[] args) throws SQLException, ClassNotFoundException {

Class.forName("com.facebook.presto.jdbc.PrestoDriver");

Connection connection = DriverManager.getConnection("jdbc:presto://hadoop01:8880/postgres/demo", "root", null);

Statement st = connection.createStatement();

ResultSet rs = st.executeQuery("select id,name from postgresql.public.person limit 10");

while (rs.next()) {

System.out.println("id="+rs.getString(1)+",name is "+rs.getString(2));

}

rs.close();

st.close();

connection.close();

}

}