Web scraper: grabbing the common Python vocabulary from Shanbay, without the hassle of logging in and paying shells

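import re

import requests

# Captures the word inside <strong>...</strong> and the definition in the
# following <td class="span10"> cell. Raw string, so \s is not treated as a
# string escape; the stray comma in the original [a-z,A-Z] class is dropped.
pattern = r'<strong>([a-zA-Z]*?)</strong>\s*</td>\s*<td class="span10">(.*?)</td>'

def spider(url, file):
    # Download one page and write every (word, definition) pair on its own line.
    res = requests.get(url).text
    vocabulary_list = re.findall(pattern, res)
    for vocabulary in vocabulary_list:
        # strip('') removes nothing; strip() trims the surrounding whitespace.
        file.writelines((vocabulary[0].strip(), vocabulary[1].strip(), "\n"))

url_list = [
    "https://www.shanbay.com/wordlist/104899/202159/?page=",
    "https://www.shanbay.com/wordlist/104899/202162/?page=",
]

with open("vocabulary.doc", "w", encoding="utf-8") as file:
    for base_url in url_list:
        for i in range(1, 10):
            # Build the page URL from the current list entry, not a hard-coded one.
            spider(base_url + str(i), file)

# Maybe too straightforward of me, but here is the source code up front. It is only a few lines, purely to skip the login and the 199-shell payment... and pull the words off the page.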
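To see what the regular expression actually captures without hitting the site, here is a minimal sketch that runs it on a small HTML fragment. The fragment is hypothetical: its structure is only inferred from the pattern, and the real Shanbay page may look different. The pattern is the script's, written as a raw string and without the comma in the character class; `extract_pairs` and `SAMPLE_HTML` are names made up for this example.

```python
import re

PATTERN = re.compile(
    r'<strong>([a-zA-Z]*?)</strong>\s*</td>\s*<td class="span10">(.*?)</td>'
)

# Hypothetical markup, inferred only from the pattern; the real page may differ.
SAMPLE_HTML = """
<tr>
  <td class="span2"><strong>print</strong></td>
  <td class="span10">v. to output text</td>
</tr>
<tr>
  <td class="span2"><strong>loop</strong>
  </td>
  <td class="span10">n. a repeated block</td>
</tr>
"""


def extract_pairs(html):
    """Return a list of (word, definition) tuples found in the HTML text."""
    return [(word.strip(), meaning.strip()) for word, meaning in PATTERN.findall(html)]


pairs = extract_pairs(SAMPLE_HTML)
for word, meaning in pairs:
    print(word, meaning)
```

Because `.` does not match a newline by default, a definition that spans several lines inside its `<td>` would be skipped by this pattern; passing `re.S` would be one way to handle that, if the page turns out to need it.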

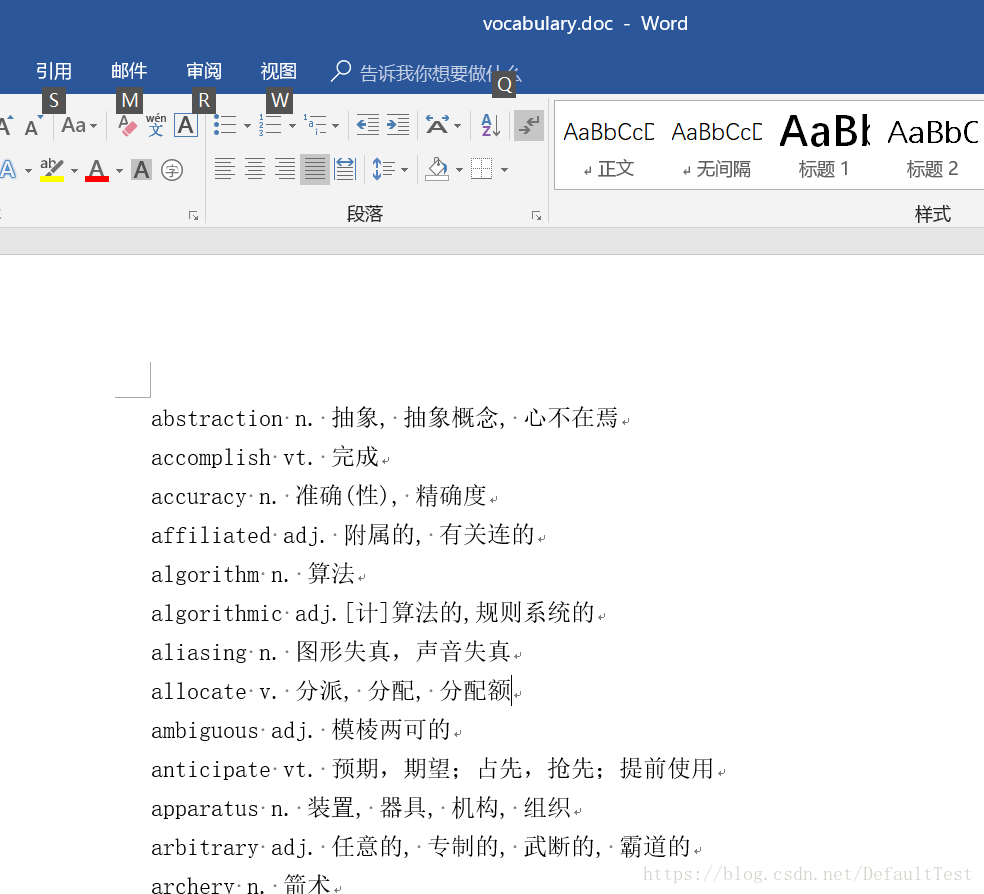

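# The script writes a result file that opens in Word (vocabulary.doc) into the directory the code is run from. The result is shown in the screenshot. No effort went into the layout, so the data would be better off in Excel.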
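For the Excel route, one option is to write the pairs as a CSV file, which Excel opens directly as two columns. This is only a sketch under assumptions: `save_as_csv`, the file name `vocabulary_sample.csv`, the column headers and the sample pairs are all invented for illustration and are not part of the original script.

```python
import csv


def save_as_csv(pairs, path="vocabulary.csv"):
    """Write (word, definition) pairs to a CSV file with a header row."""
    # utf-8-sig adds a byte order mark, which helps Excel detect UTF-8
    # so that Chinese definitions are not shown as garbled text.
    with open(path, "w", encoding="utf-8-sig", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["word", "meaning"])
        writer.writerows(pairs)


# Made-up sample data standing in for the scraped results.
sample_pairs = [("print", "v. 打印"), ("loop", "n. 循环")]
save_as_csv(sample_pairs, "vocabulary_sample.csv")
```

In the scraper, the same idea would mean collecting the tuples returned by `re.findall` into one list and passing that list to the function once all pages have been fetched.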